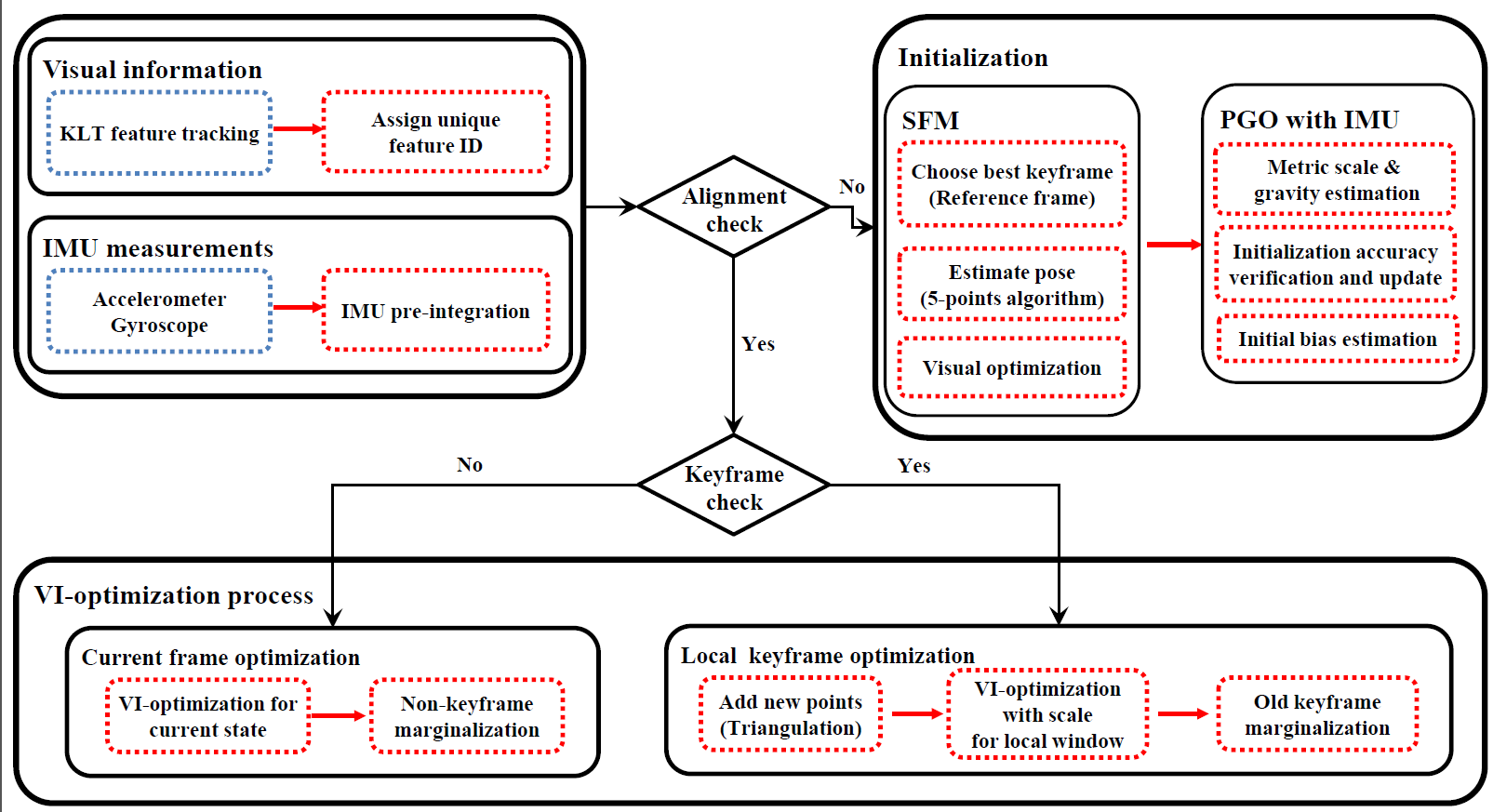

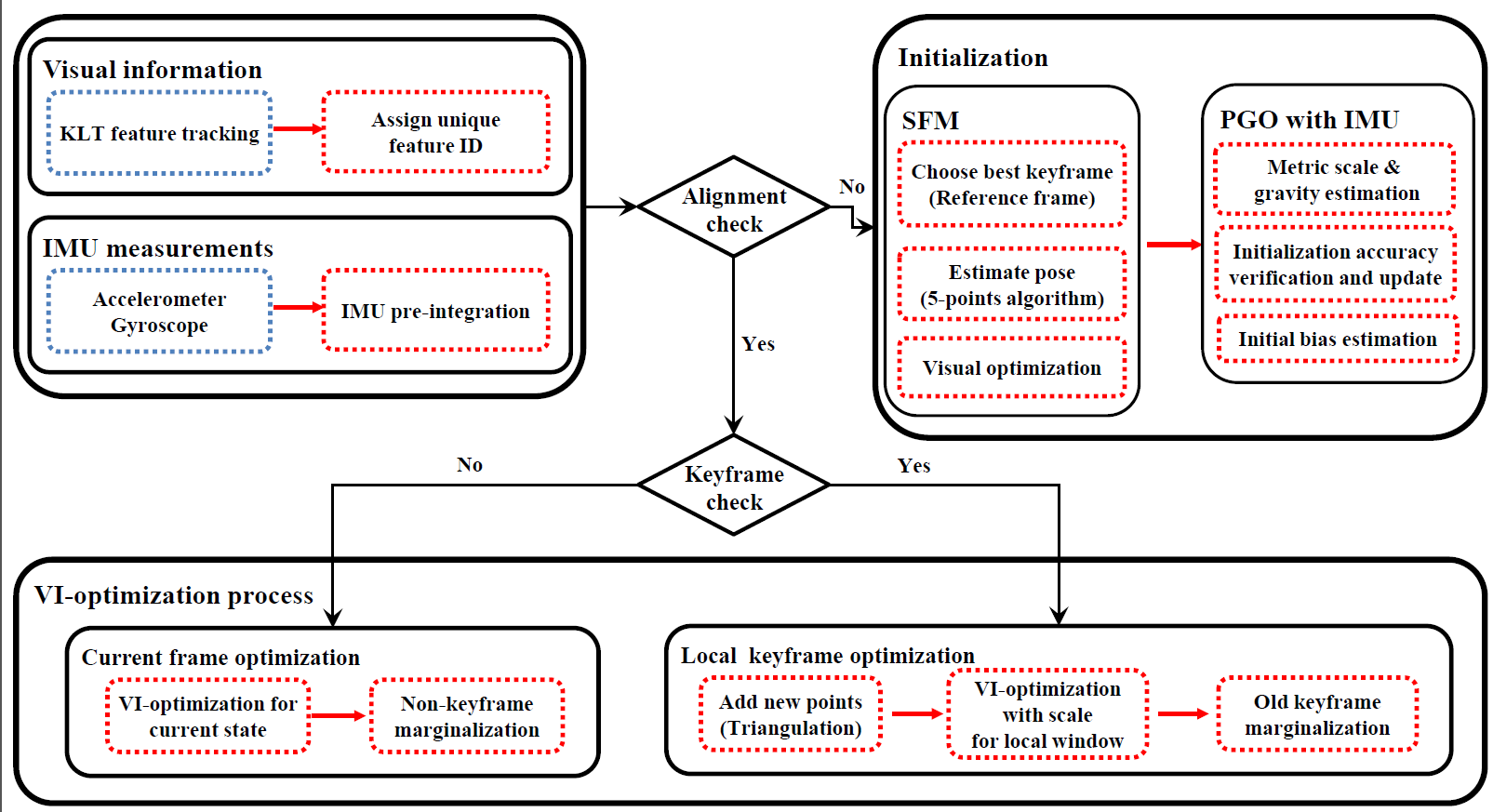

Visual-inertial odometry (VIO) has recently received much attention for efficient and accurate ego-motion estimation of unmanned aerial vehicles (UAVs). Recent studies have shown that optimization-based algorithms typically achieve high accuracy when given sufficient information, but occasionally diverge when solving highly non-linear problems. Further, their performance depends significantly on how accurately the inertial measurement unit (IMU) parameters are initialized. In this paper, we propose a novel VIO algorithm for estimating the motion state of UAVs with high accuracy. The main technical contributions are the fusion of visual information and pre-integrated inertial measurements in a joint optimization framework, and the stable initialization of scale and gravity using relative pose constraints. To account for the ambiguity and uncertainty of VIO initialization, a local scale parameter is adopted in the online optimization. Quantitative comparisons with state-of-the-art algorithms on the EuRoC dataset verify the efficacy and accuracy of the proposed method.