Submitted: 01 November 2023

Posted: 02 November 2023


Abstract

Keywords:

1. Introduction

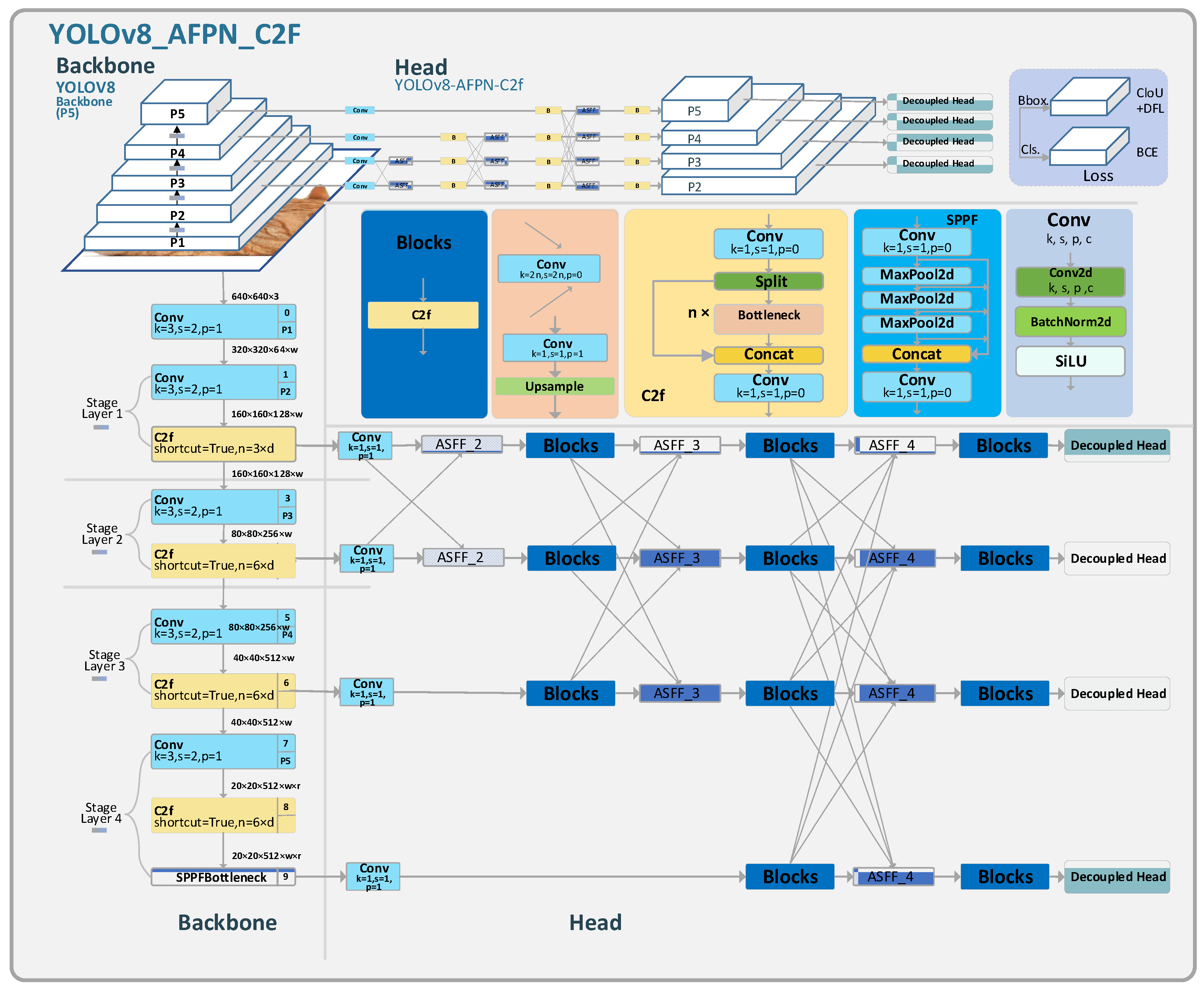

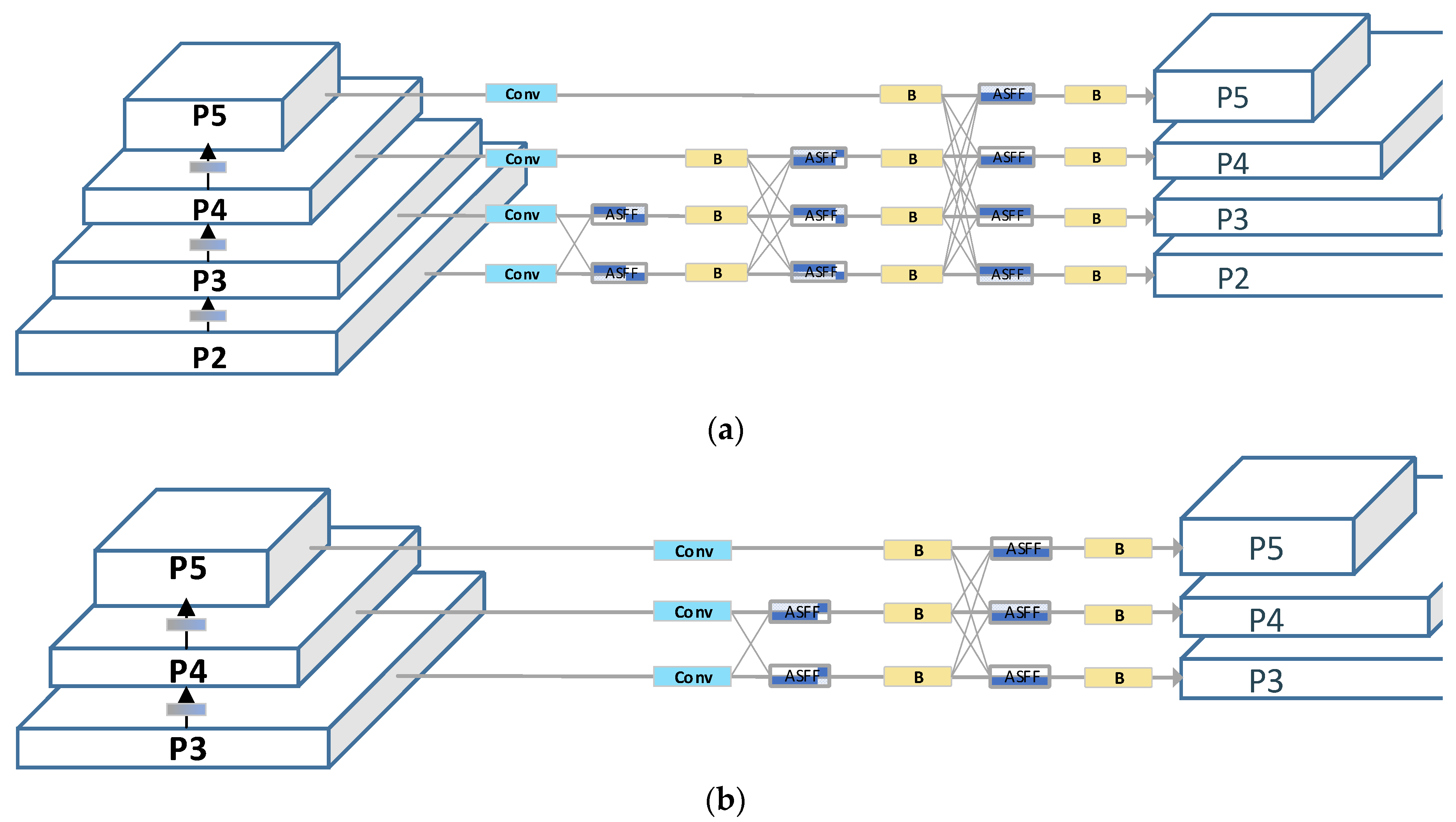

- We devised a novel feature fusion pyramid, named AFPN-C2f, which replaces the original PAFPN network in YOLOv8. This modification promotes the fusion of feature vectors between non-adjacent layers and mitigates the semantic gap between low-level and high-level features. We also examine how the number of C2f modules connected in series, and the choice of other feature extraction modules, affect the model’s performance.

- We introduced a shallow feature layer rich in detailed image feature information, enhancing the model’s perception of fine-grained, shallow-level information.

- We built a dataset suitable for factory glove detection. Collected from the production workshop of Zhengxi Hydraulic Company, it comprises 2,695 annotated high-resolution images of workers operating machinery either wearing gloves or barehanded.

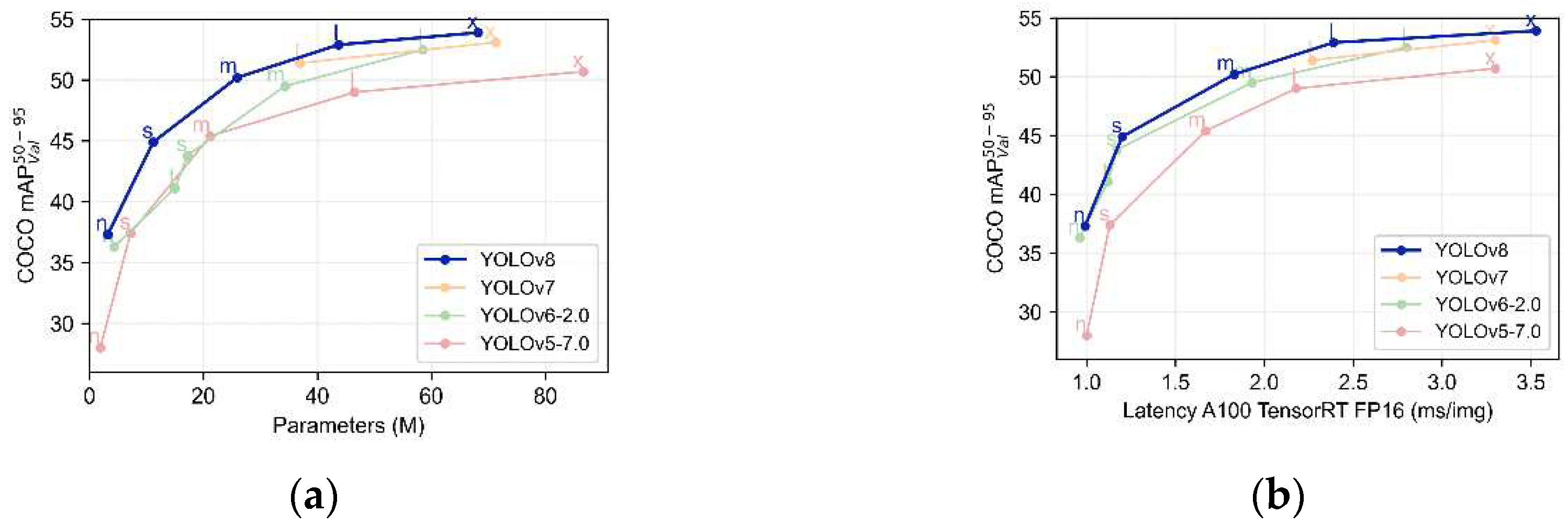

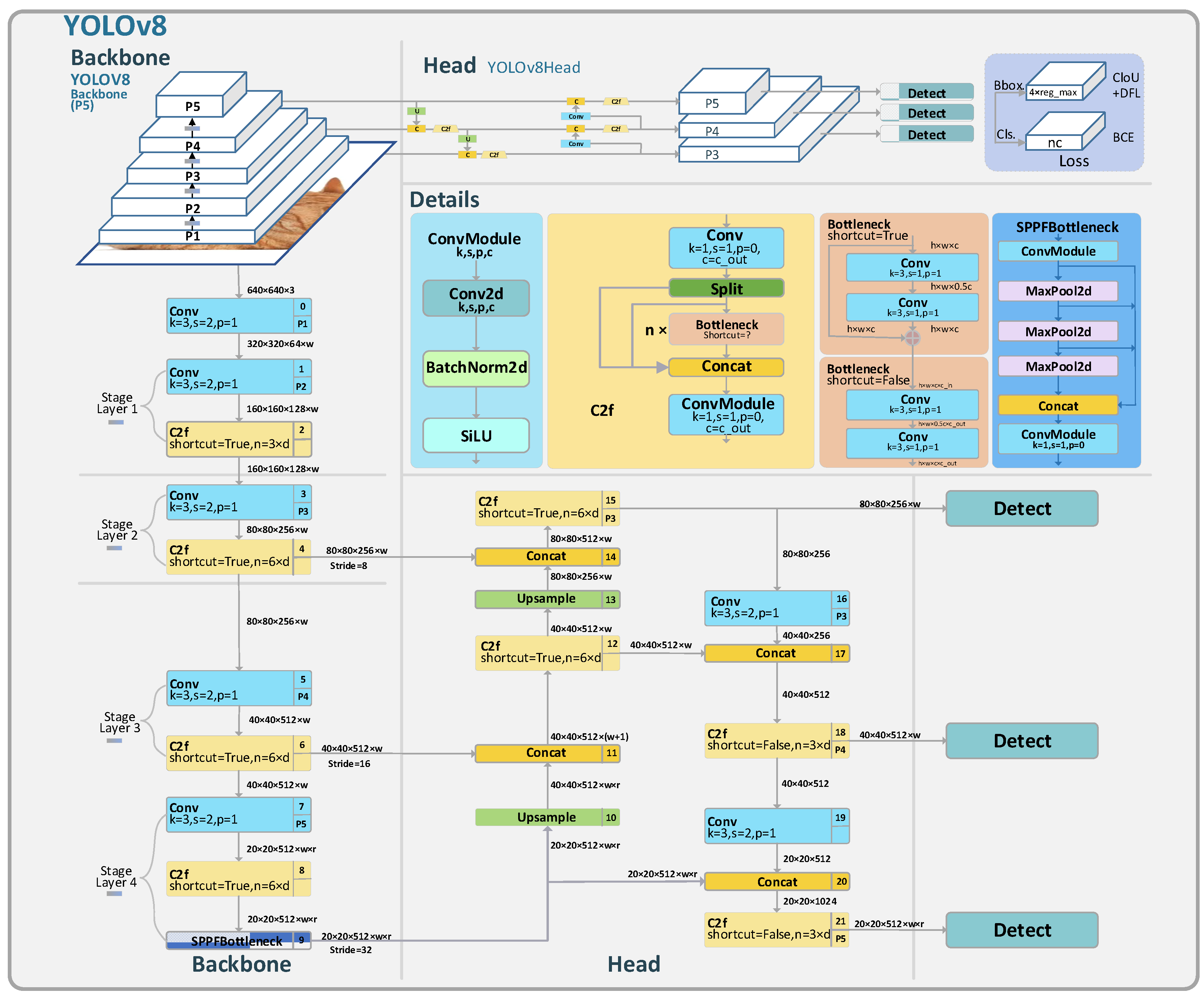

2. YOLOv8 Algorithm

3. Improved Algorithm: YOLOv8-AFPN-M-C2f

3.1. Feature Pyramid Network

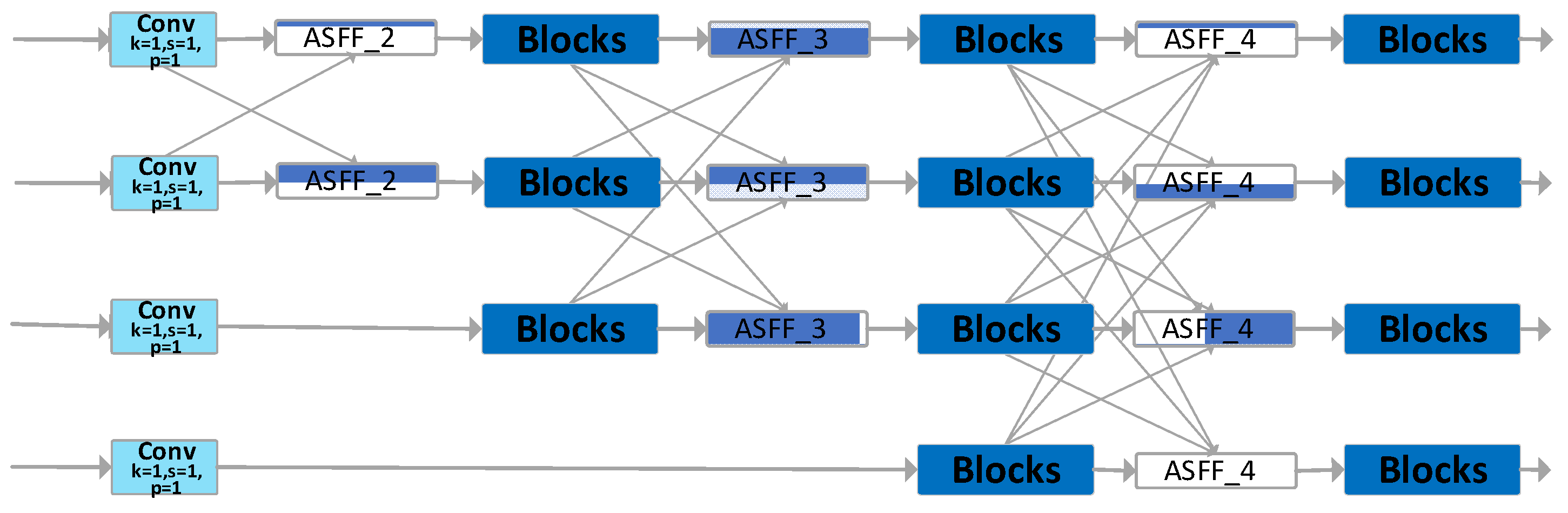

3.2. Improved FPN: AFPN-M-C2f

- It facilitates the fusion of features between non-adjacent layers, preventing the loss or degradation of features during their transmission and interaction.

- It incorporates an adaptive spatial fusion operation, suppressing conflicting information between different feature layers and preserving only the useful features for fusion.
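
The adaptive spatial fusion idea in the second point can be illustrated with a minimal sketch. It assumes the feature maps have already been resized to a common resolution and that each level comes with a map of unnormalised weights (in a real network these would be predicted by small convolution layers; here they are passed in by hand). The function name `adaptive_spatial_fusion` and the single-channel nested-list representation are illustrative choices, not the paper's implementation.

```python
import math


def adaptive_spatial_fusion(features, logits):
    """Fuse same-sized feature maps with per-position softmax weights.

    features: list of n maps, each an H x W nested list of floats
              (assumed already resized to a common resolution).
    logits:   list of n H x W maps of unnormalised weights
              (hypothetical stand-in for learned weight maps).
    """
    n = len(features)
    height, width = len(features[0]), len(features[0][0])
    fused = [[0.0] * width for _ in range(height)]
    for i in range(height):
        for j in range(width):
            # Softmax across levels so the weights at (i, j) sum to 1.
            peak = max(logits[k][i][j] for k in range(n))
            exps = [math.exp(logits[k][i][j] - peak) for k in range(n)]
            total = sum(exps)
            fused[i][j] = sum(
                (exps[k] / total) * features[k][i][j] for k in range(n)
            )
    return fused


# Two 1x2 maps. At position 0 the logits are equal, so the levels are averaged;
# at position 1 the large logit gap keeps almost only the first level.
low = [[1.0, 1.0]]
high = [[3.0, 3.0]]
fused = adaptive_spatial_fusion([low, high], [[[0.0, 10.0]], [[0.0, -10.0]]])
print(fused)
```

Because the weights are normalised per spatial position, a level whose weight is driven towards zero at some location contributes almost nothing there, which is one way to read "suppressing conflicting information" between layers.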

3.2.1. Feature Vector Adjustment Model

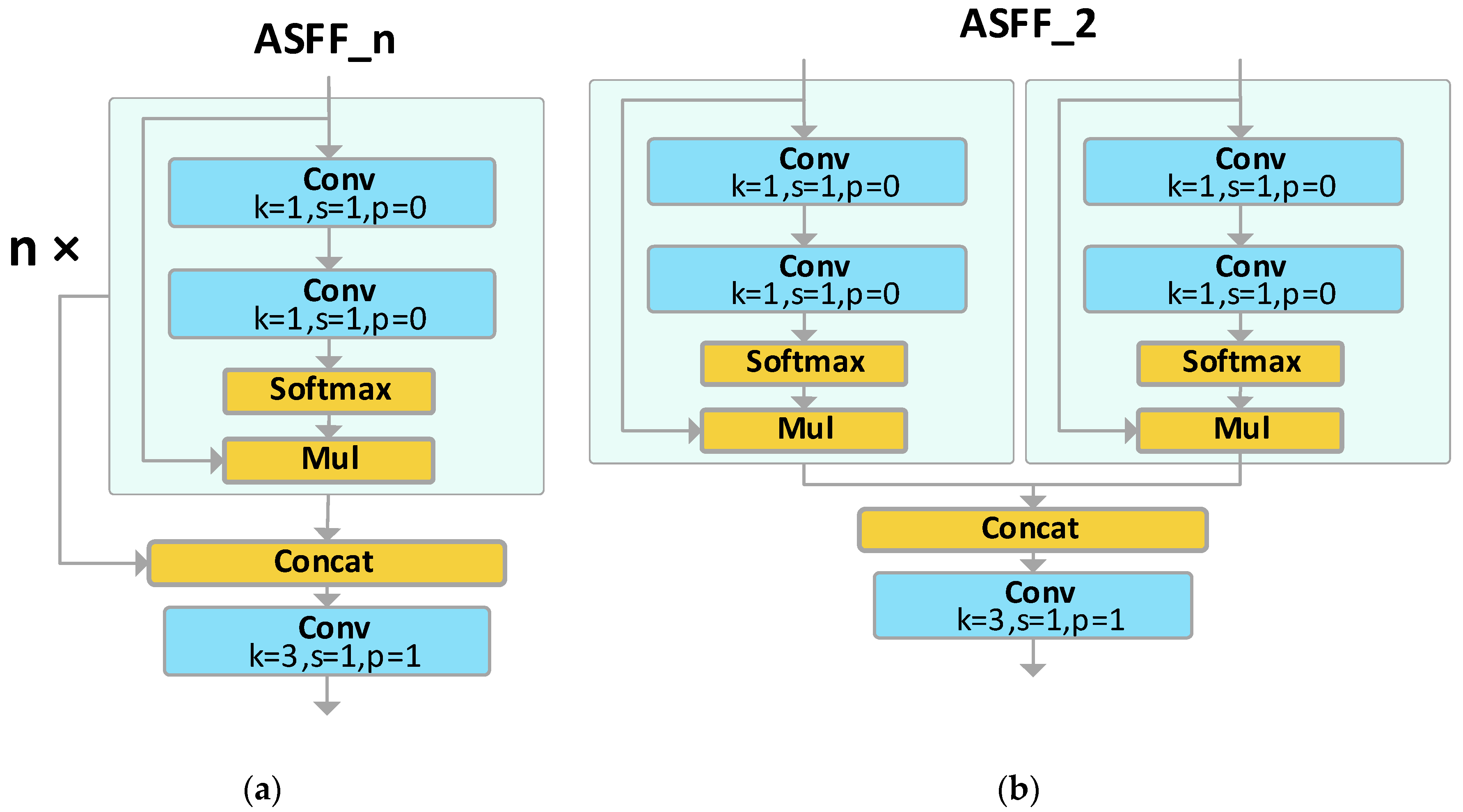

3.2.2. Adaptively Spatial Feature Fusion

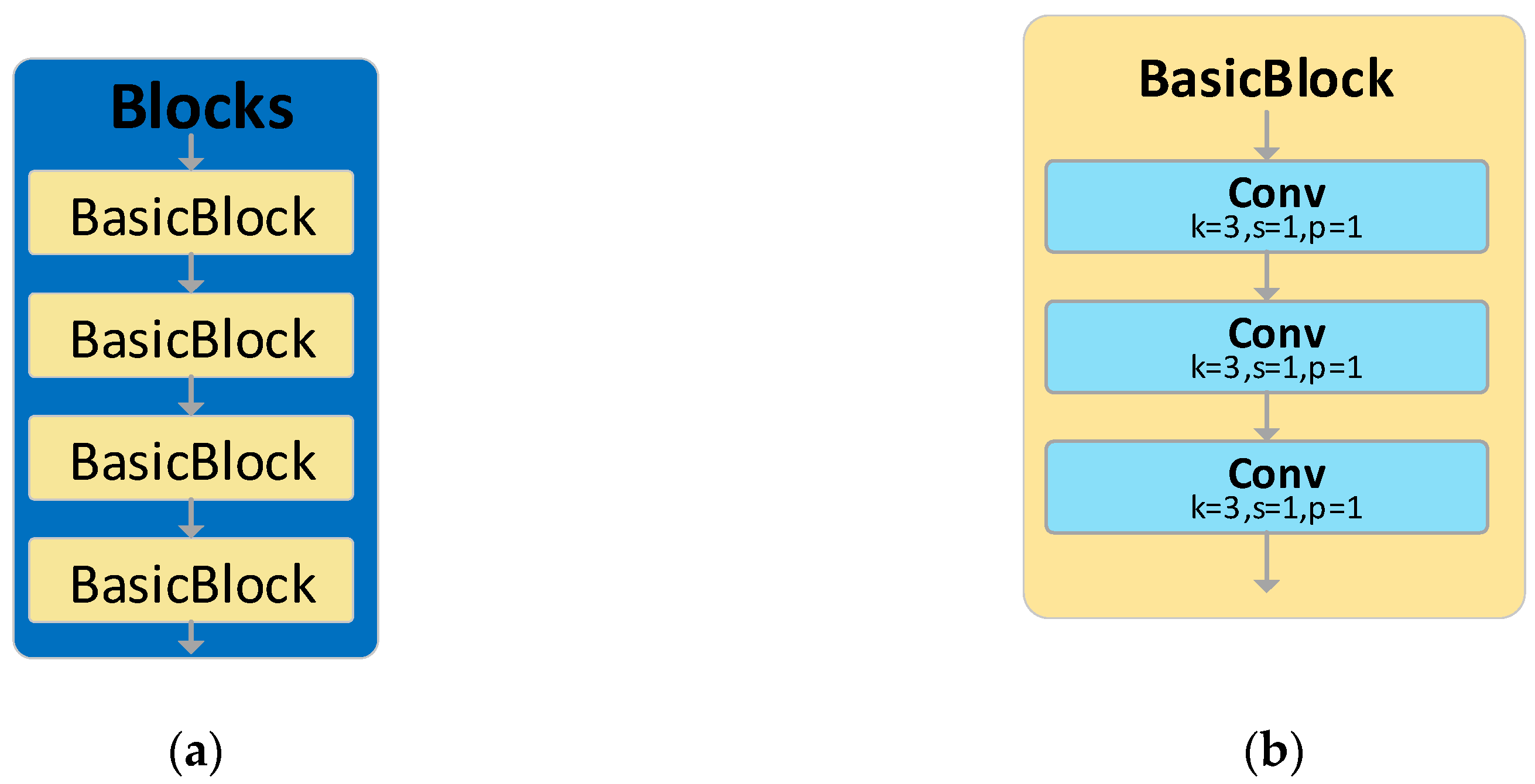

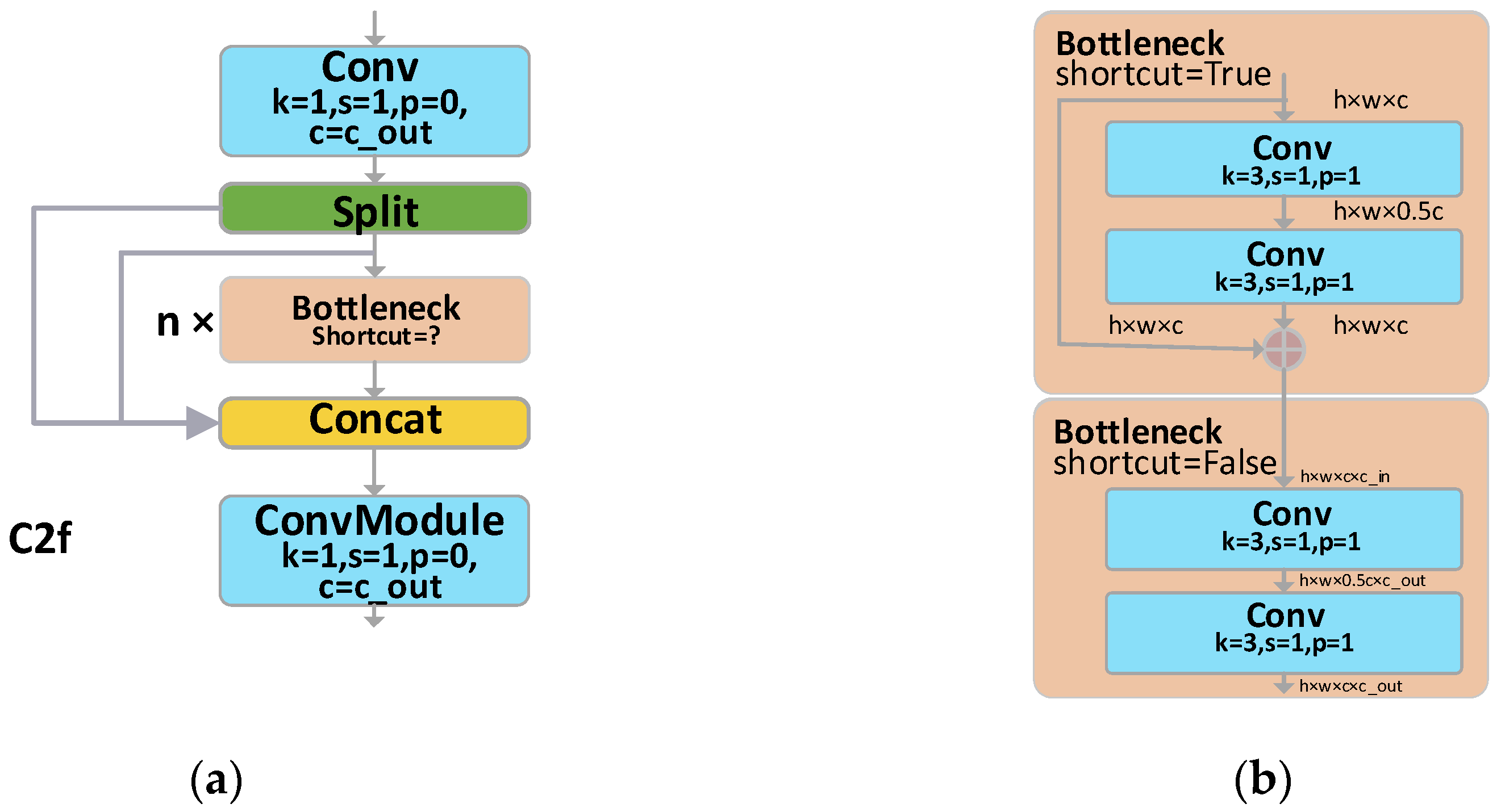

3.2.3. Enhancing the Feature Fusion Module of AFPN

3.3. Additional Feature Layers

- Including the P2 feature layer, which is rich in shallow feature information, improves the model’s ability to perceive small objects and eases the transmission of shallow feature vectors.

- Sixteen additional transmission paths between feature layers integrate feature information more thoroughly.

- Five additional Blocks modules enable multi-dimensional, in-depth feature extraction and fusion.
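
To see why a P2 layer helps with small objects, the sketch below compares grid resolutions across pyramid levels. It assumes the conventional YOLOv8 strides of 8, 16 and 32 for P3 to P5, a stride of 4 for P2, and a 640×640 input; the input size is an assumption, since the image dimensions are not given in the parameter table. The helper names are hypothetical.

```python
# Assumed strides: P3/P4/P5 at 8/16/32 as in standard YOLOv8, P2 at 4.
STRIDES = {"P2": 4, "P3": 8, "P4": 16, "P5": 32}


def pyramid_grid_sizes(image_size, strides=STRIDES):
    """Return the side length of each square feature map for a square input."""
    return {level: image_size // stride for level, stride in strides.items()}


def cells_covered(object_size, stride):
    """Approximate number of grid cells per side that an object spans."""
    return max(1, object_size // stride)


grids = pyramid_grid_sizes(640)  # 640 is an assumed input size
for level, side in grids.items():
    span = cells_covered(16, STRIDES[level])
    print(f"{level}: {side}x{side} grid, a 16 px object spans ~{span} cell(s) per side")
```

Under these assumptions a 16-pixel object spans about four cells per side at P2 but collapses to a single cell at P4 and P5, so the shallow layer retains spatial detail that deeper layers lose.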

4. Deep Learning Object Detection Datasets

- The collected content closely mirrors the workers’ real working conditions, as we photographed staff performing their tasks directly in the Zhengxi Hydraulic Company’s workshop.

- Compared with similar public datasets, ours is much larger: 2,695 images rather than a few hundred.

- Our images are sharp, with a high resolution of 3840×2160 pixels.

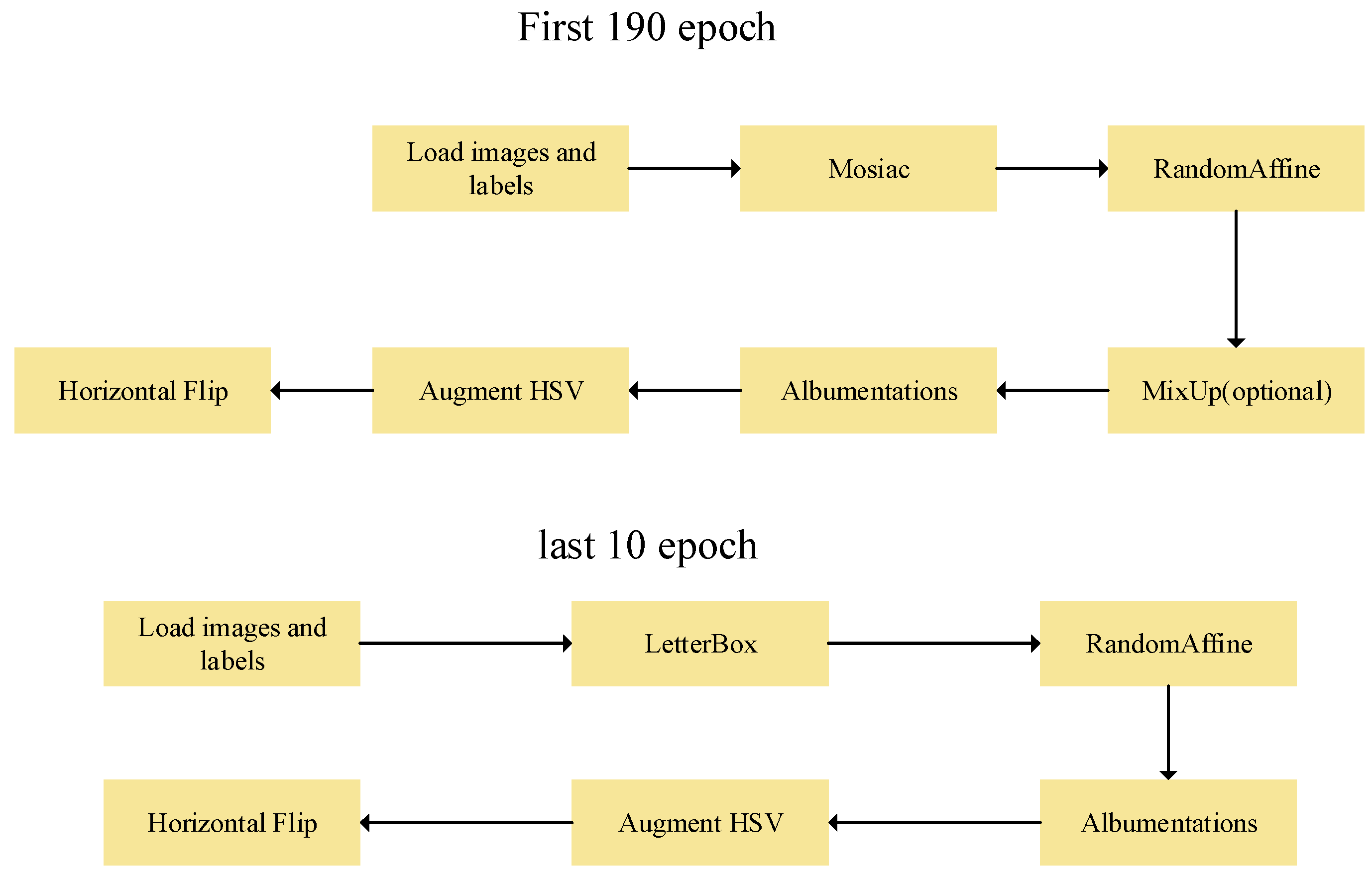

5. Training Methodology and Evaluation Metrics

5.1. Experimentation and Parameter Configuration

5.2. Evaluation Metrics

6. Results and Analysis of the YOLOv8-AFPN-M-C2f Algorithm

6.1. Comparative Analysis of Algorithmic Prediction Outcomes

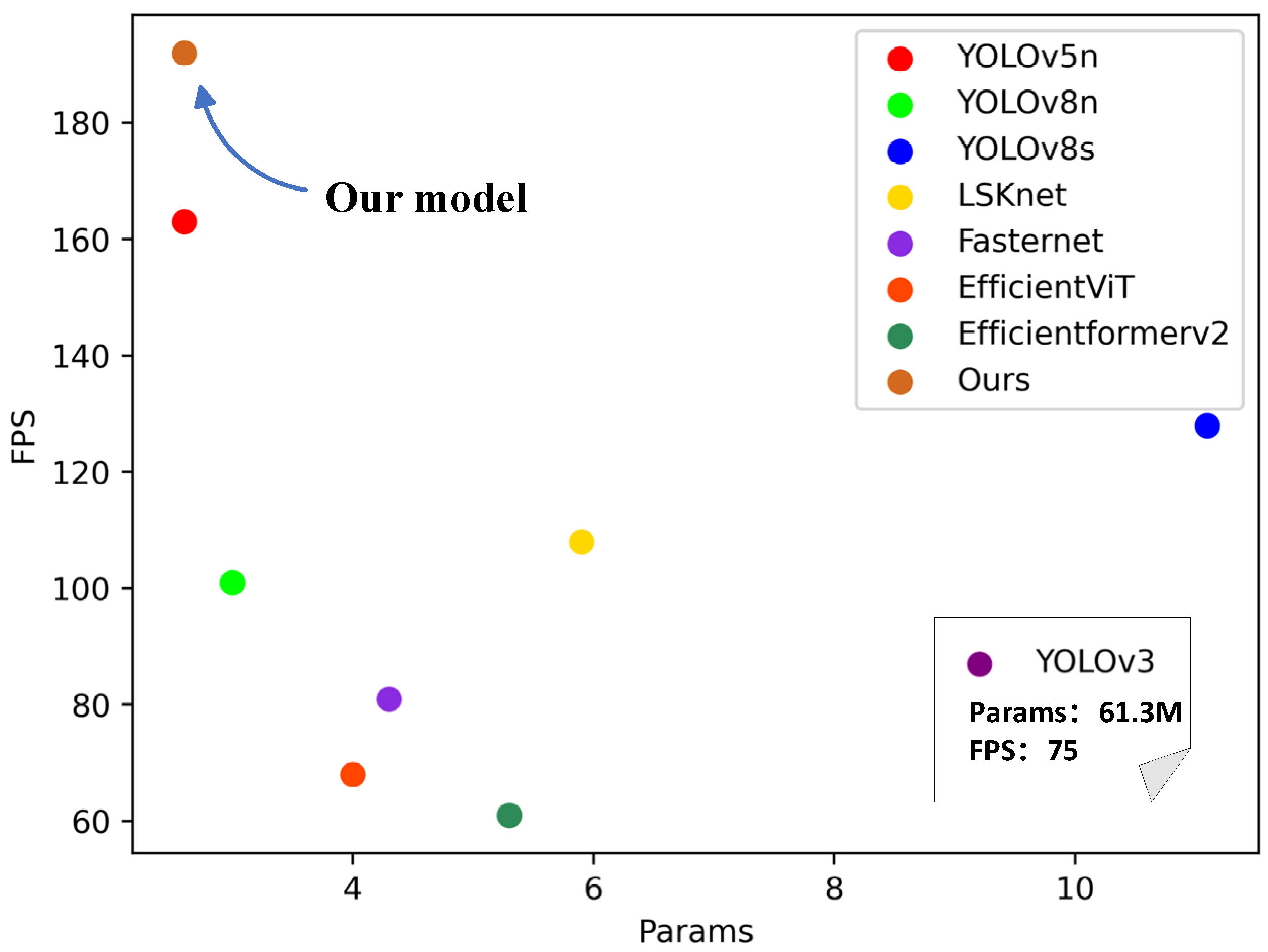

6.2. Comparison Experiment

6.3. Ablation Study

6.4. Experiments on Methods to Enhance AFPN

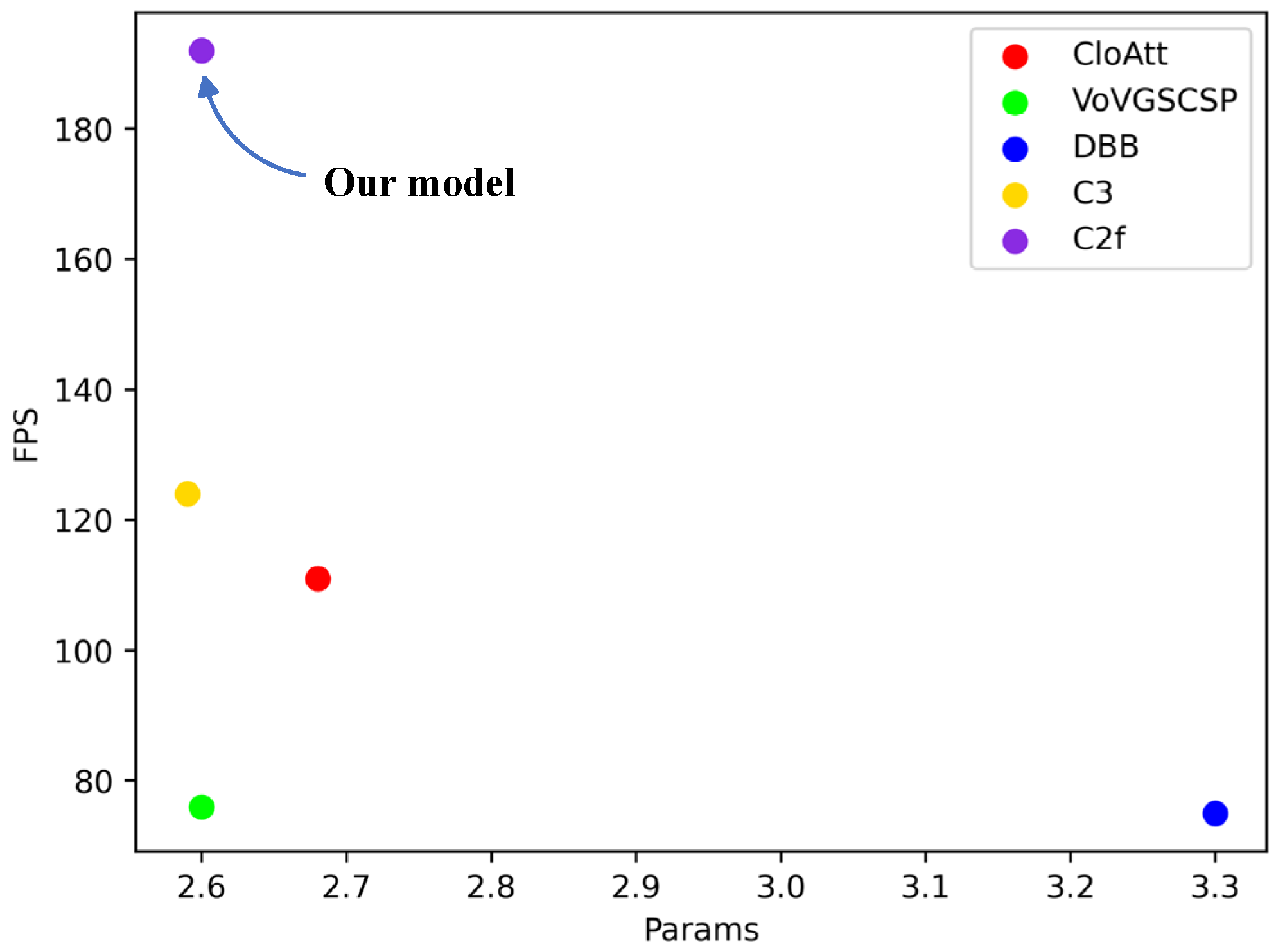

6.4.1. Experiments with Various Feature Extraction Modules Replacing Blocks

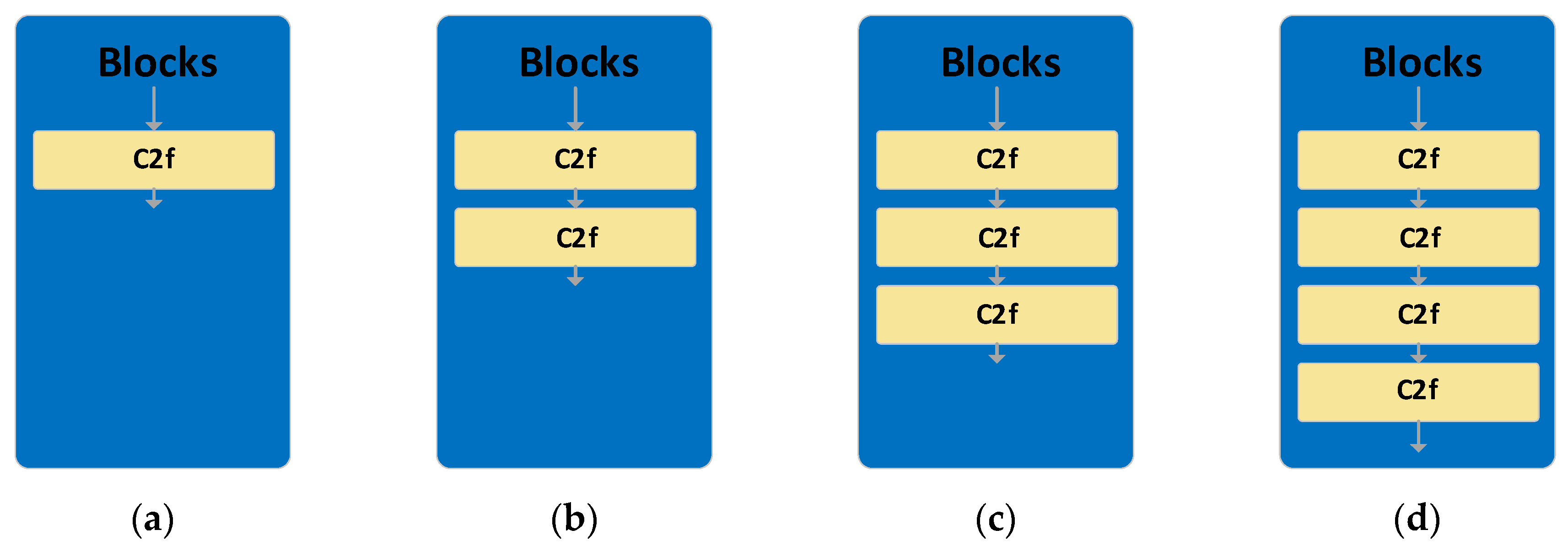

6.4.2. Number of C2f Modules in Series

7. Conclusions

Author Contributions

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Emmanuel, N.O. Perceived Health Problems, Safety Practices and Performance Level among Workers of cement industries in Niger Delta.

2. Osonwa Kalu, O.; Eko Jimmy, E.; Ozah-Hosea, P. Utilization of personal protective equipments (PPEs) among wood factory workers in Calabar Municipality, Southern Nigeria. Age 2015, 15, (19), 14.

3. Tramontana, M.; Hansel, K.; Bianchi, L.; Foti, C.; Romita, P.; Stingeni, L. Occupational allergic contact dermatitis from a glue: concomitant sensitivity to “declared” isothiazolinones and “undeclared” (meth)acrylates. Contact Dermatitis 2020, 83, (2), 150-152.

4. Girshick, R. Fast R-CNN. In Proceedings of the IEEE International Conference on Computer Vision, 2015; pp. 1440-1448.

5. Dai, J.; Li, Y.; He, K.; Sun, J. R-FCN: Object detection via region-based fully convolutional networks. Advances in Neural Information Processing Systems 2016, 29.

6. He, K.; Gkioxari, G.; Dollár, P.; Girshick, R. Mask R-CNN. In Proceedings of the IEEE International Conference on Computer Vision, 2017; pp. 2961-2969.

7. Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You only look once: Unified, real-time object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016; pp. 779-788.

8. Redmon, J.; Farhadi, A. YOLO9000: better, faster, stronger. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017; pp. 7263-7271.

9. Redmon, J.; Farhadi, A. YOLOv3: An incremental improvement. arXiv preprint arXiv:1804.02767 2018.

10. Bochkovskiy, A.; Wang, C.; Liao, H.M. YOLOv4: Optimal speed and accuracy of object detection. arXiv preprint arXiv:2004.10934 2020.

11. Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.; Berg, A.C. SSD: Single shot multibox detector. In Computer Vision–ECCV 2016: 14th European Conference, Amsterdam, The Netherlands, October 11–14, 2016, Proceedings, Part I 14; Springer: 2016; pp. 21-37.

12. Law, H.; Deng, J. CornerNet: Detecting objects as paired keypoints. In Proceedings of the European Conference on Computer Vision (ECCV), 2018; pp. 734-750.

13. Zhao, Q.; Sheng, T.; Wang, Y.; Tang, Z.; Chen, Y.; Cai, L.; Ling, H. M2Det: A single-shot object detector based on multi-level feature pyramid network. In Proceedings of the AAAI Conference on Artificial Intelligence, 2019; pp. 9259-9266.

14. Roy, A.M.; Bhaduri, J. DenseSPH-YOLOv5: An automated damage detection model based on DenseNet and Swin-Transformer prediction head-enabled YOLOv5 with attention mechanism. Adv Eng Inform 2023, 56, 102007.

15. Jiang, S.; Zhou, X. DWSC-YOLO: A Lightweight Ship Detector of SAR Images Based on Deep Learning. J Mar Sci Eng 2022, 10, (11), 1699.

16. Sun, C.; Zhang, S.; Qu, P.; Wu, X.; Feng, P.; Tao, Z.; Zhang, J.; Wang, Y. MCA-YOLOV5-Light: A faster, stronger and lighter algorithm for helmet-wearing detection. Applied Sciences 2022, 12, (19), 9697.

17. Feng, C.; Zhong, Y.; Gao, Y.; Scott, M.R.; Huang, W. TOOD: Task-aligned one-stage object detection. In 2021 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE Computer Society: 2021; pp. 3490-3499.

18. Lin, T.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision, 2017; pp. 2980-2988.

19. He, K.; Gkioxari, G.; Dollár, P.; Girshick, R. Mask R-CNN. In Proceedings of the IEEE International Conference on Computer Vision, 2017; pp. 2961-2969.

20. Cai, Z.; Vasconcelos, N. Cascade R-CNN: Delving into high quality object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018; pp. 6154-6162.

21. Tan, M.; Pang, R.; Le, Q.V. EfficientDet: Scalable and efficient object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020; pp. 10781-10790.

22. Wang, K.; Liew, J.H.; Zou, Y.; Zhou, D.; Feng, J. PANet: Few-shot image semantic segmentation with prototype alignment. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019; pp. 9197-9206.

23. Yang, G.; Lei, J.; Zhu, Z.; Cheng, S.; Feng, Z.; Liang, R. AFPN: Asymptotic Feature Pyramid Network for Object Detection. arXiv preprint arXiv:2306.15988 2023.

24. Liu, S.; Huang, D. Receptive field block net for accurate and fast object detection. In Proceedings of the European Conference on Computer Vision (ECCV), 2018; pp. 385-400.

25. Liang, J.; Deng, Y.; Zeng, D. A deep neural network combined CNN and GCN for remote sensing scene classification. IEEE J-STARS 2020, 13, 4325-4338.

26. Available online: https://github.com/ultralytics/yolov5 (accessed on 12 April 2021).

27. Available online: https://github.com/ultralytics/ultralytics (accessed on 10 January 2023).

28. Li, Y.; Hou, Q.; Zheng, Z.; Cheng, M.; Yang, J.; Li, X. Large Selective Kernel Network for Remote Sensing Object Detection. arXiv preprint arXiv:2303.09030 2023.

29. Chen, J.; Kao, S.; He, H.; Zhuo, W.; Wen, S.; Lee, C.; Chan, S.G. Run, Don’t Walk: Chasing Higher FLOPS for Faster Neural Networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023; pp. 12021-12031.

30. Liu, X.; Peng, H.; Zheng, N.; Yang, Y.; Hu, H.; Yuan, Y. EfficientViT: Memory Efficient Vision Transformer with Cascaded Group Attention. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023; pp. 14420-14430.

31. Li, Y.; Hu, J.; Wen, Y.; Evangelidis, G.; Salahi, K.; Wang, Y.; Tulyakov, S.; Ren, J. Rethinking vision transformers for MobileNet size and speed. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023; pp. 16889-16900.

32. Fan, Q.; Huang, H.; Guan, J.; He, R. Rethinking Local Perception in Lightweight Vision Transformer. arXiv preprint arXiv:2303.17803 2023.

33. Li, H.; Li, J.; Wei, H.; Liu, Z.; Zhan, Z.; Ren, Q. Slim-neck by GSConv: A better design paradigm of detector architectures for autonomous vehicles. arXiv preprint arXiv:2206.02424 2022.

34. Ding, X.; Zhang, X.; Han, J.; Ding, G. Diverse branch block: Building a convolution as an inception-like unit. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021; pp. 10886-10895.

| Experimental Component | Version |
|---|---|
| OS | Ubuntu 20.04 |
| CPU | AMD EPYC 7T83 64-Core Processor |
| GPU | RTX 4090 |
| CUDA version | 11.8 |
| Python version | 3.8 |
| PyTorch version | 2.0.0 |

| Parameter Name | Setting |
|---|---|
| Image dimensions | |
| Number of epochs | 200 |
| Batch size | 64 |
| Data augmentation method | Mosaic |

| Hyperparameter | Value |
|---|---|
| Optimizer | SGD |
| Initial learning rate (lr0) | 0.01 |
| Final learning rate | 0.0001 |
| Momentum | 0.937 |
| Weight decay | 0.0005 |
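
The hyperparameter table gives only the initial and final learning rates, not the shape of the decay between them. As a minimal sketch, the snippet below assumes a linear decay over the 200 training epochs listed in the parameter table and ignores any warm-up phase. The linear shape and the function name `linear_lr` are assumptions for illustration, not a statement of the schedule actually used.

```python
LR0 = 0.01         # initial learning rate from the table
LR_FINAL = 0.0001  # final learning rate from the table
EPOCHS = 200       # number of epochs from the parameter table


def linear_lr(epoch, lr0=LR0, lr_final=LR_FINAL, epochs=EPOCHS):
    """Linearly interpolate the learning rate (assumed decay shape)."""
    frac = epoch / epochs
    return lr0 + (lr_final - lr0) * frac


for epoch in (0, 50, 100, 150, 200):
    print(f"epoch {epoch:3d}: lr = {linear_lr(epoch):.6f}")
```

Under this assumption the learning rate falls by a factor of 100 over training, passing through about 0.00505 at the halfway point.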

| Model | Bare hand (mAP50) | White glove (mAP50) | Canvas glove (mAP50) | Black glove (mAP50) | All¹ (mAP50) | P | R | FPS | Params/M |
|---|---|---|---|---|---|---|---|---|---|
| YOLOv3[9] | 0.935 | 0.944 | 0.972 | 0.987 | 0.959 | 0.97 | 0.91 | 75 | 61.3 |
| YOLOv5[26] | 0.924 | 0.936 | 0.984 | 0.989 | 0.958 | 0.96 | 0.89 | 163 | 2.6 |
| YOLOv8[27] | 0.934 | 0.914 | 0.947 | 0.994 | 0.947 | 0.95 | 0.89 | 101 | 3.0 |
| YOLOv8s[27] | 0.957 | 0.952 | 0.984 | 0.986 | 0.970 | 0.95 | 0.93 | 128 | 11.1 |
| LSKNet[28] | 0.919 | 0.917 | 0.958 | 0.974 | 0.942 | 0.95 | 0.90 | 108 | 5.9 |
| FasterNet[29] | 0.949 | 0.938 | 0.944 | 0.944 | 0.956 | 0.96 | 0.89 | 81 | 4.3 |
| EfficientViT[30] | 0.919 | 0.917 | 0.993 | 0.985 | 0.954 | 0.95 | 0.93 | 68 | 4.0 |
| EfficientFormerV2[31] | 0.932 | 0.911 | 0.959 | 0.991 | 0.948 | 0.94 | 0.91 | 61 | 5.3 |
| Ours | 0.949 | 0.962 | 0.983 | 0.992 | 0.972 | 0.96 | 0.91 | 192 | 2.6 |
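
The "All" column in the comparison table appears to be the unweighted mean of the four per-class mAP50 values, which is the usual definition of mAP over classes. The short check below uses the values from the "Ours" row; that the column is an unweighted mean is an assumption, and the helper name `mean_ap` is illustrative.

```python
def mean_ap(per_class_ap):
    """Unweighted mean of per-class average precision values."""
    return sum(per_class_ap.values()) / len(per_class_ap)


# Per-class mAP50 values taken from the "Ours" row of the comparison table.
ours = {
    "bare hand": 0.949,
    "white glove": 0.962,
    "canvas glove": 0.983,
    "black glove": 0.992,
}
overall = mean_ap(ours)
print(f"mean over classes: {overall:.4f}")
```

The mean comes out at roughly 0.9715, which is consistent with the 0.972 reported in the "All" column for that row.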

| Model | AFPN | More Detect head | C2f | mAP50 | Params/M |
|---|---|---|---|---|---|
| YOLOv8n (baseline) | | | | 0.959 | 3.01 |
| YOLOv8+AFPN | √ | | | 0.964 | 2.74 |
| YOLOv8+AFPN+more Detect head | √ | √ | | 0.956 | 3.00 |
| YOLOv8+AFPN+C2f | √ | | √ | 0.942 | 2.31 |
| Ours | √ | √ | √ | 0.972 | 2.60 |

| Model | Bare hand (mAP50) | White glove (mAP50) | Canvas glove (mAP50) | Black glove (mAP50) | All¹ (mAP50) | P | R | FPS | Params/M |
|---|---|---|---|---|---|---|---|---|---|
| CloAtt[32] | 0.927 | 0.962 | 0.953 | 0.995 | 0.959 | 0.96 | 0.92 | 111 | 2.68 |
| VoV-GSCSP[33] | 0.949 | 0.952 | 0.957 | 0.982 | 0.96 | 0.97 | 0.91 | 76 | 2.60 |
| DBB[34] | 0.932 | 0.943 | 0.937 | 0.995 | 0.952 | 0.95 | 0.89 | 75 | 3.3 |
| C3²[26] | 0.928 | 0.874 | 0.940 | 0.995 | 0.934 | 0.96 | 0.88 | 124 | 2.59 |
| C2f (ours) | 0.949 | 0.962 | 0.983 | 0.992 | 0.972 | 0.96 | 0.91 | 192 | 2.60 |

| Number of C2f modules | Bare hand (mAP50) | White glove (mAP50) | Canvas glove (mAP50) | Black glove (mAP50) | All¹ (mAP50) | P | R | FPS | Params/M |
|---|---|---|---|---|---|---|---|---|---|
| 1 (ours) | 0.949 | 0.962 | 0.983 | 0.992 | 0.972 | 0.96 | 0.91 | 192 | 2.60 |
| 2 | 0.940 | 0.942 | 0.960 | 0.981 | 0.956 | 0.96 | 0.90 | 93 | 2.65 |
| 3 | 0.954 | 0.942 | 0.949 | 0.995 | 0.96 | 0.96 | 0.92 | 72 | 2.70 |
| 4 | 0.949 | 0.932 | 0.958 | 0.994 | 0.958 | 0.96 | 0.90 | 75 | 2.75 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).