Submitted: 11 August 2024

Posted: 12 August 2024

Abstract

Keywords:

1. Introduction

2. Related Works

3. Fundamentals of RNNs

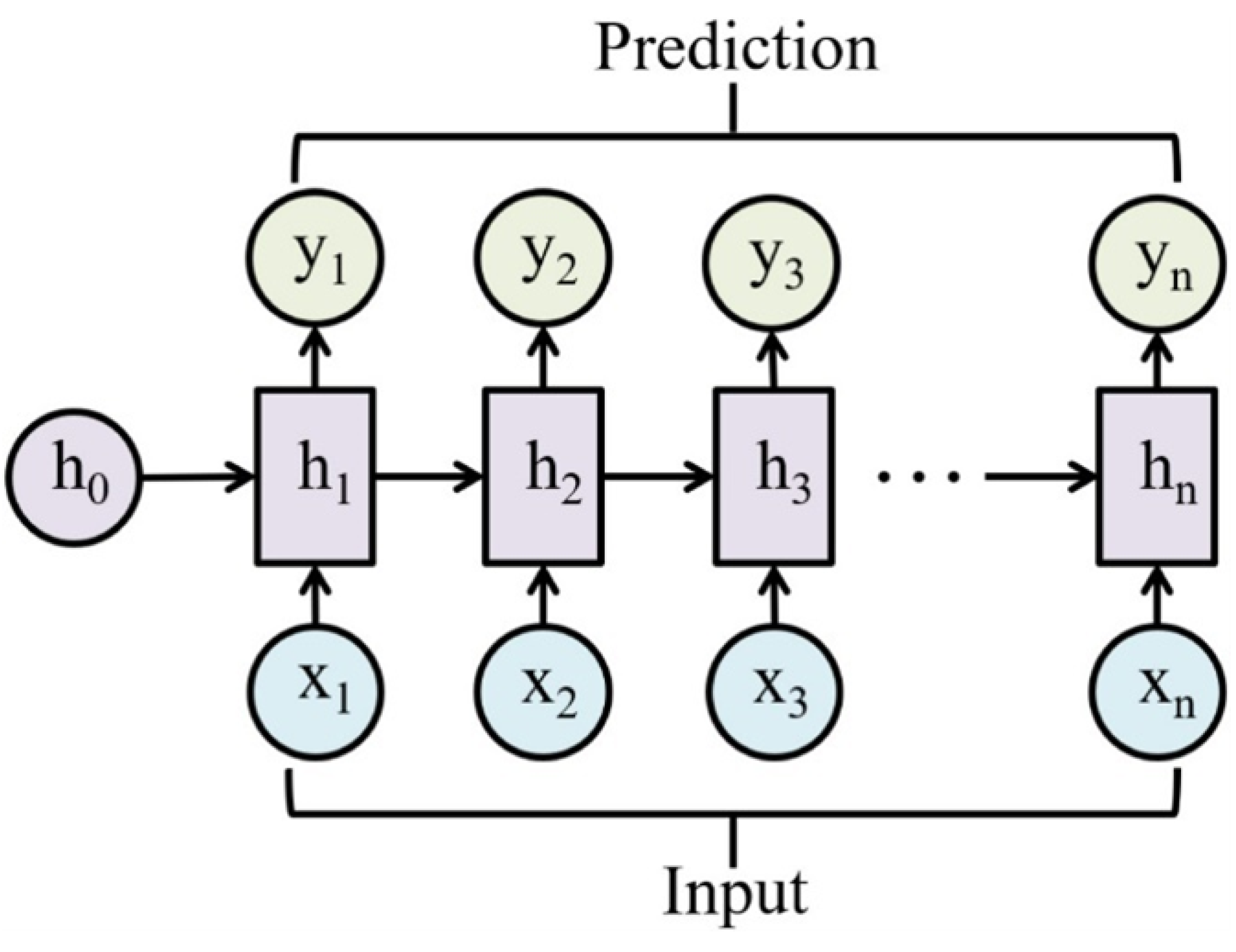

3.1. Basic Architecture and Working Principle of Standard RNNs

3.2. Activation Functions

3.3. The Vanishing Gradient Problem

3.4. Bidirectional RNNs

3.5. Deep RNNs

4. Advanced Variants of RNNs

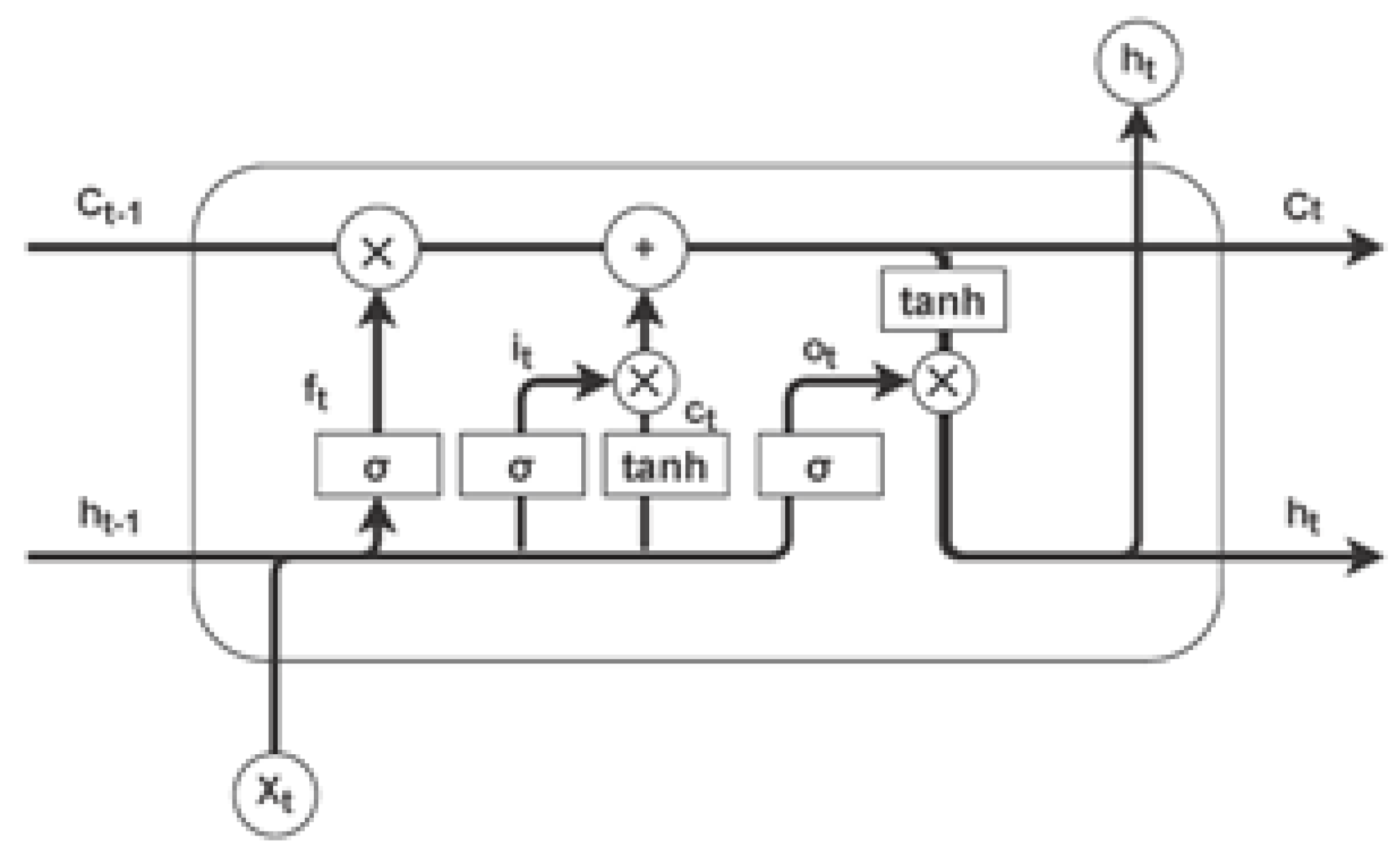

4.1. Long Short-Term Memory Networks

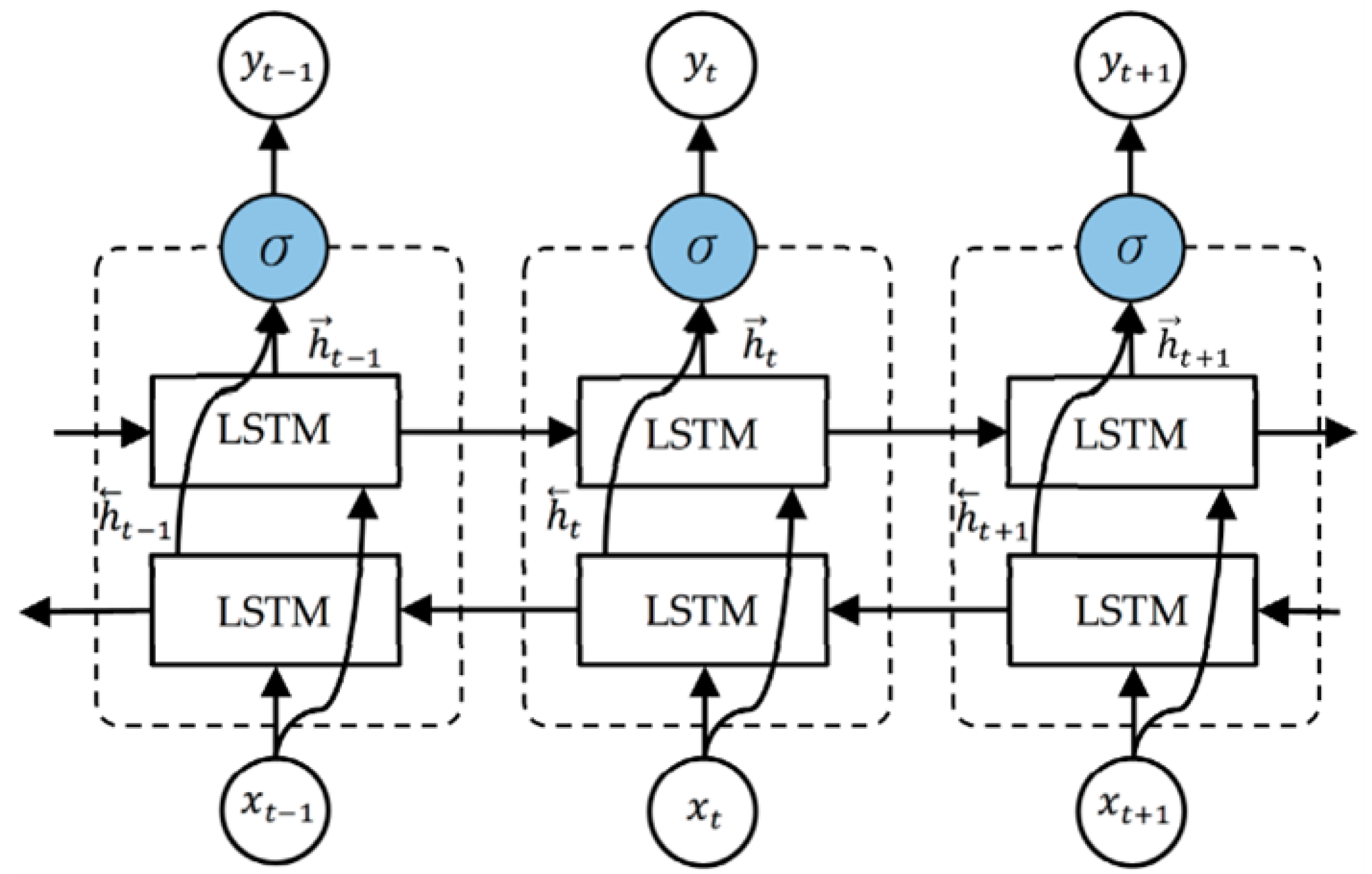

4.2. Bidirectional LSTMs

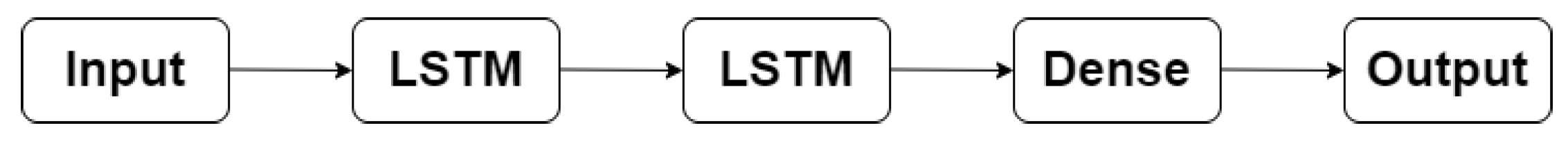

4.2.1. Stacked LSTMs

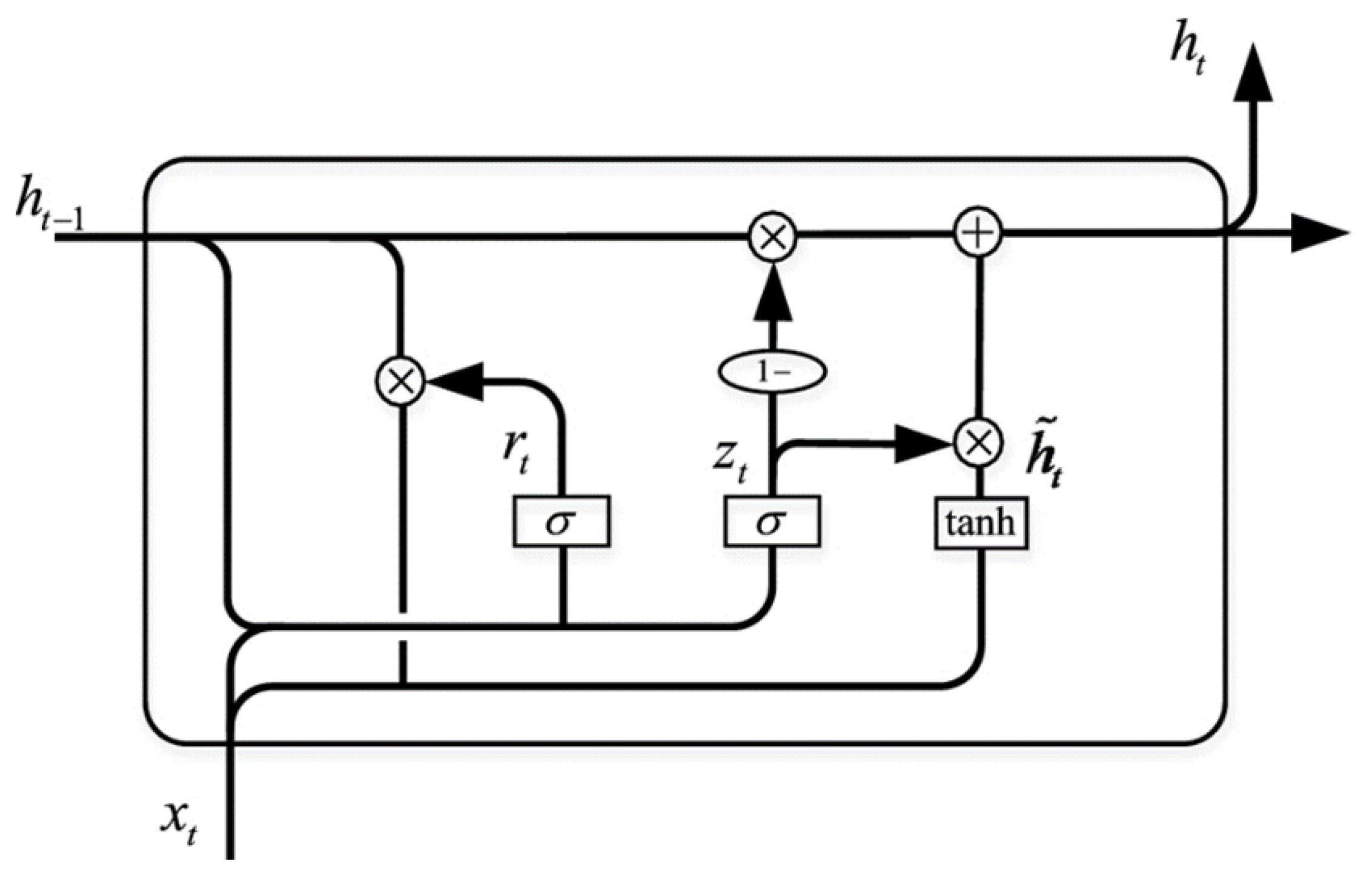

4.3. Gated Recurrent Units

4.3.1. Comparison with LSTMs

4.4. Other Notable Variants

4.4.1. Peephole LSTMs

4.4.2. Echo State Networks

4.4.3. Independently Recurrent Neural Network

5. Innovations in RNN Architectures and Training Methodologies

5.1. Hybrid Architectures

5.2. Neural Architecture Search

5.3. Advanced Optimization Techniques

5.4. RNNs with Attention Mechanisms

5.5. RNNs Integrated with Transformer Models

6. Applications of RNNs in Peer-Reviewed Literature

6.1. Natural Language Processing

6.1.1. Text Generation

6.1.2. Sentiment Analysis

6.1.3. Machine Translation

6.2. Speech Recognition

6.3. Financial Time Series Forecasting

6.4. Bioinformatics

6.5. Autonomous Vehicles

6.6. Anomaly Detection

7. Challenges and Future Research Directions

7.1. Scalability and Efficiency

7.2. Interpretability and Explainability

7.3. Bias and Fairness

7.4. Data Dependency and Quality

7.5. Overfitting and Generalization

8. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| ANN | Artificial Neural Network |
| BiLSTM | Bidirectional Long Short-Term Memory |
| CNN | Convolutional Neural Network |
| DL | Deep Learning |
| GRU | Gated Recurrent Unit |
| LSTM | Long Short-Term Memory |
| ML | Machine Learning |
| NAS | Neural Architecture Search |
| NLP | Natural Language Processing |
| RNN | Recurrent Neural Network |
| RL | Reinforcement Learning |
| SHAP | SHapley Additive exPlanations |
| TPU | Tensor Processing Unit |
| VAE | Variational Autoencoder |

References

- OâHalloran, T.; Obaido, G.; Otegbade, B.; Mienye, I.D. A deep learning approach for Maize Lethal Necrosis and Maize Streak Virus disease detection. Machine Learning with Applications 2024, 16, 100556. [Google Scholar] [CrossRef]

- Peng, Y.; He, L.; Hu, D.; Liu, Y.; Yang, L.; Shang, S. Decoupling Deep Learning for Enhanced Image Recognition Interpretability. ACM Transactions on Multimedia Computing, Communications and Applications 2024. [Google Scholar] [CrossRef]

- Khan, W.; Daud, A.; Khan, K.; Muhammad, S.; Haq, R. Exploring the frontiers of deep learning and natural language processing: A comprehensive overview of key challenges and emerging trends. Natural Language Processing Journal 2023, 100026. [Google Scholar] [CrossRef]

- Al-Jumaili, A.H.A.; Muniyandi, R.C.; Hasan, M.K.; Paw, J.K.S.; Singh, M.J. Big data analytics using cloud computing based frameworks for power management systems: Status, constraints, and future recommendations. Sensors 2023, 23, 2952. [Google Scholar] [CrossRef] [PubMed]

- Gill, S.S.; Wu, H.; Patros, P.; Ottaviani, C.; Arora, P.; Pujol, V.C.; Haunschild, D.; Parlikad, A.K.; Cetinkaya, O.; Lutfiyya, H.; et al. Modern computing: Vision and challenges. Telematics and Informatics Reports 2024, 100116. [Google Scholar] [CrossRef]

- Mienye, I.D.; Jere, N. A Survey of Decision Trees: Concepts, Algorithms, and Applications. IEEE Access 2024. [Google Scholar] [CrossRef]

- Alhajeri, M.S.; Ren, Y.M.; Ou, F.; Abdullah, F.; Christofides, P.D. Model predictive control of nonlinear processes using transfer learning-based recurrent neural networks. Chemical Engineering Research and Design 2024, 205, 1–12. [Google Scholar] [CrossRef]

- Shahinzadeh, H.; Mahmoudi, A.; Asilian, A.; Sadrarhami, H.; Hemmati, M.; Saberi, Y. Deep Learning: A Overview of Theory and Architectures. In Proceedings of the 2024 20th CSI International Symposium on Artificial Intelligence and Signal Processing (AISP); IEEE, 2024; pp. 1–11. [Google Scholar]

- Baruah, R.D.; Organero, M.M. Explicit Context Integrated Recurrent Neural Network for applications in smart environments. Expert Systems with Applications 2024, 124752. [Google Scholar] [CrossRef]

- Rumelhart, D.E.; Hinton, G.E.; Williams, R.J. Learning representations by back-propagating errors. nature 1986, 323, 533–536. [Google Scholar] [CrossRef]

- Lalapura, V.S.; Amudha, J.; Satheesh, H.S. Recurrent neural networks for edge intelligence: a survey. ACM Computing Surveys (CSUR) 2021, 54, 1–38. [Google Scholar] [CrossRef]

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural computation 1997, 9, 1735–1780. [Google Scholar] [CrossRef] [PubMed]

- Cho, K.; Van Merriënboer, B.; Gulcehre, C.; Bahdanau, D.; Bougares, F.; Schwenk, H.; Bengio, Y. Learning phrase representations using RNN encoder-decoder for statistical machine translation. arXiv preprint 2014, arXiv:1406.1078. [Google Scholar]

- Liu, F.; Li, J.; Wang, L. PI-LSTM: Physics-informed long short-term memory network for structural response modeling. Engineering Structures 2023, 292, 116500. [Google Scholar] [CrossRef]

- Ni, Q.; Ji, J.; Feng, K.; Zhang, Y.; Lin, D.; Zheng, J. Data-driven bearing health management using a novel multi-scale fused feature and gated recurrent unit. Reliability Engineering & System Safety 2024, 242, 109753. [Google Scholar]

- Niu, Z.; Zhong, G.; Yue, G.; Wang, L.N.; Yu, H.; Ling, X.; Dong, J. Recurrent attention unit: A new gated recurrent unit for long-term memory of important parts in sequential data. Neurocomputing 2023, 517, 1–9. [Google Scholar] [CrossRef]

- Lipton, Z.C.; Berkowitz, J.; Elkan, C. A critical review of recurrent neural networks for sequence learning. arXiv preprint 2015, arXiv:1506.00019. [Google Scholar]

- Yu, Y.; Si, X.; Hu, C.; Zhang, J. A review of recurrent neural networks: LSTM cells and network architectures. Neural computation 2019, 31, 1235–1270. [Google Scholar] [CrossRef] [PubMed]

- Tarwani, K.M.; Edem, S. Survey on recurrent neural network in natural language processing. Int. J. Eng. Trends Technol 2017, 48, 301–304. [Google Scholar] [CrossRef]

- Dutta, K.K.; Poornima, S.; Sharma, R.; Nair, D.; Ploeger, P.G. Applications of recurrent neural network: Overview and case studies. In Recurrent Neural Networks; CRC press, 2022; pp. 23–41. [Google Scholar]

- Quradaa, F.H.; Shahzad, S.; Almoqbily, R.S. A systematic literature review on the applications of recurrent neural networks in code clone research. Plos one 2024, 19, e0296858. [Google Scholar] [CrossRef]

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep learning; MIT press, 2016. [Google Scholar]

- Greff, K.; Srivastava, R.K.; Koutník, J.; Steunebrink, B.R.; Schmidhuber, J. LSTM: A search space odyssey. IEEE transactions on neural networks and learning systems 2016, 28, 2222–2232. [Google Scholar] [CrossRef]

- Al-Selwi, S.M.; Hassan, M.F.; Abdulkadir, S.J.; Muneer, A.; Sumiea, E.H.; Alqushaibi, A.; Ragab, M.G. RNN-LSTM: From applications to modeling techniques and beyondâSystematic review. Journal of King Saud University-Computer and Information Sciences 2024, 102068. [Google Scholar] [CrossRef]

- Zaremba, W.; Sutskever, I.; Vinyals, O. Recurrent neural network regularization. arXiv preprint 2014, arXiv:1409.2329. [Google Scholar]

- Bai, S.; Kolter, J.Z.; Koltun, V. An empirical evaluation of generic convolutional and recurrent networks for sequence modeling. arXiv 2018, arXiv:1803.01271. [Google Scholar]

- Che, Z.; Purushotham, S.; Cho, K.; Sontag, D.; Liu, Y. Recurrent neural networks for multivariate time series with missing values. Scientific reports 2018, 8, 6085. [Google Scholar] [CrossRef]

- Chung, J.; Gulcehre, C.; Cho, K.; Bengio, Y. Empirical evaluation of gated recurrent neural networks on sequence modeling. arXiv preprint 2014, arXiv:1412.3555. [Google Scholar]

- Badawy, M.; Ramadan, N.; Hefny, H.A. Healthcare predictive analytics using machine learning and deep learning techniques: a survey. Journal of Electrical Systems and Information Technology 2023, 10, 40. [Google Scholar] [CrossRef]

- Ismaeel, A.G.; Janardhanan, K.; Sankar, M.; Natarajan, Y.; Mahmood, S.N.; Alani, S.; Shather, A.H. Traffic pattern classification in smart cities using deep recurrent neural network. Sustainability 2023, 15, 14522. [Google Scholar] [CrossRef]

- Mers, M.; Yang, Z.; Hsieh, Y.A.; Tsai, Y. Recurrent neural networks for pavement performance forecasting: review and model performance comparison. Transportation Research Record 2023, 2677, 610–624. [Google Scholar] [CrossRef]

- Chen, Y.; Cheng, Q.; Cheng, Y.; Yang, H.; Yu, H. Applications of recurrent neural networks in environmental factor forecasting: a review. Neural computation 2018, 30, 2855–2881. [Google Scholar] [CrossRef]

- Linardos, V.; Drakaki, M.; Tzionas, P.; Karnavas, Y.L. Machine learning in disaster management: recent developments in methods and applications. Machine Learning and Knowledge Extraction 2022, 4. [Google Scholar] [CrossRef]

- Zhang, J.; Liu, H.; Chang, Q.; Wang, L.; Gao, R.X. Recurrent neural network for motion trajectory prediction in human-robot collaborative assembly. CIRP annals 2020, 69, 9–12. [Google Scholar] [CrossRef]

- Tsantekidis, A.; Passalis, N.; Tefas, A. Recurrent neural networks. In Deep learning for robot perception and cognition; Elsevier, 2022; pp. 101–115. [Google Scholar]

- Mienye, I.D.; Jere, N. Deep Learning for Credit Card Fraud Detection: A Review of Algorithms, Challenges, and Solutions. IEEE Access 2024, 12, 96893–96910. [Google Scholar] [CrossRef]

- Rezk, N.M.; Purnaprajna, M.; Nordström, T.; Ul-Abdin, Z. Recurrent neural networks: An embedded computing perspective. IEEE Access 2020, 8, 57967–57996. [Google Scholar] [CrossRef]

- Yu, Y.; Adu, K.; Tashi, N.; Anokye, P.; Wang, X.; Ayidzoe, M.A. Rmaf: Relu-memristor-like activation function for deep learning. IEEE Access 2020, 8, 72727–72741. [Google Scholar] [CrossRef]

- Mienye, I.D.; Ainah, P.K.; Emmanuel, I.D.; Esenogho, E. Sparse noise minimization in image classification using Genetic Algorithm and DenseNet. In Proceedings of the 2021 Conference on Information Communications Technology and Society (ICTAS); IEEE, 2021; pp. 103–108. [Google Scholar]

- Ciaburro, G.; Venkateswaran, B. Neural Networks with R: Smart models using CNN, RNN, deep learning, and artificial intelligence principles; Packt Publishing Ltd, 2017. [Google Scholar]

- Nwankpa, C.; Ijomah, W.; Gachagan, A.; Marshall, S. Activation functions: Comparison of trends in practice and research for deep learning. arXiv preprint 2018, arXiv:1811.03378. [Google Scholar]

- Szandała, T. Review and comparison of commonly used activation functions for deep neural networks. Bio-inspired neurocomputing 2021, 203–224. [Google Scholar]

- Clevert, D.A.; Unterthiner, T.; Hochreiter, S. Fast and accurate deep network learning by exponential linear units (elus). arXiv preprint 2015, arXiv:1511.07289. [Google Scholar]

- Dubey, S.R.; Singh, S.K.; Chaudhuri, B.B. Activation functions in deep learning: A comprehensive survey and benchmark. Neurocomputing 2022, 503, 92–108. [Google Scholar] [CrossRef]

- Mienye, I.D.; Sun, Y.; Wang, Z. An improved ensemble learning approach for the prediction of heart disease risk. Informatics in Medicine Unlocked 2020, 20, 100402. [Google Scholar] [CrossRef]

- Martins, A.; Astudillo, R. From softmax to sparsemax: A sparse model of attention and multi-label classification. In Proceedings of the International conference on machine learning. PMLR; 2016; pp. 1614–1623. [Google Scholar]

- Bianchi, F.M.; Maiorino, E.; Kampffmeyer, M.C.; Rizzi, A.; Jenssen, R.; Bianchi, F.M.; Maiorino, E.; Kampffmeyer, M.C.; Rizzi, A.; Jenssen, R. Properties and training in recurrent neural networks. In Recurrent Neural Networks for Short-Term Load Forecasting: An Overview and Comparative Analysis; 2017; pp. 9–21. [Google Scholar]

- Mohajerin, N.; Waslander, S.L. State initialization for recurrent neural network modeling of time-series data. In Proceedings of the 2017 International Joint Conference on Neural Networks (IJCNN). IEEE; 2017; pp. 2330–2337. [Google Scholar]

- Forgione, M.; Muni, A.; Piga, D.; Gallieri, M. On the adaptation of recurrent neural networks for system identification. Automatica 2023, 155, 111092. [Google Scholar] [CrossRef]

- Fei, H.; Tan, F. Bidirectional grid long short-term memory (bigridlstm): A method to address context-sensitivity and vanishing gradient. Algorithms 2018, 11, 172. [Google Scholar] [CrossRef]

- Dong, X.; Chowdhury, S.; Qian, L.; Li, X.; Guan, Y.; Yang, J.; Yu, Q. Deep learning for named entity recognition on Chinese electronic medical records: Combining deep transfer learning with multitask bi-directional LSTM RNN. PloS one 2019, 14, e0216046. [Google Scholar] [CrossRef] [PubMed]

- Chorowski, J.K.; Bahdanau, D.; Serdyuk, D.; Cho, K.; Bengio, Y. Attention-based models for speech recognition. Advances in neural information processing systems 2015, 28. [Google Scholar]

- Zhou, M.; Duan, N.; Liu, S.; Shum, H.Y. Progress in neural NLP: modeling, learning, and reasoning. Engineering 2020, 6, 275–290. [Google Scholar] [CrossRef]

- Naseem, U.; Razzak, I.; Khan, S.K.; Prasad, M. A comprehensive survey on word representation models: From classical to state-of-the-art word representation language models. Transactions on Asian and Low-Resource Language Information Processing 2021, 20, 1–35. [Google Scholar] [CrossRef]

- Adil, M.; Wu, J.Z.; Chakrabortty, R.K.; Alahmadi, A.; Ansari, M.F.; Ryan, M.J. Attention-based STL-BiLSTM network to forecast tourist arrival. Processes 2021, 9, 1759. [Google Scholar] [CrossRef]

- Min, S.; Park, S.; Kim, S.; Choi, H.S.; Lee, B.; Yoon, S. Pre-training of deep bidirectional protein sequence representations with structural information. IEEE Access 2021, 9, 123912–123926. [Google Scholar] [CrossRef]

- Jain, A.; Zamir, A.R.; Savarese, S.; Saxena, A. Structural-rnn: Deep learning on spatio-temporal graphs. In Proceedings of the Proceedings of the ieee conference on computer vision and pattern recognition; 2016; pp. 5308–5317. [Google Scholar]

- Pascanu, R.; Gulcehre, C.; Cho, K.; Bengio, Y. How to construct deep recurrent neural networks. arXiv preprint 2013, arXiv:1312.6026. [Google Scholar]

- Shi, H.; Xu, M.; Li, R. Deep learning for household load forecastingâA novel pooling deep RNN. IEEE Transactions on Smart Grid 2017, 9, 5271–5280. [Google Scholar] [CrossRef]

- Gal, Y.; Ghahramani, Z. A theoretically grounded application of dropout in recurrent neural networks. Advances in neural information processing systems 2016, 29. [Google Scholar]

- Moradi, R.; Berangi, R.; Minaei, B. A survey of regularization strategies for deep models. Artificial Intelligence Review 2020, 53, 3947–3986. [Google Scholar] [CrossRef]

- Salehin, I.; Kang, D.K. A review on dropout regularization approaches for deep neural networks within the scholarly domain. Electronics 2023, 12, 3106. [Google Scholar] [CrossRef]

- Cai, S.; Shu, Y.; Chen, G.; Ooi, B.C.; Wang, W.; Zhang, M. Effective and efficient dropout for deep convolutional neural networks. arXiv preprint 2019, arXiv:1904.03392. [Google Scholar]

- Garbin, C.; Zhu, X.; Marques, O. Dropout vs. batch normalization: an empirical study of their impact to deep learning. Multimedia tools and applications 2020, 79, 12777–12815. [Google Scholar] [CrossRef]

- Borawar, L.; Kaur, R. ResNet: Solving vanishing gradient in deep networks. In Proceedings of the Proceedings of International Conference on Recent Trends in Computing: ICRTC 2022; Springer, 2023; pp. 235–247. [Google Scholar]

- Mienye, I.D.; Sun, Y. A deep learning ensemble with data resampling for credit card fraud detection. IEEE Access 2023, 11, 30628–30638. [Google Scholar] [CrossRef]

- Kiperwasser, E.; Goldberg, Y. Simple and accurate dependency parsing using bidirectional LSTM feature representations. Transactions of the Association for Computational Linguistics 2016, 4, 313–327. [Google Scholar] [CrossRef]

- Zhang, W.; Li, H.; Tang, L.; Gu, X.; Wang, L.; Wang, L. Displacement prediction of Jiuxianping landslide using gated recurrent unit (GRU) networks. Acta Geotechnica 2022, 17, 1367–1382. [Google Scholar] [CrossRef]

- Cahuantzi, R.; Chen, X.; Güttel, S. A comparison of LSTM and GRU networks for learning symbolic sequences. In Proceedings of the Science and Information Conference. Springer; 2023; pp. 771–785. [Google Scholar]

- Shewalkar, A.; Nyavanandi, D.; Ludwig, S.A. Performance evaluation of deep neural networks applied to speech recognition: RNN, LSTM and GRU. Journal of Artificial Intelligence and Soft Computing Research 2019, 9, 235–245. [Google Scholar] [CrossRef]

- Vatanchi, S.M.; Etemadfard, H.; Maghrebi, M.F.; Shad, R. A comparative study on forecasting of long-term daily streamflow using ANN, ANFIS, BiLSTM and CNN-GRU-LSTM. Water Resources Management 2023, 37, 4769–4785. [Google Scholar] [CrossRef]

- Mateus, B.C.; Mendes, M.; Farinha, J.T.; Assis, R.; Cardoso, A.M. Comparing LSTM and GRU models to predict the condition of a pulp paper press. Energies 2021, 14, 6958. [Google Scholar] [CrossRef]

- Gers, F.A.; Schmidhuber, J. Recurrent nets that time and count. In Proceedings of the Proceedings of the IEEE-INNS-ENNS International Joint Conference on Neural Networks. IJCNN 2000. Neural Computing: New Challenges and Perspectives for the New Millennium; IEEE, 2000; Vol. 3, pp. 3189–194. [Google Scholar]

- Gers, F.A.; Schraudolph, N.N.; Schmidhuber, J. Learning precise timing with LSTM recurrent networks. Journal of machine learning research 2002, 3, 115–143. [Google Scholar]

- Jaeger, H. Adaptive nonlinear system identification with echo state networks. Advances in neural information processing systems 2002, 15. [Google Scholar]

- Li, S.; Li, W.; Cook, C.; Zhu, C.; Gao, Y. Independently recurrent neural network (indrnn): Building a longer and deeper rnn. In Proceedings of the Proceedings of the IEEE conference on computer vision and pattern recognition; 2018; pp. 5457–5466. [Google Scholar]

- Yang, J.; Qu, J.; Mi, Q.; Li, Q. A CNN-LSTM model for tailings dam risk prediction. IEEE Access 2020, 8, 206491–206502. [Google Scholar] [CrossRef]

- Ren, P.; Xiao, Y.; Chang, X.; Huang, P.Y.; Li, Z.; Chen, X.; Wang, X. A comprehensive survey of neural architecture search: Challenges and solutions. ACM Computing Surveys (CSUR) 2021, 54, 1–34. [Google Scholar] [CrossRef]

- Mellor, J.; Turner, J.; Storkey, A.; Crowley, E.J. Neural architecture search without training. In Proceedings of the International conference on machine learning. PMLR; 2021; pp. 7588–7598. [Google Scholar]

- Zoph, B.; Le, Q.V. Neural architecture search with reinforcement learning. arXiv preprint 2016, arXiv:1611.01578. [Google Scholar]

- Chen, X.; Wu, S.Z.; Hong, M. Understanding gradient clipping in private sgd: A geometric perspective. Advances in Neural Information Processing Systems 2020, 33, 13773–13782. [Google Scholar]

- Qian, J.; Wu, Y.; Zhuang, B.; Wang, S.; Xiao, J. Understanding gradient clipping in incremental gradient methods. In Proceedings of the International Conference on Artificial Intelligence and Statistics. PMLR; 2021; pp. 1504–1512. [Google Scholar]

- Zhang, Z. Improved adam optimizer for deep neural networks. In Proceedings of the 2018 IEEE/ACM 26th international symposium on quality of service (IWQoS); Ieee, 2018; pp. 1–2. [Google Scholar]

- de Santana Correia, A.; Colombini, E.L. Attention, please! A survey of neural attention models in deep learning. Artificial Intelligence Review 2022, 55, 6037–6124. [Google Scholar] [CrossRef]

- Lin, J.; Ma, J.; Zhu, J.; Cui, Y. Short-term load forecasting based on LSTM networks considering attention mechanism. International Journal of Electrical Power & Energy Systems 2022, 137, 107818. [Google Scholar]

- Chaudhari, S.; Mithal, V.; Polatkan, G.; Ramanath, R. An attentive survey of attention models. ACM Transactions on Intelligent Systems and Technology (TIST) 2021, 12, 1–32. [Google Scholar] [CrossRef]

- Bahdanau, D.; Cho, K.; Bengio, Y. Neural machine translation by jointly learning to align and translate. arXiv preprint 2014, arXiv:1409.0473. [Google Scholar]

- Luong, M.T.; Pham, H.; Manning, C.D. Effective approaches to attention-based neural machine translation. arXiv preprint 2015, arXiv:1508.04025. [Google Scholar]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Advances in neural information processing systems 2017, 30. [Google Scholar]

- Souri, A.; El Maazouzi, Z.; Al Achhab, M.; El Mohajir, B.E. Arabic text generation using recurrent neural networks. In Proceedings of the Big Data, Cloud and Applications: Third International Conference, BDCA 2018, Kenitra, Morocco, April 4–5, 2018; Revised Selected Papers 3. Springer, 2018; pp. 523–533. [Google Scholar]

- Islam, M.S.; Mousumi, S.S.S.; Abujar, S.; Hossain, S.A. Sequence-to-sequence Bangla sentence generation with LSTM recurrent neural networks. Procedia Computer Science 2019, 152, 51–58. [Google Scholar] [CrossRef]

- Gajendran, S.; Manjula, D.; Sugumaran, V. Character level and word level embedding with bidirectional LSTM–Dynamic recurrent neural network for biomedical named entity recognition from literature. Journal of Biomedical Informatics 2020, 112, 103609. [Google Scholar] [CrossRef] [PubMed]

- Hu, H.; Liao, M.; Mao, W.; Liu, W.; Zhang, C.; Jing, Y. Variational auto-encoder for text generation. In Proceedings of the 2020 IEEE 5th Information Technology and Mechatronics Engineering Conference (ITOEC). IEEE; 2020; pp. 595–598. [Google Scholar]

- Holtzman, A.; Buys, J.; Du, L.; Forbes, M.; Choi, Y. The curious case of neural text degeneration. arXiv preprint 2019, arXiv:1904.09751. [Google Scholar]

- Yin, W.; Schütze, H. Attentive convolution: Equipping cnns with rnn-style attention mechanisms. Transactions of the Association for Computational Linguistics 2018, 6, 687–702. [Google Scholar] [CrossRef]

- Hussein, M.A.H.; Savaş, S. LSTM-Based Text Generation: A Study on Historical Datasets. arXiv preprint 2024, arXiv:2403.07087. [Google Scholar]

- Baskaran, S.; Alagarsamy, S.; S, S.; Shivam, S. Text Generation using Long Short-Term Memory. In Proceedings of the 2024 Third International Conference on Intelligent Techniques in Control, Optimization and Signal Processing (INCOS); 2024; pp. 1–6. [Google Scholar] [CrossRef]

- Keskar, N.S.; McCann, B.; Varshney, L.R.; Xiong, C.; Socher, R. Ctrl: A conditional transformer language model for controllable generation. arXiv preprint 2019, arXiv:1909.05858. [Google Scholar]

- Guo, H. Generating text with deep reinforcement learning. arXiv preprint 2015, arXiv:1510.09202. [Google Scholar]

- Yadav, V.; Verma, P.; Katiyar, V. Long short term memory (LSTM) model for sentiment analysis in social data for e-commerce products reviews in Hindi languages. International Journal of Information Technology 2023, 15, 759–772. [Google Scholar] [CrossRef]

- Abimbola, B.; de La Cal Marin, E.; Tan, Q. Enhancing Legal Sentiment Analysis: A Convolutional Neural Network–Long Short-Term Memory Document-Level Model. Machine Learning and Knowledge Extraction 2024, 6, 877–897. [Google Scholar] [CrossRef]

- Zulqarnain, M.; Ghazali, R.; Aamir, M.; Hassim, Y.M.M. An efficient two-state GRU based on feature attention mechanism for sentiment analysis. Multimedia Tools and Applications 2024, 83, 3085–3110. [Google Scholar] [CrossRef]

- Pujari, P.; Padalia, A.; Shah, T.; Devadkar, K. Hybrid CNN and RNN for Twitter Sentiment Analysis. In Proceedings of the International Conference on Smart Computing and Communication; Springer, 2024; pp. 297–310. [Google Scholar]

- Wankhade, M.; Annavarapu, C.S.R.; Abraham, A. CBMAFM: CNN-BiLSTM multi-attention fusion mechanism for sentiment classification. Multimedia Tools and Applications 2024, 83, 51755–51786. [Google Scholar] [CrossRef]

- Sangeetha, J.; Kumaran, U. A hybrid optimization algorithm using BiLSTM structure for sentiment analysis. Measurement: Sensors 2023, 25, 100619. [Google Scholar] [CrossRef]

- He, R.; McAuley, J. Ups and downs: Modeling the visual evolution of fashion trends with one-class collaborative filtering. In Proceedings of the proceedings of the 25th international conference on world wide web; 2016; pp. 507–517. [Google Scholar]

- Samir, A.; Elkaffas, S.M.; Madbouly, M.M. Twitter sentiment analysis using BERT. In Proceedings of the 2021 31st international conference on computer theory and applications (ICCTA); IEEE, 2021; pp. 182–186. [Google Scholar]

- Prottasha, N.J.; Sami, A.A.; Kowsher, M.; Murad, S.A.; Bairagi, A.K.; Masud, M.; Baz, M. Transfer learning for sentiment analysis using BERT based supervised fine-tuning. Sensors 2022, 22, 4157. [Google Scholar] [CrossRef] [PubMed]

- Mujahid, M.; Rustam, F.; Shafique, R.; Chunduri, V.; Villar, M.G.; Ballester, J.B.; Diez, I.d.l.T.; Ashraf, I. Analyzing sentiments regarding ChatGPT using novel BERT: A machine learning approach. Information 2023, 14, 474. [Google Scholar] [CrossRef]

- Wu, Y.; Schuster, M.; Chen, Z.; Le, Q.V.; Norouzi, M.; Macherey, W.; Krikun, M.; Cao, Y.; Gao, Q.; Macherey, K.; et al. Google’s neural machine translation system: Bridging the gap between human and machine translation. arXiv preprint 2016, arXiv:1609.08144. [Google Scholar]

- Sennrich, R.; Haddow, B.; Birch, A. Neural machine translation of rare words with subword units. arXiv preprint 2015, arXiv:1508.07909. [Google Scholar]

- Kang, L.; He, S.; Wang, M.; Long, F.; Su, J. Bilingual attention based neural machine translation. Applied Intelligence 2023, 53, 4302–4315. [Google Scholar] [CrossRef]

- Yang, Z.; Dai, Z.; Salakhutdinov, R.; Cohen, W.W. Breaking the softmax bottleneck: A high-rank RNN language model. arXiv preprint 2017, arXiv:1711.03953. [Google Scholar]

- Song, K.; Tan, X.; Qin, T.; Lu, J.; Liu, T.Y. Mass: Masked sequence to sequence pre-training for language generation. arXiv preprint 2019, arXiv:1905.02450. [Google Scholar]

- Hinton, G.; Deng, L.; Yu, D.; Dahl, G.E.; Mohamed, A.r.; Jaitly, N.; Senior, A.; Vanhoucke, V.; Nguyen, P.; Sainath, T.N.; et al. Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups. IEEE Signal processing magazine 2012, 29, 82–97. [Google Scholar] [CrossRef]

- Hannun, A.; Case, C.; Casper, J.; Catanzaro, B.; Diamos, G.; Elsen, E.; Prenger, R.; Satheesh, S.; Sengupta, S.; Coates, A.; et al. Deep speech: Scaling up end-to-end speech recognition. arXiv preprint 2014, arXiv:1412.5567. [Google Scholar]

- Amodei, D.; Ananthanarayanan, S.; Anubhai, R.; Bai, J.; Battenberg, E.; Case, C.; Casper, J.; Catanzaro, B.; Cheng, Q.; Chen, G.; et al. Deep speech 2: End-to-end speech recognition in english and mandarin. In Proceedings of the International conference on machine learning. PMLR; 2016; pp. 173–182. [Google Scholar]

- Chiu, C.C.; Sainath, T.N.; Wu, Y.; Prabhavalkar, R.; Nguyen, P.; Chen, Z.; Kannan, A.; Weiss, R.J.; Rao, K.; Gonina, E.; et al. State-of-the-art speech recognition with sequence-to-sequence models. In Proceedings of the 2018 IEEE international conference on acoustics, speech and signal processing (ICASSP); IEEE, 2018; pp. 4774–4778. [Google Scholar]

- Zhang, Y.; Chan, W.; Jaitly, N. Very deep convolutional networks for end-to-end speech recognition. In Proceedings of the 2017 IEEE international conference on acoustics, speech and signal processing (ICASSP); IEEE, 2017; pp. 4845–4849. [Google Scholar]

- Dong, L.; Xu, S.; Xu, B. Speech-transformer: a no-recurrence sequence-to-sequence model for speech recognition. In Proceedings of the 2018 IEEE international conference on acoustics, speech and signal processing (ICASSP); IEEE, 2018; pp. 5884–5888. [Google Scholar]

- Bhaskar, S.; Thasleema, T. LSTM model for visual speech recognition through facial expressions. Multimedia Tools and Applications 2023, 82, 5455–5472. [Google Scholar] [CrossRef]

- Daouad, M.; Allah, F.A.; Dadi, E.W. An automatic speech recognition system for isolated Amazigh word using 1D & 2D CNN-LSTM architecture. International Journal of Speech Technology 2023, 26, 775–787. [Google Scholar]

- Dhanjal, A.S.; Singh, W. A comprehensive survey on automatic speech recognition using neural networks. Multimedia Tools and Applications 2024, 83, 23367–23412. [Google Scholar] [CrossRef]

- Nasr, S.; Duwairi, R.; Quwaider, M. End-to-end speech recognition for arabic dialects. Arabian Journal for Science and Engineering 2023, 48, 10617–10633. [Google Scholar] [CrossRef]

- Kumar, D.; Aziz, S. Performance Evaluation of Recurrent Neural Networks-LSTM and GRU for Automatic Speech Recognition. In Proceedings of the 2023 International Conference on Computer, Electronics & Electrical Engineering & their Applications (IC2E3); IEEE, 2023; pp. 1–6. [Google Scholar]

- Fischer, T.; Krauss, C. Deep learning with long short-term memory networks for financial market predictions. European journal of operational research 2018, 270, 654–669. [Google Scholar] [CrossRef]

- Nelson, D.M.; Pereira, A.C.; De Oliveira, R.A. Stock market’s price movement prediction with LSTM neural networks. In Proceedings of the 2017 International joint conference on neural networks (IJCNN). Ieee; 2017; pp. 1419–1426. [Google Scholar]

- Luo, A.; Zhong, L.; Wang, J.; Wang, Y.; Li, S.; Tai, W. Short-term stock correlation forecasting based on CNN-BiLSTM enhanced by attention mechanism. IEEE Access 2024. [Google Scholar] [CrossRef]

- Bao, W.; Yue, J.; Rao, Y. A deep learning framework for financial time series using stacked autoencoders and long-short term memory. PloS one 2017, 12, e0180944. [Google Scholar] [CrossRef]

- Feng, F.; Chen, H.; He, X.; Ding, J.; Sun, M.; Chua, T.S. Enhancing Stock Movement Prediction with Adversarial Training. In Proceedings of the IJCAI, Vol. 19; 2019; pp. 5843–5849. [Google Scholar]

- Rundo, F. Deep LSTM with reinforcement learning layer for financial trend prediction in FX high frequency trading systems. Applied Sciences 2019, 9, 4460. [Google Scholar] [CrossRef]

- Li, Y.; Huang, C.; Ding, L.; Li, Z.; Pan, Y.; Gao, X. Deep learning in bioinformatics: Introduction, application, and perspective in the big data era. Methods 2019, 166, 4–21. [Google Scholar] [CrossRef] [PubMed]

- Zhang, Y.; Qiao, S.; Ji, S.; Li, Y. DeepSite: bidirectional LSTM and CNN models for predicting DNA–protein binding. International Journal of Machine Learning and Cybernetics 2020, 11, 841–851. [Google Scholar] [CrossRef]

- Xu, J.; Mcpartlon, M.; Li, J. Improved protein structure prediction by deep learning irrespective of co-evolution information. Nature Machine Intelligence 2021, 3, 601–609. [Google Scholar] [CrossRef] [PubMed]

- Yadav, S.; Ekbal, A.; Saha, S.; Kumar, A.; Bhattacharyya, P. Feature assisted stacked attentive shortest dependency path based Bi-LSTM model for protein–protein interaction. Knowledge-Based Systems 2019, 166, 18–29. [Google Scholar] [CrossRef]

- Aybey, E.; Gümüş, Ö. SENSDeep: an ensemble deep learning method for protein–protein interaction sites prediction. Interdisciplinary Sciences: Computational Life Sciences 2023, 15, 55–87. [Google Scholar] [CrossRef] [PubMed]

- Li, Z.; Du, X.; Cao, Y. DAT-RNN: trajectory prediction with diverse attention. In Proceedings of the 2020 19th IEEE International Conference on Machine Learning and Applications (ICMLA); IEEE, 2020; pp. 1512–1518.

- Lee, M.J.; Ha, Y.G. Autonomous Driving Control Using End-to-End Deep Learning. In Proceedings of the 2020 IEEE International Conference on Big Data and Smart Computing (BigComp); 2020; pp. 470–473.

- Codevilla, F.; Müller, M.; López, A.; Koltun, V.; Dosovitskiy, A. End-to-end driving via conditional imitation learning. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA); IEEE, 2018; pp. 4693–4700.

- Altché, F.; de La Fortelle, A. An LSTM network for highway trajectory prediction. In Proceedings of the 2017 IEEE 20th International Conference on Intelligent Transportation Systems (ITSC); IEEE, 2017; pp. 353–359.

- Li, P.; Zhang, Y.; Yuan, L.; Xiao, H.; Lin, B.; Xu, X. Efficient long-short temporal attention network for unsupervised video object segmentation. Pattern Recognition 2024, 146, 110078.

- Li, R.; Shu, X.; Li, C. Driving Behavior Prediction Based on Combined Neural Network Model. IEEE Transactions on Computational Social Systems 2024, 11, 4488–4496.

- Liu, Y.; Diao, S. An automatic driving trajectory planning approach in complex traffic scenarios based on integrated driver style inference and deep reinforcement learning. PLoS ONE 2024, 19, e0297192.

- Altindal, M.C.; Nivlet, P.; Tabib, M.; Rasheed, A.; Kristiansen, T.G.; Khosravanian, R. Anomaly detection in multivariate time series of drilling data. Geoenergy Science and Engineering 2024, 237, 212778.

- Matar, M.; Xia, T.; Huguenard, K.; Huston, D.; Wshah, S. Multi-head attention based Bi-LSTM for anomaly detection in multivariate time-series of WSN. In Proceedings of the 2023 IEEE 5th International Conference on Artificial Intelligence Circuits and Systems (AICAS); IEEE, 2023; pp. 1–5.

- Kumaresan, S.J.; Senthilkumar, C.; Kongkham, D.; Beenarani, B.; Nirmala, P. Investigating the Effectiveness of Recurrent Neural Networks for Network Anomaly Detection. In Proceedings of the 2024 International Conference on Intelligent and Innovative Technologies in Computing, Electrical and Electronics (IITCEE); IEEE, 2024; pp. 1–5.

- Li, E.; Bedi, S.; Melek, W. Anomaly detection in three-axis CNC machines using LSTM networks and transfer learning. The International Journal of Advanced Manufacturing Technology 2023, 127, 5185–5198.

- Minic, A.; Jovanovic, L.; Bacanin, N.; Stoean, C.; Zivkovic, M.; Spalevic, P.; Petrovic, A.; Dobrojevic, M.; Stoean, R. Applying recurrent neural networks for anomaly detection in electrocardiogram sensor data. Sensors 2023, 23, 9878.

- Zhou, C.; Paffenroth, R.C. Anomaly detection with robust deep autoencoders. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining; 2017; pp. 665–674.

- Ren, H.; Xu, B.; Wang, Y.; Yi, C.; Huang, C.; Kou, X.; Xing, T.; Yang, M.; Tong, J.; Zhang, Q. Time-series anomaly detection service at Microsoft. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining; 2019; pp. 3009–3017.

- Munir, M.; Siddiqui, S.A.; Dengel, A.; Ahmed, S. DeepAnT: A deep learning approach for unsupervised anomaly detection in time series. IEEE Access 2018, 7, 1991–2005.

- Hewamalage, H.; Bergmeir, C.; Bandara, K. Recurrent neural networks for time series forecasting: Current status and future directions. International Journal of Forecasting 2021, 37, 388–427.

- Ahmed, S.F.; Alam, M.S.B.; Hassan, M.; Rozbu, M.R.; Ishtiak, T.; Rafa, N.; Mofijur, M.; Shawkat Ali, A.; Gandomi, A.H. Deep learning modelling techniques: current progress, applications, advantages, and challenges. Artificial Intelligence Review 2023, 56, 13521–13617.

- Li, X.; Qin, T.; Yang, J.; Liu, T.Y. LightRNN: Memory and computation-efficient recurrent neural networks. Advances in Neural Information Processing Systems 2016, 29.

- Katharopoulos, A.; Vyas, A.; Pappas, N.; Fleuret, F. Transformers are RNNs: Fast autoregressive transformers with linear attention. In Proceedings of the International Conference on Machine Learning; PMLR, 2020; pp. 5156–5165.

- Shao, W.; Li, B.; Yu, W.; Xu, J.; Wang, H. When Is It Likely to Fail? Performance Monitor for Black-Box Trajectory Prediction Model. IEEE Transactions on Automation Science and Engineering 2024.

- Jacobs, W.R.; Kadirkamanathan, V.; Anderson, S.R. Interpretable deep learning for nonlinear system identification using frequency response functions with ensemble uncertainty quantification. IEEE Access 2024.

- Mamalakis, M.; Mamalakis, A.; Agartz, I.; Mørch-Johnsen, L.E.; Murray, G.; Suckling, J.; Lio, P. Solving the enigma: Deriving optimal explanations of deep networks. arXiv preprint 2024, arXiv:2405.10008.

- Shah, M.; Sureja, N. A Comprehensive Review of Bias in Deep Learning Models: Methods, Impacts, and Future Directions. Archives of Computational Methods in Engineering 2024, 1–13.

- Goethals, S.; Calders, T.; Martens, D. Beyond Accuracy-Fairness: Stop evaluating bias mitigation methods solely on between-group metrics. arXiv preprint 2024, arXiv:2401.13391.

- Weerts, H.; Pfisterer, F.; Feurer, M.; Eggensperger, K.; Bergman, E.; Awad, N.; Vanschoren, J.; Pechenizkiy, M.; Bischl, B.; Hutter, F. Can fairness be automated? Guidelines and opportunities for fairness-aware AutoML. Journal of Artificial Intelligence Research 2024, 79, 639–677.

- Bai, Y.; Geng, X.; Mangalam, K.; Bar, A.; Yuille, A.L.; Darrell, T.; Malik, J.; Efros, A.A. Sequential modeling enables scalable learning for large vision models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2024; pp. 22861–22872.

- Taye, M.M. Understanding of machine learning with deep learning: architectures, workflow, applications and future directions. Computers 2023, 12, 91.

| Reference | Year | Description |
|---|---|---|
| Zaremba et al. [25] | 2014 | Insights into RNNs in language modeling. |
| Chung et al. [28] | 2014 | Survey of advancements in RNN training, optimization, and architectures. |
| Goodfellow et al. [22] | 2016 | Review of deep learning, including RNNs. |
| Greff et al. [23] | 2016 | Extensive comparison of LSTM variants. |
| Tarwani et al. [19] | 2017 | In-depth analysis of RNNs in NLP. |
| Chen et al. [32] | 2018 | Effectiveness of RNNs in environmental monitoring and climate modeling. |
| Bai et al. [26] | 2018 | Comparison of RNNs with other sequence modeling techniques such as CNNs and attention mechanisms. |
| Che et al. [27] | 2018 | Potential of RNNs in medical applications. |
| Zhang et al. [34] | 2020 | RNN applications in robotics, including path planning, motion control, and human-robot interaction. |
| Dutta et al. [20] | 2022 | Overview of RNNs, challenges in training, and advancements in LSTM and GRU for sequence learning. |
| Linardos et al. [33] | 2022 | RNNs for early warning systems, disaster response, and recovery planning in natural disaster prediction. |
| Badawy et al. [29] | 2023 | Integration of RNNs with other ML techniques for predictive analytics and patient monitoring in healthcare. |
| Ismaeel et al. [30] | 2023 | Application of RNNs in smart city technologies, including traffic prediction, energy management, and urban planning. |
| Mers et al. [31] | 2023 | Performance comparison of various RNN models in pavement performance forecasting. |
| Quradaa et al. [21] | 2024 | State-of-the-art review of RNNs, covering core architectures with a focus on applications in code clones. |
| Al-Selwi et al. [24] | 2024 | Review of LSTM applications from 2018–2023. |

| Application Domain | Reference | Year | Methods and Application |
|---|---|---|---|
| Text Generation | Souri et al. [90] | 2018 | RNNs for generating coherent and contextually relevant Arabic text. |
| | Holtzman et al. [94] | 2019 | Controlled text generation using RNNs for style and content control. |
| | Hu et al. [93] | 2020 | VAEs combined with RNNs to enhance creativity in text generation. |
| | Gajendran et al. [92] | 2020 | Character-level text generation using BiLSTMs for various tasks. |
| | Hussein and Savas [96] | 2024 | LSTM for text generation. |
| | Baskaran et al. [97] | 2024 | LSTM for text generation, achieving excellent performance. |
| | Islam [91] | 2019 | Sequence-to-sequence framework using LSTM for improved text generation quality. |
| | Yin et al. [95] | 2018 | Attention mechanisms with RNNs for improved text generation quality. |
| | Guo [99] | 2015 | Integration of reinforcement learning with RNNs for text generation. |
| | Keskar et al. [98] | 2019 | Conditional Transformer Language model (CTRL) for generating text in various styles. |
| Sentiment Analysis | He and McAuley [106] | 2016 | Adversarial training framework for robustness in sentiment analysis. |
| | Pujari et al. [103] | 2024 | Hybrid CNN-RNN model for sentiment classification. |
| | Wankhade et al. [104] | 2024 | Fusion of CNN and BiLSTM with attention mechanism for sentiment classification. |
| | Sangeetha and Kumaran [105] | 2023 | BiLSTMs for sentiment analysis by processing text in both directions. |
| | Yadav et al. [100] | 2023 | LSTM-based models for sentiment analysis in customer reviews and social media posts. |
| | Zulqarnain et al. [102] | 2024 | Attention mechanisms and GRU for enhanced sentiment analysis. |
| | Samir et al. [107] | 2021 | Use of pre-trained models like BERT for sentiment analysis. |
| | Prottasha et al. [108] | 2022 | Transfer learning with BERT and GPT for sentiment analysis. |
| | Abimbola et al. [101] | 2024 | Hybrid LSTM-CNN model for document-level sentiment classification. |
| | Mujahid et al. [109] | 2023 | Analyzing sentiment with pre-trained models fine-tuned for specific tasks. |
| Machine Translation | Sennrich et al. [111] | 2015 | Byte-Pair Encoding (BPE) for handling rare words in translation models. |
| | Wu et al. [110] | 2016 | Google Neural Machine Translation (GNMT) with deep RNNs for improved accuracy. |
| | Vaswani et al. [89] | 2017 | Fully attention-based transformer models for superior translation performance. |
| | Yang et al. [113] | 2017 | Hybrid model integrating RNNs into the transformer architecture. |
| | Song et al. [114] | 2019 | Incorporating BERT into translation models for enhanced understanding and fluency. |
| | Kang et al. [112] | 2023 | Bilingual attention-based machine translation model combining RNN with attention. |
| | Zulqarnain et al. [102] | 2024 | Multi-stage feature attention mechanism model using GRU. |

| Application Domain | Reference | Year | Methods and Application |
|---|---|---|---|
| Speech Recognition | Hinton et al. [115] | 2012 | Deep neural networks, including RNNs, for speech-to-text systems. |
| | Hannun et al. [116] | 2014 | DeepSpeech: LSTM-based speech recognition system. |
| | Amodei et al. [117] | 2016 | DeepSpeech2: Enhanced LSTM-based speech recognition with bidirectional RNNs. |
| | Zhang et al. [119] | 2017 | Convolutional recurrent neural networks (CRNNs) for robust speech recognition. |
| | Chiu et al. [118] | 2018 | RNN-transducer (RNN-T) models for end-to-end speech recognition. |
| | Dong et al. [120] | 2018 | Speech-Transformer: Leveraging self-attention for better processing of audio sequences. |
| | Bhaskar and Thasleema [121] | 2023 | LSTM for visual speech recognition using facial expressions. |
| | Daouad et al. [122] | 2023 | Various RNN variants for automatic speech recognition. |
| | Nasr et al. [124] | 2023 | End-to-end speech recognition using RNNs. |
| | Kumar et al. [125] | 2023 | Performance evaluation of RNNs in speech recognition tasks. |
| | Dhanjal et al. [123] | 2024 | Comprehensive study on different RNN models for speech recognition. |
| Financial Time Series Forecasting | Nelson et al. [127] | 2017 | Hybrid CNN-RNN model for stock price prediction. |
| | Bao et al. [129] | 2017 | Combining LSTMs with stacked autoencoders for financial time series forecasting. |
| | Fischer and Krauss [126] | 2018 | Deep RNNs for predicting stock returns, outperforming traditional ML models. |
| | Feng et al. [130] | 2019 | Transfer learning with RNNs for stock prediction. |
| | Rundo [131] | 2019 | Combining reinforcement learning with LSTMs for trading strategy development. |
| | Luo et al. [128] | 2024 | Attention-based CNN-BiLSTM model for improved financial forecasting. |

| Application Domain | Reference | Year | Methods and Application |
|---|---|---|---|
| Bioinformatics | Li et al. [132] | 2019 | RNNs for gene prediction and protein structure prediction. |
| | Yadav et al. [135] | 2019 | Combining BiLSTM with CNNs for protein sequence analysis. |
| | Zhang et al. [133] | 2020 | DeepSite: Bidirectional LSTM for predicting DNA-binding protein sequences. |
| | Xu et al. [134] | 2021 | RNN-based model for predicting protein secondary structures. |
| | Aybey and Gümüş [136] | 2023 | Ensemble model for predicting protein-protein interactions using RNN, GRU, and CNN. |
| Autonomous Vehicles | Altché and de La Fortelle [140] | 2017 | LSTM-based model for predicting the future trajectories of surrounding vehicles. |
| | Codevilla et al. [139] | 2018 | Conditional imitation learning combining RNNs with imitation learning for autonomous driving. |
| | Li et al. [137] | 2020 | RNNs for path planning, object detection, and trajectory prediction in autonomous vehicles. |
| | Lee and Ha [138] | 2020 | Integrating LSTM with CNN for end-to-end driving. |
| | Li et al. [142] | 2024 | Combining RNNs with CNN to predict the intentions of other drivers. |
| | Li et al. [141] | 2024 | Attention-based LSTM for improving the detection and tracking of video objects. |
| | Liu and Diao [143] | 2024 | Deep reinforcement learning framework with GRU for decision-making in traffic scenarios. |
| Anomaly Detection | Zhou and Paffenroth [149] | 2017 | Unsupervised anomaly detection using robust deep autoencoder models with RNNs. |
| | Munir et al. [151] | 2018 | Hybrid model integrating CNNs and RNNs for anomaly detection in multivariate time series data. |
| | Ren et al. [150] | 2019 | Attention-based RNN model for improving accuracy and interpretability in anomaly detection. |
| | Li et al. [147] | 2023 | Combining RNNs with transfer learning for anomaly detection in manufacturing processes. |
| | Minic et al. [148] | 2023 | RNNs for detecting abnormal patterns in ECG signals in healthcare. |
| | Matar et al. [145] | 2023 | BiLSTM for anomaly detection in multivariate time series. |
| | Kumaresan et al. [146] | 2024 | RNNs for detecting anomalies in network traffic in cybersecurity. |
| | Altindal et al. [144] | 2024 | LSTM networks for anomaly detection in time series data. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).