Submitted: 13 August 2024

Posted: 14 August 2024


Abstract

Keywords:

1. Introduction

- Considering two diseases together (typhoid fever and malaria) allows a more thorough evaluation of the patient's health, which is essential for managing co-infection and comorbidity.

- Using real-world data ensures that the models are trained and validated on clinical cases, which enhances the practical relevance and applicability of our findings.

- Integrating an XAI method addresses the black-box nature of many ML models: it gives medical professionals transparent, comprehensible insight into how each feature influences the diagnosis and presents diagnostic results in a form that is easier to interpret. This focus on interpretability is intended to help healthcare workers make more accurate and timely diagnoses.

- Incorporating LLMs adds a layer of context-aware understanding to the diagnostic process, making it possible to better understand and analyze complex medical outcomes.

- Combining LLMs with conventional ML models enables a thorough comparison of diagnostic strategies, showing not only the models' efficacy but also the advantages and disadvantages of each approach to handling medical data.

- The integration of XAI, LLMs, and ML places this work at the forefront of medical AI research: it demonstrates the viability and benefits of a hybrid approach to difficult diagnostic problems and sets a reference point for further study in the area.

2. Methodology

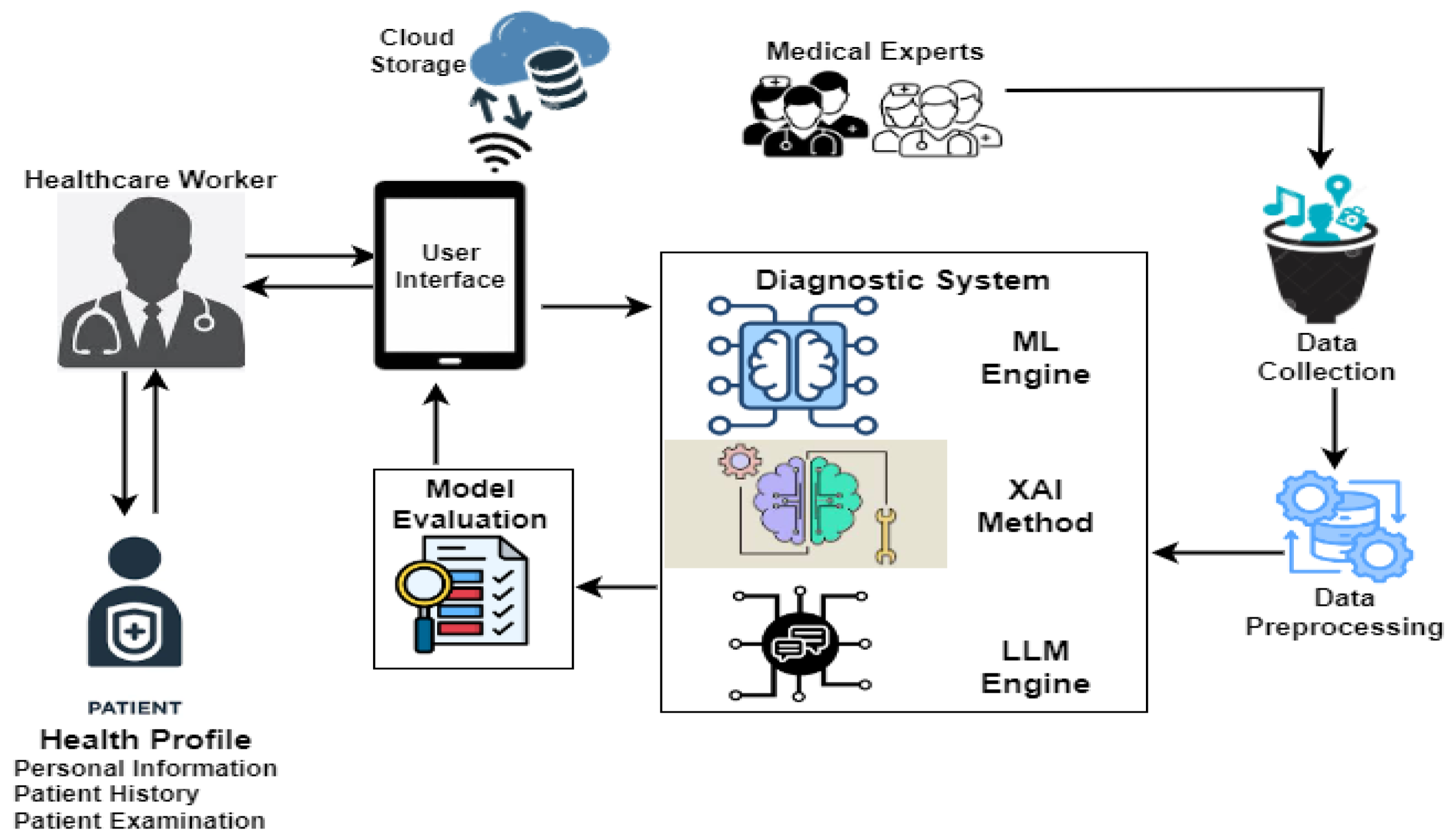

2.1. Malaria and typhoid diagnosis framework

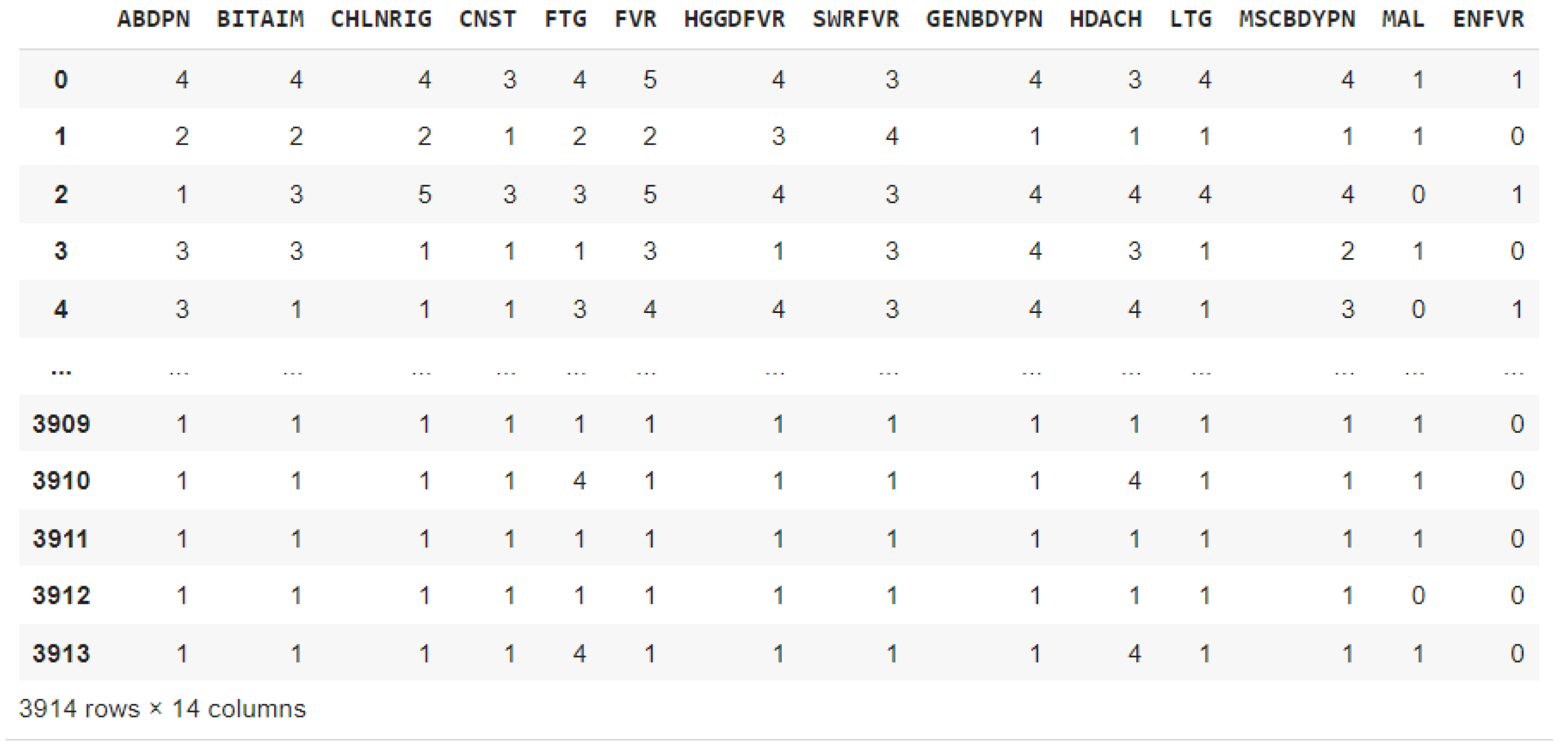

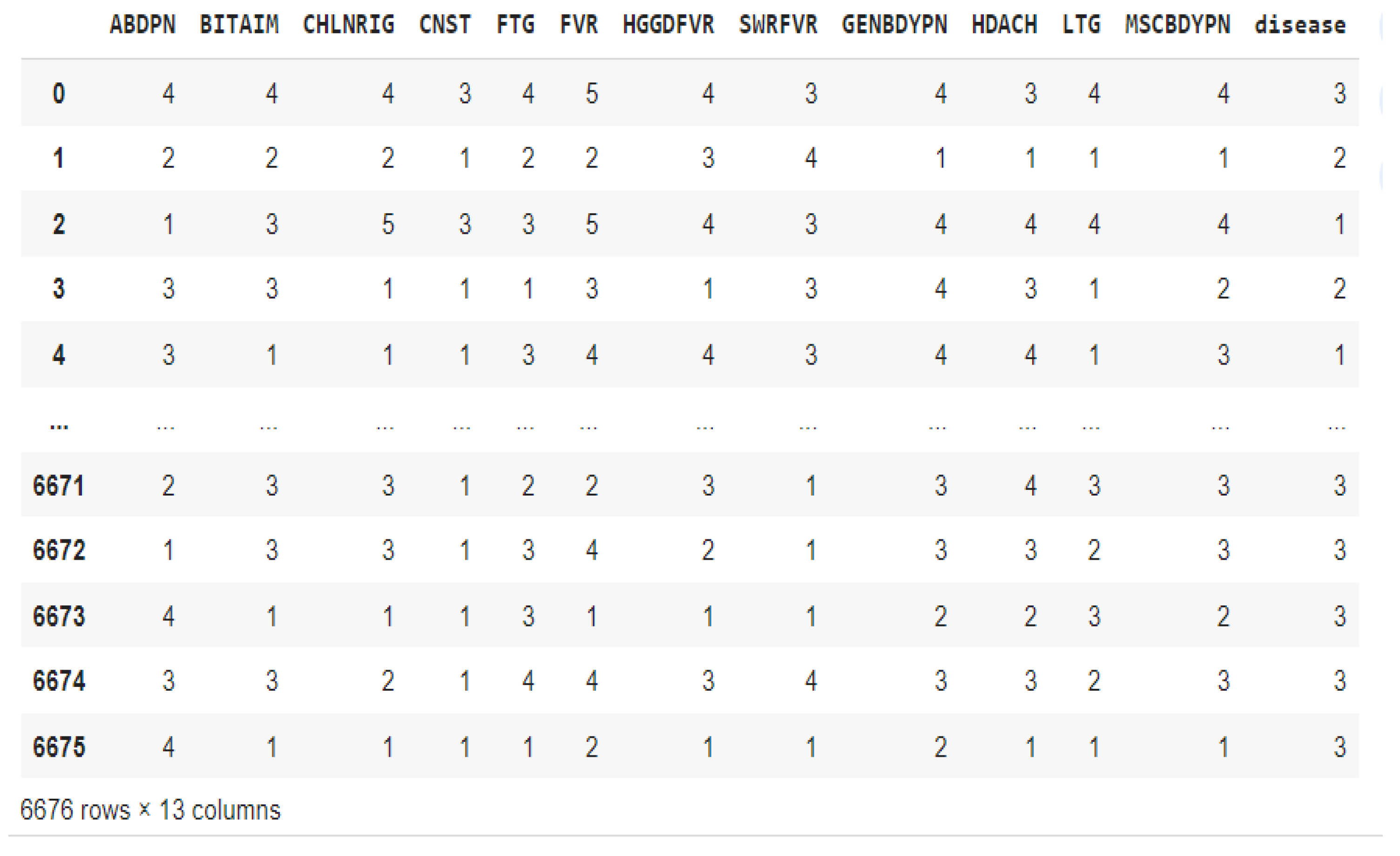

2.2. Description of the dataset used for the study

2.3. Data Preprocessing and Oversampling

2.4. Diagnostic Models and Model Optimization

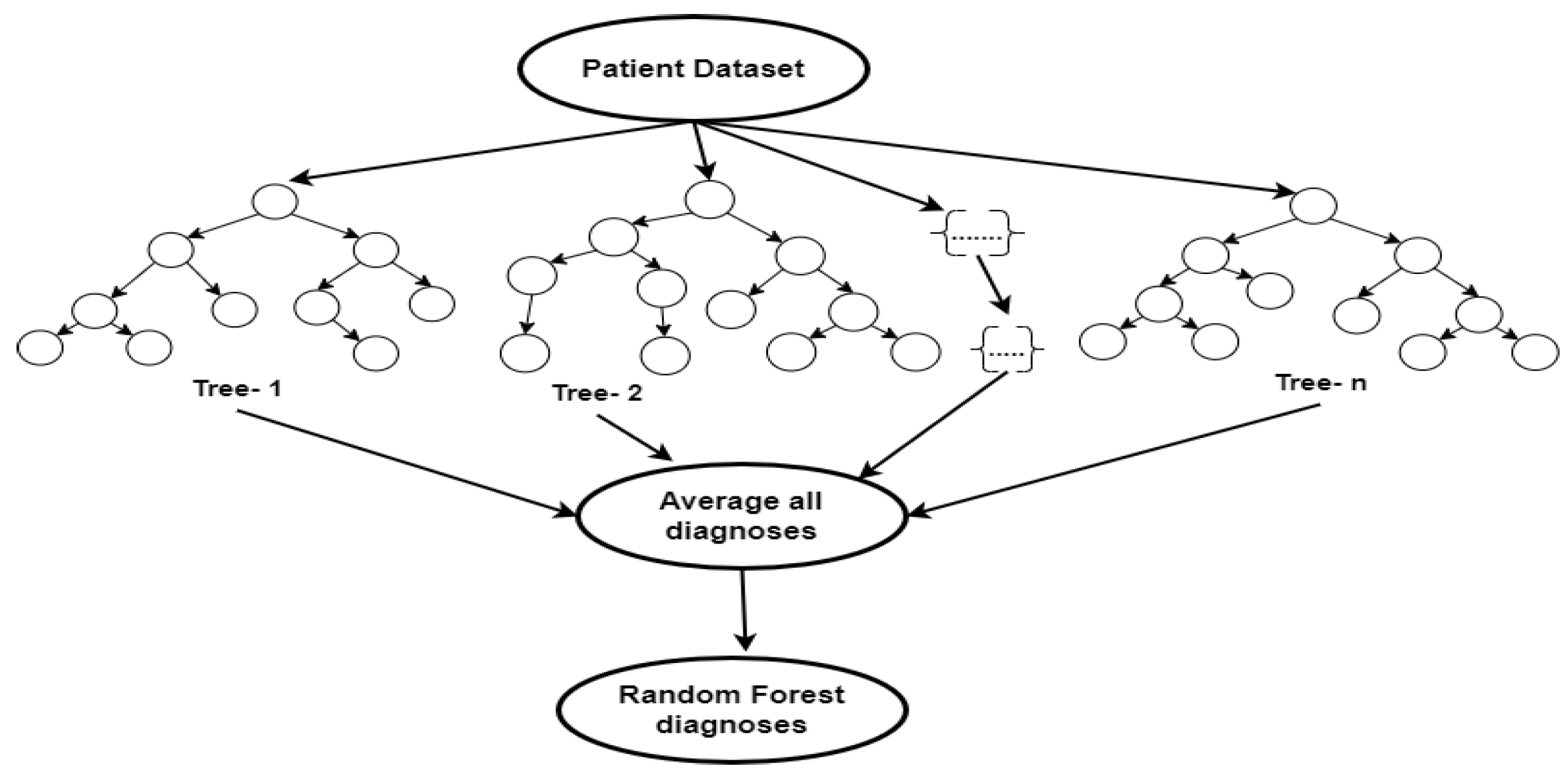

2.4.1. Random Forest

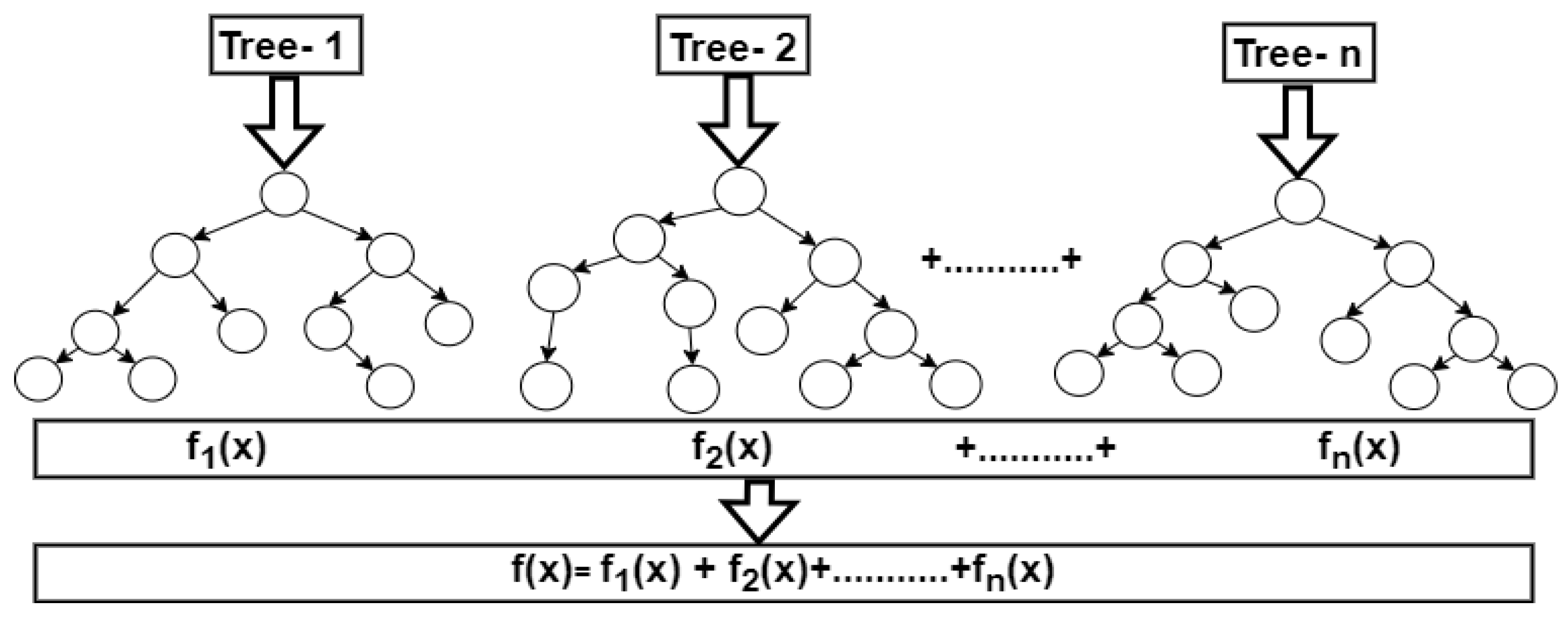

2.4.2. Extreme Gradient Boost

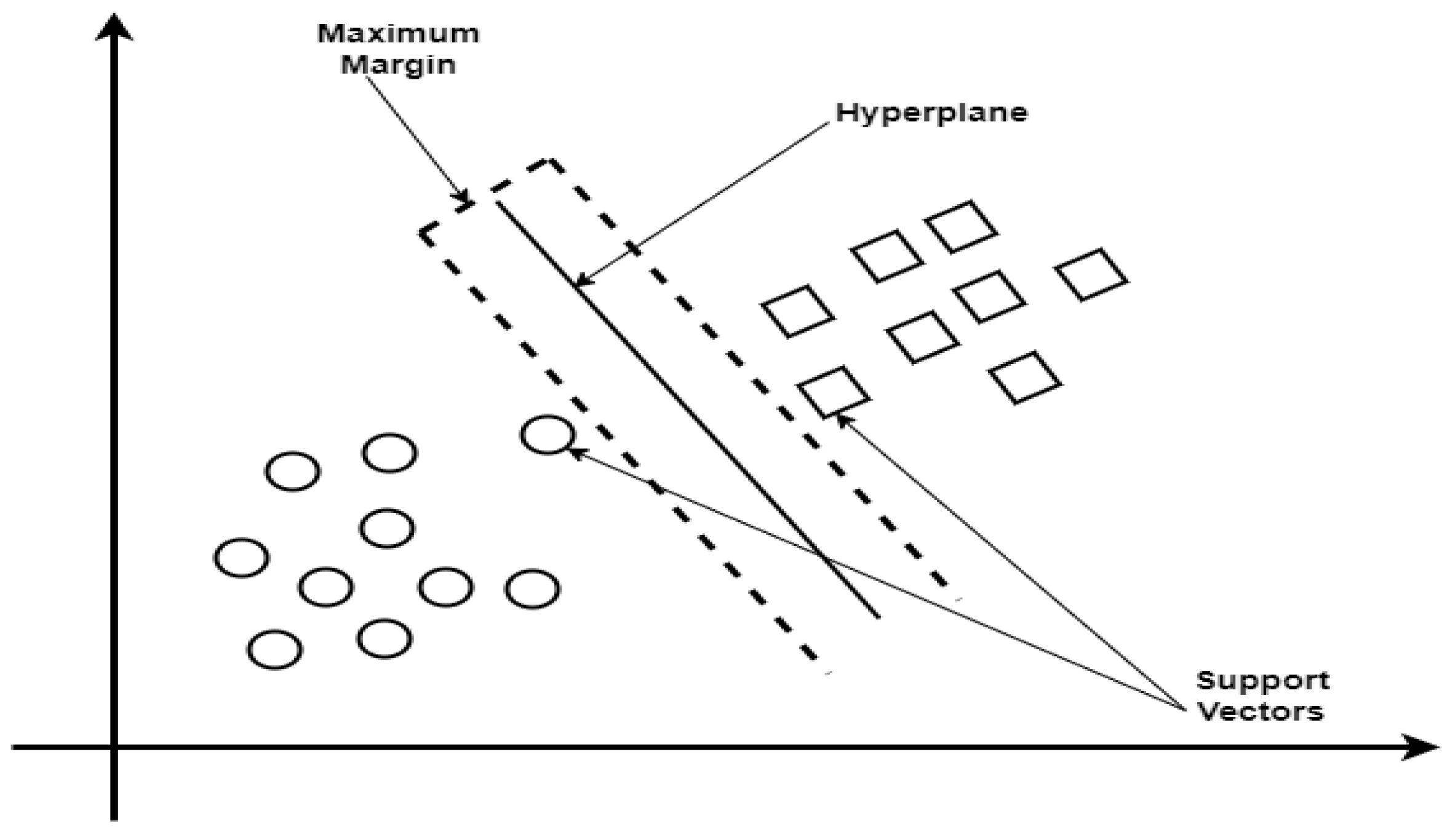

2.4.3. Support Vector Machine

2.5. Interpretability and Explainability Methods

2.6. Model Performance Metrics

3. Results and Discussion

- Train, test, and validate an ML model to diagnose malaria and typhoid based on the patient dataset

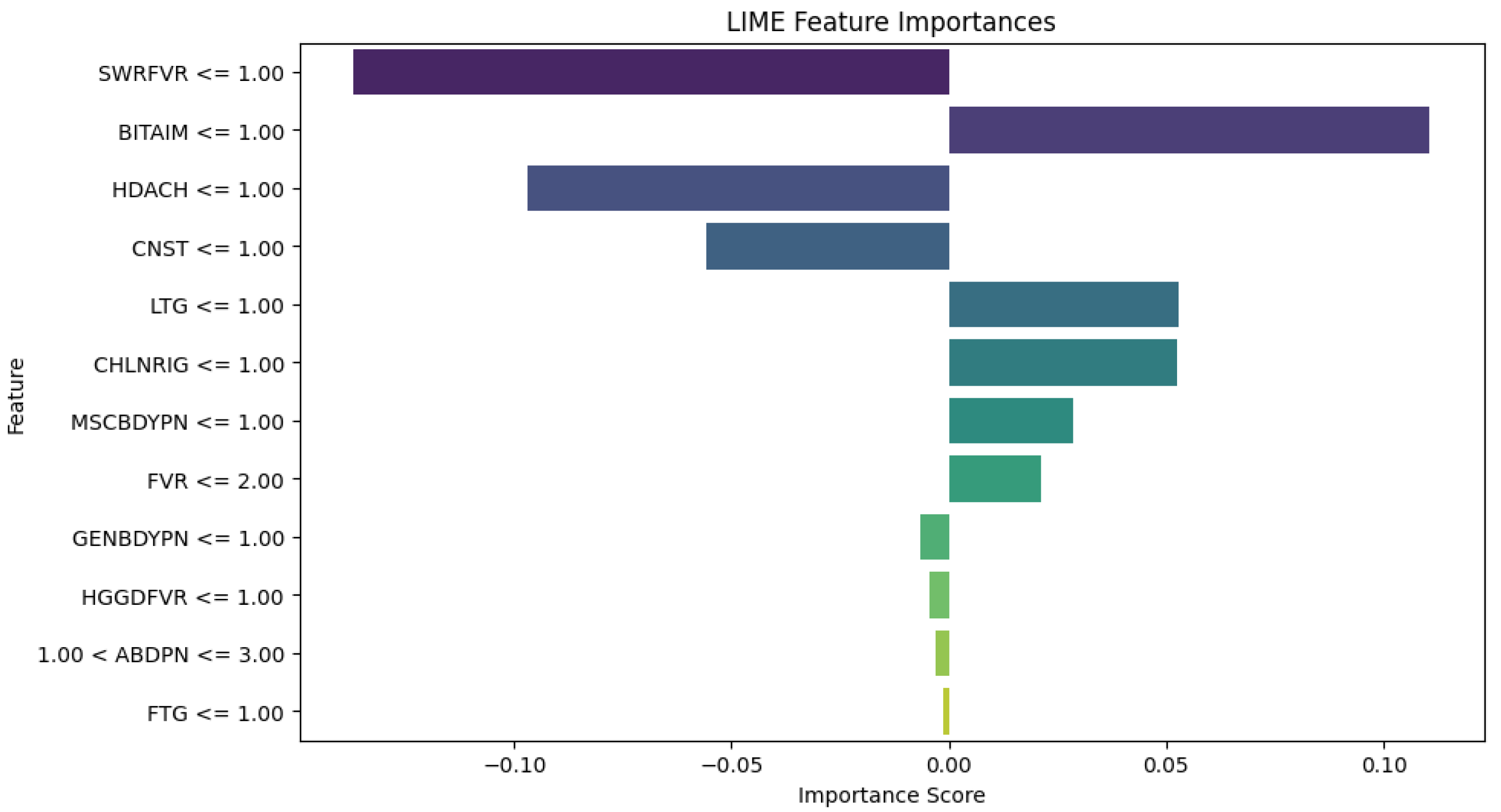

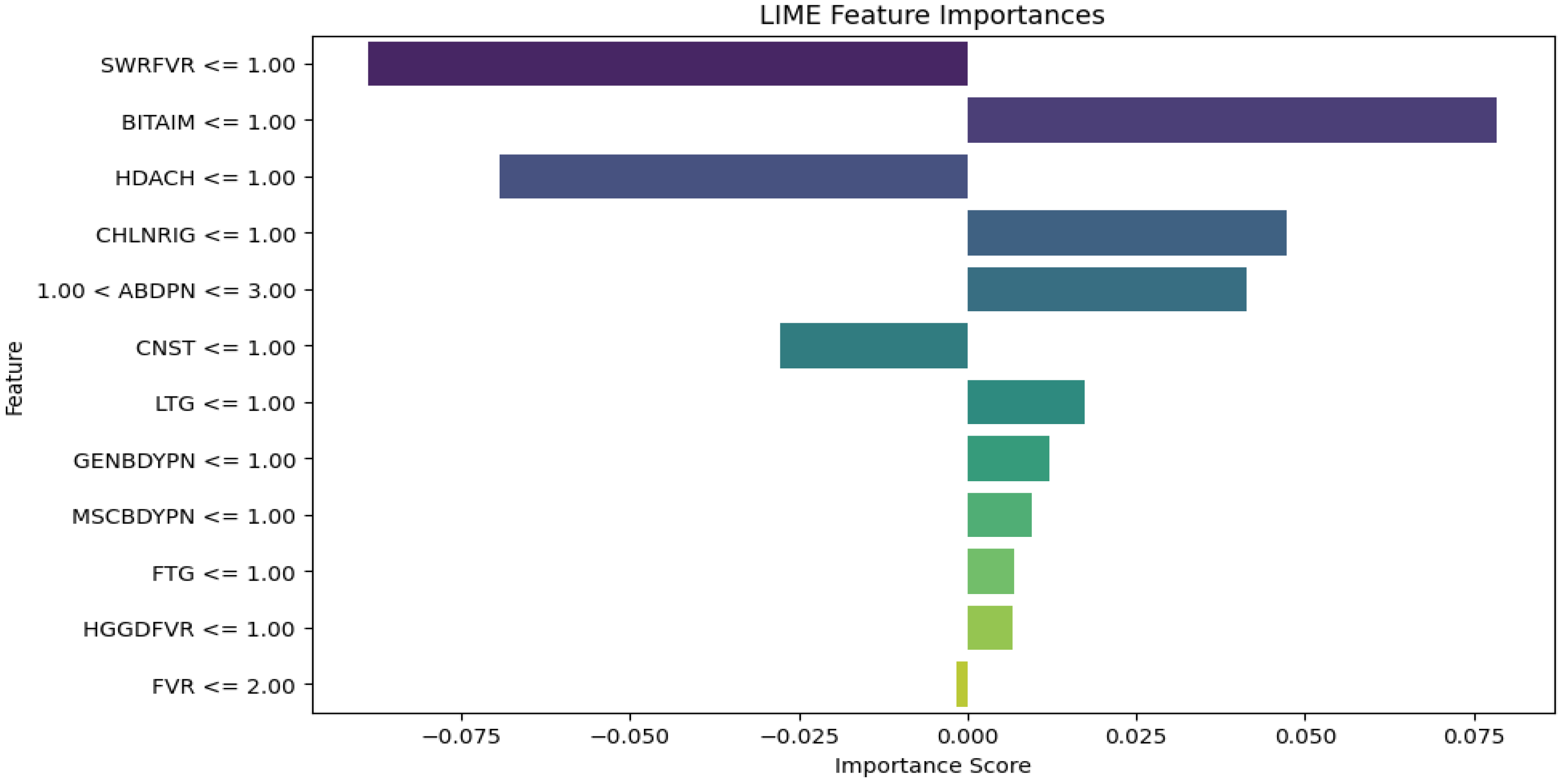

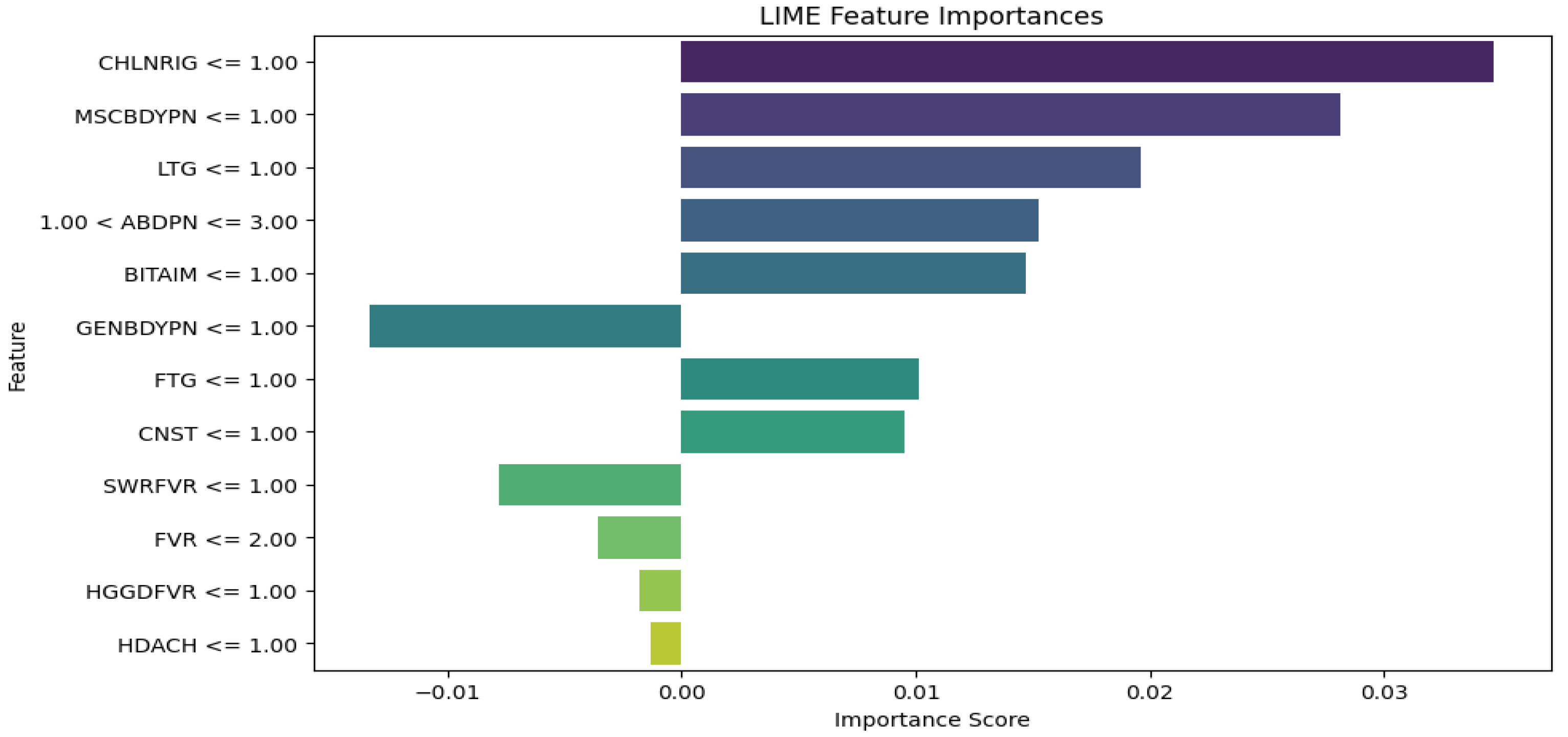

- Apply LIME to explain the ML models' diagnoses and how each symptom contributed to the diagnoses

- Train, test, and validate an LLM independently to generate explanations based on the patient dataset

- Integrate the outputs from ML, LIME, and LLM to provide a comprehensive and interpretable diagnosis.

- Train, test, and validate an ML model to diagnose malaria and typhoid based on the patient dataset

- Apply LIME to explain the ML models' diagnoses and how each symptom contributed to the diagnoses

- Use an LLM for further explainability by passing the patient symptoms and the ML results (with their LIME explanations) through the model to generate diagnostic explanations in natural language.
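
The last step of this workflow, handing the symptoms, the ML result, and the LIME explanation to an LLM, can be sketched as a prompt-assembly function. This is a minimal illustration only: the function name `build_explanation_prompt`, the prompt wording, the `top_k` parameter, and the example values are all hypothetical and are not taken from the study. No LLM call is shown because the interface used is not specified here.

```python
def build_explanation_prompt(symptoms, predicted_label, lime_weights, top_k=3):
    """Combine symptoms, the ML diagnosis, and LIME weights into one prompt.

    symptoms: dict mapping symptom name -> reported severity (free text).
    predicted_label: diagnosis returned by the ML model.
    lime_weights: dict mapping symptom name -> signed LIME contribution.
    All three inputs are assumed formats, chosen for illustration.
    """
    # Rank features by absolute contribution so the strongest evidence comes first.
    ranked = sorted(lime_weights.items(), key=lambda kv: abs(kv[1]), reverse=True)
    symptom_lines = [f"- {name}: {level}" for name, level in symptoms.items()]
    evidence_lines = [
        f"- {name}: {weight:+.3f} ({'supports' if weight > 0 else 'opposes'} the diagnosis)"
        for name, weight in ranked[:top_k]
    ]
    return "\n".join(
        [
            "Patient symptoms:",
            *symptom_lines,
            f"Model diagnosis: {predicted_label}",
            "Most influential symptoms according to LIME:",
            *evidence_lines,
            "Explain this diagnosis in plain language for a clinician.",
        ]
    )


# Hypothetical patient record and LIME weights, for illustration only.
prompt = build_explanation_prompt(
    symptoms={"Fever": "high", "Headaches": "moderate", "Chills and rigors": "low"},
    predicted_label="Malaria",
    lime_weights={"Fever": 0.21, "Headaches": -0.05, "Chills and rigors": 0.12},
    top_k=2,
)
print(prompt)
```

Keeping only the `top_k` strongest contributions keeps the prompt short and focuses the generated explanation on the symptoms that drove the prediction.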

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Sex / Patient age (years) | < 5 | 5–12 | 13–19 | 20–64 | ≥ 65 | Total |
|---|---|---|---|---|---|---|
| Male | 534 | 346 | 150 | 1012 | 133 | 2175 |
| Female | 419 | 323 | 213 | 1605 | 135 | 2695 |
| Total | 953 | 669 | 363 | 2617 | 268 | 4870 |

| Symptom/Disease | Abbreviation |
|---|---|
| Abdominal pains | ABDPN |
| Bitter taste in mouth | BITAIM |
| Chills and rigors | CHLNRIG |
| Constipation | CNST |
| Fatigue | FTG |
| Fever | FVR |
| Generalized body pain | GENBDYPN |
| Headaches | HDACH |
| High-grade fever | HGGDFVR |
| Lethargy | LTG |
| Muscle and body pain | MSCBDYPN |
| Stepwise rise fever | SWRFVR |
| Malaria | MAL |
| Typhoid fever / Enteric fever | ENFVR |
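
The abbreviations above can be held in a simple lookup so that a patient's reported symptoms are turned into a fixed-order feature record. The sketch below assumes a binary present/absent encoding, which is an illustrative choice only; the dataset's actual encoding is not described in this section, and the helper name `encode_symptoms` is hypothetical.

```python
# Symptom abbreviations copied from the table above (12 symptoms).
SYMPTOM_CODES = {
    "Abdominal pains": "ABDPN",
    "Bitter taste in mouth": "BITAIM",
    "Chills and rigors": "CHLNRIG",
    "Constipation": "CNST",
    "Fatigue": "FTG",
    "Fever": "FVR",
    "Generalized body pain": "GENBDYPN",
    "Headaches": "HDACH",
    "High-grade fever": "HGGDFVR",
    "Lethargy": "LTG",
    "Muscle and body pain": "MSCBDYPN",
    "Stepwise rise fever": "SWRFVR",
}

# Target labels from the last two rows of the table.
DISEASE_CODES = {"Malaria": "MAL", "Typhoid fever (enteric fever)": "ENFVR"}


def encode_symptoms(reported):
    """Map a collection of symptom names to {code: 0 or 1} in table order.

    Binary encoding is an assumption made for this sketch.
    """
    unknown = set(reported) - set(SYMPTOM_CODES)
    if unknown:
        raise KeyError(f"Unknown symptoms: {sorted(unknown)}")
    return {code: int(name in reported) for name, code in SYMPTOM_CODES.items()}


# Hypothetical patient reporting two symptoms.
record = encode_symptoms({"Fever", "Fatigue"})
print(record)
```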

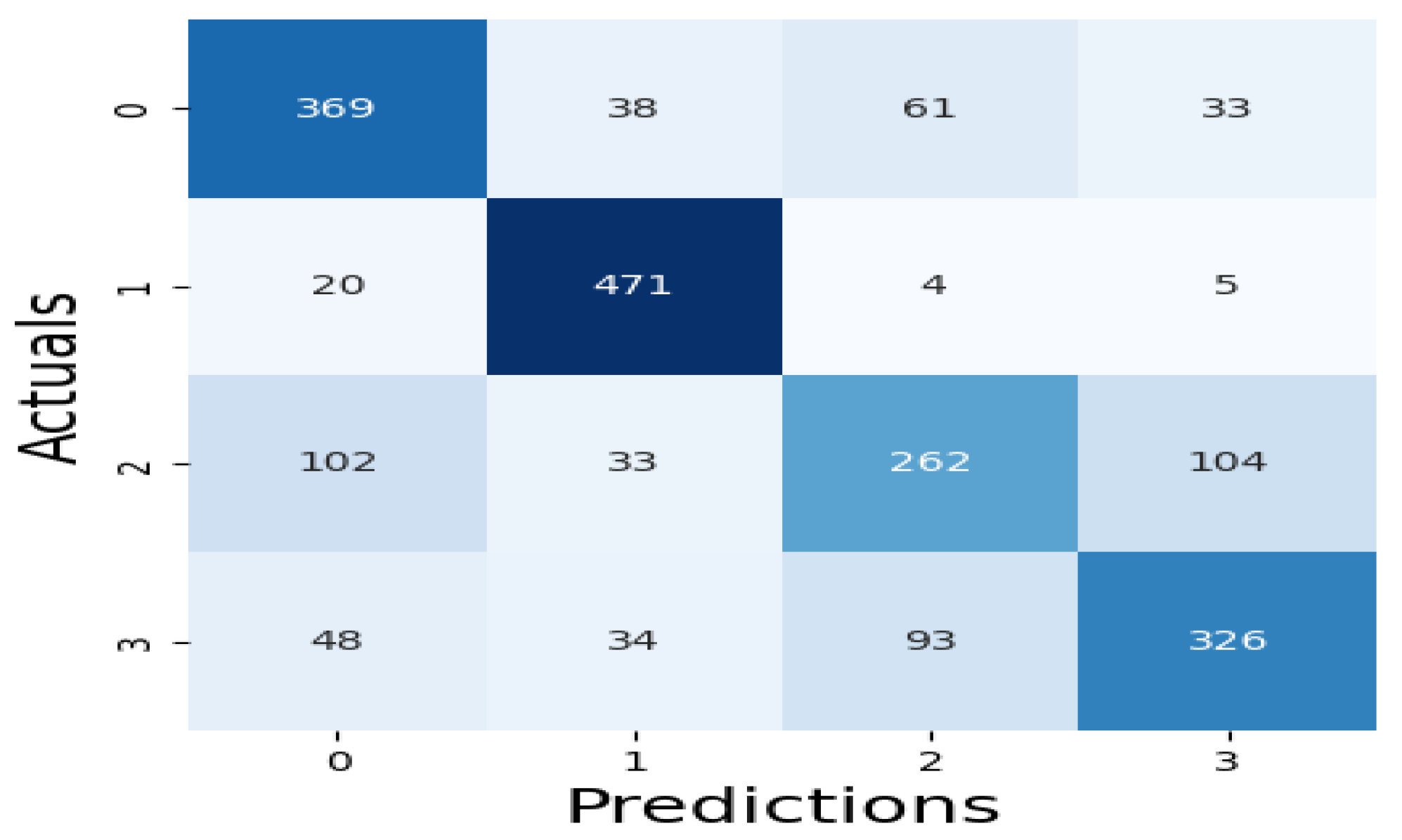

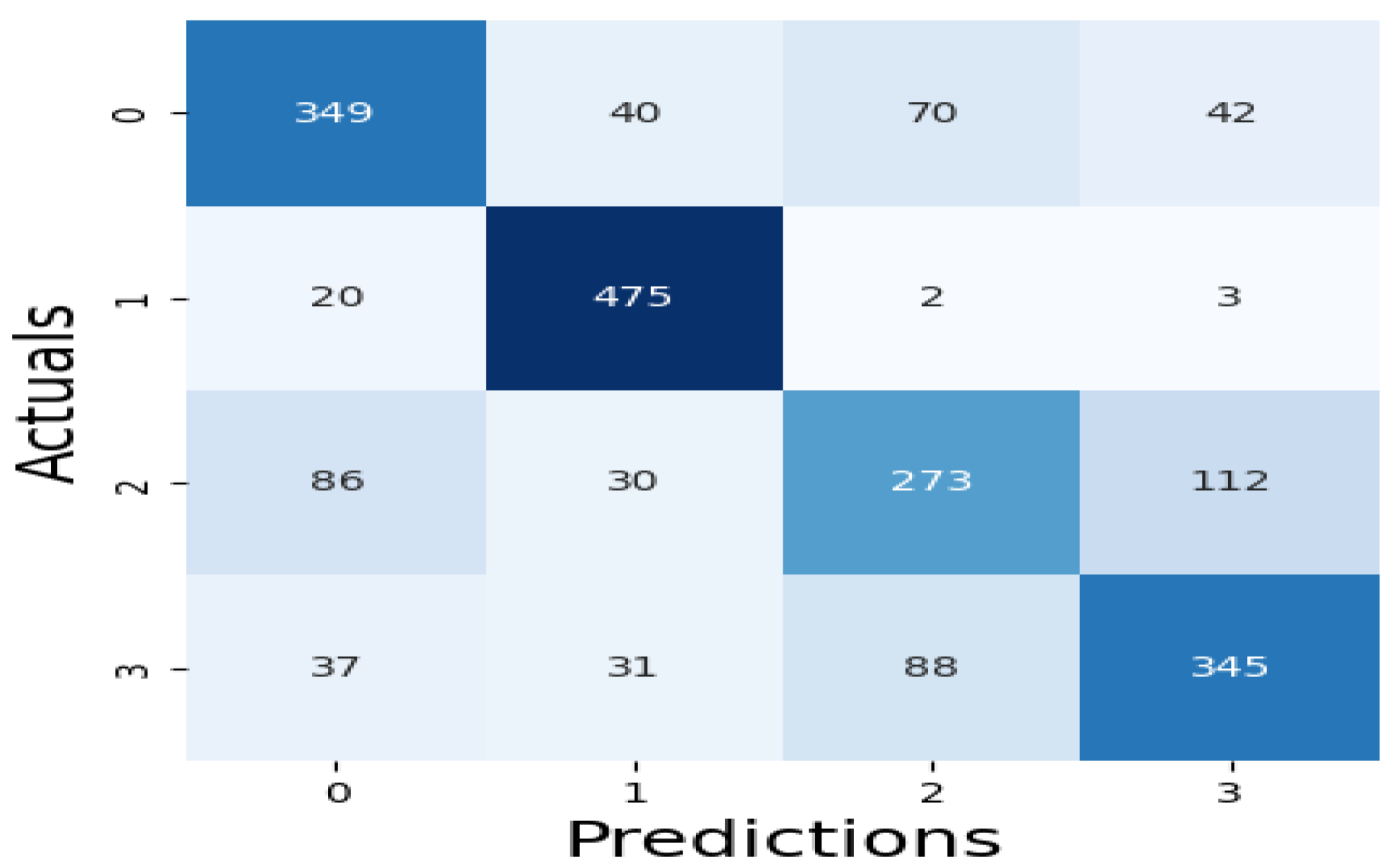

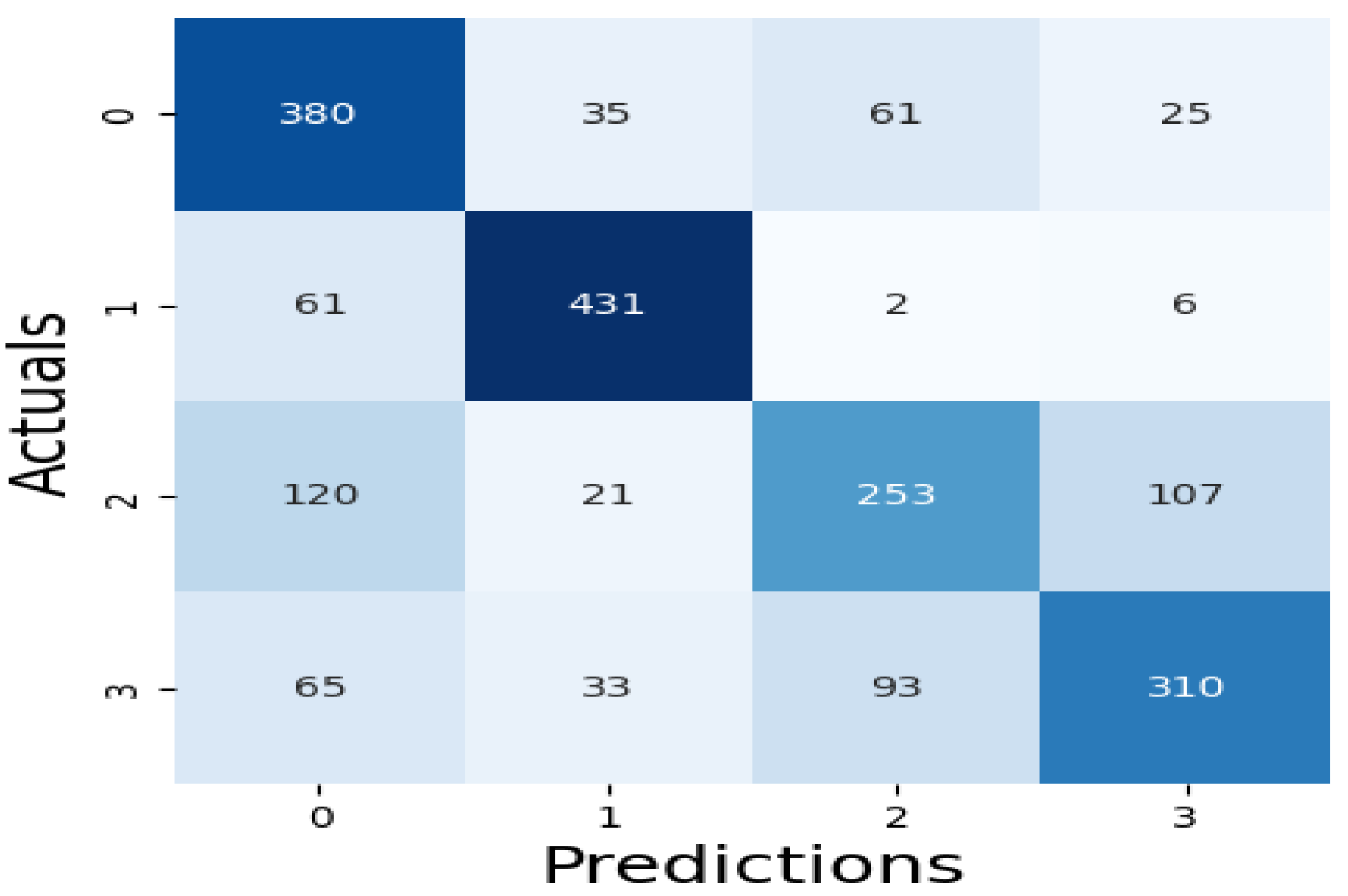

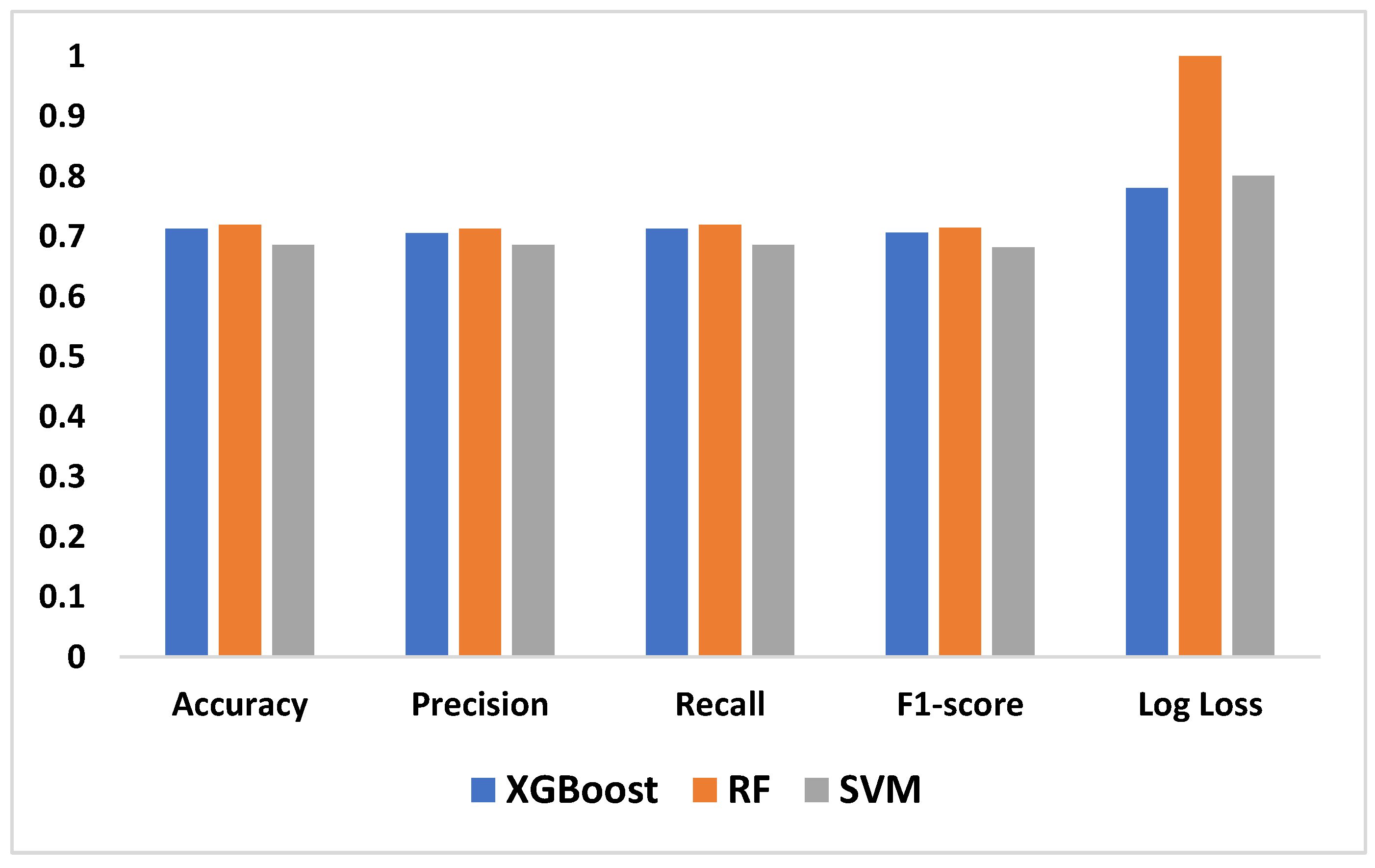

| Algorithm | Accuracy | Precision | Recall | F1-score | Log Loss | Computation time |
|---|---|---|---|---|---|---|
| XGBoost | 0.7129 | 0.7056 | 0.7129 | 0.7066 | 0.7808 | 2 minutes, 32 seconds |
| RF | 0.7199 | 0.7129 | 0.7199 | 0.7145 | 1.0548 | 14 minutes, 9 seconds |
| SVM | 0.6860 | 0.6865 | 0.6860 | 0.6821 | 0.8016 | 1 hour, 22 minutes, 7 seconds |
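
In the table above, recall equals accuracy for every model. That pattern is what one would expect if precision, recall, and F1 were averaged across classes with support weights, because support-weighted recall is identical to accuracy by definition. The averaging scheme is not stated here, so the sketch below treats weighted averaging as an assumption and uses hypothetical labels to show how these metrics and the log loss can be computed.

```python
import math
from collections import Counter


def weighted_metrics(y_true, y_pred):
    """Return accuracy and support-weighted precision, recall, and F1."""
    n = len(y_true)
    support = Counter(y_true)  # number of true samples per class
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / n
    precision = recall = f1 = 0.0
    for label, count in support.items():
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        predicted = sum(p == label for p in y_pred)
        p_c = tp / predicted if predicted else 0.0
        r_c = tp / count
        f_c = 2 * p_c * r_c / (p_c + r_c) if (p_c + r_c) else 0.0
        weight = count / n  # class share of the true labels
        precision += weight * p_c
        recall += weight * r_c
        f1 += weight * f_c
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def log_loss(y_true, probabilities, eps=1e-15):
    """Mean negative log-probability assigned to the true class.

    probabilities: one dict per sample mapping class label -> predicted probability.
    """
    total = 0.0
    for label, probs in zip(y_true, probabilities):
        p = min(max(probs[label], eps), 1 - eps)  # clip to avoid log(0)
        total -= math.log(p)
    return total / len(y_true)


# Hypothetical labels, for illustration only.
y_true = ["MAL", "MAL", "ENFVR", "none", "MAL", "ENFVR"]
y_pred = ["MAL", "ENFVR", "ENFVR", "none", "MAL", "MAL"]
print(weighted_metrics(y_true, y_pred))
```

Unlike accuracy, log loss penalizes confident wrong predictions, so a model can rank first on accuracy and last on log loss, as RF does in the table.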

| Experiment | Algorithm | Accuracy | Precision | Recall | F1-score |
|---|---|---|---|---|---|
| Exp 1 | ChatGPT 3.5 | 0.3000 | 0.35562 | 0.3000 | 0.30999 |
| | Gemini | 0.3000 | 0.3449 | 0.3000 | 0.2908 |
| | Perplexity | 0.2600 | 0.3890 | 0.2600 | 0.28736 |
| Exp 2 | ChatGPT 3.5 | 0.2600 | 0.2909 | 0.2600 | 0.2615 |
| | Gemini | 0.2700 | 0.2607 | 0.2700 | 0.2296 |
| | Perplexity | 0.2800 | 0.2524 | 0.2800 | 0.2629 |
| Exp 3 | ChatGPT 3.5 | 0.3297 | 0.3324 | 0.3297 | 0.2926 |
| | Gemini | 0.2895 | 0.2709 | 0.2895 | 0.2728 |
| | Perplexity | 0.2632 | 0.1957 | 0.2632 | 0.2171 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).