Submitted:

28 August 2024

Posted:

29 August 2024


Abstract

Keywords:

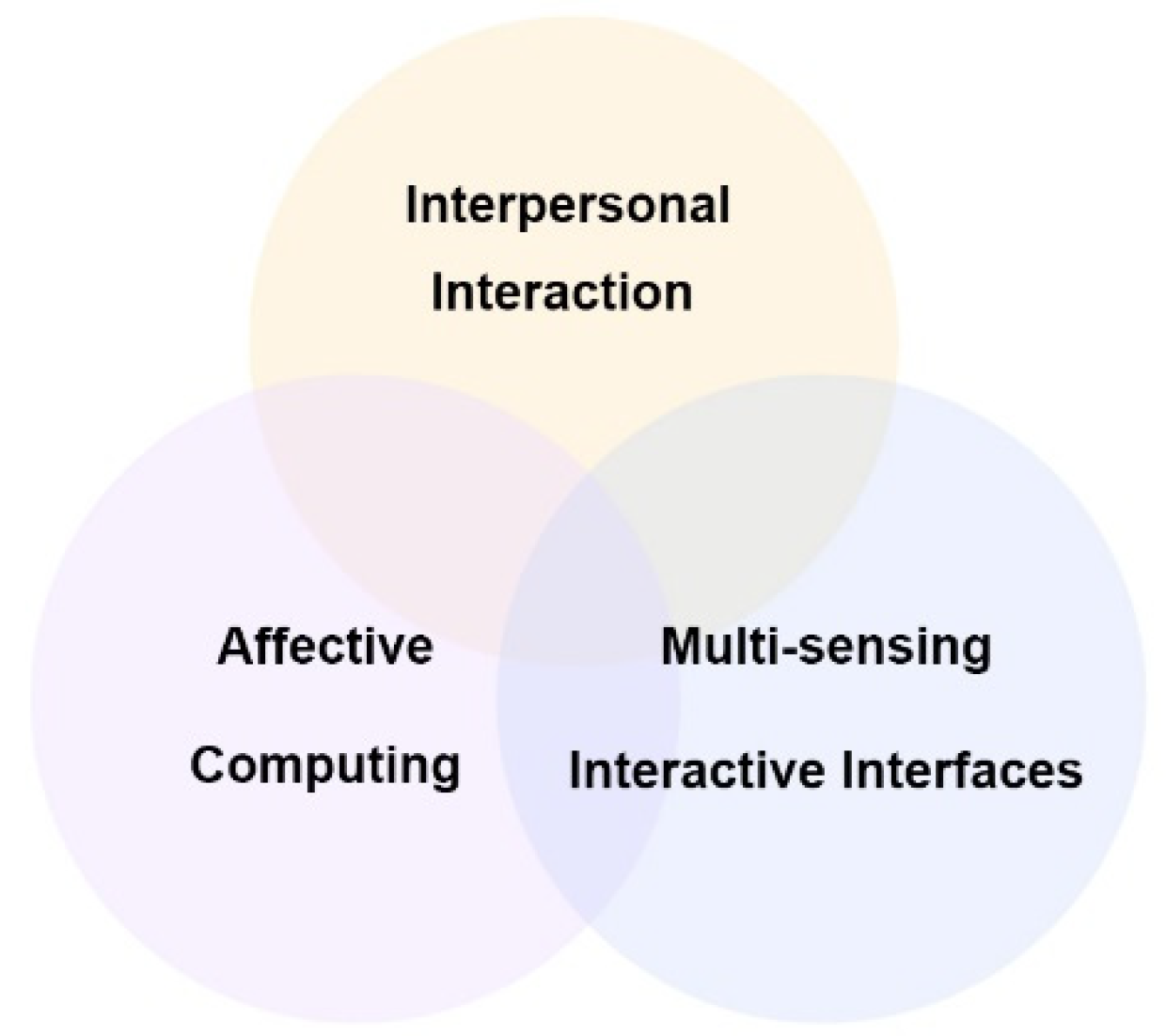

1. Introduction

1.1. Background

1.2. Research Motivation

1.3. Research Problems and Purposes

- (1)

- How can the integration of multi-sensing interaction and affective computing technologies enhance interpersonal relationships?

- (2)

- Does constructing an interactive system based on multi-sensing interaction and affective computing technologies strengthen positive interpersonal communication experiences and stimulate positive emotion transmission?

- (3)

- Can the features of multi-sensing interaction and affective computing technologies improve the pleasantness of the interaction experience?

- (1)

- To analyze cases of multi-sensing interaction and affective computing, and summarize design principles for the proposed interactive system.

- (2)

- To develop an emotion-aware interactive system that enhances positive interpersonal relationships.

- (3)

- To evaluate whether the system effectively makes users feel pleasant and relaxed.

1.4. Research Scope and Limitations

- (1)

- Voice recognition and Leap Motion gesture sensing are selected as the primary multi-sensing interfaces.

- (2)

- Emotion recognition in interactive experiences will be explored, but the development of semantic analysis technologies will not be addressed.

- (3)

- The designed multi-sensing system will be tested with users who have intact limbs, good auditory and visual abilities, and understand typical interpersonal interactions, specifically targeting active users aged 18 to 34.

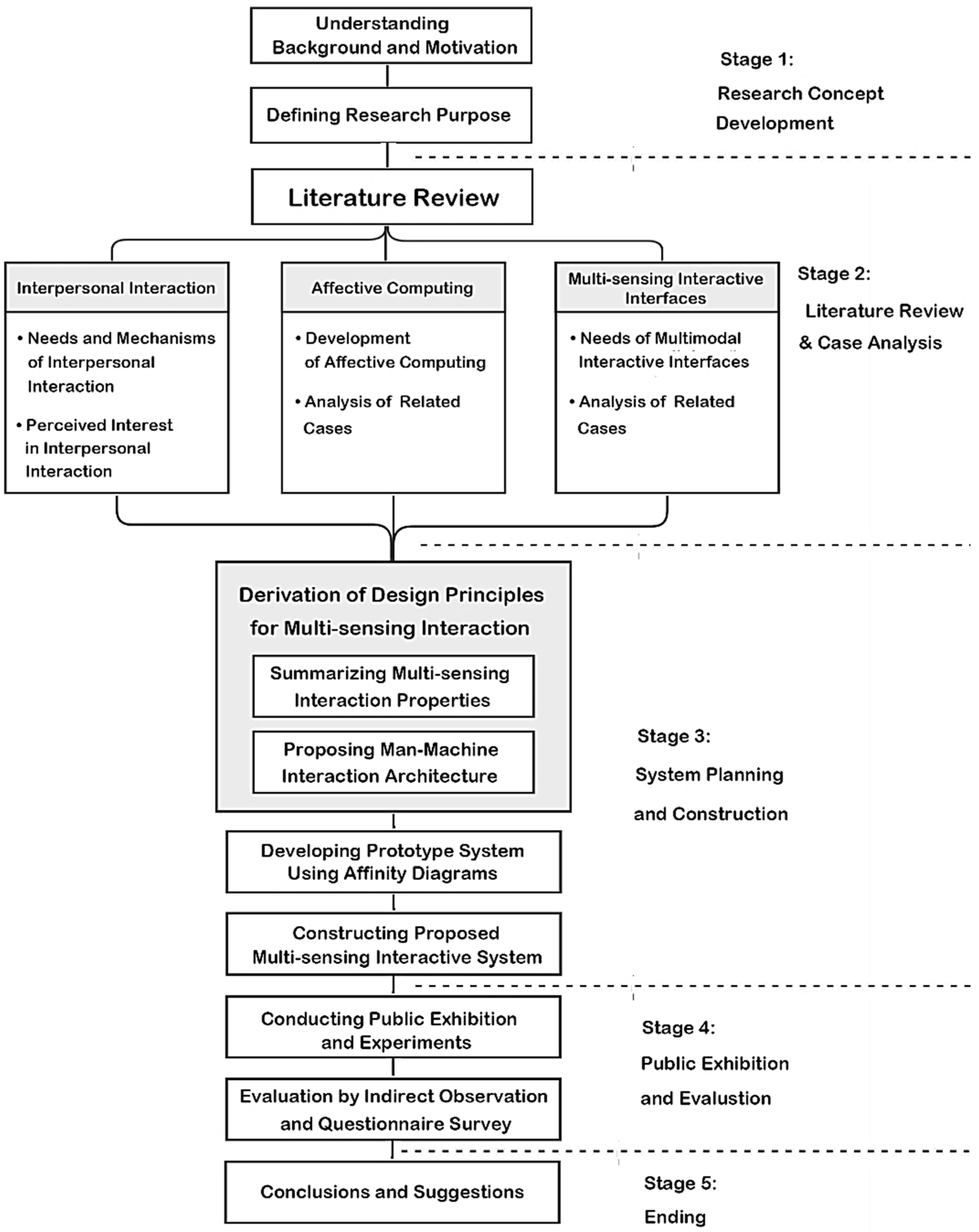

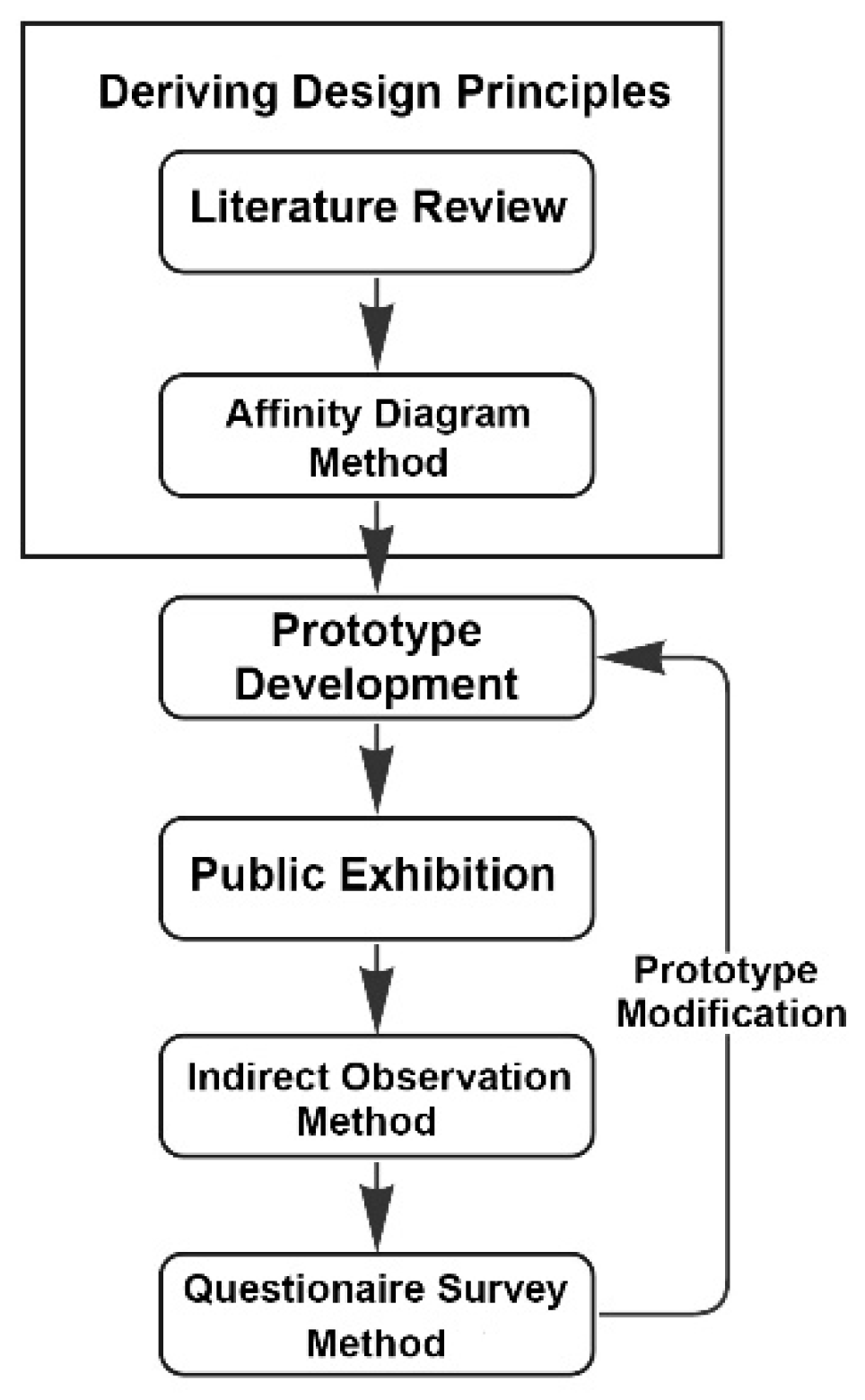

1.5. Research Process

- (1)

- Stage 1 - Research concept development:

- (2)

- Stage 2 - Literature review and case analysis:

- (3)

- Stage 3 – System planning and construction:

- (4)

- Stage 4 – Exhibition and evaluation:

- (5)

- Stage 5 – Ending:

2. Literature Review

2.1. Interpersonal Interaction

2.1.1. Interpersonal Interaction Needs and Mechanisms

2.1.2. Perceived Interest in Interpersonal Interaction

- (1)

- Effectiveness: Providing users with systems and functions that prioritize immediacy and convenience.

- (2)

- Enjoyability: Considering interface diversity and emotional resonance to enhance user focus and immersion.

- (3)

- Simplicity: Offering a straightforward, intuitive, and natural user interface.

- (4)

- Interactive Context: Recognizing the significance of multimedia virtual spaces in daily life and leveraging social interaction behaviors to stimulate users’ emotional awareness and behaviors.

2.1.3. A Summary

- (1)

- Emotional resonance and empathy: Interpersonal interactions evoke emotional resonance and empathy, fostering positive feelings through active communication.

- (2)

- Intrinsic motivation in interaction contexts: Exploring intrinsic motivations within online contexts facilitates emotional exchanges and enjoyable flow experiences.

- (3)

- Real-time interaction in digital multimedia spaces: These spaces enable interactions across formats, times, and locations, promoting continuous participation.

- (4)

- Integration of cognitive and emotional aspects: Combining these aspects enhances system usability, addressing needs such as loneliness alleviation and self-understanding.

- (5)

- Relationship between usability, enjoyment, and interaction quality: The system’s usability and enjoyment directly impact the quality of interpersonal interactions.

2.2. Affective Computing

2.2.1. Development of Affective Computing

- (1)

- Recognizing Emotion: Analyzing physiological signals, voice, facial expressions, and text to determine users’ emotional states. This is the most widely applied level.

- (2)

- Expressing Emotion: Enabling computers to communicate with users by expressing emotions. This level often involves simulation based on predefined settings, commonly seen in chatbots and virtual characters.

- (3)

- Having Emotion: Allowing computers to possess emotions similar to humans, leading to emotional decision-making and actions. This level is still developing and requires careful management.

- (4)

- Showing Emotional Intelligence: Computers can self-regulate, moderate, and manage their emotions, effectively functioning as “friendly artificial intelligence.”

2.2.2. Related Cases of Affective Computing

- (1)

- Users construct emotional cognitive experiences based on information about interpersonal interactions.

- (2)

- Emphasis may be placed on how users experience and understand emotions through system interactions.

- (3)

- Emotional data should be situated within emotional dimensions to allow for free and rich emotional expression.

- (4)

- The aim is to develop systems that provide users with meaningful emotional stimulation.

- (5)

- Evaluation focuses on detecting and interpreting how users’ emotions change over time and with different stimuli.

2.2.3. A Summary

- (1)

- Applications in communication: Affective computing is widely applied in interpersonal communication, with remote tools enhancing the detection of users’ emotional states.

- (2)

- Empathy and emotional resonance: It enables computers to understand and express emotions, fostering empathy, relaxation, and focus in users.

- (3)

- Case study insights: Case studies commonly aim to improve negative emotions and enhance empathic communication, focusing on a better quality of life and human-centered experiences through techniques like semantic analysis.

- (4)

- Encouraging interaction: System designs should promote user interaction and emotional awareness, offering cues that stimulate reflection without overly defining emotions.

2.3. Multi-Sensing Interactive Interfaces

2.3.1. Needs of Multi-Sensing Interactive Interfaces

- (1)

- Modular design framework: Modular design enhances flexibility and provides a more intuitive interaction experience.

- (2)

- Design principles: Effective gesture commands often rely on directional sensors to increase interaction naturalness and immersion. Emphasis is placed on paired command combinations and minimizing the use of pause commands during interactions.

- (3)

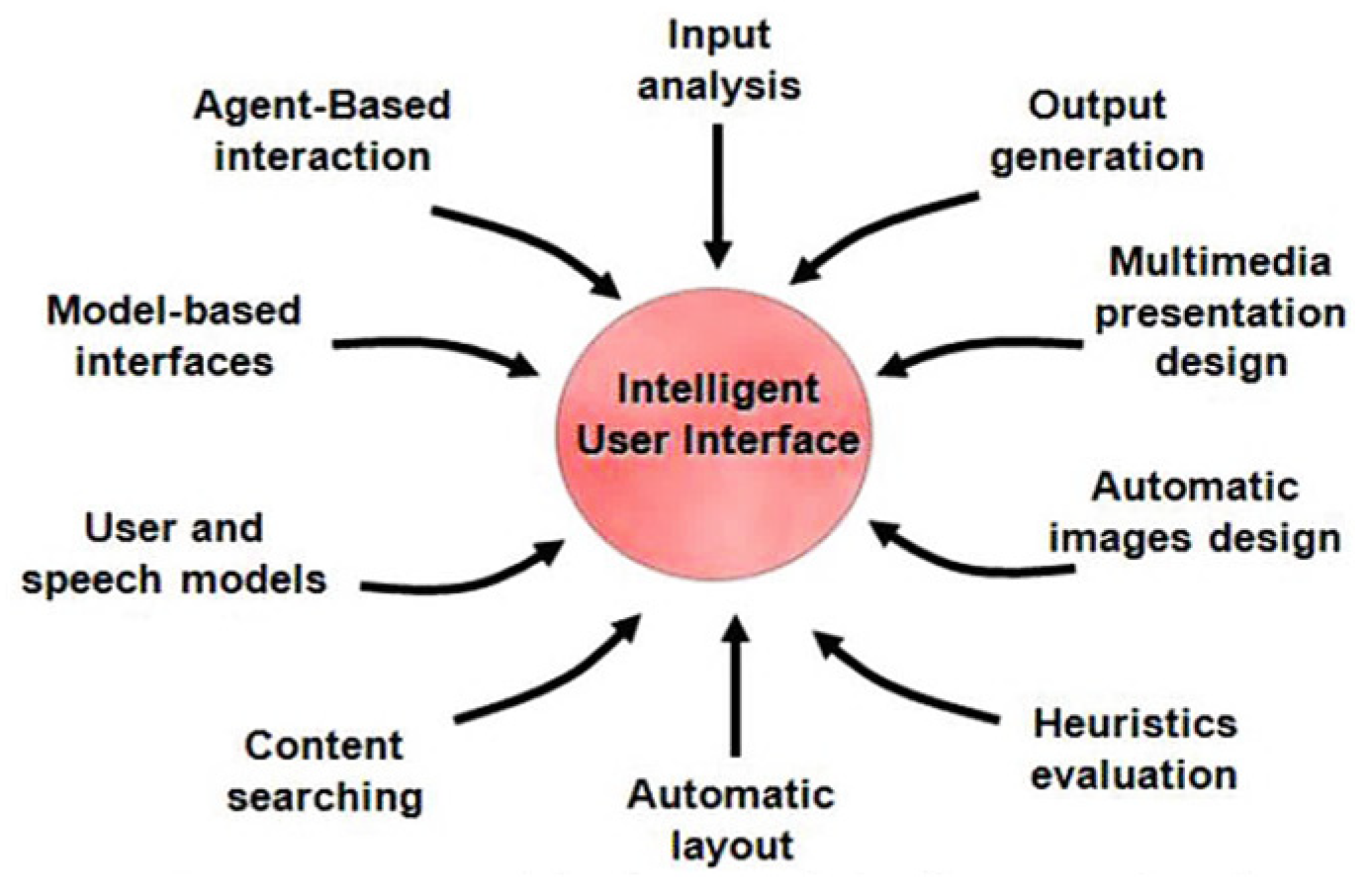

- Integration of voice and gesture: The combination of non-contact multi-sensing interfaces (voice and gesture) within the Intelligent User Interfaces (IUI) framework supports the development of an innovative and engaging multi-sensing interactive affective computing system.

2.3.2. Related Cases of Multi-Sensing Interactive Interfaces

2.3.3. A Summary

- (1)

- Enhanced accuracy and efficiency: Multi-sensing inputs improve system accuracy and efficiency, leading to greater focus and immersion.

- (2)

- User preferences: Users generally favor interfaces that combine non-contact gestures and voice control, with voice interfaces boosting user concentration.

- (3)

- Intelligent User Interfaces: The IUI framework can enhance performance, emotional engagement, and naturalness in interactions by sensing emotional information.

- (4)

- Growing importance of assistive features: Assistive features are becoming more critical in multi-sensing interfaces. This study explores integrating affective computing into interaction interfaces to improve users’ quality of life and to evaluate user experience in a simple, intuitive manner.

2.4. Concluding Remarks of the Literature Review

- (1)

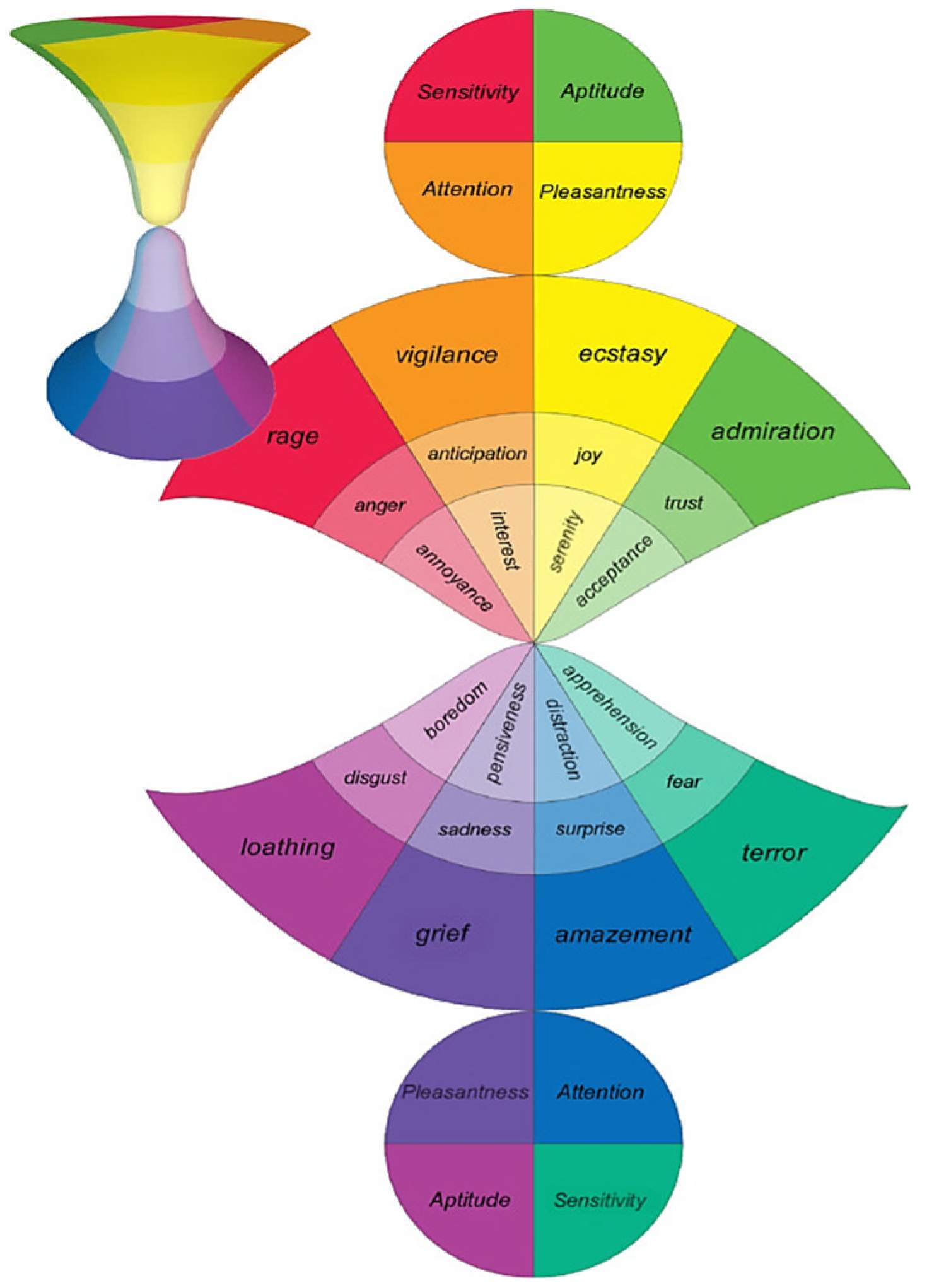

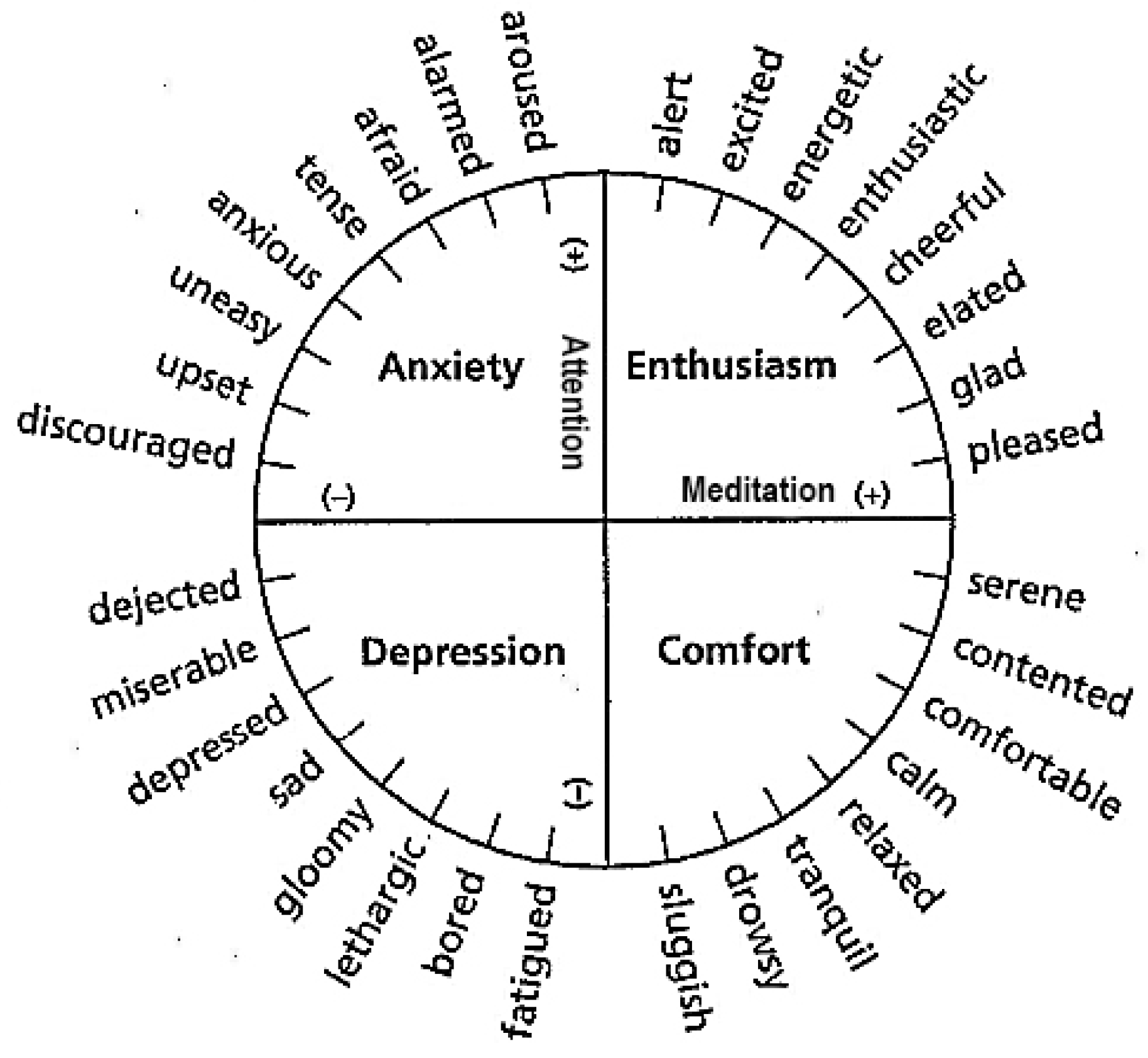

- Intentions of interpersonal interaction: Goals include alleviating loneliness, seeking stimulation, satisfying needs, and enhancing self-understanding. This study aims to evoke emotional resonance and generate positive experiences by integrating cognitive processes and context, measuring pleasantness, attention, and emotional intensity based on the emotional hourglass model.

- (2)

- Benefits of affective computing: Affective computing aids in real-time emotional regulation and interpersonal issue resolution, while also enhancing decision-making capabilities.

- (3)

- Advantages of multi-sensing interactive interfaces: Combining voice and gestures in multi-sensing interfaces surpasses traditional methods, improving focus and resolving ambiguous inputs through contextual semantic analysis. The interface’s effectiveness is evaluated in terms of sensitivity, flexibility, and measurement range, which helps enhance system accuracy and effectiveness.

- (4)

- Integration and evaluation of sensor data: Integrating multiple sensors to capture user input is assessed using the indicators of effectiveness, simplicity, and pleasantness to highlight the importance of user involvement in the interaction context.

3. Methodology

3.1. Selection of Research Methods

3.2. The Affinity Diagram Method

3.2.1. Idea of Using the Affinity Diagram Method

3.2.2. Application in This Study and Brainstorming Result

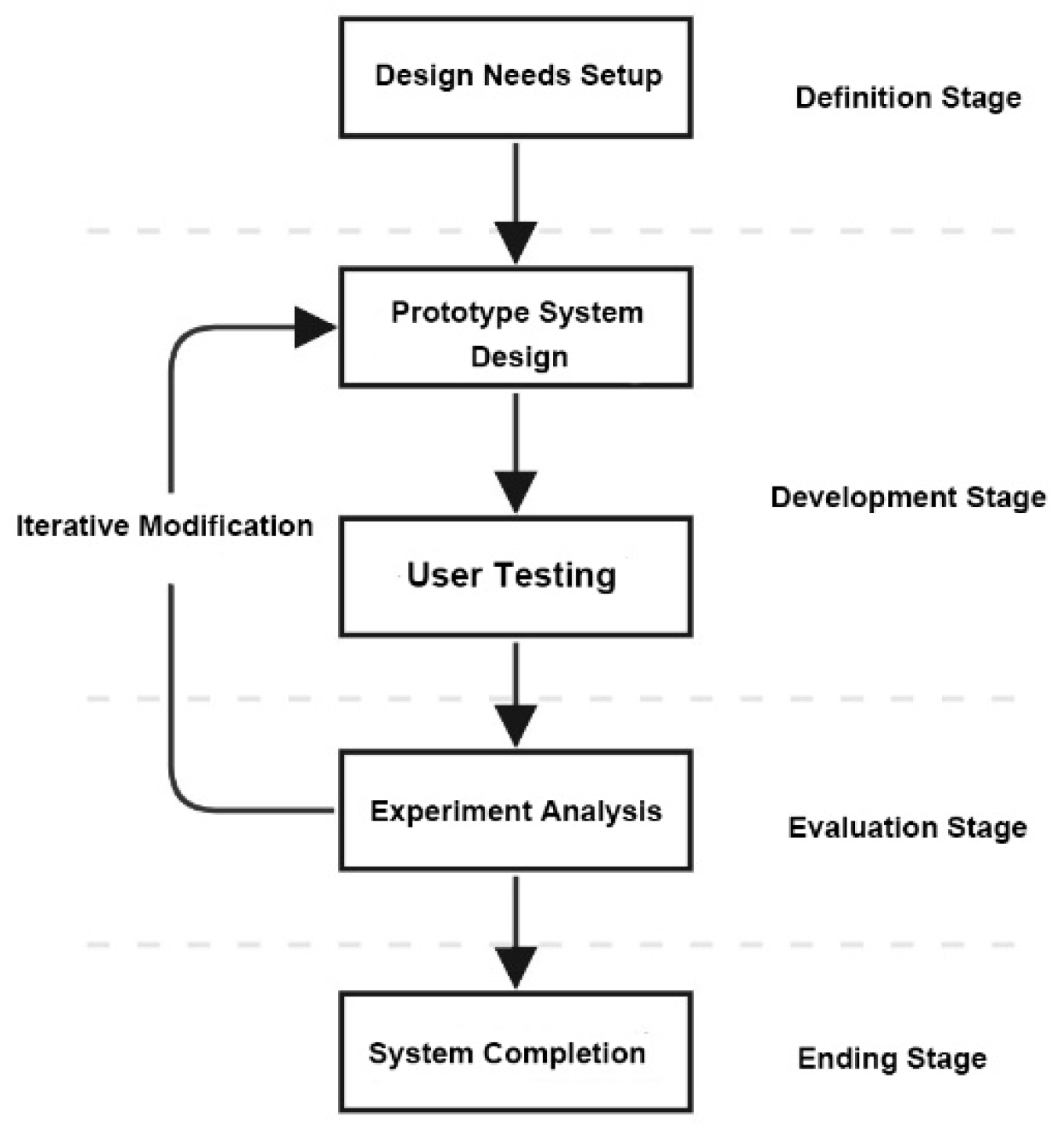

3.3. The Prototype Development Method

- (1)

- Defining stage - Defining the design needs for the system to be constructed.

- (2)

-

Development stage –

- (2.1)

- Designing a prototype system based on the techniques of multi-sensing interactive interfaces and affective computing.

- (2.2)

- Conducting user testing of the prototype system.

- (3)

-

Evaluation stage –

- (3.1)

- Conducting experiment analysis.

- (3.2)

- Iterating to Step (2.1) to modify the system whenever necessary.

- (4)

- Ending stage - Completing the system development

3.4. Indirect Observation Method

3.5. Questionnaire Survey Method

3.5.1. Ideas and Applications of the Method

3.5.2. Purpose of Survey and Question Design

4. System Design

4.1. The Design Concept of the Proposed System

4.2. Design of the Multi-Sensing Interaction Process for the Proposed System

4.2.1. Idea of the Design

4.2.2. Detailed Description of the Interaction Process

- (1)

-

Guidance Stage:

- (1.1)

- System Awakening - The user activates the proposed interactive system by approaching it. Audiovisual effects are used to bring the “emotional” drifting sailing journey to life on the TV screen through gesture control and interaction with objects.

- (1.2)

- Instruction learning - The user reviews the interactive scene and process instructions.

- (2)

-

Experience Stage:

- (2.1)

- Sailing forward - The user rows the boat forward using guided gestures to explore the interactive experience.

- (2.2)

- Finding a drifting bottle - The user locates a bottle drifting on the water, containing a letter presumably left by the previous user.

- (2.3)

- Picking up the bottle – Using appropriate gestures, the user retrieves the bottle, which contains four letters. Each letter includes answers to questions about “becoming a person with superpowers,” provided by the previous user.

- (2.4)

- Taking out a letter – The user imaginatively extracts one of the letters from the drift bottle and displays it on the interface.

- (2.5)

- Reading the letter content - Through gesture-based operations on the interface, the user reads the letter’s content, listening to the spoken message to experience and empathize with the emotions expressed by the previous user.

- (2.6)

- Repeating message reading four times – The user repeats Steps (2.4) and (2.5) until all four letters have been read.

- (3)

-

Sharing Input Stage:

- (3.1)

- Activating voice recording - The user presses a voice-control button on the interface using gestures to initiate the system’s recording mode.

- (3.2)

- Answering a question and recording – The user verbally responds to each of the four questions, sharing their emotions interactively with the previous user. The system records these responses for playback.

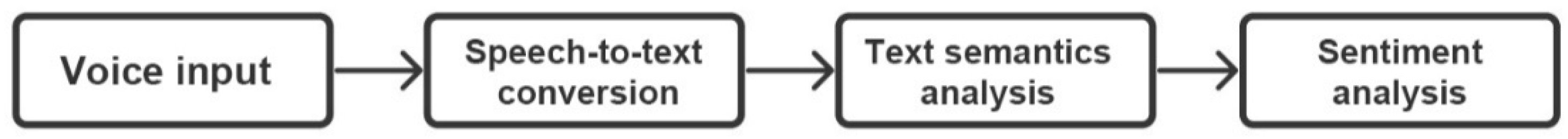

- (3.3)

- Transforming the message into text - The recorded voice is automatically converted into text in real time by speech-to-text technology, which is then displayed on the interface.

- (4)

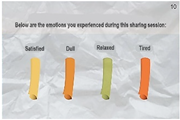

-

Emotional Review and Output Stage:

- (4.1)

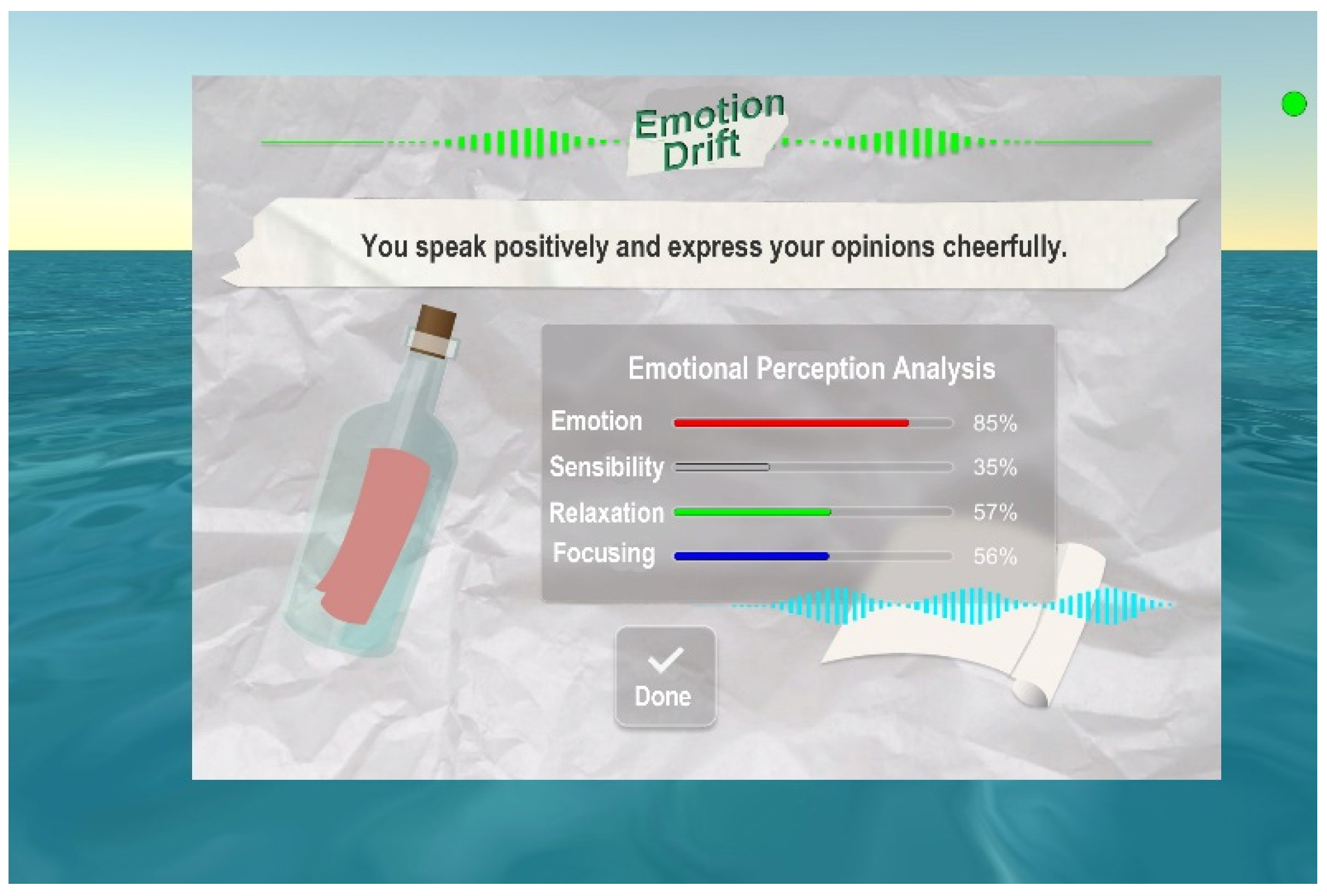

- Semantic analysis and emotion presentation - Using natural language processing, the system analyzes the text generated in the previous stage along with the brainwave data collected from the EEG. This analysis identifies the user’s emotions and related parameters, which are then displayed on the interface for review, including a text description and four parameter values.

- (4.2)

- Repeating answer recording and analysis four times – The user repeats the tasks outlined in Steps (3.1) through (3.3) and (4.1) until all four questions are answered.

- (5)

-

Ending Stage:

- (5.1)

- Showing the conclusion of emotion analysis – The system presents a summary of the user’s four emotional states through textual descriptions for review.

- (5.2)

- Drifting a bottle with message letters – The user writes responses to the questions on letters, places them in a bottle, and releases it into the water for the next user.

| Stage | Step theme | User’s interactive action | System’s operation | Interface display or environment situation |
|---|---|---|---|---|
| (1) Guidance Stage | (1.1) System awakening | The user awakens the system by waving a hand in front of the Leap Motion. | The Leap Motion senses and recognizes the user’s gesture, and the system moves the boat forward. |  |
|  | (1.2) Instruction learning | The user views the interactive scene and the process instructions. | The system displays the process instructions on the TV screen. |  |
| (2) Experience Stage | (2.1) Sailing forward | The user sails the boat forward with a rowing gesture. | The system shows the rowing action of the user’s two hands and the forward movement of the boat. |  |
|  | (2.2) Finding a drifting bottle | The user finds a drifting bottle on the water. | The system displays the bottle in front of the boat. |  |
|  | (2.3) Picking up the bottle | Using two-hand gestures, the user picks up the drifting bottle, which contains a letter left by the previous user. | The system shows two hands holding the bottle. |  |
|  | (2.4) Taking out a letter | The user takes a letter out of the bottle by pushing a button on the interface. | The system displays the corresponding question and four “push buttons” on the interface shown on the TV screen. |  |
|  | (2.5) Reading the letter content | The user reads the displayed message on the letter while listening to the message spoken aloud. | The system plays the audio of the message recorded when the previous user used the system. |  |
|  | (2.6) Repeating message reading four times | The user repeats Steps (2.4) and (2.5) until all four letters are read. | The system repeats the operations of Steps (2.4) and (2.5) four times as requested by the user. |  |
| (3) Sharing Input Stage | (3.1) Activating voice recording | The user pushes the “voice-recording button” on the interface by gesture to activate the system’s recording state. | The system enters the voice-recording state. |  |
|  | (3.2) Answering a question and recording | After pushing the corresponding button on the interface, the user answers a question by speaking his/her thoughts. | The system records the user’s spoken answer, which can be replayed for review if the user pushes the “listening button.” |  |
|  | (3.3) Transforming the message into text | The user views the text produced by the system, which is what he/she said as the answer to the question. | The system transforms the user’s recorded verbal statements into text and displays the result on the interface. |  |
| (4) Emotional Review and Output Stage | (4.1) Semantic analysis and emotion presentation | The user views the emotion analysis result on the TV screen, including a text description and four parameter values. | Using natural language processing, the system analyzes the text and brainwave data to identify the user’s emotions and presents them on the screen. |  |
|  | (4.2) Repeating answer recording and analysis four times | The user repeats the tasks of Steps (3.1) through (3.3) and (4.1) until all four questions are answered. | The system repeats the operations of Steps (3.1) through (3.3) and (4.1) four times as requested by the user. |  |
| (5) Ending Stage | (5.1) Showing the conclusion of emotion analysis | The user views the concluding textual listing of the system’s analysis of his/her emotions. | The system presents a concluding list of textual descriptions of the user’s four types of emotions. |  |
|  | (5.2) Drifting a bottle with message letters | The user imaginatively writes the answers on letters, puts them into a bottle, and sets it adrift for the next user. | The system shows the image of the bottle drifting away on the TV display. |  |
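The five-stage flow above, including its two repetition steps, can be sketched as a simple step sequence. This is only an illustrative outline under stated assumptions: the names `Session`, `run_session`, and `NUM_ITEMS` are hypothetical and not taken from the system, and the sensing, recording, and analysis operations are reduced to log entries.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

NUM_ITEMS = 4  # four letters to read and four questions to answer


@dataclass
class Session:
    """Hypothetical record of the steps one user passes through."""
    log: List[Tuple[str, str]] = field(default_factory=list)

    def step(self, step_id: str, theme: str) -> None:
        self.log.append((step_id, theme))


def run_session() -> Session:
    s = Session()
    # (1) Guidance Stage
    s.step("1.1", "system awakening")
    s.step("1.2", "instruction learning")
    # (2) Experience Stage
    s.step("2.1", "sailing forward")
    s.step("2.2", "finding a drifting bottle")
    s.step("2.3", "picking up the bottle")
    for _ in range(NUM_ITEMS):  # Step (2.6): repeat (2.4) and (2.5)
        s.step("2.4", "taking out a letter")
        s.step("2.5", "reading the letter content")
    # (3) Sharing Input Stage and (4) Emotional Review and Output Stage
    for _ in range(NUM_ITEMS):  # Step (4.2): repeat (3.1)-(3.3) and (4.1)
        s.step("3.1", "activating voice recording")
        s.step("3.2", "answering a question and recording")
        s.step("3.3", "transforming the message into text")
        s.step("4.1", "semantic analysis and emotion presentation")
    # (5) Ending Stage
    s.step("5.1", "showing the conclusion of emotion analysis")
    s.step("5.2", "drifting a bottle with message letters")
    return s


if __name__ == "__main__":
    for step_id, theme in run_session().log:
        print(step_id, theme)
```

Writing the two repetition steps as explicit loops makes it visible that the answers of one session become the letters read in the next one.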

4.2.3. Questions to Answer as Messages for User Interactions

| No. | Property | Question content |
|---|---|---|
| 1 | Introduction | What superpower did the previous person share? What are your thoughts on it? |
| 2 | Development | What do you think are the good and bad aspects of it? |
| 3 | Turning | What superpower would you want and why? |
| 4 | Conclusion | How would you recommend this superpower to friends and family? |

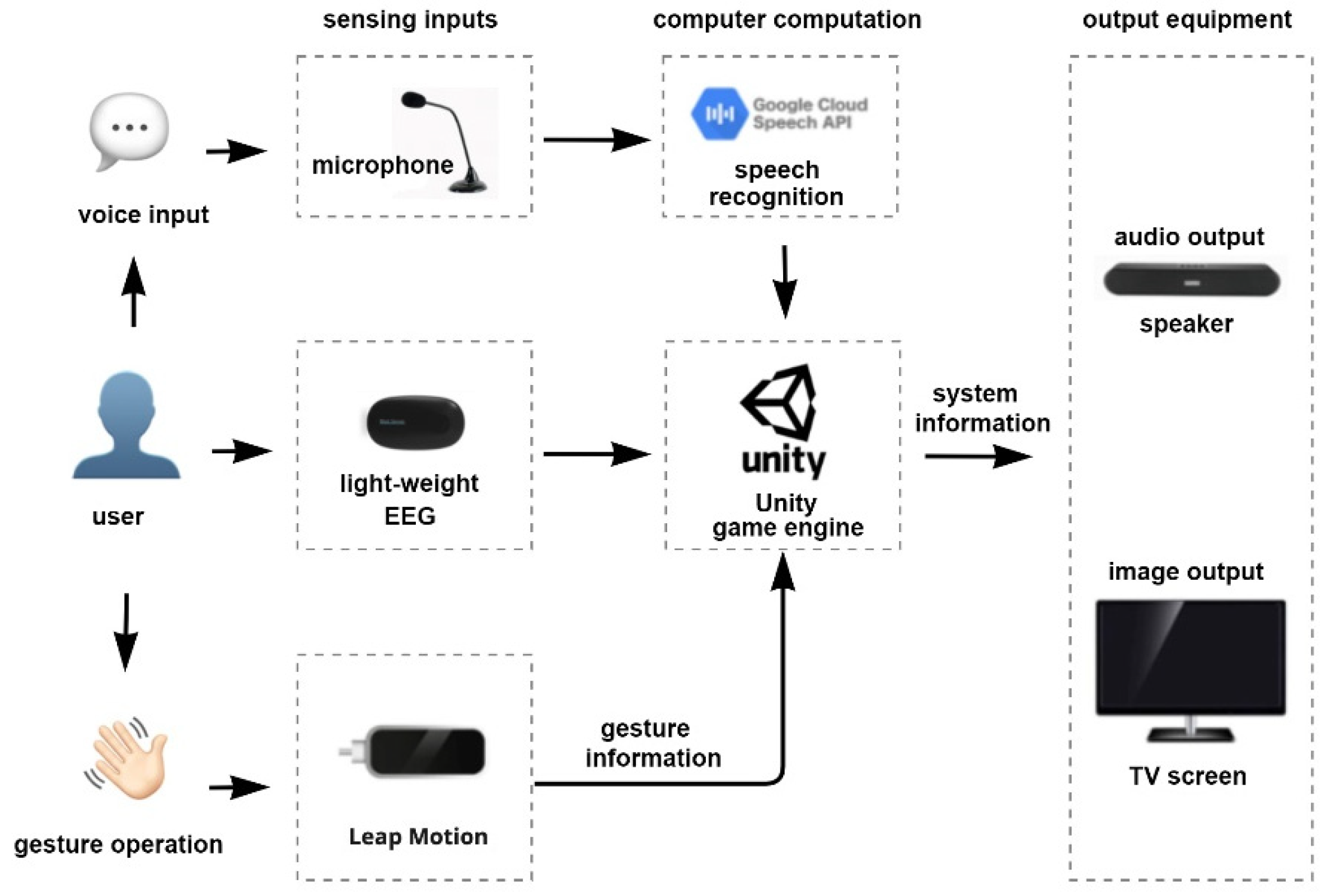

4.3. System Architecture and Employed Technologies

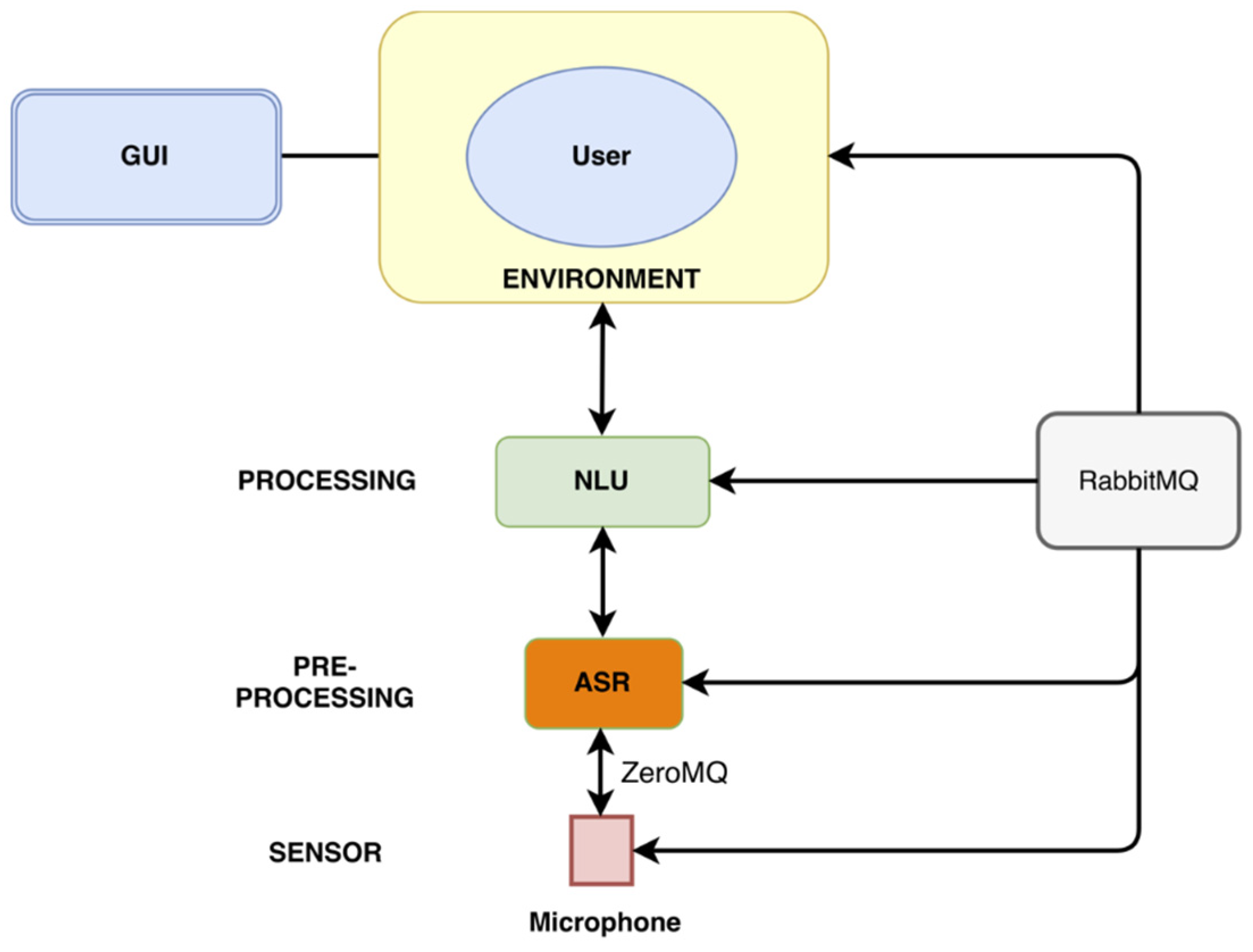

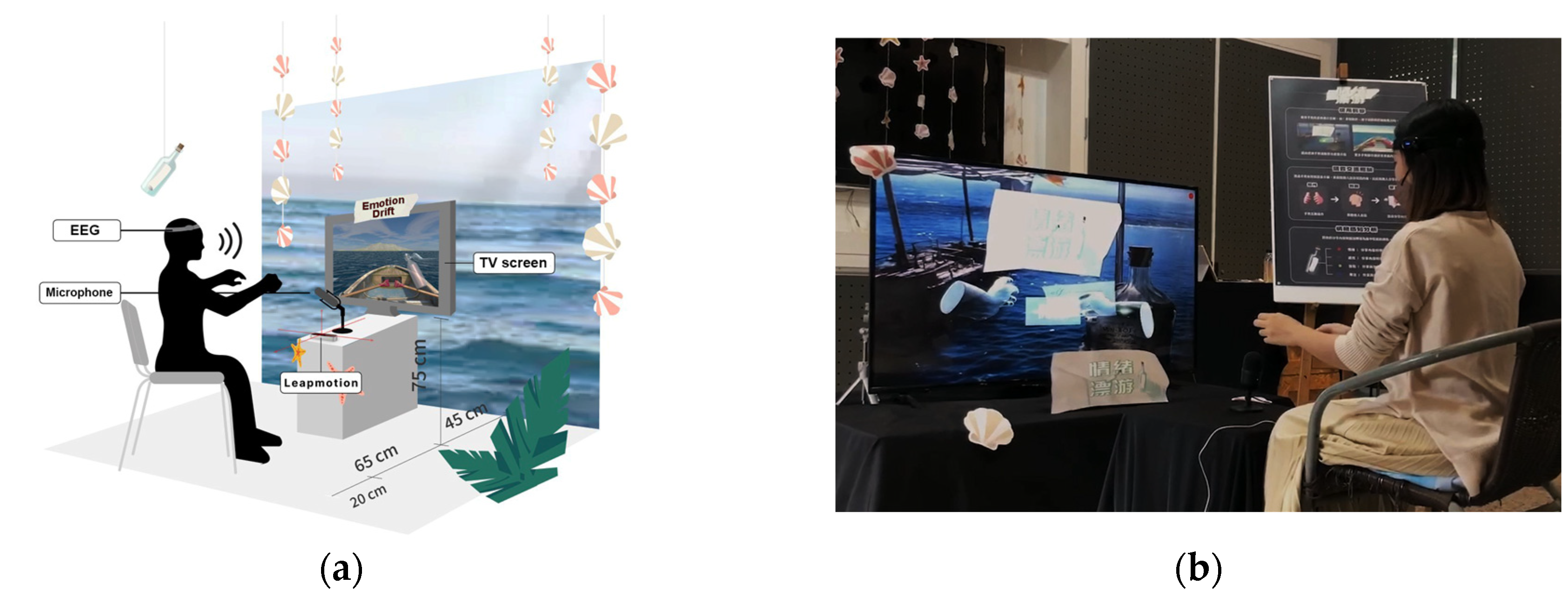

4.3.1. Overview of the Architecture

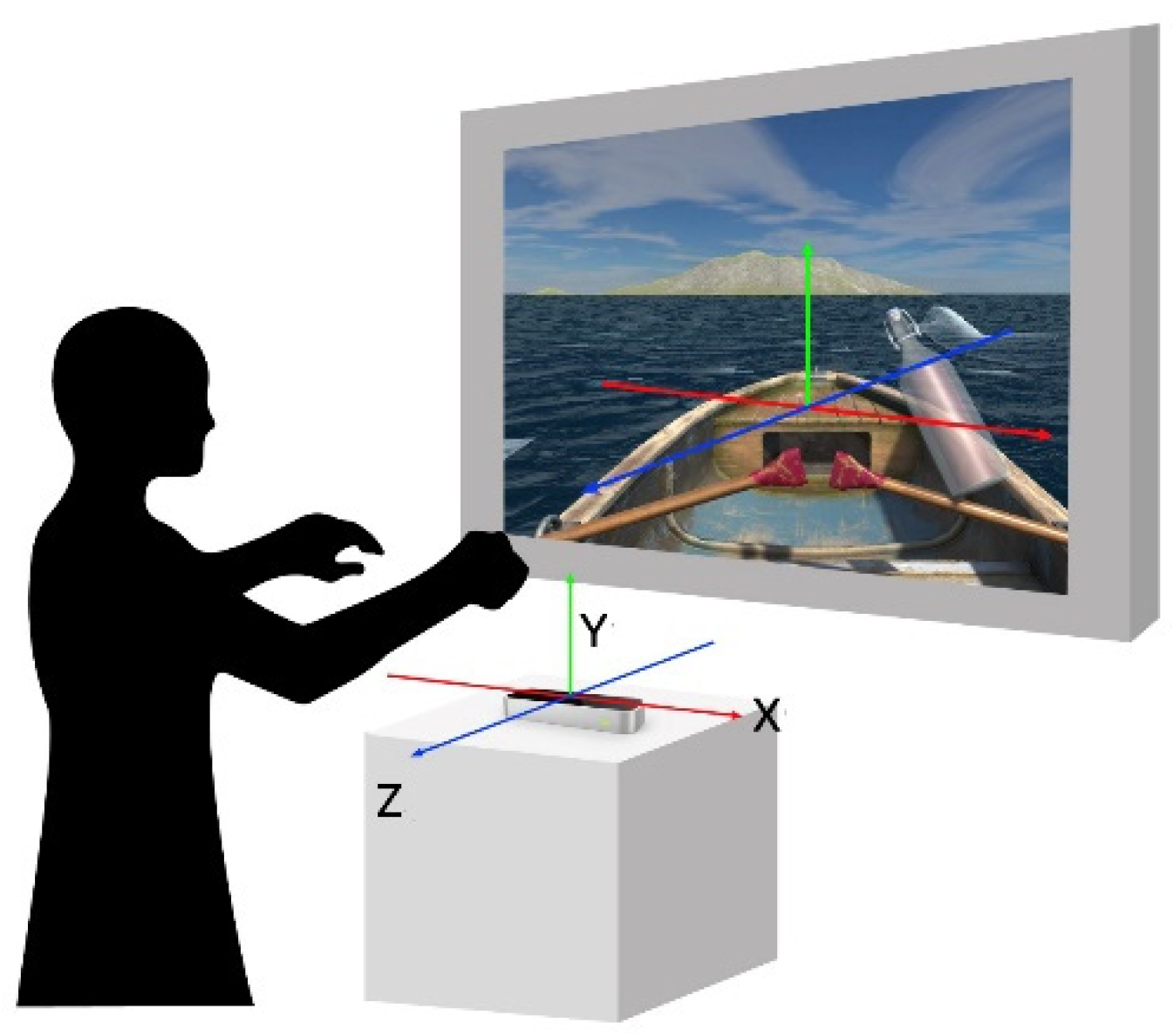

- Gesture Recognition: The Leap Motion sensor detects hand gestures to control objects within the virtual interface of the Leap Motion software.

- Voice Recognition: The Google Speech API processes voice input, converting speech to text in real time (Speech-to-Text, STT). It also employs natural language processing (NLP) to extract semantic meanings and performs sentiment analysis to assess the emotional content of the user’s speech.

- Brainwave Monitoring: The Mind Sensor EEG headset captures brainwave activity, providing data for observing and visualizing the user’s emotional state.

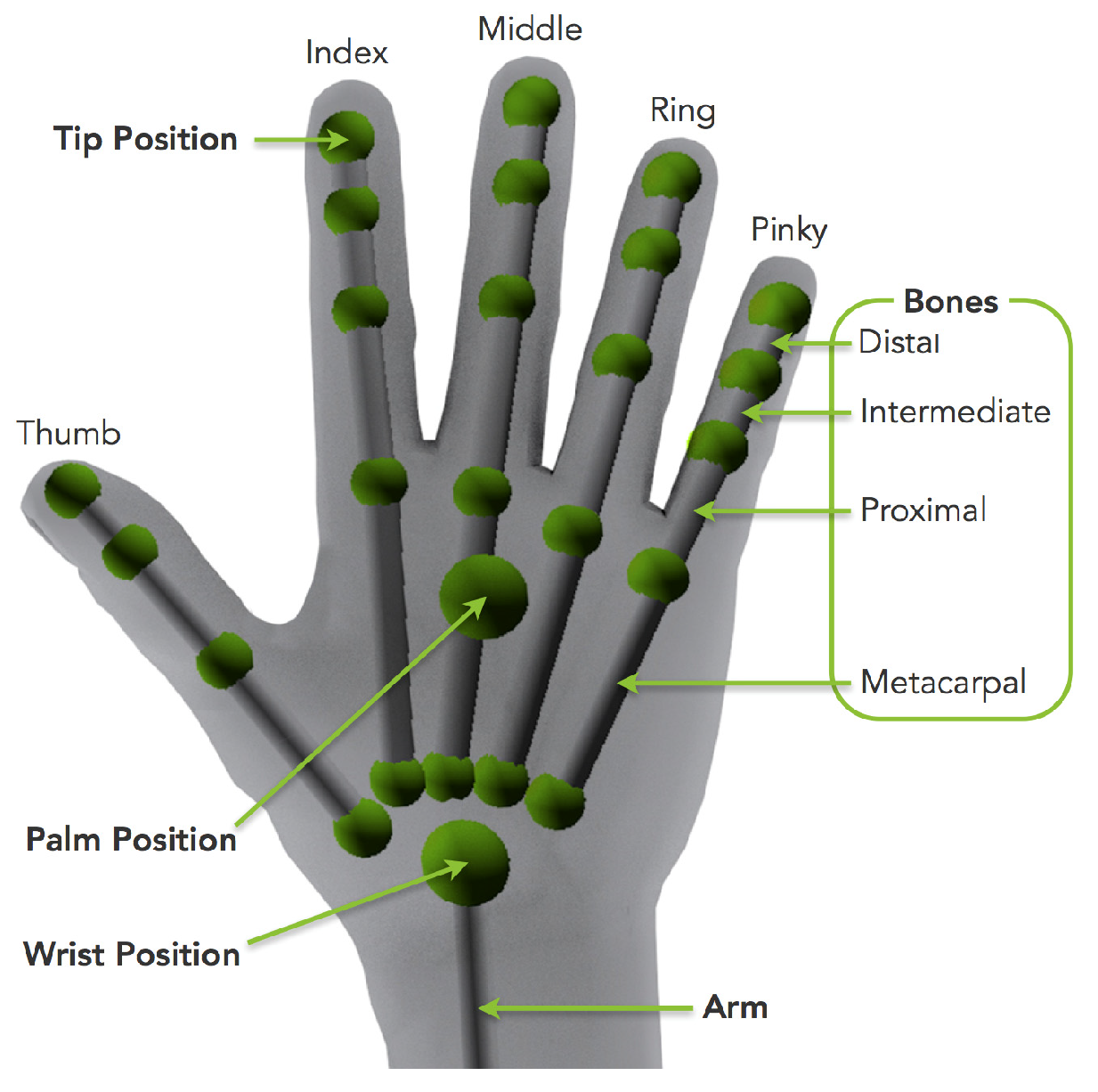

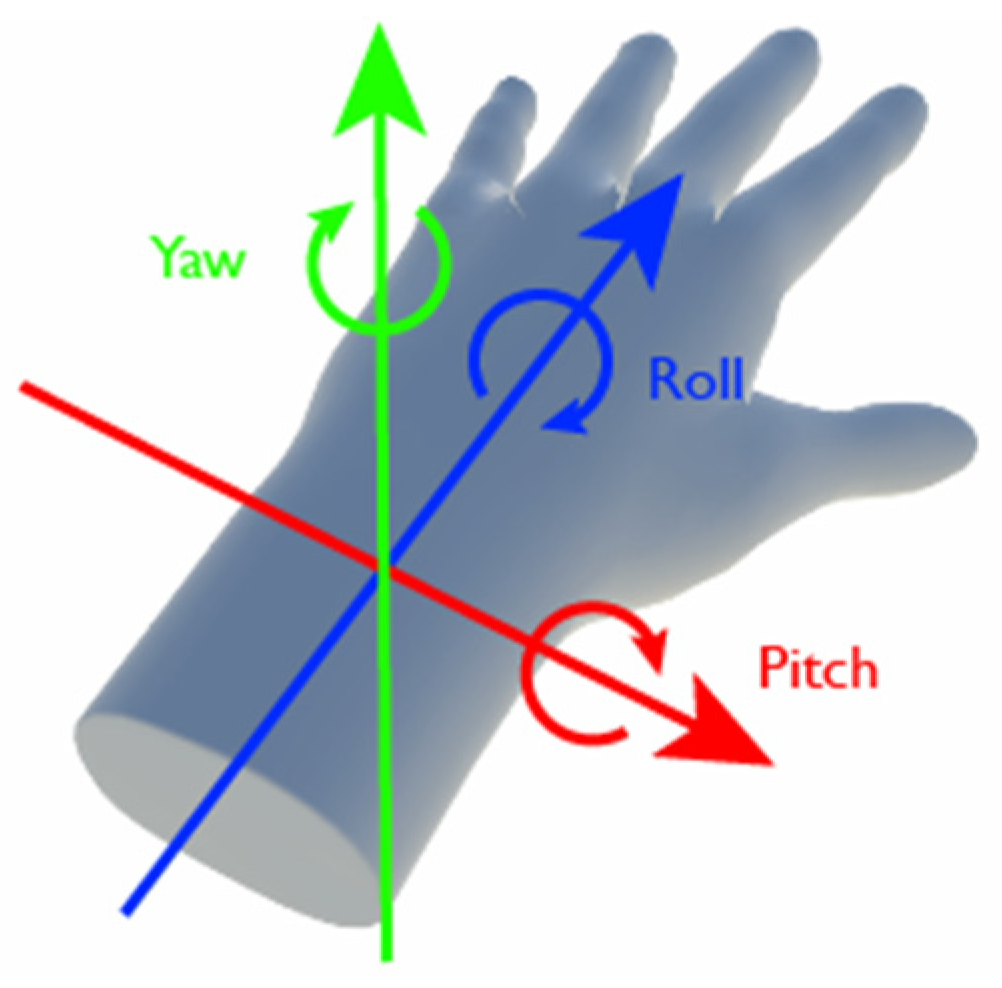

4.3.2. Leap Motion Hand Gesture Sensor

- (1)

- If four fingers on one hand are bent, it indicates a fist gesture.

- (2)

- If four fingers are straight, it indicates an open-hand gesture.

- (3)

- Using the OnTrigger collision events offered by Unity, collision detection is activated when the arm is detected to be in contact with an interactive object.

- (4)

- When the object is grabbable, making a fist will allow the object to be picked up, and an open hand will allow the object to be placed down.

- (5)

- When the object is an interactive button, the button will be pressed and moved by the arm. This can be cross-referenced with the gesture command table (Table 10) mentioned previously.
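The five rules above can be illustrated with a small sketch. The actual system runs in Unity with the Leap Motion software, so the code below is only a plain-Python stand-in for that logic: `classify_hand`, `Interactable`, and `update` are hypothetical names, the `touching` flag stands in for Unity's trigger callback, and which four fingers are checked is an assumption, since the text does not specify them.

```python
from dataclasses import dataclass
from typing import Sequence


def classify_hand(fingers_extended: Sequence[bool]) -> str:
    """Rules (1)-(2): classify a hand from four finger flags (True = straight)."""
    if len(fingers_extended) != 4:
        raise ValueError("expected exactly four finger flags")
    if not any(fingers_extended):
        return "fist"   # all four fingers bent
    if all(fingers_extended):
        return "open"   # all four fingers straight
    return "other"      # mixed pose, not covered by the rules


@dataclass
class Interactable:
    name: str
    kind: str            # "grabbable" or "button"
    held: bool = False
    pressed: bool = False


def update(obj: Interactable, gesture: str, touching: bool) -> None:
    """Rules (3)-(5): react to the gesture while the arm touches the object."""
    if obj.kind == "button":
        obj.pressed = touching           # a button is pressed by contact alone
    elif obj.kind == "grabbable":
        if gesture == "fist" and touching:
            obj.held = True              # fist on a touched object picks it up
        elif gesture == "open":
            obj.held = False             # open hand puts the object down


if __name__ == "__main__":
    bottle = Interactable("bottle", "grabbable")
    update(bottle, classify_hand([False] * 4), touching=True)
    print(bottle)
```

Keeping pose classification separate from the object reaction mirrors the split in the rules: the first two describe the hand, the remaining three describe what happens on contact.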

4.3.3. Google Speech API

4.3.4. Mind Sensor EEG

4.3.5. Visual Displays of System Data and Verbal Descriptions of Users’ Emotion States

5. System Experience Experiment and Experimental Data Analysis

5.1. System Exhibition and Experiment Processes

- (1)

- Experiment Venue: Design Building II, First Floor, and Interactive Multimedia Design Laboratory in Yunlin University of Science and Technology in Yunlin, Taiwan.

- (2)

- Participants: Users aged 18 and above.

- (3)

- Sample Size: 60 participants.

- (4)

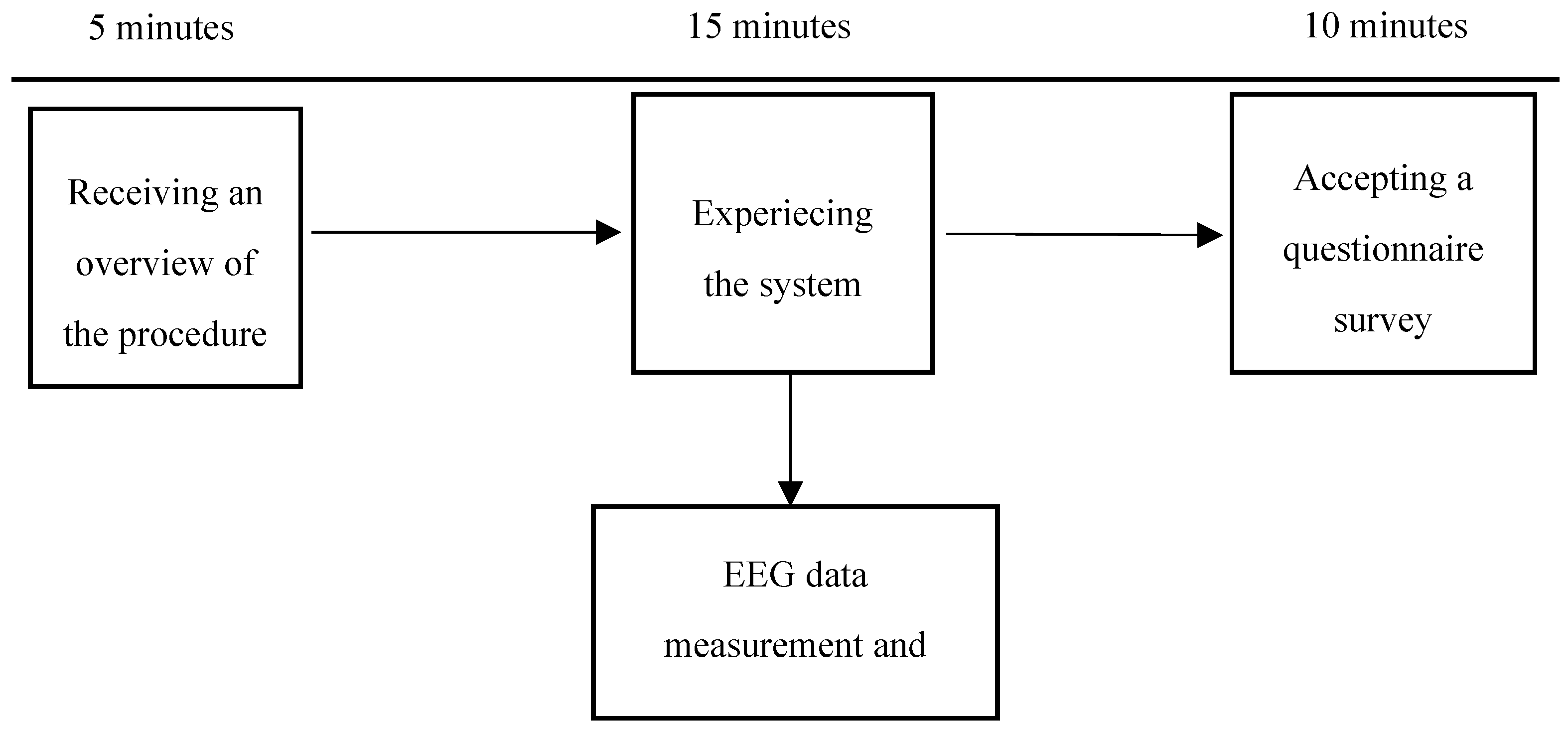

- Procedure: The experiment lasts about 20 minutes per participant. Upon arrival, each participant receives a brief overview of the procedure. They then engage with the interactive system while their behaviors are observed. Finally, participants complete a questionnaire survey. The details from a user’s view are shown in Figure 21.

5.2. Indirect Observation and Analysis of Collected Data

5.2.1. Ideas of Applying the Indirect Observation Method

5.2.2. Statistical Analysis of Correlations of Semantic and Brainwave Data

- (A)

- Terminology definitions ---

- (1)

- semantic (listening i) – the semantic data of the i-th message left by the previous user which is listened to by the current user, with i = 1 through 4;

- (2)

- semantic (speaking i) – the semantic data of the i-th message spoken by the current user, with i = 1 through 4;

- (3)

- meditation (listening i) – the meditation value measured for the current user while listening to the i-th message left by the previous user;

- (4)

- attention (listening i) – the same as above except that the parameter value is attention;

- (5)

- meditation (speaking i) – the meditation value measured for the current user while speaking the i-th message;

- (6)

- attention (speaking i) – the same as above except that the parameter value is attention;

- (B)

- Types of investigated correlations of emotion states ---

- (1)

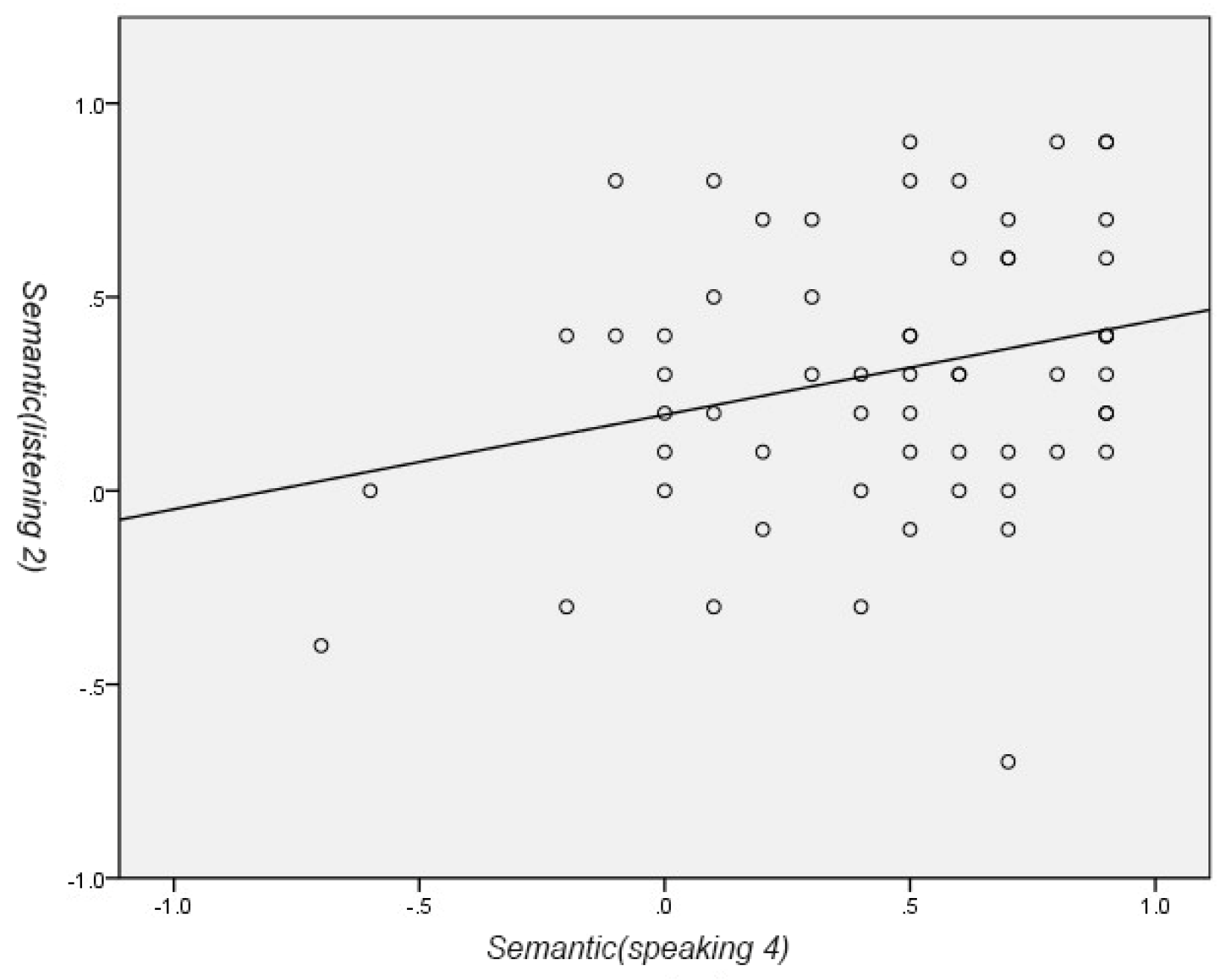

- Type 1: the correlation between the semantic of the i-th message left by the previous user (and listened to by the current user) and the semantic of the j-th message spoken by the current user, i.e., the correlation between semantic (listening i) and semantic (speaking j), i ≠ j.

- (2)

- Type 2: the correlation between the semantic of the i-th message left by the previous user and the meditation or attention value measured for the current user when listening to the j-th message, i.e., the correlation between semantic (listening i) and meditation (speaking j) or attention (speaking j), i ≠ j.

- (3)

- Type 3: the correlation between the meditation (or attention) value measured for the current user while listening to the i-th message and the meditation (or attention) value while listening to the (i+1)-th message, i.e., the correlation between meditation (listening i) (or attention (listening i)) and meditation (listening i+1) (or attention (listening i+1)).

- (4)

- Type 4: the correlation between the meditation (or attention) value measured for the current user while speaking the i-th message and the meditation (or attention) value while speaking the (i+1)-th message, i.e., the correlation between meditation (speaking i) (or attention (speaking i)) and meditation (speaking i+1) (or attention (speaking i+1)).

- (C)

- An illustrated case of emotion correlation ---

- (D)

- List of the four types of correlations investigated in this study ---
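To make the terminology above concrete, the following sketch shows how such correlations could be computed across participants. It assumes the Pearson correlation coefficient, which the text does not name explicitly, and a hypothetical data layout in which each (measure, phase, message index) key maps to one value per participant. The helpers `pearson`, `type1`, and `consecutive` are illustrative names; only Type 1 and the consecutive-message correlations of Types 3 and 4 are covered.

```python
from math import sqrt
from typing import Dict, Sequence, Tuple

Key = Tuple[str, str, int]         # (measure, phase, message index)
Data = Dict[Key, Sequence[float]]  # one value per participant


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equally long samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two samples of equal length >= 2")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / sqrt(vx * vy)


def type1(data: Data, i: int, j: int) -> float:
    """Type 1: semantic (listening i) versus semantic (speaking j)."""
    return pearson(data[("semantic", "listening", i)],
                   data[("semantic", "speaking", j)])


def consecutive(data: Data, measure: str, phase: str, i: int) -> float:
    """Types 3 and 4: a measure at message i versus message i+1.

    measure is "meditation" or "attention"; phase is "listening" (Type 3)
    or "speaking" (Type 4).
    """
    return pearson(data[(measure, phase, i)], data[(measure, phase, i + 1)])
```

With 60 participants, each list would hold 60 values, and `consecutive(data, "attention", "speaking", 2)` would give the Type 4 correlation between the second and third spoken messages.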

5.2.3. Concluding Remarks of Statistical Analysis

- (1)

- From all the types:

- (2)

- From Type 1:

- (3)

- From Type 2:

- (4)

- From Type 3:

- (5)

- From Type 4:

5.2.4. Observations of Emotion Changes in Listening and Sharing

- (A)

- Message listening phases

- (B)

- Message sharing phases

5.2.5. Concluding Remarks about Emotion Changes while Using the System

5.3. Questionnaire Survey and Statistical Analysis of Answer Data

5.3.1. Statistics of Participants in the Questionnaire Survey

5.3.2. Design of Questionnaire

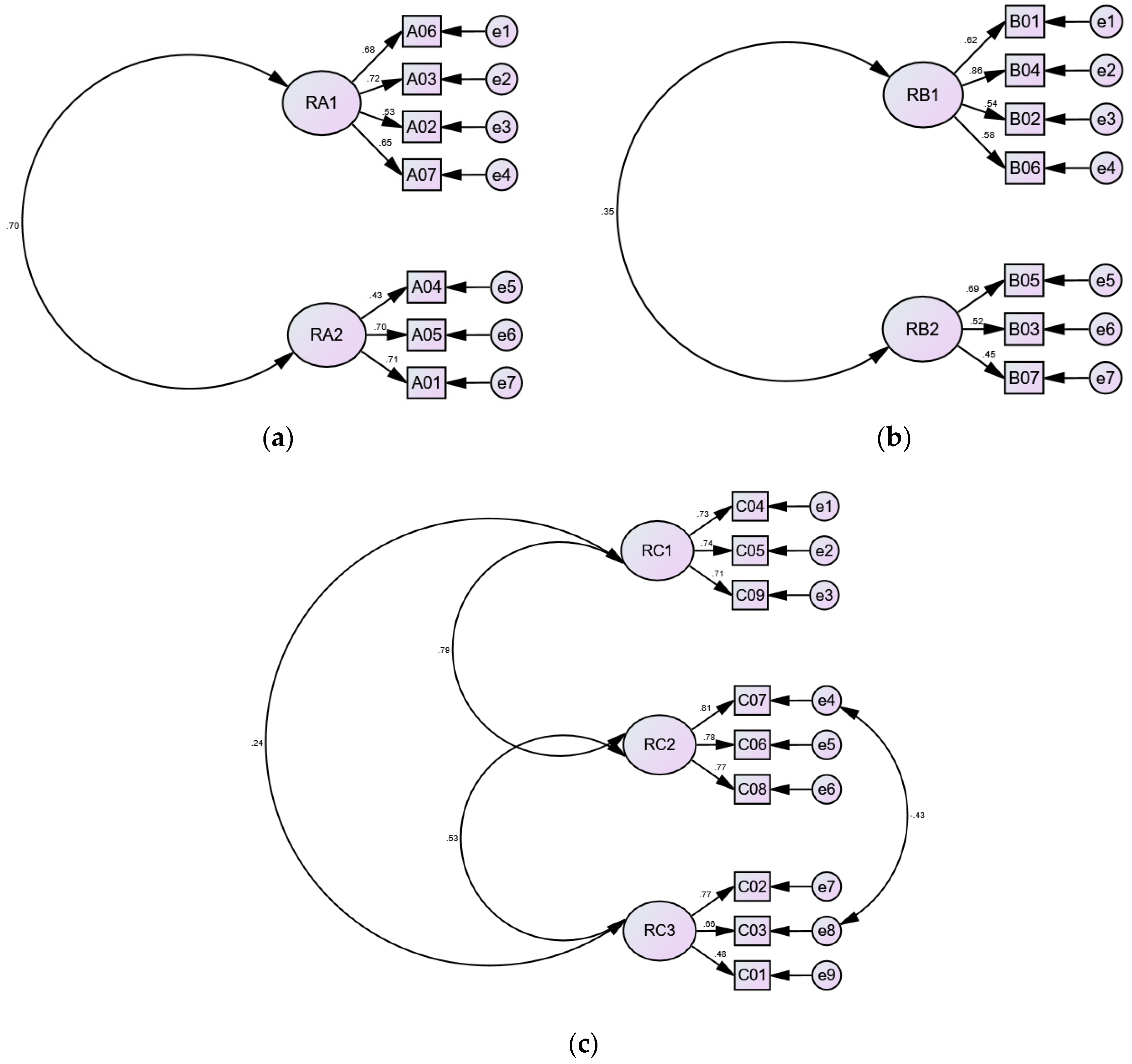

5.3.3. Analysis of Reliability and Validity of Collected Questionnaire Data

- (1)

- Step 1: Verification of the adequacy of the answer dataset

- (2)

- Step 2: Finding the latent dimensions of the question datasets

- (3)

- Step 3: Verifying the reliability of the answer data

- (4)

- Step 4: Verification of the applicability of the structural model

- (5)

- Step 5: Verification of the validity of the question answer data
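As an illustration of the reliability check in Step 3, the sketch below computes Cronbach's alpha, a commonly used internal-consistency statistic. Its use here is an assumption, since the step list does not name the statistic applied, and `cronbach_alpha` is a hypothetical helper. Each inner list holds one question item's scores across all respondents.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(items: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for k item columns, each with n respondents' scores."""
    k = len(items)
    if k < 2:
        raise ValueError("need at least two items")
    n = len(items[0])
    if n < 2 or any(len(col) != n for col in items):
        raise ValueError("all items need the same number (>= 2) of scores")
    # Total score of each respondent across all items
    totals = [sum(col[r] for col in items) for r in range(n)]
    total_var = variance(totals)
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    item_var_sum = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - item_var_sum / total_var)
```

Values closer to 1 indicate that the items of a latent dimension vary together; the acceptance threshold is a matter of convention and is not fixed by this sketch.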

5.3.4. Detailed Analyses of Answer Data of the Dimension of System Usability

- (A)

- Analysis about the latent dimension of “functionality”

- (1)

- The average values for this latent dimension range from 4.00 to 4.43, with standard deviations between 0.62 and 0.86, indicating user agreement with all question items.

- (2)

- The average value for question A06, “the various functions of the system are well integrated,” is 4.43 and the standard deviation is 0.59, with over 95% agreement, showing strong consensus on the effectiveness of function integration.

- (3)

- The average value for question A02, “I am able to understand and become familiar with each function of the system,” is 4.23 and the standard deviation is 0.62, with over 90% agreement, suggesting the system is easy for most users to understand.

- (4)

- The average value for question A07, “I would like to use this system frequently,” is 4.00 and the standard deviation is 0.78, with over 80% agreement; however, over 15% of the users were neutral, indicating some indifference about using the system again.

- (5)

- All four items in this latent dimension have over 80% agreement, reflecting that the users find the proposed system to have good usability.

- (B)

- Analysis about the latent dimension of “effectiveness”

- (1)

- The average values for this dimension range from 4.00 to 4.38, with standard deviations between 0.61 and 0.75, indicating high perceived effectiveness of the system.

- (2)

- The standard deviation value for question A04, “the emotions output by the system seem accurate,” is above 0.7, showing some variation in user opinions. However, with an average of 4.0, it indicates that most users agree that their perceived emotions align with the system’s results.

- (3)

- Excluding question A04, each remaining question has over 90% agreement and a standard deviation below 0.62, reflecting a consistent user understanding of the effectiveness of the proposed system.
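The per-item figures reported in this and the following subsections (average, standard deviation, agreement rate, and share of neutral responses) can be reproduced from raw answers along the following lines. This is a minimal sketch assuming a 5-point Likert scale in which 4 and 5 count as agreement and 3 as neutral, and assuming the sample standard deviation; neither assumption is confirmed by the text, and `summarize_item` is a hypothetical helper.

```python
from statistics import mean, stdev
from typing import Dict, Sequence


def summarize_item(responses: Sequence[int],
                   agree_threshold: int = 4,
                   neutral_value: int = 3) -> Dict[str, float]:
    """Summarize one questionnaire item from its Likert-scale responses."""
    n = len(responses)
    if n < 2:
        raise ValueError("need at least two responses")
    return {
        "mean": mean(responses),
        "sd": stdev(responses),  # sample standard deviation (assumed)
        "agree_rate": sum(r >= agree_threshold for r in responses) / n,
        "neutral_rate": sum(r == neutral_value for r in responses) / n,
    }


if __name__ == "__main__":
    # Made-up answers for illustration only, not data from the study
    print(summarize_item([5, 5, 4, 4, 3]))
```

Reporting the neutral share next to the agreement rate helps separate items with genuinely divided opinions from items where respondents were merely undecided.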

5.3.5. Detailed Analyses of Answer Data of the Dimension of Interactive Experience

- (A)

- Analysis about the latent dimension of “empathy”

- (1)

- The average values for this latent dimension range from 3.9 to 4.01, with standard deviations between 0.80 and 1.13, indicating that most users have a positive experience regarding the emotions related to empathy during the interactions.

- (2)

- For questions B01, B02, and B04, more than 15% of responses were neutral, indicating notable variability in user opinions. Despite these differences, the overall response still reflects a positive user experience.

- (3)

- In particular, questions B04 and B01, both about empathy and caring about others, have agreement rates of at least 77% and 79%, respectively, meaning that most users reported improved feelings in these respects after using the system.

- (B)

- Analysis about the latent dimension of “isolation”

- (1)

- The average values for this dimension range from 3.2 to 4.06, with standard deviations between 0.93 and 1.29, indicating that users generally report a positive tendency in their interactions with the system, though with a few neutral responses.

- (2)

- There are three question items in this latent dimension, all with standard deviations greater than 0.9, and questions B03 and B07 have standard deviations exceeding 1.0, indicating considerable divergence in user opinions for these questions.

- (3)

- For question B05, “I feel lonely while using the system,” an agreement rate of over 80% was observed, suggesting that the system helps alleviate loneliness and enhances interpersonal relationships.

5.3.6. Detailed Analyses of Answer Data of the Dimension of Pleasure Experience

- (A)

- Analysis about the latent dimension of “satisfaction”

- (1)

- The average values for this latent dimension range from 4.11 to 4.73, with standard deviations between 0.54 and 0.94, reflecting the users’ good experiences of pleasure while using the proposed system.

- (2)

- For question C04, “I find the system’s interactive experience interesting,” the average value is 4.73 and the standard deviation is 0.54, with 99% agreement, indicating that users are highly satisfied with the system’s interaction process and find it very interesting and enjoyable.

- (3)

- For question C09, “time seems to fly when I am using the system,” the average was 4.11, with a standard deviation of 0.94 and 18% of neutral responses. This indicates that there is discrepancy in user perceptions and thoughts regarding this question.

- (4)

- Aside from question C09, questions C04 and C05 achieved over 86% agreement, suggesting that most users perceive the system as able to provide positive emotional experiences such as happiness and pleasure.

- (B)

- Analysis about the latent dimension of “immersion”

- (1)

- The average values for this latent dimension range from 4.23 to 4.45, with standard deviations between 0.67 and 0.88, indicating that users generally report positive and pleasant experiences.

- (2)

- For question C07, “I enjoy using the system,” the average value is 4.45 and the standard deviation is 0.67, with over 93% agreement, indicating that users strongly affirm the pleasurable experience provided by the system.

- (3)

- For question C09, “time seems to fly when I am using the system,” the average is 4.11, with a standard deviation of 0.94 and 18% of neutral responses. This indicates that some discrepancy exists in user perceptions and thoughts regarding this question.

- (4)

- This latent dimension includes three questions, with all achieving over 84% agreement, suggesting that the proposed system is generally perceived by the users as providing enjoyable, immersive, and satisfying emotional experiences throughout the interaction process.

- (C)

- Analysis about the latent dimension of “relaxation”

- (1)

- The average values for this latent dimension range from 3.85 to 4.36, with standard deviations between 0.66 and 0.84, indicating that users report a positive and active experience of stress relief during the interactions.

- (2)

- Among the three questions in this latent dimension, question C01, “I feel special when using the system,” has a standard deviation of 0.84 with 28% of users providing neutral responses, the highest proportion in this latent dimension. This indicates noticeable variation in users’ opinions regarding this question.

- (3)

- Questions C02 and C03 have over 85% agreement, indicating that users perceive the proposed system as highly effective in providing relaxation and stress relief.

5.3.7. Concluding Remarks about the Analysis of the Questionnaire Survey Results

- (1)

- In the system usability dimension of the questionnaire, which is divided into two latent dimensions — “functionality” and “effectiveness” — the overall analysis indicates that the Emotion Drift System designed in this study demonstrates moderate usability, as reported by users after interacting with the system.

- (2)

- In the interactive experience dimension of the questionnaire, which includes the latent dimensions of “empathy” and “isolation,” questions B03, B06, and B07 have standard deviations greater than 1.0, reflecting variability in users’ responses. Nevertheless, the overall results show that the system effectively reduces loneliness, fosters empathy, and enhances interpersonal interactions.

- (3)

- In the pleasure experience dimension, which is divided into three latent dimensions — “satisfaction,” “immersion,” and “relaxation” — the overall analysis reveals that the Emotion Drift System demonstrates satisfactory pleasure-inducing qualities.

6. Conclusions and Suggestions

6.1. Conclusions

- (1)

- Emotional transmission in interactions is confirmed, with overall positive user emotions and notable individual variations.

- (2)

- The proposed emotional perception system “Emotion Drift” effectively stimulates positive emotions in users and strengthens interpersonal interactions.

- (3)

- A positive correlation was found between semantic analysis and the brainwave metrics of relaxation and concentration.

- (4)

- The interaction experience based on multimodal and affective computing provides users with feelings of pleasure and relaxation.

6.2. Suggestions for Future Research

- (1)

- Exploring multi-sensing interaction and affective computing across different age groups.

- (2)

- Choosing a fixed, quiet, comfortable experimental environment.

- (3)

- Designing longer induction phases to better cultivate emotions.

- (4)

- Using more intuitive and readable visualizations for emotional information.

- (5)

- Conducting experiments with diverse multi-sensing interaction interfaces and affective computing technologies.

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Dubé, J. P.; Smith, M. M.; Sherry, S.B.; Hewitt, P. L.; Stewart, S. H. Suicide behaviors during the COVID-19 pandemic: A meta-analysis of 54 studies. Psychiatry Res. 2021, 301, 113998. [Google Scholar] [CrossRef] [PubMed]

- Krogstad, J.; Passel, J.; Cohn, D.V. Pew Research Center. US Border Apprehensions Of Families And Unaccompanied Children Jump Dramatically.

- World Mental Health Day. World Health Organization. Available online: https://www.who.int/campaigns/world-mental-health-day/2023 (accessed on 22 August 2024).

- Arya, R.; Singh, J.; Kumar, A. A survey of multidisciplinary domains contributing to affective computing. Comput. Sci. Rev. 2021, 40, Article 100399. [CrossRef]

- Collins, R. Interaction ritual chains. In Interaction Ritual Chains; Princeton University Press: Princeton, NJ, USA, 2014. [Google Scholar]

- Meng, L.; Zhao, Y.; Jiang, Y.; Bie, Y.; Li, J. Understanding interaction rituals: The impact of interaction ritual chains of the live broadcast on people’s wellbeing. Front. Psychol. 2022, 13. [Google Scholar] [CrossRef] [PubMed]

- Zhao, S.; Wang, S.; Soleymani, M.; Joshi, D.; Ji, Q. Affective computing for large-scale heterogeneous multimedia data: A survey. ACM Trans. Multim. Comput. Commun. Appl. (TOMM) 2019, 15, 1–32. [Google Scholar] [CrossRef]

- Picard, R.W. Affective computing: from laughter to IEEE. IEEE Trans. Affect. Comput. 2010, 1, 11–17. [Google Scholar] [CrossRef]

- Samadiani, N.; Huang, G.; Cai, B.; Luo, W.; Chi, C.-H.; Xiang, Y.; He, J. A review on automatic facial expression recognition systems assisted by multimodal sensor data. Sensors 2019, 19, 1863. [Google Scholar] [CrossRef]

- Gervasi, R.; Barravecchia, F.; Mastrogiacomo, L.; Franceschini, F. Applications of affective computing in human-robot interaction: State-of-art and challenges for manufacturing. Proc. Inst. Mech. Eng. Part B-J. Eng. Manuf. 2022. [Google Scholar] [CrossRef]

- Yongda, D.; Fang, L.; Huang, X. Research on multimodal human-robot interaction based on speech and gesture. Comput. Electr. Eng. 2018, 72, 443–454. [Google Scholar] [CrossRef]

- Samaroudi, M.; Echavarria, K.R.; Perry, L. Heritage in lockdown: digital provision of memory institutions in the UK and US of America during the COVID-19 pandemic. Museum Manag. Curatorship 2020, 35, 337–361. [Google Scholar] [CrossRef]

- Coherent Market Insights. Affective Computing Market Analysis. Available online: https://www.coherentmarketinsights.com/market-insight/affective-computing-market-5069 (accessed on 22 August 2024).

- DeVito, J.A. The Interpersonal Communication Book, 13th ed.; United: New York, NY, USA, 2013. [Google Scholar]

- Manning, J. Interpersonal communication. In The SAGE International Encyclopedia of Mass Media and Society; SAGE Publications: Thousand Oaks, CA, USA, 2020; Volume 2, pp. 842–845. [Google Scholar]

- Burgoon, J.K.; Berger, C.R.; Waldron, V. R. Mindfulness and interpersonal communication. J. Soc. Issues 2000, 56, 105–127. [Google Scholar] [CrossRef]

- Norman, D. The Design of Everyday Things: Revised and Expanded Edition; Basic Books: New York, NY, USA, 2013. [Google Scholar]

- Auxier, B.; Anderson, M. Social media use in 2021. Pew Res. Center 2021, 1, 1–4. [Google Scholar]

- Derks, D.; Fischer, A.H.; Bos, A.E. The role of emotion in computer-mediated communication: A review. Comput. Hum. Behav. 2008, 24, 766–785. [Google Scholar] [CrossRef]

- Derks, D.; Bos, A.E.; Von Grumbkow, J. Emoticons and social interaction on the Internet: The importance of social context. Comput. Hum. Behav. 2007, 23, 842–849. [Google Scholar] [CrossRef]

- Li, C.; Ning, G.; Xia, Y.; Guo, K.; Liu, Q. Does the internet bring people closer together or further apart? The impact of internet usage on interpersonal communications. Behav. Sci. 2022, 12, 425. [Google Scholar] [CrossRef] [PubMed]

- Chartrand, T.L.; Bargh, J.A. The chameleon effect: The perception–behavior link and social interaction. J. Pers. Soc. Psychol. 1999, 76, 893. [Google Scholar] [CrossRef]

- Reis, H.T.; Regan, A.; Lyubomirsky, S. Interpersonal chemistry: What is it, how does it emerge, and how does it operate? Perspect. Psychol. Sci. 2022, 17, 530–558. [Google Scholar] [CrossRef]

- Miles, L.K.; Nind, L.K.; Macrae, C.N. The rhythm of rapport: Interpersonal synchrony and social perception. J. Exp. Soc. Psychol. 2009, 45, 585–589. [Google Scholar] [CrossRef]

- Stephens, J.P.; Heaphy, E.; Dutton, J.E. High-quality connections. In Cameron, K. and Spreitzer, G., Eds., Handbook of Positive Organizational Scholarship; Oxford University Press: New York, NY, USA, 2012; pp. 385–399. [Google Scholar]

- Jiarui, W.; Xiaoli, Z.; Jiafu, S. Interpersonal relationship, knowledge characteristic, and knowledge sharing behavior of online community members: A TAM perspective. Comput. Intell. Neurosci. 2022, 2022, 4188480. [Google Scholar] [CrossRef]

- Davis, F.D. Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Q. 1989, 13, 319–340. [Google Scholar] [CrossRef]

- Lu, Y.; Zhou, T.; Wang, B. Exploring Chinese users’ acceptance of instant messaging using the theory of planned behavior, the technology acceptance model, and the flow theory. Comput. Hum. Behav. 2009, 25(1), 29–39. [Google Scholar] [CrossRef]

- Venkatesh, V.; Speier, C.; Morris, M.G. User acceptance enablers in individual decision making about technology: Toward an integrated model. Decis. Sci. 2002, 33, 297–316. [Google Scholar] [CrossRef]

- Mun, Y.Y.; Hwang, Y. Predicting the use of web-based information systems: Self-efficacy, enjoyment, learning goal orientation, and the technology acceptance model. Int. J. Hum. Comput. Stud. 2003, 59, 431–449. [Google Scholar]

- Chung, J.; Tan, F.B. Antecedents of perceived playfulness: An exploratory study on user acceptance of general information-searching websites. Inf. Manag. 2004, 41, 869–881. [Google Scholar] [CrossRef]

- Brown, J.S.; Collins, A.; Duguid, P. Situated cognition and the culture of learning. Educ. Res. 1989, 18, 32–42. [Google Scholar] [CrossRef]

- Durning, S.; Artino, A. Situativity theory: A perspective on how participants and the environment can interact: AMEE Guide no. 52. Med. Teach. 2011, 33, 188–199. [Google Scholar] [CrossRef]

- Schrepp, M.; Hinderks, A.; Thomaschewski, J. Design and evaluation of a short version of the User Experience Questionnaire (UEQ-S). Int. J. Interact. Multimedia Artif. Intell. 2017, 4, 103. [Google Scholar] [CrossRef]

- Collins, R. Princeton University Press. 2005. [CrossRef]

- Bellocchi, A.; Quigley, C.; Otrel-Cass, K. Exploring emotions, aesthetics and wellbeing in science education research, Vol. 13; Springer: Heidelberg, Germany, 2016. [Google Scholar]

- Krishnan, R.; Cook, K.S.; Kozhikode, R.K.; Schilke, O. An Interaction Ritual Theory of Social Resource Exchange: Evidence from a Silicon Valley Accelerator. Admin. Sci. Q. 2021, 66, 659-710, Article 0001839220970936. [CrossRef]

- Patricia, M. Online networks and emotional energy: How pro-anorexic websites use interaction ritual chains to (re)form identity. Inf. Commun. Soc. 2012, 16, 105–124. [Google Scholar]

- Clark, H.H. Using language; Cambridge University Press: Cambridge, UK, 1996. [Google Scholar]

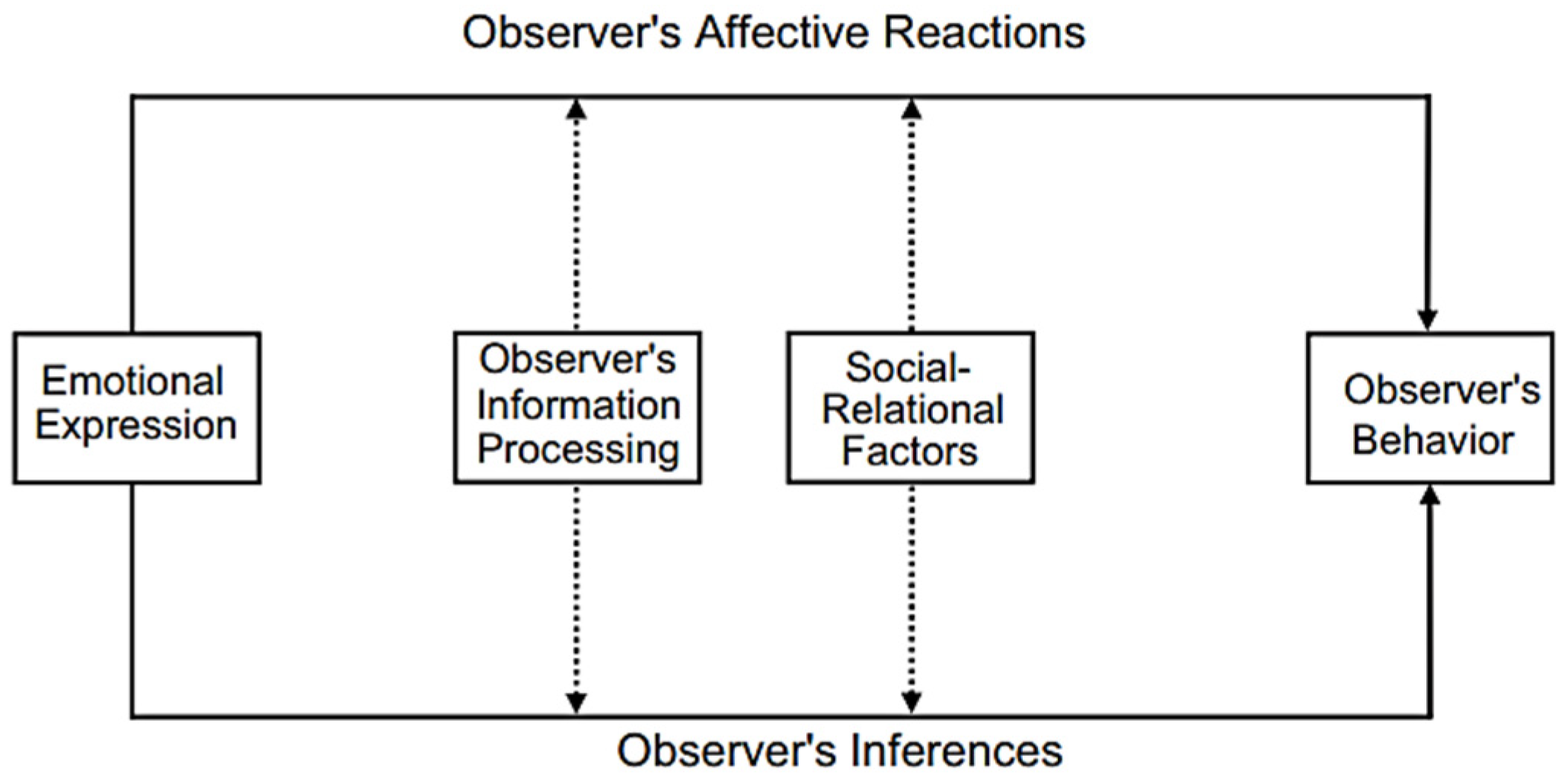

- Van Kleef, G.A. How emotions regulate social life: The emotions as social information (EASI) model. Curr. Dir. Psychol. Sci. 2009, 18, 184–188. [Google Scholar] [CrossRef]

- Van Kleef, G.A.; De Dreu, C.K.W.; Manstead, A.S.R. An interpersonal approach to emotion in social decision making: The emotions as social information model. In Advances in Experimental Social Psychology, Vol. 42; Academic Press: Cambridge, MA, USA, 2010; pp. 45–96. [Google Scholar] [CrossRef]

- Wright, P.; McCarthy, J. Technology as Experience; MIT Press: Cambridge, MA, USA, 2004. [Google Scholar]

- Hallnäs, L.; Redström, J. Slow technology–designing for reflection. Pers. Ubiquitous Comput. 2001, 5, 201–212. [Google Scholar] [CrossRef]

- Picard, R.W. Affective Computing; The MIT Press: Cambridge, MA, USA, 1997. [Google Scholar]

- Breazeal, C.; Scassellati, B. How to build robots that make friends and influence people. In Proceedings 1999 IEEE/RSJ International Conference on Intelligent Robots and Systems. IEEE: Piscataway, NJ, USA, 1999; pp. 858-863.

- Shukla, A.; Gullapuram, S.S.; Katti, H.; Kankanhalli, M.; Winkler, S.; Subramanian, R. Recognition of advertisement emotions with application to computational advertising. IEEE Trans. Affect. Comput. 2022, 13, 781–792. [Google Scholar] [CrossRef]

- Andalibi, N.; Buss, J. The human in emotion recognition on social media: Attitudes, outcomes, risks. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems; ACM: New York, NY, USA, 2020; pp. 1–15. [Google Scholar]

- Shi, Z. Intelligence Science: Leading the Age of Intelligence; Elsevier: Amsterdam, Netherlands, 2021. [Google Scholar]

- Sloman, A. Review of affective computing. AI Mag. 1999, 20, 127–127. [Google Scholar]

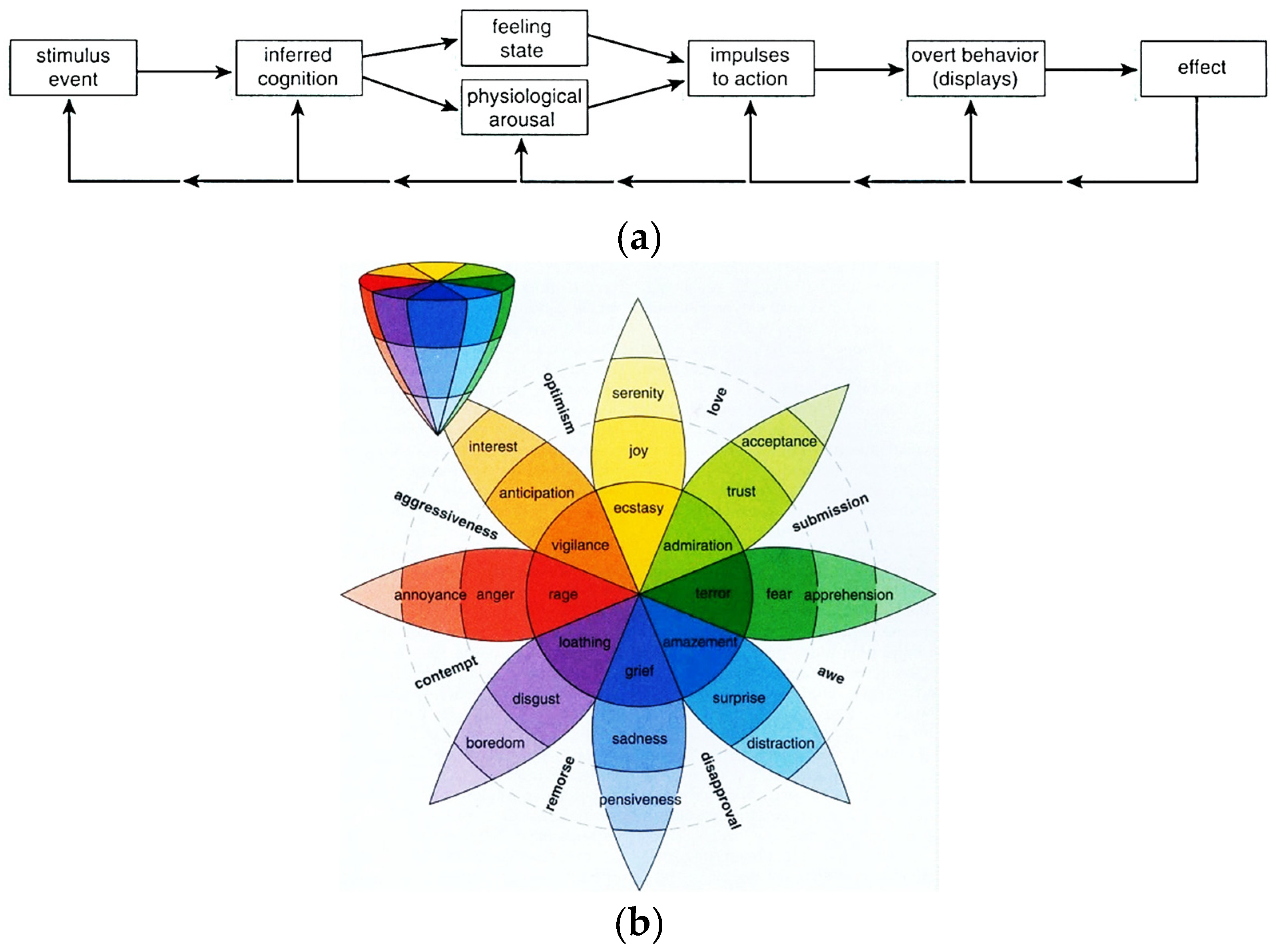

- Plutchik, R. The nature of emotions: Human emotions have deep evolutionary roots, a fact that may explain their complexity and provide tools for clinical practice. Am. Sci. 2001, 89, 344–350. [Google Scholar] [CrossRef]

- Cambria, E.; Livingstone, A.; Hussain, A. The hourglass of emotions. In Cognitive Behavioural Systems: COST 2102 International Training School, Dresden, Germany, February 21-26, 2011; Revised Selected Papers; Springer: Berlin, Germany, 2012; pp. 112–121. [Google Scholar]

- Feldman, L.A. Valence focus and arousal focus: Individual differences in the structure of affective experience. J. Pers. Soc. Psychol. 1995, 69, 153–166. [Google Scholar] [CrossRef]

- Russell, J.A. A circumplex model of affect. J. Pers. Soc. Psychol. 1980, 39, 1161–1178. [Google Scholar] [CrossRef]

- Russell, J.A. Core affect and the psychological construction of emotion. Psychol. Rev. 2003, 110, 145–172. [Google Scholar] [CrossRef]

- Warr, P.; Inceoglu, I. Job engagement, job satisfaction, and contrasting associations with person–job fit. J. Occup. Health Psychol. 2012, 17, 129–148. [Google Scholar] [CrossRef] [PubMed]

- Kensinger, E.A. Remembering the details: Effects of emotion. Emotion Rev. 2009, 1, 99–113. [Google Scholar] [CrossRef] [PubMed]

- Basu, S.; Jana, N.; Bag, A.; Mahadevappa, M.; Mukherjee, J.; Kumar, S.; Guha, R. Emotion recognition based on physiological signals using valence-arousal model. In 2015 Third International Conference on Image Information Processing (ICIIP); IEEE: Piscataway, NJ, USA, 2015; pp. 566–571. [Google Scholar]

- Jefferies, L.N.; Smilek, D.; Eich, E.; Enns, J.T. Emotional valence and arousal interact in attentional control. Psychol. Sci. 2008, 19, 290–295. [Google Scholar] [CrossRef]

- Nicolaou, M.A.; Gunes, H.; Pantic, M. Continuous prediction of spontaneous affect from multiple cues and modalities in valence-arousal space. IEEE Trans. Affect. Comput. 2011, 2, 92–105. [Google Scholar] [CrossRef]

- Ramirez, R.; Vamvakousis, Z. Detecting emotion from EEG signals using the Emotiv Epoc device. In Brain Informatics: International Conference, BI 2012, Macau, China, December 4-7, 2012; Proceedings; Springer: Berlin, Germany, 2012; pp. 125–134. [Google Scholar]

- Wang, C.-M.; Chen, Y.-C. Design of an interactive mind calligraphy system by affective computing and visualization techniques for real-time reflections of the writer’s emotions. Sensors 2020, 20(20), 5741. [Google Scholar] [CrossRef]

- da Silva, F.L. Neural mechanisms underlying brain waves: from neural membranes to networks. Electroencephalography and Clinical Neurophysiology 1991, 79, 81–93. [Google Scholar] [CrossRef]

- Shackman, A.J.; McMenamin, B.W.; Maxwell, J.S.; Greischar, L.L.; Davidson, R.J. Identifying robust and sensitive frequency bands for interrogating neural oscillations. Neuroimage 2010, 51, 1319–1333. [Google Scholar] [CrossRef] [PubMed]

- Yudhana, A.; Mukhopadhyay, S.; Karas, I.R.; Azhari, A.; Mardhia, M.M.; Akbar, S. A.; Muslim, A.; Ammatulloh, F. I. Recognizing human emotion patterns by applying Fast Fourier Transform based on brainwave features. In 2019 International Conference on Informatics, Multimedia, Cyber and Information System (ICIMCIS) 2019.

- Sundström, P.; Ståhl, A.; Höök, K. In situ informants exploring an emotional mobile messaging system in their everyday practice. International Journal of Human-Computer Studies 2007, 65, 704–716. [Google Scholar]

- Ko, L.-W.; Yang, C.-S. How emotion processing influences post-purchase intention: An exploratory study of consumer electronic products. International Journal of Information Management 2013, 33, 731–740. [Google Scholar]

- Sung, J.-Y.; Kim, J.-W.; Kim, J.-H. Cognitive and emotional responses to an interactive product: The case of the iPod nano. International Journal of Human-Computer Studies 2009, 67, 610–622. [Google Scholar]

- D’Mello, S. K.; Graesser, A. C. Multimodal sensors for assessing affective states. In Affective Computing and Intelligent Interaction; Springer: Berlin, Germany, 2012; pp. 54–64. [Google Scholar]

- Fitzpatrick, K.K.; Darcy, A.; Vierhile, M. Delivering cognitive behavior therapy to young adults with symptoms of depression and anxiety using a fully automated conversational agent (Woebot): a randomized controlled trial. JMIR Mental Health 2017, 4, e7785. [Google Scholar] [CrossRef] [PubMed]

- Tseng, Y.C. The Proof of existence and strategy of performativity - My name is Jane. Artistica TNNUA 2021, 23, 1-17 (in Chinese).

- Boehner, K.; DePaula, R.; Dourish, P.; Sengers, P. How emotion is made and measured. International Journal of Human-Computer Studies 2007, 65, 275-291. [CrossRef]

- Gartner. 5 Trends Appear on the Gartner Hype Cycle for Emerging Technologies. Available online: https://www.gartner.com/smarterwithgartner/5-trends-appear-on-the-gartner-hype-cycle-for-emerging-technologies-2019 (accessed on 24 August 2024).

- Cambria, E.; Das, D.; Bandyopadhyay, S.; Feraco, A. Affective computing and sentiment analysis. In A Practical Guide to Sentiment Analysis; Springer: Berlin, Germany, 2017; pp. 1–10. [Google Scholar]

- Poria, S.; Cambria, E.; Bajpai, R.; Hussain, A. A review of affective computing: From unimodal analysis to multimodal fusion. Information Fusion 2017, 37, 98–125. [Google Scholar] [CrossRef]

- Fang, Y.; Hettiarachchi, N.; Zhou, D.; Liu, H. Multi-modal sensing techniques for interfacing hand prostheses: A review. IEEE Sensors Journal 2015, 15, 6065–6076.

- Wilhelm, M.; Krakowczyk, D.; Albayrak, S. PeriSense: ring-based multi-finger gesture interaction utilizing capacitive proximity sensing. Sensors 2020, 20(14), 3990. [Google Scholar] [CrossRef]

- Due, B. L.; Licoppe, C. Video-mediated interaction (VMI): introduction to a special issue on the multimodal accomplishment of VMI institutional activities. Social Interaction. Video-Based Studies of Human Sociality 2021, 3(3).

- Ferscha, A.; Resmerita, S.; Holzmann, C.; Reichör, M. Orientation sensing for gesture-based interaction with smart artifacts. Computer Communications 2005, 28, 1552–1563. [Google Scholar] [CrossRef]

- Jonell, P.; Bystedt, M.; Fallgren, P.; Kontogiorgos, D.; Lopes, J.; Malisz, Z.; Mascarenhas, S.; Oertel, C.; Raveh, E.; Shore, T. Farmi: a framework for recording multi-modal interactions. In Proceedings of the Eleventh International Conference on Language Resources and Evaluation (LREC 2018) 2018.

- Ueng, S.-K. Vision based multi-user human computer interaction. Ph.D. Thesis, National Taiwan Normal University, Taipei, Taiwan, 2016. [Google Scholar]

- Yasen, K.N. Vision-based control by hand-directional gestures converting to voice. International Journal of Scientific & Technology Research 2018, 7, 185–190. [Google Scholar]

- Bolt, R.A. “Put-that-there”: Voice and gesture at the graphics interface. In Proceedings of the 7th Annual Conference on Computer Graphics and Interactive Techniques; 1980.

- Billinghurst, M. Put that where? Voice and gesture at the graphics interface. ACM Siggraph Computer Graphics 1998, 32, 60–63. [Google Scholar] [CrossRef]

- Jalil, N. Introduction to Intelligent User Interfaces (IUIs). In Software Usability; IntechOpen: London, UK, 2021. [Google Scholar]

- Song, J.-H.; Min, S.-H.; Kim, S.-G.; Cho, Y.; Ahn, S.-H. Multi-functionalization strategies using nanomaterials: A review and case study in sensing applications. Int’l J. of Precision Engineering & Manufacturing-Green Technology 2022, 9, 323–347. [Google Scholar]

- Maybury, M.; Wahlster, W. Readings in Intelligent User Interfaces; Morgan Kaufmann: San Francisco, CA, 1998. [Google Scholar]

- Škraba, A.; Koložvari, A.; Kofjač, D.; Stojanović, R. Wheelchair maneuvering using leap motion controller and cloud based speech control: Prototype realization. 2015 4th Mediterranean Conference on Embedded Computing (MECO) 2015.

- Rodríguez-Hidalgo, C.; Pantoja, A.; Araya, F.; Araya, H.; Dias, V. Generating empathic responses from a social robot: An integrative multimodal communication framework using Sima Robot. Workshop on Behavioral Patterns and Interaction Modelling for Personalized Human-Robot Interaction, Cambridge, UK, March 23, 2020.

- Park, C.M.; Ki, T.; Ben Ali, A. J.; Pawar, N.S.; Dantu, K.; Ko, S.Y.; Ziarek, L. Gesto: Mapping UI events to gestures and voice commands. Proceedings of the ACM on Human-Computer Interaction 2019, 3, 1-22.

- Lin, H.C.; Tseng, Y.C.; Chang, C.C. Affective Computing - Creative applications of emotional analysis. Science Monthly 2020, 569, 55-63 (in Chinese).

- Kaczmarek, W.; Panasiuk, J.; Borys, S.; Banach, P. Industrial robot control by means of gestures and voice commands in off-line and on-line mode. Sensors 2020, 20, 6358. [Google Scholar] [CrossRef]

- Scupin, R. The KJ Method: A Technique for Analyzing Data Derived from Japanese Ethnology. Human Organization 1997, 56, 2. [Google Scholar] [CrossRef]

- Cohen, E.; Aberle, D.F.; Bartolomé, L.J.; Caldwell, L.K.; Esser, A.H.; Hardesty, D. L.; Hassan, R.; Heinen, H. D.; Kawakita, J.; Linares, O.F. Environmental Orientations: A Multidimensional Approach to Social Ecology [and Comments and Reply]. Current Anthropology 1976, 17, 49–70. [Google Scholar] [CrossRef]

- Kuniavsky, M. Observing the User Experience: A Practitioner’s Guide to User Research 2003.

- Beyer, H.; Holtzblatt, K. Contextual design. Interactions 1999, 6, 32–42. [Google Scholar] [CrossRef]

- Holtzblatt, K.; Wendell, J.; Wood, S. Rapid Contextual Design: A How-To Guide to Key Techniques for User-Centered Design; Morgan Kaufmann: San Francisco, CA, 2004; p. 320. [Google Scholar]

- Awasthi, A.; Chauhan, S.S. A hybrid approach integrating Affinity Diagram, AHP and fuzzy TOPSIS for sustainable city logistics planning. Applied Mathematical Modelling 2012, 36, 573–584. [Google Scholar] [CrossRef]

- Lucero, A. Using affinity diagrams to evaluate interactive prototypes. In Human-Computer Interaction–INTERACT 2015: 15th IFIP TC 13 International Conference, Bamberg, Germany, September 14-18, 2015, Proceedings, Part II.

- Hanington, B.; Martin, B. Universal Methods of Design Expanded and Revised: 125 Ways to Research Complex Problems, Develop Innovative Ideas, and Design Effective Solutions; Rockport Publishers: Beverly, MA, 2019. [Google Scholar]

- Cooper, A. The Inmates Are Running the Asylum; Springer: New York, NY, 1999. [Google Scholar]

- Cooper, A.; Reimann, R. About Face 2.0: The Essentials of Interaction Design; Wiley: New York, NY, 2003. [Google Scholar]

- Acuña, S.T.; Castro, J.W.; Juristo, N. A HCI technique for improving requirements elicitation. Information and Software Technology 2012, 54, 1357–1375. [Google Scholar] [CrossRef]

- Pileggi, S.F. Knowledge interoperability and re-use in Empathy Mapping: An ontological approach. Expert Systems with Applications 2021, 180, 115065. [Google Scholar] [CrossRef]

- Ferreira, B.; Silva, W.; Oliveira, E.; Conte, T. Designing Personas with Empathy Map. SEKE 2015. [Google Scholar]

- Kalbach, J. Maximize business impact with JTBD, empathy maps, and personas. UX Design. Available online: https://www.uxdesign.cc (accessed on 24 August 2024).

- Kasemsarn, K. A Marketing Plan for Applying 5W1H: A Case Study from Thailand. The International Journal of Design Education 2022, 16, 37. [Google Scholar] [CrossRef]

- Cooper, A.; Reimann, R.; Cronin, D. About Face 3: The Essentials of Interaction Design; John Wiley & Sons: Hoboken, NJ, 2007. [Google Scholar]

- Govella, A. Collaborative Product Design: Help Any Team Build a Better Experience; O’Reilly Media: Sebastopol, CA, 2019. [Google Scholar]

- Kalbach, J. Mapping Experiences; O’Reilly Media: Sebastopol, CA, 2020. [Google Scholar]

- Pierdicca, R.; Paolanti, M.; Quattrini, R.; Mameli, M.; Frontoni, E. A Visual Attentive Model for Discovering Patterns in Eye-Tracking Data-A Proposal in Cultural Heritage. Sensors 2020, 20, 2101. [Google Scholar] [CrossRef]

- Ulwick, A.W.; Osterwalder, A. Jobs to be Done: Theory to Practice 2016.

- Hornbæk, K.; Stage, J. The interplay between usability evaluation and user interaction design. International Journal of Human-Computer Interaction 2006, 21, 117-123.

- Bernhaupt, R.; Palanque, P.; Manciet, F.; Martinie, C. User-test results injection into task-based design process for the assessment and improvement of both usability and user experience. In Proceedings of 6th International Conference on Human-Centered Software Engineering, HCSE 2016, and 8th International Conference on Human Error, Safety, and System Development, HESSD 2016, Stockholm, Sweden, August 29-31, 2016.

- Boar, B.H. Application Prototyping: A Requirements Definition Strategy for the 80s; John Wiley & Sons: New York, NY, 1984.

- Schork, S.; Kirchner, E. Defining requirements in prototyping: The holistic prototype and process development. In DS 91: Proceedings of NordDesign 2018, Linköping, Sweden, 14–17 August 2018.

- Turner, J.H. The origins of positivism: The contributions of Auguste Comte and Herbert Spencer. In Handbook of Social Theory; Sage: Thousand Oaks, CA, 2001; pp. 30–42. [Google Scholar]

- Williamson, K. Research Methods for Students, Academics and Professionals: Information Management and Systems; Elsevier: Amsterdam, Netherlands, 2002. [Google Scholar]

- Baker, L. Observation: A complex research method. Library Trends 2006, 55, 171–189. [Google Scholar] [CrossRef]

- Radzikowski, J.; Stefanidis, A.; Jacobsen, K.H.; Croitoru, A.; Crooks, A.; Delamater, P. L. The measles vaccination narrative in Twitter: A quantitative analysis. JMIR Public Health and Surveillance 2016, 2, e5059. [Google Scholar] [CrossRef]

- Taherdoost, H. Validity and reliability of the research instrument; how to test the validation of a questionnaire/survey in a research. Available online: https://doi.org/10.2139/ssrn.2815600 (accessed on 10 May 2023).

- Krosnick, J.A. Questionnaire design. In The Palgrave Handbook of Survey Research; Palgrave Macmillan: London, UK, 2018; pp. 439–455. [Google Scholar]

- Brooke, J. SUS: A “quick and dirty” usability scale. In Usability Evaluation in Industry; Taylor & Francis: London, UK, 1996; pp. 189–194. [Google Scholar]

- Brooke, J. SUS: a retrospective. Journal of Usability Studies 2013, 8, 29–40. [Google Scholar]

- Grier, R.A.; Bangor, A.; Kortum, P.; Peres, S.C. The system usability scale: Beyond standard usability testing. Proceedings of the Human Factors and Ergonomics Society Annual Meeting 2013.

- Zhang, D.; Adipat, B. Challenges, methodologies, and issues in the usability testing of mobile applications. International Journal of Human-Computer Interaction 2006, 18, 293–308. [Google Scholar] [CrossRef]

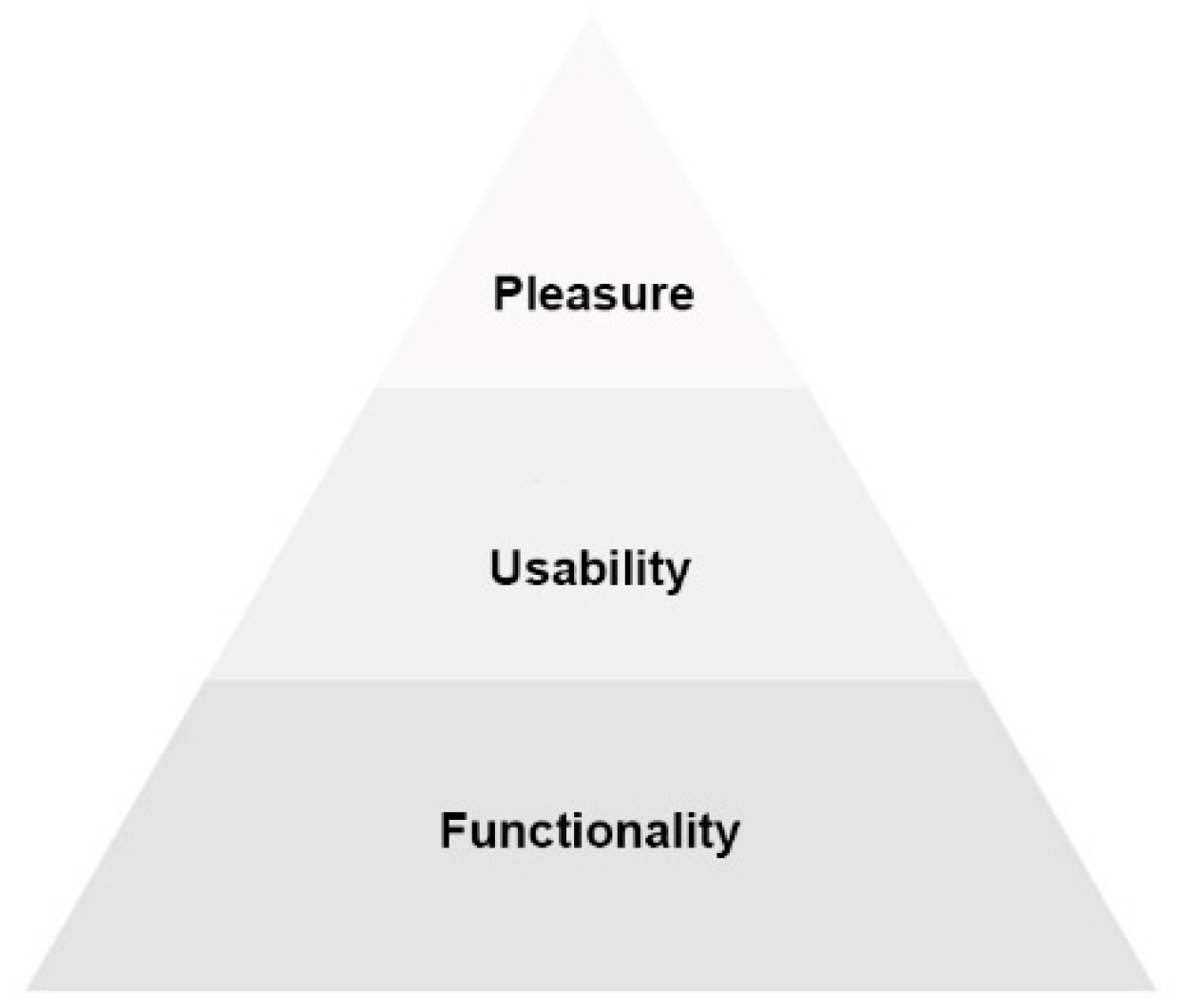

- Tiger, L. The Pursuit of Pleasure, 1st ed.; Little, Brown: Boston, MA, 1992. [Google Scholar]

- Jordan, P.W. Human factors for pleasure in product use. Applied Ergonomics 1998, 29, 25–33. [Google Scholar] [CrossRef] [PubMed]

- Jordan, P.W. Designing Pleasurable Products: An Introduction to the New Human Factors; CRC Press: Boca Raton, FL, 2000. [Google Scholar]

- Hassenzahl, M. The Effect of Perceived Hedonic Quality on Product Appealingness. International Journal of Human-Computer Interaction 2001, 13, 481–499. [Google Scholar] [CrossRef]

- Hernández-Jorge, C.M.; Rodríguez-Hernández, A. F.; Kostiv, O.; Rivero, F.; Domínguez-Medina, R. Psychometric Properties of an Emotional Communication Questionnaire for Education and Healthcare Professionals. Education Sciences 2022, 12, 484. [Google Scholar] [CrossRef]

- Artino Jr, A.R.; La Rochelle, J. S.; Dezee, K.J.; Gehlbach, H. Developing questionnaires for educational research: AMEE Guide No. 87. Medical Teacher 2014, 36, 463–474. [Google Scholar] [CrossRef]

- Hardesty, D.M.; Bearden, W.O. The use of expert judges in scale development: Implications for improving face validity of measures of unobservable constructs. Journal of Business Research 2004, 57, 98–107. [Google Scholar] [CrossRef]

- Natural Language API Basics. Cloud Natural Language. Available online: https://cloud.google.com/natural-language/docs/basics#interpreting_sentiment_analysis_values.

- KMO and Bartlett’s Test (by IBM, 2021). Available online: https://www.ibm.com/docs/en/spss-statistics/28.0.0?topic=detection-kmo-bartletts-test (accessed on 10 May 2023).

- Taber, K.S. The use of Cronbach’s alpha when developing and reporting research instruments in science education. Research in Science Education 2018, 48, 1273–1296. [Google Scholar] [CrossRef]

- Guilford, J.P. Psychometric Methods, 2nd ed.; McGraw-Hill: New York, NY, 1954. [Google Scholar]

- Ho, Y.; Kwon, O. Y.; Park, S.Y.; Yoon, T.Y.; Kim, Y.E. Reliability and validity test of the Korean version of Noe’s evaluation. Korean Journal of Medical Education 2017, 29, 15–26. [Google Scholar] [CrossRef]

- Hu, L.T.; Bentler, P. M. Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Structural Equation Modeling: A Multidisciplinary Journal 1999, 6, 1–55. [Google Scholar] [CrossRef]

- Research Methods Knowledge Base (by Trochim, W.M.K., hosted by Conjointly, 2020). Available online: https://conjointly.com/kb/?_ga=2.202908566.666445287.1649411337-790067422.1649411337 (accessed on 10 May 2023).

| Key Factor | Mechanism | Positive Behavior |
|---|---|---|
| Cognition | Personal cognition is crucial for establishing interpersonal connections. Individuals process consciousness and impressions through psychological cognition, which adjusts relationship dynamics and influences final behaviors. | attention, memory, perception, perspective-taking |
| Affection | Emotions arise from interactions between individuals and their environment. Emotional reactions are triggered by issues in interpersonal interactions, aiding in the understanding of emotional tendencies in relationships. The affective mechanism regulates thoughts, body, and feelings. | gratitude, empathy, compassion, concern for others |
| Behavior | Behavior is a key factor in interpersonal communication, reflecting actual responses or actions towards people, events, and things. These actions, driven by internal intentions, manifest externally and can significantly influence interpersonal relationships. | respect, participation, interpersonal assistance, playful interaction |

| Brainwave Type | Frequency | Physiological State |
|---|---|---|
| δ wave | 0–4 Hz | Occurring during deep sleep |
| θ wave | 4–8 Hz | Occurring during deep dreaming and deep meditation |
| α wave | 8–13 Hz | Occurring when the body is relaxed and at rest |
| β wave | 13–30 Hz | Occurring during states of neurological alertness, focused thinking, and vigilance |
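
The band ranges in the table above can be expressed as a simple lookup. The following is a minimal sketch rather than the signal-processing code used in this study. The function name `classify_band` is hypothetical, and because adjacent ranges in the table share their edge values (for example, 4 Hz ends the δ band and starts the θ band), the sketch assumes that each band includes its lower edge and excludes its upper edge.

```python
from typing import Optional

# Band edges in Hz, taken from the table above.
# Assumption: each band includes its lower edge and excludes its upper edge.
BANDS = [
    ("delta", 0.0, 4.0),
    ("theta", 4.0, 8.0),
    ("alpha", 8.0, 13.0),
    ("beta", 13.0, 30.0),
]


def classify_band(frequency_hz: float) -> Optional[str]:
    """Return the band name for a frequency, or None if it lies outside 0-30 Hz."""
    for name, low, high in BANDS:
        if low <= frequency_hz < high:
            return name
    return None


print(classify_band(10.0))  # a frequency inside the 8-13 Hz range
```

Under this convention a 10 Hz component falls in the α band, which the table associates with a relaxed, resting state.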

| Name of the Work | Interaction Type | Application Area |
|---|---|---|
| Kismet (1999) [45] | Image Recognition, Semantic Analysis | Interpersonal Communication |
| eMoto (2007) [65] | Gesture Detection, Pressure Sensors | Interpersonal Communication |
| MobiMood (2010) [66] | Manual Input, Pressure Sensors | Interpersonal Communication |
| Feel Emotion (2015) [67] | Skin Conductance, Heart Rate Sensors | Assistive Psychological Diagnosis |
| Moon Light (2015) [68] | Skin Conductance, Heart Rate Sensors | Assistive Psychological Diagnosis |
| Woebot (2017) [69] | Semantic Analysis, Chatbots | Assistive Psychological Diagnosis |
| What is your scent today? (2018) [70] | Big Data Collection, Semantic Analysis | Tech-Art Creation |

| Name of the Work | Motivation of the Work | Interaction Content | Result of the Work |

|---|---|---|---|

| Kismet (1999) [45] | Exploring social behaviors between infants and caregivers and the expression of emotions through perception. | Waving and moving within the machine’s sight to provide emotional stimuli (happiness, sadness, anger, calmness, surprise, disgust, fatigue, and sleep). | Responding similarly to an infant, showing sadness without stimulation and happiness during interaction. |

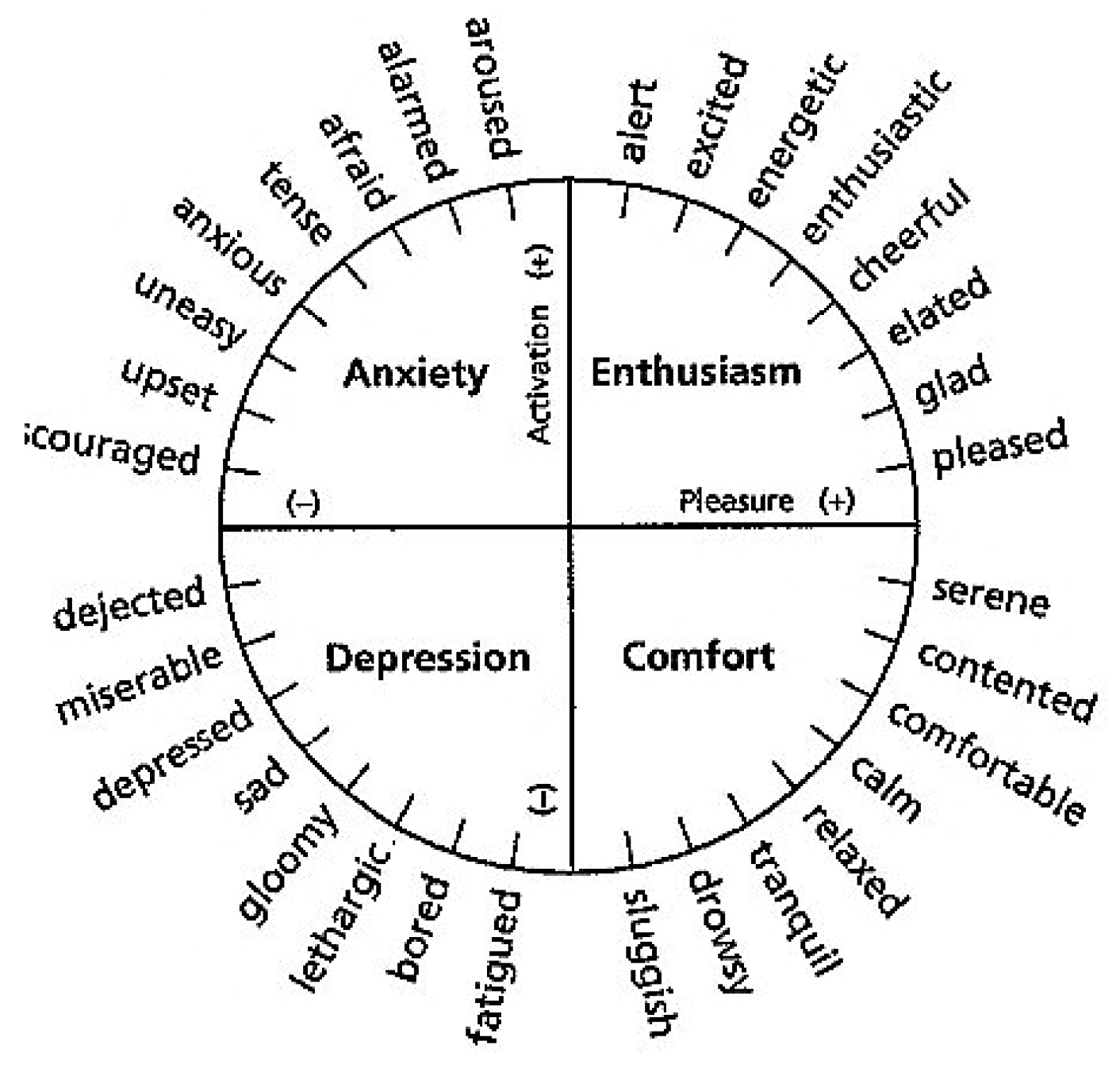

| eMoto (2007) [65] | Starting from everyday communication scenarios and designing emotional experiences centered on the user. | Interacting by pen pressure with emotional colors based on the Emotional Circumplex Model, allowing for the free pairing of emotional colors. | Allowing users to observe and become aware of their own and others’ emotions in realtime, noting emotional shifts and changes throughout interactions. |

| MobiMood (2010) [66] | Using emotions as a medium for communication, aiming at enhancing interpersonal relationships. | Users can select and adjust the intensity of emotions (e.g., sadness, energy, tension, happiness, anger). | Enabling personal expression and sharing, fostering positive social interactions. |

| Feel Emotion (2015) [67] | Monitoring users’ physiological information to observe and adjust their emotional states. | Employing wearable devices to monitor users’ physiological information. | Sensing emotional changes in realtime to achieve proactive emotional management. |

| Moon Light (2015) [68] | Understanding and cultivating self-awareness and control through physiological data. | Controls the corresponding environmental light color based on the current physiological information. | Exploring how feedback from biosensor data affects interpersonal interactions. |

| Woebot (2017) [69] | Replacing traditional psychological counseling with digital resources. | Offering cognitive behavioral therapy through conversations and chatbot-assisted functions. | Regulating users’ emotions to counteract negative and irrational cognitive thinking and emotions. |

| What is your scent today? (2018) [70] | Analyzing the emotions of information from social media using classifiers. | Conducting text mining for emotions (anger, disgust, fear, happiness, sadness, surprise). | Based on analyzed emotional information, adjusting fragrance blends to reflect emotional proportions and using scents to symbolize emotions. |

| Design Principle | Description | Notes |
|---|---|---|
| Sensitivity | Improving the accuracy of information recognition and providing a seamless experience | Integrating human sensory input and showing natural expression characteristics |
| Flexibility | Avoiding similar commands and preprocessing related function commands | Conducting modular system design, including expressions and operations for commanding objects |
| Measurement Range | Providing equipment or functions for distant control of physical or virtual objects | Using depth cameras and non-contact sensing devices |

| Name of the Work | Sensing Interface | Interaction Style | Application Domain | Affective Computing |

|---|---|---|---|---|

| Speech, Gesture controlled wheelchair platform (2015) [87] | Gesture Recognition, Voice Recognition, Physical Interfaces | Integrating multimodal interfaces with visual feedback for remote control using physical devices. | Intelligent life | No |

| SimaRobot (2016) [88] | Voice Recognition, Image Recognition | Simulating, listening to, and empathizing with users’ emotions based on personal emotional information. | Education & learning, healthcare | Yes |

| Gesto (2019) [89] | Voice Recognition, Gesture Recognition | Automatically executing customizable tasks. | Intelligent life | No |

| Emotion-based Music Composition System (2020) [90] | Physical Interfaces, Tone Recognition | Determining emotions through melody, pitch, volume, rhythm, etc. | Art creation | Yes |

| Industrial Robot (2020) [91] | Voice Recognition, Gesture Recognition | Using digital twin technology to achieve an integrated virtual and physical interactive experience. | Intelligent life | No |

| UAV multimodal interaction integration system (2022) [6] | Voice Recognition, Gesture Recognition | Controlling drones and scenes within a virtual environment. | Intelligent life | No |

| Dimension | Questions |

|---|---|

| System Usability |

|

| |

| |

| |

| |

| |

| |

| Interactive Experience |

|

| |

| |

| |

| |

| |

| |

| Pleasure Experience |

|

| |

| |

| |

| |

| |

| |

| |

|

| Command | Description | Illustration |

|---|---|---|

| Move Forward | When both hands are clenched into fists and moved back and forth at the same rate, the game object will move forward. |  |

| Turn Left | When the right hand is clenched into a fist and swung back and forth, the game object moves left. The faster the swinging, the larger the movement angle. |  |

| Turn Right | When the left hand is clenched into a fist and swung back and forth, the game object moves right. The faster the swinging, the larger the movement angle. |  |

| Select and Click | Lightly touching the button with the index finger allows for easy navigation to other functional interfaces. |  |

| Grab Object | Grasping scene objects with a fist allows the game objects to move freely within the scene. |  |
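The locomotion commands in the table above can be read as a small decision procedure. The following Python sketch is illustrative only: the `HandState` type, the `swing_rate` unit, and the `rate_tolerance` and `gain` constants are hypothetical and do not come from the system itself, and the table does not say what happens when both fists swing at different rates (treated here as no movement).

```python
from dataclasses import dataclass


@dataclass
class HandState:
    is_fist: bool       # True when the hand is clenched into a fist
    swing_rate: float   # back-and-forth swings per second (assumed unit)


def interpret(left: HandState, right: HandState,
              rate_tolerance: float = 0.5, gain: float = 10.0):
    """Map one frame of hand data to (command, turn_angle).

    rate_tolerance and gain are illustrative constants, not measured values.
    """
    left_active = left.is_fist and left.swing_rate > 0
    right_active = right.is_fist and right.swing_rate > 0

    if left_active and right_active:
        # Both fists swinging at (roughly) the same rate: move forward.
        if abs(left.swing_rate - right.swing_rate) <= rate_tolerance:
            return ("move_forward", 0.0)
        return ("idle", 0.0)  # assumption: mismatched rates are ignored
    if right_active:
        # Right fist swinging: turn left; faster swing gives a larger angle.
        return ("turn_left", gain * right.swing_rate)
    if left_active:
        # Left fist swinging: turn right; faster swing gives a larger angle.
        return ("turn_right", gain * left.swing_rate)
    return ("idle", 0.0)
```

Scaling the angle linearly with the swing rate is one simple way to express "the faster the swinging, the larger the movement angle"; any monotonic mapping would satisfy the description.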

| Emotion states | Example values | Explanation |

|---|---|---|

| Clearly positive | score S: 0.8; magnitude M: 3.0 | High positive emotion with a large amount of emotional vocabulary |

| Clearly negative | score S: -0.6; magnitude M: 4.0 | High negative emotion with a large amount of emotional vocabulary |

| Neutral | score S: 0.1; magnitude M: 0.0 | Low positive emotion with relatively few emotional words |

| Mixed (lacking emotional expression) | score S: 0.0; magnitude M: 4.0 | No obvious trend of positive or negative emotions overall. (The text expresses both positive and negative emotional vocabulary sufficiently.) |
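The four emotion states above can be told apart by combining the score S and the magnitude M. The sketch below is one possible reading of the table; the cut-off values `score_threshold` and `magnitude_threshold` are assumptions chosen so that the four example rows are classified as listed, not thresholds reported in this study.

```python
def describe_sentiment(score: float, magnitude: float,
                       score_threshold: float = 0.25,
                       magnitude_threshold: float = 2.0) -> str:
    """Classify a (score, magnitude) pair into one of four emotion states.

    Both thresholds are illustrative assumptions.
    """
    if abs(score) < score_threshold:
        # Score near zero: either little emotion at all (neutral) or
        # positive and negative vocabulary cancelling out (mixed).
        return "mixed" if magnitude >= magnitude_threshold else "neutral"
    return "positive" if score > 0 else "negative"


# The example values from the table:
examples = [(0.8, 3.0), (-0.6, 4.0), (0.1, 0.0), (0.0, 4.0)]
labels = [describe_sentiment(s, m) for s, m in examples]
```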

| Original values | Value range | Scale & letter color | Color range | Meaning |

|---|---|---|---|---|

| Score | -1.0 ~ +1.0 | red | 0 ~ 255 | Emotion |

| Magnitude | 0.0 ~ +∞ | transparent | 0 ~ 255 | Sensibility |

| Meditation | 0 ~ 100 | green | 0 ~ 255 | Relaxation |

| Attention | 0 ~ 100 | blue | 0 ~ 255 | Focusing |
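The mapping above rescales each original value onto the 0 ~ 255 color range. A minimal sketch of such a rescaling is given below, assuming a linear mapping. Because magnitude has no upper bound, it has to be clamped somewhere; the `magnitude_cap` of 4.0 used here is a hypothetical choice, as are the function names.

```python
def rescale(value: float, lo: float, hi: float) -> int:
    """Linearly map value from [lo, hi] onto the integer range 0..255."""
    value = min(max(value, lo), hi)  # clamp out-of-range input
    return round(255 * (value - lo) / (hi - lo))


def emotion_to_rgba(score: float, magnitude: float,
                    meditation: float, attention: float,
                    magnitude_cap: float = 4.0):
    """Return (red, green, blue, alpha) following the mapping table.

    magnitude_cap is an assumed upper limit for the unbounded magnitude.
    """
    red = rescale(score, -1.0, 1.0)                  # emotion
    green = rescale(meditation, 0.0, 100.0)          # relaxation
    blue = rescale(attention, 0.0, 100.0)            # focusing
    alpha = rescale(magnitude, 0.0, magnitude_cap)   # sensibility (transparency)
    return (red, green, blue, alpha)
```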

| Correlation class | Low Correlation | Moderate Correlation | High Correlation |

|---|---|---|---|

| Decision threshold | < 0.3 | 0.3 ~ 0.7 | > 0.7 |

| Significance level | Significant | Highly significant | Very significant |

|---|---|---|---|

| Decision threshold | p < 0.05 | p < 0.01 | p < 0.001 |

| Coefficient c with asterisk(s) | c* | c** | c*** |
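The two threshold tables above translate directly into code. The sketch below computes a Pearson coefficient with the standard formula and then applies the correlation classes and asterisk notation. The function names are illustrative, and the p value is assumed to be supplied by a statistics package rather than computed here.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def correlation_class(c: float) -> str:
    """Low (< 0.3), moderate (0.3 ~ 0.7), or high (> 0.7) correlation."""
    strength = abs(c)
    if strength < 0.3:
        return "low"
    return "moderate" if strength <= 0.7 else "high"


def significance_stars(p: float) -> str:
    """Asterisks for p < 0.05 (*), p < 0.01 (**), and p < 0.001 (***)."""
    if p < 0.001:
        return "***"
    if p < 0.01:
        return "**"
    return "*" if p < 0.05 else ""


def format_coefficient(c: float, p: float) -> str:
    """Render a coefficient in the table style, e.g. '.266*'."""
    sign = "-" if c < 0 else ""
    return sign + f"{abs(c):.3f}".lstrip("0") + significance_stars(p)
```

For instance, `format_coefficient(0.266, 0.040)` yields `.266*`, and `correlation_class(0.266)` returns `low`.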

| Pearson Correlation Analysis | Correlation coefficient c | .266* |

| | Significance value p | .040 |

| *When p < 0.05, the correlation is “significant.” | | |

| Interaction | Average semantic score of 50 users | Standard Deviation of semantic score of 50 users |

|---|---|---|

| Semantic (listening 2) | 0.30 | 0.36 |

| Semantic (speaking 4) | 0.44 | 0.39 |

| Case No. | Type | Emotion dataset 1 | Emotion dataset 2 | Correlation coefficient | Significance value p | Significance level |

|---|---|---|---|---|---|---|

| 1 | 1 | Semantic (listening 2) | Semantic (speaking 4) | .266* | .040 | significant |

| 2 | 2 | Semantic (listening 3) | Meditation (speaking 4) | .397** | .002 | highly significant |

| 3 | 2 | Semantic (listening 3) | Meditation (speaking 1) | .386** | .002 | highly significant |

| 4 | 2 | Semantic (listening 4) | Attention (speaking 1) | .285* | .027 | significant |

| 5 | 3 | Meditation (listening 2) | Meditation (listening 3) | .394** | .002 | highly significant |

| 6 | 3 | Meditation (listening 3) | Meditation (listening 4) | .344** | .007 | highly significant |

| 7 | 3 | Attention (listening 1) | Attention (listening 2) | .594*** | .000 | very significant |

| 8 | 3 | Attention (listening 2) | Attention (listening 3) | .722*** | .000 | very significant |

| 9 | 3 | Attention (listening 3) | Attention (listening 4) | .667*** | .000 | very significant |

| 10 | 4 | Meditation (speaking 1) | Meditation (speaking 2) | .277* | .032 | significant |

| 11 | 4 | Meditation (speaking 2) | Meditation (speaking 3) | .262* | .043 | significant |

| 12 | 4 | Meditation (speaking 3) | Meditation (speaking 4) | .626*** | .000 | very significant |

| 13 | 4 | Attention (speaking 1) | Attention (speaking 2) | .477*** | .000 | very significant |

| 14 | 4 | Attention (speaking 2) | Attention (speaking 3) | .417** | .001 | highly significant |

| 15 | 4 | Attention (speaking 3) | Attention (speaking 4) | .316* | .014 | significant |

| Interaction phase | Enthusiasm quadrant | Comfort quadrant | Depression quadrant | Anxiety quadrant |

|---|---|---|---|---|

| Listening phase (I) | 40% | 20% | 15% | 25% |

| Listening phase (II) | 37% | 22% | 10% | 32% |

| Listening phase (III) | 32% | 37% | 13% | 18% |

| Listening phase (IV) | 27% | 37% | 12% | 25% |

| Interaction phase | Enthusiasm quadrant | Comfort quadrant | Depression quadrant | Anxiety quadrant |

|---|---|---|---|---|

| Sharing phase (I) | 20% | 45% | 15% | 20% |

| Sharing phase (II) | 25% | 40% | 17% | 18% |

| Sharing phase (III) | 25% | 38% | 22% | 15% |

| Sharing phase (IV) | 30% | 40% | 13% | 17% |

| Basic information | Category | Number of samples | Percentage |

|---|---|---|---|

| Sex | Male | 22 | 37% |

| | Female | 38 | 63% |

| Age | 18~25 | 46 | 77% |

| | 26~35 | 13 | 22% |

| | 36~45 | 1 | 2% |

| Experience of using dating software | Yes | 47 | 78% |

| | No | 13 | 22% |

| Dimension | Label | Min. | Max. | Mean | Standard deviation | Strongly agree (5) | Agree (4) | Average (3) | Disagree (2) | Strongly disagree (1) |

|---|---|---|---|---|---|---|---|---|---|---|

| System usability | A01 | 3 | 5 | 4.23 | 0.62 | 33% | 57% | 10% | 0% | 0% |

| | A02 | 3 | 5 | 4.23 | 0.62 | 33% | 57% | 10% | 0% | 0% |

| | A03 | 2 | 5 | 4.28 | 0.86 | 50% | 33% | 12% | 5% | 0% |

| | A04 | 2 | 5 | 4.00 | 0.75 | 27% | 48% | 23% | 2% | 0% |

| | A05 | 3 | 5 | 4.38 | 0.61 | 45% | 48% | 7% | 0% | 0% |

| | A06 | 3 | 5 | 4.43 | 0.59 | 48% | 47% | 5% | 0% | 0% |

| | A07 | 2 | 5 | 4.00 | 0.78 | 25% | 55% | 15% | 5% | 0% |

| Interactive experience | B01 | 1 | 5 | 4.01 | 0.91 | 32% | 47% | 15% | 5% | 1% |

| | B02 | 2 | 5 | 3.90 | 0.83 | 25% | 45% | 25% | 5% | 0% |

| | B03 | 1 | 5 | 3.43 | 1.19 | 22% | 30% | 25% | 17% | 6% |

| | B04 | 2 | 5 | 4.00 | 0.80 | 30% | 47% | 20% | 3% | 0% |

| | B05 | 1 | 5 | 4.06 | 0.93 | 35% | 45% | 15% | 2% | 3% |

| | B06 | 1 | 5 | 3.97 | 1.13 | 40% | 33% | 15% | 7% | 5% |

| | B07 | 1 | 5 | 3.20 | 1.29 | 10% | 48% | 10% | 15% | 17% |

| Pleasure experience | C01 | 2 | 5 | 3.85 | 0.84 | 23% | 43% | 28% | 5% | 0% |

| | C02 | 2 | 5 | 4.31 | 0.81 | 50% | 35% | 12% | 3% | 0% |

| | C03 | 3 | 5 | 4.36 | 0.66 | 47% | 43% | 10% | 0% | 0% |

| | C04 | 2 | 5 | 4.73 | 0.54 | 77% | 22% | 0% | 1% | 0% |

| | C05 | 2 | 5 | 4.26 | 0.77 | 43% | 43% | 10% | 3% | 0% |

| | C06 | 2 | 5 | 4.23 | 0.76 | 42% | 42% | 15% | 2% | 0% |

| | C07 | 2 | 5 | 4.45 | 0.67 | 53% | 40% | 5% | 2% | 0% |

| | C08 | 1 | 5 | 4.28 | 0.88 | 48% | 38% | 8% | 3% | 2% |

| | C09 | 1 | 5 | 4.11 | 0.94 | 42% | 35% | 18% | 3% | 2% |
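The mean and standard deviation in each row of the table above can be recovered, up to rounding, from the response percentages. The sketch below shows the arithmetic, assuming 60 respondents (the total in the basic-information table) and the sample (n - 1) form of the standard deviation; the function name and return format are illustrative.

```python
import math


def likert_summary(percentages: dict, n: int) -> dict:
    """Summarize a 5-point Likert item from its response percentages.

    percentages maps each score (1..5) to the percentage of respondents
    choosing it; n is the number of respondents (assumed to be 60 here).
    """
    total = sum(percentages.values())
    mean = sum(score * pct for score, pct in percentages.items()) / total
    # Population variance from the distribution, then Bessel's correction.
    var_pop = sum(pct * (score - mean) ** 2
                  for score, pct in percentages.items()) / total
    sd = math.sqrt(var_pop * n / (n - 1))
    return {
        "mean": mean,
        "sd": sd,
        "agree_or_above": percentages.get(5, 0) + percentages.get(4, 0),
    }


# Item A06: 48% strongly agree, 47% agree, 5% average.
a06 = likert_summary({5: 48, 4: 47, 3: 5, 2: 0, 1: 0}, n=60)
```

For A06 this reproduces the reported mean of 4.43 and a standard deviation of about 0.59. Small deviations for other items are expected because the percentages are rounded.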

| Dimension | Name of Measure or Test | Value |

|---|---|---|

| System usability | KMO measure of sampling adequacy | .786 |

| | Significance of Bartlett’s test of sphericity | .000 |

| Interactive experience | KMO measure of sampling adequacy | .671 |

| | Significance of Bartlett’s test of sphericity | .000 |

| Pleasure experience | KMO measure of sampling adequacy | .778 |

| | Significance of Bartlett’s test of sphericity | .000 |

| Dimension: system usability | | |

|---|---|---|

| Question | 1 | 2 |

| A06 | .744 | .168 |

| A03 | .738 | .269 |

| A02 | .733 | -.004 |

| A07 | .672 | .303 |

| A04 | -.004 | .772 |

| A05 | .244 | .764 |

| A01 | .369 | .644 |

| Dimension: interactive experience | | |

|---|---|---|

| Question | 1 | 2 |

| B04 | .819 | .178 |

| B01 | .771 | -.033 |

| B02 | .725 | .068 |

| B06 | .650 | .190 |

| B05 | .029 | .813 |

| B03 | .025 | .740 |

| B07 | .257 | .578 |

| Dimension: pleasure experience | | | |

|---|---|---|---|

| Question | 1 | 2 | 3 |

| C04 | .812 | .167 | .087 |

| C05 | .790 | .195 | .175 |

| C09 | .734 | .321 | -.166 |

| C07 | .173 | .913 | .167 |

| C06 | .398 | .696 | .207 |

| C08 | .565 | .626 | .107 |

| C02 | -.077 | .325 | .785 |

| C03 | .405 | -.075 | .759 |

| C01 | -.024 | .125 | .702 |

| Dimension | Category | Latent dimension | No. of questions | Labels of questions |

|---|---|---|---|---|

| System usability | RA1 | Functionality | 4 | A06, A03, A02, A07 |

| | RA2 | Effectiveness | 3 | A04, A05, A01 |

| Interactive experience | RB1 | Empathy | 4 | B04, B01, B02, B06 |

| | RB2 | Isolation | 3 | B05, B03, B07 |

| Pleasure experience | RC1 | Satisfaction | 3 | C04, C05, C09 |

| | RC2 | Immersion | 3 | C07, C06, C08 |

| | RC3 | Relaxation | 3 | C02, C03, C01 |
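The grouping of questions into latent dimensions is consistent with assigning each question to the factor on which it loads most strongly. The sketch below applies that rule to the system-usability loadings reported above; the rule and the function name are an illustration of one common practice, not a statement of the exact procedure used.

```python
def assign_to_factors(loadings: dict) -> dict:
    """Group items by the factor with the largest absolute loading.

    loadings maps an item label to its list of factor loadings.
    Returns {factor_number: [items sorted by descending loading]}.
    """
    groups = {}
    for item, row in loadings.items():
        best = max(range(len(row)), key=lambda k: abs(row[k]))
        groups.setdefault(best + 1, []).append(item)
    for factor, items in groups.items():
        items.sort(key=lambda it: abs(loadings[it][factor - 1]), reverse=True)
    return groups


# Rotated loadings for the system-usability dimension (from the table above).
usability = {
    "A06": [0.744, 0.168], "A03": [0.738, 0.269], "A02": [0.733, -0.004],
    "A07": [0.672, 0.303], "A04": [-0.004, 0.772], "A05": [0.244, 0.764],
    "A01": [0.369, 0.644],
}
groups = assign_to_factors(usability)
```

Here factor 1 collects A06, A03, A02, and A07 (RA1), and factor 2 collects A04, A05, and A01 (RA2), matching the table.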

| Dimension | Category | Latent dimension | Cronbach’s α Coefficient of the latent dimension | Cronbach’s α Coefficient of the dimension |

|---|---|---|---|---|

| System usability | RA1 | Functionality | .735 | .786 |

| | RA2 | Effectiveness | .604 | |

| Interactive experience | RB1 | Empathy | .711 | .671 |

| | RB2 | Isolation | .577 | |

| Pleasure experience | RC1 | Satisfaction | .694 | .778 |

| | RC2 | Immersion | .694 | |

| | RC3 | Relaxation | .638 | |
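For reference, Cronbach’s α for a group of k questions is α = k/(k − 1) · (1 − Σ item variances / variance of the summed score). The sketch below implements this standard formula with the Python standard library. The response matrix is made-up toy data for illustration, not data from this study.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents' item scores.

    responses: one row per respondent, one column per question.
    """
    k = len(responses[0])
    # Sample variance of each question across respondents.
    item_vars = [variance([row[j] for row in responses]) for j in range(k)]
    # Sample variance of each respondent's total score.
    total_var = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Toy data: 5 hypothetical respondents answering 3 questions on a 1..5 scale.
toy = [[4, 5, 4], [3, 3, 3], [5, 5, 4], [2, 3, 2], [4, 4, 5]]
alpha = cronbach_alpha(toy)
```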

| Dimension | df | χ² | χ²/df | cfi | RMSEA | RMSEA 90% CI (LO) | RMSEA 90% CI (HI) |

|---|---|---|---|---|---|---|---|

| System usability | 13 | 6.971 | 0.536 | 1.000 | 0.000 | 0.000 | 0.054 |

| Interactive experience | 13 | 11.170 | 0.859 | 1.000 | 0.000 | 0.000 | 0.113 |

| Pleasure experience | 23 | 31.726 | 1.379 | 0.951 | 0.080 | 0.000 | 0.143 |

a Meanings of symbols: df: degrees of freedom; gfi: goodness-of-fit index; agfi: adjusted goodness-of-fit index; cfi: comparative fit index; RMSEA: root mean square error of approximation; CI: confidence interval; LO: lower bound; HI: upper bound.
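The χ²/df and RMSEA columns can be checked from χ² and df alone. The sketch below uses one common definition of the point estimate, RMSEA = sqrt(max(χ² − df, 0) / (df · (N − 1))), and assumes N = 60 respondents as in the basic-information table. Some software uses N rather than N − 1, which gives the same values at three decimals here.

```python
import math


def chi2_ratio(chi2: float, df: int) -> float:
    """Normed chi-square, i.e. chi-square divided by degrees of freedom."""
    return chi2 / df


def rmsea(chi2: float, df: int, n: int) -> float:
    """RMSEA point estimate; zero whenever chi2 does not exceed df."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


# (chi2, df) per dimension, taken from the table above; N = 60 is assumed.
fits = {
    "System usability": (6.971, 13),
    "Interactive experience": (11.170, 13),
    "Pleasure experience": (31.726, 23),
}
summary = {
    name: (round(chi2_ratio(c, d), 3), round(rmsea(c, d, 60), 3))
    for name, (c, d) in fits.items()
}
```

Under these assumptions the sketch reproduces the tabulated ratios (0.536, 0.859, 1.379) and RMSEA values (0.000, 0.000, 0.080).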

| Dimension | Category | Group of Related Questions | Construct Validity Value |

|---|---|---|---|

| System usability | RA1 | A06, A03, A02, A07 | 0.742 |

| | RA2 | A04, A05, A01 | 0.650 |

| Interactive experience | RB1 | B04, B01, B02, B06 | 0.750 |

| | RB2 | B05, B03, B07 | 0.573 |

| Pleasure experience | RC1 | C04, C05, C09 | 0.771 |

| | RC2 | C07, C06, C08 | 0.830 |

| | RC3 | C02, C03, C01 | 0.677 |

| Label | Question | Min. | Max. | Mean | S. D. | Strongly agree (5) | Agree (4) | Average (3) | Disagree (2) | Strongly disagree (1) | Agree or above |

|---|---|---|---|---|---|---|---|---|---|---|---|

| A06 | The various functions of the system are well integrated. | 3 | 5 | 4.43 | 0.59 | 48% | 47% | 5% | 0% | 0% | 95% |

| A03 | I find the system’s functionality intuitive. | 2 | 5 | 4.28 | 0.86 | 50% | 33% | 12% | 5% | 0% | 83% |

| A02 | I am able to understand and become familiar with each function of the system. | 3 | 5 | 4.23 | 0.62 | 33% | 57% | 10% | 0% | 0% | 90% |

| A07 | I would like to use this system frequently. | 2 | 5 | 4.00 | 0.78 | 25% | 55% | 15% | 5% | 0% | 80% |

| Label | Question | Min. | Max. | Mean | S. D. | Strongly agree (5) | Agree (4) | Average (3) | Disagree (2) | Strongly disagree (1) | Agree or above |

|---|---|---|---|---|---|---|---|---|---|---|---|

| A04 | The emotions output by the system seem accurate. | 2 | 5 | 4.00 | 0.75 | 27% | 48% | 23% | 2% | 0% | 75% |

| A05 | The process of picking up the message bottle and listening to the message is coherent. | 3 | 5 | 4.38 | 0.61 | 45% | 48% | 7% | 0% | 0% | 93% |

| A01 | The interaction instructions help me quickly become familiar with the system. | 3 | 5 | 4.23 | 0.62 | 33% | 57% | 10% | 0% | 0% | 90% |