Submitted: 05 February 2025

Posted: 06 February 2025


Abstract

Keywords:

1. Introduction

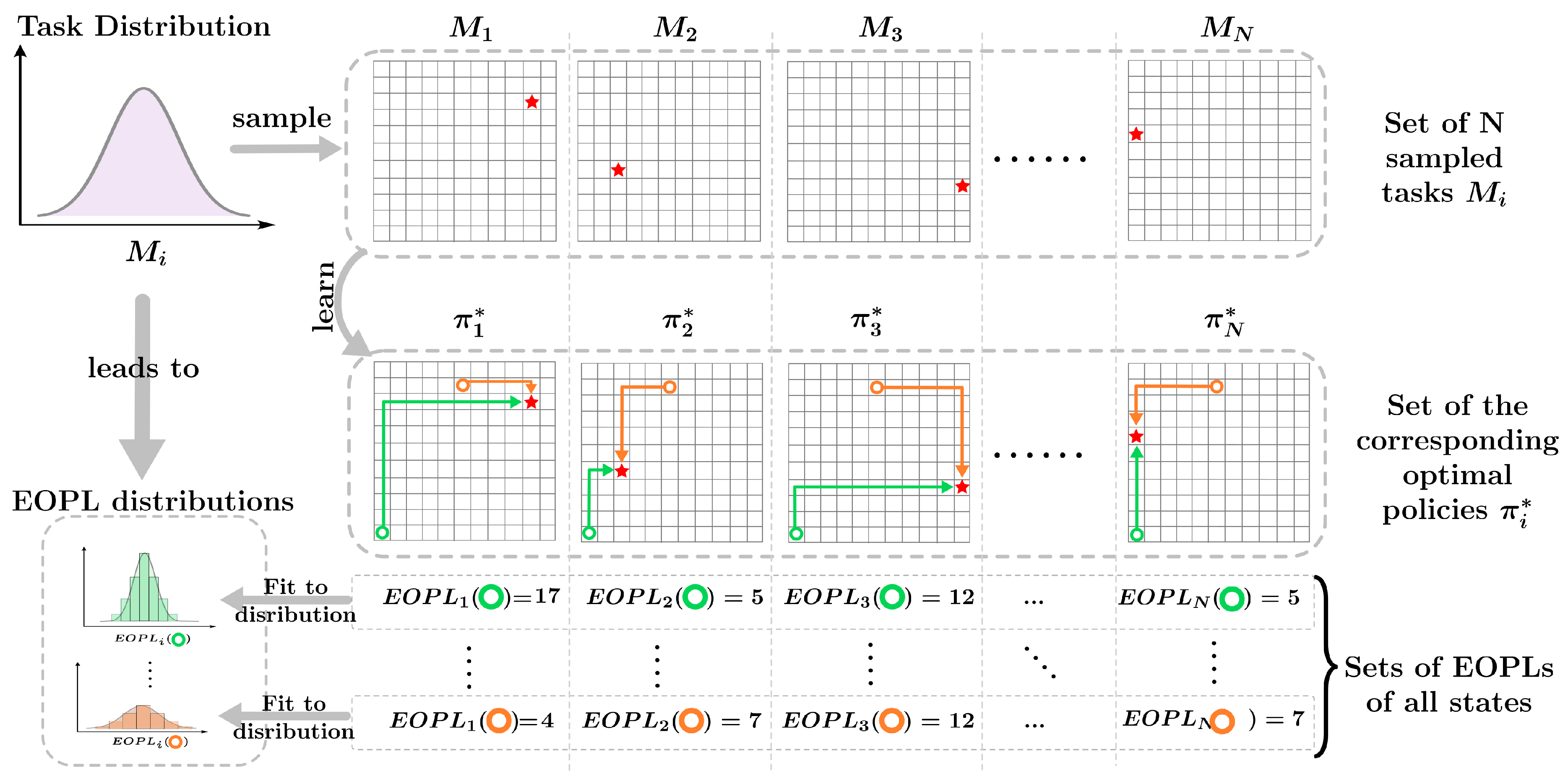

- We theoretically demonstrate that, for tasks sharing the same state-action space, differences in goals or dynamics can be represented by a separate distribution for each state-action pair: the distribution of the number of steps required to reach the goal, which we call the "Expected Optimal Path Length" (EOPL). We then show that the distribution of the value function at each state-action pair is directly connected to the distribution of its expected optimal path lengths.

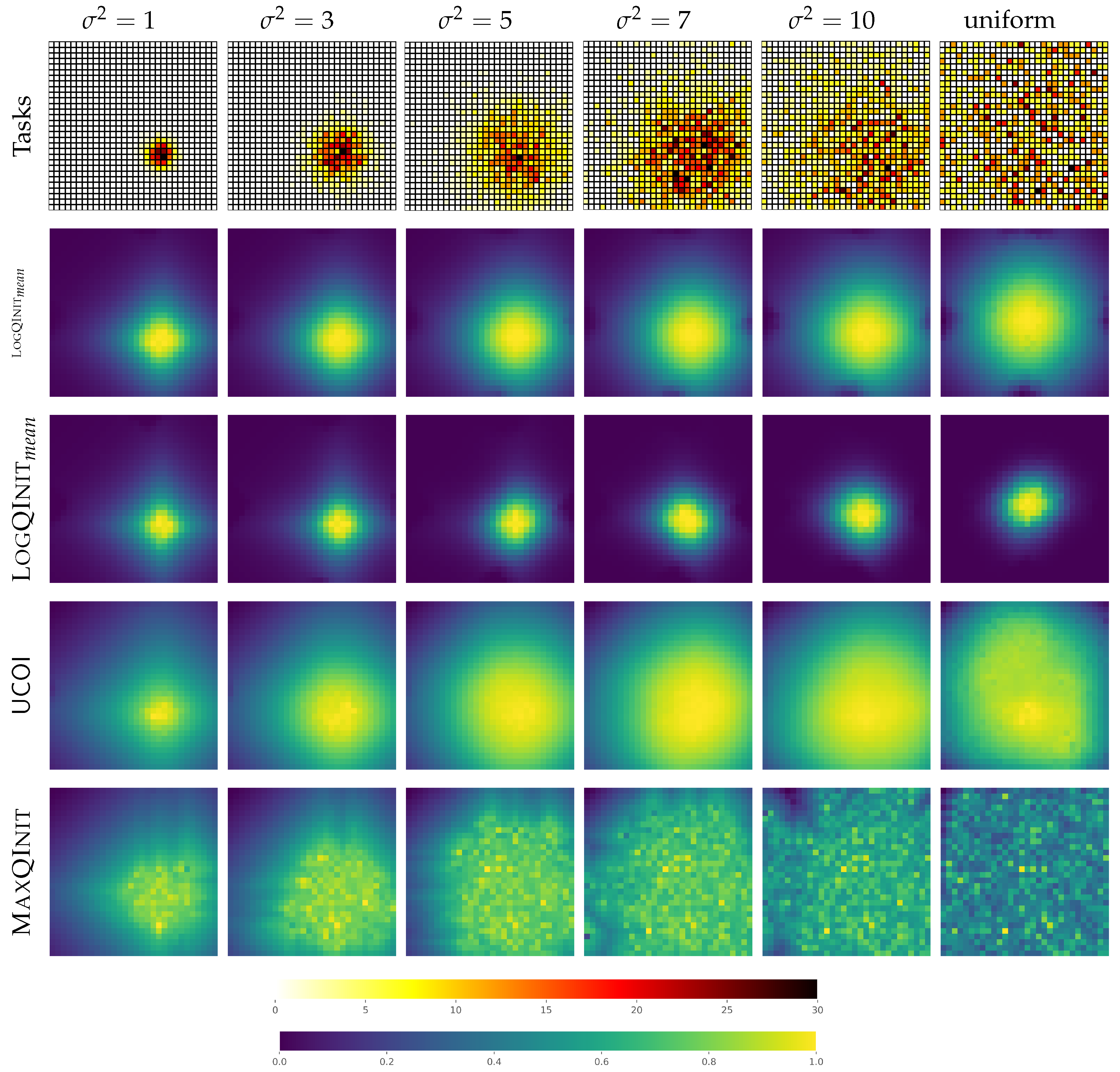

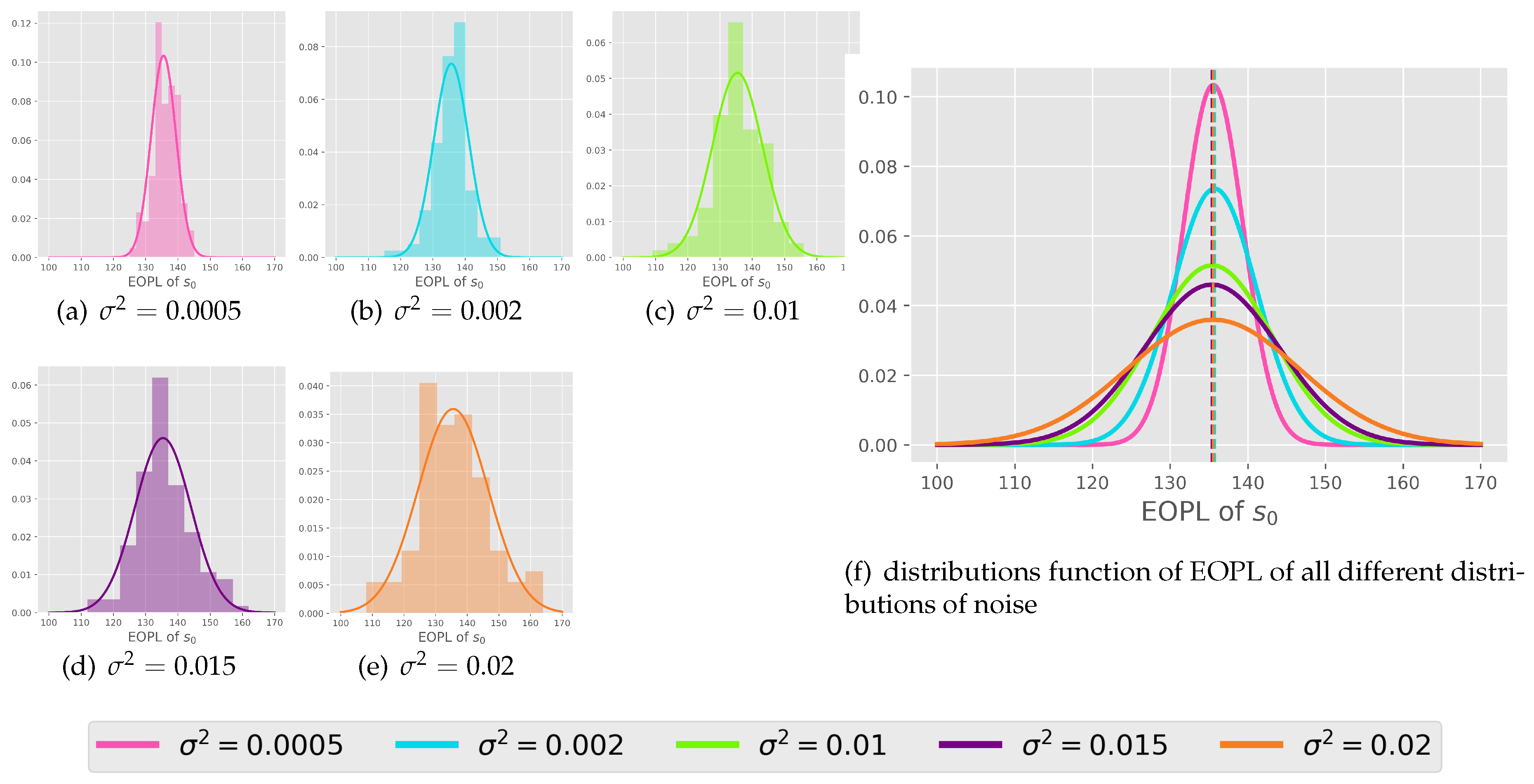

- We propose a method for initializing the value function in the sparse-reward setting using a normal distribution of tasks. Our empirical experiments validate this approach by confirming our proposition about the distribution of the expected optimal path lengths.

2. Related Works

3. Preliminaries

- Terminal state g: the state that represents the goal the agent must achieve. This state is terminal, which means the agent cannot take further actions from it.

- Most-likely-next-state function: a function that maps a state-action pair to the most likely state among all possible next states. For example, in a grid-world scenario, taking an action such as moving left might result in a high probability of transitioning to the adjacent left cell, and this function returns that cell.
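The most-likely-next-state function described above can be illustrated with a minimal Python sketch. The dictionary layout of the transition model, the function name `most_likely_next_state`, and the example probabilities are assumptions made for illustration; they are not taken from the paper.

```python
from typing import Dict, Hashable, Tuple

State = Hashable
Action = Hashable
# Hypothetical layout: transition[(s, a)] maps each possible next state
# to its transition probability.
Transition = Dict[Tuple[State, Action], Dict[State, float]]


def most_likely_next_state(transition: Transition, state: State, action: Action) -> State:
    """Return the next state with the highest transition probability."""
    next_probs = transition[(state, action)]
    return max(next_probs, key=next_probs.get)


# Grid-world example: moving left from cell (1, 1) usually reaches (1, 0).
transition = {
    ((1, 1), "left"): {(1, 0): 0.8, (1, 1): 0.1, (0, 1): 0.05, (2, 1): 0.05},
}
left_cell = most_likely_next_state(transition, (1, 1), "left")
```

In this example the function returns the adjacent left cell, because that cell carries the largest probability mass.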

4. Expected Optimal Path Length

4.1. Definition and Representation of EOPL

- if else .

- , where .

- The EOPL is 1 if the most likely successor state following action a from state s is the goal g. The agent cannot take any further actions if it is already in the goal state g, as this state is terminal; thus, the optimal length is 0.

- For any state different from the goal state g, the EOPL is strictly greater than 0.
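The properties listed above can be checked on a small grid world. The following is a sketch under stated assumptions: the most likely transition moves the agent one cell (or leaves it in place at a wall), and the EOPL of a state-action pair is taken to be one step plus the shortest remaining path length from the most likely successor. The helper names `step` and `eopl_table` are hypothetical.

```python
from collections import deque

ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}


def step(state, action, size):
    """Assumed most likely next state: move one cell, stay put at a wall."""
    row, col = state
    d_row, d_col = ACTIONS[action]
    n_row, n_col = row + d_row, col + d_col
    return (n_row, n_col) if 0 <= n_row < size and 0 <= n_col < size else state


def eopl_table(size, goal):
    """Compute an EOPL value for every state-action pair of a size x size grid."""
    states = [(r, c) for r in range(size) for c in range(size)]
    # Predecessors under the most likely transitions; the goal is terminal.
    preds = {s: [] for s in states}
    for s in states:
        if s == goal:
            continue
        for a in ACTIONS:
            preds[step(s, a, size)].append(s)
    # Breadth-first search backwards from the goal gives shortest path lengths.
    dist = {goal: 0}
    queue = deque([goal])
    while queue:
        current = queue.popleft()
        for p in preds[current]:
            if p not in dist:
                dist[p] = dist[current] + 1
                queue.append(p)
    eopl = {}
    for s in states:
        for a in ACTIONS:
            # Zero at the terminal goal, otherwise one step plus the rest.
            eopl[(s, a)] = 0 if s == goal else 1 + dist[step(s, a, size)]
    return eopl


eopl = eopl_table(3, goal=(2, 2))
```

With the goal in the corner of a 3 x 3 grid, the pair ((2, 1), "right") has EOPL 1, every pair at the goal has EOPL 0, and every other pair has a strictly positive value, matching the properties stated in the list.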

4.2. Value Function in Terms of EOPL

5. Value Function Distribution in Terms of EOPL Distribution

5.1. Value Function Initialization by the Distribution Expectation:

5.2. Decomposing the Distribution of Similar State-Action Tasks

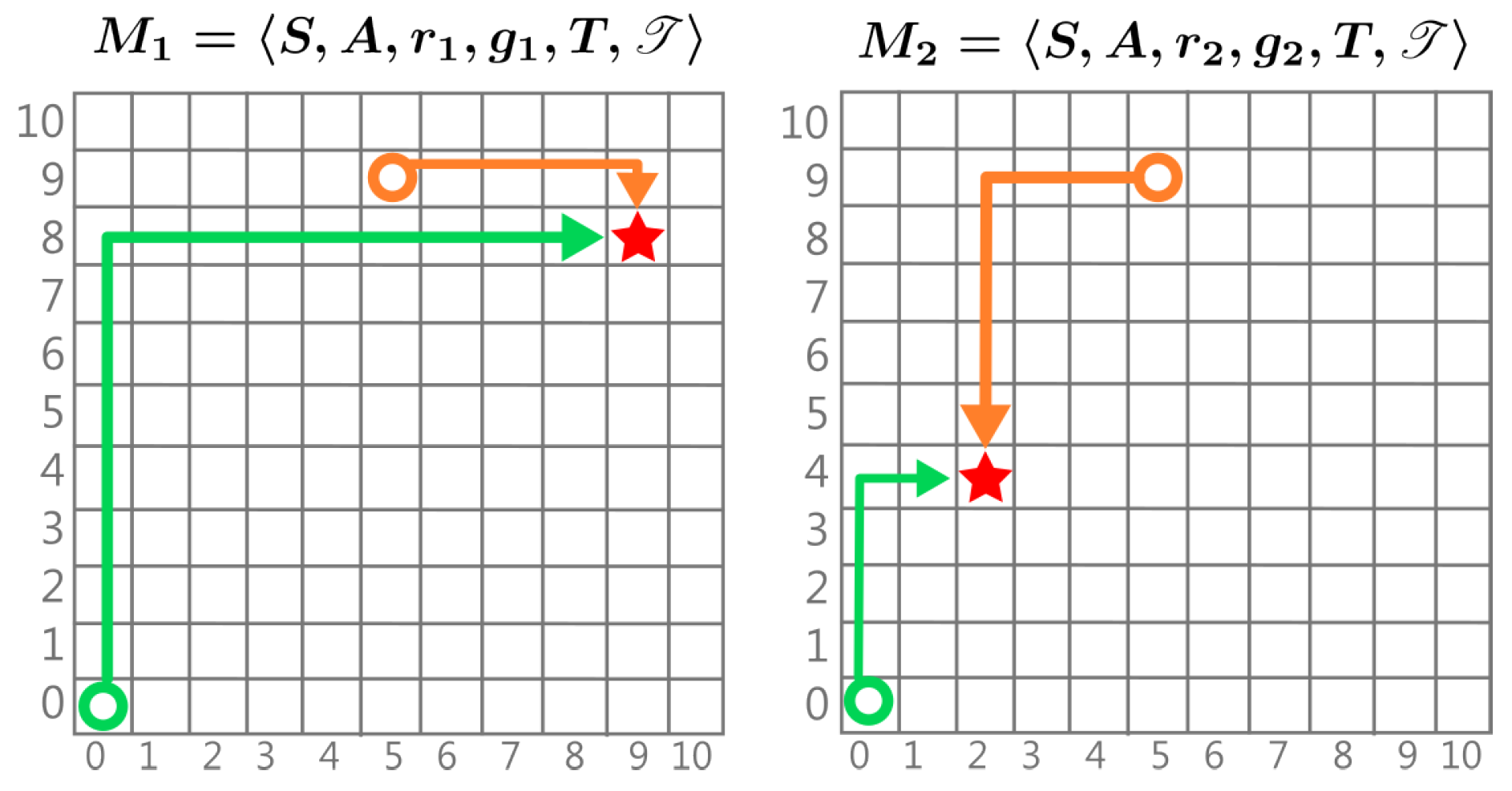

5.3. Goal Distribution

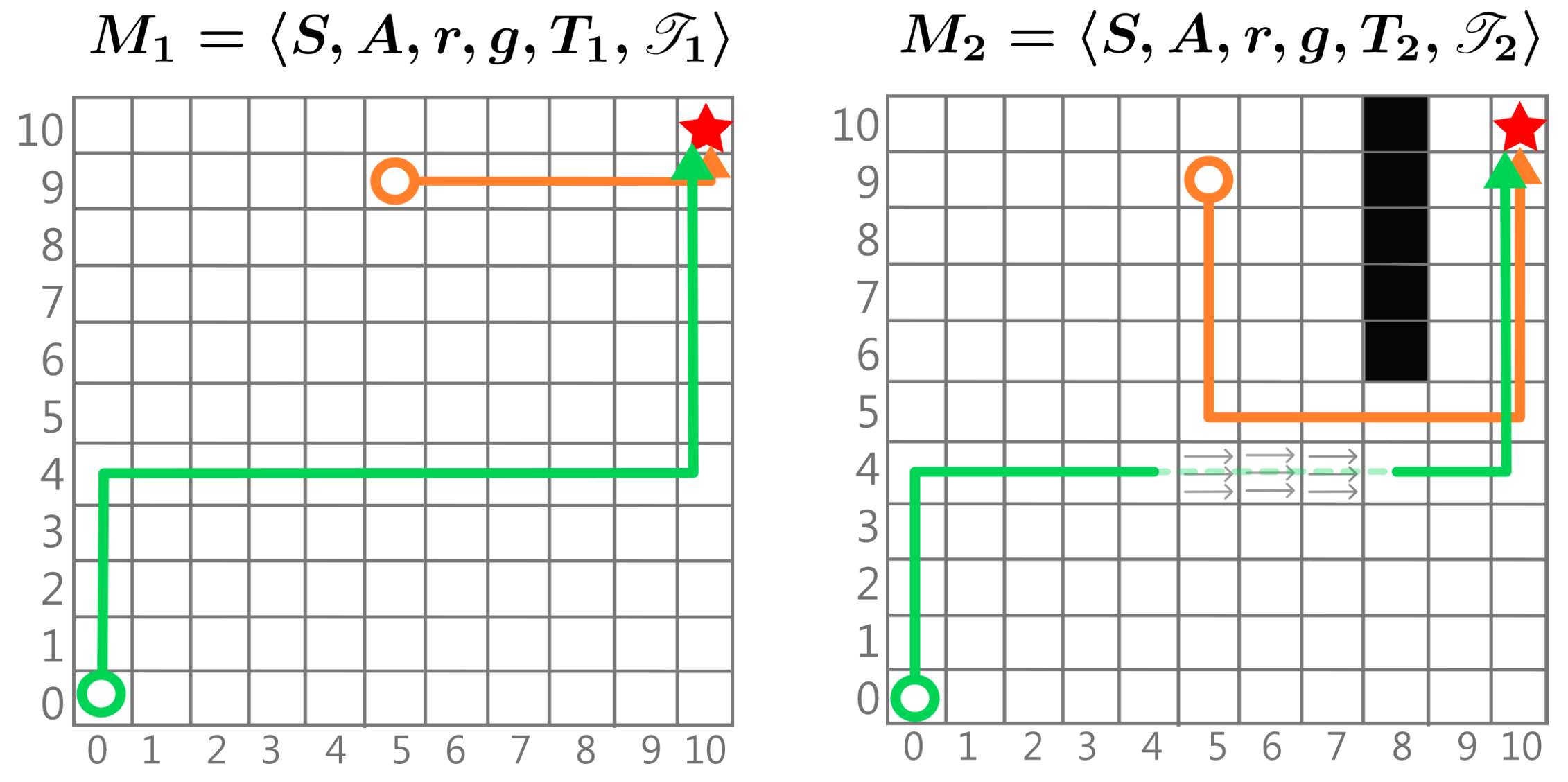

5.4. Dynamics Distribution

- Different T, similar most likely next state: Only the probability of transitioning to the same neighbouring state using the same action changes slightly. In this case, the most likely next state remains nearly the same, so the EOPL does not change significantly across tasks.

- Different T, different most likely next state: The same state-action pair may result in transitions to different states in different tasks. In this case, the EOPL changes depending on whether the transition moves the agent closer to the target or farther away. For example, consider a drone in a windy environment [17], where the target is always the same. Due to varying wind conditions, the drone will take different paths to reach the target each time. If the wind pushes it towards the target, it will reach the target more quickly; if the wind pushes against it, the drone will take longer to achieve the goal.

5.5. Value Function Distribution

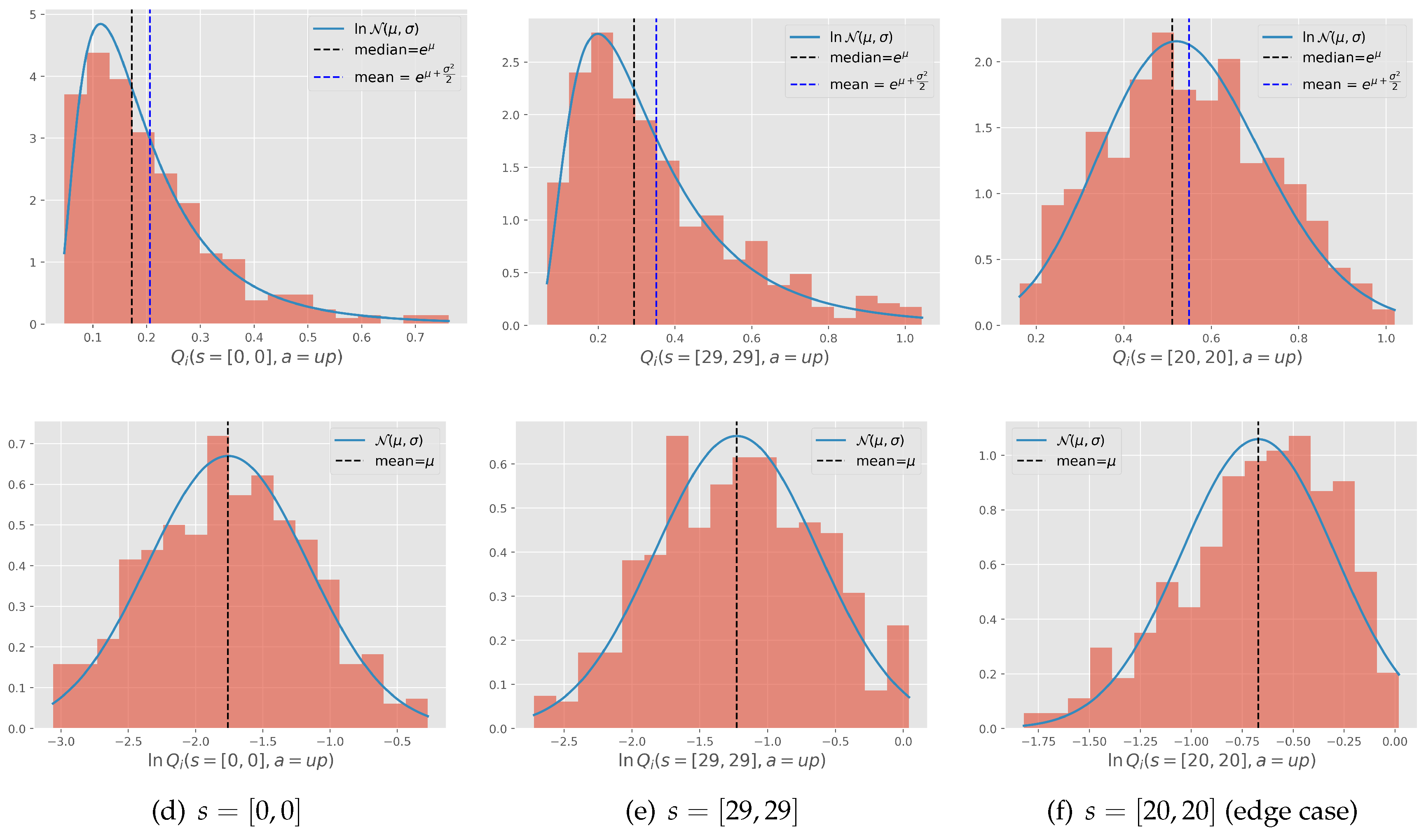

6. Case Study: Log-Normality of the Value Function Distribution

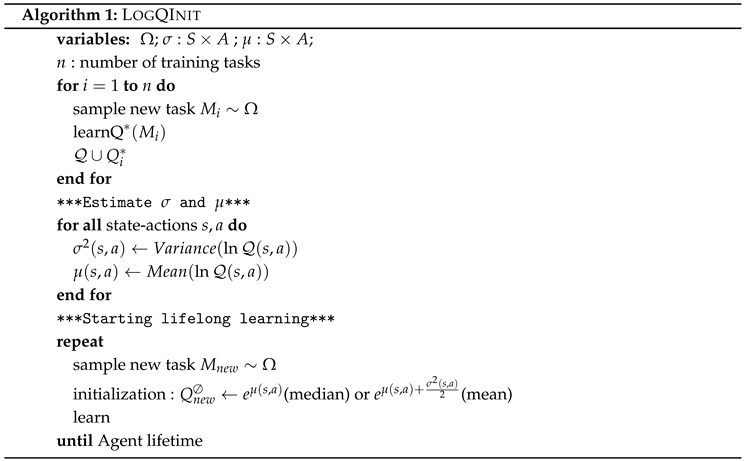

Algorithm: LogQInit

- The agent first samples a subset of tasks, each learned long enough to closely approximate its optimal value function; the resulting value functions are stored.

- The EOPL distribution parameters for each state-action pair are then estimated from this sample.

- Using these estimates, the value function is initialized and then learned on subsequent tasks.
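The three steps above can be sketched in Python as follows. This is an illustration rather than the paper's implementation: it assumes tabular value functions stored as dictionaries, strictly positive values (as in a sparse-reward task where the value is roughly the discount factor raised to the EOPL), and initialization by the mean of a log-normal distribution, which is exp(mu + sigma^2 / 2). The function name `log_q_init` and the `floor` parameter are hypothetical.

```python
import math
from statistics import fmean, pvariance


def log_q_init(source_q_tables, floor=1e-8):
    """Initialize each state-action value from previously learned Q-tables.

    Assumption: the values of one state-action pair across tasks are
    log-normally distributed, so the parameters are estimated in log space.
    """
    init = {}
    for key in source_q_tables[0]:
        # Clip at a small positive floor so the logarithm is defined.
        logs = [math.log(max(q[key], floor)) for q in source_q_tables]
        mu = fmean(logs)
        sigma2 = pvariance(logs, mu)
        # Mean of a log-normal distribution with parameters (mu, sigma2).
        init[key] = math.exp(mu + sigma2 / 2.0)
    return init


# Three sampled tasks in which the same pair is 2, 3, and 4 steps from the goal.
gamma = 0.9
pair = ("s0", "right")
sampled = [{pair: gamma ** n} for n in (2, 3, 4)]
q_init = log_q_init(sampled)
```

The resulting initial value lies between the smallest and largest sampled values, and slightly above their geometric mean because of the variance term.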


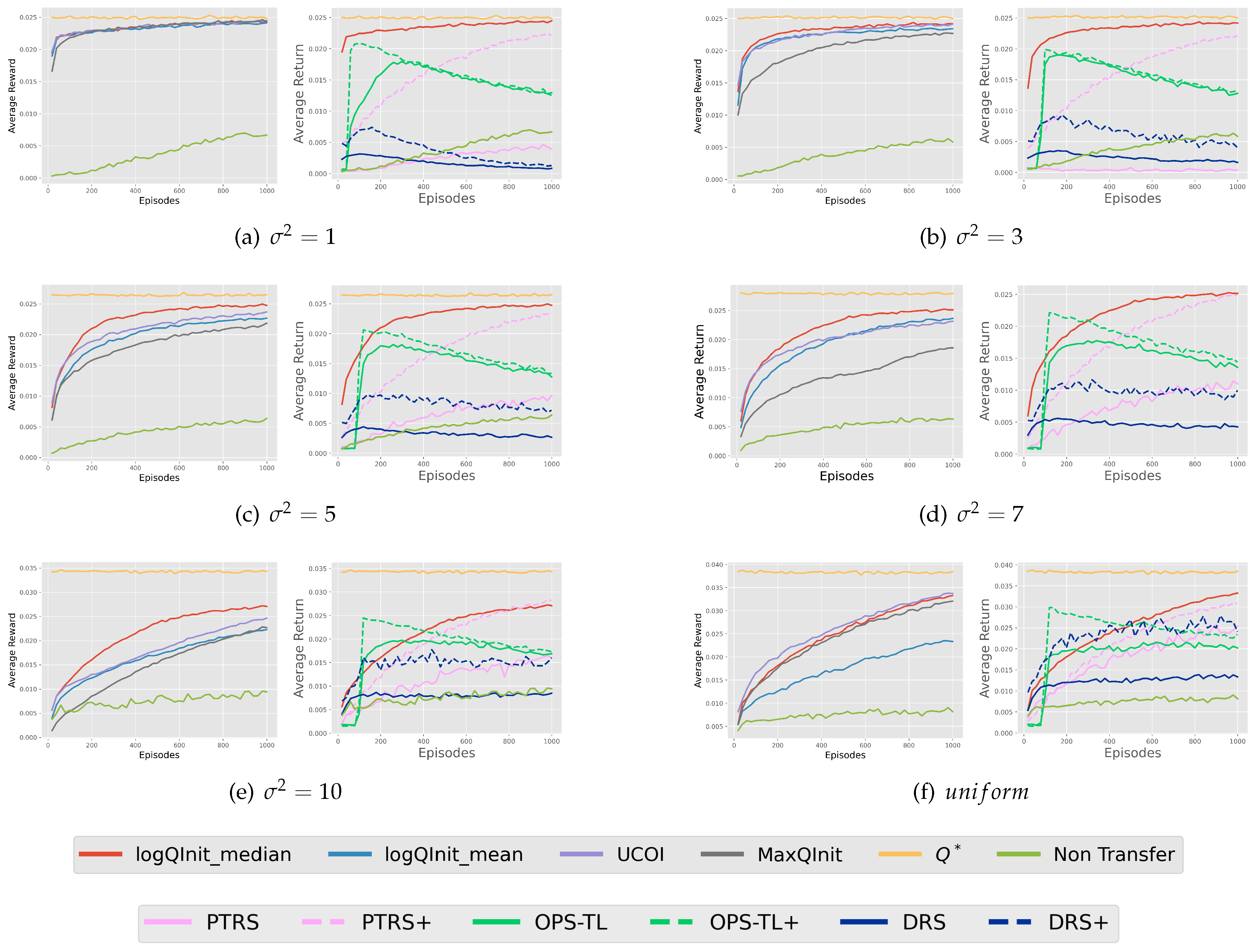

7. Experiments

- MaxQInit [1]: A method based on the optimistic initialization concept, where each state-action value is initialized using the maximum value from previously solved tasks.

- [19]: This method balances optimism in the face of uncertainty by initializing with the maximum value, while relying on the mean when certainty is higher.

- PTRS (Policy Transfer with Reward Shaping) [7]: This approach uses the value function from the source task as a potential function to shape the reward in the target task. Here, the potential function is defined as the mean over the set of previously learned value functions.

- OPS-TL [15]: This method maintains a dictionary of previously learned policies and employs a probabilistic selection mechanism to reuse these policies for new tasks.

- DRS (Distance-Based Reward Shaping): We introduce this algorithm as a straightforward reward-shaping method that relies on the distance between the current state and the goal state.
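Two of the baselines above are simple enough to sketch in a few lines of Python. The first function takes the per-pair maximum over previously learned tables, as described for MaxQInit. The second applies the standard potential-based shaping form r + gamma * phi(s') - phi(s); using the negative Manhattan distance to the goal as the potential is an assumption made here to illustrate a distance-based variant, not the exact definition used in the experiments. All function names are hypothetical.

```python
def max_q_init(source_q_tables):
    """Optimistic initialization: per-pair maximum over solved tasks."""
    return {key: max(q[key] for q in source_q_tables) for key in source_q_tables[0]}


def shaped_reward(reward, state, next_state, potential, gamma):
    """Standard potential-based shaping: r + gamma * phi(s') - phi(s)."""
    return reward + gamma * potential(next_state) - potential(state)


def negative_manhattan(goal):
    """Assumed potential: states closer to the goal get a higher potential."""
    def potential(state):
        return -(abs(state[0] - goal[0]) + abs(state[1] - goal[1]))
    return potential


q_max = max_q_init([{"a": 0.2}, {"a": 0.5}])
phi = negative_manhattan((0, 2))
# One step towards the goal from (0, 0) earns a positive shaping bonus.
bonus = shaped_reward(0.0, (0, 0), (0, 1), phi, gamma=1.0)
```

A step that reduces the distance to the goal yields a positive shaped reward, while a step away from the goal yields a negative one.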

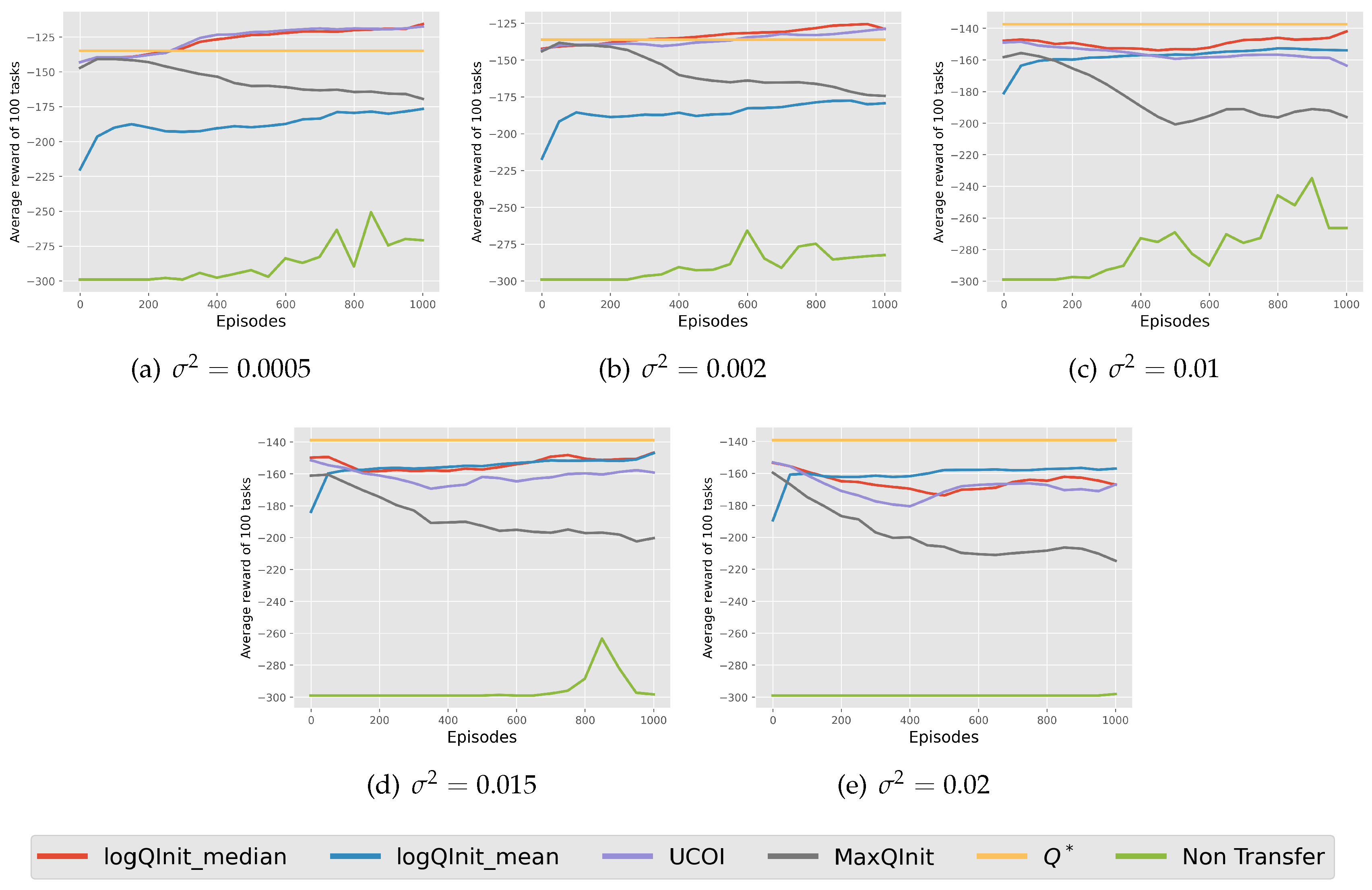

7.1. Goal Distribution: Gridworld

7.1.1. Environment Description:

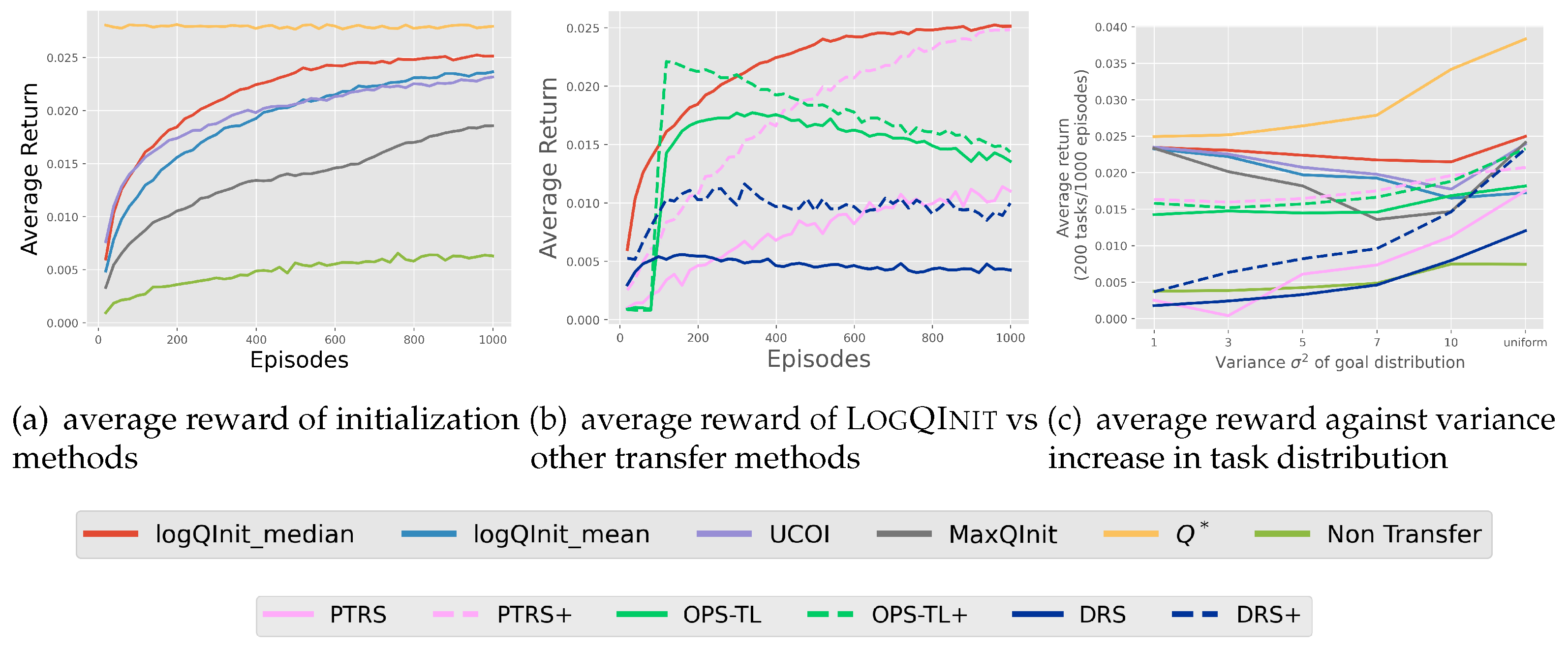

7.1.2. Results and Discussion:

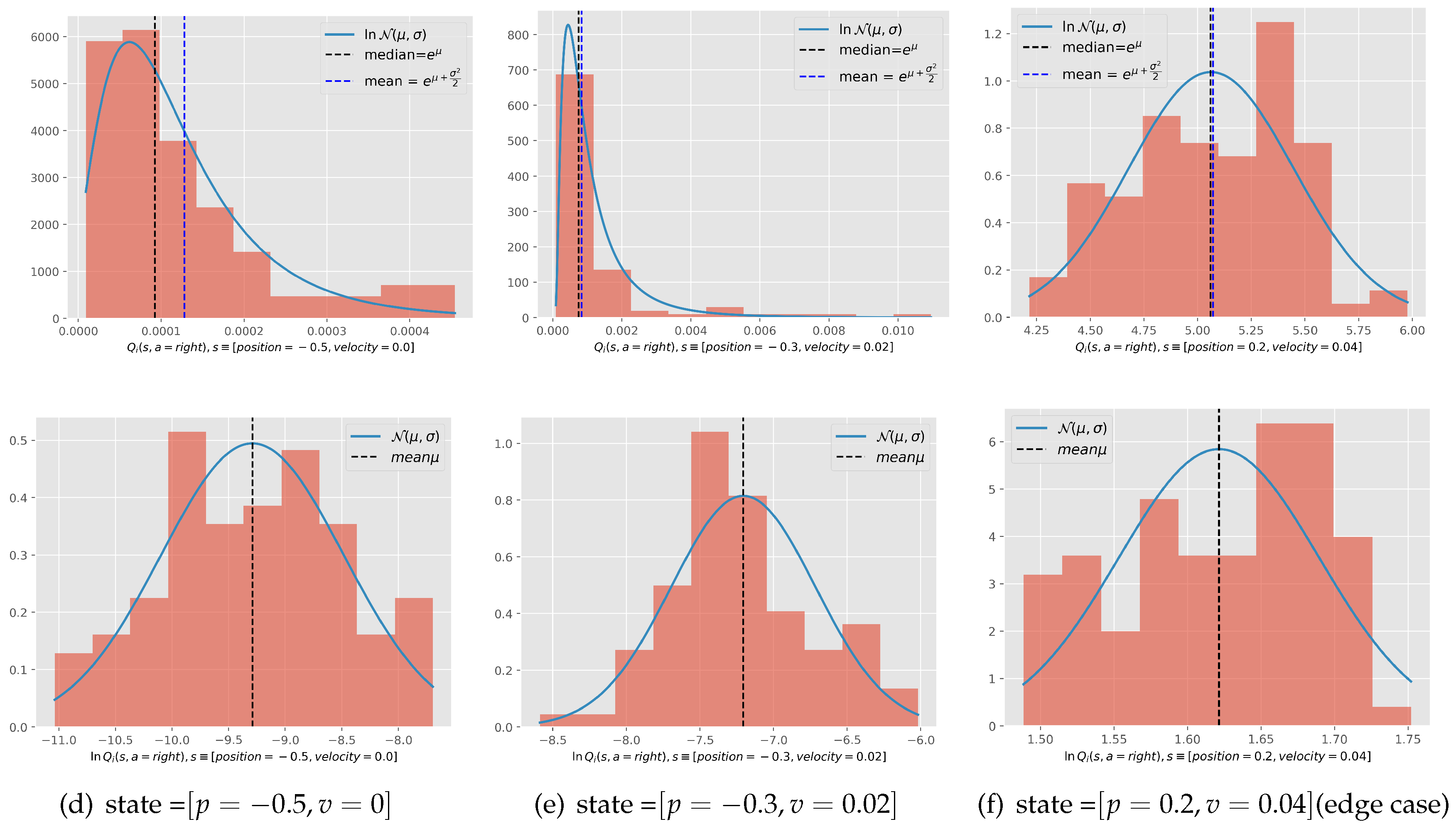

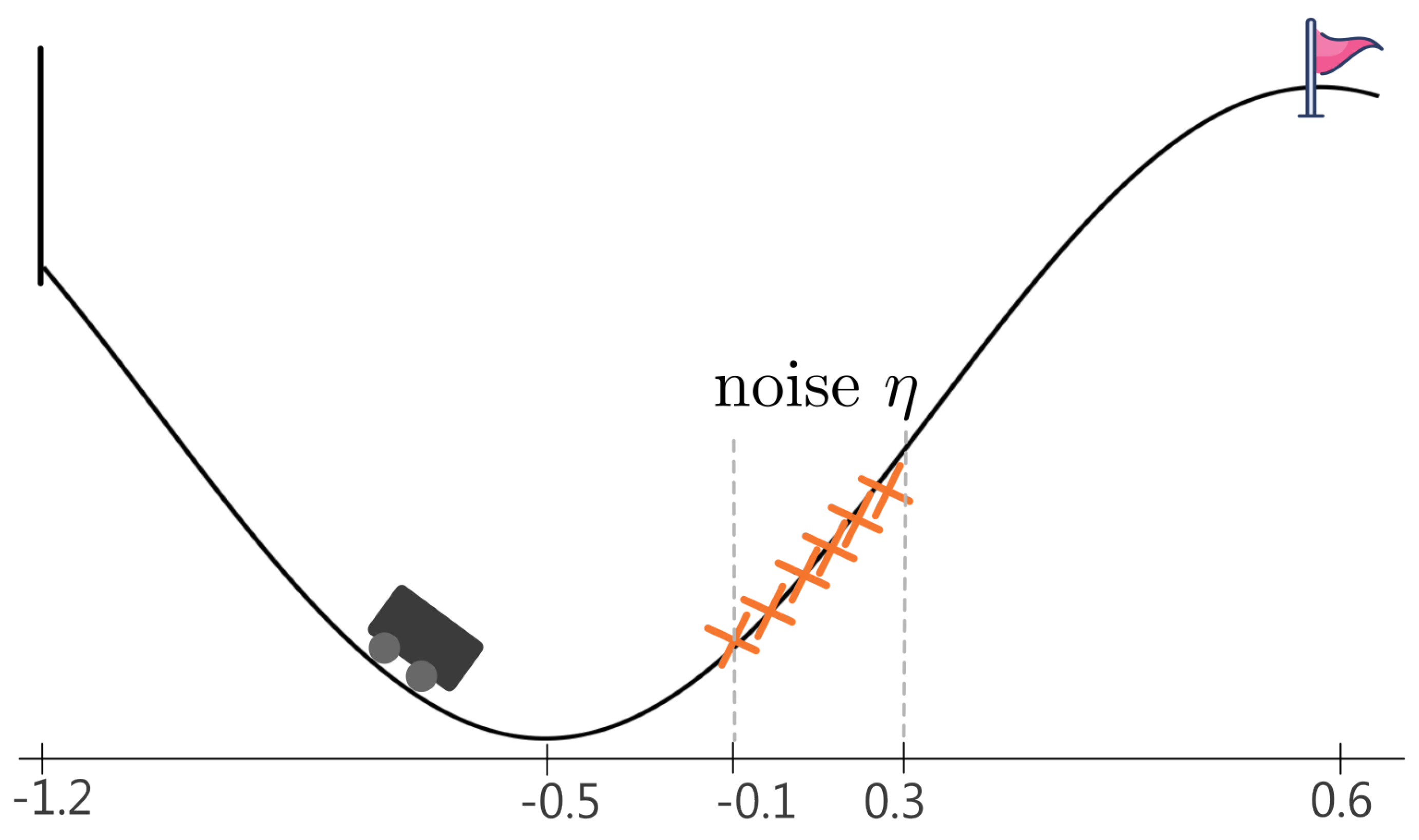

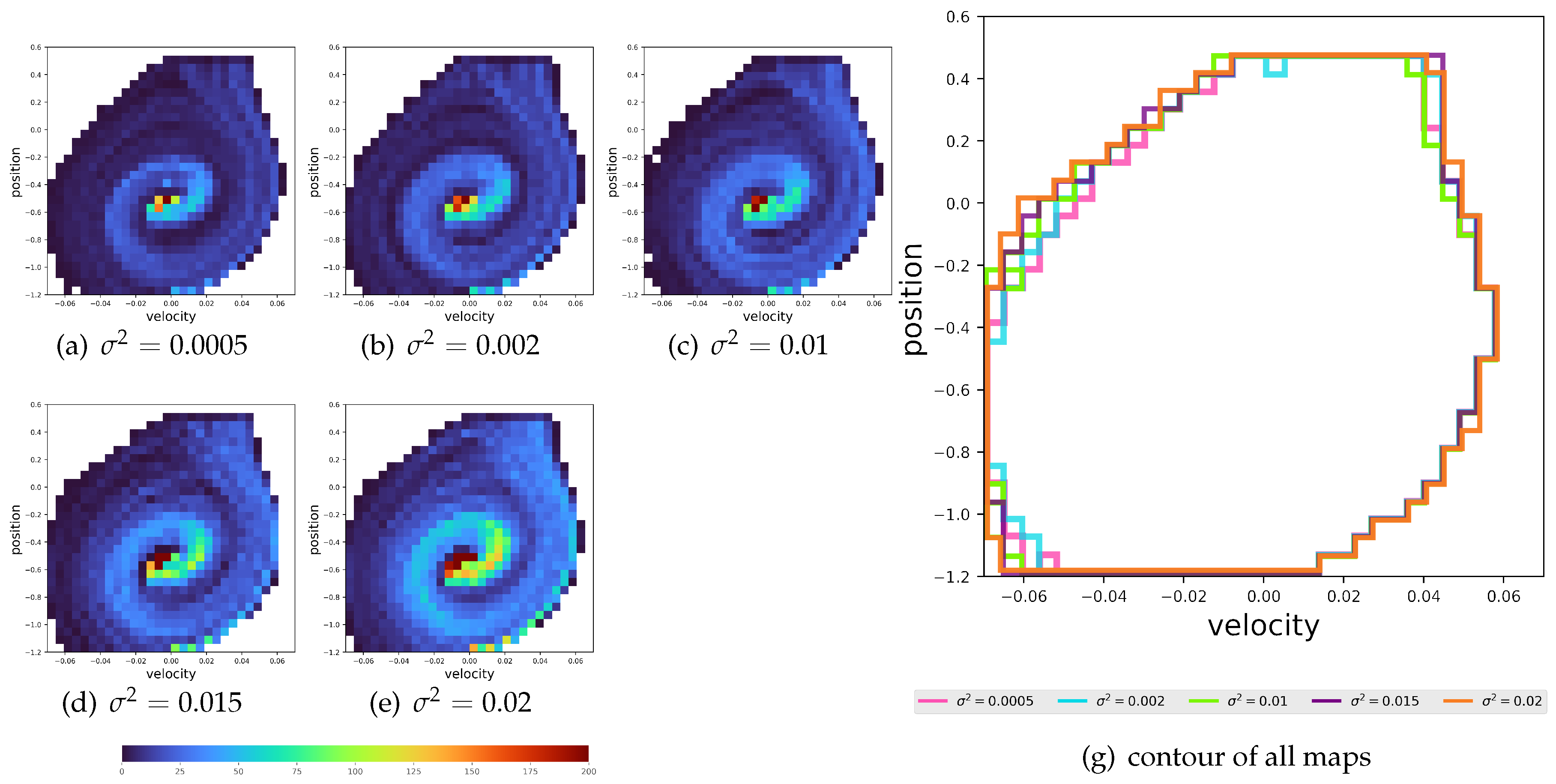

7.2. Dynamics Distribution: Mountain Car

7.2.1. Environment Description:

7.2.2. Results and Discussion:

8. Conclusion

Appendix A

Appendix A.1. Proof of Proposition 1

Appendix A.2. Proof of Proposition 5

Appendix A.3. Proof of normality of

- Finite expectation and variance of the log-probability terms: The expectation depends on the distribution of the transition probabilities, with a higher probability density near 1 leading to a less negative mean. The variance is finite and captures the spread of these terms: we know for sure that the probability of the most likely transition is strictly positive, since this transition is the most likely one. Such behavior is characteristic of deterministic or near-deterministic environments, which are common in many RL tasks. Based on this observation, we posit that RL environments of interest adhere to this description, thereby satisfying the condition of finite expectation and variance.

- Sufficiently large Y: When Y is large enough, the sum satisfies the conditions of the Central Limit Theorem and is approximately normally distributed, due to the aggregation of independent terms.
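The Central Limit Theorem argument above can be illustrated numerically. The sketch below assumes that each term is the logarithm of a transition probability drawn independently from a Beta(8, 2) distribution, which concentrates mass near 1; this distribution, the sample sizes, and the function names are illustrative choices, not values from the paper. Skewness is used as a rough indicator of how close the sum is to a normal distribution.

```python
import math
import random
from statistics import fmean, pstdev


def sample_log_prob_sum(num_terms, rng):
    """Sum of num_terms independent log-probabilities (assumed Beta(8, 2) draws)."""
    return sum(math.log(rng.betavariate(8.0, 2.0)) for _ in range(num_terms))


def skewness(values):
    """Population skewness; close to 0 for a symmetric, normal-like sample."""
    mean = fmean(values)
    std = pstdev(values, mean)
    return fmean(((v - mean) / std) ** 3 for v in values)


rng = random.Random(0)  # fixed seed so the run is reproducible
skew_single = skewness([sample_log_prob_sum(1, rng) for _ in range(5000)])
skew_sum = skewness([sample_log_prob_sum(50, rng) for _ in range(5000)])
```

A single log-probability term is clearly left-skewed, while the sum of 50 terms is much closer to symmetric, which is the behavior the argument relies on.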

Appendix A.4. Environment Description

References

1. Abel, D., Jinnai, Y., Guo, S., Konidaris, G. & Littman, M. Policy and value transfer in lifelong reinforcement learning. International Conference On Machine Learning. pp. 20-29 (2018).

2. Agrawal, P. & Agrawal, S. Optimistic Q-learning for average reward and episodic reinforcement learning. ArXiv Preprint ArXiv:2407.13743. (2024).

3. Ajay, A., Gupta, A., Ghosh, D., Levine, S. & Agrawal, P. Distributionally Adaptive Meta Reinforcement Learning. Advances In Neural Information Processing Systems. 35 pp. 25856-25869 (2022).

4. Andrychowicz, M., Wolski, F., Ray, A., Schneider, J., Fong, R., Welinder, P., McGrew, B., Tobin, J., Abbeel, P. & Zaremba, W. Hindsight experience replay. Advances In Neural Information Processing Systems. 30 (2017).

5. Bellemare, M., Dabney, W. & Munos, R. A distributional perspective on reinforcement learning. International Conference On Machine Learning. pp. 449-458 (2017).

6. Z., Guo, X., Wang, J., Qin, S. & Liu, G. Deep Reinforcement Learning for Truck-Drone Delivery Problem. Drones. 7 (2023). Available online: https://www.mdpi.com/2504-446X/7/7/445.

7. Brys, T., Harutyunyan, A., Taylor, M. & Nowé, A. Policy Transfer using Reward Shaping. AAMAS. pp. 181-188 (2015).

8. Castro, P. & Precup, D. Using bisimulation for policy transfer in MDPs. Proceedings Of The AAAI Conference On Artificial Intelligence. 24 pp. 1065-1070 (2010).

9. D’Eramo, C., Tateo, D., Bonarini, A., Restelli, M. & Peters, J. Sharing knowledge in multi-task deep reinforcement learning. ArXiv Preprint ArXiv:2401.09561. (2024).

10. Guo, Y., Gao, J., Wu, Z., Shi, C. & Chen, J. Reinforcement learning with Demonstrations from Mismatched Task under Sparse Reward. Conference On Robot Learning. pp. 1146-1156 (2023).

11. Johannink, T., Bahl, S., Nair, A., Luo, J., Kumar, A., Loskyll, M., Ojea, J., Solowjow, E. & Levine, S. Residual reinforcement learning for robot control. 2019 International Conference On Robotics And Automation (ICRA). pp. 6023-6029 (2019).

12. Khetarpal, K., Riemer, M., Rish, I. & Precup, D. Towards continual reinforcement learning: A review and perspectives. Journal Of Artificial Intelligence Research. 75 pp. 1401-1476 (2022).

13. Lecarpentier, E., Abel, D., Asadi, K., Jinnai, Y., Rachelson, E. & Littman, M. Lipschitz lifelong reinforcement learning. ArXiv Preprint ArXiv:2001.05411. (2020).

14. Levine, A. & Feizi, S. Goal-conditioned Q-learning as knowledge distillation. Proceedings Of The AAAI Conference On Artificial Intelligence. 37, 8500-8509 (2023).

15. Li, S. & Zhang, C. An optimal online method of selecting source policies for reinforcement learning. Proceedings Of The AAAI Conference On Artificial Intelligence. 32(1) (2018).

16. Liu, M., Zhu, M. & Zhang, W. Goal-conditioned reinforcement learning: Problems and solutions. ArXiv Preprint ArXiv:2201.08299. (2022).

17. Liu, R., Shin, H. & Tsourdos, A. Edge-enhanced attentions for drone delivery in presence of winds and recharging stations. Journal Of Aerospace Information Systems. 20, 216-228 (2023).

18. Lobel, S., Gottesman, O., Allen, C., Bagaria, A. & Konidaris, G. Optimistic initialization for exploration in continuous control. Proceedings Of The AAAI Conference On Artificial Intelligence. 36(7) pp. 7612-7619 (2022).

19. Mehimeh, S., Tang, X. & Zhao, W. Value function optimistic initialization with uncertainty and confidence awareness in lifelong reinforcement learning. Knowledge-Based Systems. 280 pp. 111036 (2023).

20. Mezghani, L., Sukhbaatar, S., Bojanowski, P., Lazaric, A. & Alahari, K. Learning goal-conditioned policies offline with self-supervised reward shaping. Conference On Robot Learning. pp. 1401-1410 (2023).

21. Padakandla, S. A survey of reinforcement learning algorithms for dynamically varying environments. ACM Computing Surveys (CSUR). 54, 1-25 (2021).

22. Rakelly, K., Zhou, A., Finn, C., Levine, S. & Quillen, D. Efficient off-policy meta-reinforcement learning via probabilistic context variables. International Conference On Machine Learning. pp. 5331-5340 (2019).

23. Salvato, E., Fenu, G., Medvet, E. & Pellegrino, F. Crossing the reality gap: A survey on sim-to-real transferability of robot controllers in reinforcement learning. IEEE Access. 9 pp. 153171-153187 (2021).

24. Strehl, A., Li, L. & Littman, M. Reinforcement Learning in Finite MDPs: PAC Analysis. Journal Of Machine Learning Research. 10 (2009).

25. Sutton, R. & Barto, A. Reinforcement learning: An introduction. (MIT Press, 2018).

26. Taylor, M. & Stone, P. Transfer learning for reinforcement learning domains: A survey. Journal Of Machine Learning Research. 10 (2009).

27. Tirinzoni, A., Sessa, A., Pirotta, M. & Restelli, M. Importance weighted transfer of samples in reinforcement learning. International Conference On Machine Learning. pp. 4936-4945 (2018).

28. Uchendu, I., Xiao, T., Lu, Y., Zhu, B., Yan, M., Simon, J., Bennice, M., Fu, C., Ma, C., Jiao, J. et al. Jump-start reinforcement learning. ArXiv Preprint ArXiv:2204.02372. (2022).

29. Vuong, T., Nguyen, D., Nguyen, T., Bui, C., Kieu, H., Ta, V., Tran, Q. & Le, T. Sharing experience in multitask reinforcement learning. Proceedings Of The 28th International Joint Conference On Artificial Intelligence. pp. 3642-3648 (2019).

30. Wang, J., Zhang, J., Jiang, H., Zhang, J., Wang, L. & Zhang, C. Offline meta reinforcement learning with in-distribution online adaptation. International Conference On Machine Learning. pp. 36626-36669 (2023).

31. Zang, H., Li, X., Zhang, L., Liu, Y., Sun, B., Islam, R., Combes, R. & Laroche, R. Understanding and addressing the pitfalls of bisimulation-based representations in offline reinforcement learning. Advances In Neural Information Processing Systems. 36 (2024).

32. Zhai, Y., Baek, C., Zhou, Z., Jiao, J. & Ma, Y. Computational benefits of intermediate rewards for goal-reaching policy learning. Journal Of Artificial Intelligence Research. 73 pp. 847-896 (2022).

33. Zhu, T., Qiu, Y., Zhou, H. & Li, J. Towards Long-delayed Sparsity: Learning a Better Transformer through Reward Redistribution. IJCAI. pp. 4693-4701 (2023).

34. Zhu, Z., Lin, K., Jain, A. & Zhou, J. Transfer learning in deep reinforcement learning: A survey. IEEE Transactions On Pattern Analysis And Machine Intelligence. (2023).

35. Zou, H., Ren, T., Yan, D., Su, H. & Zhu, J. Learning task-distribution reward shaping with meta-learning. Proceedings Of The AAAI Conference On Artificial Intelligence. 35(12) pp. 11210-11218 (2021).

Footnote 1: In the literature, sequences are typically defined to start from a specific time t and end at time H, with the length of the sequence given by H - t. To simplify the notation for later theorems and propositions, we choose to refer to the state at time t as 0. This adjustment relaxes the notation while preserving generality.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).