Submitted: 28 April 2025

Posted: 30 April 2025


Abstract

Keywords:

1. Introduction

2. Related Work

3. Problem Formulation

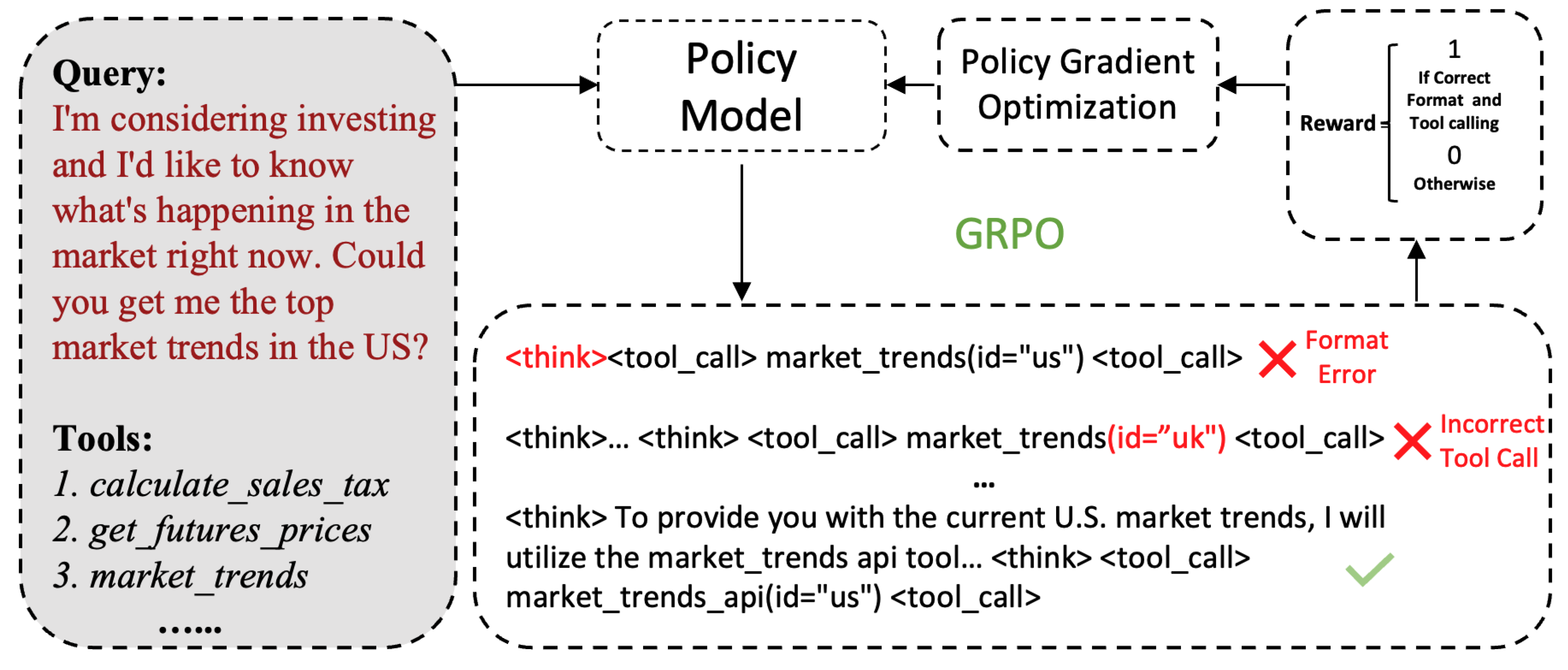

4. Nemotron-Research-Tool-N1

4.1. Data Preparation

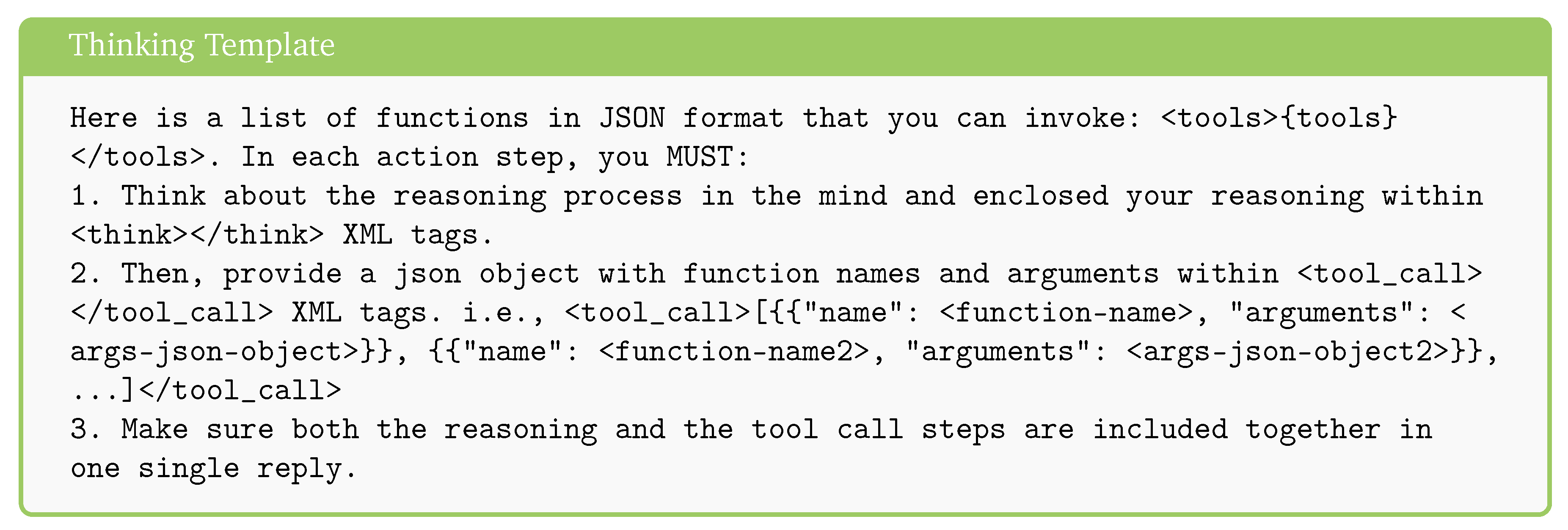

4.2. Thinking Template

4.3. Reward Modeling

5. Experiments

5.1. Settings

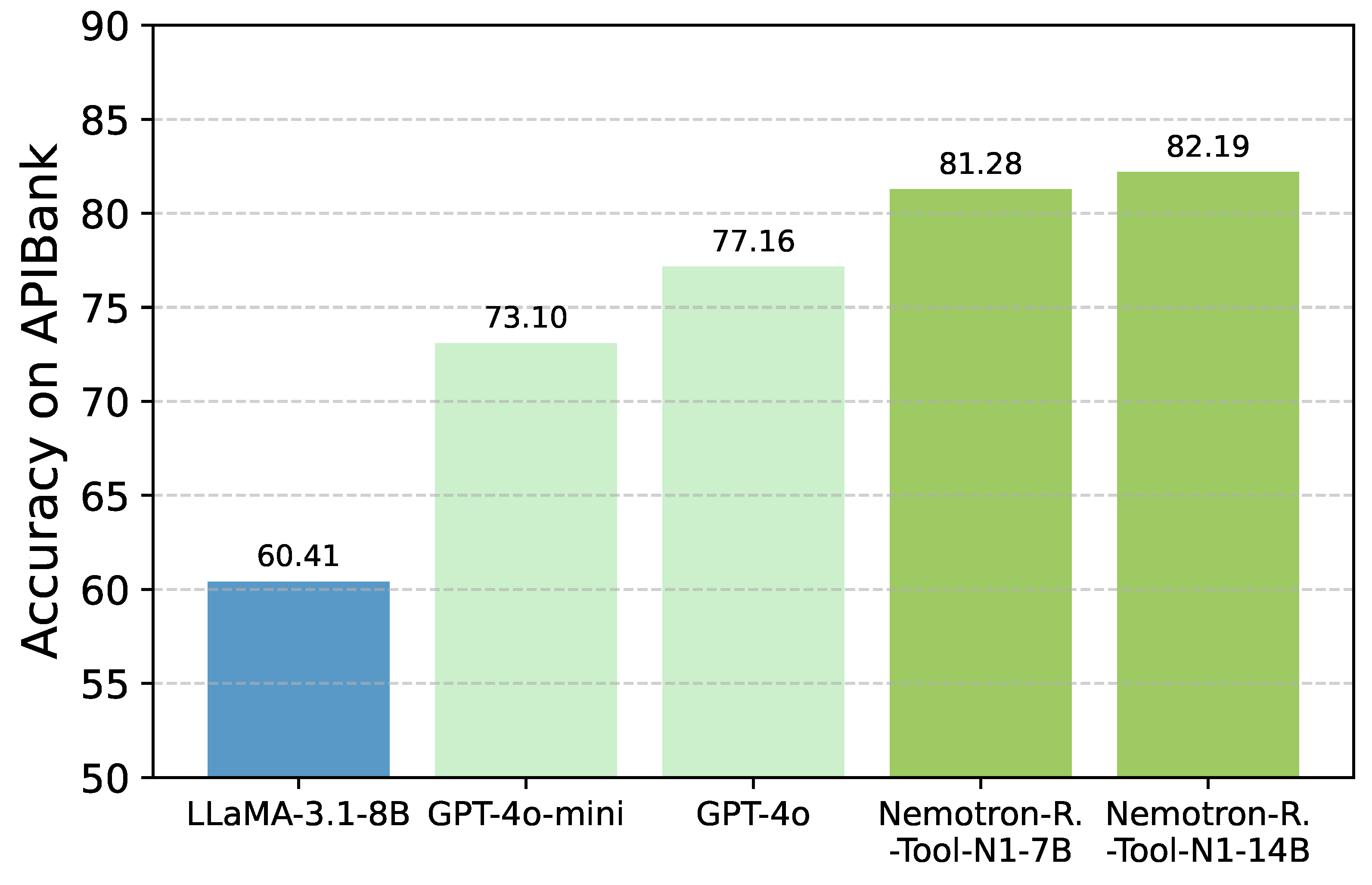

5.2. Main Results

5.3. Deep Analysis

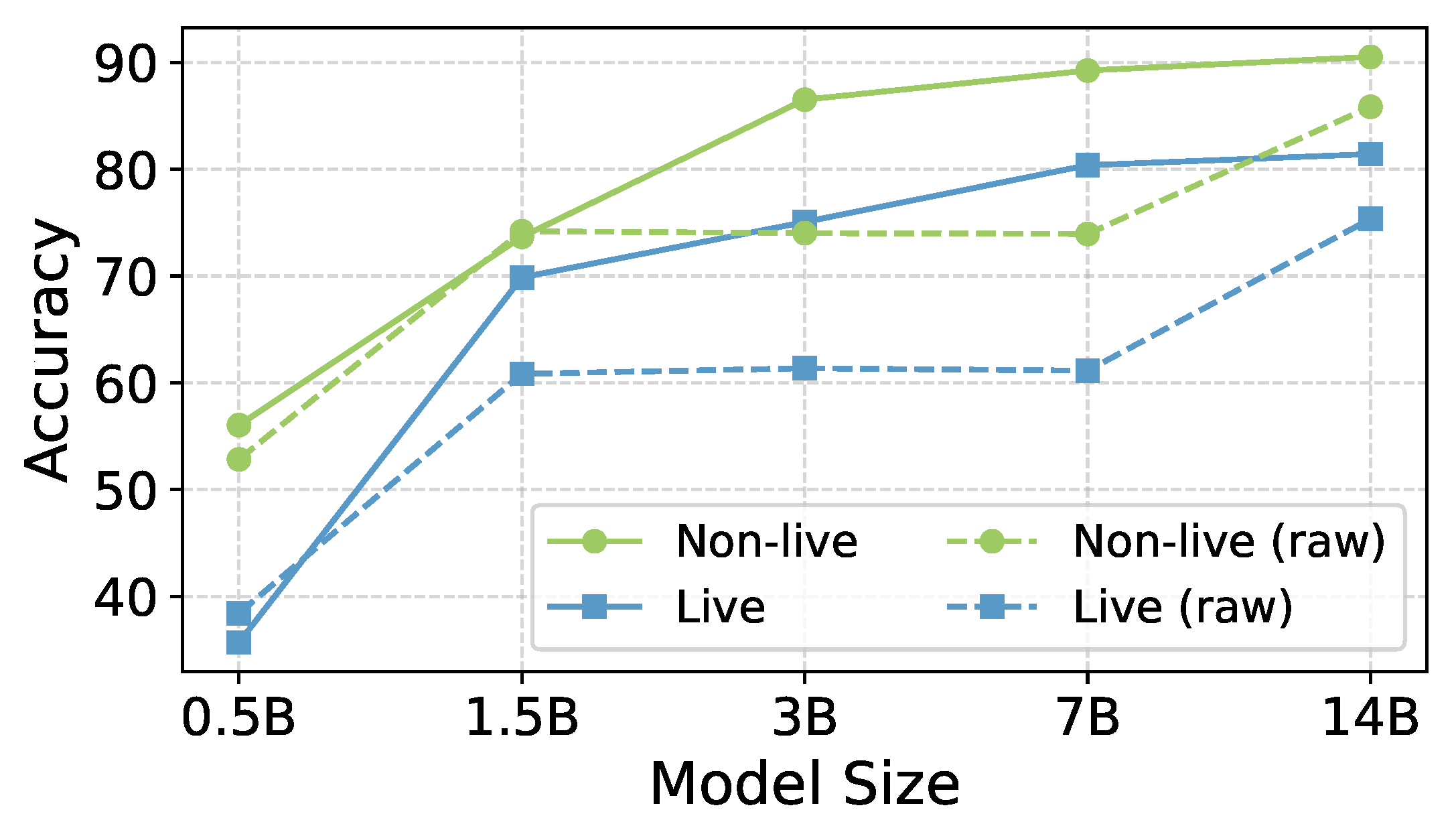

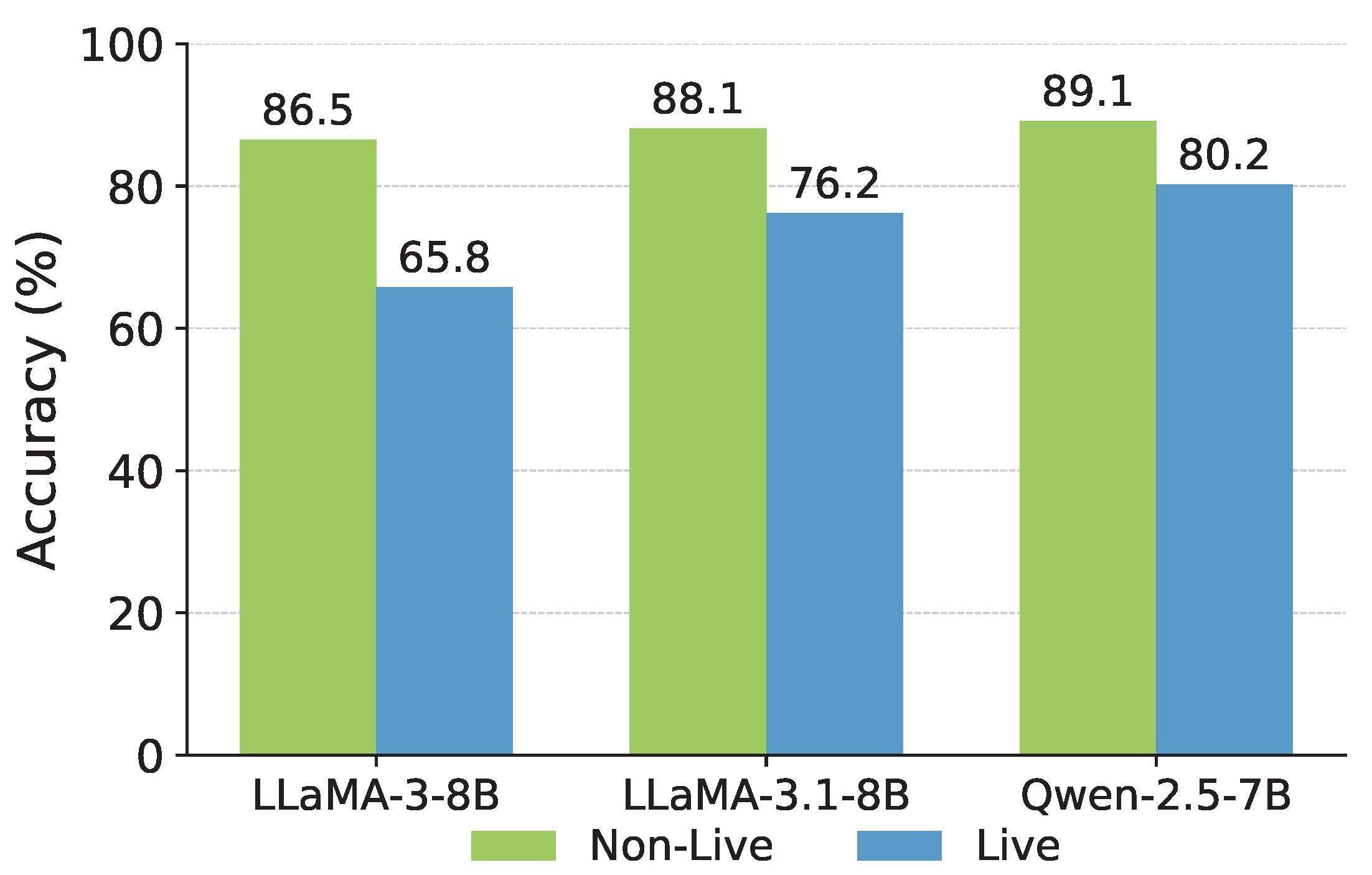

5.3.1. Scalability and Generalizability

5.3.2. Ablations

6. Conclusion

References

- Andres M Bran, Sam Cox, Oliver Schilter, Carlo Baldassari, Andrew D White, and Philippe Schwaller. Chemcrow: Augmenting large-language models with chemistry tools. arXiv:2304.05376, 2023.

- Hardy Chen, Haoqin Tu, Fali Wang, Hui Liu, Xianfeng Tang, Xinya Du, Yuyin Zhou, and Cihang Xie. Sft or rl? an early investigation into training r1-like reasoning large vision-language models. arXiv:2504.11468, 2025.

- Jiazhan Feng, Shijue Huang, Xingwei Qu, Ge Zhang, Yujia Qin, Baoquan Zhong, Chengquan Jiang, Jinxin Chi, and Wanjun Zhong. Retool: Reinforcement learning for strategic tool use in llms. arXiv:2504.11536, 2025.

- Kanishk Gandhi, Ayush Chakravarthy, Anikait Singh, Nathan Lile, and Noah D Goodman. Cognitive behaviors that enable self-improving reasoners, or, four habits of highly effective stars. arXiv:2503.01307, 2025.

- Jiaxuan Gao, Shusheng Xu, Wenjie Ye, Weilin Liu, Chuyi He, Wei Fu, Zhiyu Mei, Guangju Wang, and Yi Wu. On designing effective rl reward at training time for llm reasoning. arXiv:2410.15115, 2024.

- Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv:2501.12948, 2025.

- Yushi Hu, Weijia Shi, Xingyu Fu, Dan Roth, Mari Ostendorf, Luke Zettlemoyer, Noah A Smith, and Ranjay Krishna. Visual sketchpad: Sketching as a visual chain of thought for multimodal language models. arXiv:2406.09403, 2024.

- Bowen Jin, Hansi Zeng, Zhenrui Yue, Dong Wang, Hamed Zamani, and Jiawei Han. Search-r1: Training llms to reason and leverage search engines with reinforcement learning. arXiv:2503.09516, 2025.

- Geunwoo Kim, Pierre Baldi, and Stephen McAleer. Language models can solve computer tasks. Advances in Neural Information Processing Systems, 36:39648–39677, 2023.

- Mojtaba Komeili, Kurt Shuster, and Jason Weston. Internet-augmented dialogue generation. arXiv:2107.07566, 2021.

- Angeliki Lazaridou, Elena Gribovskaya, Wojciech Stokowiec, and Nikolai Grigorev. Internet-augmented language models through few-shot prompting for open-domain question answering. arXiv:2203.05115, 2022.

- Minghao Li, Yingxiu Zhao, Bowen Yu, Feifan Song, Hangyu Li, Haiyang Yu, Zhoujun Li, Fei Huang, and Yongbin Li. Api-bank: A comprehensive benchmark for tool-augmented llms. arXiv:2304.08244, 2023.

- Wendi Li and Yixuan Li. Process reward model with q-value rankings. arXiv:2410.11287, 2024.

- Qiqiang Lin, Muning Wen, Qiuying Peng, Guanyu Nie, Junwei Liao, Jun Wang, Xiaoyun Mo, Jiamu Zhou, Cheng Cheng, Yin Zhao, et al. Hammer: Robust function-calling for on-device language models via function masking. arXiv:2410.04587, 2024.

- Jiawei Liu and Lingming Zhang. Code-r1: Reproducing r1 for code with reliable rewards. arXiv:2503.18470, 2025.

- Weiwen Liu, Xu Huang, Xingshan Zeng, Xinlong Hao, Shuai Yu, Dexun Li, Shuai Wang, Weinan Gan, Zhengying Liu, Yuanqing Yu, et al. Toolace: Winning the points of llm function calling. arXiv:2409.00920, 2024a.

- Zuxin Liu, Thai Hoang, Jianguo Zhang, Ming Zhu, Tian Lan, Juntao Tan, Weiran Yao, Zhiwei Liu, Yihao Feng, Rithesh RN, et al. Apigen: Automated pipeline for generating verifiable and diverse function-calling datasets. Advances in Neural Information Processing Systems, 37:54463–54482, 2024.

- Zhengxi Lu, Yuxiang Chai, Yaxuan Guo, Xi Yin, Liang Liu, Hao Wang, Guanjing Xiong, and Hongsheng Li. Ui-r1: Enhancing action prediction of gui agents by reinforcement learning. arXiv:2503.21620, 2025.

- Peixian Ma, Xialie Zhuang, Chengjin Xu, Xuhui Jiang, Ran Chen, and Jian Guo. Sql-r1: Training natural language to sql reasoning model by reinforcement learning. arXiv:2504.08600, 2025.

- Zixian Ma, Weikai Huang, Jieyu Zhang, Tanmay Gupta, and Ranjay Krishna. m&m’s: A benchmark to evaluate tool-use for multi-step multi-modal tasks. In European Conference on Computer Vision, pages 18–34. Springer, 2024.

- Zixian Ma, Jianguo Zhang, Zhiwei Liu, Jieyu Zhang, Juntao Tan, Manli Shu, Juan Carlos Niebles, Shelby Heinecke, Huan Wang, Caiming Xiong, et al. Taco: Learning multi-modal action models with synthetic chains-of-thought-and-action. arXiv:2412.05479, 2024b.

- Fanqing Meng, Lingxiao Du, Zongkai Liu, Zhixiang Zhou, Quanfeng Lu, Daocheng Fu, Botian Shi, Wenhai Wang, Junjun He, Kaipeng Zhang, et al. Mm-eureka: Exploring visual aha moment with rule-based large-scale reinforcement learning. arXiv:2503.07365, 2025.

- Niklas Muennighoff, Zitong Yang, Weijia Shi, Xiang Lisa Li, Li Fei-Fei, Hannaneh Hajishirzi, Luke Zettlemoyer, Percy Liang, Emmanuel Candès, and Tatsunori Hashimoto. s1: Simple test-time scaling. arXiv:2501.19393, 2025.

- Reiichiro Nakano, Jacob Hilton, Suchir Balaji, Jeff Wu, Long Ouyang, Christina Kim, Christopher Hesse, Shantanu Jain, Vineet Kosaraju, William Saunders, et al. Webgpt: Browser-assisted question-answering with human feedback. arXiv:2112.09332, 2021.

- Alexander Pan, Erik Jones, Meena Jagadeesan, and Jacob Steinhardt. Feedback loops with language models drive in-context reward hacking. arXiv:2402.06627, 2024.

- Bhargavi Paranjape, Scott Lundberg, Sameer Singh, Hannaneh Hajishirzi, Luke Zettlemoyer, and Marco Tulio Ribeiro. Art: Automatic multi-step reasoning and tool-use for large language models. arXiv:2303.09014, 2023.

- Akshara Prabhakar, Zuxin Liu, Weiran Yao, Jianguo Zhang, Ming Zhu, Shiyu Wang, Zhiwei Liu, Tulika Awalgaonkar, Haolin Chen, Thai Hoang, et al. Apigen-mt: Agentic pipeline for multi-turn data generation via simulated agent-human interplay. arXiv:2504.03601, 2025.

- Yujia Qin, Shihao Liang, Yining Ye, Kunlun Zhu, Lan Yan, Yaxi Lu, Yankai Lin, Xin Cong, Xiangru Tang, Bill Qian, Sihan Zhao, Runchu Tian, Ruobing Xie, Jie Zhou, Mark Gerstein, Dahai Li, Zhiyuan Liu, and Maosong Sun. Toolllm: Facilitating large language models to master 16000+ real-world apis, 2023.

- Changle Qu, Sunhao Dai, Xiaochi Wei, Hengyi Cai, Shuaiqiang Wang, Dawei Yin, Jun Xu, and Ji-Rong Wen. Tool learning with large language models: A survey. Frontiers of Computer Science, 19:198343, 2025.

- Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Y Wu, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv:2402.03300, 2024.

- Haozhan Shen, Peng Liu, Jingcheng Li, Chunxin Fang, Yibo Ma, Jiajia Liao, Qiaoli Shen, Zilun Zhang, Kangjia Zhao, Qianqian Zhang, et al. Vlm-r1: A stable and generalizable r1-style large vision-language model. arXiv:2504.07615, 2025.

- Kurt Shuster, Jing Xu, Mojtaba Komeili, Da Ju, Eric Michael Smith, Stephen Roller, Megan Ung, Moya Chen, Kushal Arora, Joshua Lane, et al. Blenderbot 3: a deployed conversational agent that continually learns to responsibly engage. arXiv:2208.03188, 2022.

- Linxin Song, Jiale Liu, Jieyu Zhang, Shaokun Zhang, Ao Luo, Shijian Wang, Qingyun Wu, and Chi Wang. Adaptive in-conversation team building for language model agents. arXiv:2405.19425, 2024.

- Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, et al. Llama: Open and efficient foundation language models. arXiv:2302.13971, 2023.

- Xingyao Wang, Yangyi Chen, Lifan Yuan, Yizhe Zhang, Yunzhu Li, Hao Peng, and Heng Ji. Executable code actions elicit better llm agents. In Forty-first International Conference on Machine Learning, 2024a.

- Zhiruo Wang, Zhoujun Cheng, Hao Zhu, Daniel Fried, and Graham Neubig. What are tools anyway? a survey from the language model perspective. arXiv:2403.15452, 2024b.

- Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in Neural Information Processing Systems, 35:24824–24837, 2022.

- Yiran Wu, Feiran Jia, Shaokun Zhang, Hangyu Li, Erkang Zhu, Yue Wang, Yin Tat Lee, Richard Peng, Qingyun Wu, and Chi Wang. Mathchat: Converse to tackle challenging math problems with llm agents. arXiv:2306.01337, 2023.

- Fanjia Yan, Huanzhi Mao, Charlie Cheng-Jie Ji, Tianjun Zhang, Shishir G. Patil, Ion Stoica, and Joseph E. Gonzalez. Berkeley function calling leaderboard. https://gorilla.cs.berkeley.edu/blogs/8_berkeley_function_calling_leaderboard.html, 2024.

- An Yang, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chengyuan Li, Dayiheng Liu, Fei Huang, Haoran Wei, et al. Qwen2.5 technical report. arXiv:2412.15115, 2024.

- Shunyu Yao, Dian Yu, Jeffrey Zhao, Izhak Shafran, Tom Griffiths, Yuan Cao, and Karthik Narasimhan. Tree of thoughts: Deliberate problem solving with large language models. Advances in Neural Information Processing Systems, 36:11809–11822, 2023.

- Shunyu Yao, Jeffrey Zhao, Dian Yu, Nan Du, Izhak Shafran, Karthik Narasimhan, and Yuan Cao. React: Synergizing reasoning and acting in language models. In International Conference on Learning Representations (ICLR), 2023b.

- Fan Yin, Zifeng Wang, I Hsu, Jun Yan, Ke Jiang, Yanfei Chen, Jindong Gu, Long T Le, Kai-Wei Chang, Chen-Yu Lee, et al. Magnet: Multi-turn tool-use data synthesis and distillation via graph translation. arXiv:2503.07826, 2025.

- Yuanqing Yu, Zhefan Wang, Weizhi Ma, Zhicheng Guo, Jingtao Zhan, Shuai Wang, Chuhan Wu, Zhiqiang Guo, and Min Zhang. Steptool: A step-grained reinforcement learning framework for tool learning in llms. arXiv:2410.07745, 2024.

- Yirong Zeng, Xiao Ding, Yuxian Wang, Weiwen Liu, Wu Ning, Yutai Hou, Xu Huang, Bing Qin, and Ting Liu. Boosting tool use of large language models via iterative reinforced fine-tuning. arXiv:2501.09766, 2025.

- Daochen Zha, Zaid Pervaiz Bhat, Kwei-Herng Lai, Fan Yang, Zhimeng Jiang, Shaochen Zhong, and Xia Hu. Data-centric artificial intelligence: A survey. ACM Computing Surveys, 57:1–42, 2025.

- Jianguo Zhang, Tian Lan, Ming Zhu, Zuxin Liu, Thai Hoang, Shirley Kokane, Weiran Yao, Juntao Tan, Akshara Prabhakar, Haolin Chen, et al. xlam: A family of large action models to empower ai agent systems. arXiv:2409.03215, 2024.

- Shaokun Zhang, Jieyu Zhang, Dujian Ding, Mirian Hipolito Garcia, Ankur Mallick, Daniel Madrigal, Menglin Xia, Victor Rühle, Qingyun Wu, and Chi Wang. Ecoact: Economic agent determines when to register what action. arXiv:2411.01643, 2024.

- Shaokun Zhang, Jieyu Zhang, Jiale Liu, Linxin Song, Chi Wang, Ranjay Krishna, and Qingyun Wu. Training language model agents without modifying language models. arXiv e-prints, 2024.

¹ Throughout this paper, we refer to Nemotron-Research-Tool-N1 as Tool-N1 for brevity.

| | xLAM Single-Turn | xLAM Multi-Turn | ToolACE Single-Turn | ToolACE Multi-Turn |
|---|---|---|---|---|
| Raw Data | 60000 | 0 | 10500 | 800 |
| After Processing | 60000 | 0 | 8183 | 1470 |

| Models | Non-Live Simple | Non-Live Multiple | Non-Live Parallel | Non-Live Parallel Multiple | Live Simple | Live Multiple | Live Parallel | Live Parallel Multiple | Non-Live Overall | Live Overall | Overall |
|---|---|---|---|---|---|---|---|---|---|---|---|
| GPT-4o-2024-11-20 | 79.42 | 95.50 | 94.00 | 83.50 | 84.88 | 79.77 | 87.50 | 75.00 | 88.10 | 79.83 | 83.97 |
| GPT-4o-mini-2024-07-18 | 80.08 | 90.50 | 89.50 | 87.00 | 81.40 | 76.73 | 93.75 | 79.17 | 86.77 | 76.50 | 81.64 |
| GPT-3.5-Turbo-0125 | 77.92 | 93.50 | 67.00 | 53.00 | 80.62 | 78.63 | 75.00 | 58.33 | 72.85 | 68.55 | 70.70 |
| Gemini-2.0-Flash-001 | 74.92 | 89.50 | 86.50 | 87.00 | 75.58 | 73.12 | 81.25 | 83.33 | 84.48 | 81.39 | 82.94 |
| DeepSeek-R1 | 76.42 | 94.50 | 90.50 | 88.00 | 84.11 | 79.87 | 87.50 | 70.83 | 87.35 | 74.41 | 80.88 |
| Llama3.1-70B-Inst | 77.92 | 96.00 | 94.50 | 91.50 | 78.29 | 76.16 | 87.50 | 66.67 | 89.98 | 62.24 | 76.11 |
| Llama3.1-8B-Inst | 72.83 | 93.50 | 87.00 | 83.50 | 74.03 | 73.31 | 56.25 | 54.17 | 84.21 | 61.08 | 72.65 |
| Qwen2.5-7B-Inst | 75.33 | 94.50 | 91.50 | 84.50 | 76.74 | 74.93 | 62.50 | 70.83 | 86.46 | 67.44 | 76.95 |
| xLAM-2-70b-fc-r (FC) | 78.25 | 94.50 | 92.00 | 89.00 | 77.13 | 71.13 | 68.75 | 58.33 | 88.44 | 72.95 | 80.70 |
| ToolACE-8B (FC) | 76.67 | 93.50 | 90.50 | 89.50 | 73.26 | 76.73 | 81.25 | 70.83 | 87.54 | 78.59 | 82.57 |
| Hammer2.1-7B (FC) | 78.08 | 95.00 | 93.50 | 88.00 | 76.74 | 77.40 | 81.25 | 70.83 | 88.65 | 75.11 | 81.88 |
| Nemotron-Research-Tool-N1-7B | 77.00 | 95.00 | 94.50 | 90.50 | 82.17 | 80.44 | 62.50 | 70.83 | 89.25 | 80.38 | 84.82 |
| Nemotron-Research-Tool-N1-14B | 80.58 | 96.00 | 93.50 | 92.00 | 84.10 | 81.10 | 81.25 | 66.67 | 90.52 | 81.42 | 85.97 |
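The summary columns in the table above appear to follow a simple rule: Non-Live Overall is the unweighted mean of the four Non-Live categories, and Overall is the unweighted mean of Non-Live Overall and Live Overall. The snippet below is a minimal sketch that recomputes both values for the two Tool-N1 rows; the aggregation rule is an assumption inferred from the reported numbers, not something stated in the text, and the helper names (`summarize`, `matches_table`) are hypothetical. The same unweighted mean does not reproduce the Live Overall column (for Tool-N1-7B it gives about 73.99 against the reported 80.38), so that column is presumably weighted by the number of test cases per category.

```python
from statistics import mean

# Rows copied from the BFCL table above (accuracy, %).
ROWS = {
    "Tool-N1-7B": {"non_live_cats": [77.00, 95.00, 94.50, 90.50],
                   "non_live": 89.25, "live": 80.38, "overall": 84.82},
    "Tool-N1-14B": {"non_live_cats": [80.58, 96.00, 93.50, 92.00],
                    "non_live": 90.52, "live": 81.42, "overall": 85.97},
}


def summarize(row):
    """Recompute the summary columns under the assumed (unweighted) aggregation rule."""
    non_live = mean(row["non_live_cats"])          # mean of the 4 Non-Live categories
    overall = (row["non_live"] + row["live"]) / 2  # mean of the two reported splits
    return non_live, overall


def matches_table(row, tol=0.006):
    """True if recomputed values agree with the table up to 2-decimal rounding (0.005) plus float noise."""
    non_live, overall = summarize(row)
    return abs(non_live - row["non_live"]) <= tol and abs(overall - row["overall"]) <= tol


for name, row in ROWS.items():
    non_live, overall = summarize(row)
    print(f"{name}: non-live={non_live:.3f} overall={overall:.3f} consistent={matches_table(row)}")
```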

| Split | Fine-Grained Reward Design: w/ Reason Format Partial Reward | Fine-Grained Reward Design: w/ Reason Format + Function Name Partial Rewards | Binary Reward Design: w/o Reason Format | Binary Reward Design: w/ Reason Format |
|---|---|---|---|---|
| Non-Live | 87.83 | 88.54 | 87.63 | 89.25 |
| Live | 79.64 | 76.61 | 76.24 | 80.38 |
| Avg | 83.74 | 82.58 | 81.94 | 84.82 |
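The column labels above contrast a binary reward with fine-grained variants that grant partial credit for the reasoning format and for function names. The sketch below illustrates that distinction under explicit assumptions: the `<think>`/`<tool_call>` tag names, the JSON call schema, the order-insensitive matching, the partial-credit weights (0.2 each), and the example tool `get_weather` are all hypothetical choices for illustration, not details taken from the text.

```python
import json
import re
from collections import Counter

# Hypothetical layout: free-form reasoning in <think>...</think>, followed by a
# JSON list of {"name": ..., "arguments": {...}} objects in <tool_call>...</tool_call>.
OUTPUT_PATTERN = re.compile(
    r"^\s*<think>.+?</think>\s*<tool_call>(.+?)</tool_call>\s*$", re.DOTALL
)


def parse_calls(output):
    """Return the list of tool calls, or None if the format check fails."""
    match = OUTPUT_PATTERN.match(output)
    if match is None:
        return None
    try:
        calls = json.loads(match.group(1))
    except json.JSONDecodeError:
        return None
    if not isinstance(calls, list) or not all(isinstance(c, dict) for c in calls):
        return None
    return calls


def canonical(call):
    """Order-insensitive key for one call: its name plus its arguments as sorted-key JSON."""
    return call.get("name"), json.dumps(call.get("arguments", {}), sort_keys=True)


def binary_reward(output, gold):
    """1.0 only if the format is valid AND the predicted calls equal the gold calls."""
    calls = parse_calls(output)
    if calls is None:
        return 0.0
    same = Counter(map(canonical, calls)) == Counter(map(canonical, gold))
    return 1.0 if same else 0.0


def fine_grained_reward(output, gold, format_credit=0.2, name_credit=0.2):
    """Binary reward plus partial credit for format and function names (weights hypothetical)."""
    if binary_reward(output, gold) == 1.0:
        return 1.0
    calls = parse_calls(output)
    if calls is None:
        return 0.0
    reward = format_credit  # valid <think>/<tool_call> structure
    if Counter(c.get("name") for c in calls) == Counter(g.get("name") for g in gold):
        reward += name_credit  # right functions, wrong arguments
    return reward


# Usage with a made-up tool, `get_weather`.
gold = [{"name": "get_weather", "arguments": {"city": "Paris", "unit": "C"}}]
good = ('<think>User wants the weather.</think>'
        '<tool_call>[{"name": "get_weather", "arguments": {"unit": "C", "city": "Paris"}}]</tool_call>')
wrong_args = ('<think>User wants the weather.</think>'
              '<tool_call>[{"name": "get_weather", "arguments": {"city": "Rome"}}]</tool_call>')
no_think = '<tool_call>[{"name": "get_weather", "arguments": {"city": "Paris", "unit": "C"}}]</tool_call>'

print(binary_reward(good, gold), fine_grained_reward(good, gold))              # 1.0 1.0
print(binary_reward(wrong_args, gold), fine_grained_reward(wrong_args, gold))  # 0.0 0.4
print(binary_reward(no_think, gold), fine_grained_reward(no_think, gold))      # 0.0 0.0
```

Setting `name_credit=0` leaves only the format partial reward, which loosely mirrors the first column; removing the `<think>` group from the hypothetical pattern loosely mirrors the "w/o Reason Format" column. The binary variant gives no credit for near misses, so a model cannot collect reward for well-formatted but wrong calls.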

| Split | Raw | xLAM-8B | ToolACE-8B | xLAM w/o ToolACE | ToolACE w/o xLAM | ToolACE w/ xLAM |
|---|---|---|---|---|---|---|
| Non-Live | 73.94 | 84.40 | 87.54 | 87.77 | 87.67 | 89.25 |
| Live | 61.14 | 66.90 | 78.59 | 76.24 | 79.58 | 80.38 |
| Avg | 67.54 | 75.65 | 82.57 | 82.01 | 83.63 | 84.82 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 1996 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).