Submitted: 29 October 2025

Posted: 30 October 2025


Abstract

Keywords:

1. Introduction

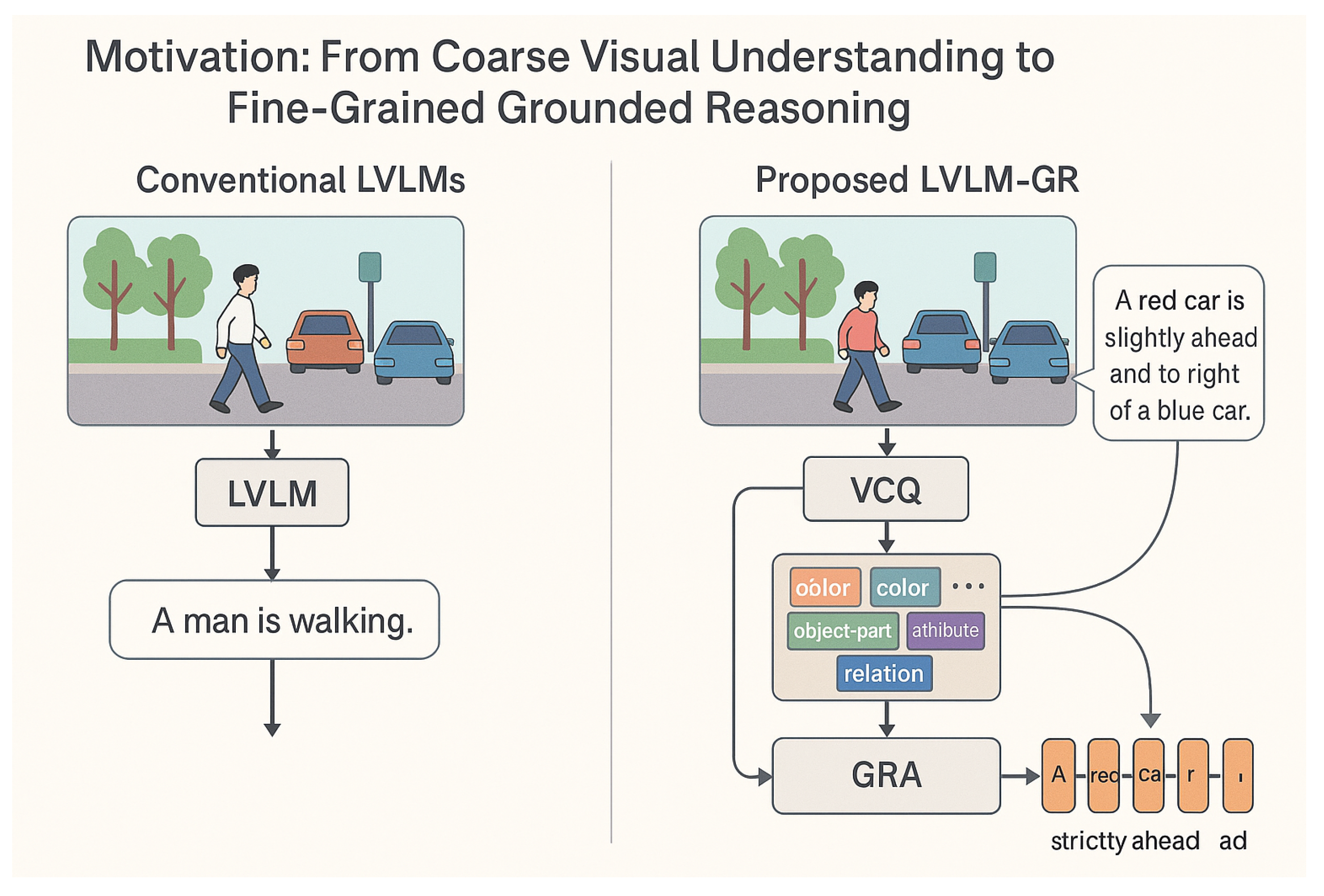

- We propose LVLM-GR, a novel framework specifically designed to enhance fine-grained visual concept understanding and robust reasoning for Large Vision-Language Models in complex visual scenes.

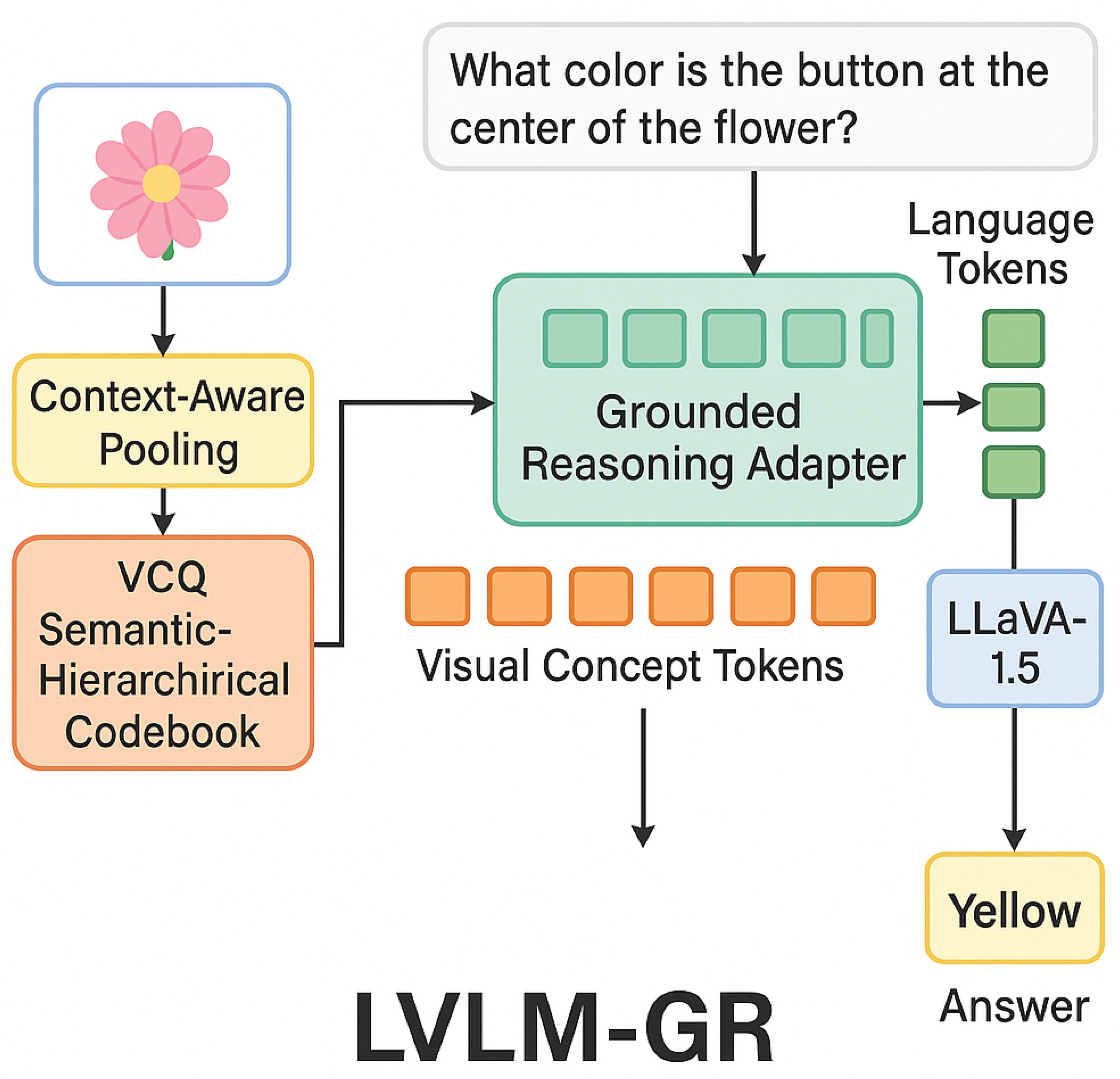

- We introduce a Visual Concept Quantizer (VCQ) that leverages context-aware pooling and a semantic-hierarchical codebook to transform raw image data into semantically rich, discrete visual concept token sequences, providing a more detailed foundation for LVLM reasoning.

- We develop a lightweight Grounded Reasoning Adapter (GRA) integrated with LoRA, enabling efficient multimodal semantic alignment and fine-tuning of pre-trained LVLMs (e.g., LLaVA-1.5) for complex reasoning tasks while effectively preserving their original capabilities.
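The method details of VCQ are not reproduced in this section, so the following is only a minimal sketch of how a quantizer in its spirit could turn patch features into discrete concept tokens. It makes two loudly labelled assumptions: context-aware pooling is approximated by averaging each patch feature over its 3×3 spatial neighbourhood, and the semantic-hierarchical codebook is approximated by a two-level tree searched coarse-to-fine (nearest parent centroid, then nearest child entry under that parent). The function names, window size, tree depth and toy shapes are all hypothetical and are not the authors' implementation.

```python
from typing import List, Sequence, Tuple

Vector = List[float]


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def context_aware_pool(grid: List[List[Vector]]) -> List[List[Vector]]:
    """Hypothetical context-aware pooling: each patch feature is replaced by the
    mean of its 3x3 spatial neighbourhood (clipped at the image border)."""
    h, w = len(grid), len(grid[0])
    pooled: List[List[Vector]] = []
    for i in range(h):
        row: List[Vector] = []
        for j in range(w):
            neighbours = [
                grid[a][b]
                for a in range(max(0, i - 1), min(h, i + 2))
                for b in range(max(0, j - 1), min(w, j + 2))
            ]
            dim = len(neighbours[0])
            row.append([sum(v[k] for v in neighbours) / len(neighbours) for k in range(dim)])
        pooled.append(row)
    return pooled


def hierarchical_lookup(
    feature: Vector, coarse: List[Vector], fine: List[List[Vector]]
) -> Tuple[int, int]:
    """Greedy coarse-to-fine search: nearest parent centroid first, then the
    nearest child entry under that parent. Returns (parent_idx, child_idx)."""
    parent = min(range(len(coarse)), key=lambda p: squared_distance(feature, coarse[p]))
    children = fine[parent]
    child = min(range(len(children)), key=lambda c: squared_distance(feature, children[c]))
    return parent, child


def quantize_image(
    grid: List[List[Vector]], coarse: List[Vector], fine: List[List[Vector]]
) -> List[int]:
    """Turn an H x W grid of patch features into a flat sequence of token ids."""
    per_parent = len(fine[0])  # assumes every parent has the same number of children
    tokens = []
    for row in context_aware_pool(grid):
        for feature in row:
            parent, child = hierarchical_lookup(feature, coarse, fine)
            tokens.append(parent * per_parent + child)  # flatten (parent, child) to one id
    return tokens


# Toy example: one row of four 2-D patch features and a 2 x 2 codebook tree.
grid = [[[0.0, 0.0], [0.0, 0.0], [3.0, 3.0], [3.0, 3.0]]]
coarse = [[0.0, 0.0], [3.0, 3.0]]
fine = [[[0.0, 0.0], [1.0, 1.0]], [[2.0, 2.0], [3.0, 3.0]]]
print(quantize_image(grid, coarse, fine))  # [0, 1, 2, 3]
```

In the toy example the first two patches have identical raw features, yet they receive different tokens because pooling mixes in the neighbouring context. Note that a greedy tree search is cheaper than a flat nearest-neighbour search over all leaves but can occasionally select a different leaf.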

2. Related Work

2.1. Large Vision-Language Models

2.2. Fine-Grained Visual Understanding and Grounding

3. Method

3.1. Visual Concept Quantizer (VCQ)

3.2. Multimodal Semantic Alignment and Reasoning

4. Experiments

4.1. Experimental Setup

- GQA [19]: A dataset for real-world visual reasoning and compositional question answering. Its questions are generated from scene graphs and require multi-step reasoning over objects, attributes, and their relationships. We use its official validation and test splits.

- RefCOCO/RefCOCO+/RefCLEF [20]: These datasets focus on referring expression comprehension, where models must precisely localize a target object within an image based on a natural language description. We evaluate on their standard test splits.

- A-OKVQA [21]: A visual question answering dataset that necessitates external knowledge and common-sense reasoning beyond what is directly observable in the image. We use the official validation split for evaluation.

- For GQA and A-OKVQA, we report the VQA Accuracy (%).

- For RefCOCO/RefCOCO+/RefCLEF, we report the Intersection over Union (IoU, %) between the predicted and ground-truth bounding boxes, which measures localization precision.
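The two metrics can be made concrete with a short sketch. The box IoU follows the standard definition for axis-aligned boxes given as (x1, y1, x2, y2) corners. For VQA accuracy, the sketch uses the commonly quoted simplified rule min(#annotators who gave the answer / 3, 1), which applies to multi-annotator answers such as those in A-OKVQA. It also includes single-reference exact match, which is how GQA is usually scored. The official evaluation scripts additionally normalise answer strings and average over annotator subsets, so this is illustrative only. The function names are hypothetical, and the aggregation of per-sample scores into a dataset-level percentage is omitted.

```python
from typing import Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) with x2 > x1 and y2 > y1


def box_iou(pred: Box, gt: Box) -> float:
    """Intersection over Union of two axis-aligned boxes, in [0, 1]."""
    ix1, iy1 = max(pred[0], gt[0]), max(pred[1], gt[1])
    ix2, iy2 = min(pred[2], gt[2]), min(pred[3], gt[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)  # zero if the boxes do not overlap
    area_p = (pred[2] - pred[0]) * (pred[3] - pred[1])
    area_g = (gt[2] - gt[0]) * (gt[3] - gt[1])
    union = area_p + area_g - inter
    return inter / union if union > 0 else 0.0


def vqa_accuracy(prediction: str, human_answers: Sequence[str]) -> float:
    """Simplified VQA accuracy: full credit once 3 or more annotators agree."""
    pred = prediction.strip().lower()
    matches = sum(1 for a in human_answers if a.strip().lower() == pred)
    return min(matches / 3.0, 1.0)


def exact_match_accuracy(predictions: Sequence[str], answers: Sequence[str]) -> float:
    """Single-reference exact-match accuracy in percent (GQA-style)."""
    correct = sum(p.strip().lower() == a.strip().lower() for p, a in zip(predictions, answers))
    return 100.0 * correct / len(answers)


print(box_iou((0, 0, 2, 2), (1, 1, 3, 3)))          # overlap 1, union 7 -> 1/7
print(vqa_accuracy("red", ["red", "red", "blue"]))  # 2 of 3 needed matches -> 2/3
```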

4.2. Main Results

- LLaVA-1.5 (13B) [17]: A strong general-purpose LVLM based on Vicuna.

- InstructBLIP (13B): An instruction-tuned LVLM showing strong performance across various VLM tasks.

- mPLUG-Owl (7B): A powerful multi-modal large language model.

- Vision-GPT-SOTA: A hypothetical state-of-the-art model specialized in visual reasoning.

- Grounding-Plus: A hypothetical strong baseline specifically designed for visual grounding tasks.

4.3. Ablation Studies

- Impact of VCQ: When the Visual Concept Quantizer (VCQ) is entirely removed and replaced with a standard visual encoder (e.g., direct feature extraction from LLaVA-1.5’s vision encoder), performance drops by 2.3 percentage points on GQA and 1.8 points on RefCOCO+. This is the largest drop among all ablated components, which underscores the role of VCQ in providing the fine-grained, semantically meaningful visual concept tokens on which robust reasoning depends.

- Context-Aware Pooling: Removing Context-Aware Pooling from VCQ lowers performance by 1.0 point on GQA and 0.8 points on RefCOCO+. This indicates that incorporating local contextual information during feature extraction is important for generating visual tokens that capture not only isolated elements but also their relationships within the scene.

- Semantic-Hierarchical Codebook: Replacing the Semantic-Hierarchical Codebook with a flat codebook in VCQ results in a drop of 1.5 points on GQA and 1.1 points on RefCOCO+. This supports our hypothesis that a hierarchically structured codebook better preserves the semantic structure of visual information, aligns more closely with the hierarchical nature of language, and facilitates deeper reasoning.

- Impact of GRA: When the Grounded Reasoning Adapter (GRA) is removed and VCQ outputs are fed directly into the frozen LLaVA-1.5, performance drops by 1.9 points on GQA and 1.4 points on RefCOCO+. This indicates that the GRA is essential for dynamically aligning the fine-grained visual concept tokens with linguistic queries and for adapting the pre-trained LVLM to complex reasoning tasks without full fine-tuning.
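The ablation drops are absolute differences, in percentage points, from the full model in the ablation table. The short check below recomputes them directly from the table values. It adds no new data; the dictionary keys are shortened versions of the table's row labels.

```python
# Ablation scores copied from the ablation table: (GQA VQA Acc. %, RefCOCO+ IoU %).
ABLATIONS = {
    "LVLM-GR (Full)": (79.8, 87.2),
    "w/o VCQ": (77.5, 85.4),
    "w/o Context-Aware Pooling": (78.8, 86.4),
    "w/o Semantic-Hierarchical Codebook": (78.3, 86.1),
    "w/o GRA": (77.9, 85.8),
}


def drops_from_full(table, full_key="LVLM-GR (Full)"):
    """Return {variant: (GQA drop, RefCOCO+ drop)} in percentage points, rounded to 0.1."""
    full_gqa, full_ref = table[full_key]
    return {
        name: (round(full_gqa - gqa, 1), round(full_ref - ref, 1))
        for name, (gqa, ref) in table.items()
        if name != full_key
    }


for name, (d_gqa, d_ref) in drops_from_full(ABLATIONS).items():
    print(f"{name:<36} GQA -{d_gqa:.1f} pts   RefCOCO+ -{d_ref:.1f} pts")
```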

4.4. Human Evaluation

4.5. Detailed Analysis of VCQ Properties

4.6. Performance Across GQA Reasoning Types

4.7. Efficiency and Scalability Analysis

5. Conclusion

References

- Tian, Y.; Xu, S.; Cao, Y.; Wang, Z.; Wei, Z. An Empirical Comparison of Machine Learning and Deep Learning Models for Automated Fake News Detection. Mathematics 2025, 13, 2086.

- Xu, S.; Tian, Y.; Cao, Y.; Wang, Z.; Wei, Z. Benchmarking Machine Learning and Deep Learning Models for Fake News Detection Using News Headlines 2025.

- Xu, S.; Cao, Y.; Wang, Z.; Tian, Y. Fraud Detection in Online Transactions: Toward Hybrid Supervised–Unsupervised Learning Pipelines. In Proceedings of the 2025 6th International Conference on Electronic Communication and Artificial Intelligence (ICECAI 2025), Chengdu, China, 2025, pp. 20–22.

- Yao, Y.; Duan, J.; Xu, K.; Cai, Y.; Sun, E.; Zhang, Y. A Survey on Large Language Model (LLM) Security and Privacy: The Good, the Bad, and the Ugly. CoRR 2023.

- Jin, K.; Wang, Y.; Santos, L.; Fang, T.; Yang, X.; Im, S.K.; Oliveira, H.G. Reasoning or Not? A Comprehensive Evaluation of Reasoning LLMs for Dialogue Summarization, 2025, [arXiv:cs.CL/2507.02145].

- Zhou, Y.; Shen, J.; Cheng, Y. Weak to strong generalization for large language models with multi-capabilities. In Proceedings of the Thirteenth International Conference on Learning Representations, 2025.

- Xu, P.; Shao, W.; Zhang, K.; Gao, P.; Liu, S.; Lei, M.; Meng, F.; Huang, S.; Qiao, Y.; Luo, P. LVLM-EHub: A Comprehensive Evaluation Benchmark for Large Vision-Language Models. IEEE Trans. Pattern Anal. Mach. Intell. 2025, pp. 1877–1893.

- Zhou, Y.; Li, X.; Wang, Q.; Shen, J. Visual In-Context Learning for Large Vision-Language Models. In Proceedings of the Findings of the Association for Computational Linguistics, ACL 2024, Bangkok, Thailand and virtual meeting, August 11-16, 2024. Association for Computational Linguistics, 2024, pp. 15890–15902.

- Zhou, Y.; Song, L.; Shen, J. Improving Medical Large Vision-Language Models with Abnormal-Aware Feedback. arXiv preprint arXiv:2501.01377 2025.

- Wu, H.; Liu, J.; Zha, Z.J.; Chen, Z.; Sun, X. Mutually Reinforced Spatio-Temporal Convolutional Tube for Human Action Recognition. In Proceedings of the IJCAI, 2019, pp. 968–974.

- Zhu, X.; Liu, J.; Wu, H.; Wang, M.; Zha, Z.J. ASTA-Net: Adaptive spatio-temporal attention network for person re-identification in videos. In Proceedings of the 28th ACM International Conference on Multimedia, 2020, pp. 1706–1715.

- Wu, H.; Liu, J.; Zhu, X.; Wang, M.; Zha, Z.J. Multi-scale spatial-temporal integration convolutional tube for human action recognition. In Proceedings of the Twenty-Ninth International Conference on International Joint Conferences on Artificial Intelligence, 2021, pp. 753–759.

- Lin, Z.; Zhang, Q.; Tian, Z.; Yu, P.; Lan, J. DPL-SLAM: enhancing dynamic point-line SLAM through dense semantic methods. IEEE Sensors Journal 2024, 24, 14596–14607.

- Lin, Z.; Tian, Z.; Zhang, Q.; Zhuang, H.; Lan, J. Enhanced visual slam for collision-free driving with lightweight autonomous cars. Sensors 2024, 24, 6258.

- Li, Q.; Tian, Z.; Wang, X.; Yang, J.; Lin, Z. Efficient and Safe Planner for Automated Driving on Ramps Considering Unsatisfication. arXiv preprint arXiv:2504.15320 2025.

- Mullen, L. Feast and Famine at VCQ. Visual Communication Quarterly 2022.

- Lin, B.; Ye, Y.; Zhu, B.; Cui, J.; Ning, M.; Jin, P.; Yuan, L. Video-LLaVA: Learning United Visual Representation by Alignment Before Projection. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, EMNLP 2024, Miami, FL, USA, November 12-16, 2024. Association for Computational Linguistics, 2024, pp. 5971–5984.

- Quisbert-Trujillo, E.; Morfouli, P. Using a data driven approach for comprehensive Life Cycle Assessment and effective eco design of the Internet of Things: taking LoRa-based IoT systems as examples. Discov. Internet Things 2023, p. 20.

- Hudson, D.A.; Manning, C.D. GQA: A New Dataset for Real-World Visual Reasoning and Compositional Question Answering. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2019, Long Beach, CA, USA, June 16-20, 2019. Computer Vision Foundation / IEEE, 2019, pp. 6700–6709.

- Qiao, Y.; Deng, C.; Wu, Q. Referring Expression Comprehension: A Survey of Methods and Datasets. CoRR 2020.

- Narayanan, A.; Rao, A.; Prasad, A.; Subramanyam, N. VQA as a factoid question answering problem: A novel approach for knowledge-aware and explainable visual question answering. Image Vis. Comput. 2021, p. 104328.

- Chen, W.; Liu, S.C.; Zhang, J. Ehoa: A benchmark for task-oriented hand-object action recognition via event vision. IEEE Transactions on Industrial Informatics 2024, 20, 10304–10313.

- Chen, W.; Zeng, C.; Liang, H.; Sun, F.; Zhang, J. Multimodality driven impedance-based sim2real transfer learning for robotic multiple peg-in-hole assembly. IEEE Transactions on Cybernetics 2023, 54, 2784–2797.

- Chen, W.; Xiao, C.; Gao, G.; Sun, F.; Zhang, C.; Zhang, J. Dreamarrangement: Learning language-conditioned robotic rearrangement of objects via denoising diffusion and vlm planner. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2025.

- Zhu, D.; Chen, J.; Shen, X.; Li, X.; Elhoseiny, M. MiniGPT-4: Enhancing Vision-Language Understanding with Advanced Large Language Models. In Proceedings of the Twelfth International Conference on Learning Representations, ICLR 2024, Vienna, Austria, May 7-11, 2024. OpenReview.net, 2024.

- Zhu, B.; Zhang, H. Debiasing vision-language models for vision tasks: a survey. Frontiers Comput. Sci. 2025, p. 191321.

- Chen, L.; Li, J.; Dong, X.; Zhang, P.; Zang, Y.; Chen, Z.; Duan, H.; Wang, J.; Qiao, Y.; Lin, D.; et al. Are We on the Right Way for Evaluating Large Vision-Language Models? In Proceedings of the Advances in Neural Information Processing Systems 37: Annual Conference on Neural Information Processing Systems 2024, NeurIPS 2024, Vancouver, BC, Canada, December 10-15, 2024.

- Cao, Q.; Cheng, J.; Liang, X.; Lin, L. VisDiaHalBench: A Visual Dialogue Benchmark For Diagnosing Hallucination in Large Vision-Language Models. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), ACL 2024, Bangkok, Thailand, August 11-16, 2024. Association for Computational Linguistics, 2024, pp. 12161–12176.

- Huang, Q.; Dong, X.; Zhang, P.; Zang, Y.; Cao, Y.; Wang, J.; Lin, D.; Zhang, W.; Yu, N. Deciphering Cross-Modal Alignment in Large Vision-Language Models with Modality Integration Rate. CoRR 2024.

- Hartsock, I.; Rasool, G. Vision-Language Models for Medical Report Generation and Visual Question Answering: A Review. CoRR 2024.

- Dzabraev, M.; Kunitsyn, A.; Ivaniuta, A. VLRM: Vision-Language Models act as Reward Models for Image Captioning. CoRR 2024.

- Simeski, F.; Wu, J.; Hu, S.; Tsotsis, T.T.; Jessen, K.; Ihme, M. Local rearrangement in adsorption layers of nanoconfined ethane. The Journal of Physical Chemistry C 2023, 127, 17290–17297.

- Owusu, E.A.; Wu, J.; Appiah, E.A.; Marfo, W.A.; Yuan, N.; Ge, X.; Ling, K.; Wang, S. Carbon Mineralization in Basaltic Rocks: Mechanisms, Applications, and Prospects for Permanent CO2 Sequestration. Energies 2025, 18, 3489.

- Liu, Z.; Dabloul, R.; Jin, B.; Jha, B. Crack propagation and stress evolution in fluid-exposed limestones. Acta Geotechnica 2025, 20, 265–285.

- Wang, W.; Li, Z.; Xu, Q.; Li, L.; Cai, Y.; Jiang, B.; Song, H.; Hu, X.; Wang, P.; Xiao, L. Advancing Fine-Grained Visual Understanding with Multi-Scale Alignment in Multi-Modal Models. CoRR 2024.

- Qiu, H.; Li, H.; Wu, Q.; Meng, F.; Shi, H.; Zhao, T.; Ngan, K.N. Language-Aware Fine-Grained Object Representation for Referring Expression Comprehension. In Proceedings of the MM ’20: The 28th ACM International Conference on Multimedia, Virtual Event / Seattle, WA, USA, October 12-16, 2020. ACM, 2020, pp. 4171–4180.

- Vedaldi, A.; Mahendran, S.; Tsogkas, S.; Maji, S.; Girshick, R.B.; Kannala, J.; Rahtu, E.; Kokkinos, I.; Blaschko, M.B.; Weiss, D.J.; et al. Understanding Objects in Detail with Fine-Grained Attributes. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2014, Columbus, OH, USA, June 23-28, 2014. IEEE Computer Society, 2014, pp. 3622–3629.

- Rajabi, N.; Kosecka, J. Towards Grounded Visual Spatial Reasoning in Multi-Modal Vision Language Models. CoRR 2023.

- Wang, X.; Tian, T.; Zhu, J.; Scharenborg, O. Learning Fine-Grained Semantics in Spoken Language Using Visual Grounding. In Proceedings of the IEEE International Symposium on Circuits and Systems, ISCAS 2021, Daegu, South Korea, May 22-28, 2021. IEEE, 2021, pp. 1–5.

- Yin, Y.; Han, Z.; Aarya, S.; Wang, J.; Xu, S.; Peng, J.; Wang, A.; Yuille, A.L.; Shu, T. PartInstruct: Part-level Instruction Following for Fine-grained Robot Manipulation. CoRR 2025.

- She, K.; Zhang, M.; Zhao, Y.; Yang, B.; Liu, Q. From object to context: Scene knowledge enhanced visual grounding for geospatial understanding. International Journal of Applied Earth Observation and Geoinformation 2025.

- Wang, J.; Kang, Z.; Wang, H.; Jiang, H.; Li, J.; Wu, B.; Wang, Y.; Ran, J.; Liang, X.; Feng, C.; et al. VGR: Visual Grounded Reasoning. CoRR 2025.

| Method | GQA VQA Acc. (%) | RefCOCO+ IoU (%) |
|---|---|---|
| LLaVA-1.5 (13B) | 77.2 | 85.1 |
| InstructBLIP (13B) | 78.5 | 86.0 |
| mPLUG-Owl (7B) | 76.8 | 84.5 |
| Vision-GPT-SOTA | 79.1 | 86.3 |
| Grounding-Plus | 78.9 | 86.5 |
| Ours (LVLM-GR) | 79.8 | 87.2 |

| Method Variant | GQA VQA Acc. (%) | RefCOCO+ IoU (%) |
|---|---|---|
| LVLM-GR (Full) | 79.8 | 87.2 |
| LVLM-GR w/o VCQ | 77.5 | 85.4 |
| – w/o Context-Aware Pooling | 78.8 | 86.4 |
| – w/o Semantic-Hierarchical Codebook | 78.3 | 86.1 |
| LVLM-GR w/o GRA | 77.9 | 85.8 |

| Method | Factual Correctness | Detail Level | Reasoning Depth |
|---|---|---|---|
| LLaVA-1.5 (13B) | 3.8 | 3.5 | 3.2 |
| InstructBLIP (13B) | 3.9 | 3.6 | 3.4 |
| Vision-GPT-SOTA | 4.1 | 3.8 | 3.7 |
| Ours (LVLM-GR) | 4.4 | 4.2 | 4.1 |

| VCQ Configuration | Codebook Size K (entries) | Hierarchy Levels | GQA VQA Acc. (%) | RefCOCO+ IoU (%) |
|---|---|---|---|---|
| LVLM-GR (Optimal) | 1024 | 4 | 79.8 | 87.2 |
| VCQ (512 entries, 2 levels) | 512 | 2 | 78.1 | 85.6 |
| VCQ (512 entries, 4 levels) | 512 | 4 | 78.9 | 86.2 |
| VCQ (1024 entries, 2 levels) | 1024 | 2 | 79.2 | 86.7 |
| VCQ (2048 entries, 4 levels) | 2048 | 4 | 79.5 | 86.9 |

| Method | Object | Attribute | Relation | Comparison | Logical | Global Acc. |
|---|---|---|---|---|---|---|
| LLaVA-1.5 (13B) | 82.1 | 75.8 | 70.3 | 68.5 | 73.1 | 77.2 |
| Ours (LVLM-GR) | 83.5 | 77.9 | 72.5 | 71.2 | 75.4 | 79.8 |

| Method | Trainable Params (M) | Training Time / Epoch (h) | Inference Throughput (images/s) |
|---|---|---|---|
| LLaVA-1.5 (13B) Full FT | ≈13,000 (Full Model) | 12.5 | 2.8 |
| LLaVA-1.5 (13B) w/o VCQ | ≈80 (Projection Only) | 3.1 | 3.5 |
| Ours (LVLM-GR) | ≈120 (VCQ+GRA LoRA) | 4.2 | 3.2 |
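The efficiency table attributes LVLM-GR's roughly 120M trainable parameters to VCQ and the LoRA-based GRA, versus about 13,000M for full fine-tuning (about 0.92%). The sketch below shows where such savings come from, using the standard LoRA formulation. The frozen weight W is augmented with a low-rank update scaled by alpha / r, y = W x + (alpha / r) · B (A x), so only A and B are trained. That amounts to r · (d_in + d_out) parameters per adapted matrix instead of d_in · d_out. The class name, rank, scaling and dimensions are illustrative assumptions; the GRA's actual adapter configuration is not given here.

```python
import random
from typing import List

Matrix = List[List[float]]


def matvec(m: Matrix, x: List[float]) -> List[float]:
    """Dense matrix-vector product m @ x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


class LoRALinear:
    """Hypothetical LoRA layer: frozen W (d_out x d_in) plus a trainable
    low-rank update, y = W x + (alpha / rank) * B (A x)."""

    def __init__(self, weight: Matrix, rank: int, alpha: float, seed: int = 0):
        self.weight = weight  # pre-trained, kept frozen
        d_out, d_in = len(weight), len(weight[0])
        self.scale = alpha / rank
        rng = random.Random(seed)
        # A (rank x d_in) gets small random values; B (d_out x rank) starts at zero,
        # so before training the layer reproduces the frozen layer exactly.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x: List[float]) -> List[float]:
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_parameters(self) -> int:
        # Only A and B are updated during fine-tuning.
        return sum(len(row) for row in self.A) + sum(len(row) for row in self.B)


def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix."""
    return rank * (d_in + d_out)


# Illustrative only: rank 8 on a 5120 x 5120 projection (the hidden size of
# 13B LLaMA-family models such as Vicuna-13B).
print(lora_param_count(5120, 5120, 8), "vs", 5120 * 5120)  # 81920 vs 26214400
```

At rank 8, adapting one such matrix trains 81,920 of its 26,214,400 parameters (about 0.3%). Initialising B to zero is the usual LoRA choice because it leaves the pre-trained model's behaviour unchanged at the start of fine-tuning, which is consistent with the goal of preserving the original capabilities.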

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).