Submitted: 01 December 2025

Posted: 04 December 2025

Abstract

Keywords:

1. Introduction

2. Background and Related Work

2.1. Retrieval Augmented Generation and Large Language Models

2.2. Enterprise Knowledge Management and Document Automation

2.2.1. Knowledge Management

2.2.2. Document Automation

2.3. A Conceptual Framework for Enterprise RAG and LLM

| Stage | Key RQ Alignment | Description |
|---|---|---|
| 1. Input | RQ1 (Platforms); RQ2 (Datasets) | Defines the data sources and infrastructure. |
| 2. Retrieval | RQ3 (ML Paradigms); RQ4 (Architectures) | Indexing strategy and retrieval mechanism (RAG variants, ML paradigms) used to fetch relevant context. |
| 3. Generation | RQ8 (Best Configs) | How the LLM synthesizes output, influenced by the backbone and prompting. |
| 4. Validation | RQ5 (Metrics); RQ6 (Validation) | Technical and factual quality checks (accuracy, latency, provenance) before rollout. |
| 5. Business Impact | RQ9 (Challenges); RQ5 (Biz Metrics) | Outcome measurement beyond technical metrics: operational and economic gains. |

2.4. Related Review and Mapping Studies

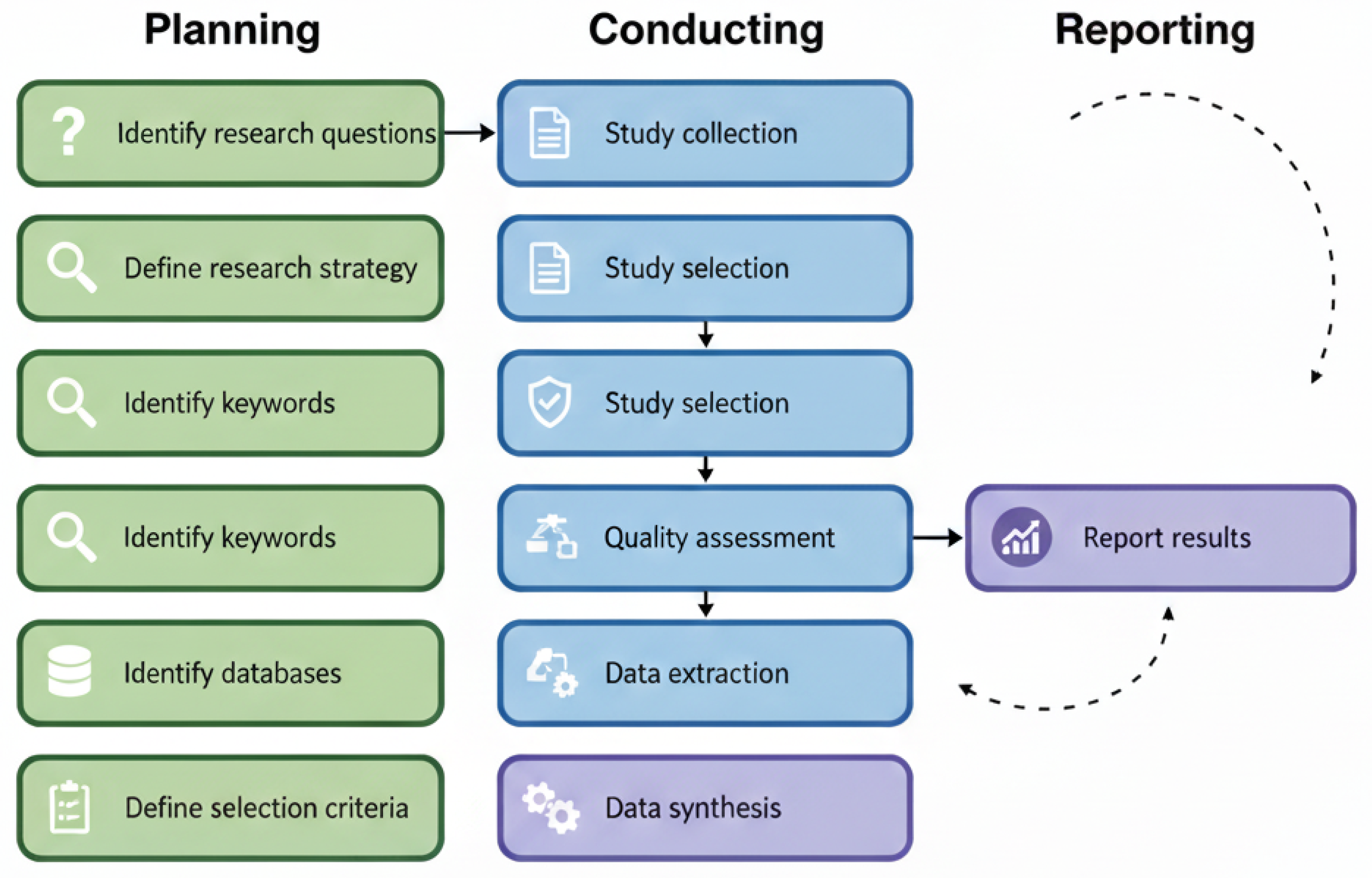

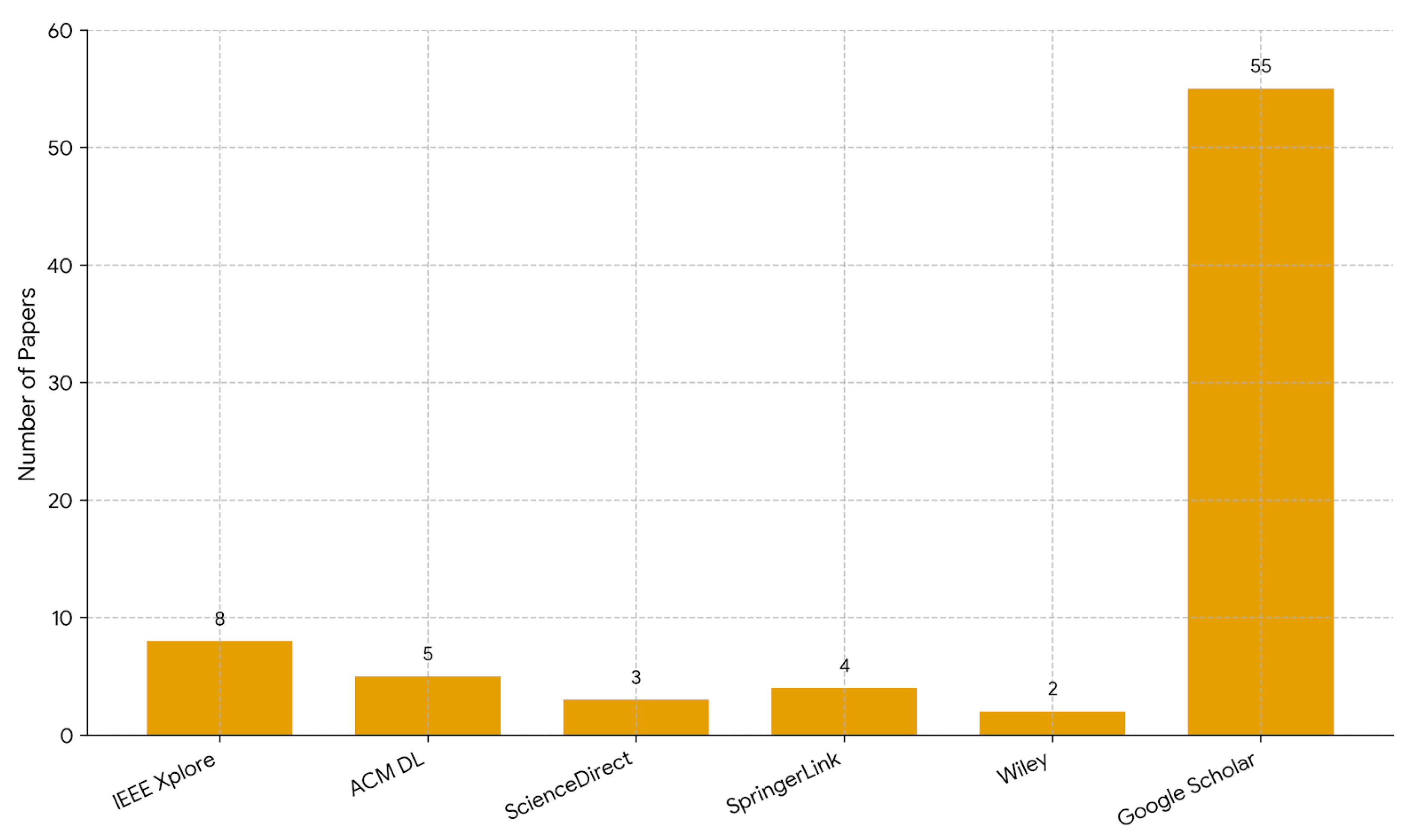

3. Research Methodology

- RQ1: Which platforms are addressed in enterprise RAG + LLM studies for knowledge management and document automation?

- RQ2: Which datasets are used in these RAG + LLM studies?

- RQ3: Which types of machine learning (supervised, unsupervised, etc.) are employed?

- RQ4: Which specific RAG architectures and LLM algorithms are applied?

- RQ5: Which evaluation metrics are used to assess model performance?

- RQ6: Which validation approaches (cross validation, hold out, case studies) are adopted?

- RQ7: What knowledge and software metrics are utilized?

- RQ8: Which RAG + LLM configurations achieve the best performance for enterprise applications?

- RQ9: What are the main practical challenges, limitations, and research gaps in applying RAG + LLMs in this domain?

(("Retrieval Augmented Generation" OR RAG) AND ("Large Language Model" OR LLM) AND ("Knowledge Management" OR "Document Automation" OR Enterprise))
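The boolean structure of the search string above (three AND-ed groups, each an OR over alternative terms) can be illustrated with a small screening sketch. The function names and the matching rule (case-insensitive, whole words, optional plural, hyphens accepted in place of spaces) are assumptions made for illustration; bibliographic databases apply their own tokenization and field restrictions, so this is not a reproduction of any database's behaviour.

```python
import re

# Term groups copied from the search string: groups are AND-ed,
# terms within a group are OR-ed.
TERM_GROUPS = [
    ["Retrieval Augmented Generation", "RAG"],
    ["Large Language Model", "LLM"],
    ["Knowledge Management", "Document Automation", "Enterprise"],
]


def contains_term(term: str, text: str) -> bool:
    """Assumed matching rule: case-insensitive, whole words, optional plural 's',
    and spaces inside a phrase may also appear as hyphens."""
    words = [re.escape(word) for word in term.split()]
    pattern = r"\b" + r"[\s-]+".join(words) + r"s?\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def matches_search_string(text: str) -> bool:
    """True when every AND group has at least one matching OR term."""
    return all(
        any(contains_term(term, text) for term in group)
        for group in TERM_GROUPS
    )


if __name__ == "__main__":
    sample = "A retrieval-augmented generation pipeline with LLMs for enterprise search"
    print(matches_search_string(sample))
```

A record passes only if all three groups are satisfied, so a title that mentions RAG and enterprise search but no LLM term would be rejected under this rule.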

- E1. The paper includes only an abstract (we required full text, peer reviewed articles).

- E2. The paper is not written in English.

- E3. The article is not a primary study.

- E4. The content does not provide any experimental or evaluation results.

- E5. The study does not describe how Retrieval Augmented Generation or LLM methods work.
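As a minimal sketch of how the exclusion criteria E1–E5 could be applied during screening, the snippet below records one boolean judgment per criterion and returns the codes that a candidate paper triggers. The `Candidate` class and its field names are hypothetical; in practice each judgment would be made manually by the reviewers.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    # Hypothetical screening fields, one per exclusion criterion.
    full_text_peer_reviewed: bool  # False triggers E1
    in_english: bool               # False triggers E2
    primary_study: bool            # False triggers E3
    reports_evaluation: bool       # False triggers E4
    describes_method: bool         # False triggers E5


def exclusion_codes(candidate: Candidate) -> List[str]:
    """Return the exclusion criteria triggered by a candidate (empty list = retained)."""
    checks = [
        ("E1", candidate.full_text_peer_reviewed),
        ("E2", candidate.in_english),
        ("E3", candidate.primary_study),
        ("E4", candidate.reports_evaluation),
        ("E5", candidate.describes_method),
    ]
    return [code for code, satisfied in checks if not satisfied]


if __name__ == "__main__":
    paper = Candidate(True, False, True, False, True)
    print(exclusion_codes(paper))
```

Returning the list of triggered codes, rather than a single pass/fail flag, keeps a record of why each paper was excluded.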

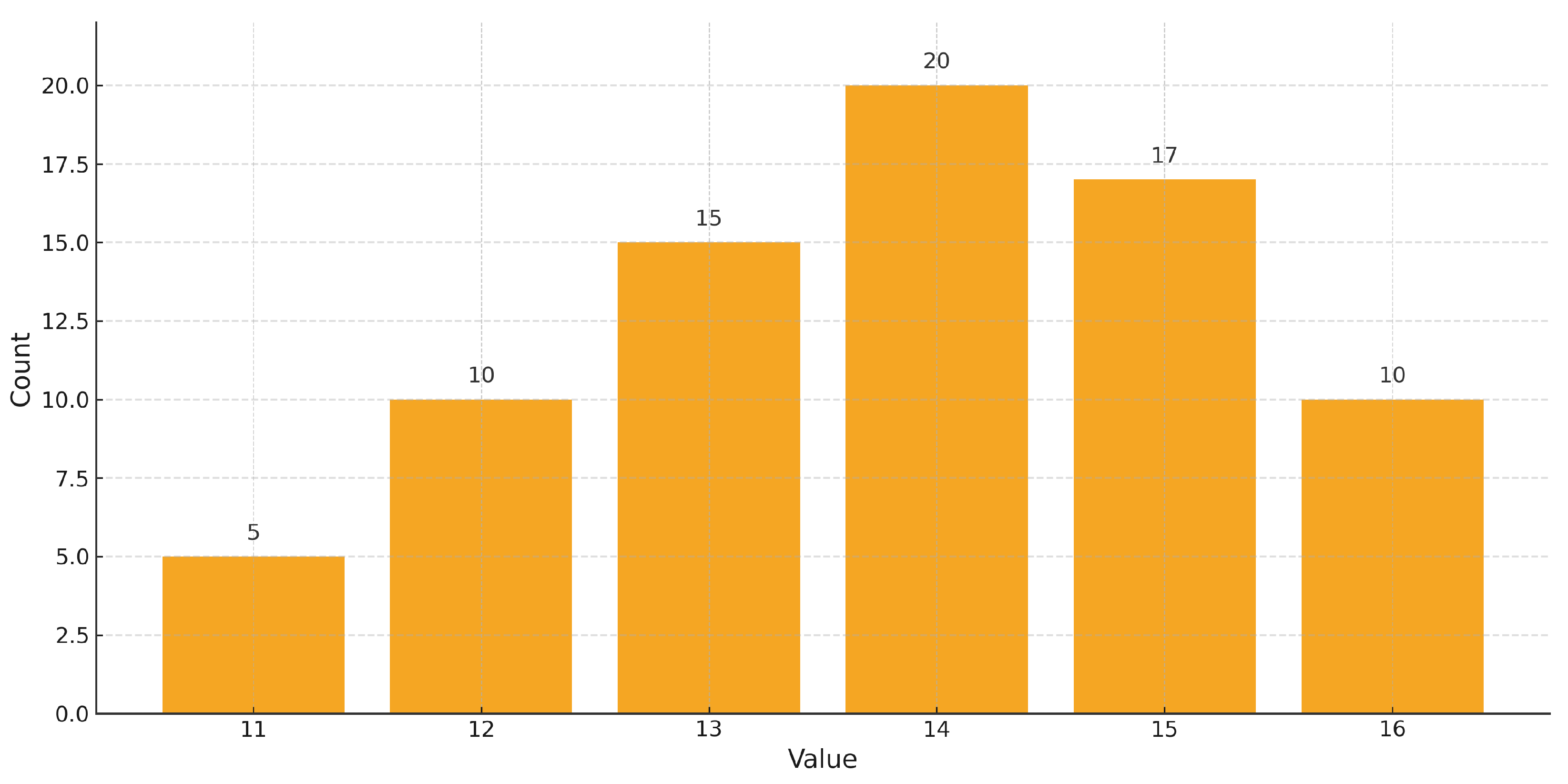

- Q1. Are the aims of the study declared?

- Q2. Are the scope and context of the study clearly defined?

- Q3. Is the proposed solution (RAG + LLM method) clearly explained and validated by an empirical evaluation?

- Q4. Are the variables (datasets, metrics, parameters) used in the study likely valid and reliable?

- Q5. Is the research process (data collection, model building, analysis) documented adequately?

- Q6. Does the study answer all research questions (RQ1–RQ9)?

- Q7. Are negative or null findings (limitations, failures) transparently reported?

- Q8. Are the main findings stated clearly in terms of credibility, validity, and reliability?
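One way to turn the quality checklist Q1–Q8 into a per-study score is sketched below. The scoring scale (yes = 1, partial = 0.5, no = 0) is an assumption for illustration, since the scale is not stated here, and the function name `quality_score` is hypothetical.

```python
# Assumed scoring scale; not taken from the text.
SCORES = {"yes": 1.0, "partial": 0.5, "no": 0.0}
QUESTIONS = [f"Q{i}" for i in range(1, 9)]  # Q1..Q8


def quality_score(answers: dict) -> float:
    """Sum the per-question scores for one study.

    `answers` maps each question id ("Q1".."Q8") to "yes", "partial", or "no".
    """
    missing = [q for q in QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"missing answers for: {', '.join(missing)}")
    return sum(SCORES[answers[q].lower()] for q in QUESTIONS)


if __name__ == "__main__":
    answers = {q: "yes" for q in QUESTIONS}
    answers["Q7"] = "partial"
    answers["Q8"] = "no"
    print(quality_score(answers))
```

Under this assumed scale the maximum score is 8; any inclusion threshold would need to be set by the review protocol.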

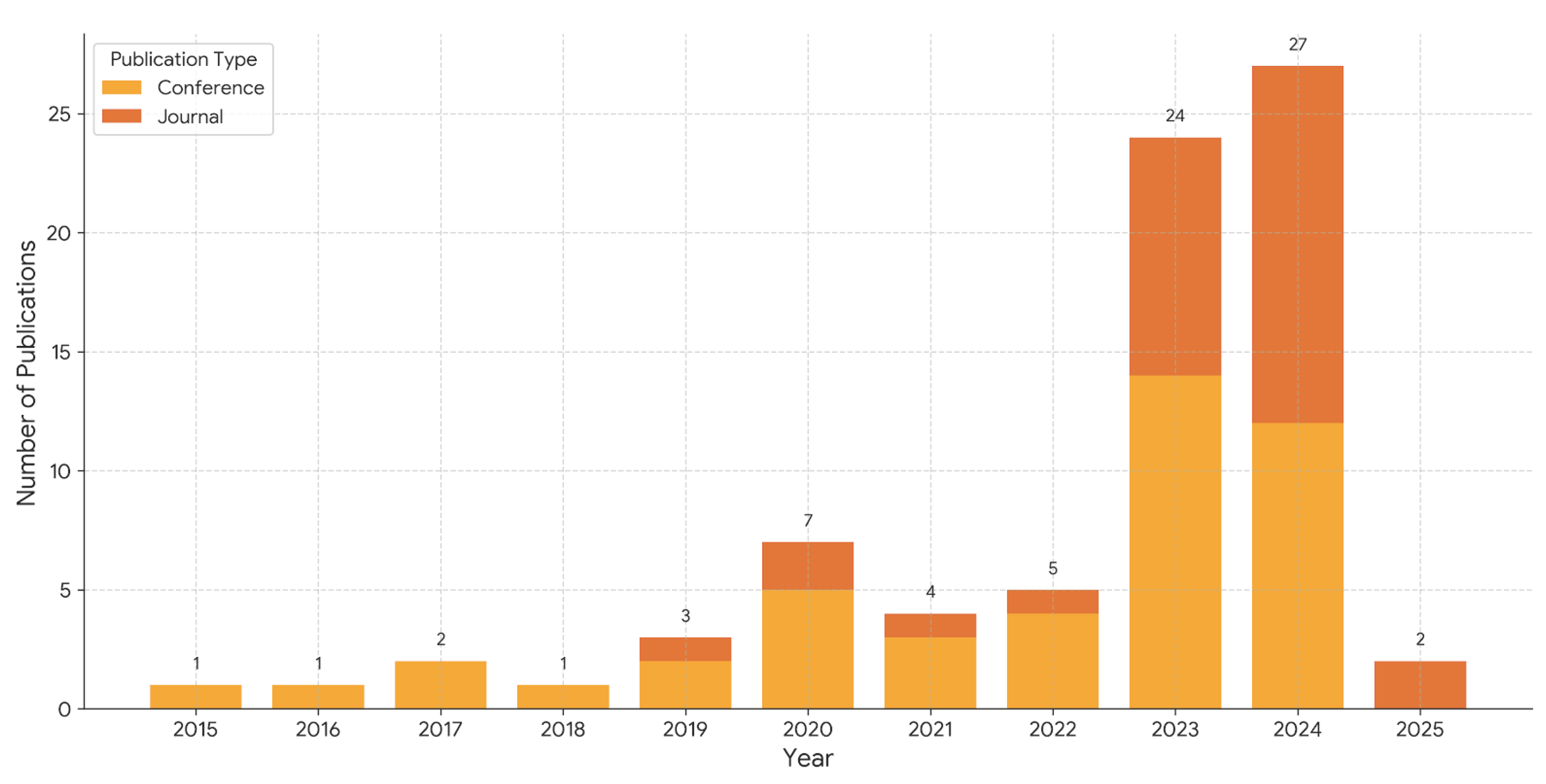

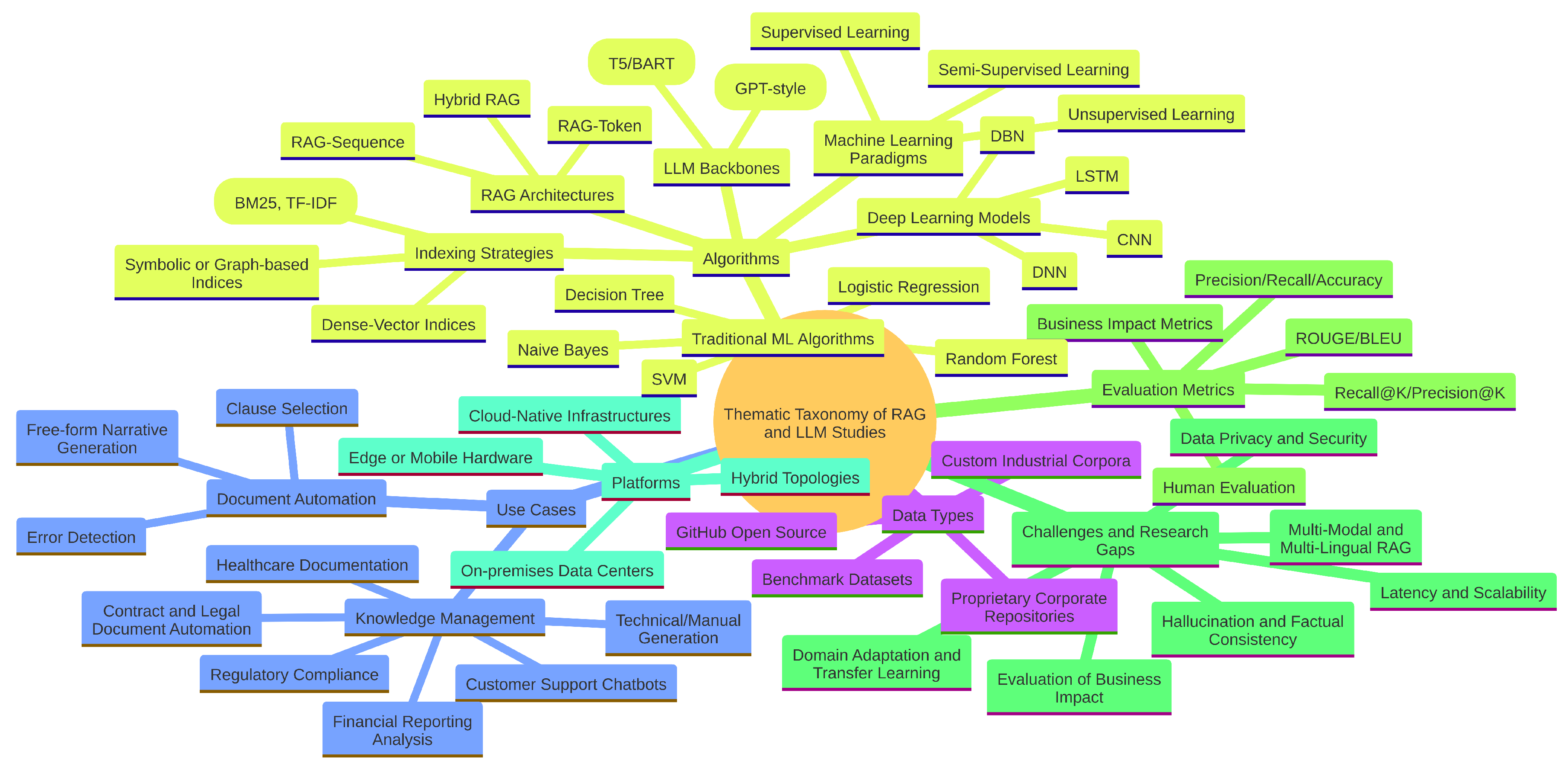

4. Results

4.1. RQ1: Platforms Addressed

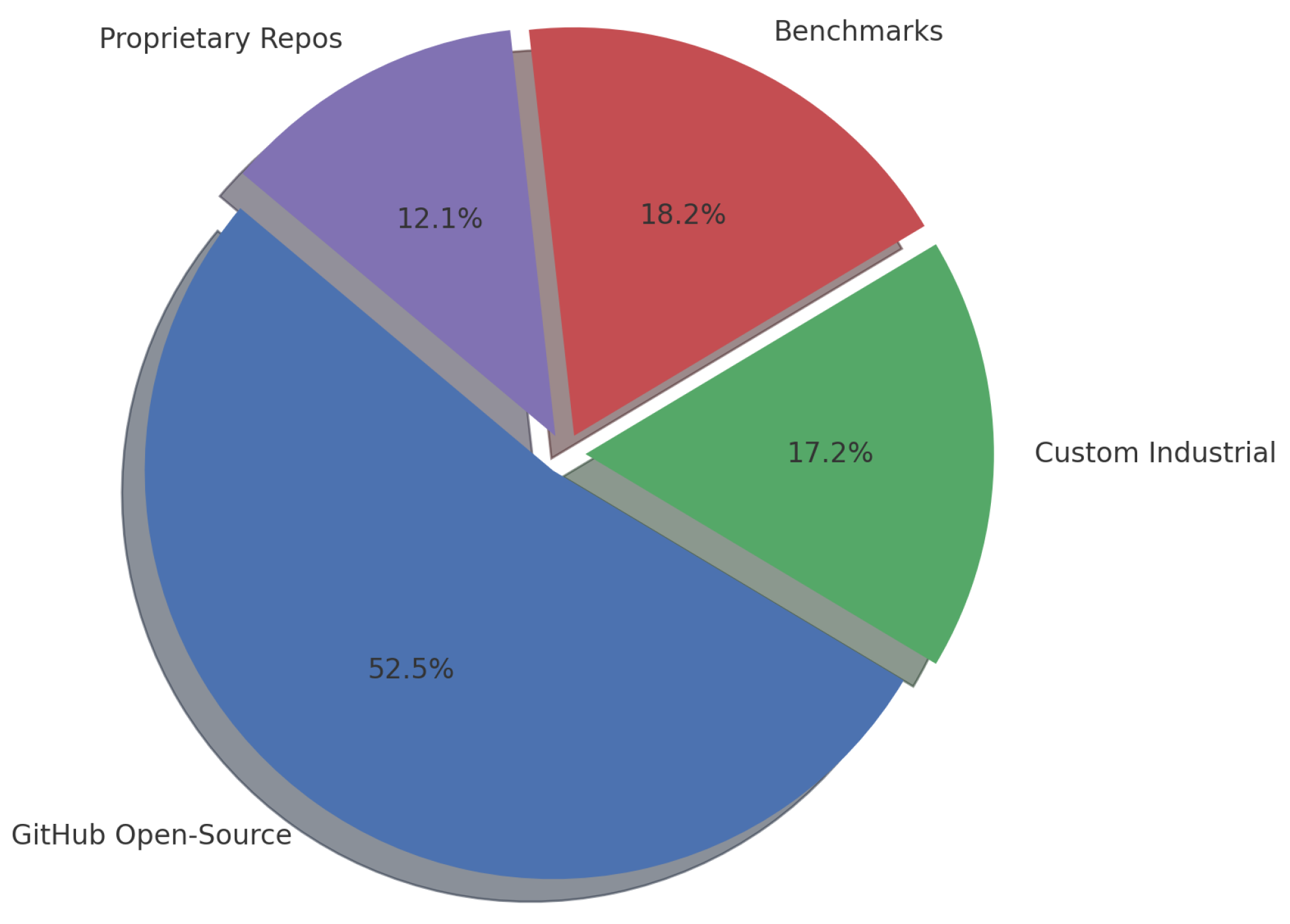

4.2. RQ2: Dataset Sources for Enterprise RAG + LLM Studies

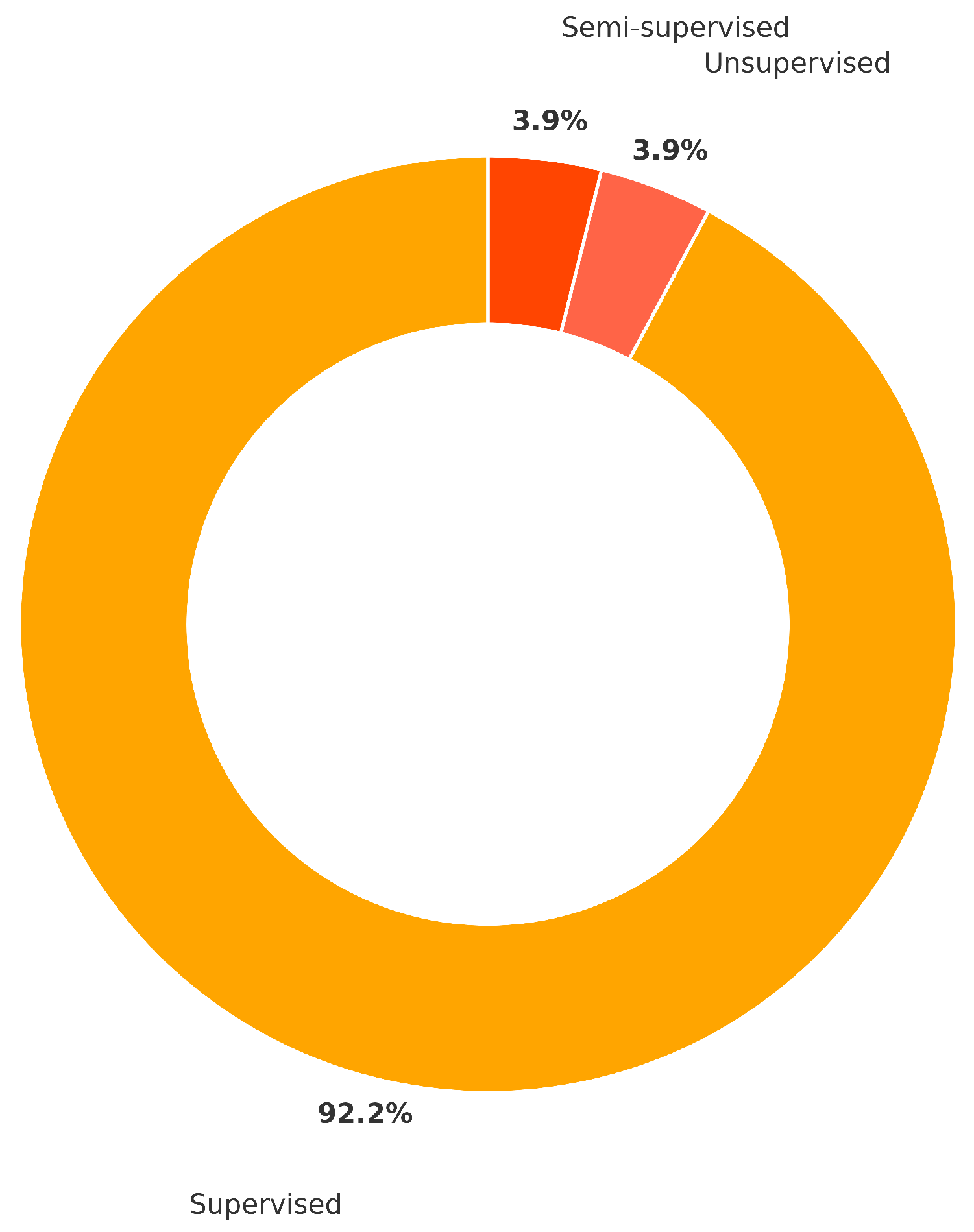

4.3. RQ3: Machine Learning Paradigms Employed

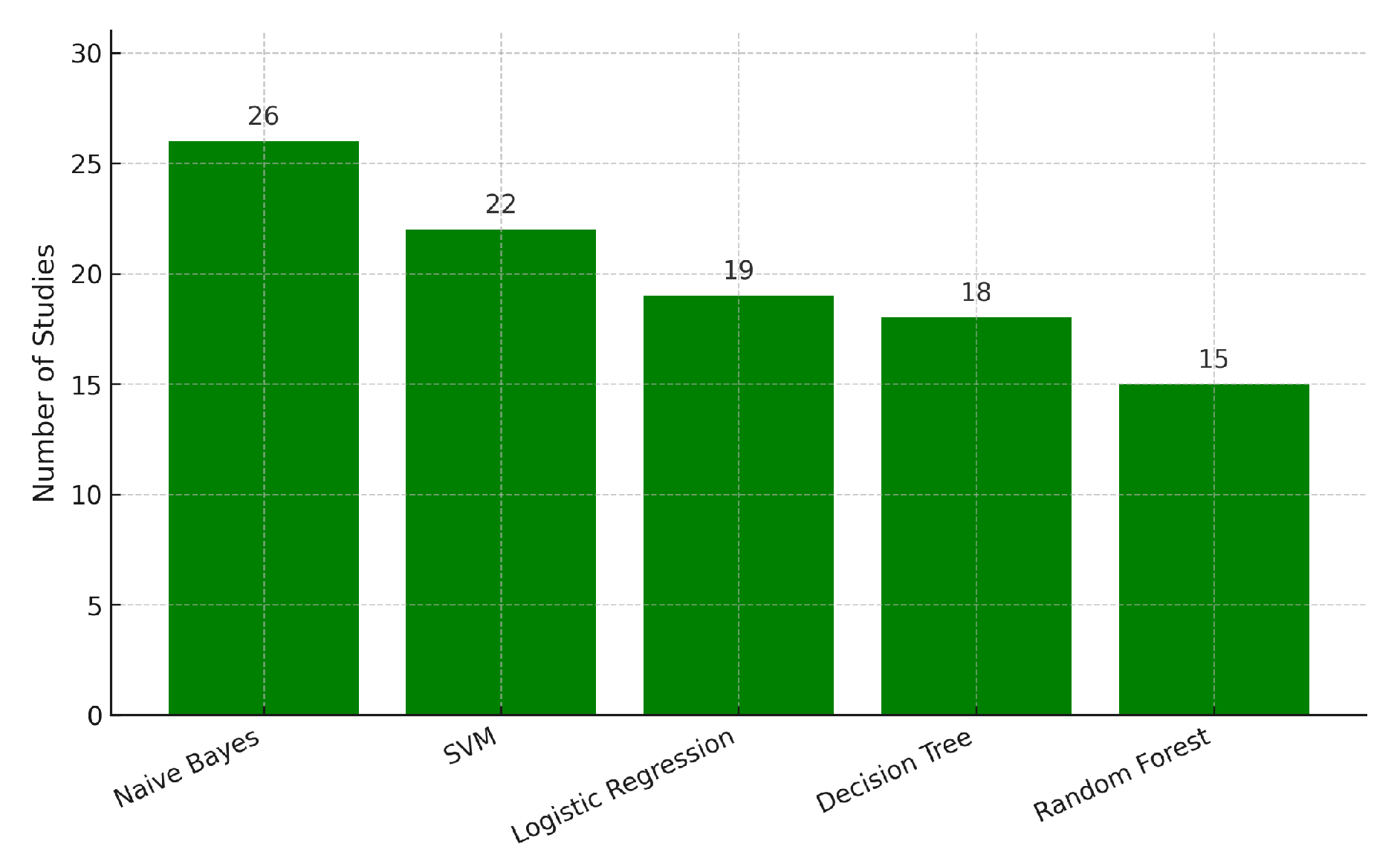

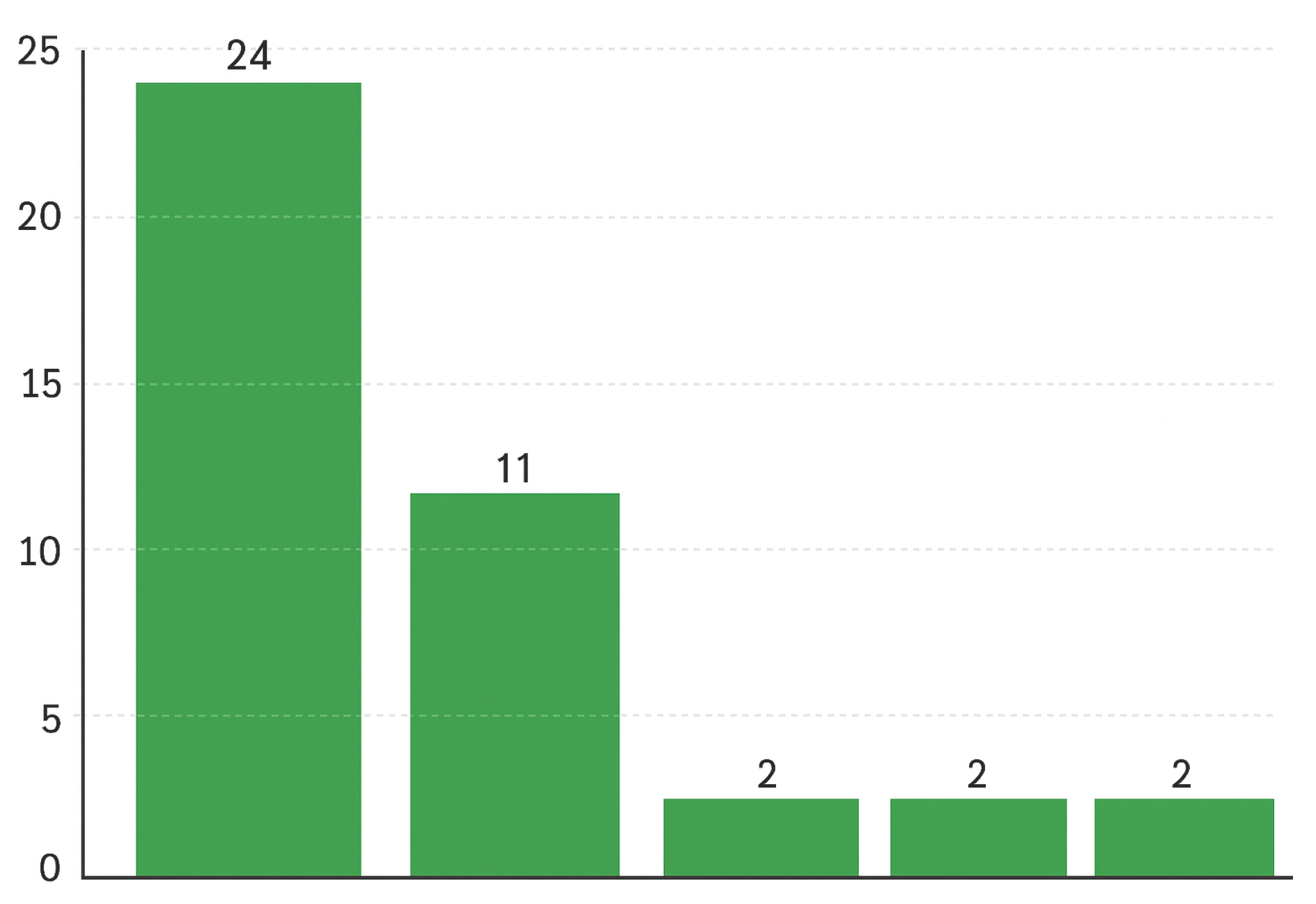

4.4. RQ4: Machine Learning and RAG Architectures Applied

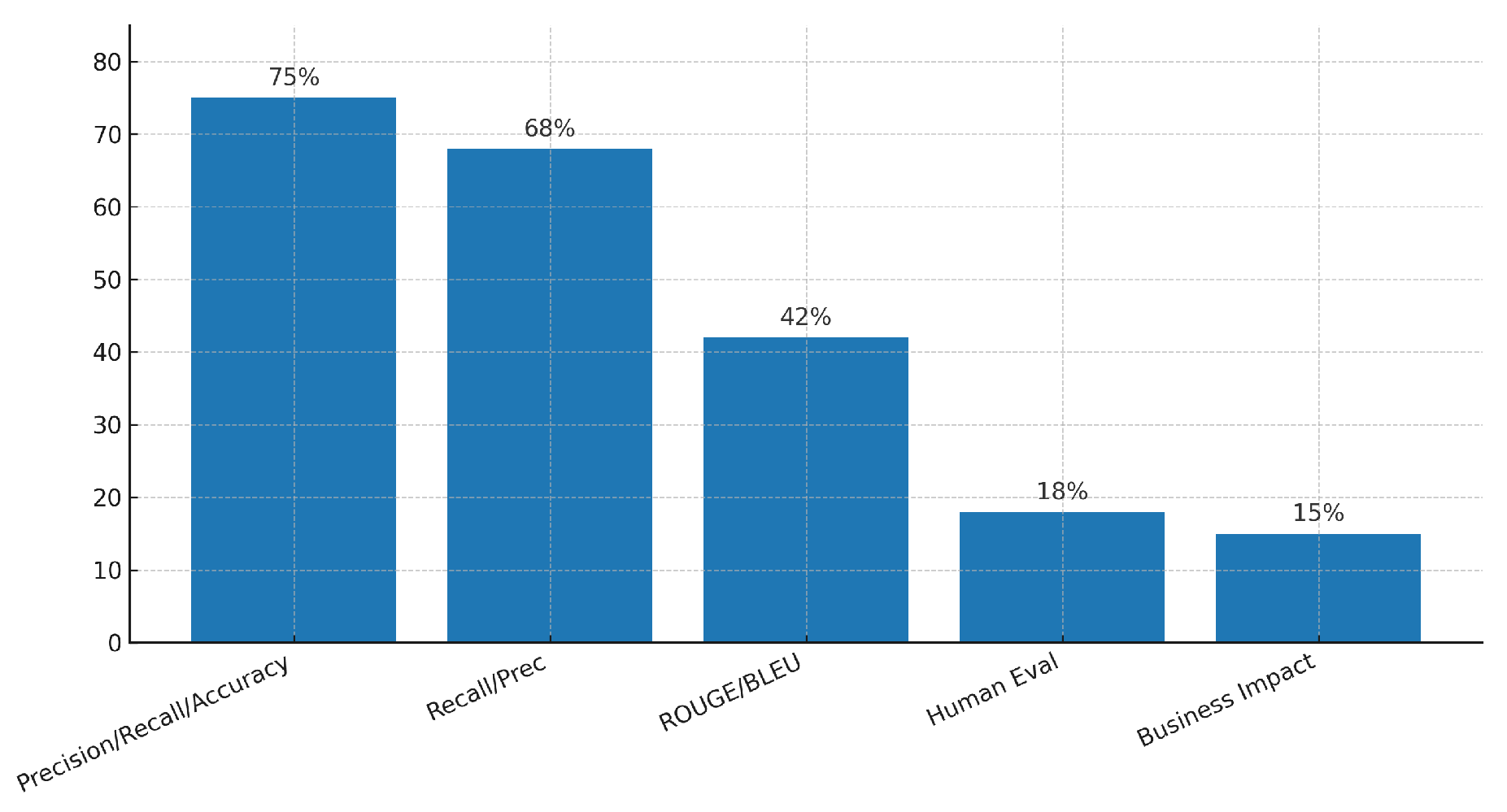

4.5. RQ5: Evaluation Metrics Employed

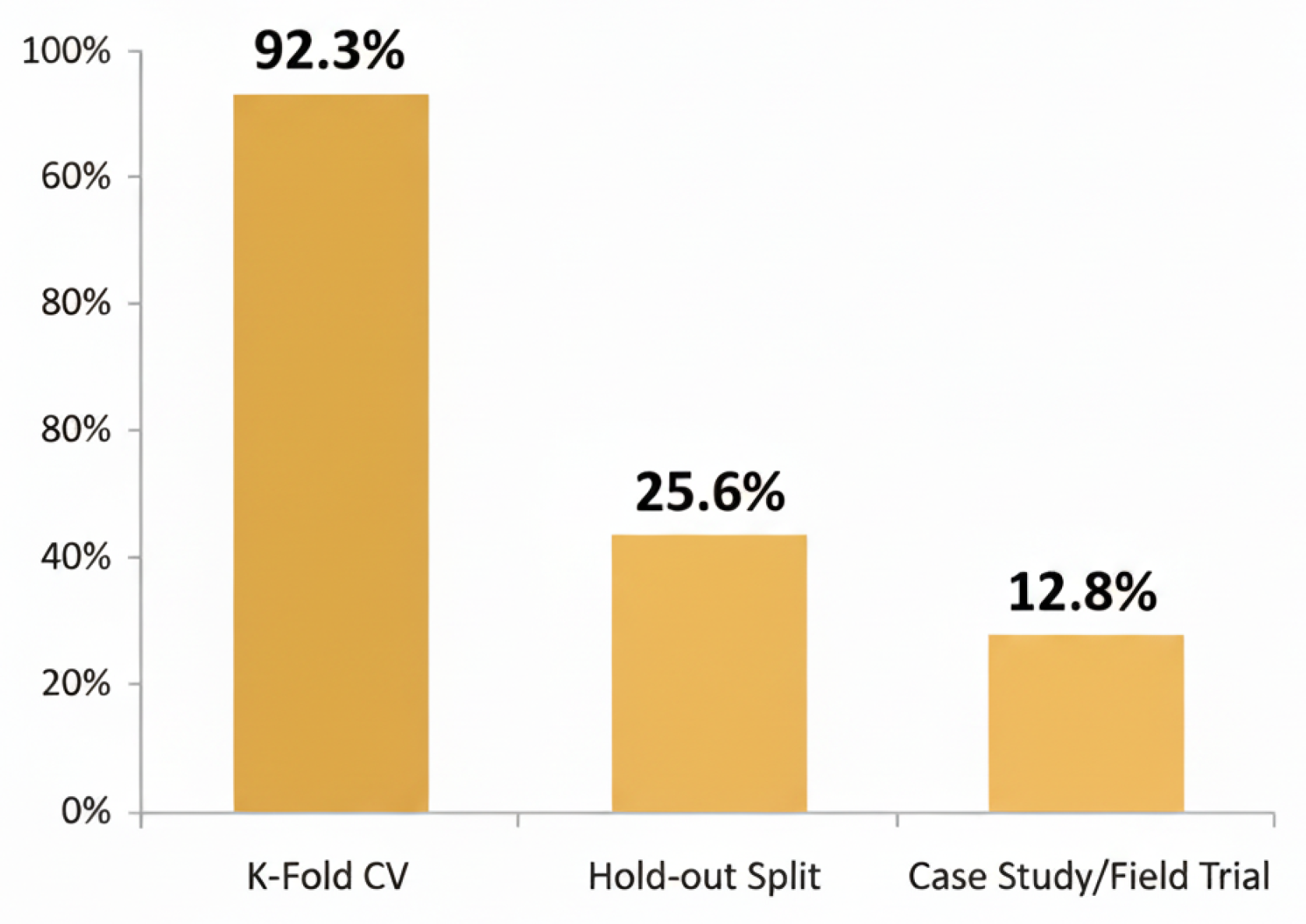

4.6. RQ6: Validation Approaches Adopted

4.7. RQ7: Software Metrics Adopted

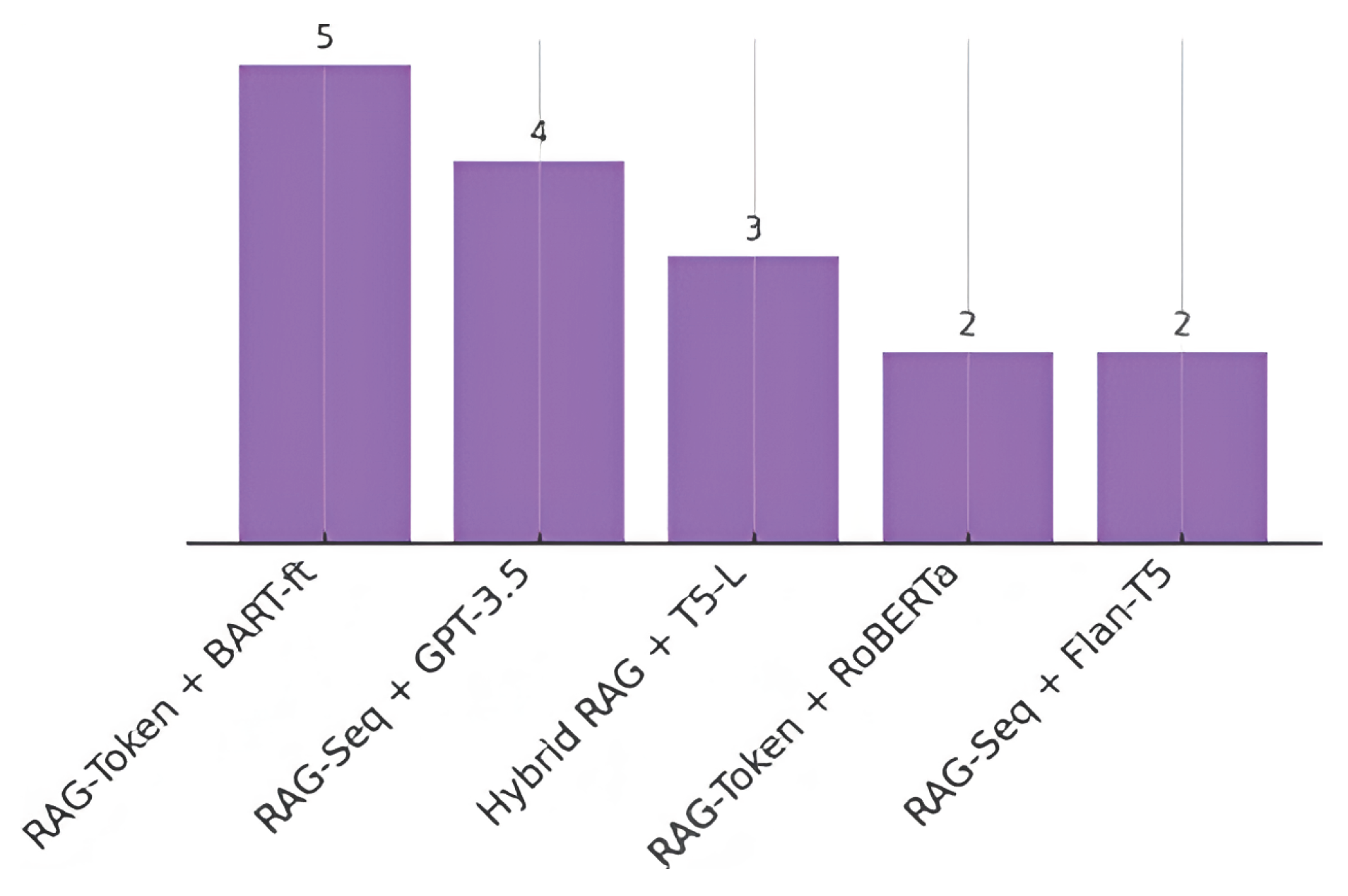

4.8. RQ8: Best Performing RAG + LLM Configurations

4.9. RQ9: Challenges and Research Gaps

5. Discussion

5.1. Synthesis of Key Findings

5.2. Practical Implications for Enterprise Adoption

5.3. Limitations of This Review

5.4. Future Research Directions

- Standardized Benchmarks: Establishing enterprise benchmarks that combine technical performance with real-world operational outcomes, user feedback, and compliance requirements is vital [17].

6. Conclusions and Future Work

- Holistic Evaluation: Pair automated scores with human studies and operational KPIs (cycle time, error rate, satisfaction, compliance); contribute to shared benchmarks that foreground business impact [17].

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| RAG | Retrieval-Augmented Generation |
| LLM | Large Language Model |
| SLR | Systematic Literature Review |
| NLP | Natural Language Processing |
| QA | Question Answering |
| KG | Knowledge Graph |
| MDPI | Multidisciplinary Digital Publishing Institute |

References

- Mallen, A.; Asai, A.; Zhong, V.; Das, R.; Khashabi, D.; Hajishirzi, H. When Not to Trust Language Models: Investigating Effectiveness of Parametric and Non-Parametric Memories. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (ACL), 2023, pp. 9802–9822. [CrossRef]

- Lazaridou, A.; Gribovskaya, E.; Stokowiec, W.; Grigorev, N.; McInnis, H.; et al. Internet-augmented language models through few-shot prompting for open-domain question answering. arXiv preprint arXiv:2203.05115 2022. [CrossRef]

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.; Cao, Y. ReAct: Synergizing Reasoning and Acting in Language Models. In Proceedings of the International Conference on Learning Representations (ICLR), 2023. [CrossRef]

- Wang, N.; Han, X.; Singh, J.; Ma, J.; Chaudhary, V. CausalRAG: Integrating Causal Graphs into Retrieval-Augmented Generation. arXiv preprint arXiv:2503.19878 2025. [CrossRef]

- Ma, X.; Gong, Y.; He, P.; Zhao, H.; Duan, N. Query Rewriting for Retrieval-Augmented Large Language Models. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2023, pp. 5303–5315. [CrossRef]

- Chowdhery, A.; Narang, S.; Devlin, J.; Bosma, M.; Mishra, G.; Roberts, A.; Barham, P.; Chung, H.W.; Sutton, C.; Gehrmann, S.; et al. PaLM: Scaling Language Modeling with Pathways. Journal of Machine Learning Research (JMLR) 2023, 24, 1–113.

- Khattab, O.; Singhvi, A.; Maheshwari, P.; Zhang, Z.; Santhanam, K.; Vardhamanan, S.; Haq, S.; Sharma, A.; Joshi, T.T.; Moazam, H.; et al. DSPy: Compiling Declarative Language Model Calls into Self-Improving Pipelines. In Proceedings of the International Conference on Learning Representations (ICLR), 2024.

- Kandpal, N.; Deng, H.; Roberts, A.; Wallace, E.; Raffel, C. Large Language Models Struggle to Learn Long-Tail Knowledge. In Proceedings of the 40th International Conference on Machine Learning (ICML), 2023, pp. 15696–15707.

- Arslan, M.; Mahdjoubi, L.; Munawar, S.; Cruz, C. Driving Sustainable Energy Transitions with a Multi-Source RAG-LLM System. Energy and Buildings 2024, 324, 114827. [CrossRef]

- Sun, J.; Xu, C.; Tang, L.; Wang, S.; Lin, C.; Gong, Y.; Ni, L.M.; Shum, H.Y.; Guo, J. Think-on-Graph: Deep and Responsible Reasoning of Large Language Models with Knowledge Graphs. In Proceedings of the International Conference on Learning Representations (ICLR), 2024.

- Yang, Z.; Dai, Z.; Yang, Y.; Carbonell, J.; Salakhutdinov, R.R.; Le, Q.V. XLNet: Generalized Autoregressive Pretraining for Language Understanding. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2019, Vol. 32.

- OpenAI. GPT-4 Technical Report. arXiv preprint arXiv:2303.08774 2023. [CrossRef]

- Touvron, H.; Martin, L.; Stone, K.; Albert, P.; Almahairi, A.; Babaei, Y.; Bashlykov, N.; Batra, S.; Bhargava, P.; Bhosale, S.; et al. Llama 2: Open Foundation and Fine-Tuned Chat Models. arXiv preprint arXiv:2307.09288 2023. [CrossRef]

- Zhang, S.; Roller, S.; Goyal, N.; Artetxe, M.; Chen, M.; et al. OPT: Open Pre-trained Transformer Language Models. arXiv preprint arXiv:2205.01068 2022. [CrossRef]

- Black, S.; Biderman, S.; Hallahan, E.; Anthony, Q.; Gao, L.; Golding, L.; He, H.; Leahy, C.; McDonell, K.; Phang, J.; et al. GPT-NeoX-20B: An Open-Source Autoregressive Language Model. In Proceedings of BigScience Episode #5 – Workshop on Challenges & Perspectives in Creating Large Language Models, 2022, pp. 95–136. [CrossRef]

- Le Scao, T.; Fan, A.; Akiki, C.; Pavlick, E.; Ilić, S.; Hesslow, D.; et al. BLOOM: A 176B-Parameter Open-Access Multilingual Language Model. arXiv preprint arXiv:2211.05100 2022. [CrossRef]

- Ji, Z.; Lee, N.; Frieske, R.; Yu, T.; Su, D.; Xu, Y.; Ishii, E.; Bang, Y.; Madotto, A.; Fung, P. Survey of Hallucination in Natural Language Generation. ACM Computing Surveys 2023, 55, 1–38. [CrossRef]

- Zhang, Y.; Li, Y.; Cui, L.; Cai, D.; Liu, L.; Fu, T.; Huang, X.; Zhao, E.; Zhang, Y.; Xu, C.; et al. Siren’s Song in the AI Ocean: A Survey on Hallucination in Large Language Models. arXiv preprint arXiv:2309.01219 2023. [CrossRef]

- Gao, Y.; Xiong, Y.; Gao, X.; Jia, K.; Pan, J.; Bi, Y.; Dai, Y.; Sun, J.; Wang, M.; Wang, H. Retrieval-Augmented Generation for Large Language Models: A Survey. arXiv preprint arXiv:2312.10997 2023. [CrossRef]

- Cui, L.; Wu, Y.; Liu, J.; Yang, S.; Zhang, Y. Template-Based Named Entity Recognition Using BART. In Proceedings of the Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021, 2021, pp. 1835–1845. [CrossRef]

- Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. RoBERTa: A Robustly Optimized BERT Pretraining Approach. In Proceedings of the International Conference on Learning Representations (ICLR), 2020.

- Rajpurkar, P.; Zhang, J.; Lopyrev, K.; Liang, P. SQuAD: 100,000+ Questions for Machine Comprehension of Text. In Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2016, pp. 2383–2392. [CrossRef]

- Chen, D.; Fisch, A.; Weston, J.; Bordes, A. Reading Wikipedia to Answer Open-Domain Questions. In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics (ACL), 2017, pp. 1870–1879. [CrossRef]

- Qu, Y.; Ding, Y.; Liu, J.; Liu, K.; Ren, R.; Zhao, W.X.; Dong, D.; Wu, H.; Wang, H. RocketQA: An Optimized Training Approach to Dense Passage Retrieval for Open-Domain Question Answering. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2021, pp. 5835–5847. [CrossRef]

- Shi, W.; Min, S.; Yasunaga, M.; Seo, M.; James, R.; Lewis, M.; Zettlemoyer, L.; Yih, W.t. REPLUG: Retrieval-Augmented Black-Box Language Models. In Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics (NAACL), 2024, pp. 8371–8384. [CrossRef]

- Mialon, G.; Dessì, R.; Lomeli, M.; Nalmpantis, C.; Pasunuru, R.; Raileanu, R.; Rozière, B.; Schick, T.; Dwivedi-Yu, J.; Celikyilmaz, A.; et al. Augmented Language Models: a Survey. Transactions on Machine Learning Research (TMLR) 2023.

- Sanh, V.; Webson, A.; Raffel, C.; Bach, S.H.; Sutawika, L.; Alyafeai, Z.; et al. Multitask Prompted Training Enables Zero-Shot Task Generalization. In Proceedings of the International Conference on Learning Representations (ICLR), 2022.

- Min, S.; Lewis, M.; Zettlemoyer, L.; Hajishirzi, H. MetaICL: Learning to Learn In Context. In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics (NAACL), 2022, pp. 2791–2809. [CrossRef]

- Xiong, L.; Xiong, C.; Li, Y.; Tang, K.F.; Liu, J.; Bennett, P.; Ahmed, J.; Overwijk, A. Approximate Nearest Neighbor Negative Contrastive Learning for Dense Text Retrieval. In Proceedings of the International Conference on Learning Representations (ICLR), 2021.

- Wei, J.; Wang, X.; Schuurmans, D.; et al. Chain-of-Thought Prompting Elicits Reasoning in Large Language Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2022, Vol. 35, pp. 24824–24837.

- Asai, A.; Wu, Z.; Wang, Y.; Sil, A.; Hajishirzi, H. Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection. In Proceedings of the International Conference on Learning Representations, 2024. [CrossRef]

- Conneau, A.; Khandelwal, K.; Goyal, N.; Chaudhary, V.; Wenzek, G.; Guzmán, F.; Grave, E.; Ott, M.; Zettlemoyer, L.; Stoyanov, V. Unsupervised Cross-lingual Representation Learning at Scale. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics (ACL), 2020, pp. 8440–8451. [CrossRef]

- Trivedi, H.; Balasubramanian, N.; Khot, T.; Sabharwal, A. Interleaving Retrieval with Chain-of-Thought Reasoning for Knowledge-Intensive Multi-Step Questions. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (ACL), 2023, pp. 10014–10037. [CrossRef]

- Song, K.; Tan, X.; Qin, T.; Lu, J.; Liu, T.Y. MPNet: Masked and Permuted Pre-training for Language Understanding. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2020, Vol. 33, pp. 16857–16867.

- Schick, T.; Dwivedi-Yu, J.; Dessì, R.; Raileanu, R.; Lomeli, M.; Hambro, E.; Zettlemoyer, L.; Cancedda, N.; Scialom, T. Toolformer: Language Models Can Teach Themselves to Use Tools. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2023, Vol. 36, pp. 68539–68551.

- Borgeaud, S.; Mensch, A.; Hoffmann, J.; Cai, T.; Rutherford, E.; Millican, K.; Van Den Driessche, G.B.; Lespiau, J.B.; Damoc, B.; Clark, A.; et al. Improving Language Models by Retrieving from Trillions of Tokens. In Proceedings of the 39th International Conference on Machine Learning (ICML), 2022, pp. 2206–2240.

- Luo, H.; Zhang, T.; Chuang, Y.S.; Gong, Y.; Kim, Y.; Wu, X.; Meng, H.; Glass, J. Search Augmented Instruction Learning. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2023, 2023, pp. 3717–3729. [CrossRef]

- Qin, Y.; Liang, S.; Ye, Y.; Zhu, K.; Yan, L.; Lu, Y. ToolLLM: Facilitating Large Language Models to Master 16000+ Real-world APIs. In Proceedings of the International Conference on Learning Representations (ICLR), 2024.

- Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; Sutskever, I. Language Models are Unsupervised Multitask Learners. OpenAI blog 2019, 1, 9.

- Thakur, N.; Reimers, N.; Rücklé, A.; Srivastava, A.; Gurevych, I. BEIR: A Heterogenous Benchmark for Zero-shot Evaluation of Information Retrieval Models. In Proceedings of the Advances in Neural Information Processing Systems 34 (NeurIPS 2021), Track on Datasets and Benchmarks, 2021, pp. 21249–21260.

- Hermann, K.M.; Kocisky, T.; Grefenstette, E.; Espeholt, L.; Kay, W.; Suleyman, M.; Blunsom, P. Teaching Machines to Read and Comprehend. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2015, Vol. 28.

- Zakka, C.; Shad, R.; Chaurasia, A.; Dalal, A.R.; Kim, J.L.; Moor, M.; Alexander, K.; Ashley, E.; Leeper, N.J.; Dunnmon, J. Almanac: Retrieval-Augmented Language Models for Clinical Medicine. NEJM AI 2024, 1. [CrossRef]

- Kwiatkowski, T.; Palomaki, J.; Redfield, O.; Collins, M.; Parikh, A.; Alberti, C.; Epstein, D.; Polosukhin, I.; Devlin, J.; Lee, K.; et al. Natural Questions: A Benchmark for Question Answering Research. Transactions of the Association for Computational Linguistics (TACL) 2019, 7, 452–466. [CrossRef]

- Raffel, C.; Shazeer, N.; Roberts, A.; Lee, K.; Narang, S.; Matena, M.; Zhou, Y.; Li, W.; Liu, P.J. Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer. Journal of Machine Learning Research (JMLR) 2020, 21, 1–67.

- Lewis, M.; Liu, Y.; Goyal, N.; Ghazvininejad, M.; Mohamed, A.; Levy, O.; Stoyanov, V.; Zettlemoyer, L. BART: Denoising Sequence-to-Sequence Pre-training for Natural Language Generation, Translation, and Comprehension. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics (ACL), 2020, pp. 7871–7880. [CrossRef]

- Lan, Z.; Chen, M.; Goodman, S.; Gimpel, K.; Sharma, P.; Soricut, R. ALBERT: A Lite BERT for Self-supervised Learning of Language Representations. In Proceedings of the International Conference on Learning Representations (ICLR), 2020.

- Wu, Y.; Li, H.; et al. Does RAG Introduce Unfairness in LLMs? Evaluating Fairness in Retrieval-Augmented Generation Systems. arXiv preprint arXiv:2409.19804 2024. [CrossRef]

- Es, S.; James, J.; Espinosa-Anke, L.; Schockaert, S. RAGAS: Automated Evaluation of Retrieval Augmented Generation. In Proceedings of the 18th Conference of the European Chapter of the Association for Computational Linguistics: System Demonstrations (EACL), 2024, pp. 150–158. [CrossRef]

- Levine, Y.; Dalmedigos, I.; Ram, O.; Zeldes, Y.; Jannai, D.; Muhlgay, D.; Osin, Y.; Lieber, O.; Lenz, B.; Shalev-Shwartz, S.; et al. Standing on the Shoulders of Giant Frozen Language Models. arXiv preprint arXiv:2204.10019 2022. [CrossRef]

- Yang, Z.; Qi, P.; Zhang, S.; Bengio, Y.; Cohen, W.W.; Salakhutdinov, R.; Manning, C.D. HotpotQA: A Dataset for Diverse, Explainable Multi-hop Question Answering. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2018, pp. 2369–2380. [CrossRef]

- Xiong, W.; Li, J.; Iyer, S.; Du, W.; Lewis, P.; Wang, W.Y.; Stoyanov, V.; Oguz, B. Benchmarking Retrieval-Augmented Generation for Medicine. arXiv preprint arXiv:2402.13178 2024. [CrossRef]

- Zhang, B.; Yang, H.; Zhou, T.; Babar, A.; Giles, C.L. Enhancing Financial Sentiment Analysis via Retrieval Augmented Large Language Models. In Proceedings of the 4th ACM International Conference on AI in Finance (ICAIF), 2023, pp. 549–556. [CrossRef]

- Lee, K.; Chang, M.W.; Toutanova, K. Latent Retrieval for Weakly Supervised Open Domain Question Answering. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics (ACL), 2019, pp. 6086–6096. [CrossRef]

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.t.; Rocktäschel, T.; et al. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. In Proceedings of the Advances in Neural Information Processing Systems, 2020, Vol. 33, pp. 9459–9474.

- Joshi, M.; Choi, E.; Weld, D.S.; Zettlemoyer, L. TriviaQA: A Large Scale Distantly Supervised Challenge Dataset for Reading Comprehension. In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics (ACL), 2017, pp. 1601–1611. [CrossRef]

- He, P.; Liu, X.; Gao, J.; Chen, W. DeBERTa: Decoding-enhanced BERT with Disentangled Attention. In Proceedings of the International Conference on Learning Representations (ICLR), 2021.

- Clark, K.; Luong, M.T.; Le, Q.V.; Manning, C.D. ELECTRA: Pre-training Text Encoders as Discriminators Rather Than Generators. In Proceedings of the International Conference on Learning Representations (ICLR), 2020.

- Robertson, S.; Zaragoza, H. The Probabilistic Relevance Framework: BM25 and Beyond. Foundations and Trends in Information Retrieval 2009, 3, 333–389. [CrossRef]

- Lin, X.V.; Chen, X.; Chen, M.; Shi, W.; Lomeli, M.; James, R.; Rodriguez, P.; Kahn, J.; Szilvasy, G.; Lewis, M.; et al. RA-DIT: Retrieval-Augmented Dual Instruction Tuning. In Proceedings of the International Conference on Learning Representations (ICLR), 2024. [CrossRef]

- Zhao, P.; Zhang, H.; Yu, Q.; Wang, Z.; Geng, Y.; Fu, F.; Yang, L.; Zhang, W.; Jiang, J.; Cui, B. Retrieval-Augmented Generation for AI-Generated Content: A Survey. arXiv preprint arXiv:2402.19473 2024. [CrossRef]

- Han, B.; Susnjak, T.; Mathrani, A. Automating Systematic Literature Reviews with Retrieval-Augmented Generation: A Comprehensive Overview. Appl. Sci. 2024, 14, 9103. [CrossRef]

- Chen, J.; Lin, H.; Han, X.; Sun, L. Benchmarking Large Language Models in Retrieval-Augmented Generation. In Proceedings of the AAAI Conference on Artificial Intelligence, 2024, Vol. 38, pp. 17754–17762. [CrossRef]

- Edge, D.; Trinh, H.; Cheng, N.; Bradley, J.; Chao, A.; Mody, A.; Truitt, S.; Metropolitansky, D.; Ness, R.O.; Larson, J. From Local to Global: A Graph RAG Approach to Query-Focused Summarization. arXiv preprint arXiv:2404.16130 2024. [CrossRef]

- Bubeck, S.; Chandrasekaran, V.; Eldan, R.; Gehrke, J.; Horvitz, E.; Kamar, E.; Lee, P.; Lee, Y.T.; Li, Y.; Lundberg, S.; et al. Sparks of Artificial General Intelligence: Early Experiments with GPT-4. arXiv preprint arXiv:2303.12712 2023. [CrossRef]

- Jiang, Z.; Xu, F.; Gao, L.; Sun, Z.; Liu, Q.; Dwivedi-Yu, J.; Yang, Y.; Callan, J.; Neubig, G. Active Retrieval Augmented Generation. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, 2023, pp. 7969–7992. [CrossRef]

- Pan, S.; Luo, L.; Wang, Y.; Chen, C.; Wang, J.; Wu, X. Unifying Large Language Models and Knowledge Graphs: A Roadmap. IEEE Transactions on Knowledge and Data Engineering 2024, 36, 3580–3599. [CrossRef]

- Ram, O.; Levine, Y.; Dalmedigos, I.; Muhlgay, D.; Shashua, A.; Leyton-Brown, K.; Shoham, Y. In-Context Retrieval-Augmented Language Models. Transactions of the Association for Computational Linguistics 2023, 11, 1316–1331. [CrossRef]

- Dettmers, T.; Pagnoni, A.; Holtzman, A.; Zettlemoyer, L. QLoRA: Efficient Finetuning of Quantized LLMs. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2023, Vol. 36, pp. 10088–10115.

- Saad-Falcon, J.; Khattab, O.; Potts, C.; Zaharia, M. ARES: An Automated Evaluation Framework for Retrieval-Augmented Generation Systems. In Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics (NAACL), 2024, pp. 338–354. [CrossRef]

- Gao, L.; Callan, J. Unsupervised Corpus Aware Language Model Pre-training for Dense Passage Retrieval. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (ACL), 2022, pp. 2843–2853. [CrossRef]

- Barnett, S.; Kurniawan, S.; Thudumu, S.; Bratanis, Z.; Lau, J.H. Seven Failure Points When Engineering a Retrieval Augmented Generation System. arXiv preprint arXiv:2401.05856 2024. [CrossRef]

- Liu, N.F.; Lin, K.; Hewitt, J.; Paranjape, A.; Bevilacqua, M.; Petroni, F.; Liang, P. Lost in the Middle: How Language Models Use Long Contexts. Transactions of the Association for Computational Linguistics (TACL) 2024, 12, 157–173. [CrossRef]

- Sarthi, P.; Abdullah, S.; Tuli, A.; Khanna, S.; Goldie, A.; Manning, C.D. RAPTOR: Recursive Abstractive Processing for Tree-Organized Retrieval. In Proceedings of the International Conference on Learning Representations (ICLR), 2024.

- Zhang, T.; Patil, S.G.; Jain, N.; Shen, S.; Zaharia, M.; Stoica, I.; Gonzalez, J.E. RAFT: Adapting Language Model to Domain Specific RAG. arXiv preprint arXiv:2403.10131 2024. [CrossRef]

- Jiang, A.Q.; Sablayrolles, A.; Mensch, A.; Bamford, C.; Chaplot, D.S.; de Las Casas, D.; Bressand, F.; Lengyel, G.; Lample, G.; Saulnier, L.; et al. Mistral 7B. CoRR 2023, abs/2310.06825. [CrossRef]

- Beltagy, I.; Peters, M.E.; Cohan, A. Longformer: The Long-Document Transformer. arXiv preprint arXiv:2004.05150 2020. [CrossRef]

- Johnson, J.; Douze, M.; Jégou, H. Billion-scale Similarity Search with GPUs. IEEE Transactions on Big Data 2019, 7, 535–547. [CrossRef]

- Malkov, Y.A.; Yashunin, D.A. Efficient and Robust Approximate Nearest Neighbor Search Using Hierarchical Navigable Small World Graphs. IEEE Transactions on Pattern Analysis and Machine Intelligence 2020, 42, 824–836. [CrossRef]

- Khandelwal, U.; Levy, O.; Jurafsky, D.; Zettlemoyer, L.; Lewis, M. Generalization through Memorization: Nearest Neighbor Language Models. In Proceedings of the International Conference on Learning Representations (ICLR), 2020.

- Gao, L.; Ma, X.; Lin, J.; Callan, J. Precise Zero-Shot Dense Retrieval without Relevance Labels. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2023, pp. 1762–1777. [CrossRef]

- Khattab, O.; Zaharia, M. ColBERT: Efficient and Effective Passage Search via Contextualized Late Interaction over BERT. In Proceedings of the 43rd International ACM SIGIR Conference on Research and Development in Information Retrieval, 2020, pp. 39–48. [CrossRef]

- Reimers, N.; Gurevych, I. Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), 2019, pp. 3982–3992. [CrossRef]

- Hoffmann, J.; Borgeaud, S.; Mensch, A.; et al. Training Compute-Optimal Large Language Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2022, Vol. 35, pp. 30016–30030.

- Kaplan, J.; McCandlish, S.; Henighan, T.; Brown, T.B.; Chess, B.; Child, R.; Gray, S.; Radford, A.; Wu, J.; Amodei, D. Scaling Laws for Neural Language Models. arXiv preprint arXiv:2001.08361 2020. [CrossRef]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2017, Vol. 30, pp. 5998–6008.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics (NAACL), 2019, pp. 4171–4186. [CrossRef]

- Brown, T.B.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.; Dhariwal, P.; Neelakantan, A.; et al. Language Models are Few-Shot Learners. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2020, Vol. 33, pp. 1877–1901.

- Karpukhin, V.; Oguz, B.; Min, S.; Lewis, P.; Wu, L.; Edunov, S.; Chen, D.; Yih, W.t. Dense Passage Retrieval for Open-Domain Question Answering. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2020, pp. 6769–6781. [CrossRef]

- Izacard, G.; Grave, E. Leveraging Passage Retrieval with Generative Models for Open Domain Question Answering. In Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics (EACL), 2021, pp. 874–880. [CrossRef]

- Wu, S.; Irsoy, O.; Lu, S.; Dabravolski, V.; Dredze, M.; Gehrmann, S.; Kambadur, P.; Rosenberg, D.; Mann, G. BloombergGPT: A Large Language Model for Finance. arXiv preprint arXiv:2303.17564 2023. [CrossRef]

- Yang, H.; Liu, X.Y.; Wang, C.D. FinGPT: Open-Source Financial Large Language Models. arXiv preprint arXiv:2306.06031 2023. [CrossRef]

- Singhal, K.; Azizi, S.; Tu, T.; Mahdavi, S.S.; Wei, J.; Chung, H.W.; Scales, N.; Tanwani, A.; Cole-Lewis, H.; Pfohl, S.; et al. Large Language Models Encode Clinical Knowledge. Nature 2023, 620, 172–180. [CrossRef]

- Dhuliawala, S.; Komeili, M.; Xu, J.; Raileanu, R.; Li, X.; Celikyilmaz, A.; Weston, J. Chain-of-Verification Reduces Hallucination in Large Language Models. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2024, 2024, pp. 3563–3578. [CrossRef]

- Yan, S.Q.; Gu, J.C.; Zhu, Y.; Ling, Z.H. Corrective Retrieval Augmented Generation. arXiv preprint arXiv:2401.15884 2024. [CrossRef]

- Kaddour, J.; Harris, J.; Mozes, M.; Bradley, H.; Raileanu, R.; McHardy, R. Challenges and Applications of Large Language Models. arXiv preprint arXiv:2307.10169 2023. [CrossRef]

- Liu, Y.; Iter, D.; Xu, Y.; Wang, S.; Xu, R.; Zhu, C. G-Eval: NLG Evaluation using GPT-4 with Better Human Alignment. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2023, pp. 2511–2522. [CrossRef]

- Patil, S.G.; Zhang, T.; Wang, X.; Gonzalez, J.E. Gorilla: Large Language Model Connected with Massive APIs. arXiv preprint arXiv:2305.15334 2023. [CrossRef]

- Dao, T.; Fu, D.Y.; Ermon, S.; Rudra, A.; Ré, C. FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2022, Vol. 35, pp. 16344–16359.

- Izacard, G.; Lewis, P.; Lomeli, M.; Hosseini, L.; Petroni, F.; Schick, T.; Dwivedi-Yu, J.; Joulin, A.; Riedel, S.; Grave, E. Atlas: Few-shot Learning with Retrieval Augmented Language Models. Journal of Machine Learning Research (JMLR) 2023, 24, 1–43.

- Yang, H.; Zhang, M.; Wei, D.; Guo, J. SRAG: Speech Retrieval Augmented Generation for Spoken Language Understanding. In Proceedings of the 2024 IEEE 2nd International Conference on Control, Electronics and Computer Technology (ICCECT), 2024, pp. 370–374. [CrossRef]

- Wu, T.; Luo, L.; Li, Y.F.; Pan, S.; Vu, T.T.; Haffari, G. Continual Learning for Large Language Models: A Survey. arXiv preprint arXiv:2402.01364 2024. [CrossRef]

- Chen, W.; He, H.; Cheng, Y.; Chang, M.W.; Cohen, W.W.; Wang, W.Y. MuRAG: Multimodal Retrieval-Augmented Generator for Open Question Answering over Images and Text. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2022, pp. 5558–5570. [CrossRef]

- Guu, K.; Lee, K.; Tung, Z.; Pasupat, P.; Chang, M.W. Retrieval-Augmented Language Model Pre-Training. In Proceedings of the 37th International Conference on Machine Learning (ICML), 2020, pp. 3929–3938.

- Ouyang, L.; Wu, J.; Jiang, X.; Almeida, D.; Wainwright, C.; Mishkin, P.; Zhang, C.; Agarwal, S.; Slama, K.; Ray, A.; et al. Training Language Models to Follow Instructions with Human Feedback. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2022, Vol. 35, pp. 27730–27744.

- Wang, L.; Yang, N.; Wei, F. Query2doc: Query Expansion with Large Language Models. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2023, pp. 9414–9423. [CrossRef]

- Papineni, K.; Roukos, S.; Ward, T.; Zhu, W.J. BLEU: a Method for Automatic Evaluation of Machine Translation. In Proceedings of the 40th Annual Meeting of the Association for Computational Linguistics (ACL), 2002, pp. 311–318. [CrossRef]

- Bajaj, P.; Campos, D.; Craswell, N.; Deng, L.; Gao, J.; Liu, X.; Majumder, R.; McNamara, A.; Mitra, B.; Nguyen, T.; et al. MS MARCO: A Human Generated MAchine Reading COmprehension Dataset. arXiv preprint arXiv:1611.09268 2016.

- Lin, C.Y. ROUGE: A Package for Automatic Evaluation of Summaries. In Proceedings of the Text Summarization Branches Out, 2004, pp. 74–81.

- See, A.; Liu, P.J.; Manning, C.D. Get To The Point: Summarization with Pointer-Generator Networks. In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics (ACL), 2017, pp. 1073–1083. [CrossRef]

- Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W. LoRA: Low-Rank Adaptation of Large Language Models. In Proceedings of the International Conference on Learning Representations (ICLR), 2022.

- Nakano, R.; Hilton, J.; Balaji, S.; Wu, J.; Ouyang, L.; Kim, C.; Hesse, C.; Jain, S.; Kosaraju, V.; Saunders, W.; et al. WebGPT: Browser-assisted question-answering with human feedback. arXiv preprint arXiv:2112.09332 2021.

- Yang, J.; Jimenez, C.; Wettig, A.; Lunt, K.; Yao, S.; Narasimhan, K.; Press, O. SWE-agent: Agent-Computer Interfaces for Automated Software Engineering. arXiv preprint arXiv:2405.15793 2024.

- Shoeybi, M.; Patwary, M.; Puri, R.; LeGresley, P.; Casper, J.; Catanzaro, B. Megatron-LM: Training Multi-Billion Parameter Language Models Using Model Parallelism. arXiv preprint arXiv:1909.08053 2019.

- Dong, L.; Yang, N.; Wang, W.; Wei, F.; Liu, X.; Wang, Y.; Gao, J.; Zhou, M.; Hon, H.W. Unified Language Model Pre-training for Natural Language Understanding and Generation. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2019, Vol. 32.

- Xue, L.; Constant, N.; Roberts, A.; Kale, M.; Al-Rfou, R.; Siddhant, A.; Barua, A.; Raffel, C. mT5: A Massively Multilingual Pre-trained Text-to-Text Transformer. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2021, pp. 483–498.

- Artetxe, M.; Ruder, S.; Yogatama, D. On the Cross-lingual Transferability of Monolingual Representations. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, 2020, pp. 4623–4637.

- Lester, B.; Al-Rfou, R.; Constant, N. The Power of Scale for Parameter-Efficient Prompt Tuning. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, 2021, pp. 3045–3059.

- Li, X.L.; Liang, P. Prefix-Tuning: Optimizing Continuous Prompts for Generation. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), 2021, pp. 4582–4597.

- Liu, X.; Ji, K.; Fu, Y.; Tam, W.; Du, Z.; Yang, Z.; Tang, J. P-Tuning: Prompt Tuning Can Be Comparable to Fine-tuning Across Scales and Tasks. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), 2022, pp. 61–68.

- Zhang, T.; Kishore, V.; Wu, F.; Weinberger, K.Q.; Artzi, Y. BERTScore: Evaluating Text Generation with BERT. In Proceedings of the International Conference on Learning Representations (ICLR), 2020.

Short Biography of Authors

Ehlullah Karakurt received a degree in Computer Programming from İstanbul Kültür University Vocational School in 2017 and the B.S. degree in Computer Engineering (English medium) from İstanbul Kültür University, İstanbul, Turkey, in 2021. From 2021 to 2022, he served as a Software Developer at Inksen Teknology. He then joined Related Digital as a Solution Engineer (2021–2022). From 2022 to 2023, he worked as a Full Stack Developer at Arentech Bilişim, and from 2023 to 2024, he was a Software Developer at Yenibiris.com. Since March 2024, he has been a Software Engineer at LC Waikiki. His technical interests include cross-platform mobile development (Flutter, SwiftUI), modern web frameworks, and cloud-native solutions.

Akhan Akbulut (M’16) received his B.Sc. and M.Sc. degrees in Computer Engineering from Istanbul Kültür University (IKU), Turkey, in 2001 and 2008, respectively, and earned his Ph.D. in Computer Engineering from Istanbul University in 2013. From 2004 to 2013, he served as a research assistant at IKU, where he was appointed as an assistant professor in 2013. Between 2017 and 2019, he conducted postdoctoral research at North Carolina State University, USA. He returned to IKU in 2019, became a full professor in 2023, and currently serves as the chairman of the Department of Computer Engineering. His research interests include software-intensive systems design, performance optimization, and machine learning applications.

| Domain | # Papers | % |
|---|---|---|
| Regulatory compliance & governance | 20 | 26.0% |
| Contract & legal document automation | 18 | 23.4% |
| Customer support chatbots | 15 | 19.5% |
| Technical manual generation | 12 | 15.6% |
| Financial reporting & analysis | 8 | 10.4% |
| Healthcare documentation | 4 | 5.2% |
| Total | 77 | 100.0% |

| Citation | Authors | Years Covered | # Papers | Focus |
|---|---|---|---|---|
| [19] | Gao et al. (2023) | 2020–2023 | 45 | RAG methods & evolution survey |
| [60] | Zhao et al. (2024) | 2021–2024 | 38 | Comprehensive RAG survey |
| [61] | Susnjak et al. (2024) | 2021–2024 | 27 | RAG for automating SLRs |
| [62] | Chen et al. (2024) | 2022–2024 | 30 | Benchmarking LLMs in RAG |
| [26] | Mialon et al. (2023) | 2020–2023 | 52 | Augmented Language Models survey |
| [17] | Ji et al. (2023) | 2019–2023 | 47 | Hallucination in NLG survey |

| Platform Category | Characterization | # Studies | % |
|---|---|---|---|
| Cloud-native infrastructures | Public cloud GPU/TPU clusters (AWS, GCP, Azure) with managed vector stores for on-demand scaling of retrieval and generation | 51 | 66.2% |
| On-premises data centers | Private deployments behind corporate firewalls to satisfy data sovereignty and compliance requirements | 15 | 19.5% |
| Edge or mobile hardware | Model-compressed RAG pipelines on devices for real-time, offline operation | 8 | 10.4% |
| Hybrid topologies | Workload split between cloud and private/edge environments to optimize privacy, latency, and operational cost | 3 | 3.9% |

| Dataset Category | Description | # Studies | % |
|---|---|---|---|
| GitHub open-source | Public repositories containing code, documentation, and issue trackers | 42 | 54.5% |
| Proprietary repositories | Private corporate code and document stores behind enterprise firewalls | 12 | 15.6% |
| Benchmarks | Established academic corpora (PROMISE defect sets, NASA, QA benchmarks) | 10 | 13.0% |
| Custom industrial corpora | Domain-specific collections assembled from finance, healthcare, and manufacturing | 13 | 16.9% |

| Learning Paradigm | Description | # Studies | % |
|---|---|---|---|
| Supervised | Models trained on labeled data (classification, regression, QA pairs) | 71 | 92.2% |
| Unsupervised | Clustering, topic modeling, or retrieval without explicit labels | 3 | 3.9% |
| Semi-supervised | A mix of small labeled sets with large unlabeled corpora (self-training, co-training) | 3 | 3.9% |
| Total | | 77 | 100.0% |

| Category | Specific Algorithms / Architectures | # Mentions |
|---|---|---|
| Traditional ML (Baselines) | Naïve Bayes (26), SVM (22), Logistic Regression (19), Decision Tree (18), Random Forest (15), KNN (6), Bayesian Network (5) | 121 |
| Deep Learning Models | LSTM (3), DNN (2), CNN (2), DBN (1) | 8 |
| RAG Architectures | RAG-Sequence (36), RAG-Token (28), Hybrid RAG (18) | 82 |
| Retrieval & Indexing | Dense Vector (FAISS, Annoy) (62), BM25/TF-IDF (45), Knowledge Graph (20) | 127 |

| Metric Category | Description | # Studies | % |
|---|---|---|---|
| Precision / Recall / Accuracy | Standard classification metrics applied to retrieval ranking or defect detection | 62 | 80.5% |
| Recall@K / Precision@K | Retrieval-specific metrics measuring top-K document relevance | 56 | 72.7% |
| ROUGE / BLEU | Generation quality metrics for summarization and translation tasks | 34 | 44.2% |
| Human Evaluation | Expert or crowd-worker assessment of fluency, relevance, and factuality | 15 | 19.5% |
| Business Impact Metrics | Task-level measures (e.g., time saved, error reduction, ROI) | 12 | 15.6% |
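
The retrieval-specific metrics listed in the table can be made concrete with a short sketch. The snippet below is illustrative only: the function names `precision_at_k` and `recall_at_k` and the sample document IDs are assumptions introduced here, not code or data from any surveyed study.

```python
from typing import Sequence, Set


def precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top-k retrieved documents that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for doc_id in ranked[:k] if doc_id in relevant)
    return hits / k


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of all relevant documents that appear in the top-k results."""
    if k <= 0:
        raise ValueError("k must be positive")
    if not relevant:
        return 0.0  # convention chosen for this sketch when nothing is relevant
    hits = sum(1 for doc_id in ranked[:k] if doc_id in relevant)
    return hits / len(relevant)


# Hypothetical retriever output (best first) and ground-truth relevant set.
ranked_docs = ["d3", "d1", "d7", "d2", "d9"]
relevant_docs = {"d1", "d2", "d4"}

p_at_3 = precision_at_k(ranked_docs, relevant_docs, 3)
r_at_5 = recall_at_k(ranked_docs, relevant_docs, 5)
```

Precision@K penalizes irrelevant documents in the top K, whereas Recall@K penalizes relevant documents that the retriever missed, which is why studies often report both.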

| Validation Method | Description | # Studies | % |
|---|---|---|---|
| k-fold Cross-Validation | Data is partitioned into k folds; each fold, in turn, serves as the test set | 72 | 93.5% |
| Hold-out Split | Single static partition into training and test sets | 20 | 26.0% |
| Real-world Case Study | Deployment in a live environment with user feedback or business metrics | 10 | 13.0% |
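
The k-fold procedure described in the table can be sketched in a few lines. This is a minimal, library-free illustration under stated assumptions: `k_fold_indices` is a hypothetical helper named here for convenience, and the surveyed studies may have used library implementations or stratified variants instead.

```python
import random
from typing import Iterator, List, Tuple


def k_fold_indices(
    n_samples: int, k: int, seed: int = 0
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_indices, test_indices) once for each of the k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # fixed seed keeps the split reproducible
    # Spread the remainder so that fold sizes differ by at most one.
    base, extra = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        size = base + (1 if fold < extra else 0)
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size


# Example: 10 samples split into 3 folds.
folds = list(k_fold_indices(10, 3))
```

Every sample lands in exactly one test fold, so averaging a metric over the k folds uses all of the data for evaluation without ever testing on a sample the model was trained on in that fold.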

| Configuration | Task Type | #* | Key Findings |
|---|---|---|---|
| RAG-Token + Fine-Tuned BART | Knowledge-grounded QA | 5 | Achieved up to 87% exact match on enterprise QA, reducing hallucinations by 35% compared with a GPT-3 baseline. |
| RAG-Sequence + GPT-3.5 (Zero-Shot Prompting) | Contract Clause Generation | 4 | Generated legally coherent clauses with 92% human-rated relevance; outperformed template-only systems by 45%. |
| Hybrid RAG (Dense + KG) + T5 Large | Policy Summarization | 3 | Produced summaries with 0.62 ROUGE-L, a 20% improvement over pure dense retrieval. |
| RAG-Token + Retrieval-Enhanced RoBERTa | Technical Manual Synthesis | 2 | Reduced manual editing time by 40% in field trials; achieved 85% procedural correctness. |
| RAG-Sequence + Flan-T5 (Prompt-Tuned) | Financial Report Drafting | 2 | Achieved 0.58 BLEU and 0.65 ROUGE-L on internal financial narrative benchmarks. |

| Challenge | Description | # Studies | % |
|---|---|---|---|
| Data Privacy & Security | Safeguarding proprietary corpora and ensuring compliance with data protection regulations | 29 | 37.7% |
| Latency & Scalability | Reducing retrieval and generation delays to meet real-time enterprise SLAs | 24 | 31.2% |
| Difficulty in Measuring Business Impact | Measuring end-user outcomes (time saved, error reduction, ROI) beyond technical metrics | 12 | 15.6% |
| Hallucination & Factual Consistency | Detecting and mitigating fabricated or outdated content in generated documents | 37 | 48.1% |
| Domain Adaptation & Transfer Learning | Adapting RAG pipelines across domains with minimal labeled data | 18 | 23.4% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).