Submitted: 19 February 2026

Posted: 20 February 2026

Abstract

Keywords:

1. Introduction: How Do Machines Learn to Make Decisions?

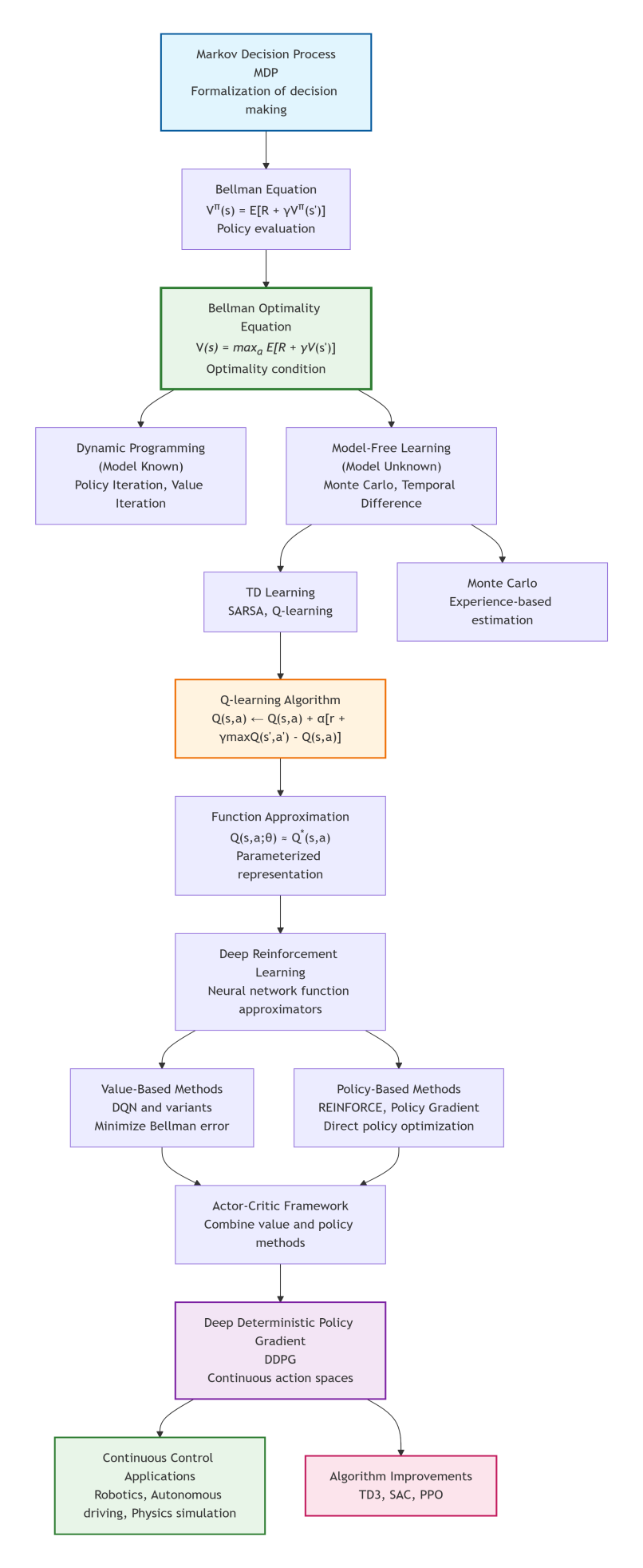

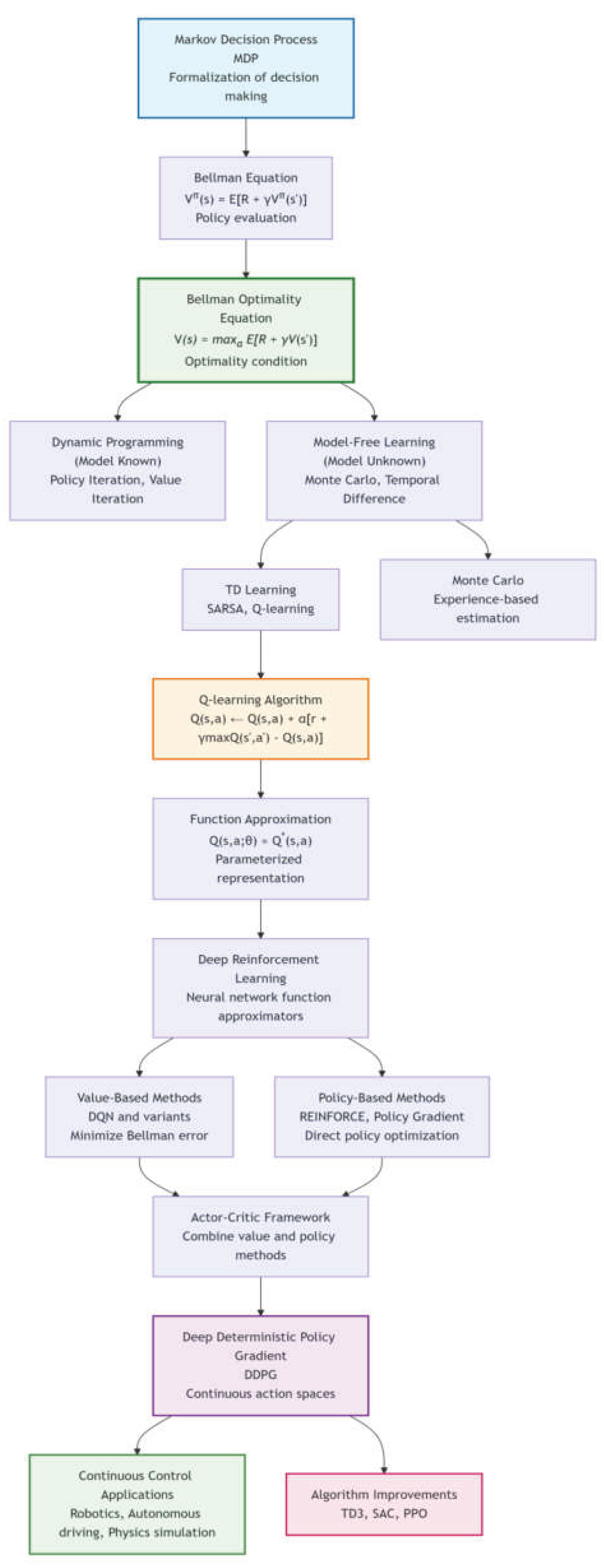

2. The Mathematical Foundation of Decision-Making: MDP

- (1) State space ($\mathcal{S}$): The set of all possible situations of the environment.

- (2) Action space ($\mathcal{A}$): The set of operations the agent can perform.

- (3) Transition probability ($P(s' \mid s, a)$): The probability distribution over next states $s'$ after taking action $a$ in state $s$.

- (4) Reward function ($R(s, a)$): The environment’s immediate feedback for a state-action pair.

- (5) Discount factor ($\gamma$): Measures the present value of future rewards ($0 \le \gamma \le 1$).
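The five components above map naturally onto a small data structure. The following is a minimal sketch, assuming a finite (tabular) MDP; the class name `MDP`, the helper `discounted_return`, and the two-state toy problem are illustrative assumptions and do not come from the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MDP:
    """Hypothetical container for a finite MDP (S, A, P, R, gamma)."""
    states: tuple    # state space S
    actions: tuple   # action space A
    P: dict          # P[(s, a)] -> {next_state: probability}
    R: dict          # R[(s, a)] -> immediate reward
    gamma: float     # discount factor, 0 <= gamma <= 1

    def validate(self):
        """Check that gamma is in range and every P(. | s, a) sums to 1."""
        if not 0.0 <= self.gamma <= 1.0:
            raise ValueError("gamma must lie in [0, 1]")
        for s in self.states:
            for a in self.actions:
                total = sum(self.P[(s, a)].values())
                if abs(total - 1.0) > 1e-9:
                    raise ValueError(f"P(.|{s},{a}) sums to {total}")
        return True


def discounted_return(rewards, gamma):
    """Return r_0 + gamma * r_1 + gamma^2 * r_2 + ... for a reward sequence."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g


# Made-up two-state example, for illustration only.
toy = MDP(
    states=("idle", "busy"),
    actions=("wait", "work"),
    P={
        ("idle", "wait"): {"idle": 1.0},
        ("idle", "work"): {"busy": 0.8, "idle": 0.2},
        ("busy", "wait"): {"idle": 1.0},
        ("busy", "work"): {"busy": 0.9, "idle": 0.1},
    },
    R={
        ("idle", "wait"): 0.0,
        ("idle", "work"): -1.0,
        ("busy", "wait"): 0.0,
        ("busy", "work"): 2.0,
    },
    gamma=0.9,
)
toy.validate()
```

With `gamma = 0.5`, three unit rewards are worth `1 + 0.5 + 0.25 = 1.75`, which shows how the discount factor shrinks the weight of later rewards.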

3. From Policy Evaluation to Optimal Policy: The Evolution of the Bellman Equation

4. Model-Free Learning: From Theory to Practice

5. The Rise of Deep Reinforcement Learning

6. The Deep Deterministic Policy Gradient (DDPG) Algorithm

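| Algorithm: Deep Deterministic Policy Gradient (DDPG) |
|---|
| Initialize actor network $\mu(s \mid \theta^{\mu})$ and critic network $Q(s, a \mid \theta^{Q})$ |
| Initialize corresponding target networks $\mu'$ and $Q'$ with $\theta^{\mu'} \leftarrow \theta^{\mu}$, $\theta^{Q'} \leftarrow \theta^{Q}$ |
| Initialize experience replay buffer $R$ |
| for each time step do |
| Select action $a = \mu(s \mid \theta^{\mu})$ + exploration noise |
| Execute $a$, observe reward $r$ and new state $s'$ |
| Store transition $(s, a, r, s')$ in $R$ |
| Randomly sample a minibatch of $N$ transitions $(s_i, a_i, r_i, s'_i)$ from $R$ |
| Update critic (based on modified Bellman optimality equation): set $y_i = r_i + \gamma\, Q'(s'_i, \mu'(s'_i \mid \theta^{\mu'}) \mid \theta^{Q'})$ |
| Minimize $L = \frac{1}{N} \sum_i \left( y_i - Q(s_i, a_i \mid \theta^{Q}) \right)^2$ |
| Update actor (approximate policy improvement): $\nabla_{\theta^{\mu}} J \approx \frac{1}{N} \sum_i \nabla_a Q(s_i, a \mid \theta^{Q}) \big\vert_{a = \mu(s_i)} \, \nabla_{\theta^{\mu}} \mu(s_i \mid \theta^{\mu})$ |
| Soft update target networks: $\theta^{Q'} \leftarrow \tau \theta^{Q} + (1 - \tau)\, \theta^{Q'}$, $\theta^{\mu'} \leftarrow \tau \theta^{\mu} + (1 - \tau)\, \theta^{\mu'}$ |
| end for |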
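Three steps of the loop above (storing and sampling transitions, forming the critic's regression target, and soft-updating the target parameters) can be sketched in plain Python without a deep learning library. This is an illustrative sketch, not a full DDPG implementation: the names `ReplayBuffer`, `td_targets`, `critic_loss`, and `soft_update`, the hand-written stand-in "networks", and all numeric values are assumptions. The gradient steps for the actor and critic are omitted, since they would normally rely on an automatic differentiation framework, and terminal states are not handled because the listing does not mention them.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-capacity store of (s, a, r, s_next) transitions."""

    def __init__(self, capacity, seed=0):
        self.buffer = deque(maxlen=capacity)  # oldest transitions are evicted first
        self.rng = random.Random(seed)

    def store(self, s, a, r, s_next):
        self.buffer.append((s, a, r, s_next))

    def sample(self, batch_size):
        # Uniform sampling without replacement breaks temporal correlation.
        return self.rng.sample(list(self.buffer), batch_size)


def td_targets(batch, target_actor, target_critic, gamma):
    """y_i = r_i + gamma * Q'(s'_i, mu'(s'_i)), computed with the target networks."""
    return [r + gamma * target_critic(s_next, target_actor(s_next))
            for (_, _, r, s_next) in batch]


def critic_loss(batch, critic, targets):
    """Mean squared error between the targets y_i and Q(s_i, a_i)."""
    errors = [(y - critic(s, a)) ** 2 for (s, a, _, _), y in zip(batch, targets)]
    return sum(errors) / len(errors)


def soft_update(target_params, online_params, tau):
    """theta' <- tau * theta + (1 - tau) * theta', applied element-wise."""
    return [tau * w + (1.0 - tau) * w_t
            for w, w_t in zip(online_params, target_params)]


# Stand-in "networks" for scalar states and actions (assumptions for the demo).
def target_actor(s):
    return 0.1 * s


def target_critic(s, a):
    return -0.5 * s + 0.2 * a


def critic(s, a):
    return -0.4 * s + 0.1 * a


buffer = ReplayBuffer(capacity=1000)
for t in range(50):
    s = float(t % 5)
    a = 0.1 * s
    buffer.store(s, a, -abs(s - 2.0), s + a)  # made-up transition

batch = buffer.sample(8)
y = td_targets(batch, target_actor, target_critic, gamma=0.99)
loss = critic_loss(batch, critic, y)
new_target_params = soft_update([0.0, 0.0], [1.0, 2.0], tau=0.1)
```

A small `tau` makes the target parameters trail the online parameters slowly, which keeps the regression targets `y_i` from shifting abruptly between updates.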

7. Frontier Developments and a Unified Theoretical Perspective

8. Conclusion: The Science of Intelligent Decision-Making Within a Unified Framework

References

- Sutton, R. S., & Barto, A. G. (2018). Reinforcement Learning: An Introduction (2nd ed.). MIT Press.

- Bellman, R. (1957). Dynamic Programming. Princeton University Press.

- Watkins, C. J. C. H., & Dayan, P. (1992). Q-learning. Machine Learning, 8(3-4), 279–292.

- Mnih, V., Kavukcuoglu, K., Silver, D., et al. (2015). Human-level control through deep reinforcement learning. Nature, 518(7540), 529–533.

- Lillicrap, T. P., Hunt, J. J., Pritzel, A., Heess, N., Erez, T., Tassa, Y., Silver, D., & Wierstra, D. (2016). Continuous control with deep reinforcement learning. International Conference on Learning Representations (ICLR).

- Silver, D., Lever, G., Heess, N., Degris, T., Wierstra, D., & Riedmiller, M. (2014). Deterministic policy gradient algorithms. International Conference on Machine Learning (ICML).

- Fujimoto, S., van Hoof, H., & Meger, D. (2018). Addressing function approximation error in actor-critic methods. International Conference on Machine Learning (ICML).

- Haarnoja, T., Zhou, A., Abbeel, P., & Levine, S. (2018). Soft actor-critic: Off-policy maximum entropy deep reinforcement learning with a stochastic actor. International Conference on Machine Learning (ICML).

- Schulman, J., Wolski, F., Dhariwal, P., Radford, A., & Klimov, O. (2017). Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347.

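| Theoretical Stage | Core Concept | Mathematical Expression | Representative Algorithms | Applicable Scenarios |
|---|---|---|---|---|
| Problem Definition | Markov Decision Process | $(\mathcal{S}, \mathcal{A}, P, R, \gamma)$ | - | Decision problem formulation |
| Policy Evaluation | Bellman Equation | $V^{\pi}(s) = \sum_{a} \pi(a \mid s) \left[ R(s,a) + \gamma \sum_{s'} P(s' \mid s,a)\, V^{\pi}(s') \right]$ | Policy evaluation algorithms | Analyzing a given policy |
| Optimal Condition | Bellman Optimality Equation | $V^{*}(s) = \max_{a} \left[ R(s,a) + \gamma \sum_{s'} P(s' \mid s,a)\, V^{*}(s') \right]$ | Theoretical benchmark | Defining optimal policy standard |
| Model-Based Solution | Dynamic Programming | $V_{k+1}(s) = \max_{a} \left[ R(s,a) + \gamma \sum_{s'} P(s' \mid s,a)\, V_{k}(s') \right]$ | Value iteration, Policy iteration | Environments with known model |
| Model-Free Learning | Temporal Difference Learning | $V(s) \leftarrow V(s) + \alpha \left[ r + \gamma V(s') - V(s) \right]$ | SARSA, Q-learning | Environments with unknown model |
| High-Dimensional Extension | Function Approximation | $Q(s,a) \approx \hat{Q}(s,a; \mathbf{w})$ | Linear function approximation | Large state spaces |
| Deep Integration | Deep Q-Learning | $L(\theta) = \mathbb{E}\left[ \left( r + \gamma \max_{a'} Q(s',a'; \theta^{-}) - Q(s,a; \theta) \right)^{2} \right]$ | DQN and its variants | High-dimensional inputs like images |
| Policy Optimization | Policy Gradient | $\nabla_{\theta} J(\theta) = \mathbb{E}_{\pi_{\theta}}\left[ \nabla_{\theta} \log \pi_{\theta}(a \mid s)\, Q^{\pi_{\theta}}(s,a) \right]$ | REINFORCE | Direct policy optimization |
| Integrated Methods | Actor-Critic | actor: $\theta \leftarrow \theta + \alpha\, \delta\, \nabla_{\theta} \log \pi_{\theta}(a \mid s)$; critic: $\delta = r + \gamma V(s') - V(s)$ | A3C, DDPG | Continuous action spaces |
| Continuous Control | Deterministic Policy Gradient | $\nabla_{\theta} J(\theta) = \mathbb{E}\left[ \nabla_{a} Q(s,a) \big\vert_{a = \mu_{\theta}(s)} \, \nabla_{\theta} \mu_{\theta}(s) \right]$ | DDPG, TD3, SAC | Robot control, etc. |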
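The contrast between the model-based and model-free rows of the table can be made concrete on a toy problem. The sketch below is illustrative only: the deterministic two-state MDP, the function names `value_iteration` and `q_learning`, and the hyperparameters (`alpha`, `epsilon`, step count, seed) are assumptions chosen for the demo, not values from the article. Value iteration reads the transition and reward tables directly, whereas Q-learning only sees sampled transitions.

```python
import random

# Hypothetical deterministic MDP: states 0 and 1; action 0 = stay, 1 = move.
N_STATES, N_ACTIONS, GAMMA = 2, 2, 0.9
NEXT = [[0, 1], [1, 0]]              # NEXT[s][a] -> next state
REWARD = [[0.0, 0.0], [1.0, 0.0]]    # only staying in state 1 pays a reward


def value_iteration(tol=1e-10):
    """Model-based: apply the Bellman optimality backup until values stop changing."""
    V = [0.0] * N_STATES
    while True:
        V_new = [max(REWARD[s][a] + GAMMA * V[NEXT[s][a]] for a in range(N_ACTIONS))
                 for s in range(N_STATES)]
        if max(abs(x - y) for x, y in zip(V, V_new)) < tol:
            return V_new
        V = V_new


def q_learning(steps=20000, alpha=0.5, epsilon=0.3, seed=0):
    """Model-free: update Q from sampled transitions with epsilon-greedy exploration."""
    rng = random.Random(seed)
    Q = [[0.0] * N_ACTIONS for _ in range(N_STATES)]
    s = 0
    for _ in range(steps):
        if rng.random() < epsilon:
            a = rng.randrange(N_ACTIONS)                         # explore
        else:
            a = max(range(N_ACTIONS), key=lambda act: Q[s][act])  # exploit
        r, s_next = REWARD[s][a], NEXT[s][a]                      # environment step
        # Temporal-difference update toward r + gamma * max_a' Q(s', a').
        Q[s][a] += alpha * (r + GAMMA * max(Q[s_next]) - Q[s][a])
        s = s_next
    return Q


V_star = value_iteration()
Q = q_learning()
greedy_policy = [max(range(N_ACTIONS), key=lambda act: Q[s][act])
                 for s in range(N_STATES)]
```

For this toy problem the optimal values can be checked by hand: staying in state 1 forever is worth 1 / (1 - 0.9) = 10, and state 0 is one discounted step away, so it is worth 0.9 × 10 = 9. The learned greedy policy should therefore move out of state 0 and stay in state 1.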

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.