Submitted: 13 January 2026

Posted: 13 January 2026

Abstract

Keywords:

Introduction

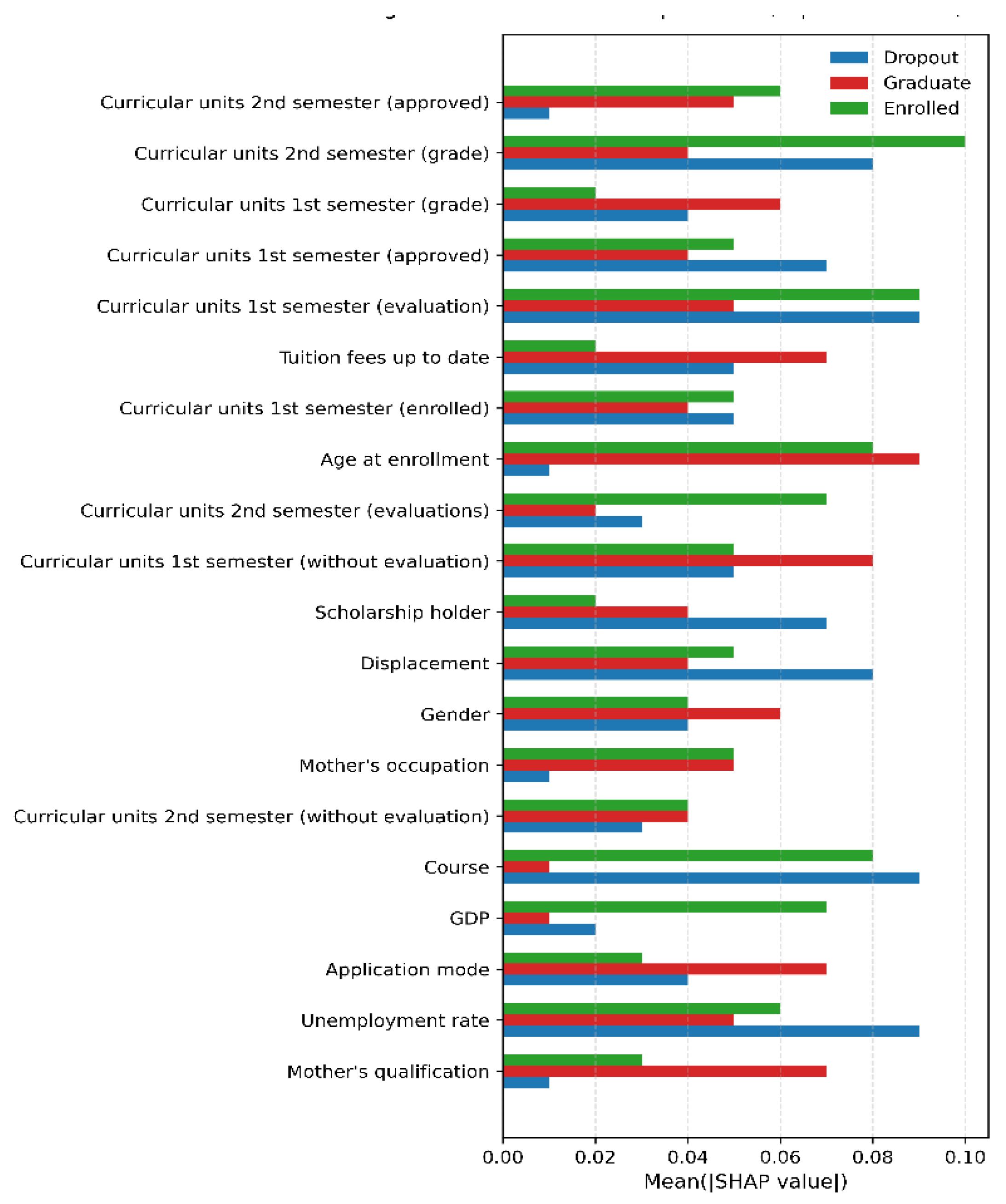

- RQ1: Which predictors exert the greatest influence on student retention outcomes in a multi-class higher education context?

- RQ2: Which machine learning model demonstrates the strongest predictive performance, robustness, and generalisability for multi-class student retention prediction?

Contributions

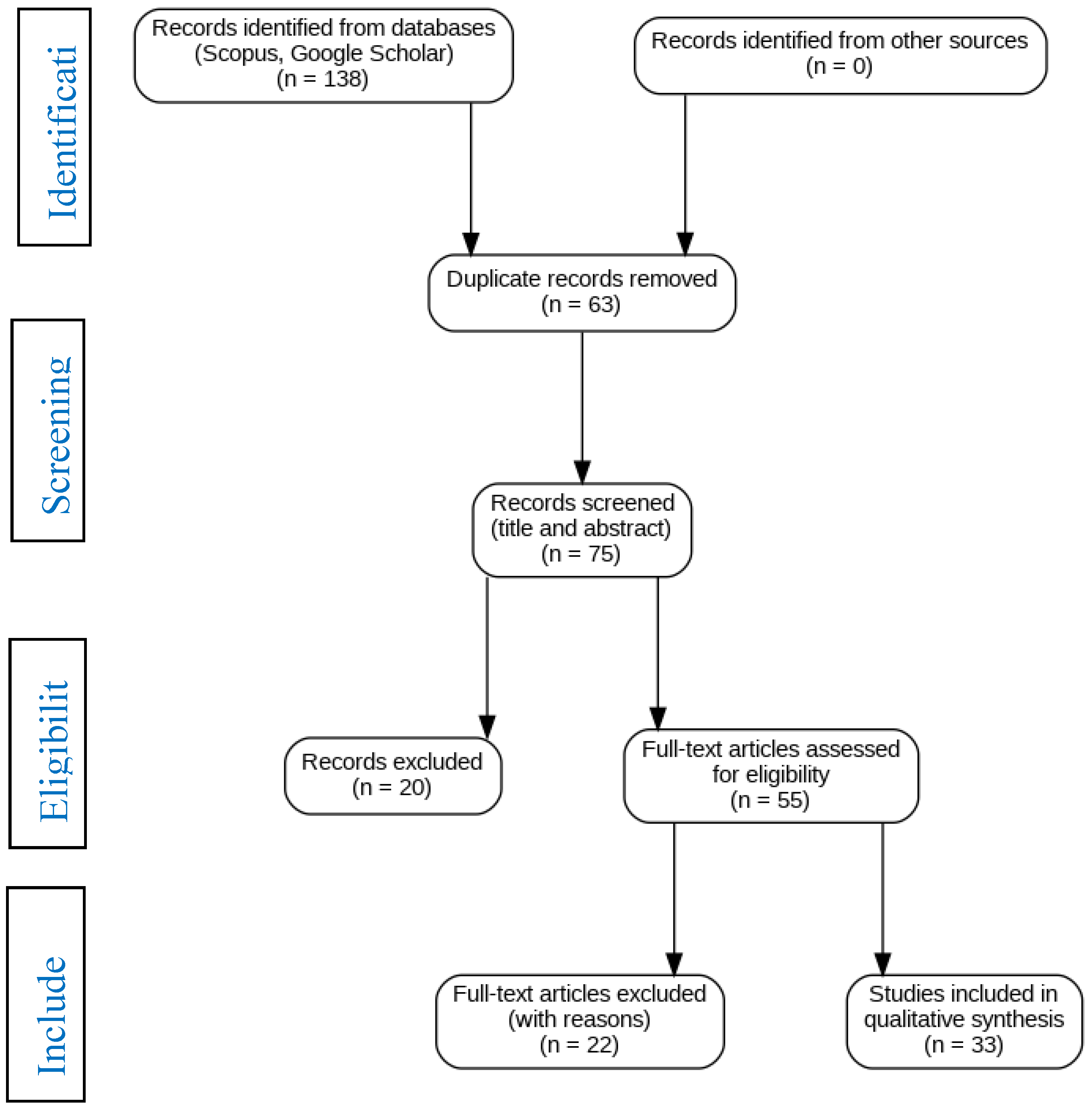

Related Literature

Materials and Methods

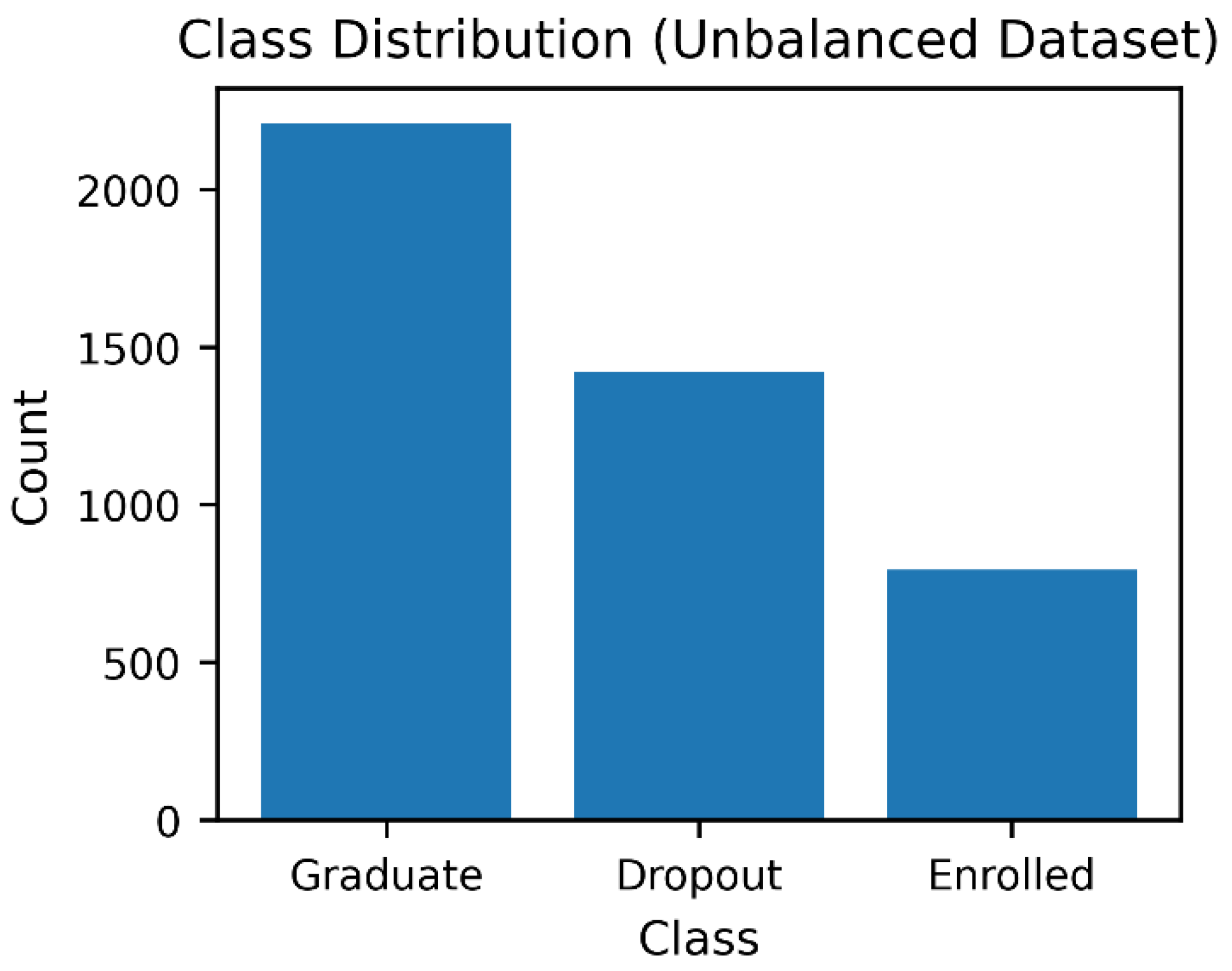

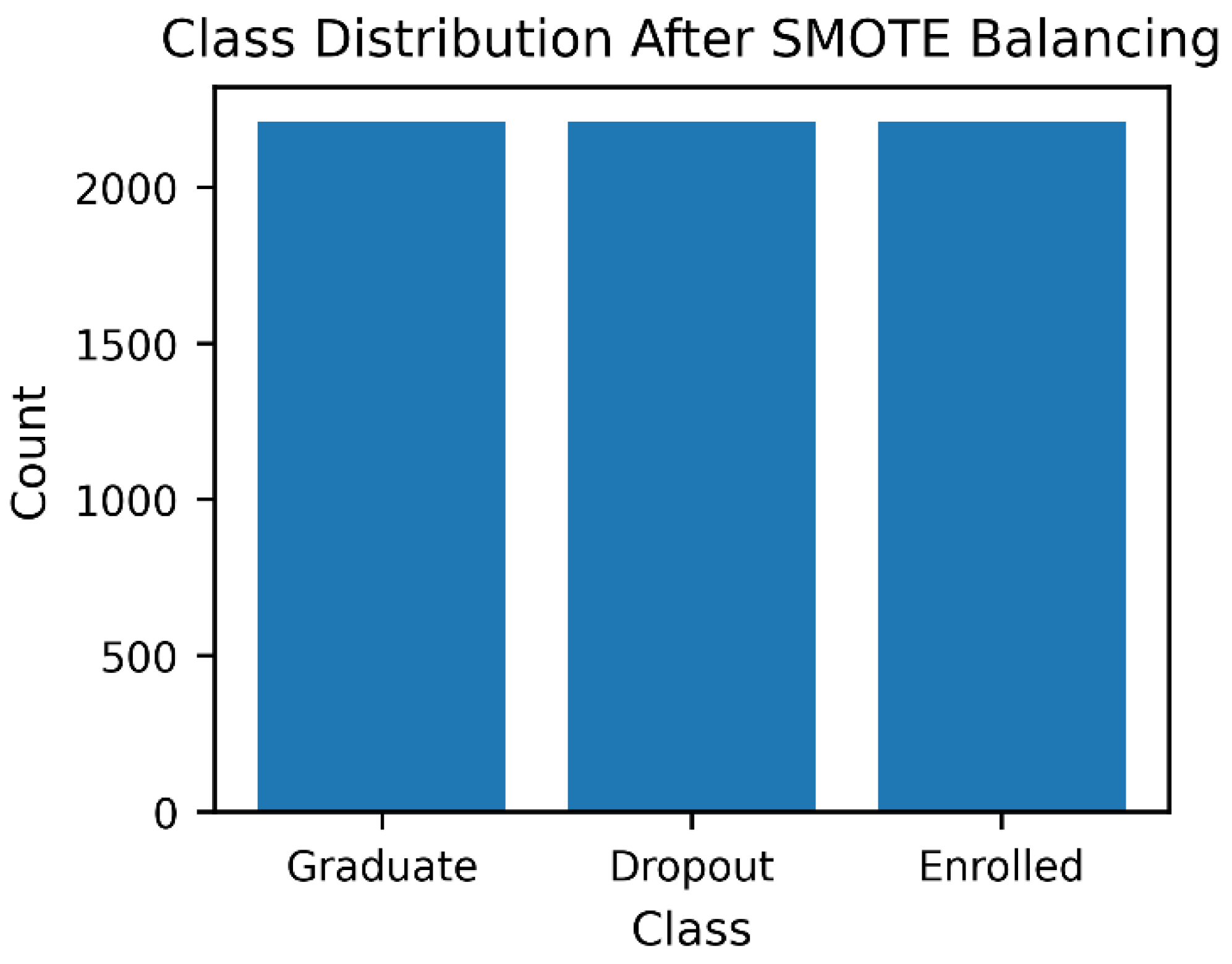

Dataset Description

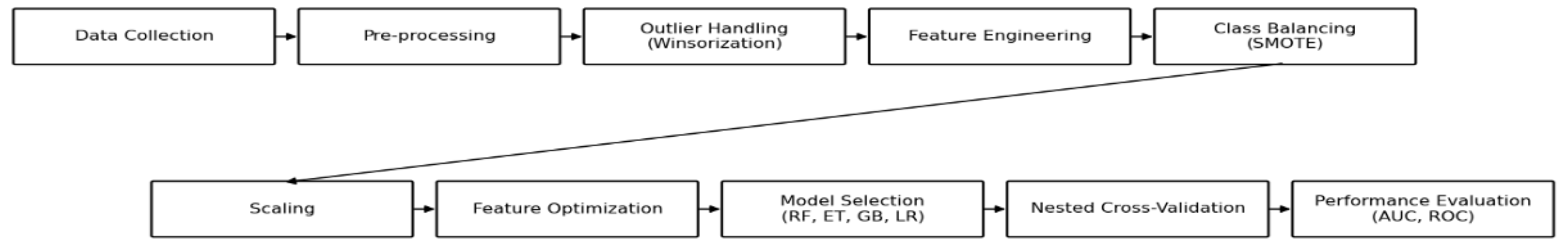

Methodological Framework

Preprocessing: Outlier Handling, Encoding, and Scaling

Feature Optimization Comparison

Results

Evaluation Metrics

Model Interpretation Using SHAP

SHAP Feature Plot

Discussion

Conclusions

References

- Peck, A.; Callahan, K. Connecting student employment and leadership development. New Dir. Stud. Leadersh. 2019, 2019, 9–22. [CrossRef]

- Abuzaid, A.; Alkronz, E. A comparative study on science context. Ital. J. Appl. Stat. 2024, 36, 85–99. [CrossRef]

- Prasanth, A.; Alqahtani, H. Predictive modelling of student behaviour for early dropout detection in universities using machine learning techniques. In Proc. 8th IEEE Int. Conf. Eng. Technol. Appl. Sci. (ICETAS); IEEE: 2023; pp. 1–5. [CrossRef]

- Araque, F.; Roldán, C.; Salguero, A. Factors influencing university dropout rates. Comput. Educ. 2009, 53, 563–574. [CrossRef]

- Abdullah, A.; Ali, R.H.; Koutaly, R.; Khan, T.A.; Ahmad, I. Enhancing student retention: Predictive machine learning models for identifying and preventing university dropout. In Proceedings of the 2025 International Conference on Innovation in Artificial Intelligence and Internet of Things (AIIT), Dubai, United Arab Emirates, 18–20 March 2025; IEEE: New York, NY, USA, 2025; pp. 1–6. [CrossRef]

- Maples, B. Dropping out of university. Uni Compare 2021. [CrossRef]

- Chacha, B.R.C.; López, W.L.G.; Guerrero, V.X.V.; Villacis, W.G.V. Student dropout model based on logistic regression. In Int. Conf. Appl. Technol.; Springer: Cham, 2019; pp. 321–333. [CrossRef]

- Delen, D. A comparative analysis of machine learning techniques for student retention management. Decis. Support Syst. 2010, 49, 498–506. [CrossRef]

- Edvoy. UK universities in financial stress. Edvoy 2023. [CrossRef]

- Meseret, Y.M.; Sunonora, S. Global challenges of students’ dropout: A prediction model development using machine learning algorithms on higher education datasets. SHS Web Conf. 2021, 129, 09001. [CrossRef]

- Dew, M.A.; Kumiadi, F.I.; Murad, D.F.; Rabiha, S.G.; Romli, A. Machine learning algorithms for early predicting dropout student online learning. In Proc. IEEE 9th Int. Conf. Comput. Eng. Des. (ICCED); 2023. [CrossRef]

- Matz, S.C.; Bukow, C.S.; Peters, H.; Deacons, C.; Dinu, A.; Stachl, C. Using machine learning to predict student retention from socio-demographic characteristics and app-based engagement metrics. Sci. Rep. 2023, 13, 5705. [CrossRef]

- Salloum, S.A.; Basiouni, A.; Alfansal, R.; Salloum, A.; Shaalam, K. Predicting student retention in higher education using machine learning. In Using Generative Intelligence to Improve Human Education and Well-Being; 2024; pp. 197–206. [CrossRef]

- Sharma, N.; Sharma, M.K.; Garg, U. Predicting academic performance of students using machine learning models. In Proc. Int. Conf. Artif. Intell. Smart Commun. (AISC); 2023. [CrossRef]

- Sun, Z.; Song, Q.; Zhu, X.; Sun, H.; Xu, B.; Zhou, Y. Ensemble methods for classifying imbalanced data. Pattern Recognit. 2015, 48, 1623–1637. [CrossRef]

- Villegas-Ch, W.; Govea, J.; Revelo-Tapia, S. Improving student retention in higher education through machine learning. J. Innov. Sustain. Teach. High. Educ. Inst. 2023, 15, 14512. [CrossRef]

- Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; et al. The PRISMA 2020 statement: An updated guideline for reporting systematic reviews. BMJ 2021, 372, n71. [CrossRef]

- Realinho, V.; Vieira Martins, M.; Machado, J.; Baptista, L. Predict students’ dropout and academic success. UCI Mach. Learn. Repos. 2021. [CrossRef]

- Lundberg, S.M.; Lee, S.-I. A Unified Approach to Interpreting Model Predictions. Advances in Neural Information Processing Systems 2017, 30, 4765–4774. [CrossRef]

- Lundberg, S.M.; Erion, G.; Chen, H.; DeGrave, A.; Prutkin, J.M.; Nair, B.; Katz, R.; Himmelfarb, J.; Bansal, N.; Lee, S.-I. From Local Explanations to Global Understanding with Explainable AI for Trees. Nature Machine Intelligence 2020, 2, 56–67. [CrossRef]

- Molnar, C. Interpretable Machine Learning; Lulu Press: 2022. https://christophm.github.io/interpretable-ml-book/.

- Breiman, L. Random Forests. Machine Learning 2001, 45, 5–32. [CrossRef]

- Geurts, P.; Ernst, D.; Wehenkel, L. Extremely Randomized Trees. Machine Learning 2006, 63, 3–42. [CrossRef]

- Friedman, J.H. Greedy Function Approximation: A Gradient Boosting Machine. Annals of Statistics 2001, 29, 1189–1232. [CrossRef]

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; et al. Scikit-learn: Machine Learning in Python. Journal of Machine Learning Research 2011, 12, 2825–2830. https://www.jmlr.org/papers/v12/pedregosa11a.html.

- Varma, S.; Simon, R. Bias in Error Estimation When Using Cross-Validation for Model Selection. BMC Bioinformatics 2006, 7, 91. [CrossRef]

- Romero, C.; Ventura, S. Educational Data Mining and Learning Analytics: An Updated Survey. WIREs Data Mining and Knowledge Discovery 2020, 10, e1355. [CrossRef]

- Sokolova, M.; Lapalme, G. A Systematic Analysis of Performance Measures for Classification Tasks. Information Processing & Management 2009, 45, 427–437. [CrossRef]

- Fawcett, T. An Introduction to ROC Analysis. Pattern Recognition Letters 2006, 27, 861–874. [CrossRef]

- Hosmer, D.W.; Lemeshow, S.; Sturdivant, R.X. Applied Logistic Regression, 3rd ed.; Wiley: 2013. [CrossRef]

- Menard, S. Logistic Regression: From Introductory to Advanced Concepts and Applications; Sage: 2010. [CrossRef]

- Tinto, V. Dropout from Higher Education: A Theoretical Synthesis of Recent Research. Review of Educational Research 1975, 45, 89–125. [CrossRef]

- Bean, J.P. Dropouts and Turnover: The Synthesis and Test of a Causal Model of Student Attrition. Research in Higher Education 1980, 12, 155–187. [CrossRef]

- Pascarella, E.T.; Terenzini, P.T. How College Affects Students: A Third Decade of Research; Jossey-Bass: San Francisco, CA, USA, 2005. [CrossRef]

- Ribeiro, M.T.; Singh, S.; Guestrin, C. “Why Should I Trust You?” Explaining the Predictions of Any Classifier. Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining 2016, 1135–1144. [CrossRef]

| Study (Short Form) | Dataset / Sample | Model(s) Used | Key Findings | Identified Gaps |
|---|---|---|---|---|
| Dew et al. (2023) | Dataset not reported | KNN, LR, SVM, FNN, RF, GB | Academic performance is identified as the strongest predictor of student retention | Narrow feature space; absence of non-academic predictors (e.g., financial, socio-economic); no interpretability analysis (e.g., SHAP) |
| Matz et al. (2023) | Large dataset (50,095 records) | EN, RF | Applied rigorous preprocessing (SMOTE, scaling); RF achieved 75% AUC | Predictive performance remained moderate; lacked interpretability; limited insight into feature contributions |
| Salloum et al. (2024) | 4,400 student multi-class dataset | Random Forest | Achieved 76% accuracy | Limited model comparison; no handling of class imbalance; reliance on accuracy alone |
| Abdullah et al. (2025) | 4,424 student records | DT, RF, SVM, MLP, XGB | Reported 94% accuracy | Accuracy inflated due to class imbalance; no class balancing (e.g., SMOTE); potential majority-class bias |
| Prasanth & Alqahtani (2023) | Unspecified dataset | LR, SVC | Achieved 82.3% and 80.9% accuracy, respectively | Missing key predictors (e.g., financial status, family background) |
| Villegas-Ch et al. (2023) | Over 10,000 student records | Machine learning models (including neural networks) | Reported 85% accuracy | Feature set too narrow; excluded financial and socio-economic variables; limited model diversity |

| Method | Feature Count | Models Tested | Best Model | Accuracy (%) | AUC Mean (%) | AUC SD |

| Literature Baseline | 34 | NN, DT, SVM, LR, RF, EN | N/A | 69 - 77 | N/A | N/A |

| Initial Full Feature Set | 34 | RF, ET, GB, LR | Extra Trees | 77.0 | 96.0 | 0.0047 |

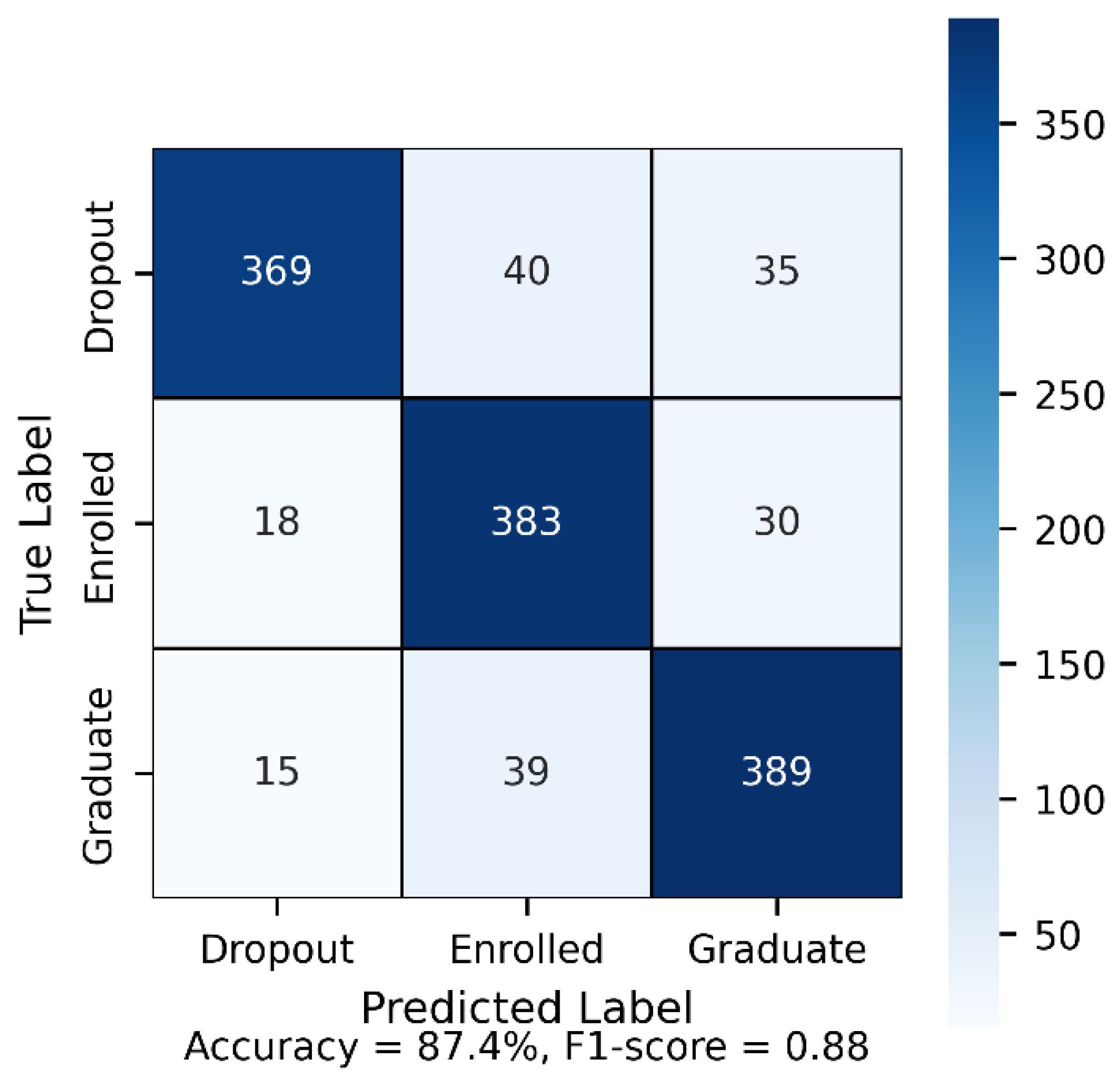

| Optimal Feature Set | 28 | RF, ET, GB, LR | Extra Trees | 87.4 | 96.0 | 0.0057 |

| Reduced Feature Set | 20 | RF, ET, GB, LR | Extra Trees | 85.0 | 96.0 | 0.0045 |

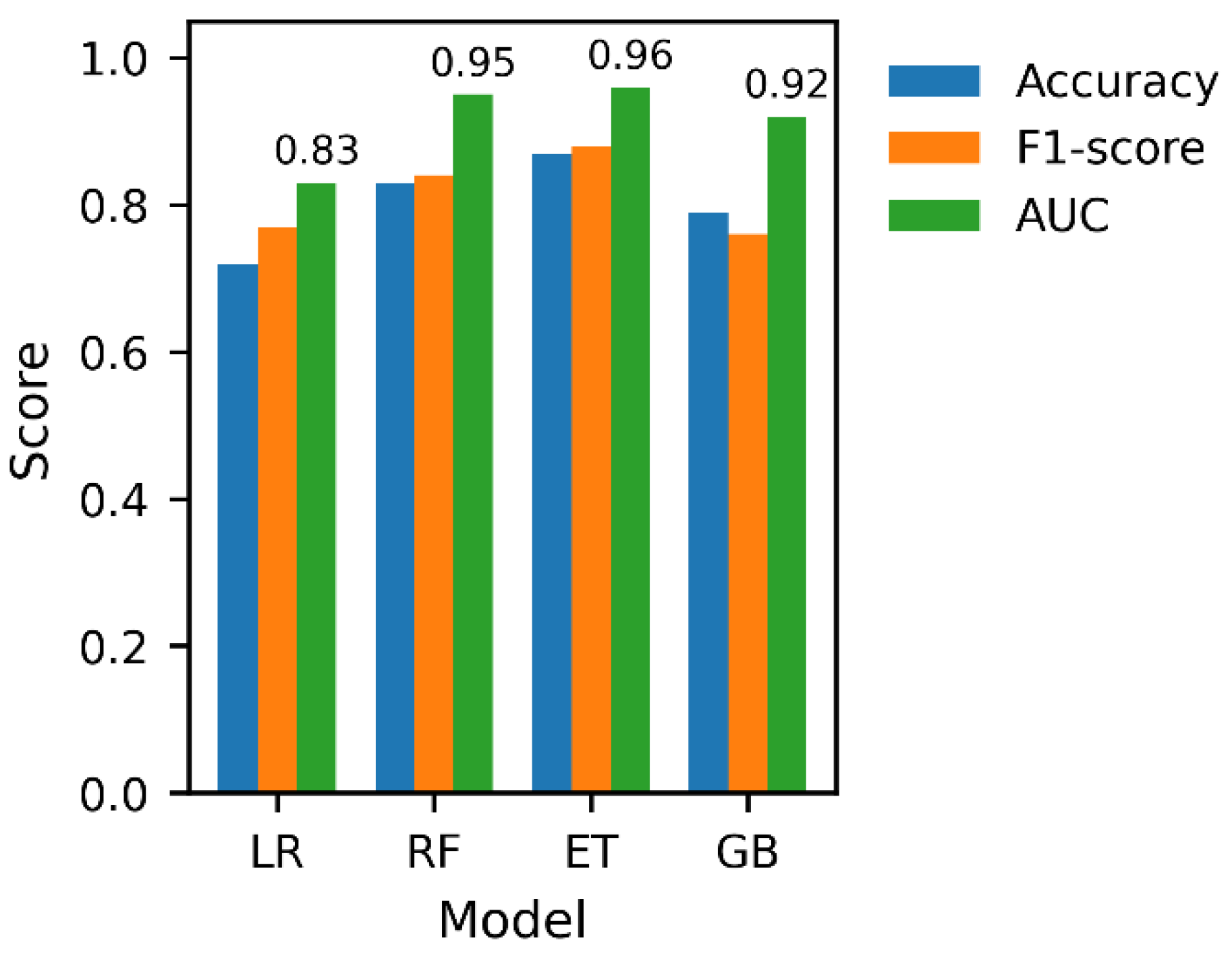

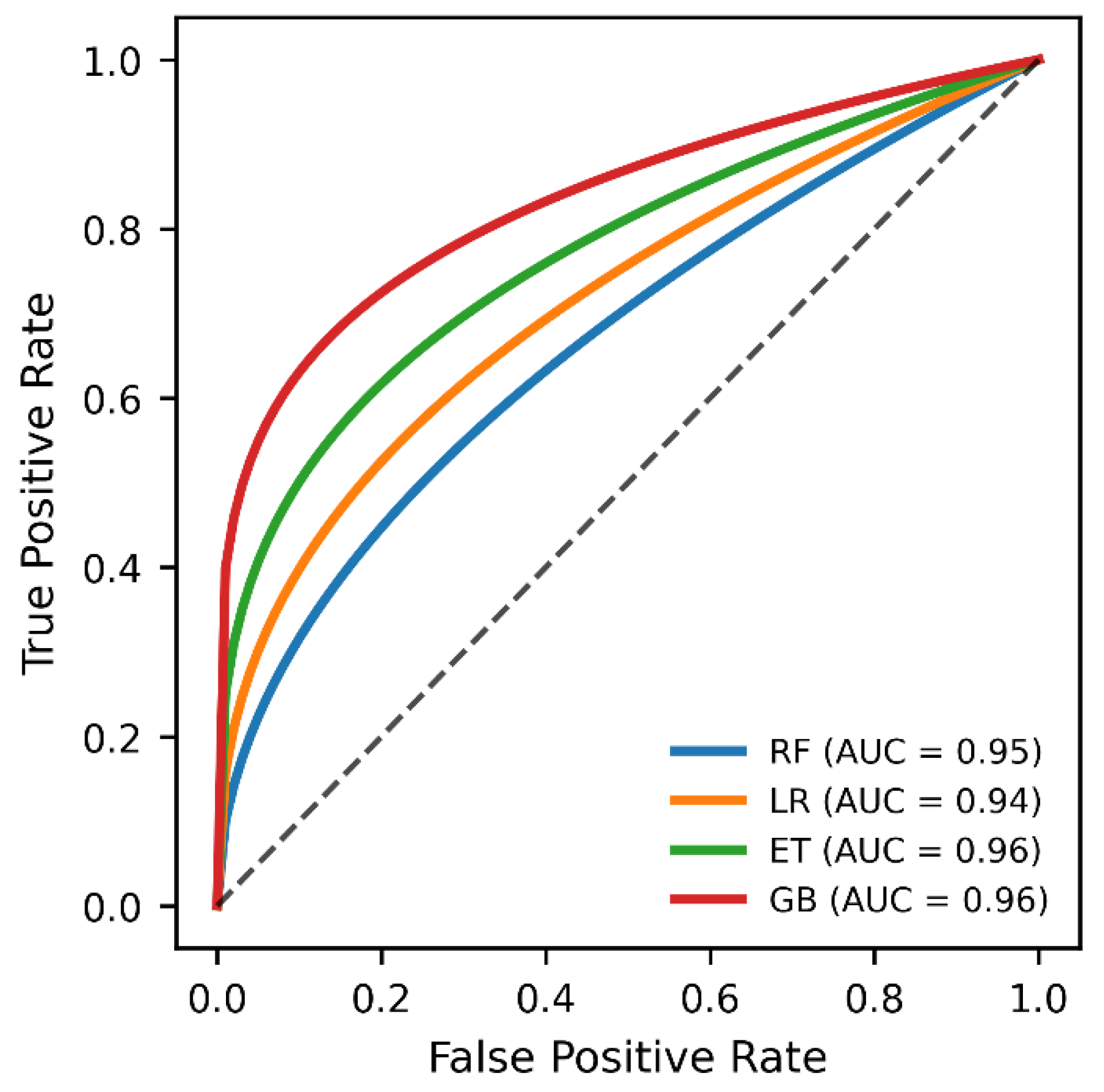

| Model | AUC Mean (%) | AUC SD | Accuracy Mean (%) | Accuracy SD |
|---|---|---|---|---|
| Random Forest | 95.0 | 0.0057 | 86.0 | 0.0110 |
| Extra Trees | 96.0 | 0.0053 | 87.0 | 0.0120 |
| Gradient Boosting | 93.0 | 0.0060 | 79.0 | 0.0080 |
| Logistic Regression | 83.0 | 0.0074 | 72.0 | 0.0090 |

| Study | Target Type | Class Balancing | Model(s) | Best Metric Reported | Key Limitation |
|---|---|---|---|---|---|
| Abdullah et al. (2025) | Multi-class (Dropout, Enrolled, Graduate) | None | DT, RF, SVM, MLP, XGB | Accuracy = 93.5% | Potential performance inflation due to unbalanced class distribution |
| Salloum et al. (2024) | Multi-class | None | Random Forest | Accuracy = 76.7% | No class balancing; reliance on accuracy alone |
| This study | Multi-class | SMOTE | RF, ET, GB, LR | Accuracy = 87.4%, AUC = 0.96 | Increased computational complexity; reliance on a single-institution dataset |
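The limitation noted in the comparison above, that accuracy can be inflated when classes are unbalanced, can be illustrated with a small sketch. The label counts below are hypothetical and are not taken from any of the cited studies or from this study's dataset; the helper names `accuracy` and `macro_recall` are introduced here for illustration only. The sketch assumes a trivial classifier that always predicts the majority class.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_recall(y_true, y_pred):
    """Unweighted mean of per-class recall (also called balanced accuracy)."""
    recalls = []
    for c in sorted(set(y_true)):
        support = sum(1 for t in y_true if t == c)
        hits = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        recalls.append(hits / support)
    return sum(recalls) / len(recalls)


# Hypothetical, deliberately imbalanced three-class label set (assumption).
y_true = ["Graduate"] * 80 + ["Dropout"] * 15 + ["Enrolled"] * 5

# A degenerate model that always predicts the majority class.
y_pred = ["Graduate"] * len(y_true)

acc = accuracy(y_true, y_pred)
mr = macro_recall(y_true, y_pred)

print(f"accuracy     = {acc:.3f}")
print(f"macro recall = {mr:.3f}")
```

Under these assumed counts the degenerate model scores 0.800 on accuracy but only about 0.333 on macro-averaged recall, because it never identifies a single Dropout or Enrolled case. This is one reason a study might report AUC or per-class metrics alongside accuracy, and balance classes before training.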

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).