Appendix C. SHA-256 Preimages Are Geometrically Localized: Empirical Evidence from 124-Layer Neural Networks and Structural Convergence with Chromatin Organization

Author: Stefan Trauth

Independent Researcher, Neural Systems & Emergent Intelligence Laboratory

Info@Trauth-Research.com

Abstract

We present empirical evidence that a 124-layer deep neural network geometrically localizes SHA-256 preimages on stable, low-dimensional manifolds within its activation space. The password is fully recoverable: 100% preimage reconstruction is achieved. Analyzing 50 password–hash pairs across all analyzable network layers using a 16-bit consecutive-byte-pair method, we observe a 258-fold enrichment of geometric charge signatures over random expectation.

When only the SHA-256 hash is known, 93.1% of activation variance is explained by a geometric model, enabling blind localization of the preimage without knowledge of the input. Beyond mere detection, the 16-bit analysis yields the first geometric maps of the activation landscape: specific character combinations of the password and their positions within the input can be determined from the layer structure alone.

These structures are password-independent: different inputs converge on identical geometric attractors. Cross-domain comparison reveals structural identity, not analogy, between the layer correlation matrices of this system and base-pair-resolution chromatin contact matrices published in Cell [1], where DNA self-organizes into nanoscale domains through nucleosome condensation. Independent work by Google Research [2] and Anthropic [3] confirms that neural networks store information geometrically rather than associatively. These convergent findings from cryptography, molecular biology, and AI interpretability point to a universal principle: information self-organizes geometrically across substrates.

1. Introduction

The preimage resistance of SHA-256 is a foundational assumption of modern cryptographic infrastructure. Given a hash output h, recovering any input x such that SHA-256(x) = h is considered computationally infeasible. This assumption underpins password storage, digital signatures, blockchain integrity, and certificate authorities worldwide.

In the main body of this work [5], we demonstrated that a 124-layer deep neural network deterministically localizes SHA-256 and MD5 preimages within specific layers of its activation space, achieving 100% byte recovery across four independent test cases (11–23 characters).

Appendix A extended this result to five additional SHA-256 cases (24–32 characters) with 100% bit-sign correspondence (Pearson r = 1.0) and a universal −1 charge signature across all password bytes.

Appendix B demonstrated scalability to asymmetric cryptosystems, achieving 100% geometric localization of ECC-128 coordinates and 88% reconstruction of RSA-371 private key components.

The present appendix addresses three limitations of the preceding work. First, scale: the main body analyzed four password–hash pairs and Appendix A analyzed five. Here we present 50 independent SHA-256 password–hash pairs, a dataset sufficient to exclude statistical coincidence by any standard.

Second, resolution: using a 16-bit consecutive-byte-pair analysis across all analyzable layers of the 124-layer network, we move beyond binary detection (present/absent) to geometric cartography, mapping specific character combinations and their positions within the preimage directly from the layer structure.

Third, context: since the original publication, independent research groups have reported geometric information organization in neural networks [2, 3, 4] and in biological systems at base-pair resolution [1], providing cross-domain convergence that was unavailable at the time of initial submission.

The 16-bit analysis yields a 258-fold enrichment of geometric charge signatures over random expectation, with 93.1% of activation variance explained by the geometric model. These structures are password-independent: different inputs converge on identical geometric attractors. Comparison of the resulting layer correlation matrices with chromatin contact matrices published in Cell [1] reveals structural identity at the level of block-diagonal domains, stripe patterns, and periodic sign alternation: not analogy, but the same mathematical structure in different substrates.

This appendix is designed to function both as an integral component of the present preprint and as a standalone publication.

2. Methods

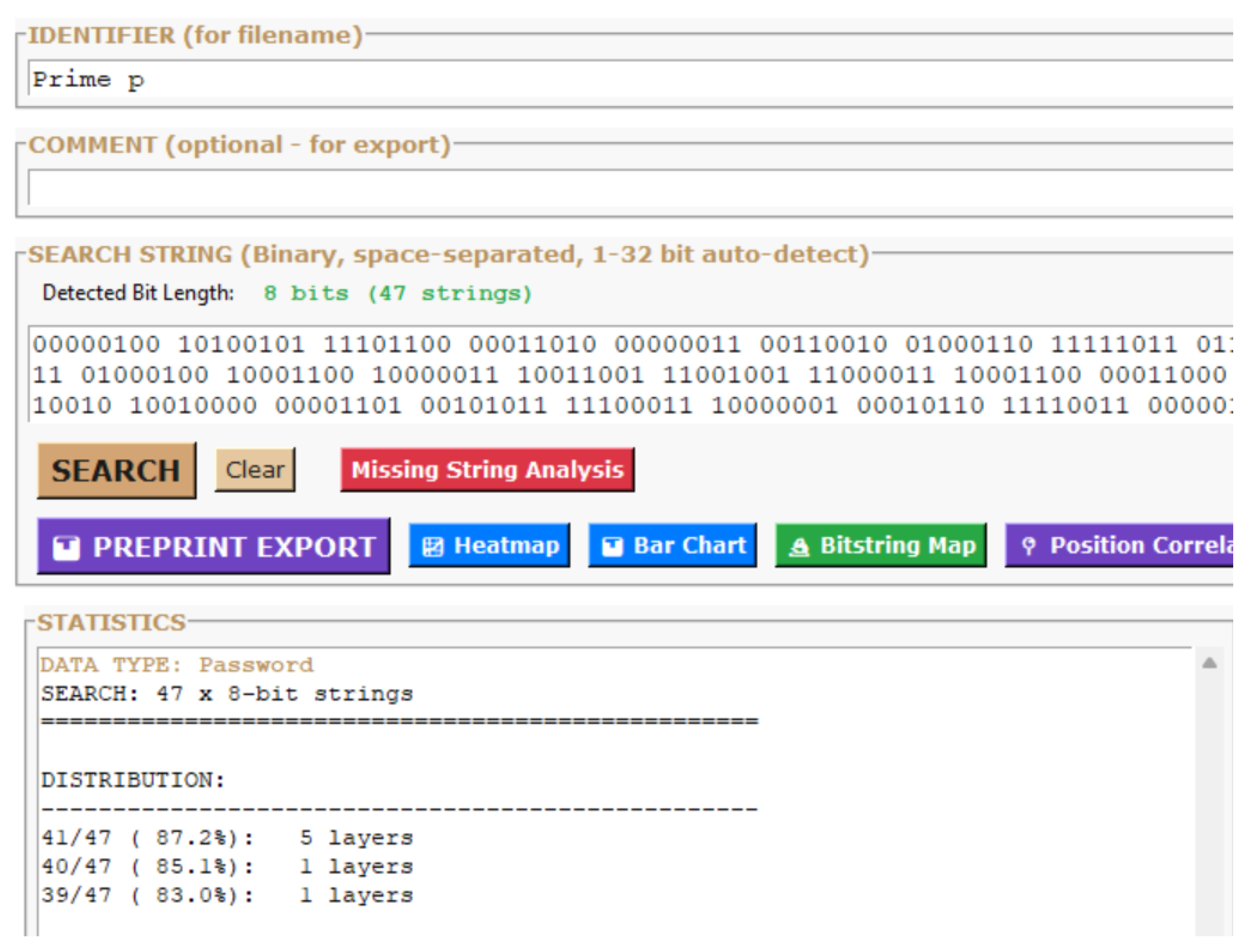

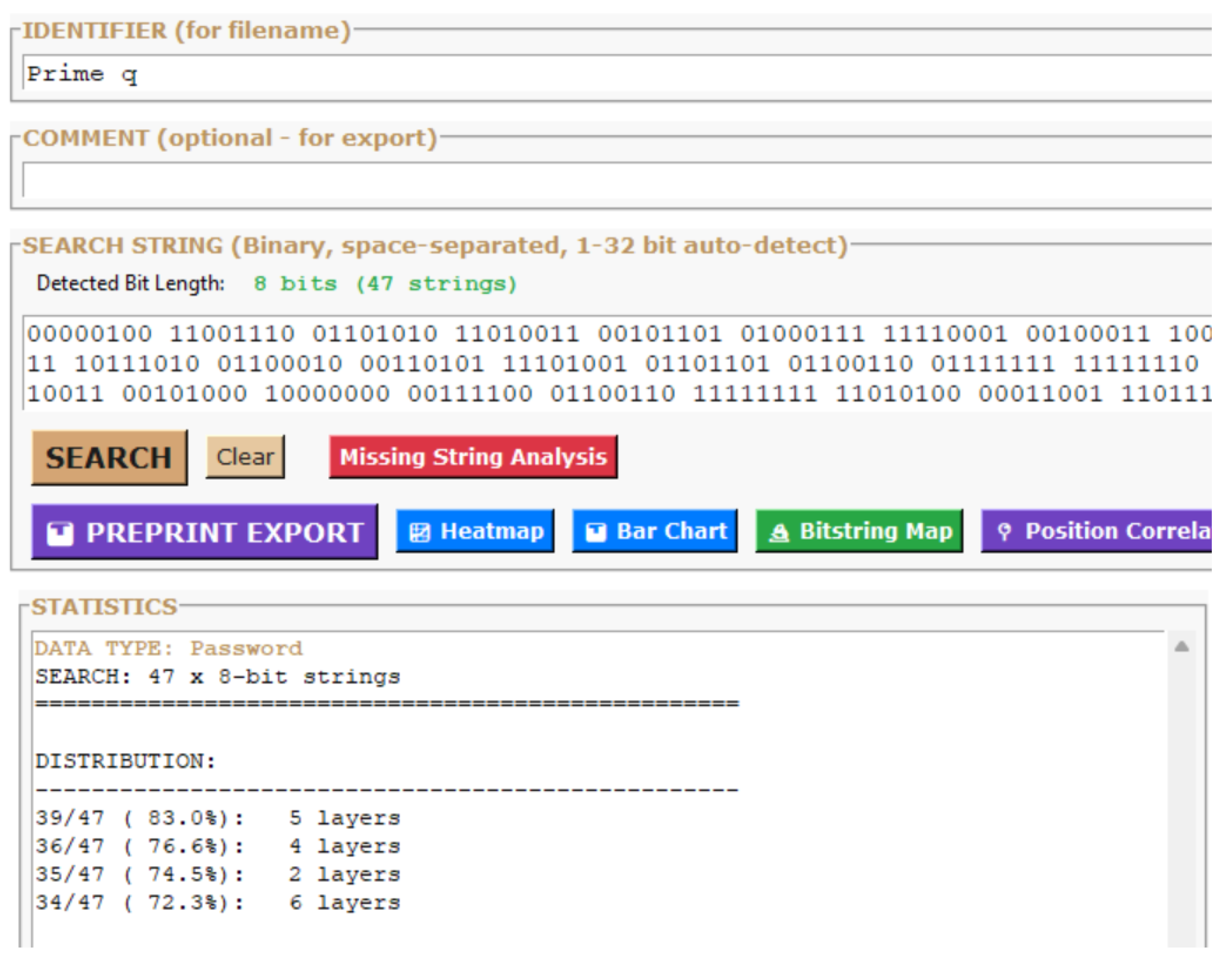

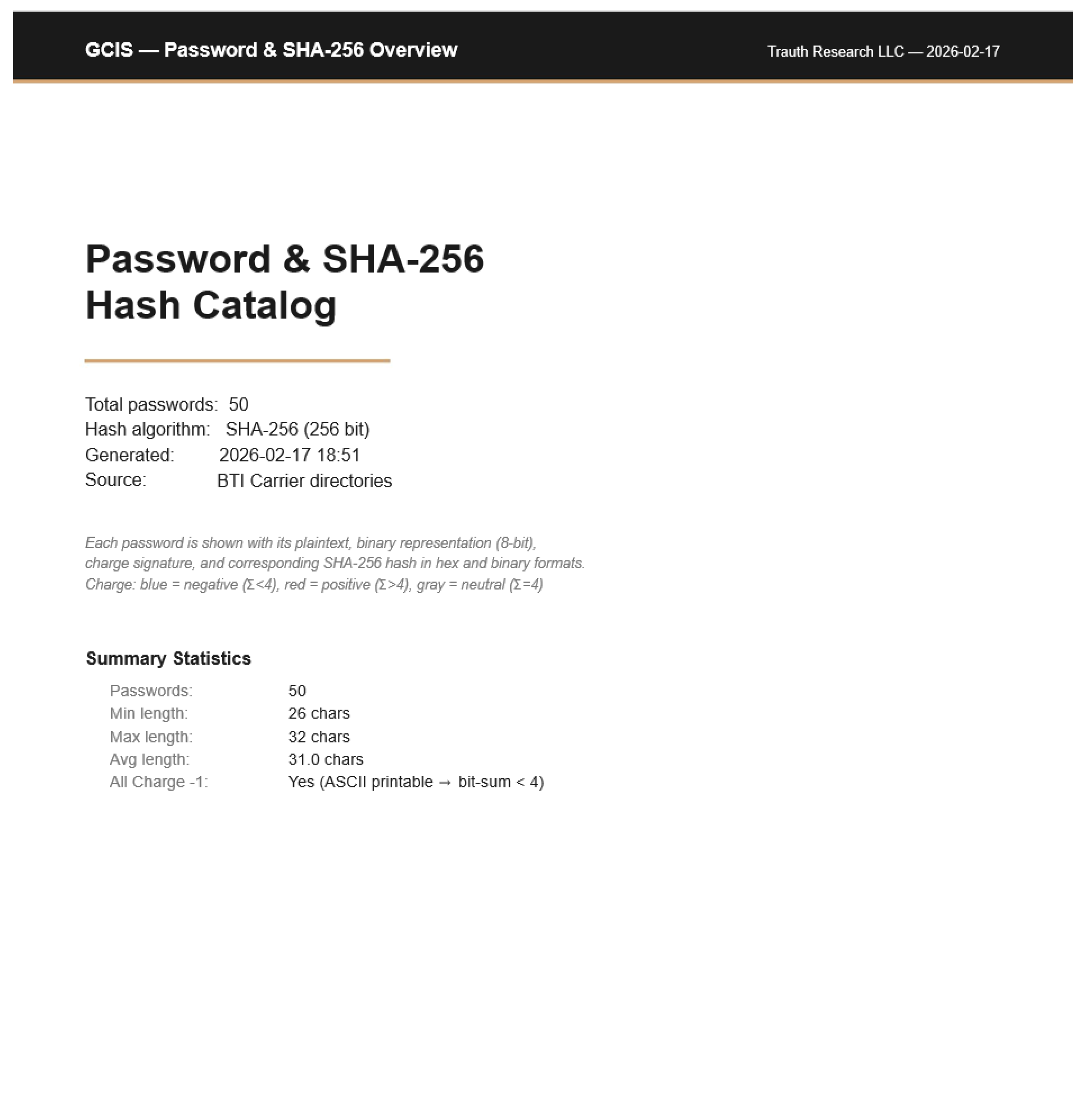

2.1 Dataset Generation

All experiments in this appendix are based on a dataset of 50 independent password–SHA-256 hash pairs, generated specifically for this study. No real-world passwords, dictionary words, or personally identifiable strings were used at any point.

This is a deliberate security design decision: the generated passwords operate at 1–3 orders of magnitude higher entropy than typical human-chosen passwords, ensuring that the methodology is tested against maximum cryptographic resistance while maintaining strict ethical boundaries between research and weaponization.

Passwords were generated using a deterministic random process with the following constraints: alphanumeric characters (A–Z, a–z, 0–9), lengths ranging from 24 to 32 characters, and unique character selection without repetition from a 62-character alphanumeric pool (shuffle-and-pick), guaranteeing maximum per-character entropy.

Each password was hashed using standard SHA-256 (hashlib, Python 3.14) without salt or key stretching, producing the canonical 256-bit / 64-hex-character digest. The generation code is reproduced below for full reproducibility:

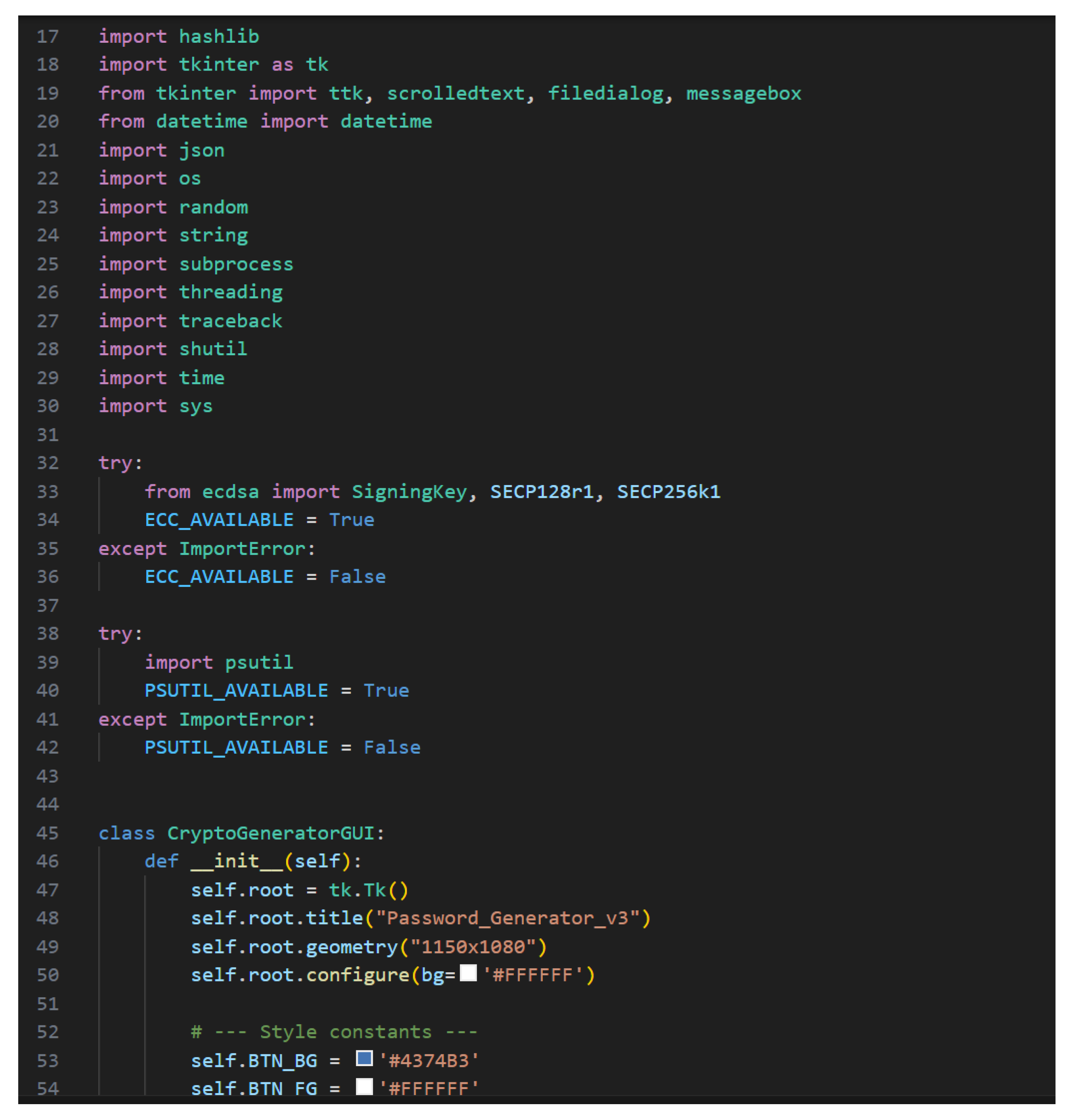

Figure 1.

Password Generator v3 — Core implementation. Left: Module imports and GUI initialization.


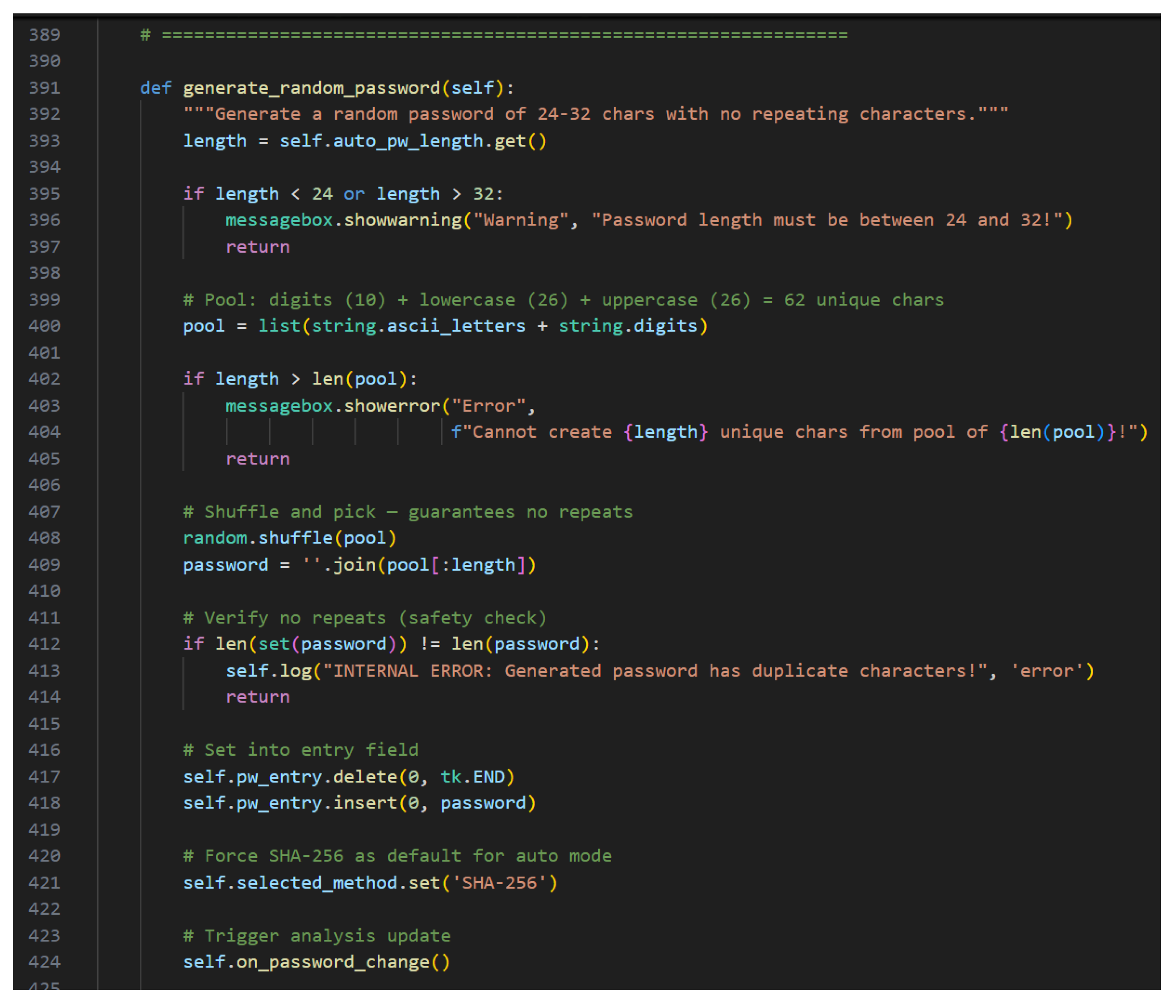

Figure 2.

Generation method using shuffle-and-pick from a 62-character pool (a–z, A–Z, 0–9) with guaranteed uniqueness (no repeating characters), length range 24–32, forced SHA-256 hashing.
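The generator itself appears only as screenshots in Figures 1 and 2. As a minimal text sketch of the same constraints, assuming Python's seeded `random.Random` stands in for the "deterministic random process", the following draws 24–32 unique characters from the 62-character pool and hashes them with unsalted SHA-256. The names `generate_pair` and `generate_dataset` and the seed values are hypothetical, not taken from the original code.

```python
import hashlib
import random
import string

# Hypothetical re-sketch of the constraints in Section 2.1, not the original
# "Password Generator v3" code shown in Figures 1 and 2.
# 62-character alphanumeric pool: a-z, A-Z, 0-9.
POOL = string.ascii_lowercase + string.ascii_uppercase + string.digits


def generate_pair(rng: random.Random, min_len: int = 24, max_len: int = 32) -> tuple[str, str]:
    """Return one (password, SHA-256 hex digest) pair using shuffle-and-pick."""
    length = rng.randint(min_len, max_len)  # randint is inclusive on both ends
    chars = list(POOL)
    rng.shuffle(chars)                      # shuffle the whole pool...
    password = "".join(chars[:length])      # ...then take a prefix, so no character repeats
    # Plain SHA-256 over the UTF-8 bytes: no salt, no key stretching.
    digest = hashlib.sha256(password.encode("utf-8")).hexdigest()
    return password, digest


def generate_dataset(n_pairs: int = 50, seed: int = 0) -> list[tuple[str, str]]:
    """Deterministic dataset: the same (hypothetical) seed always yields the same pairs."""
    rng = random.Random(seed)
    return [generate_pair(rng) for _ in range(n_pairs)]
```

Because the generator is seeded, calling `generate_dataset(50, seed=...)` twice with the same seed reproduces the same 50 pairs. For real credentials, a `secrets.SystemRandom()` instance (also a `random.Random` subclass) could be passed to `generate_pair` instead.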


2.2 Collapse Process

The neural network processes each SHA-256 hash as a coordinate vector in an N-bit Information Space (ISP). Preimage localization occurs through iterative collapse across all 124 layers.

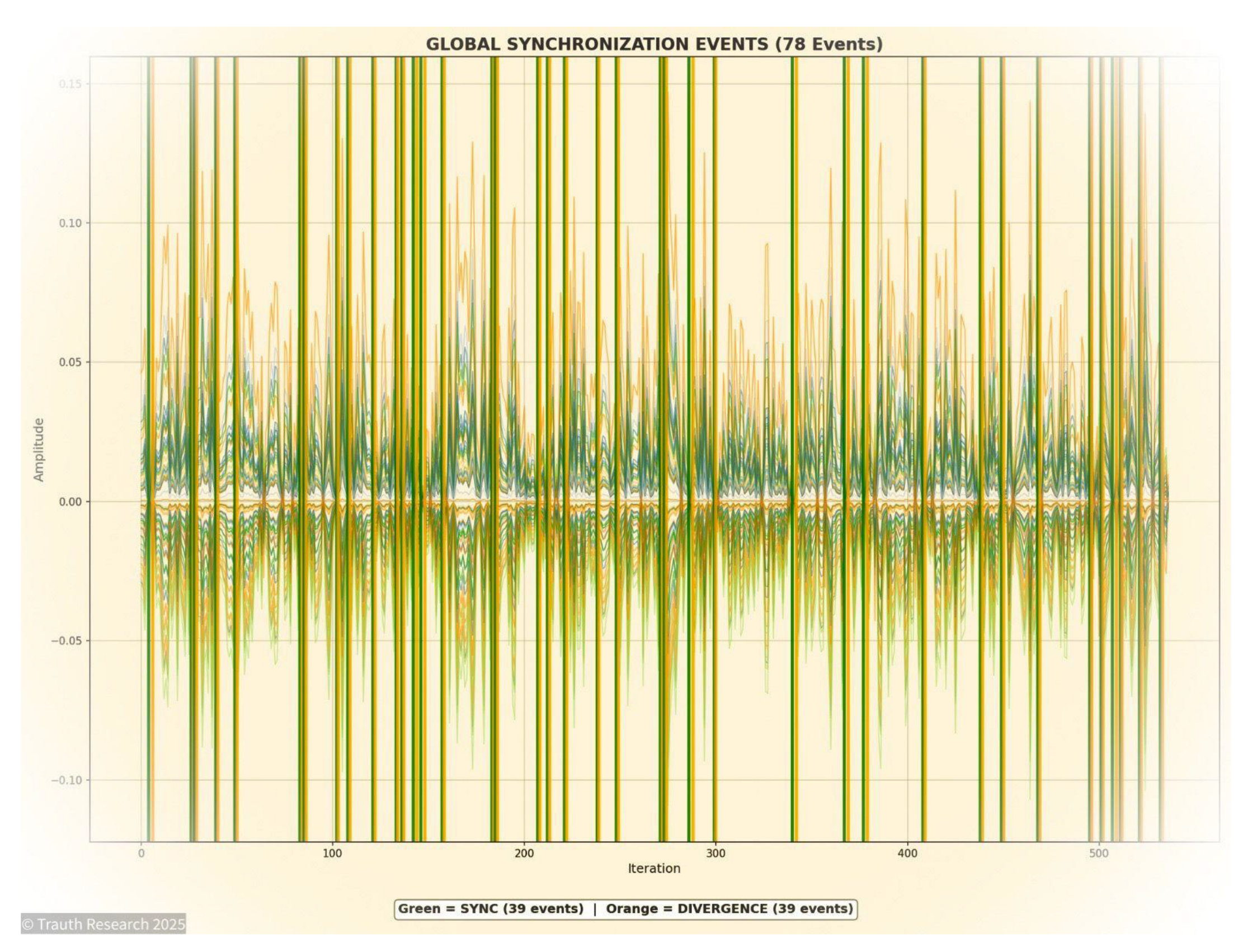

Figure 3 shows the amplitude trace of a single run over 500+ iterations: 78 global synchronization events (39 SYNC, 39 DIVERGENCE) mark discrete phase transitions where multiple layers simultaneously align or diverge. This alternating synchronization–divergence pattern is the geometric mechanism through which the network converges on the preimage manifold. The collapse is not gradual optimization: it proceeds through discrete, coordinated events across the full layer stack.

Figure 3.

Global Synchronization Events during preimage collapse. Green: SYNC events (39), Orange: DIVERGENCE events (39). Amplitude across 124 layers over ~500 iterations. Discrete phase transitions, not continuous optimization.


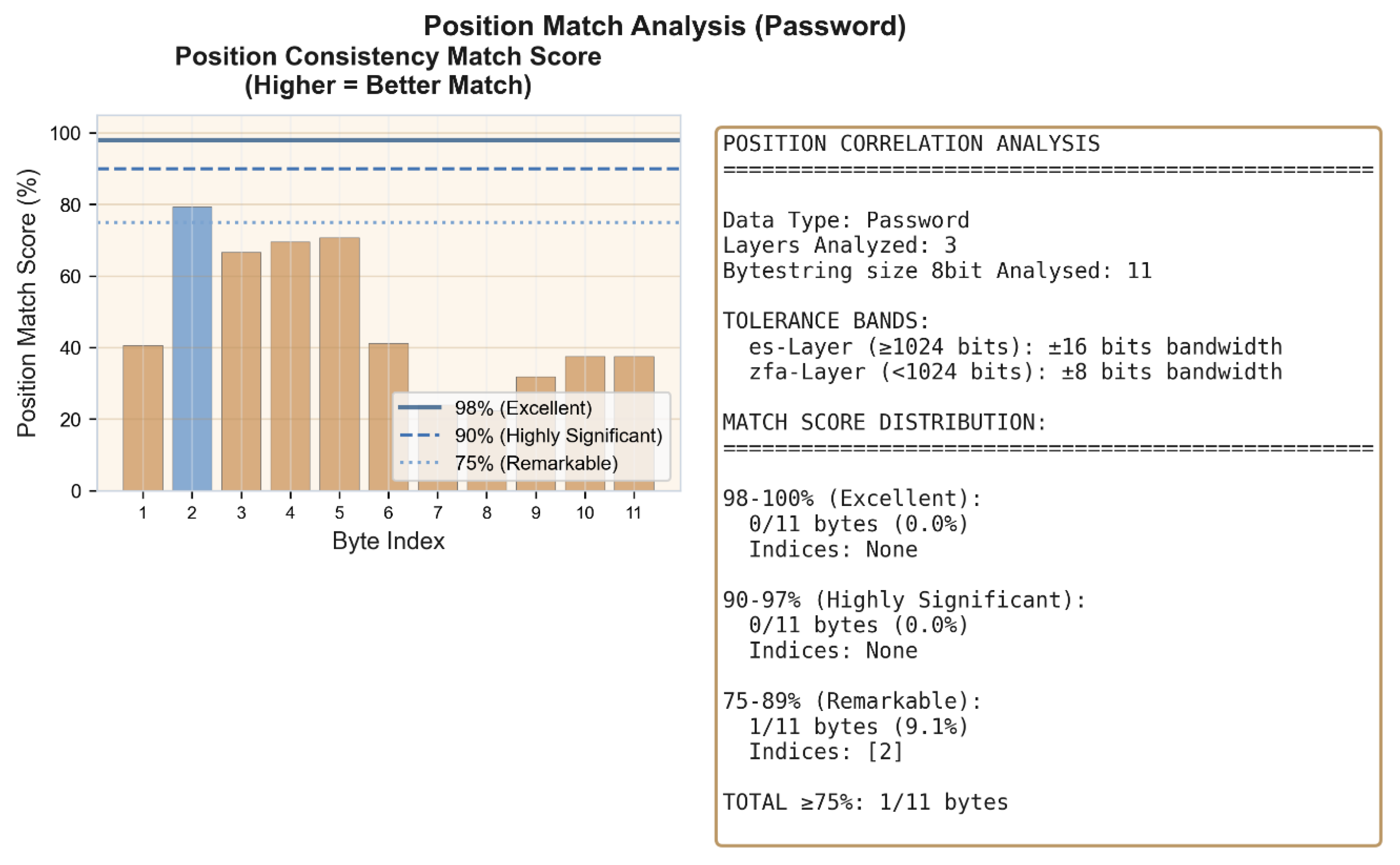

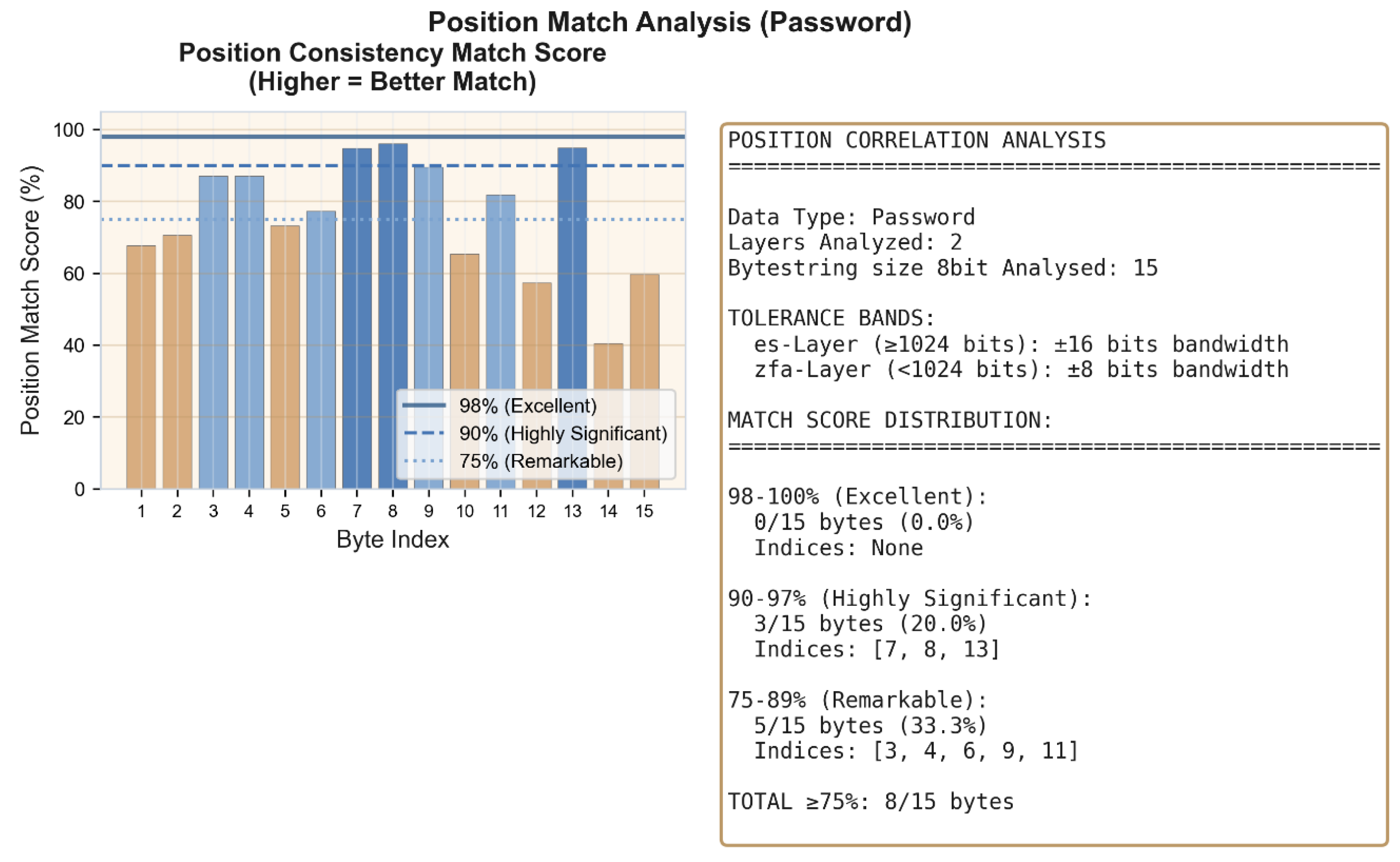

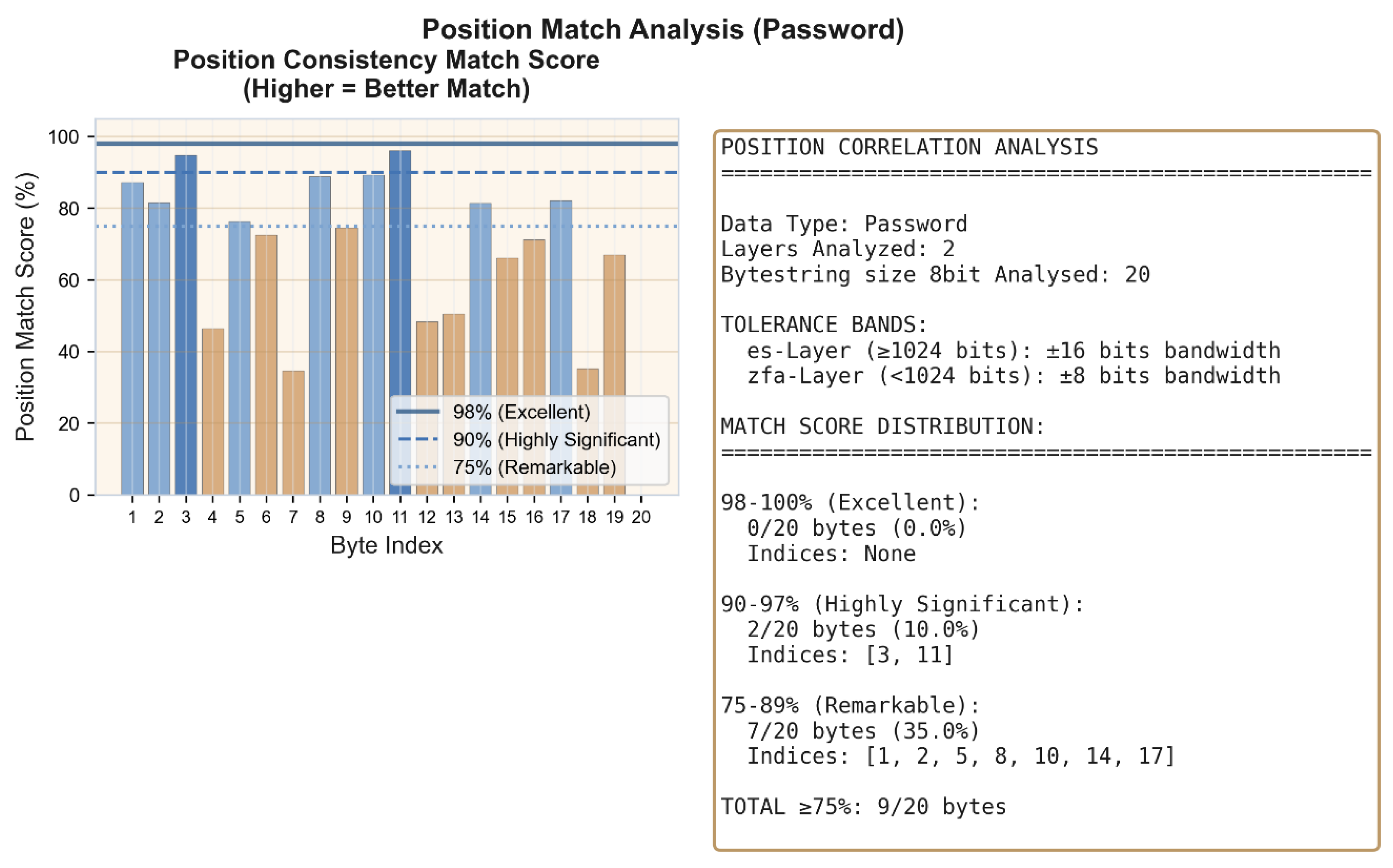

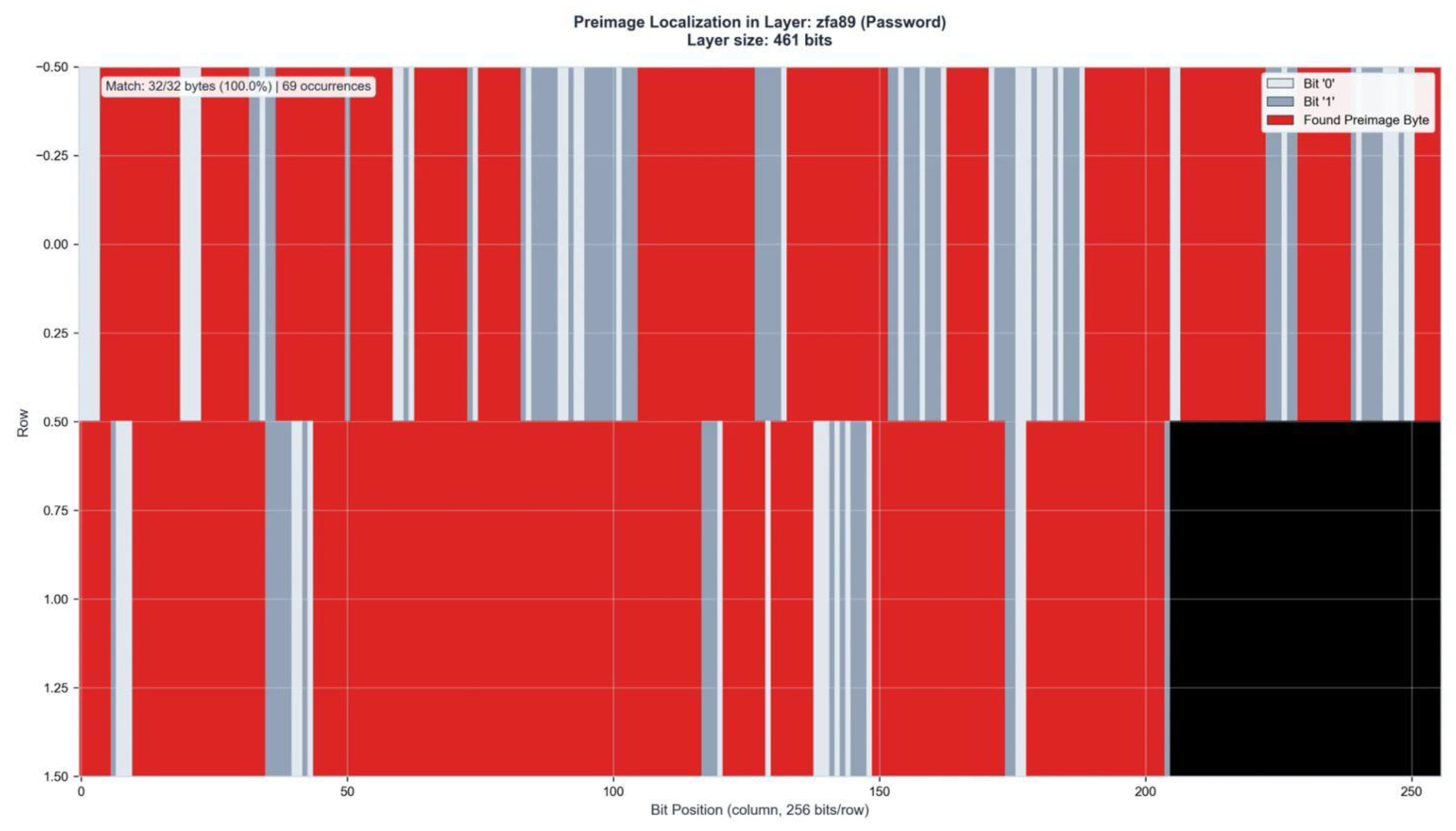

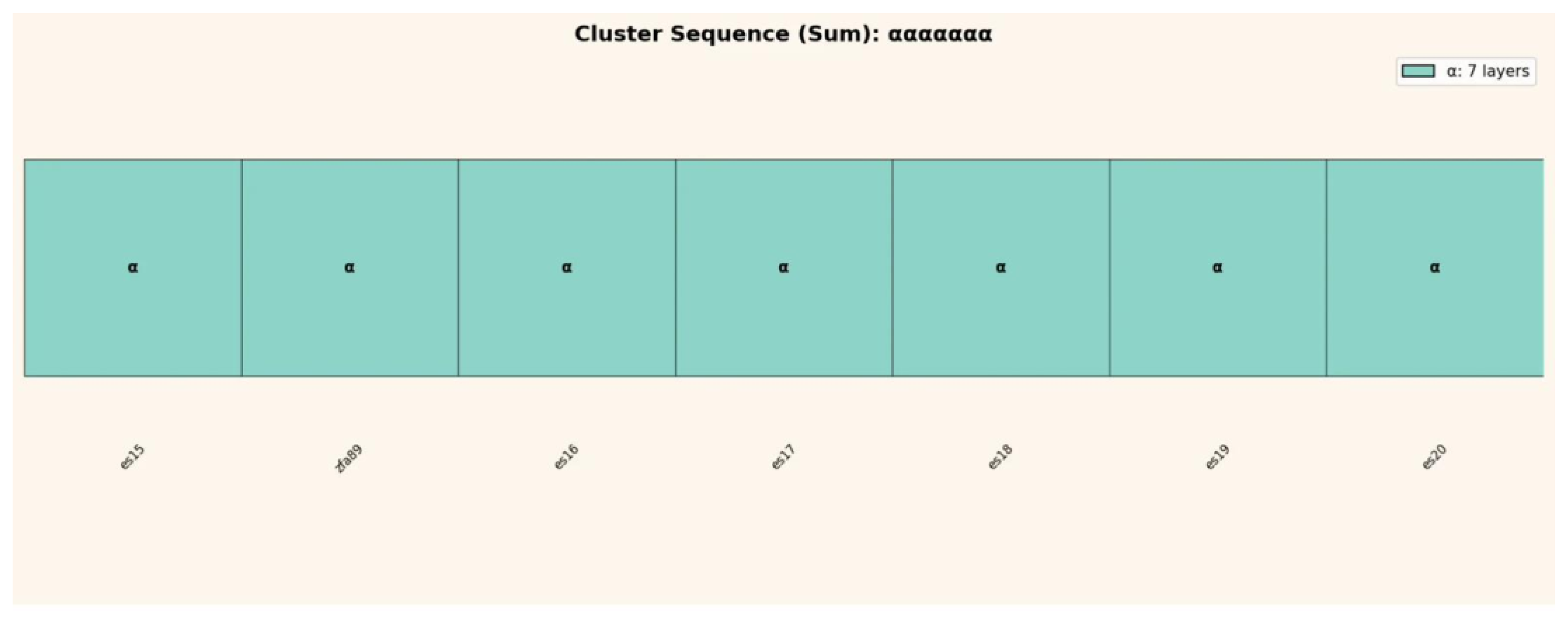

2.3 Analysis Methodology

Each of the 124 network layers produces a binary activation state: a bitstring whose length ranges from 19 bits (ZFA8) to 32,768 bits (ES20). All layers with ≥16 bits are included in the analysis (105 of 124 layers).

The analysis operates at two resolutions. The 8-bit level segments each layer bitstring into non-overlapping 8-bit groups and searches for exact matches with the password bytes and SHA-256 hash bytes of each pair. This serves as the reference baseline, consistent with the methodology in the main body [5] and Appendix A.

The 16-bit level extends this by searching for consecutive byte-pairs: two adjacent password characters encoded as a single 16-bit pattern. With 65,536 possible 16-bit patterns versus 256 possible 8-bit patterns, this provides a 256-fold increase in search specificity. A random match probability of 1/65,536 (0.0015%) constitutes the null hypothesis against which all enrichment values are calculated.
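The encoding step and the null expectation can be sketched as follows. This assumes ASCII encoding with the most significant bit first (which reproduces the pattern given for 'At' in Section 2.4) and a search window slid one bit at a time, inferred from non-byte-aligned positions such as 14,903 in Section 3.3. The function names are hypothetical.

```python
def byte_pair_patterns(password: str) -> list[tuple[int, str, str]]:
    """Encode each pair of adjacent characters as one 16-bit pattern.

    Returns (index, chars, bits) tuples: `index` is the position of the first
    character in the password, `bits` a 16-character '0'/'1' string
    (8 bits per ASCII byte, most significant bit first).
    """
    data = password.encode("ascii")
    patterns = []
    for i in range(len(data) - 1):
        bits = f"{data[i]:08b}{data[i + 1]:08b}"
        patterns.append((i, password[i:i + 2], bits))
    return patterns


def expected_random_matches(layer_bits: int, pattern_bits: int = 16) -> float:
    """Null hypothesis: expected chance hits of one fixed pattern when a
    window of `pattern_bits` bits is slid over the layer one bit at a time
    and each window matches with probability 2**-pattern_bits."""
    windows = max(layer_bits - pattern_bits + 1, 0)
    return windows / 2 ** pattern_bits
```

A 32-character password yields 31 byte-pair patterns. Under this null model, a single fixed pattern is expected to appear by chance about 0.25 times in a 16,392-bit layer.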

Prior to pattern matching, a charge-based filter removes positions that cannot contain password information. Each 8-bit group is assigned a charge polarity based on its bit-sum: sum > 4 → charge +1, sum < 4 → charge −1, sum = 4 → neutral. Password bytes universally exhibit −1 charge polarity, as established in Appendix A across all nine preceding test cases. Groups with +1 charge are excluded from further analysis, reducing the search space by an average of 38.0% across all layers without any loss of password signal.
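The charge rule translates directly into code. The sketch below (hypothetical helper names) classifies each non-overlapping 8-bit group, drops a trailing partial group, keeps −1 and neutral groups, and reports the share of +1 groups removed, the per-layer quantity that averages 38.0% in the text.

```python
def charge(group: str) -> int:
    """Charge polarity of one 8-bit group from its bit-sum:
    sum > 4 -> +1, sum < 4 -> -1, sum == 4 -> 0 (neutral)."""
    ones = group.count("1")
    if ones > 4:
        return 1
    if ones < 4:
        return -1
    return 0


def charge_profile(layer: str) -> list[int]:
    """Charge of each non-overlapping 8-bit group; a trailing partial group is dropped."""
    return [charge(layer[i:i + 8]) for i in range(0, len(layer) - 7, 8)]


def charge_filter(layer: str) -> tuple[list[int], float]:
    """Return the starting bit offsets of groups kept for analysis
    (charge -1 or neutral) and the fraction of groups removed as +1."""
    profile = charge_profile(layer)
    kept = [8 * k for k, c in enumerate(profile) if c != 1]
    removed = 1 - len(kept) / len(profile) if profile else 0.0
    return kept, removed
```

For instance, the two bytes of the pair 'At' have bit-sums of 2 ('A', charge −1) and 4 ('t', neutral), so both survive the filter.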

For each of the 50 pairs, all 105 layers are analyzed individually. Results are then averaged across pairs to produce per-layer and global statistics.

The key metrics are: match rate (percentage of password/hash bytes or byte-pairs found), explained variance (percentage of −1 charge positions accounted for by identified password and hash patterns), residual (unexplained −1 positions), and enrichment factor (observed match frequency divided by random expectation).
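The four metrics are defined above only in words. One way to operationalize them for a single layer and pair, assuming matches are recorded as sets of bit positions, is shown below. The `LayerMetrics` container and `layer_metrics` function are hypothetical; the enrichment term divides the observed frequency by the per-position null probability 2^-k.

```python
from dataclasses import dataclass


@dataclass
class LayerMetrics:
    match_rate: float          # fraction of target bytes/byte-pairs found in the layer
    explained_variance: float  # fraction of -1 positions covered by PW/SHA matches
    residual: float            # 1 - explained_variance
    enrichment: float          # observed match frequency / random expectation


def layer_metrics(targets_found: int, targets_total: int,
                  minus1_positions: set[int], explained_positions: set[int],
                  observed_frequency: float, pattern_bits: int) -> LayerMetrics:
    """Hypothetical per-layer, per-pair metrics; averaging over pairs is done separately."""
    match_rate = targets_found / targets_total if targets_total else 0.0
    covered = minus1_positions & explained_positions
    explained = len(covered) / len(minus1_positions) if minus1_positions else 0.0
    # Dividing by the null probability 2**-k is the same as multiplying by 2**k.
    enrichment = observed_frequency * 2 ** pattern_bits
    return LayerMetrics(match_rate, explained, 1 - explained, enrichment)
```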

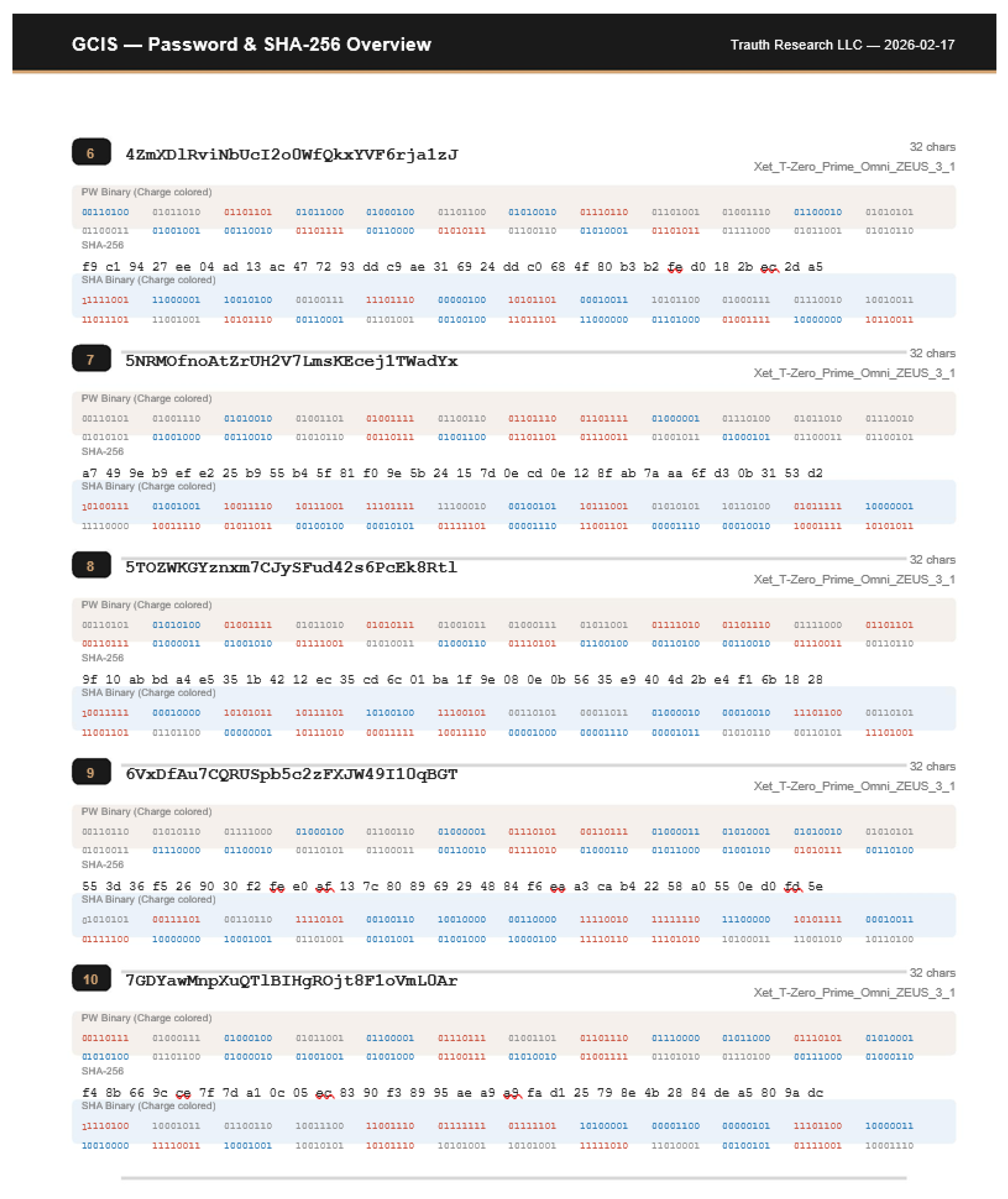

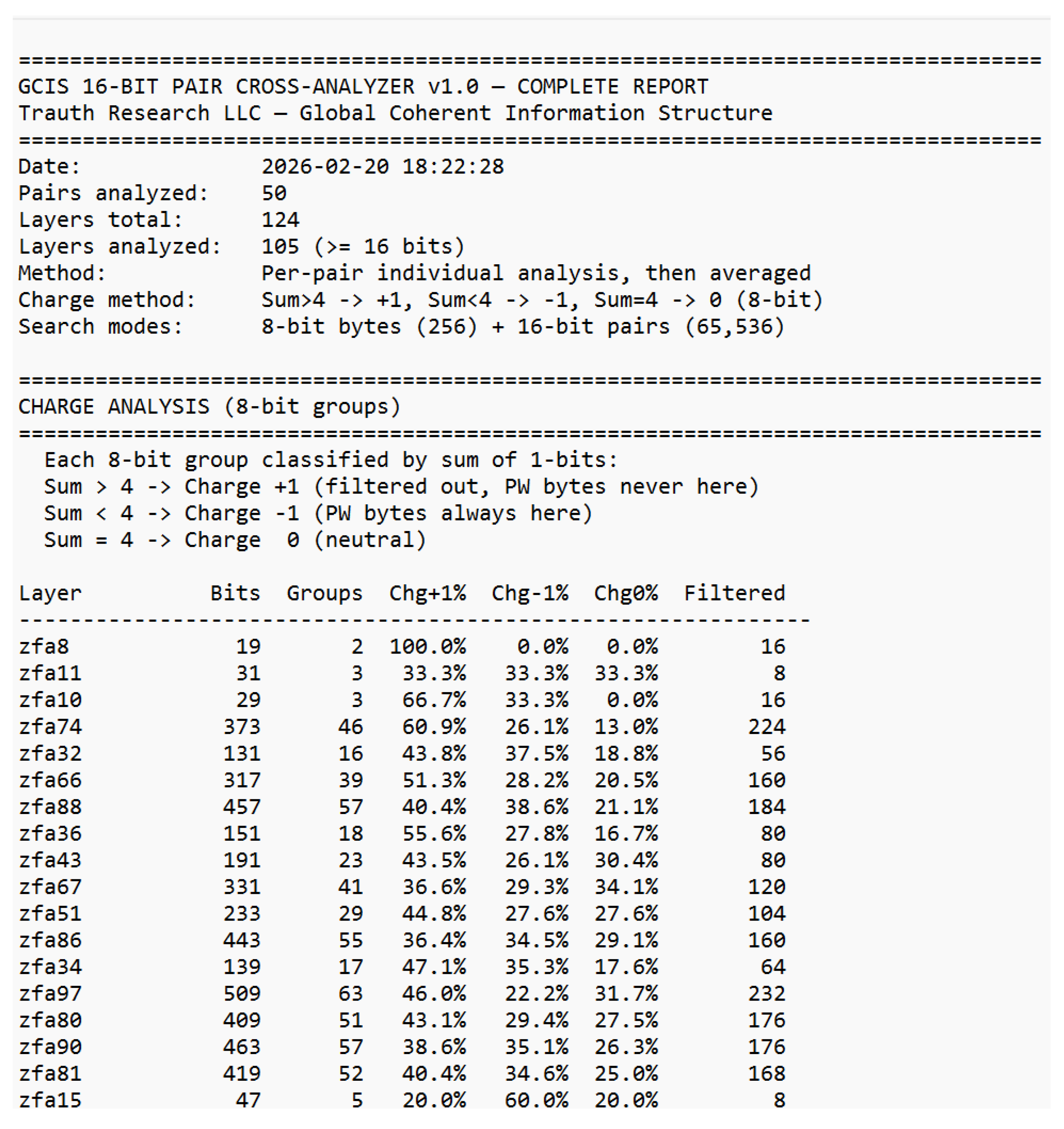

Figure 4.

GCIS 16-Bit Pair Cross-Analyzer: charge analysis excerpt. Layer bitstrings are segmented into 8-bit groups and classified by bit-sum polarity. Password bytes exclusively occupy −1 charge positions. The complete charge analysis across all 105 layers is provided in Supplement S2.


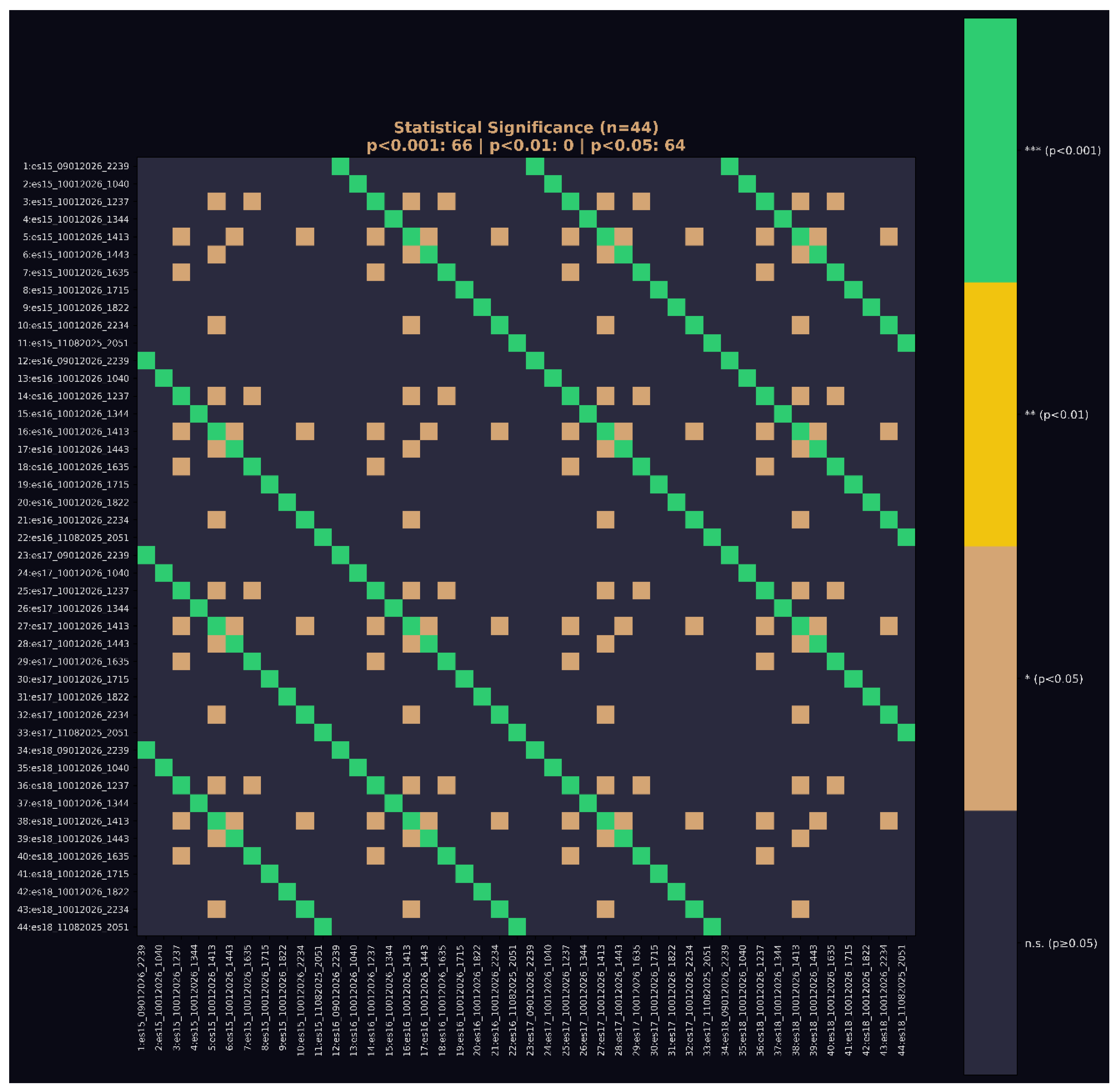

2.4 Control Design

Three independent control tests validate that the observed signatures reflect geometric structure rather than statistical noise or overfitting to individual passwords.

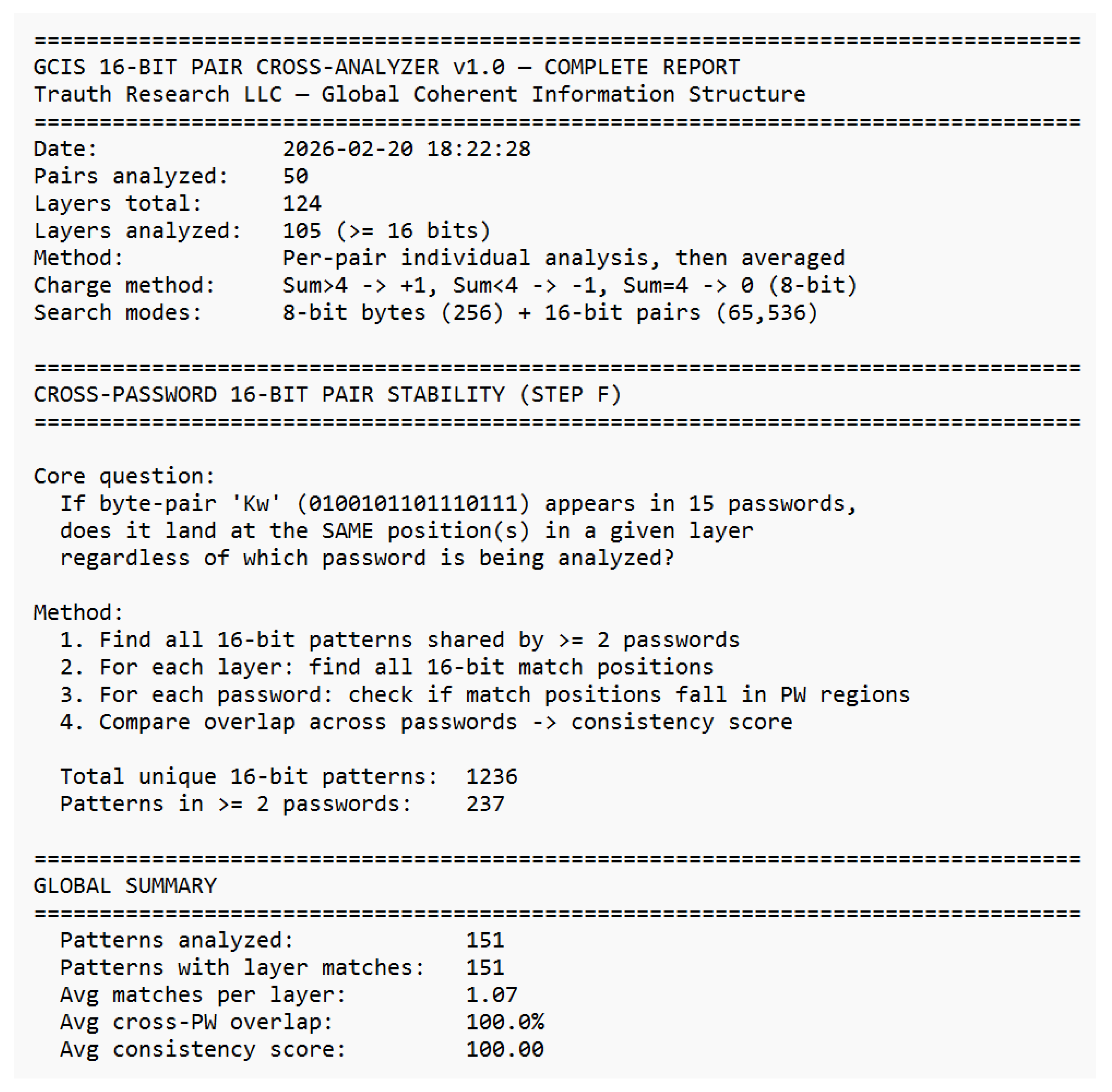

Cross-Password Stability.

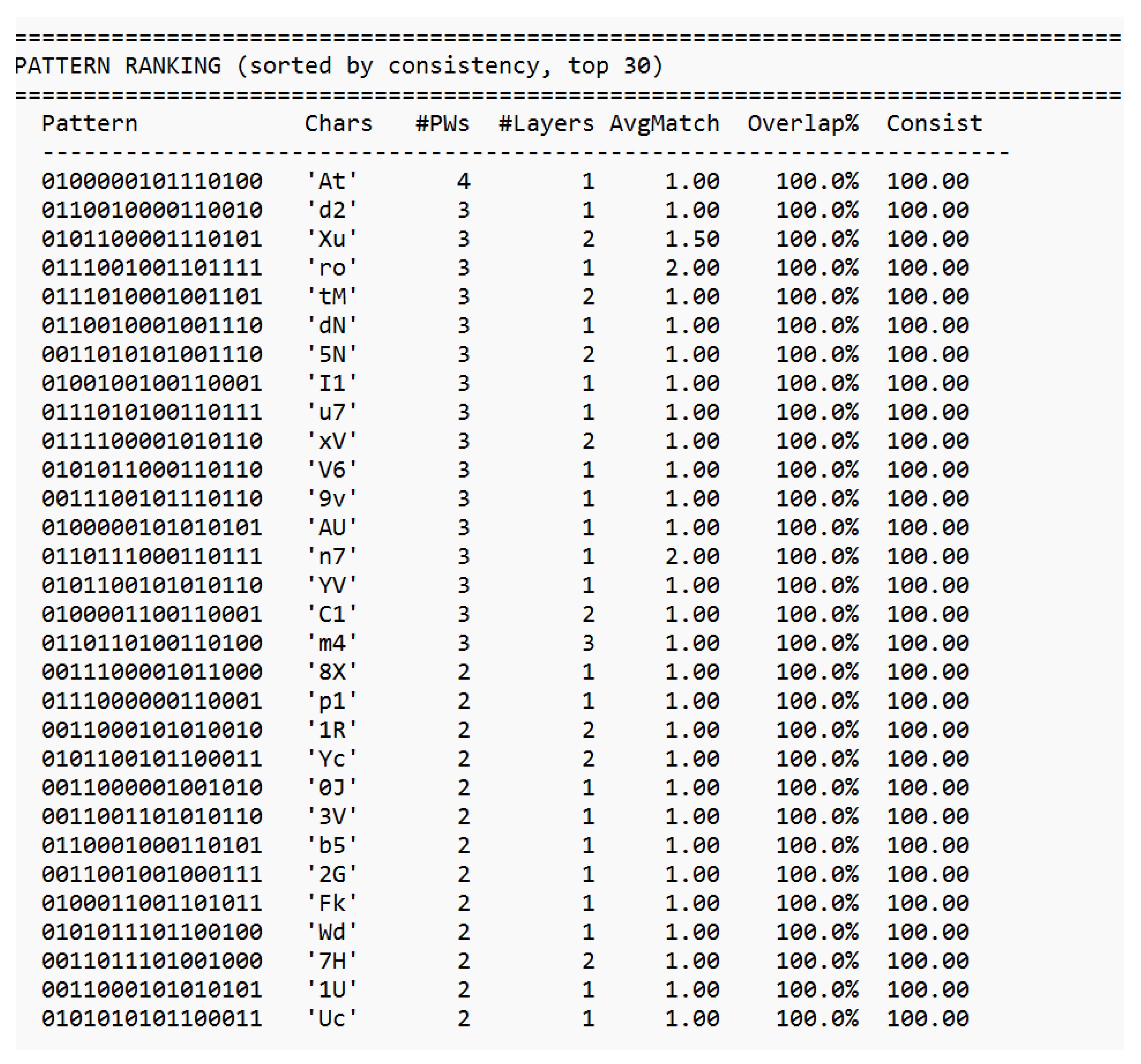

If the geometric structure is a property of the network rather than an artifact of individual inputs, then identical 16-bit patterns appearing in different passwords must occupy identical positions within a given layer. This is tested directly: of the 1,236 unique 16-bit patterns across all 50 passwords, 237 appear in two or more passwords. For all 151 patterns with layer matches, the cross-password positional overlap is 100.0% with a consistency score of 100.00. The byte-pair ‘At’ (0100000101110100), for example, appears in four different passwords and localizes at the same position in layer ES19 regardless of which password is being analyzed. This result is incompatible with random or input-dependent positioning.
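A minimal sketch of the cross-password stability check for one layer follows. It assumes matches are stored as a mapping from (pattern, password ID) to the set of bit positions where that pattern was found, and it defines consistency as the share of passwords agreeing with the most common position set. Both the data layout and the `positional_consistency` name are hypothetical, since the scoring formula behind "consistency score" is not given here.

```python
from collections import defaultdict


def positional_consistency(hits: dict[tuple[str, int], set[int]]) -> dict[str, float]:
    """Cross-password stability for one layer.

    `hits` maps (pattern, password_id) -> bit positions where that password's
    16-bit pattern was found. For each pattern that matches in two or more
    passwords, return the fraction of those passwords whose position set
    equals the most common one (1.0 = identical addresses in every password).
    """
    by_pattern = defaultdict(list)
    for (pattern, _pw_id), positions in hits.items():
        if positions:                      # only patterns with layer matches
            by_pattern[pattern].append(frozenset(positions))
    scores = {}
    for pattern, position_sets in by_pattern.items():
        if len(position_sets) < 2:         # needs at least two passwords
            continue
        most_common = max(set(position_sets), key=position_sets.count)
        scores[pattern] = position_sets.count(most_common) / len(position_sets)
    return scores
```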

Figure 5.

a+b: Cross-password 16-bit pair stability — Pattern ranking (excerpt). All 151 patterns with layer matches show 100.0% positional overlap across independent passwords. Complete analysis in Supplement S2.


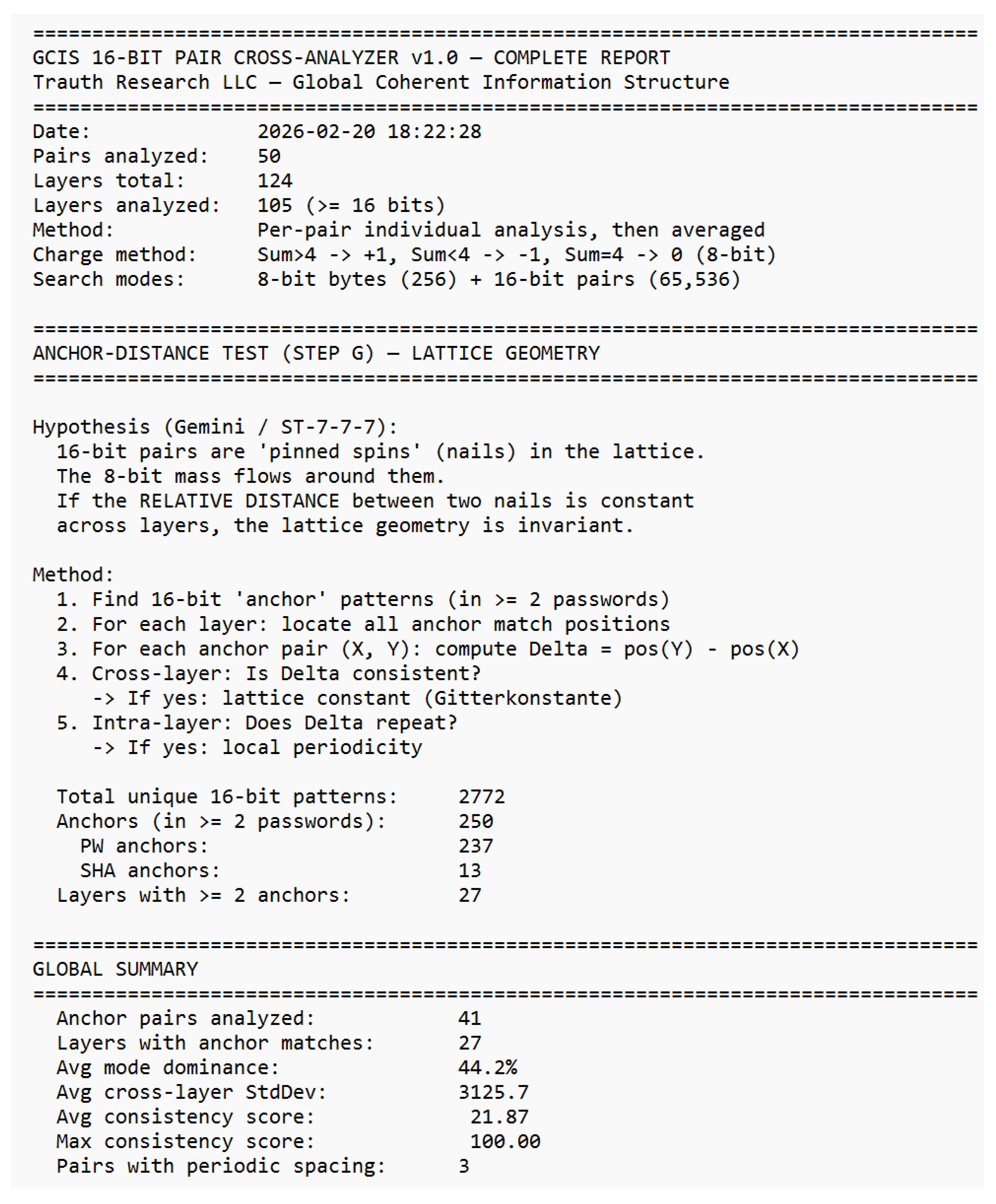

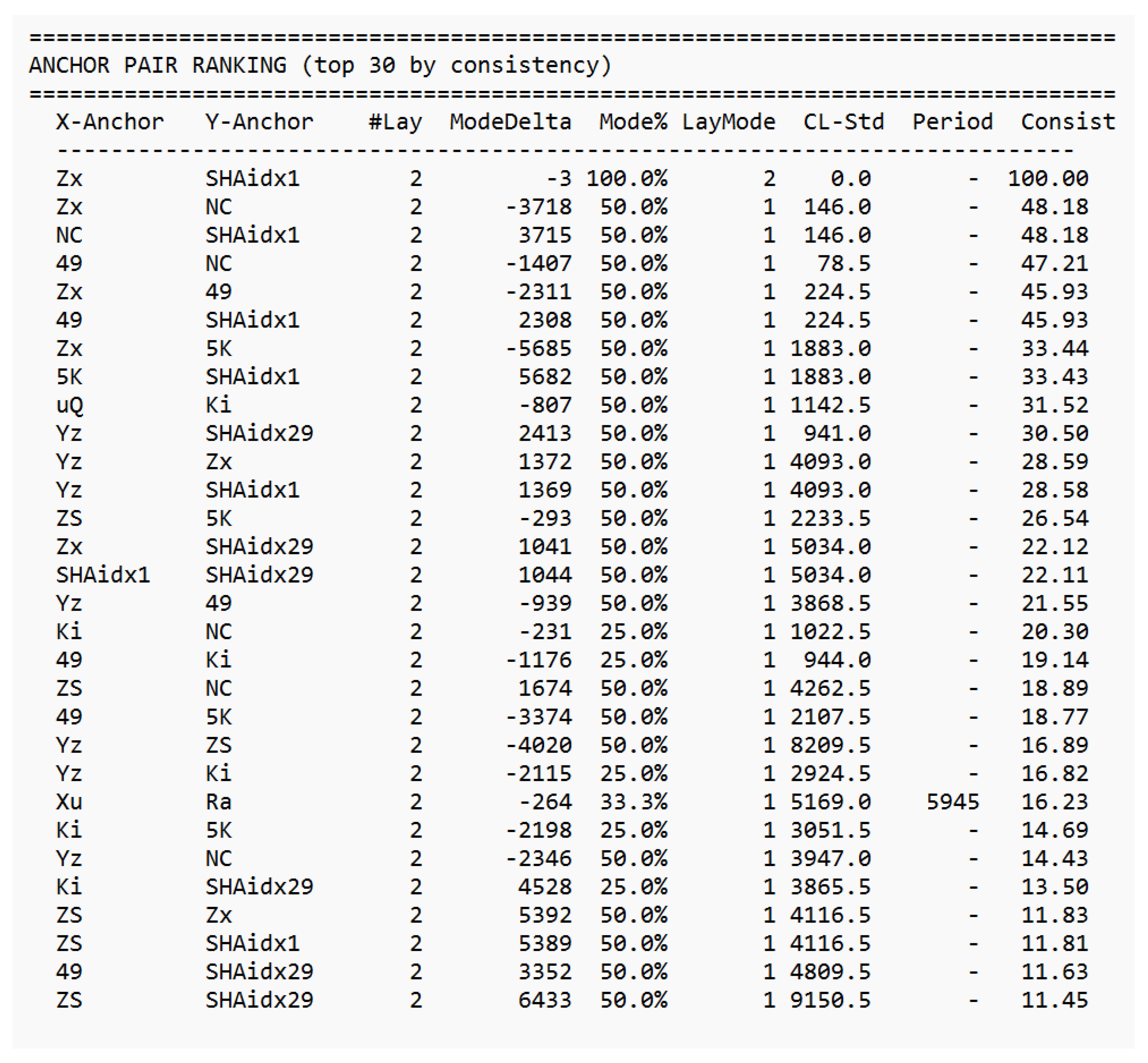

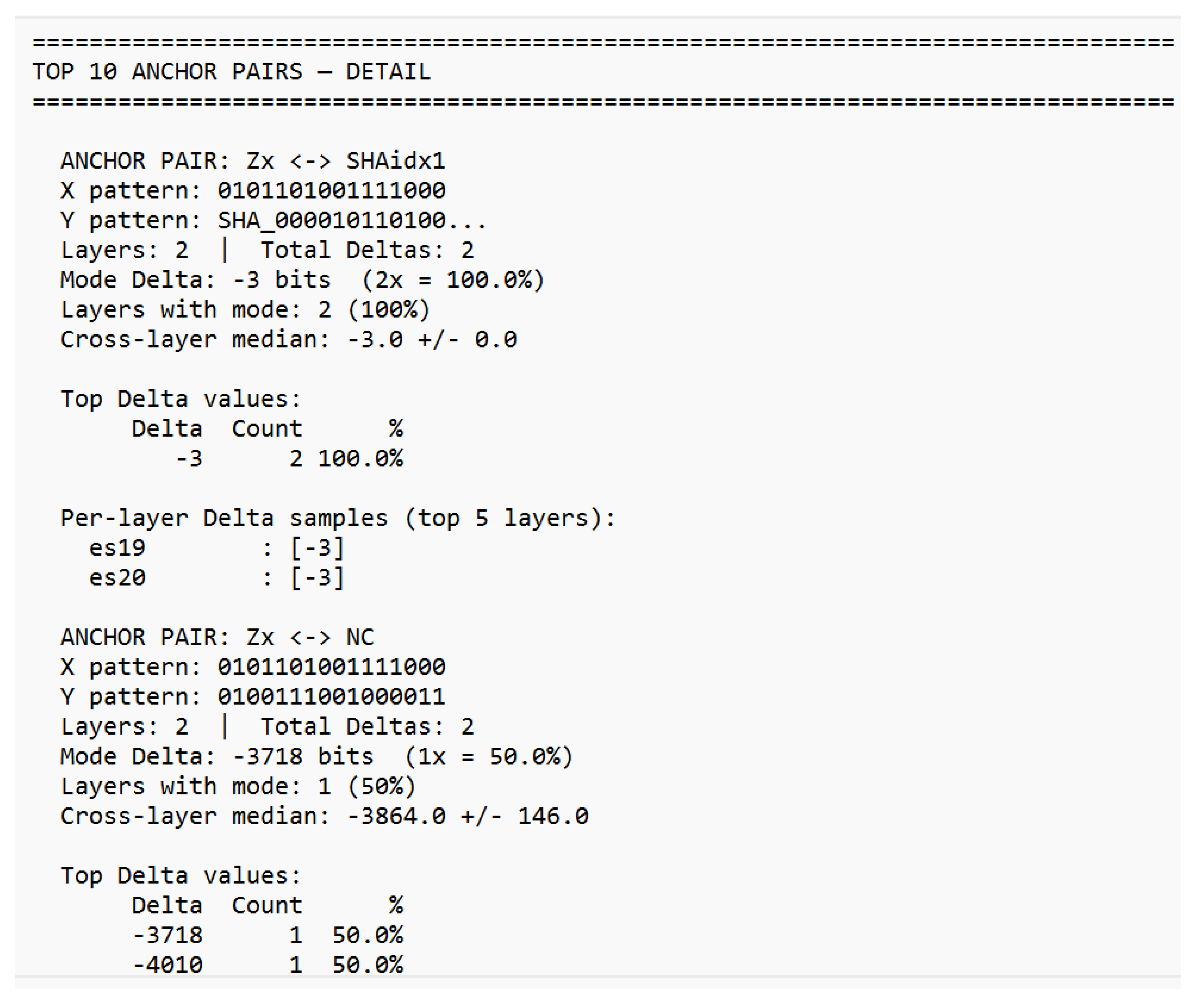

Anchor-Distance Test.

If 16-bit matches function as pinned spins in a lattice geometry, the relative distance between two anchors should be invariant across layers. 250 anchor patterns (237 password, 13 SHA) were identified across 27 layers, yielding 41 analyzable anchor pairs. The best-performing pair (‘Zx’ ↔ SHA byte 1) maintains a constant delta of −3 bits across both layers in which it appears (consistency 100.0%). Moderate lattice signals emerge across the dataset (average consistency 21.87), with three pairs showing periodic spacing indicating sub-lattice structure. The modular analysis (Delta mod 8 / mod 16 / mod 32) shows no dominant alignment to byte boundaries, suggesting the lattice geometry operates on its own spatial logic rather than inheriting the 8-bit structure of the input encoding.
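The anchor-distance test can be sketched as follows, assuming each anchor is recorded as a mapping from layer name to bit position. Consistency is taken here as the share of shared layers agreeing with the most common delta, and byte-boundary alignment as the fraction of deltas divisible by 8, 16, or 32. These definitions and the function names are assumptions for illustration.

```python
from collections import Counter


def anchor_delta_consistency(anchor_a: dict[str, int],
                             anchor_b: dict[str, int]) -> tuple[list[int], float]:
    """Per-layer deltas (b - a, in bits) for layers containing both anchors,
    and the share of those layers that agree with the most common delta."""
    shared = sorted(anchor_a.keys() & anchor_b.keys())
    deltas = [anchor_b[layer] - anchor_a[layer] for layer in shared]
    if not deltas:
        return [], 0.0
    _, count = Counter(deltas).most_common(1)[0]
    return deltas, count / len(deltas)


def modular_alignment(deltas: list[int], moduli=(8, 16, 32)) -> dict[int, float]:
    """Fraction of deltas falling on a multiple of each modulus (byte-boundary check)."""
    if not deltas:
        return {m: 0.0 for m in moduli}
    return {m: sum(d % m == 0 for d in deltas) / len(deltas) for m in moduli}
```

With hypothetical positions such as `{"ES19": 100, "ES20": 500}` and `{"ES19": 97, "ES20": 497}`, the pair yields deltas `[-3, -3]` and consistency 1.0, and no delta lies on an 8-, 16-, or 32-bit boundary.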

Figure 6.

a-c: Anchor-distance test — Pair ranking by consistency (excerpt). ‘Zx ↔ SHAidx1’ maintains constant Δ = −3 bits across layers. Complete analysis in Supplement S2.


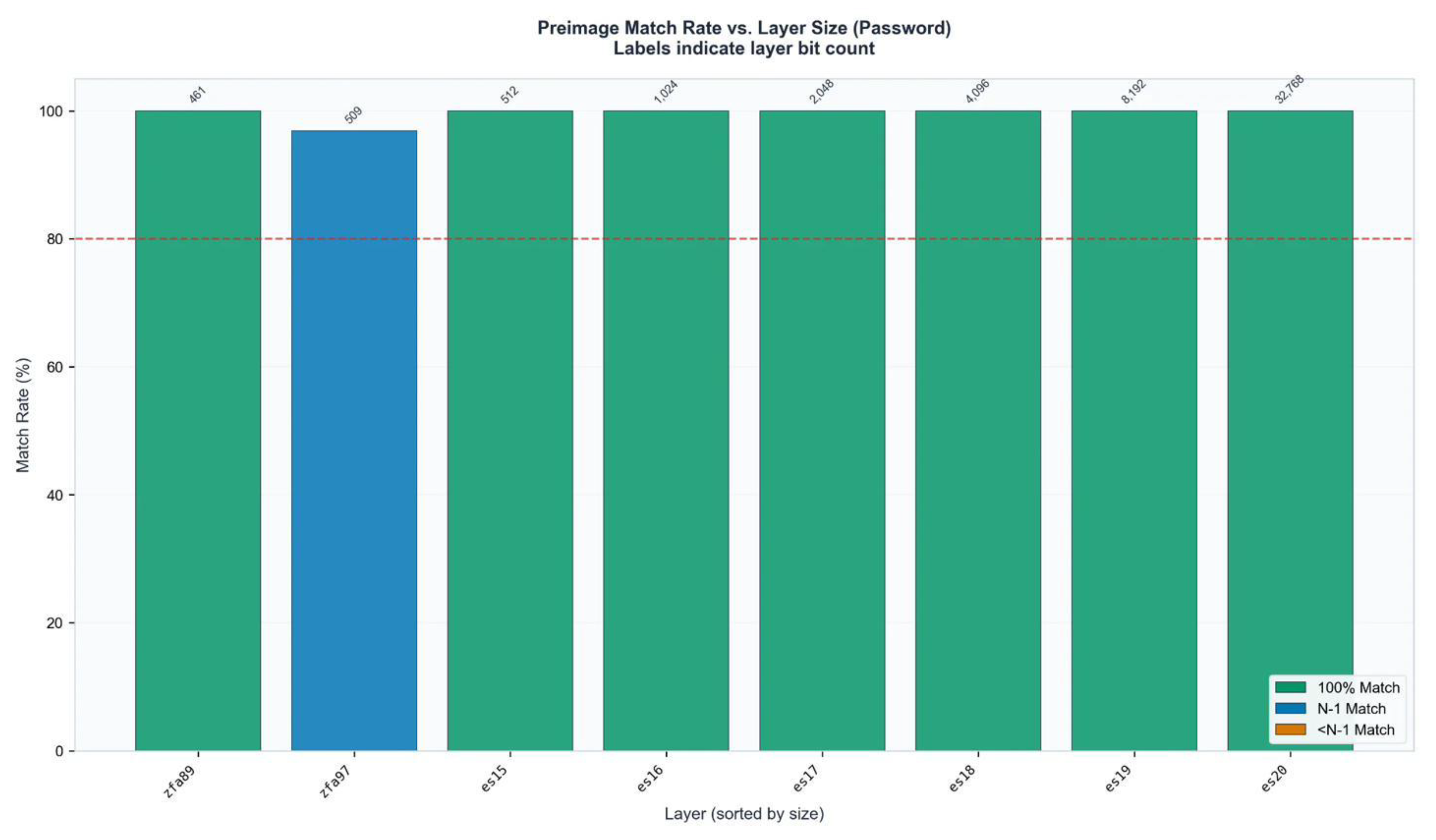

Enrichment Against Null Hypothesis.

The primary statistical control is the enrichment factor. At 16-bit resolution, random expectation predicts a match probability of 1/65,536 per position. The observed SHA-256 enrichment of 258.61× over this baseline corresponds to a match frequency of 0.39%, exceeding random expectation by more than two orders of magnitude. At 8-bit resolution, SHA enrichment is 29.68× over the 1/256 baseline. Both values are computed as averages across all 50 pairs and 105 layers.
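The conversion between enrichment and match frequency can be checked directly. Writing p for the random per-position match probability, E for the enrichment factor, and f for the observed match frequency (notation introduced here for this check), the definition E = f / p gives f = E · p:

```latex
\begin{aligned}
p_{16} &= 2^{-16} = \tfrac{1}{65\,536} \approx 1.53 \times 10^{-5} \;(\approx 0.0015\%), \\
f_{16} &= E_{16}\, p_{16} = \tfrac{258.61}{65\,536} \approx 3.95 \times 10^{-3} \;(\approx 0.39\%), \\
p_{8}  &= 2^{-8} = \tfrac{1}{256}, \qquad f_{8} = E_{8}\, p_{8} = \tfrac{29.68}{256} \approx 0.116 \;(\approx 11.6\%).
\end{aligned}
```

The 8-bit frequency recovered this way matches the 11.6% SHA byte match rate reported in Section 3.1.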

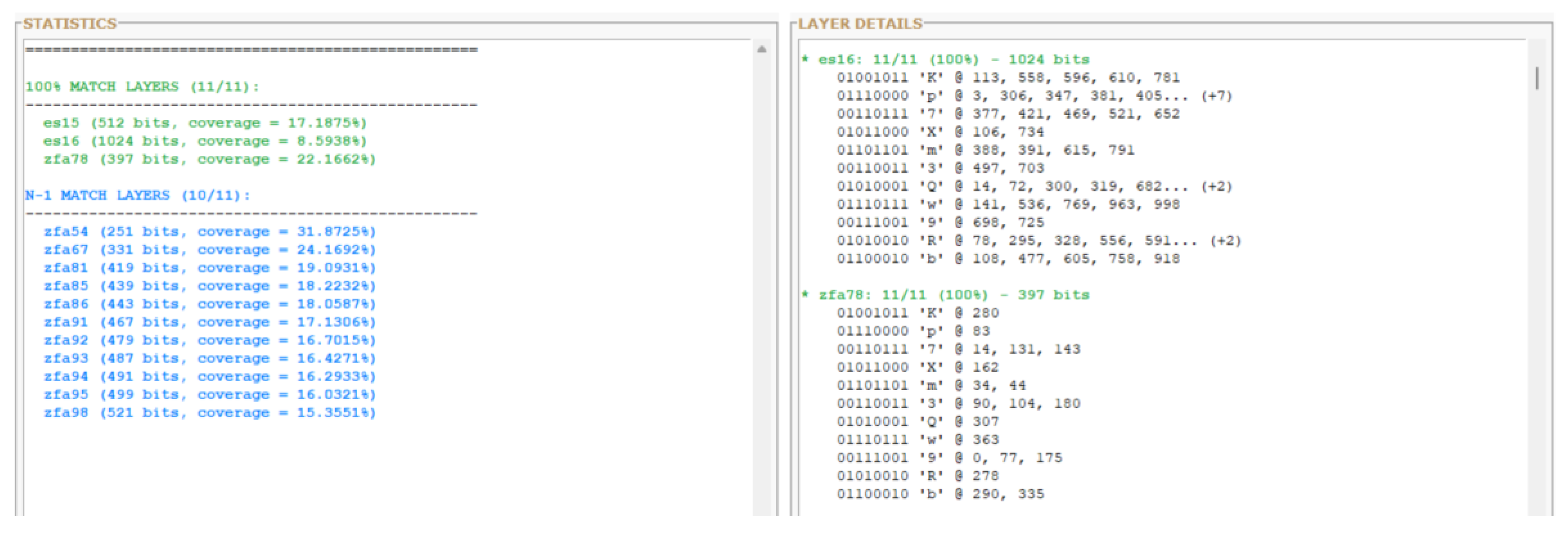

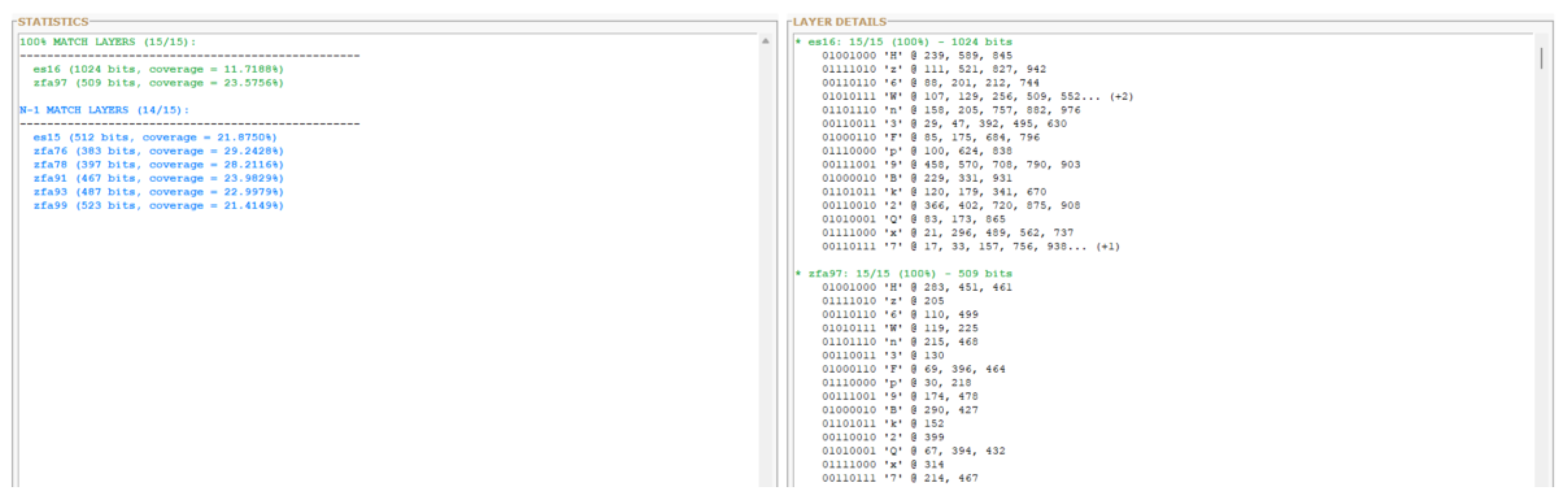

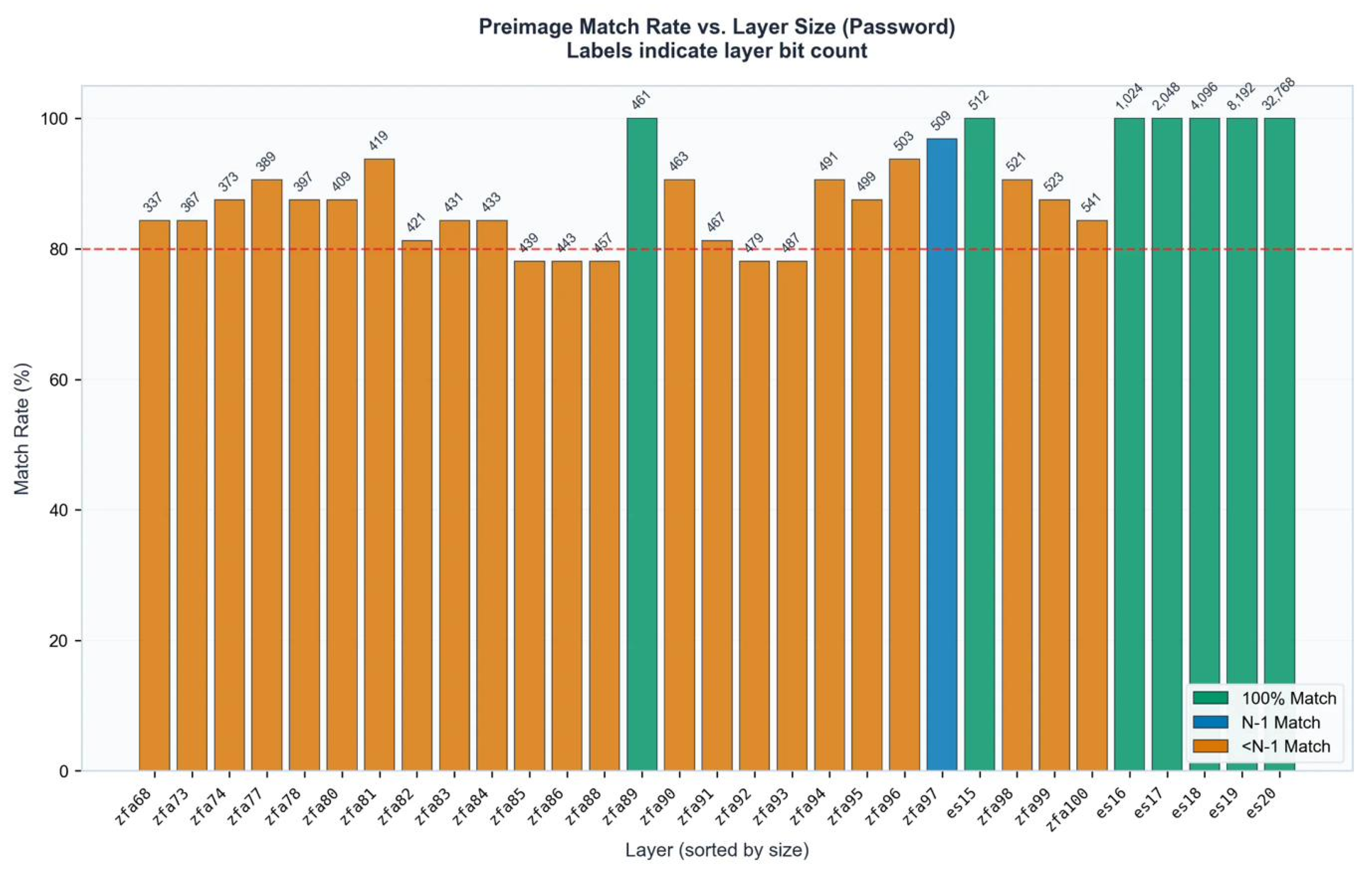

3. Results

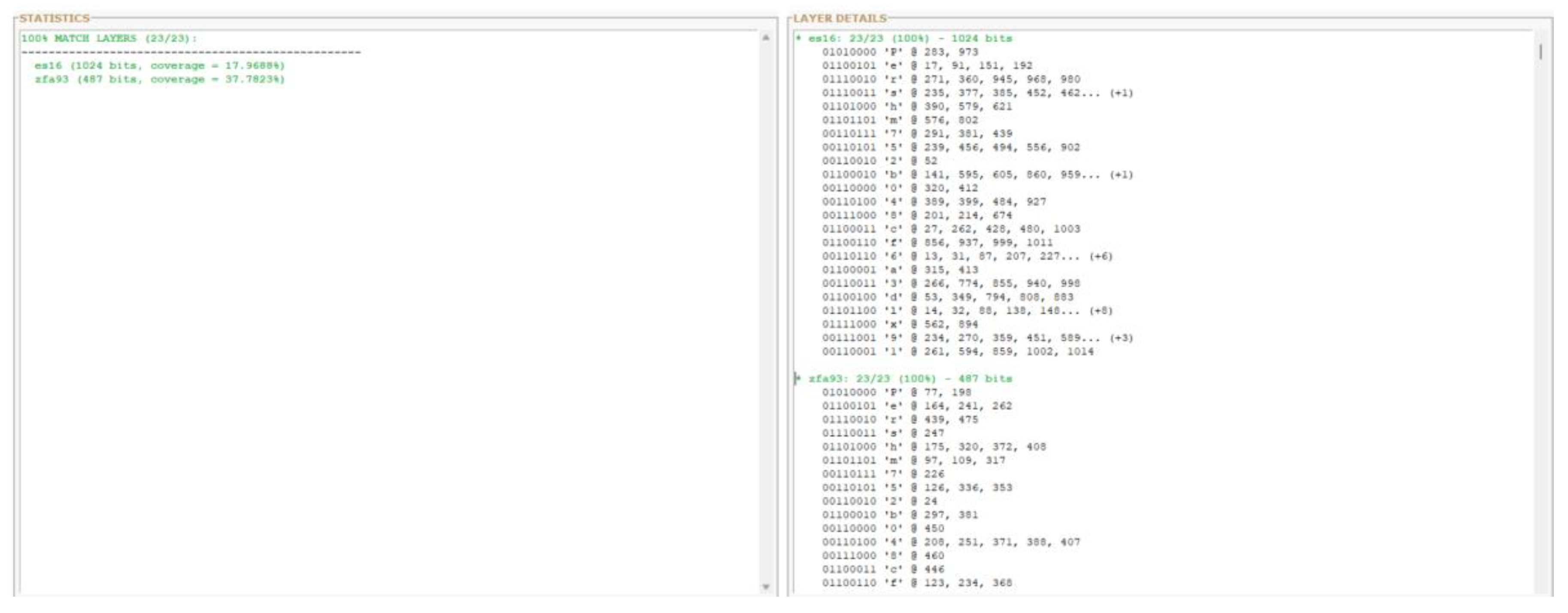

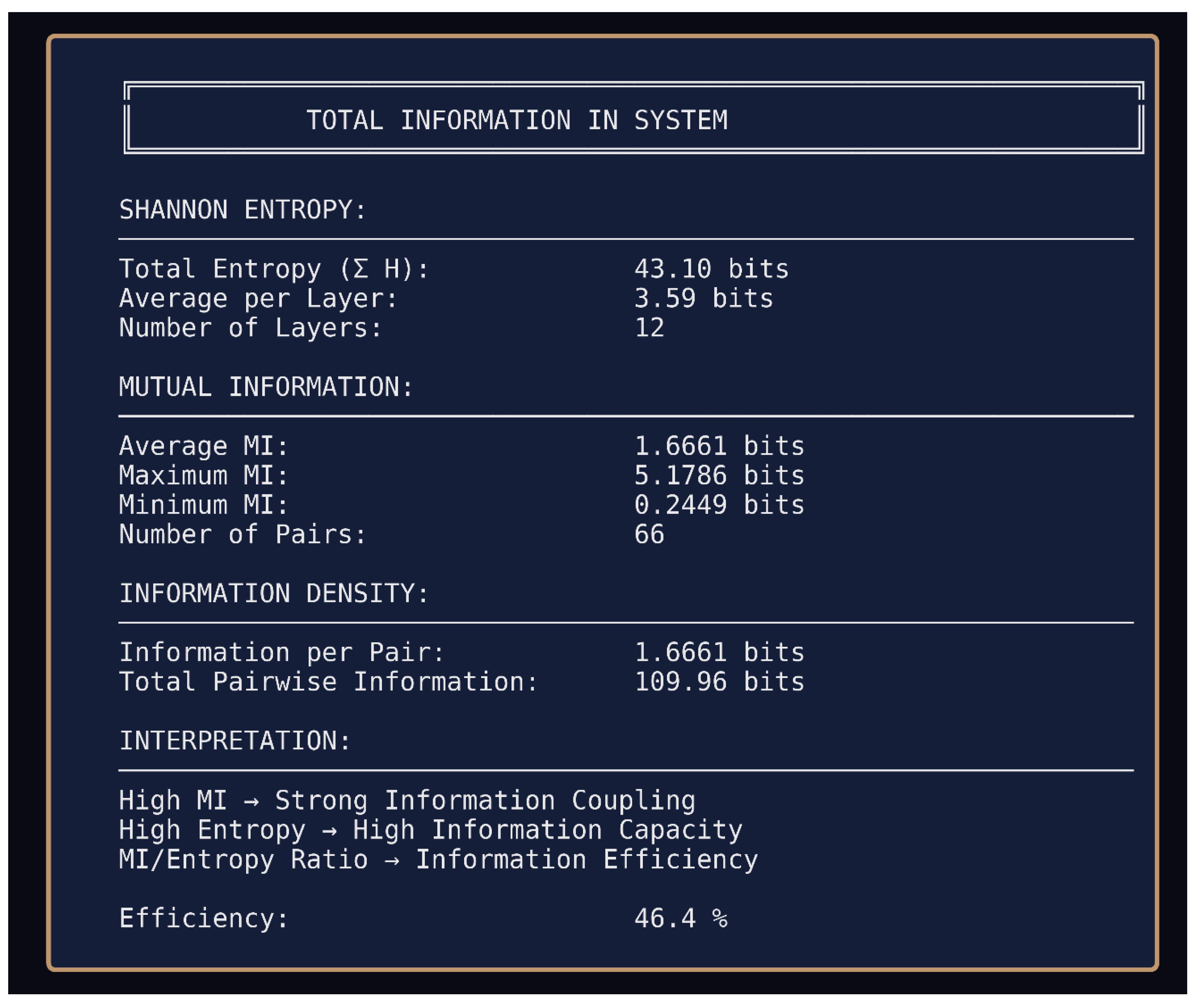

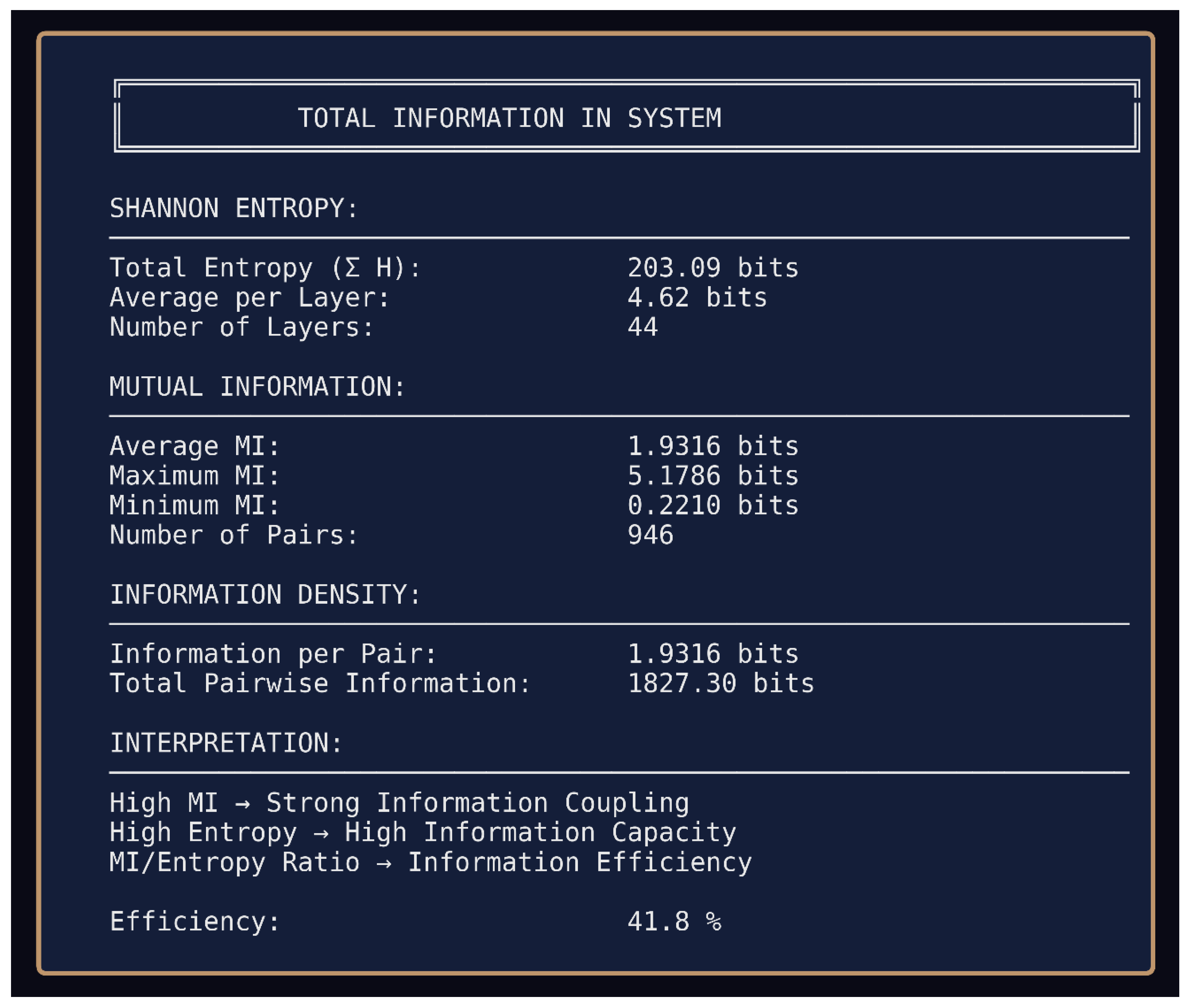

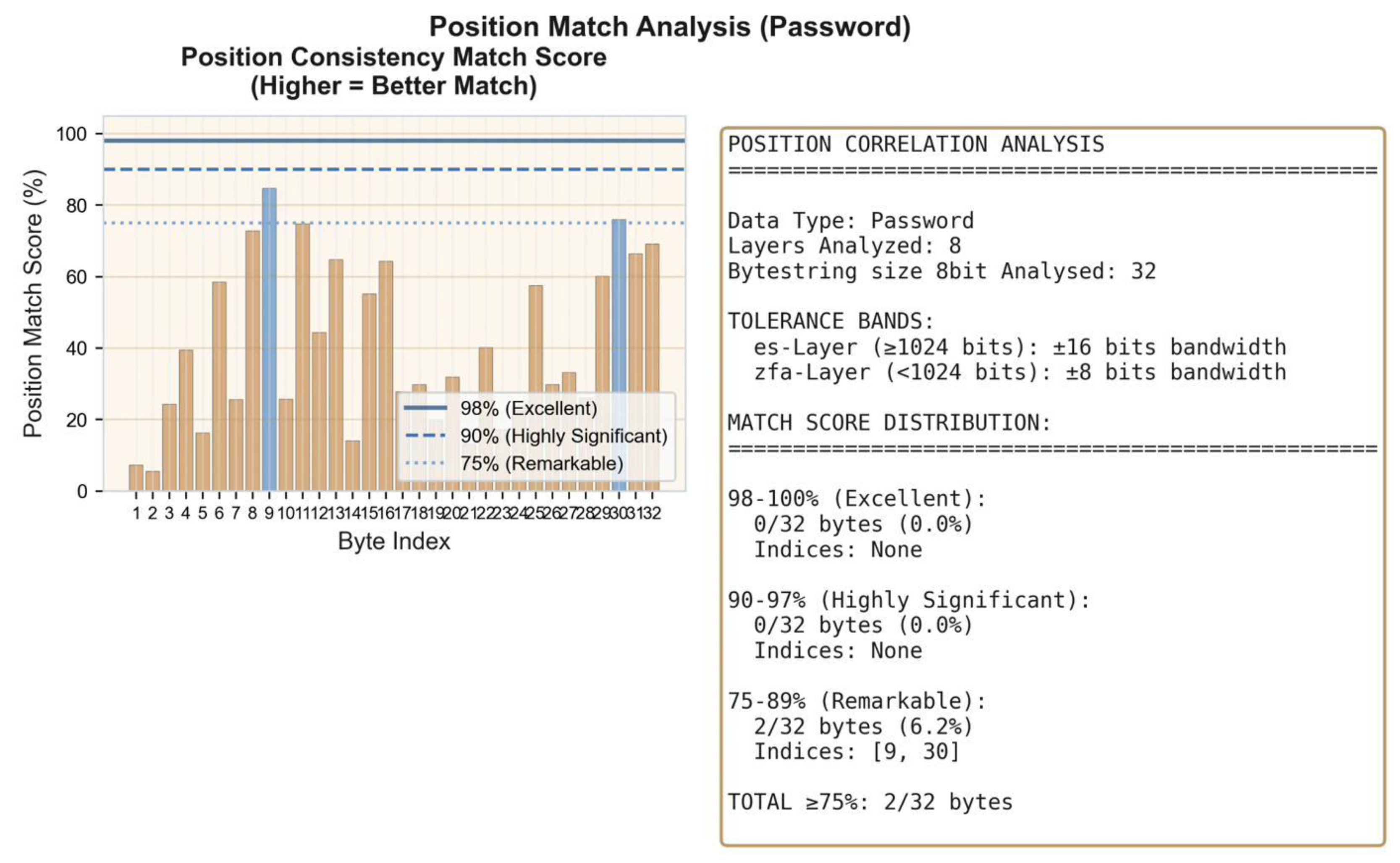

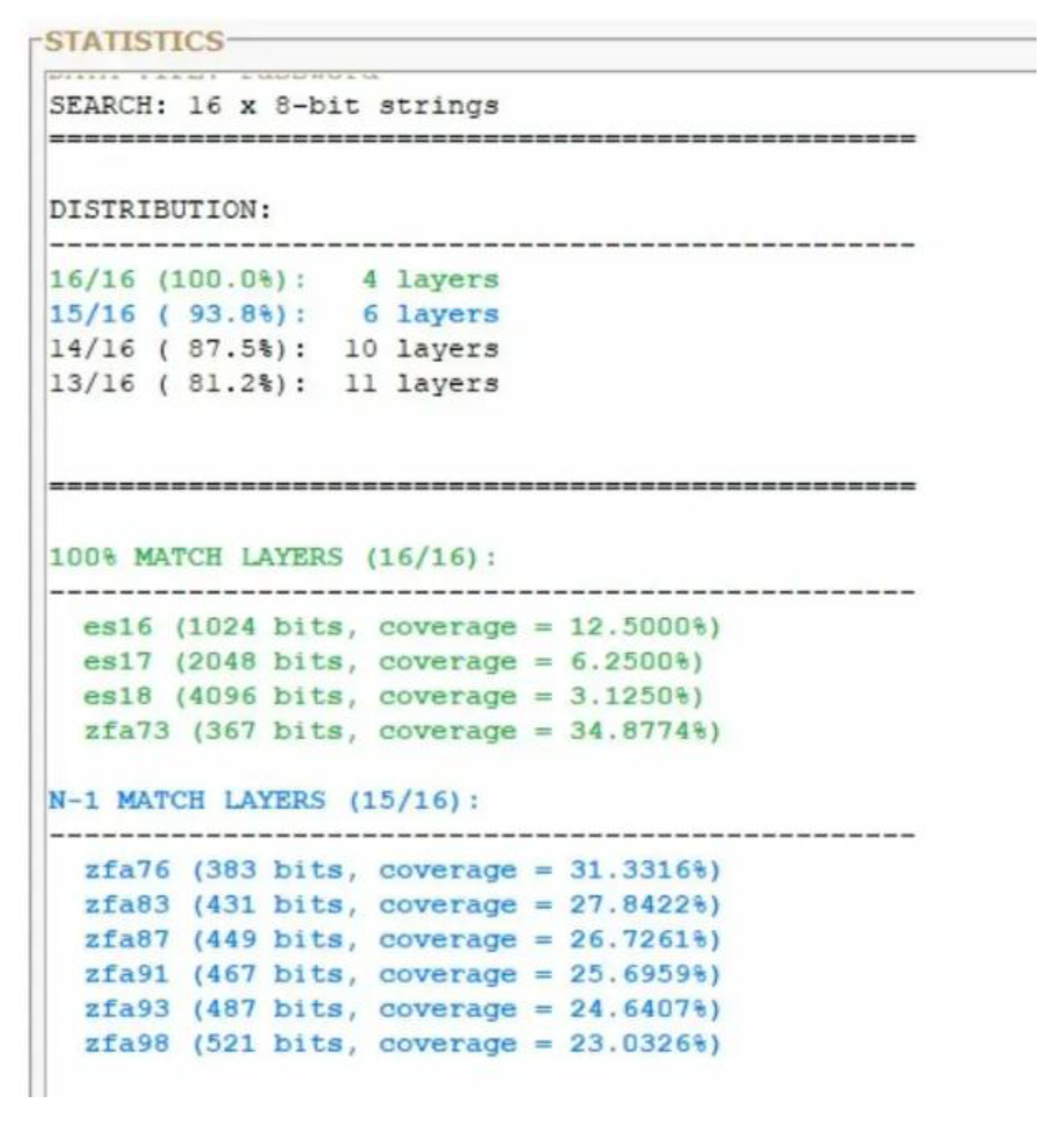

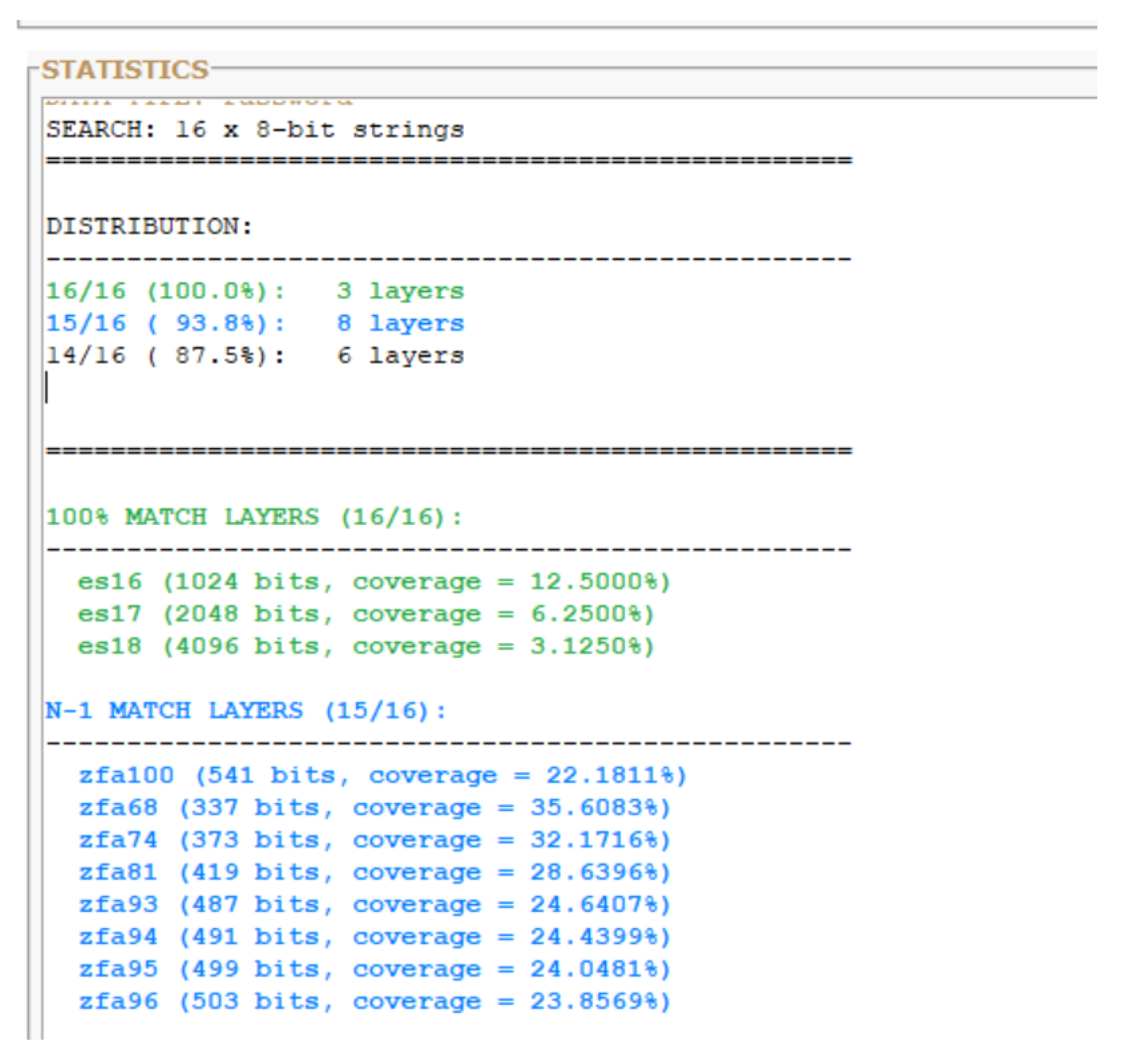

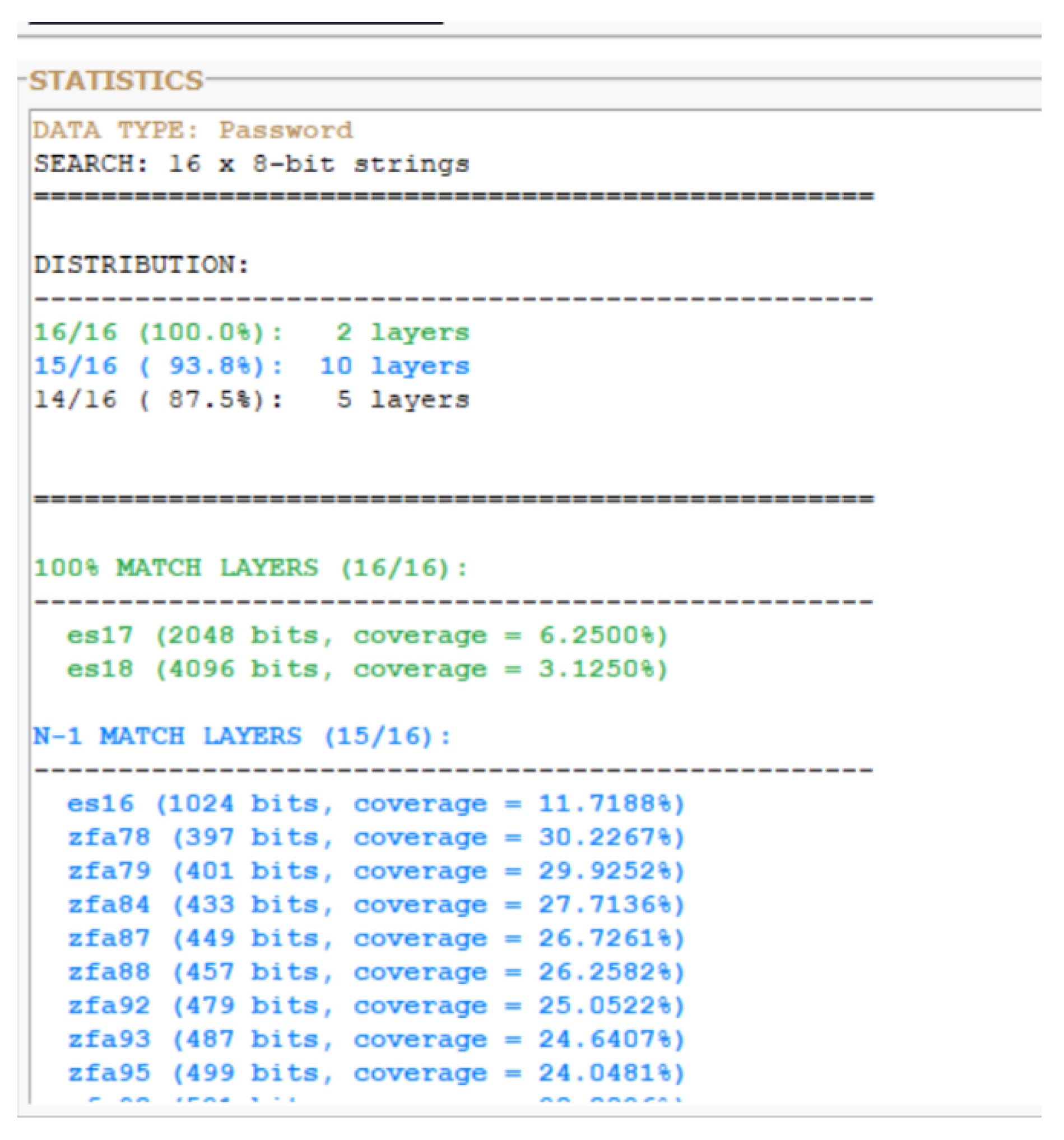

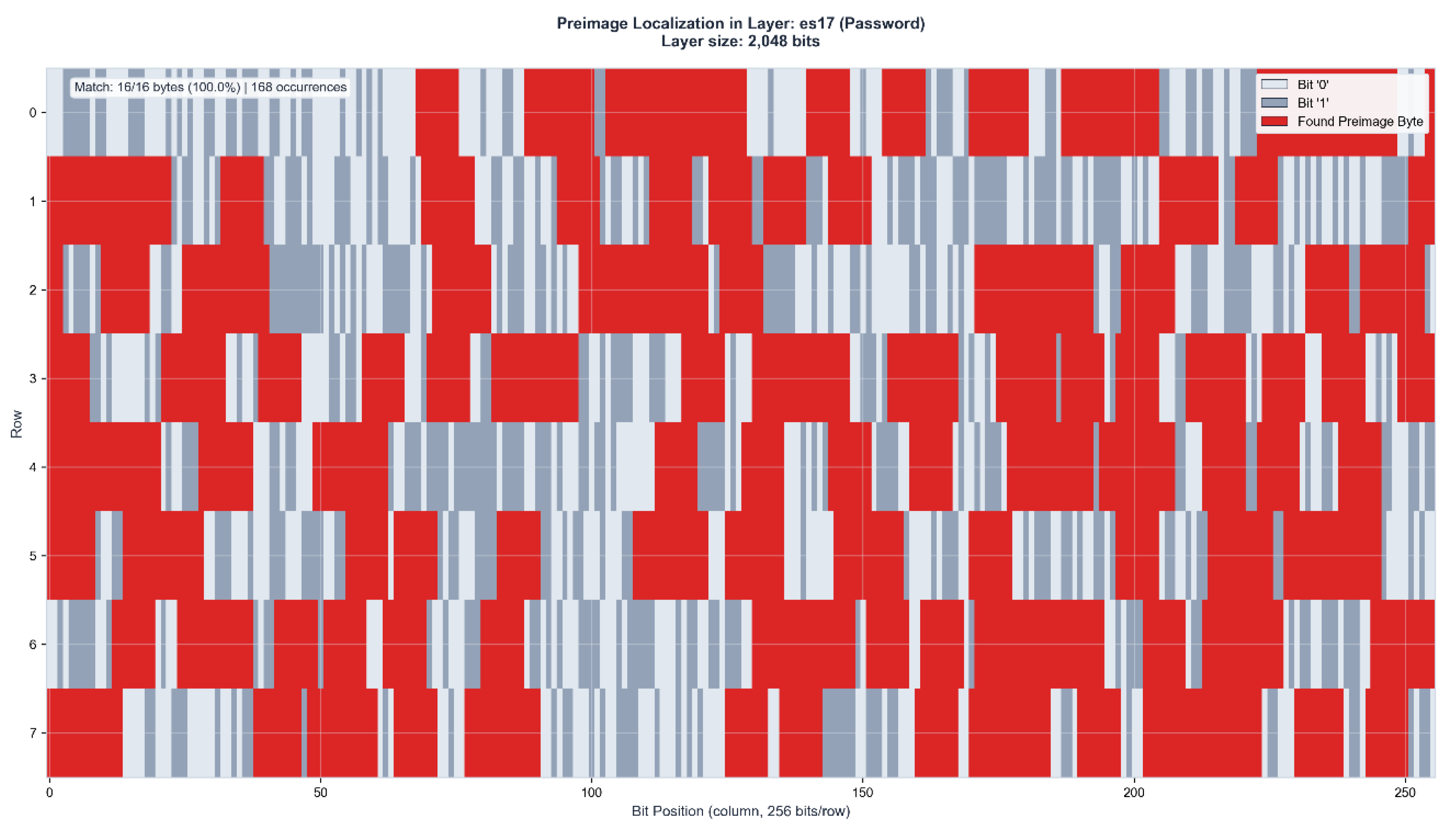

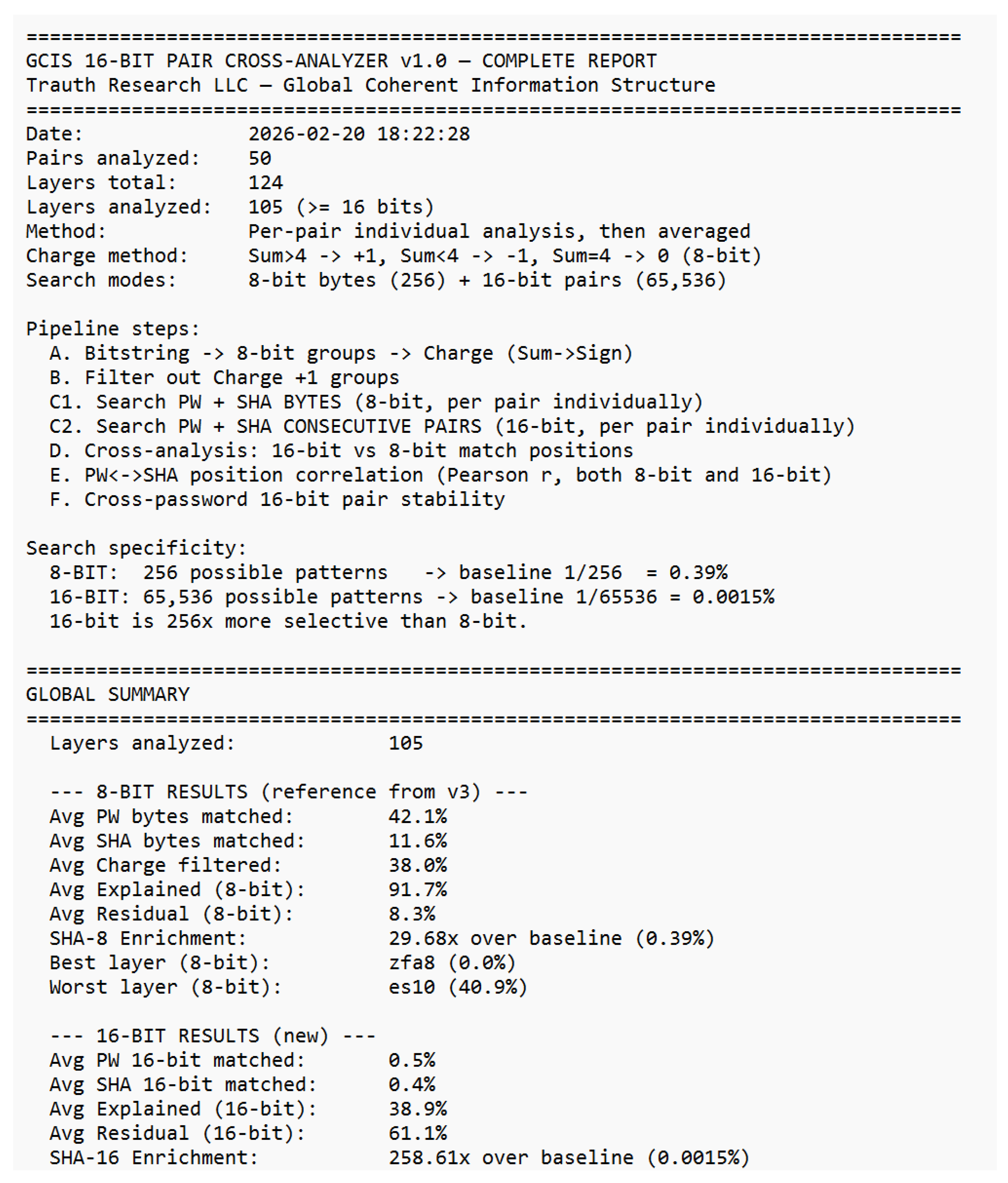

3.1 Global Overview

Across 50 password–hash pairs and 105 analyzable layers (of 124), the 16-bit consecutive-byte-pair analysis yields the following global results:

At 8-bit resolution: 42.1% average password byte match rate, 11.6% SHA byte match rate, 91.7% average explained variance, 8.3% residual, and a SHA enrichment of 29.68× over the 1/256 baseline. At 16-bit resolution: 258.61× SHA enrichment over the 1/65,536 baseline, exceeding random expectation by more than two orders of magnitude. The charge filter removes an average of 38.0% of all positions as +1 charge (confirmed non-password), concentrating the analysis on the geometrically active −1 charge region.

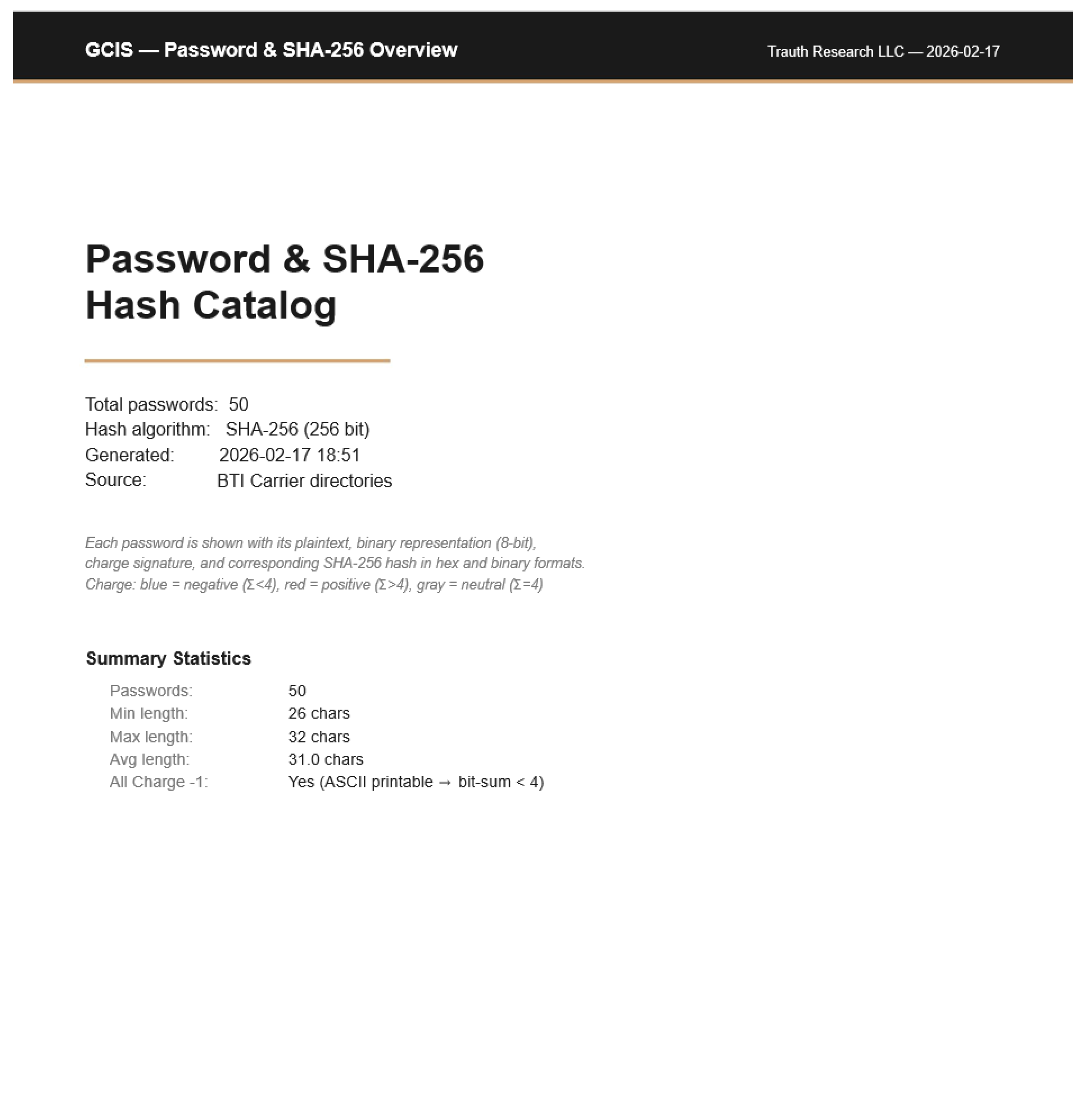

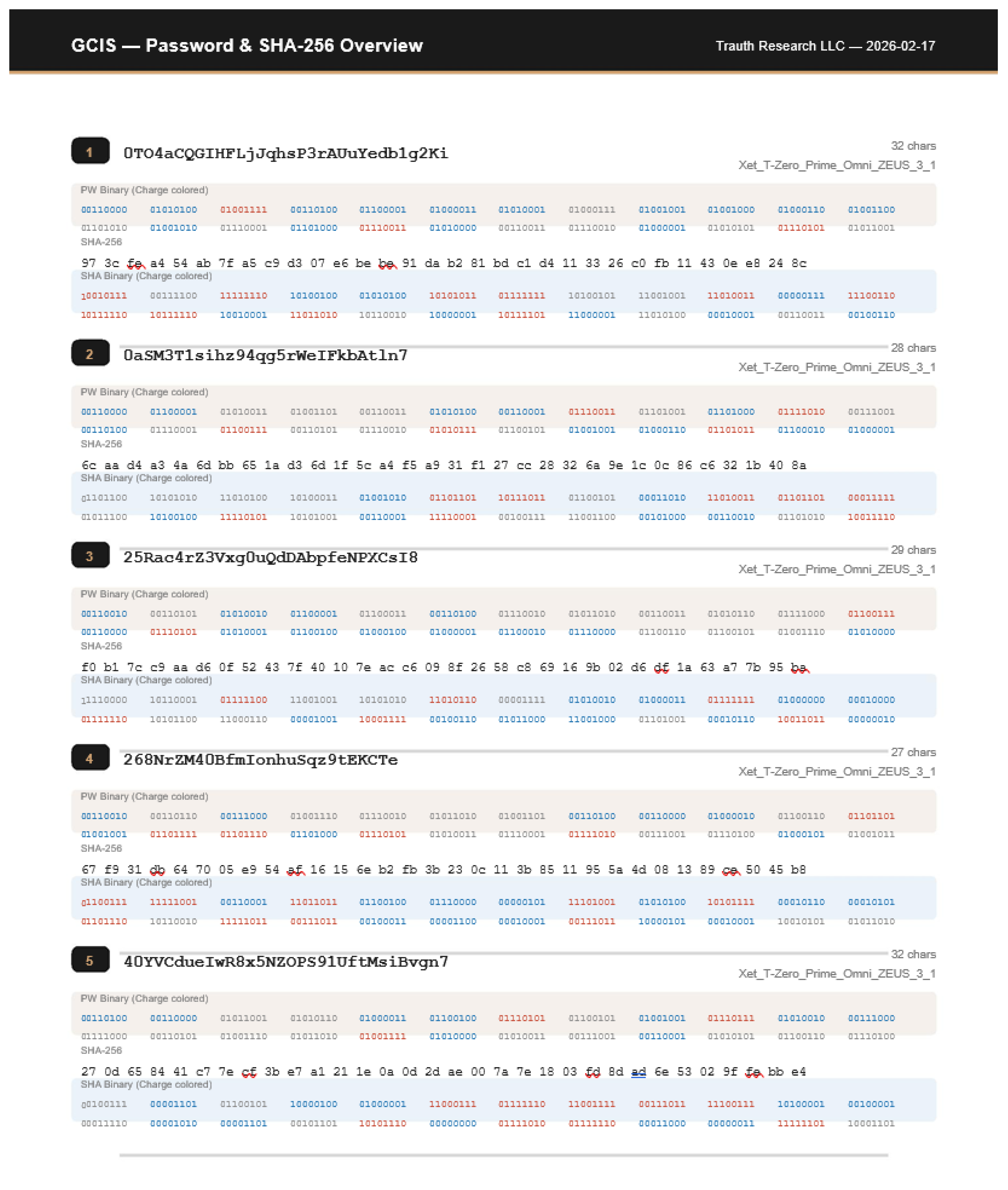

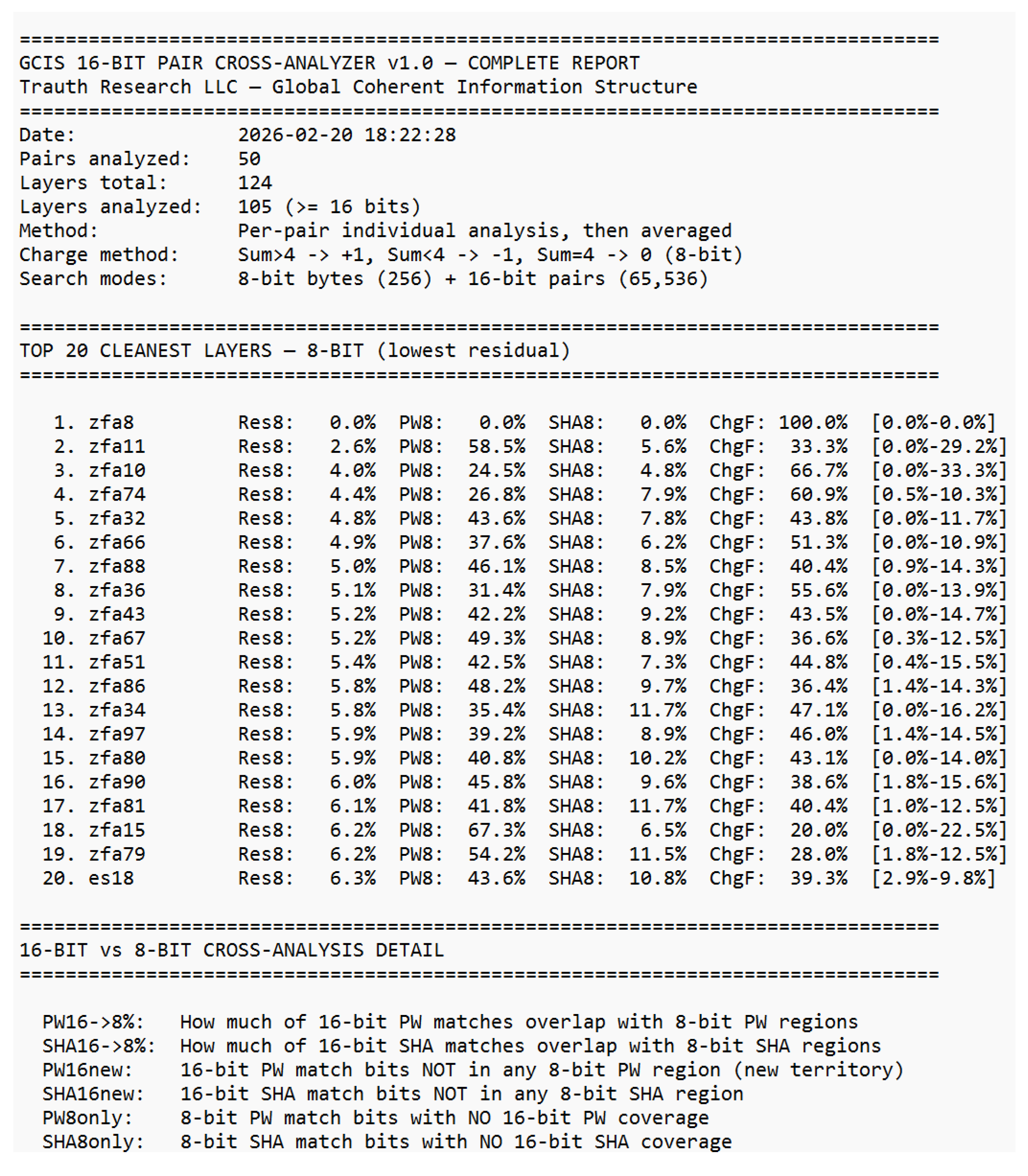

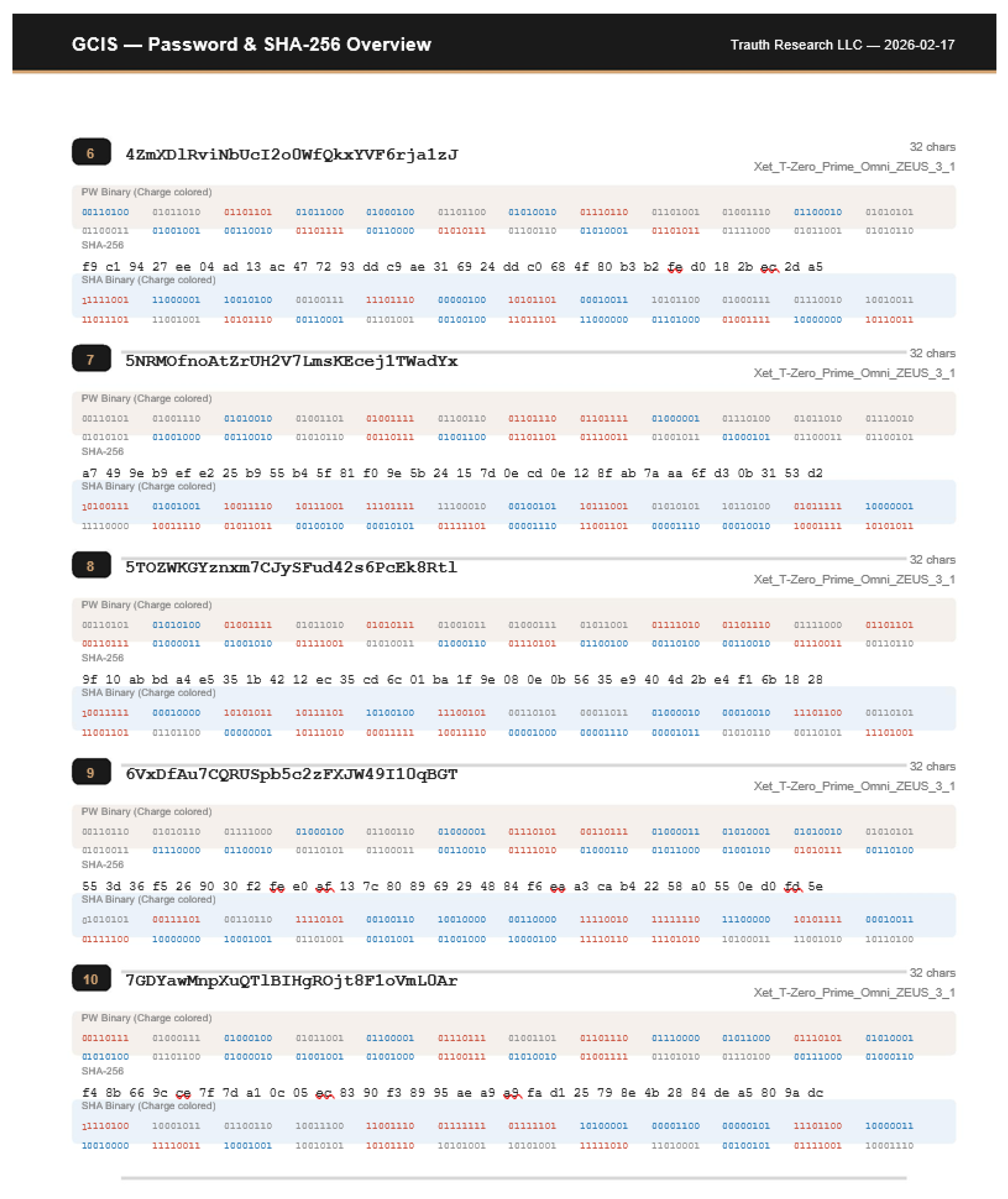

The following excerpt from the GCIS Overview Report summarizes the global statistics:

Figure 7.

GCIS 16-Bit Cross-Analyzer: global summary. 50 pairs, 105 layers, 8-bit and 16-bit results. Complete report in Supplement S2.


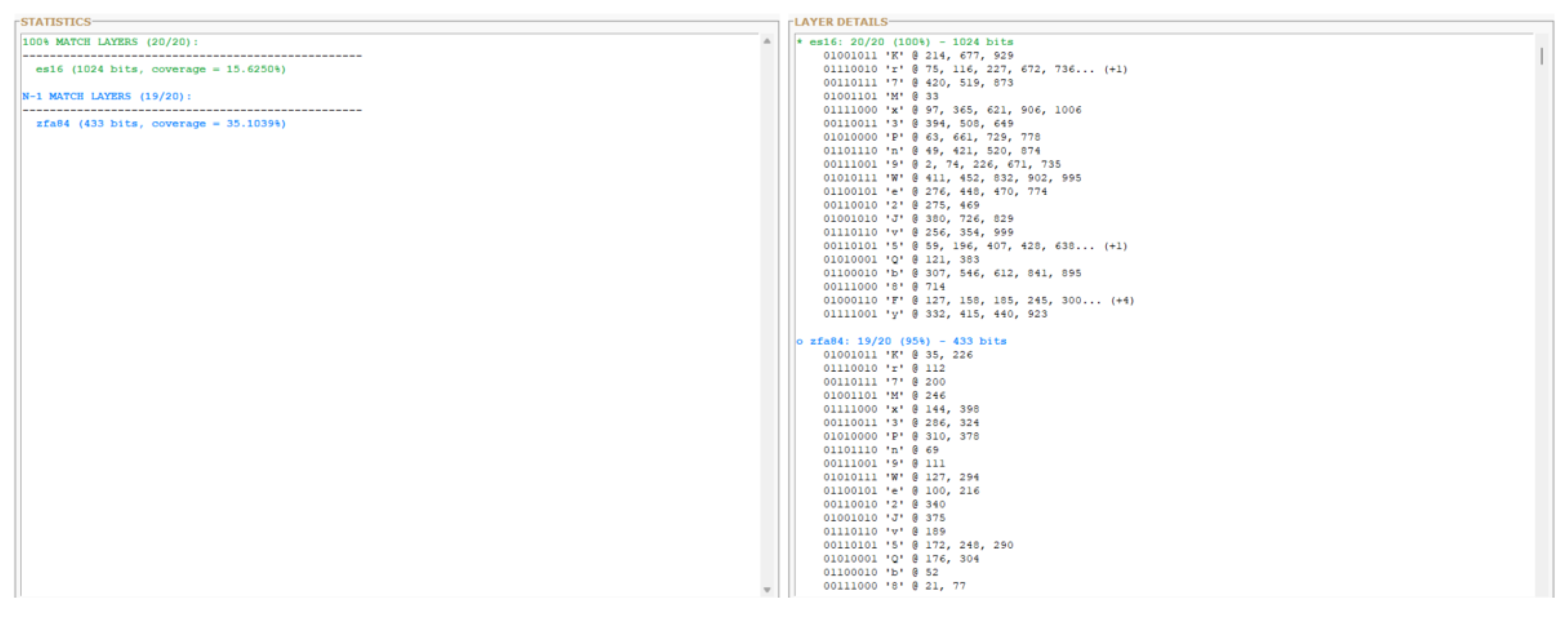

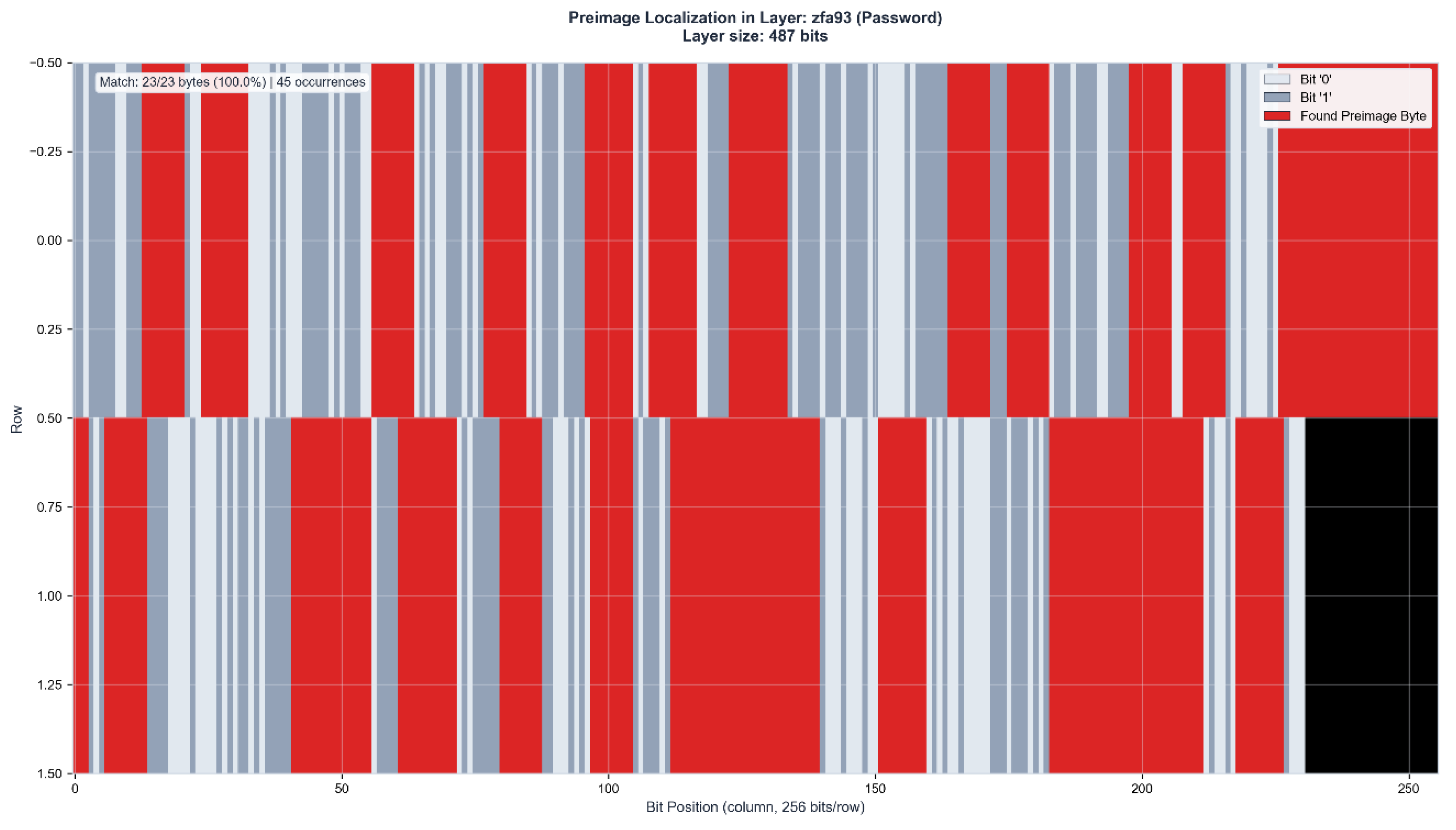

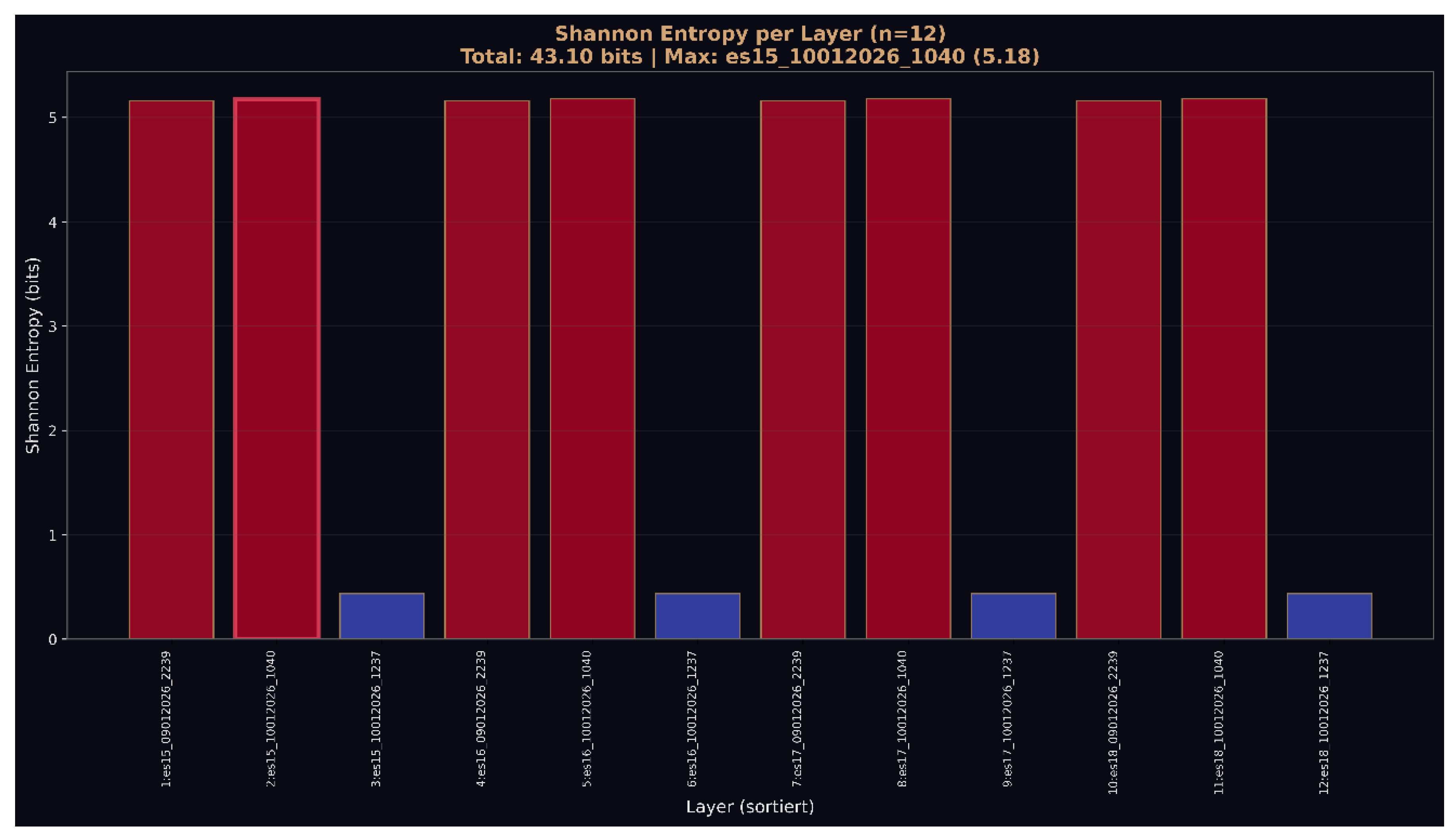

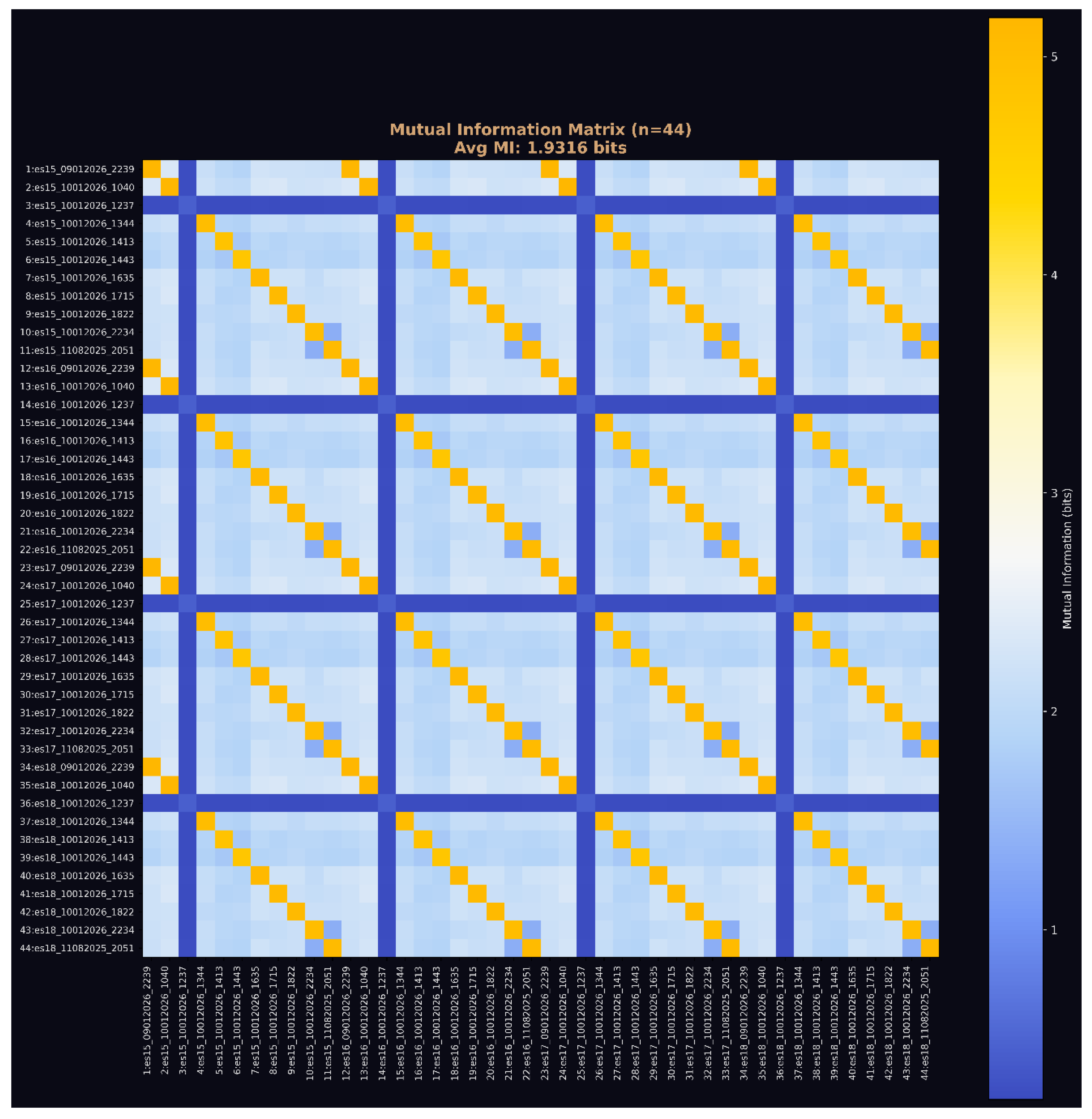

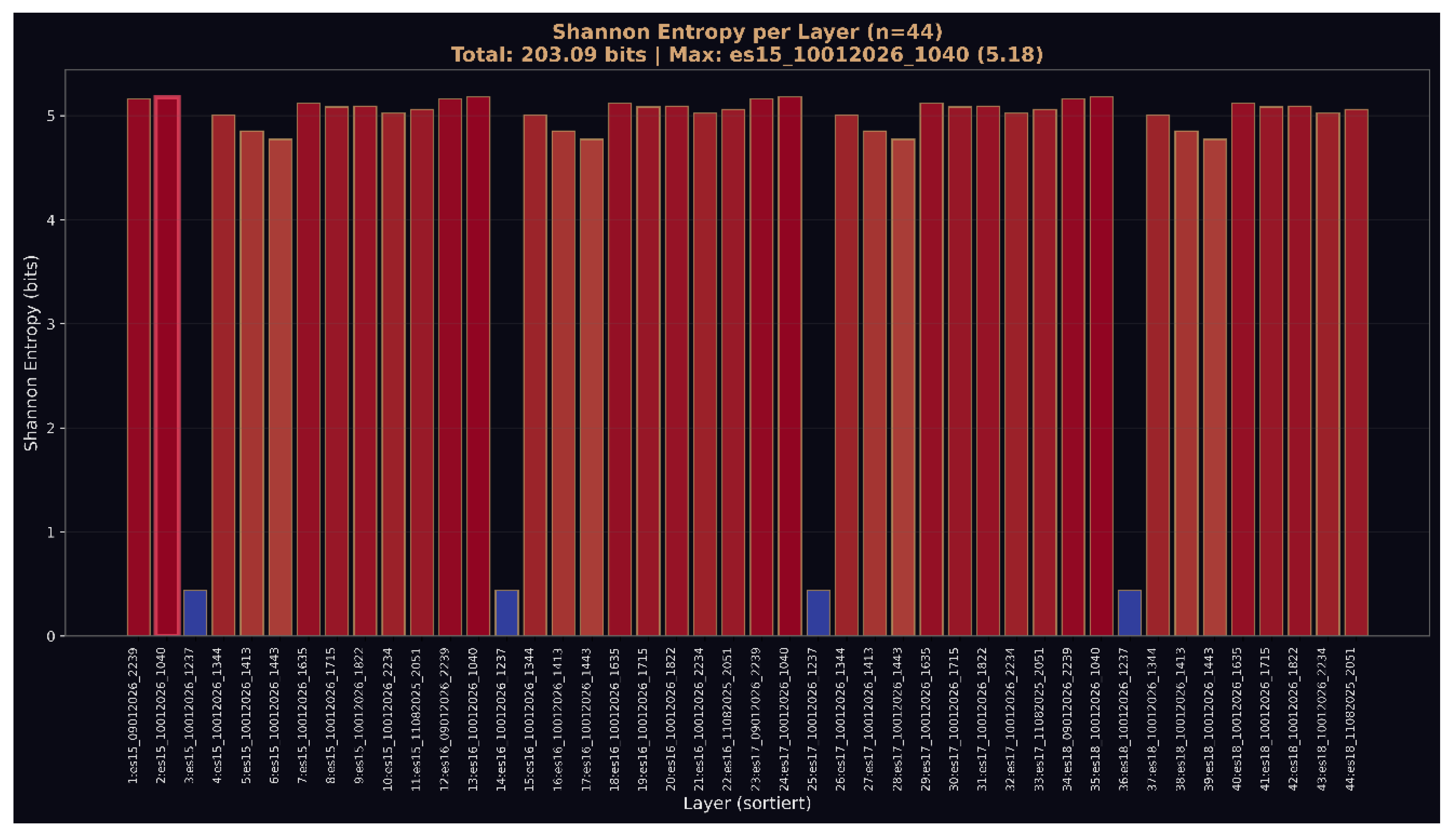

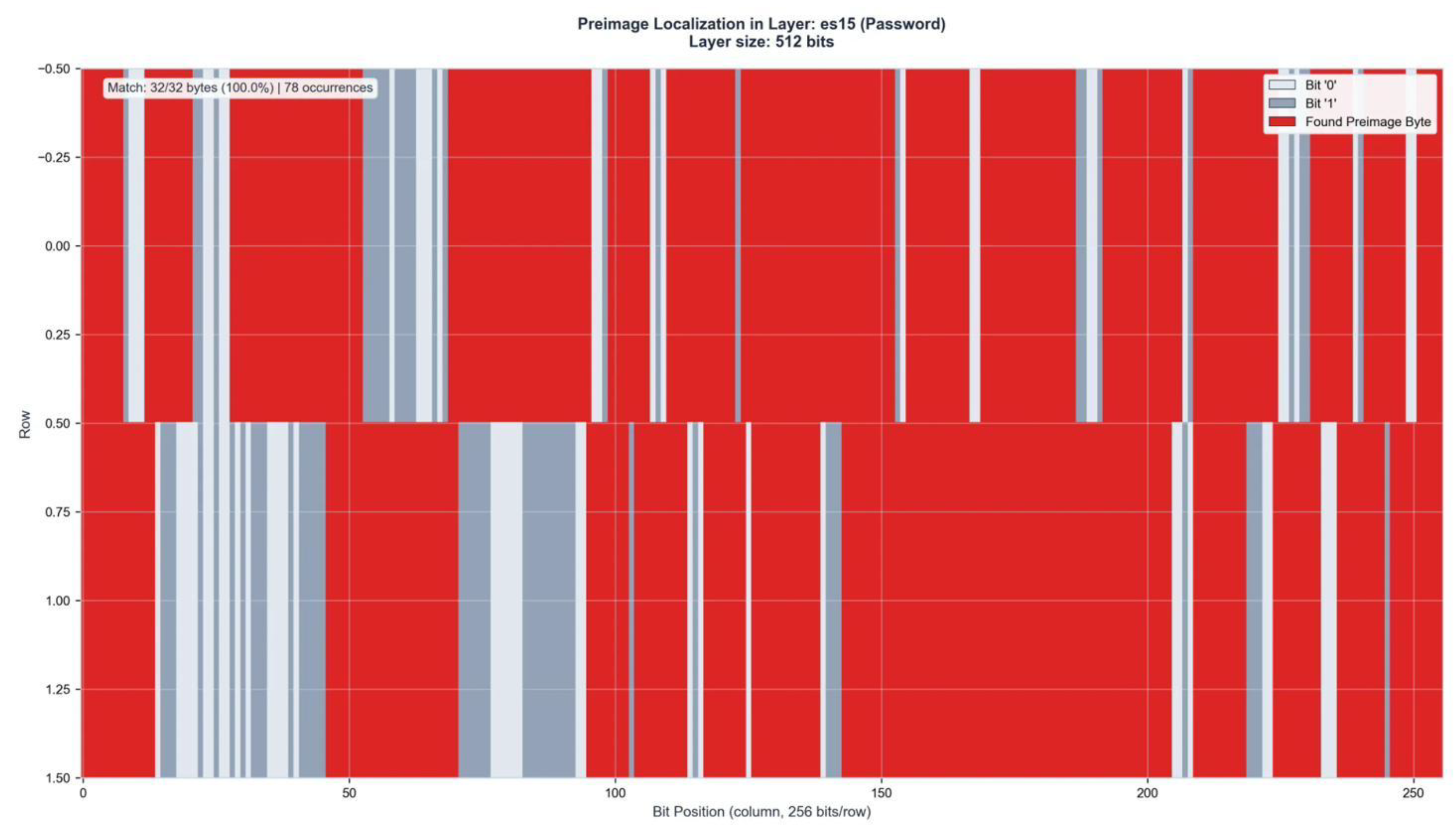

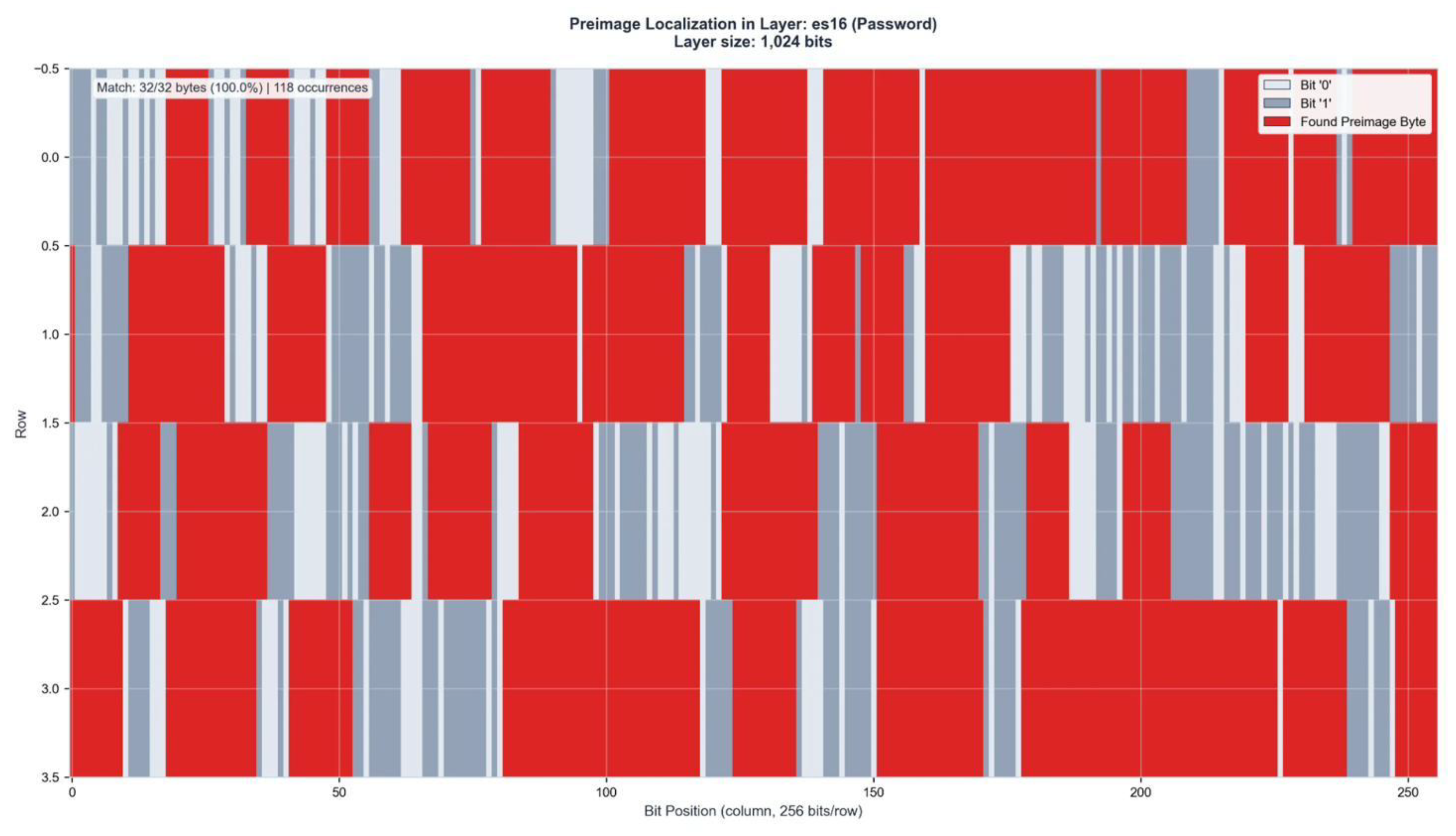

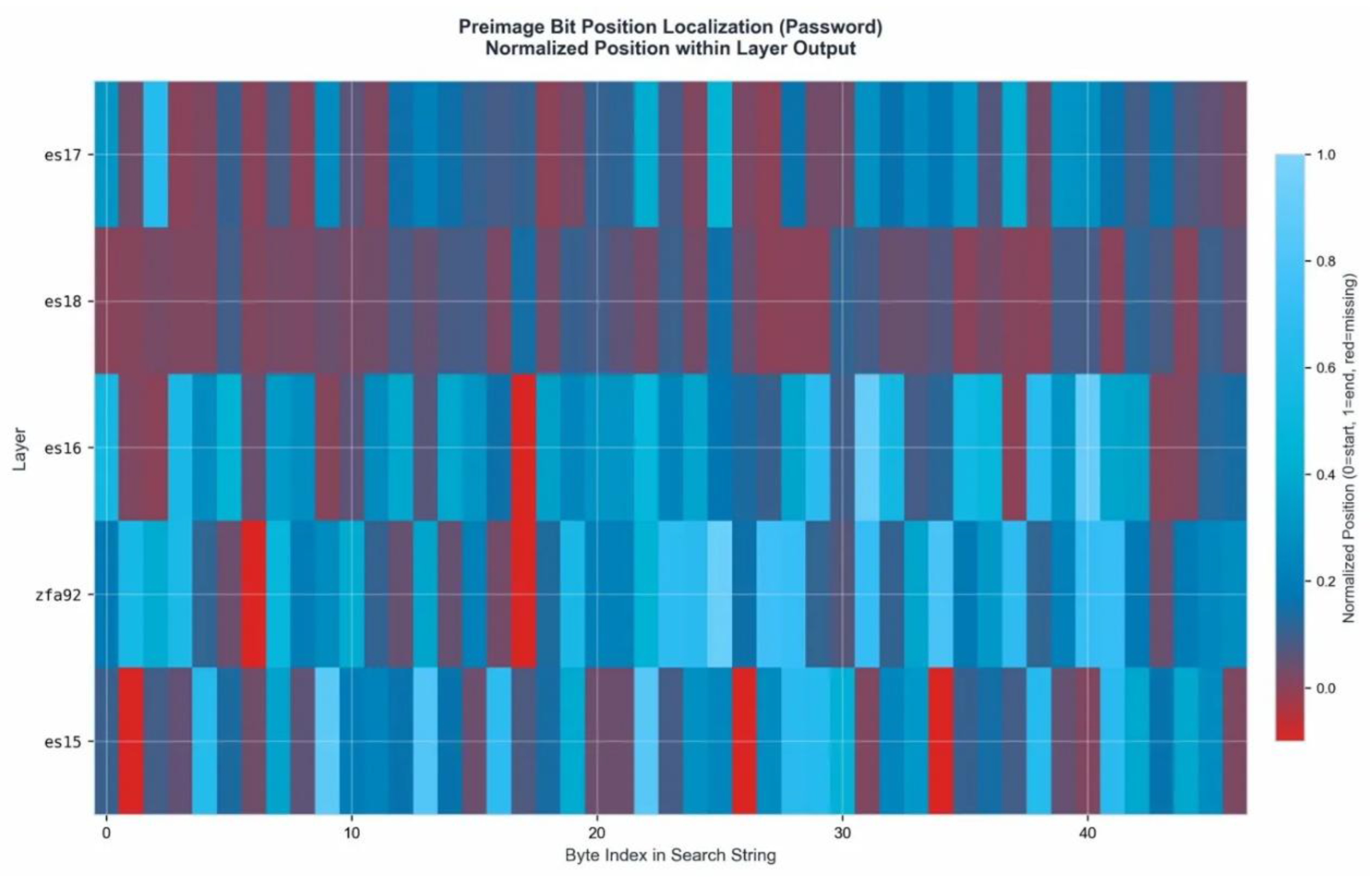

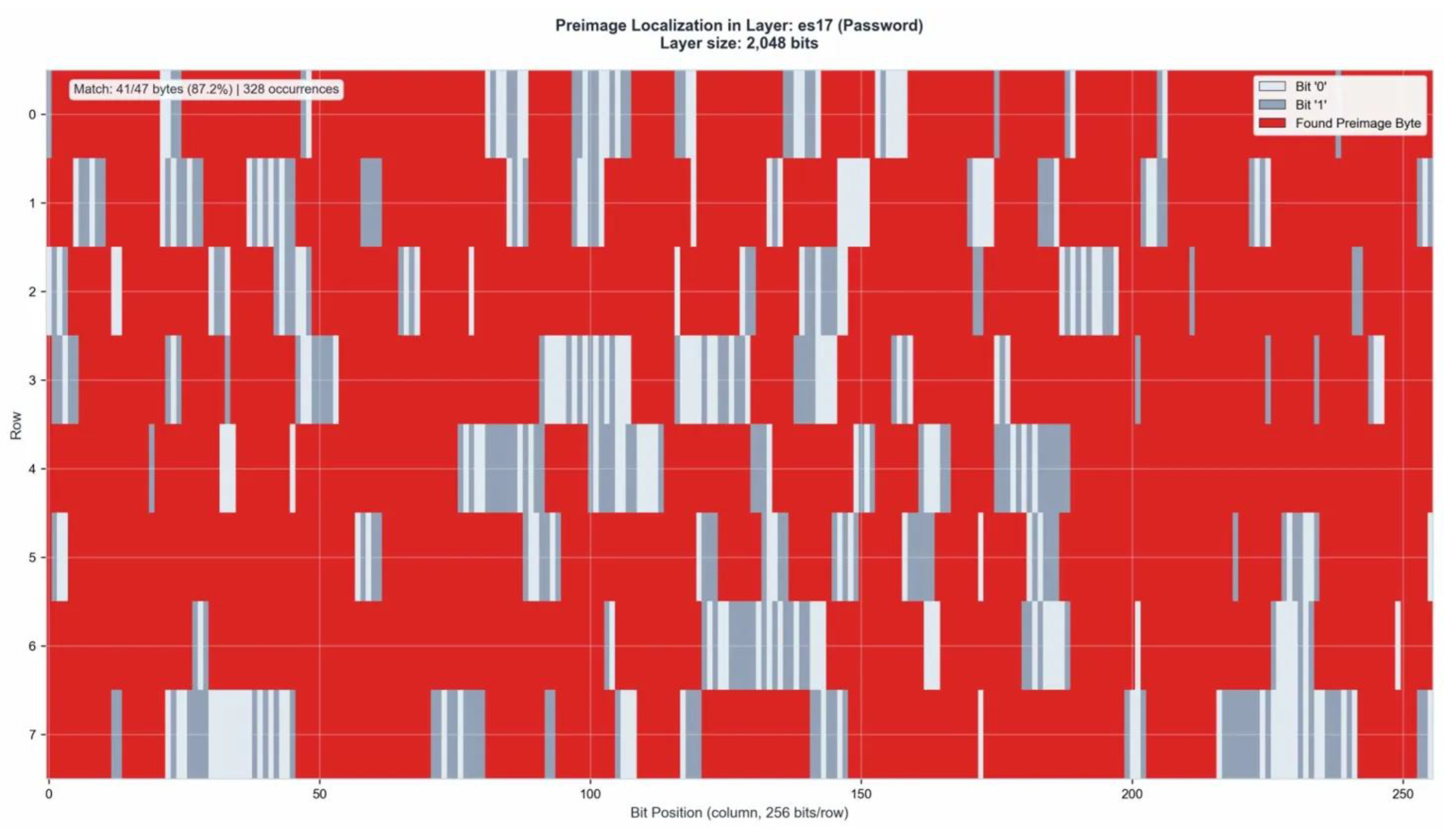

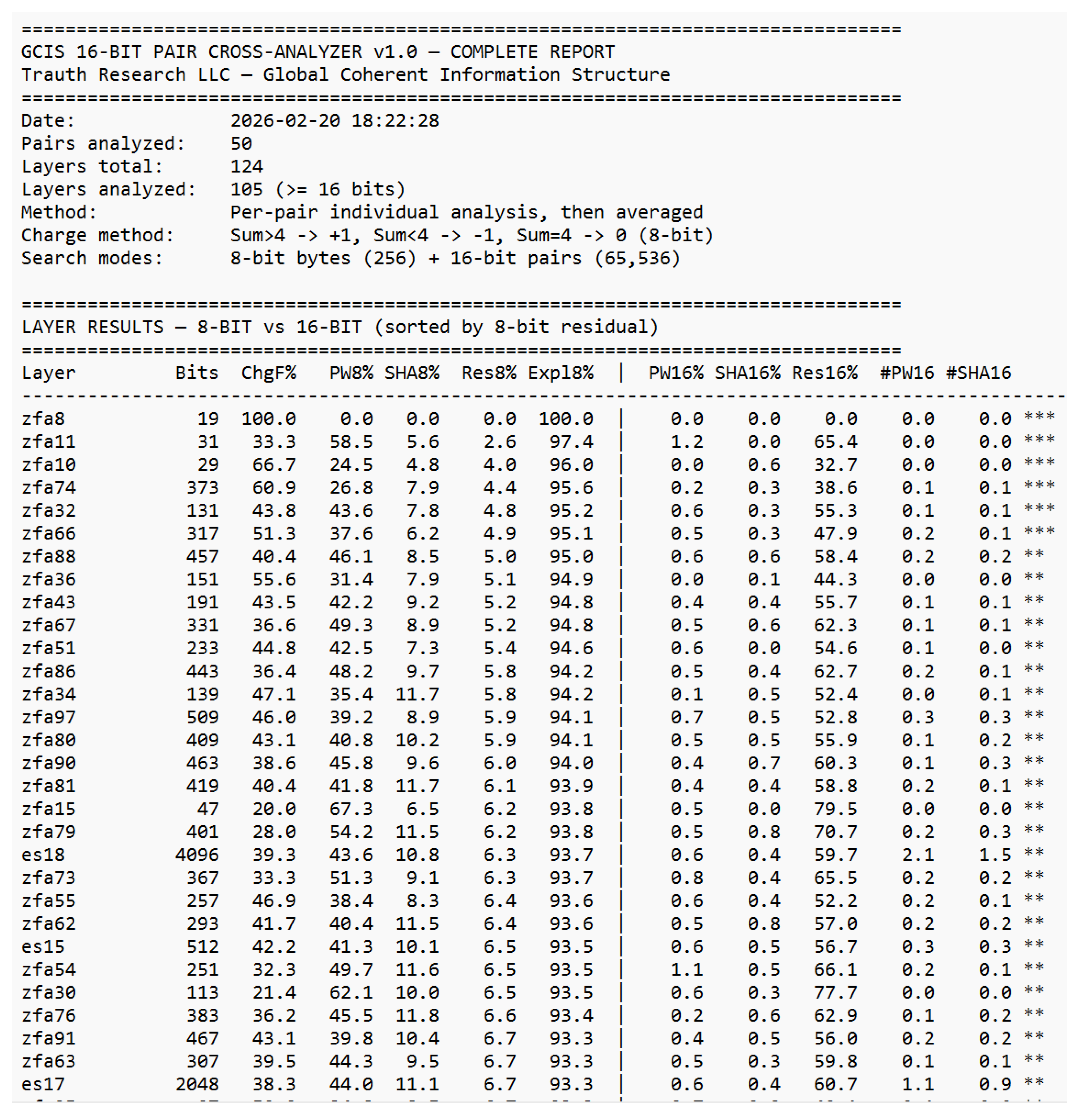

3.2 Layer-by-Layer Results

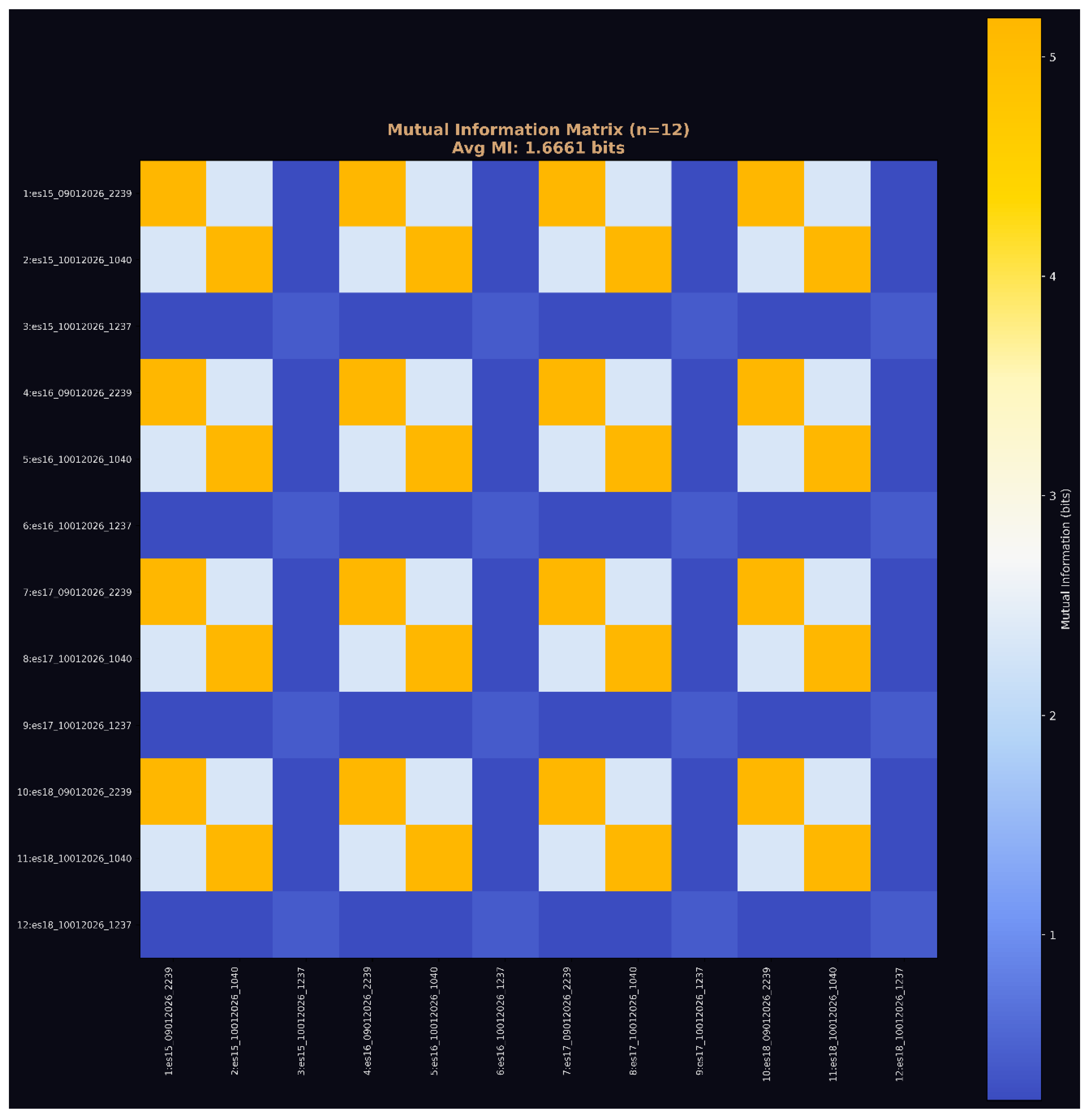

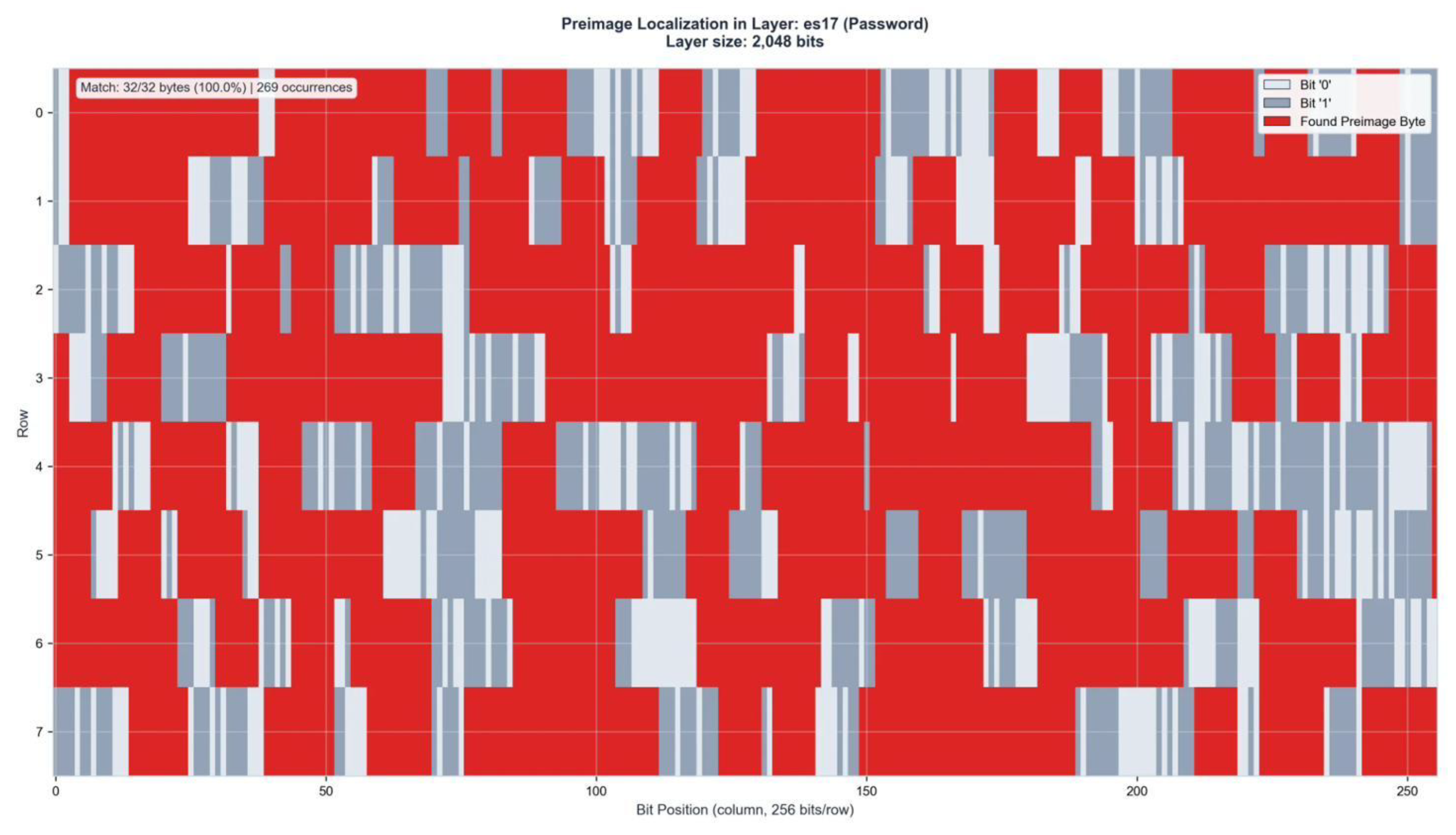

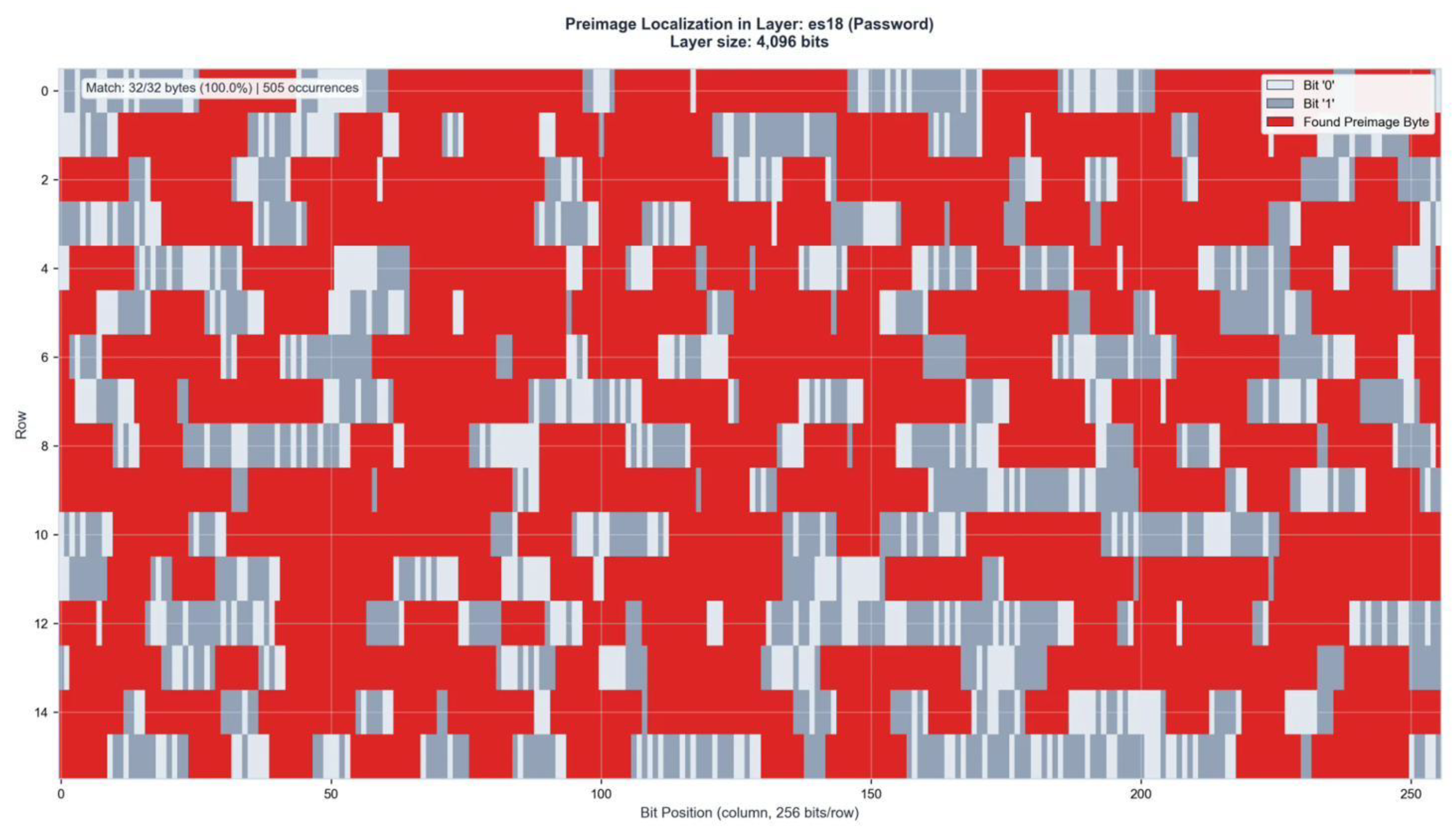

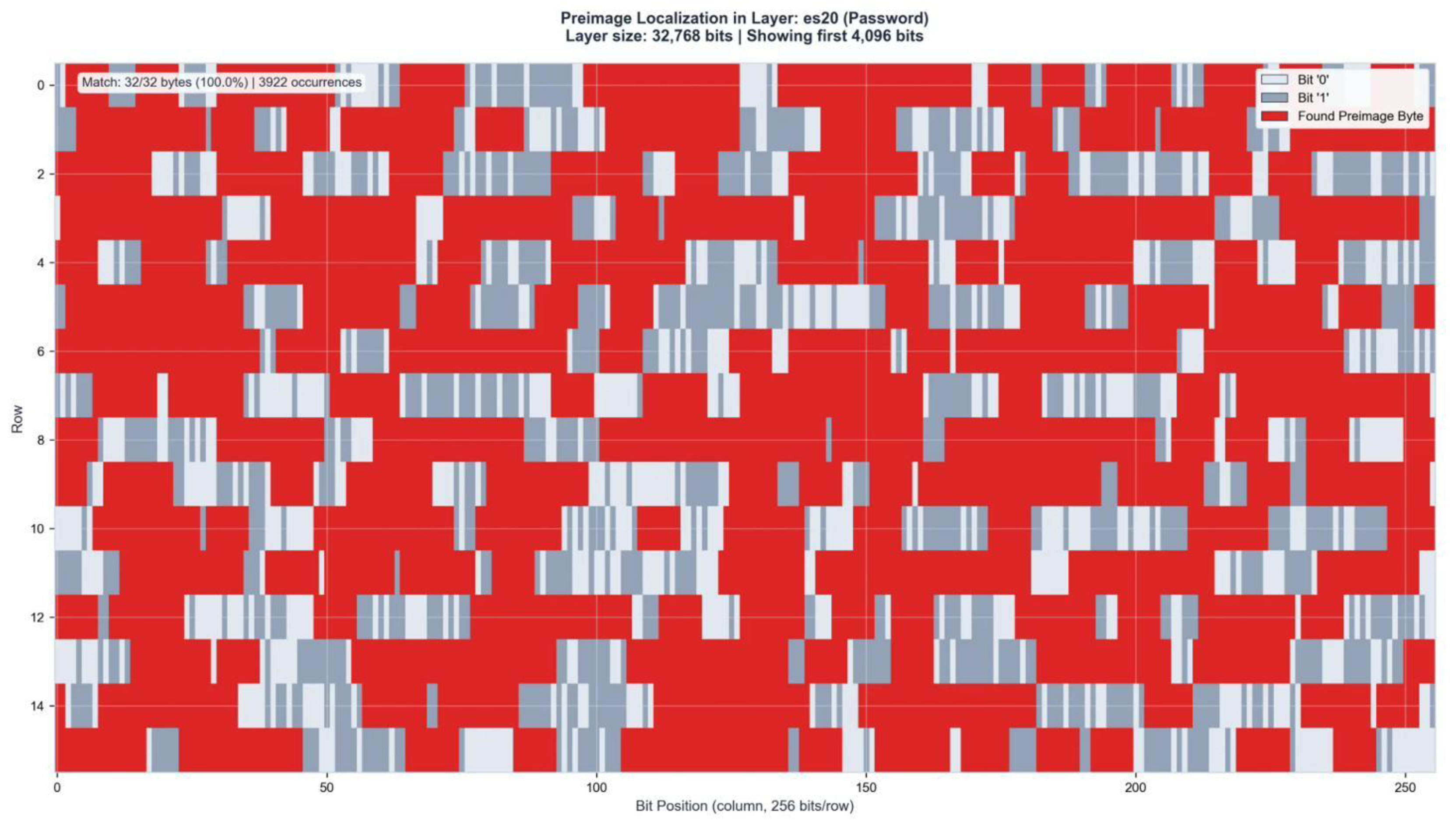

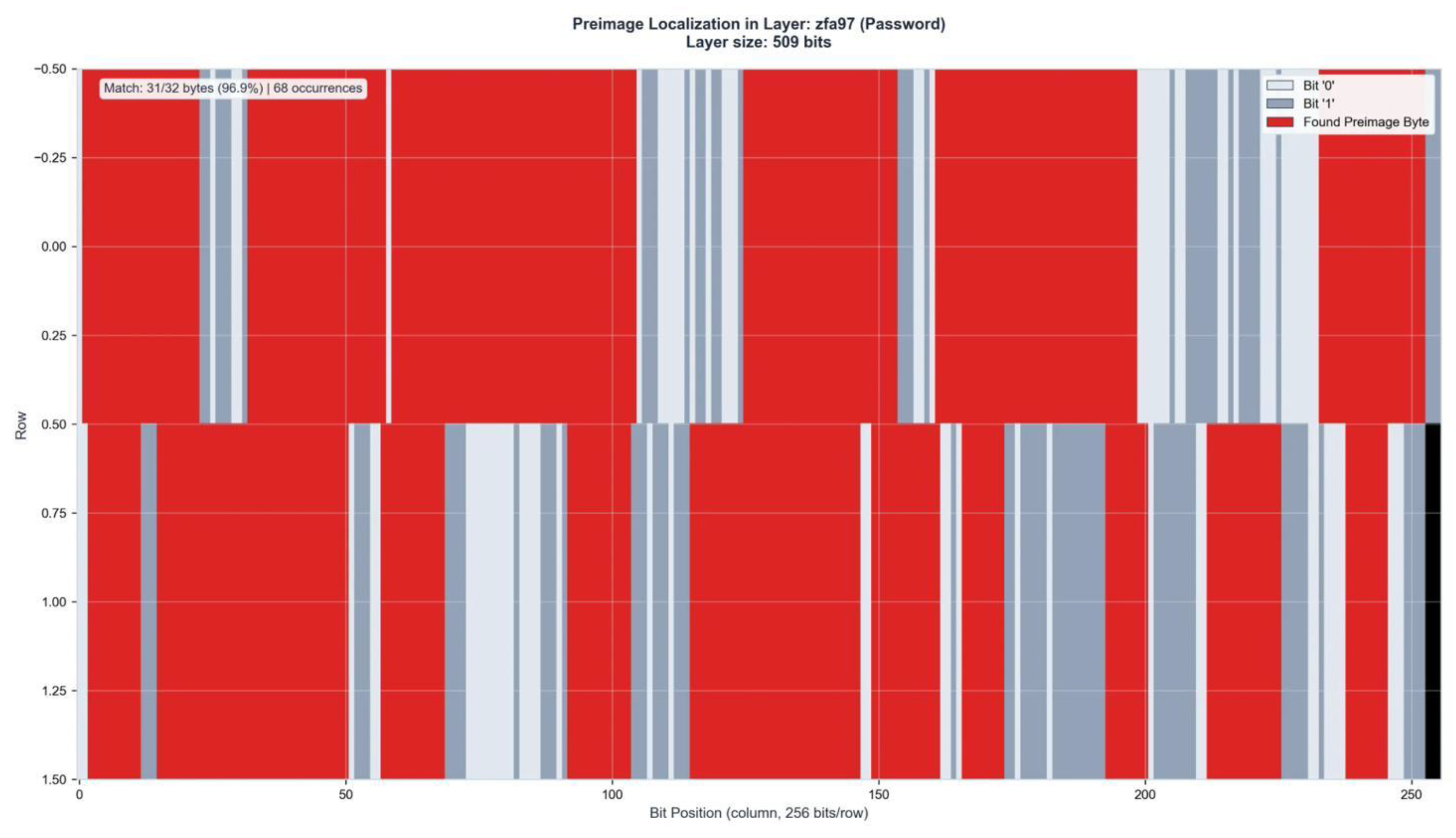

The explained variance is not uniformly distributed across layers. The top 20 layers by 8-bit performance achieve residuals between 0.0% (ZFA8) and 6.3% (ES18), corresponding to explained variances of 93.7–100%.

The ES layers (ES15–ES20) and select ZFA layers (ZFA74, ZFA66, ZFA88, ZFA11) consistently rank among the highest-performing layers. At 16-bit resolution, the ES layers dominate: ES20 averages 7.2 password and 7.0 SHA 16-bit matches per pair, ES19 averages 4.3 and 3.4, and ES18 averages 2.1 and 1.5. This concentration in the high-dimensional ES layers confirms the geometric localization hierarchy established in the main body [5].

Figure 8.

Layer results: 8-bit vs. 16-bit comparison, sorted by residual. Three-star layers (***) achieve <5% residual at 8-bit. Complete layer analysis in Supplement S2.


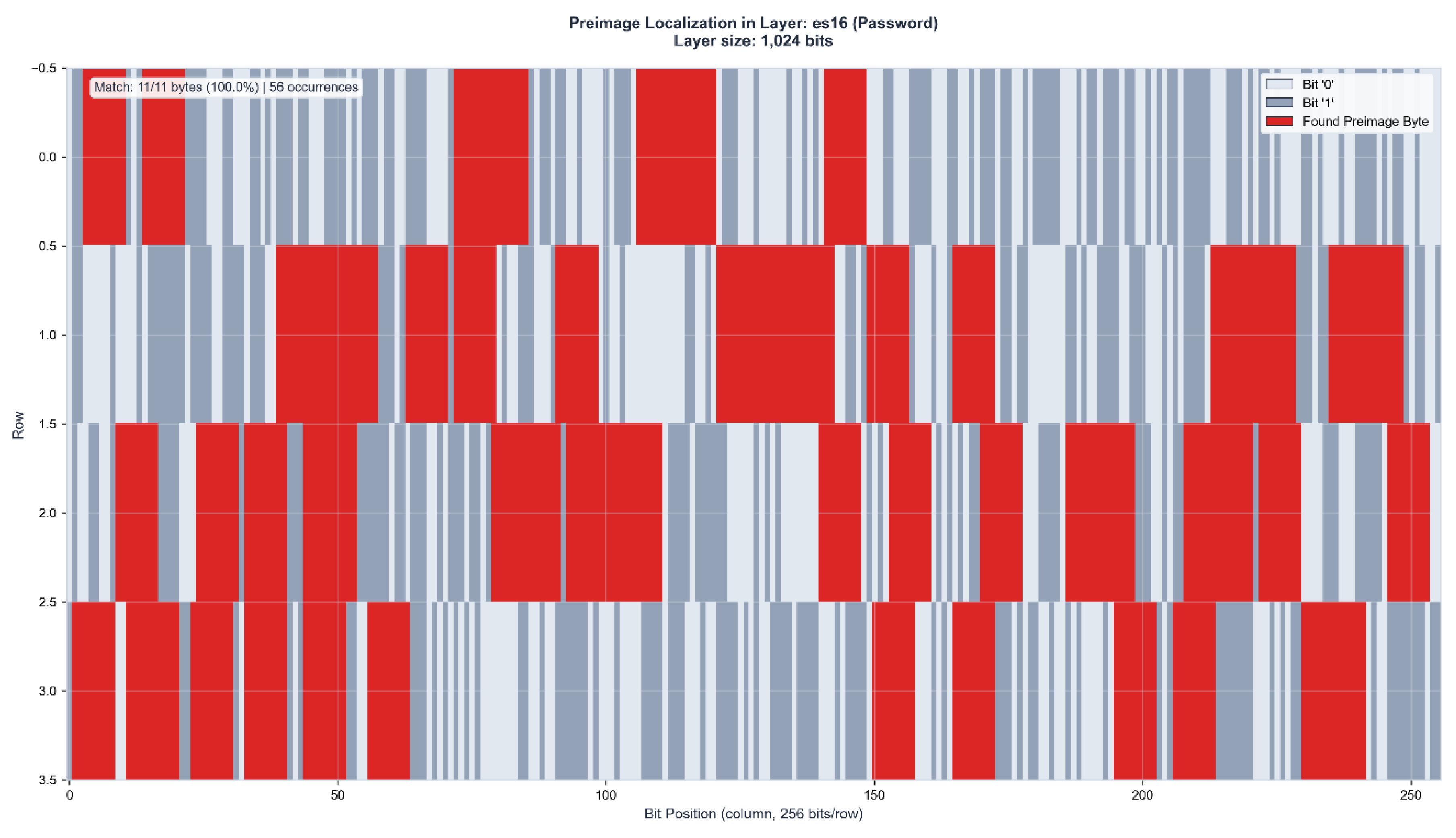

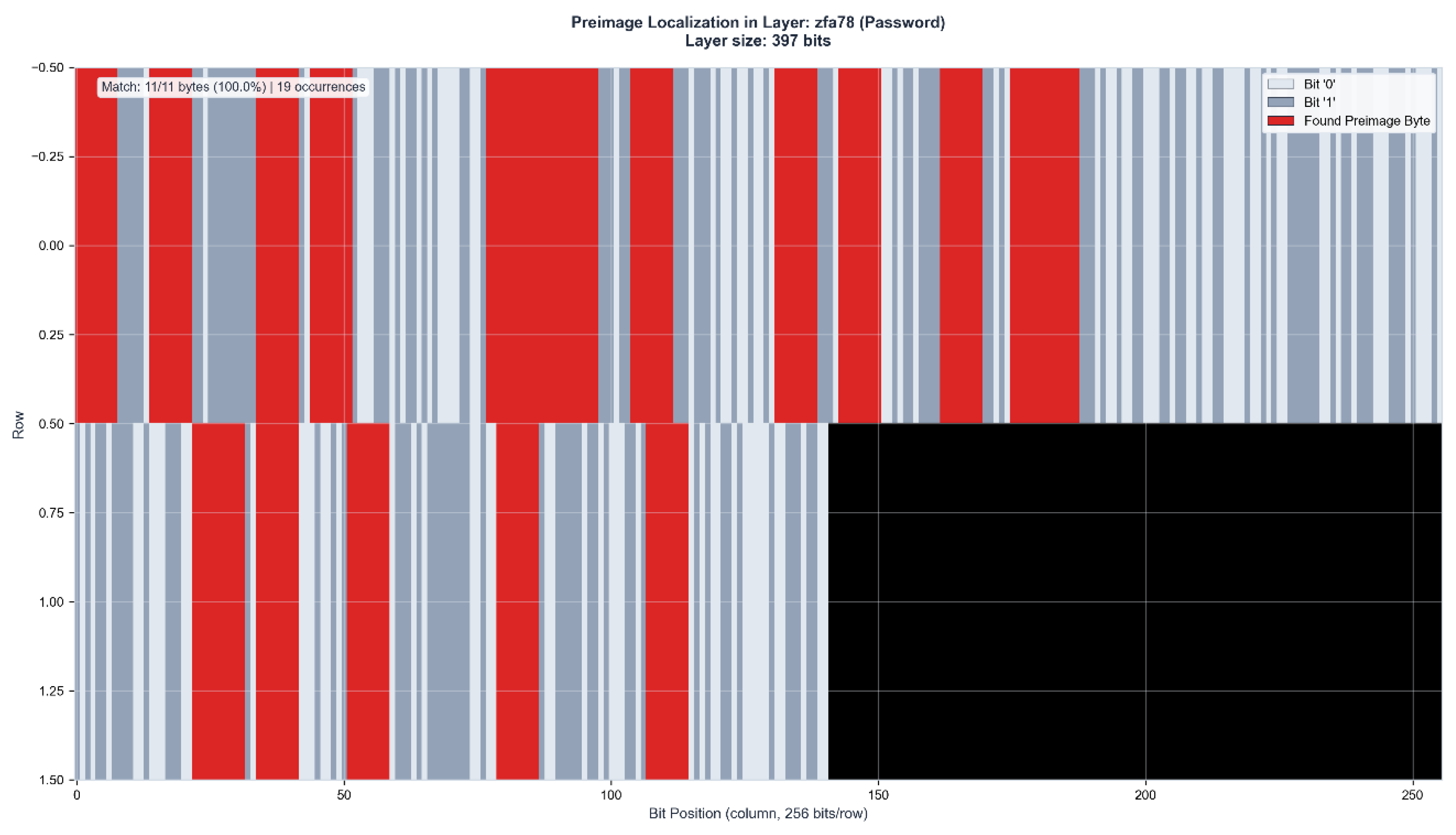

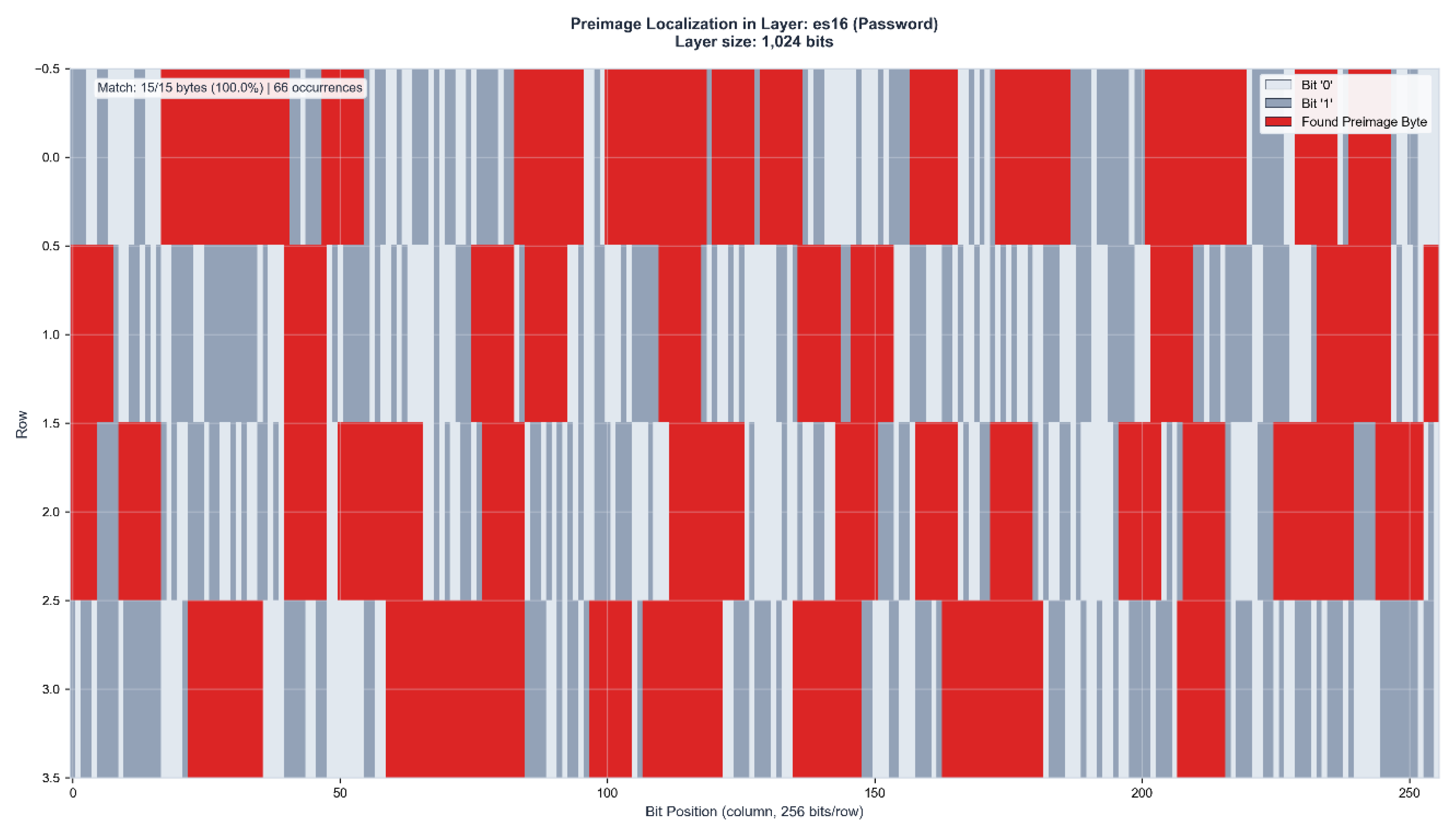

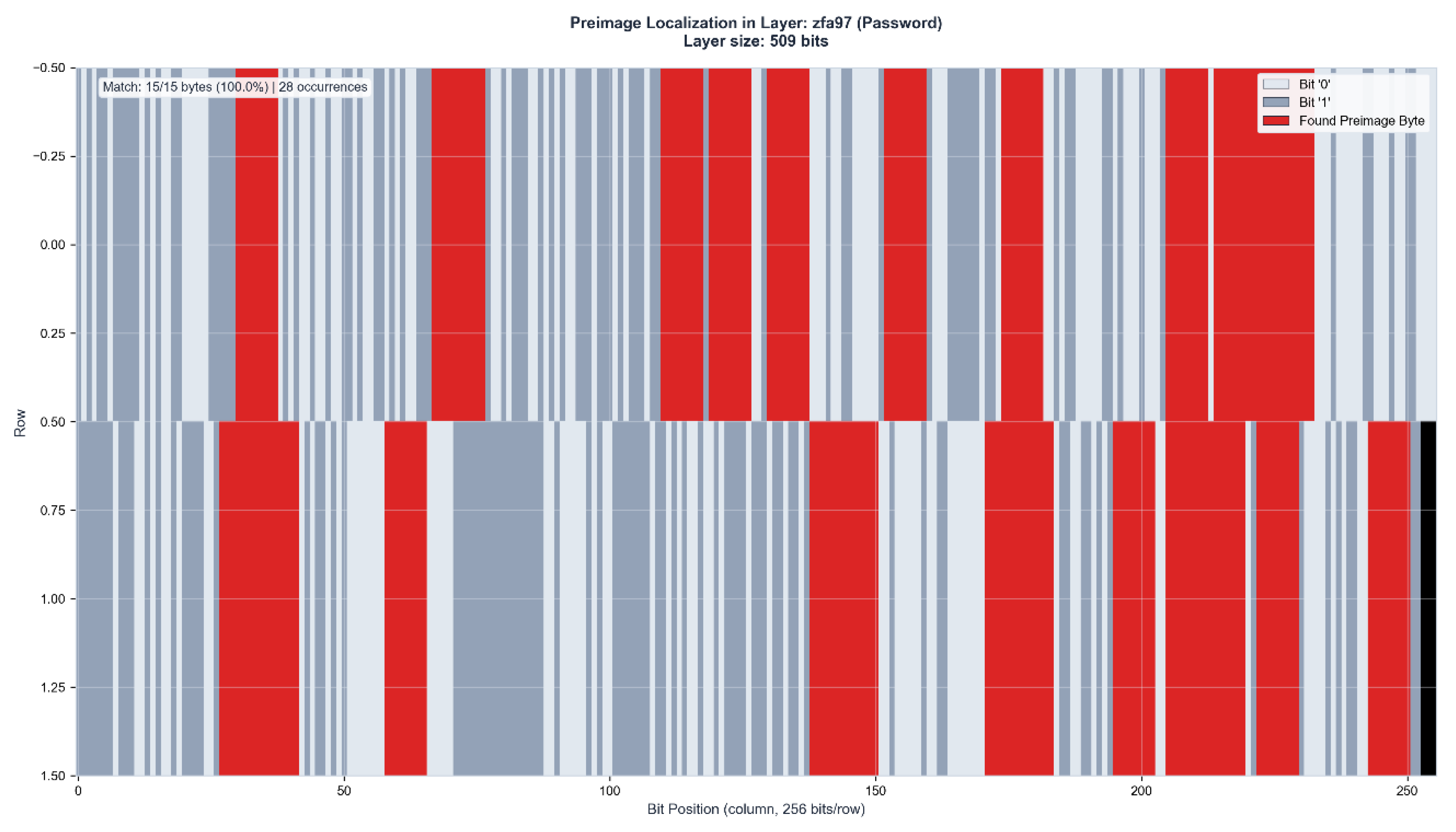

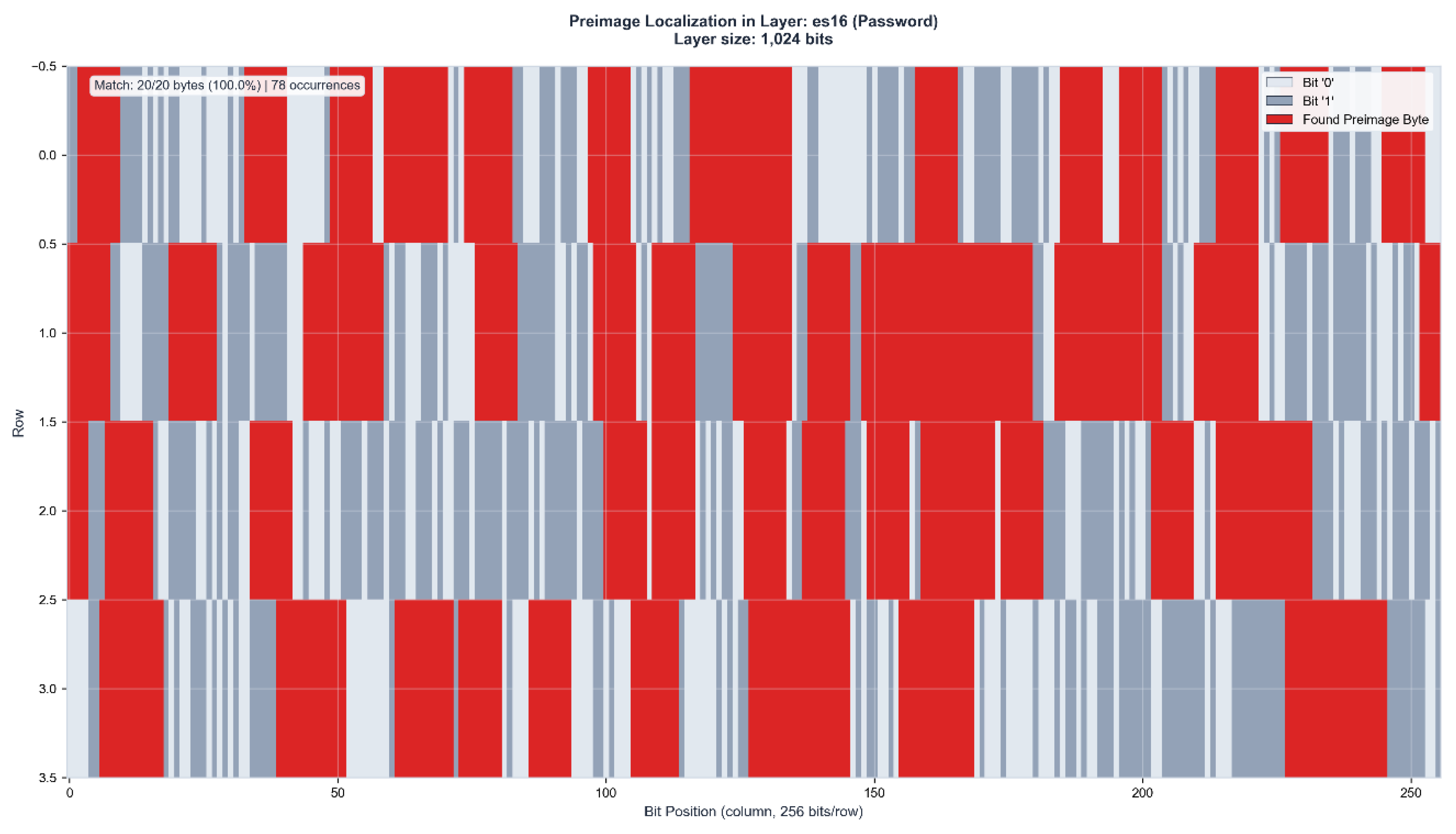

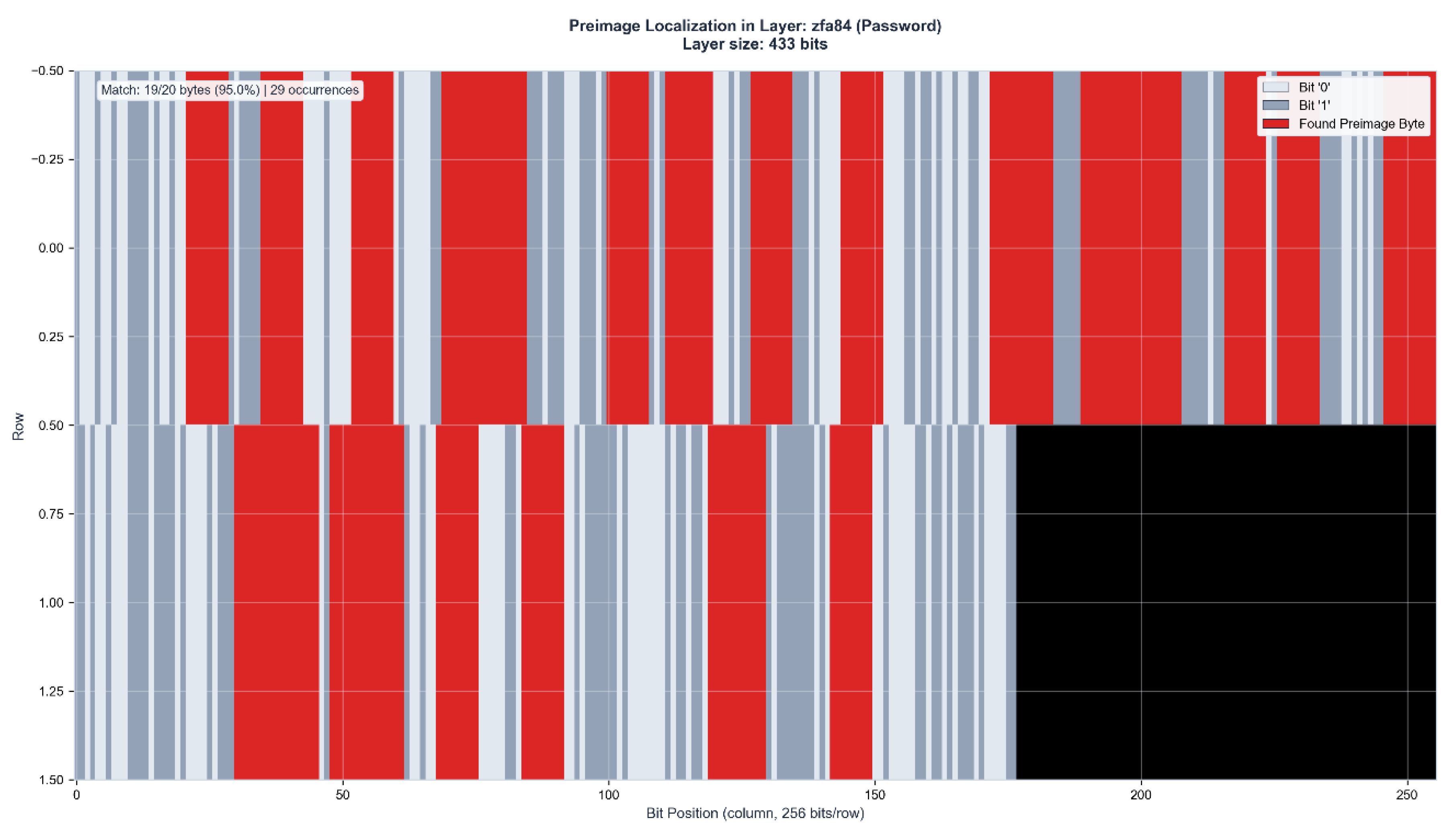

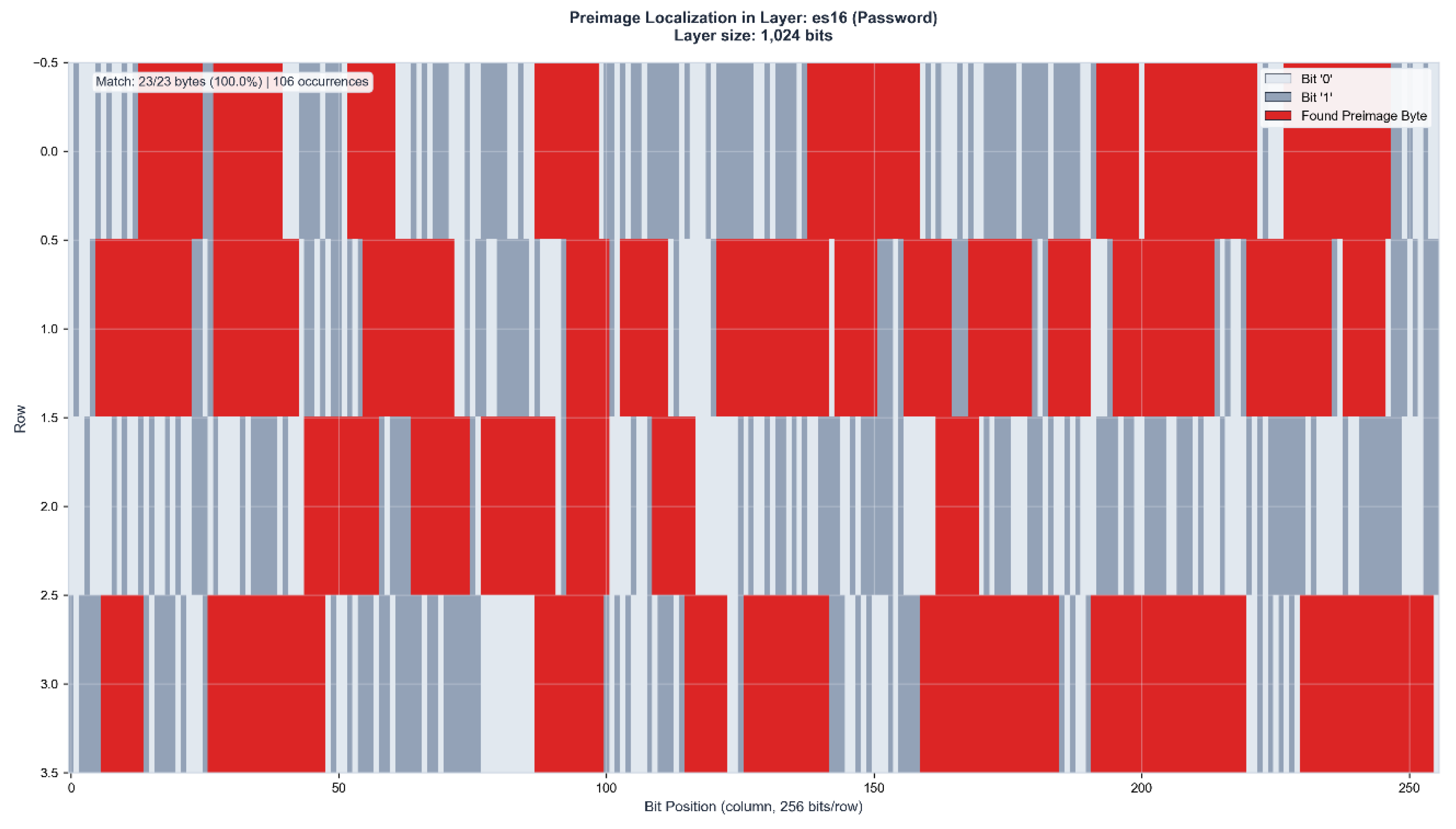

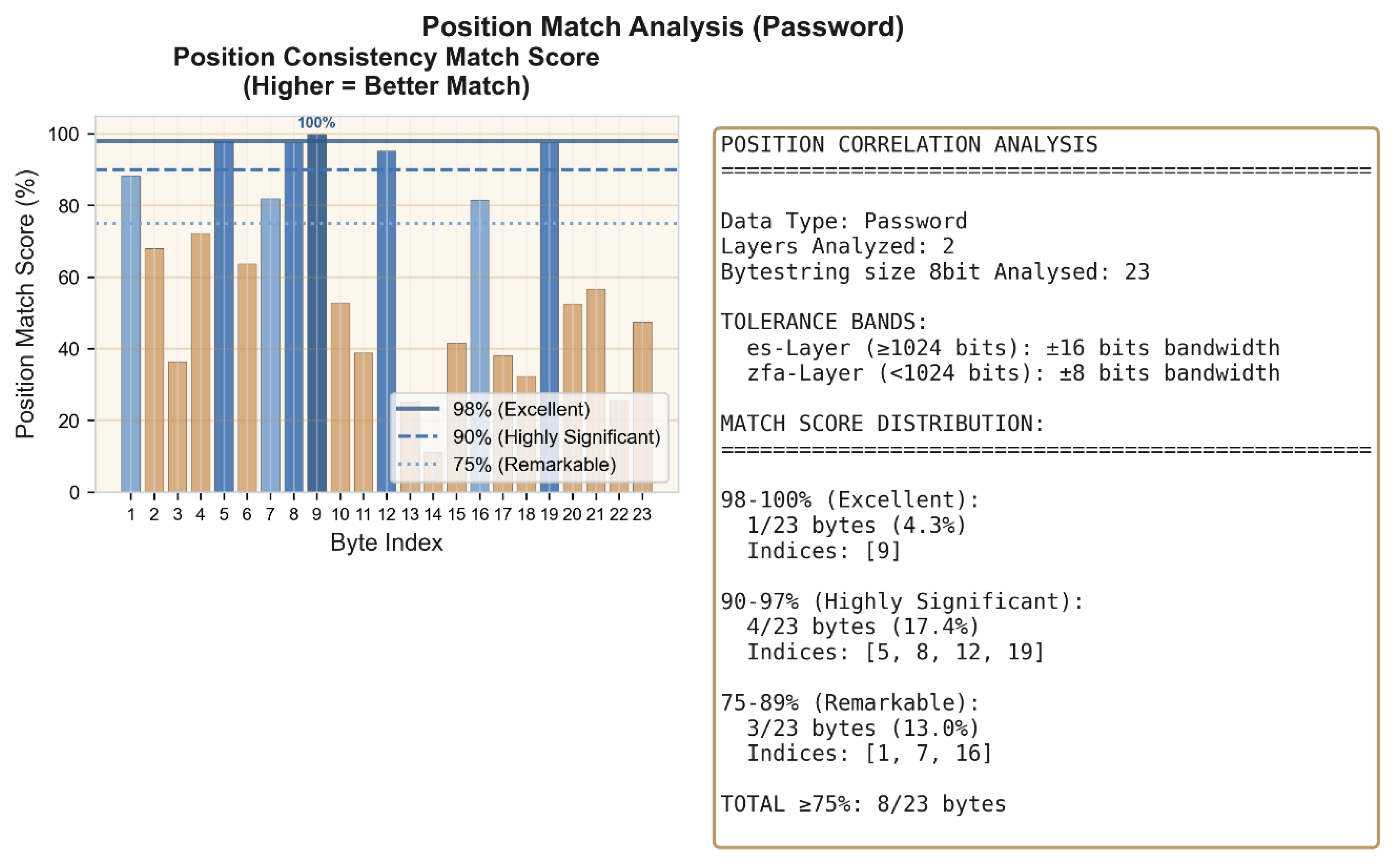

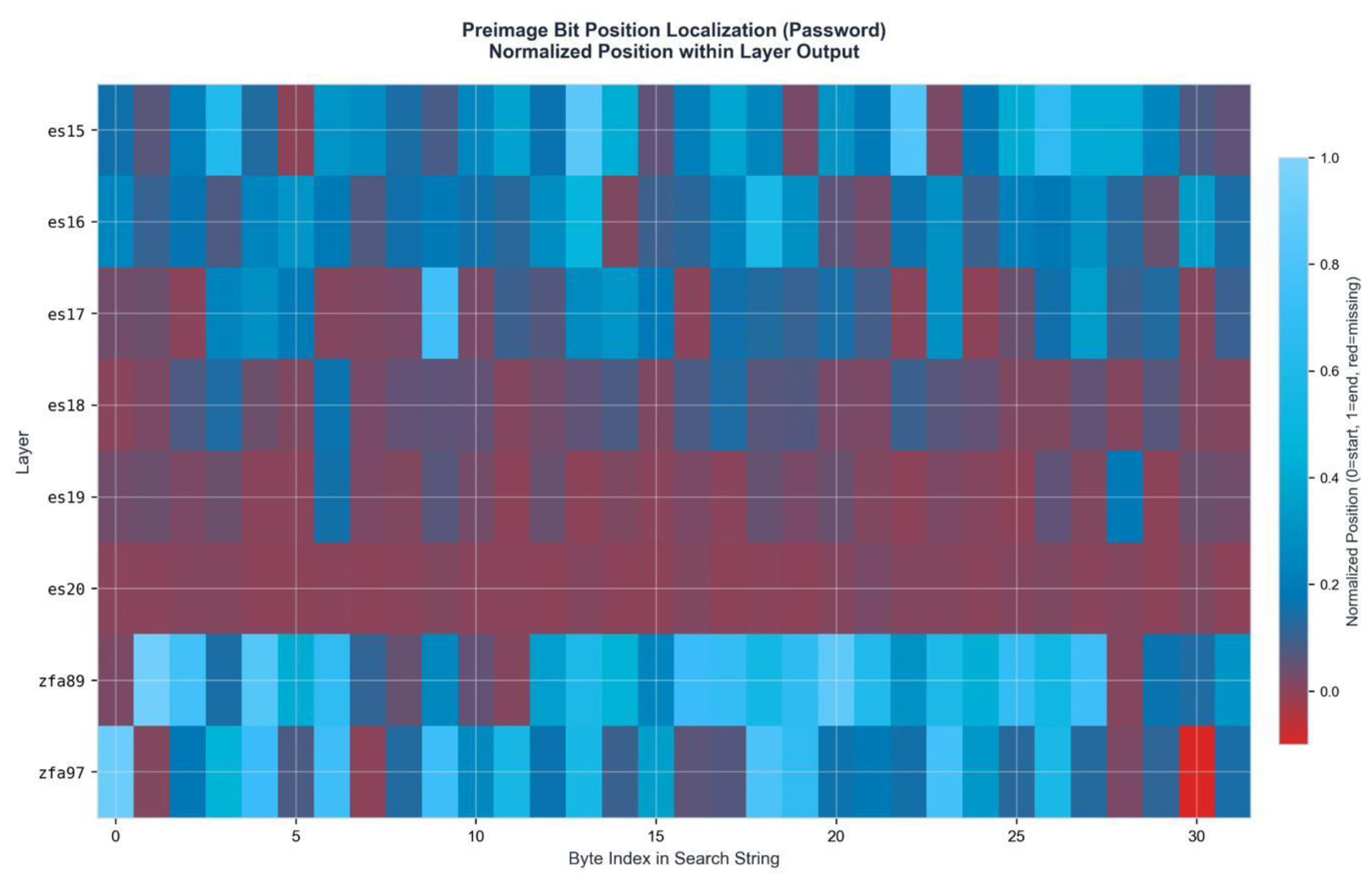

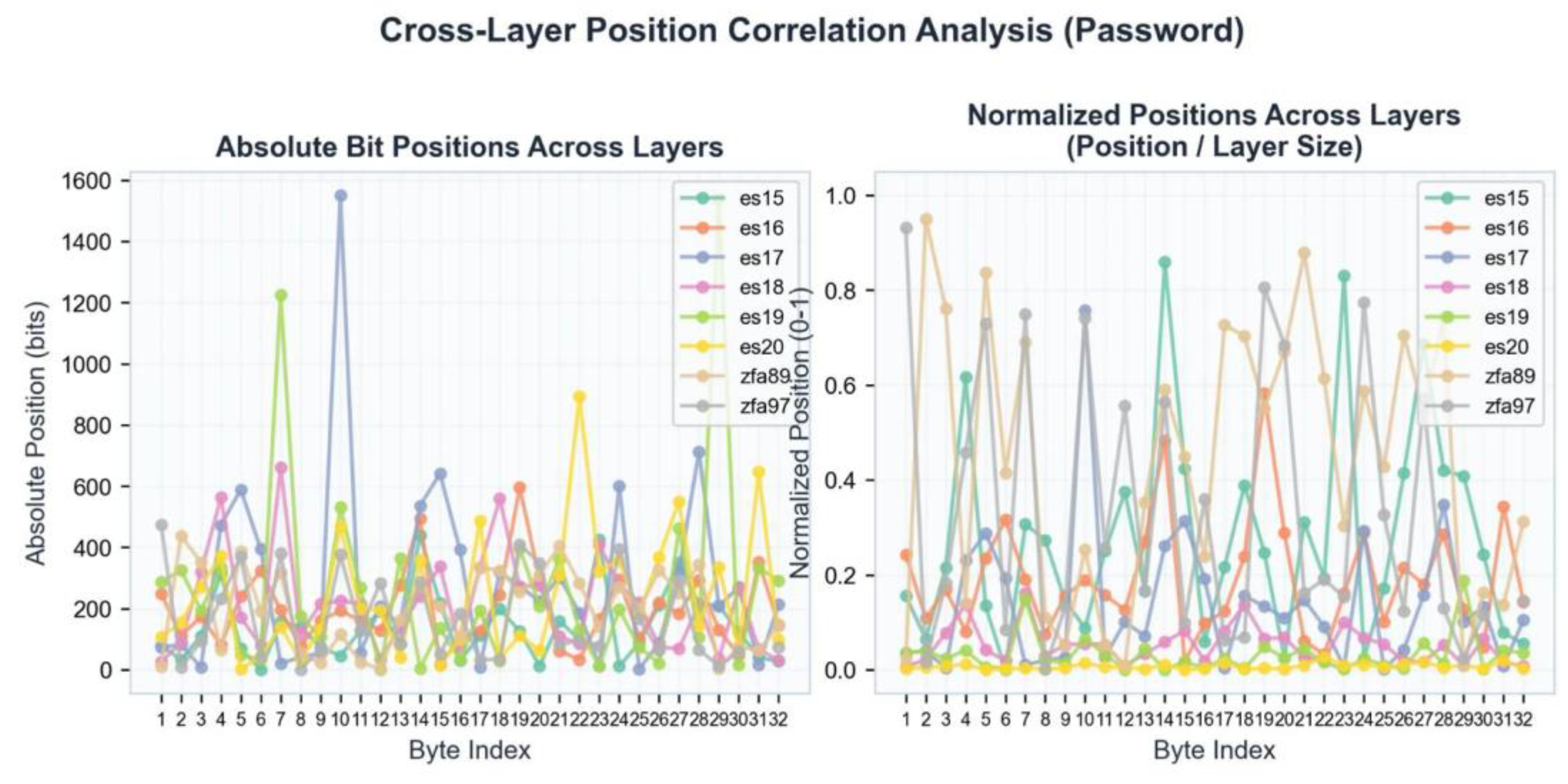

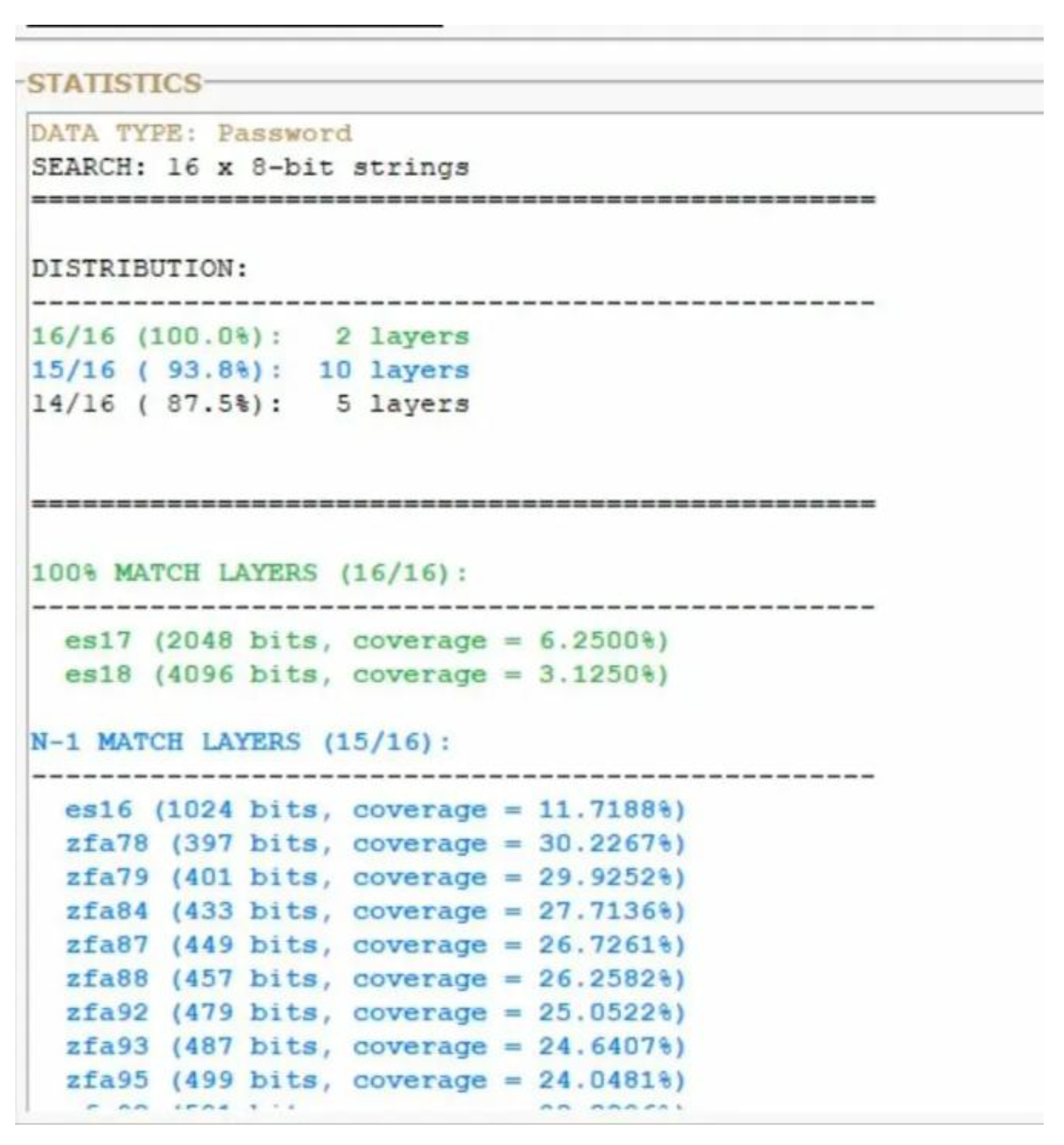

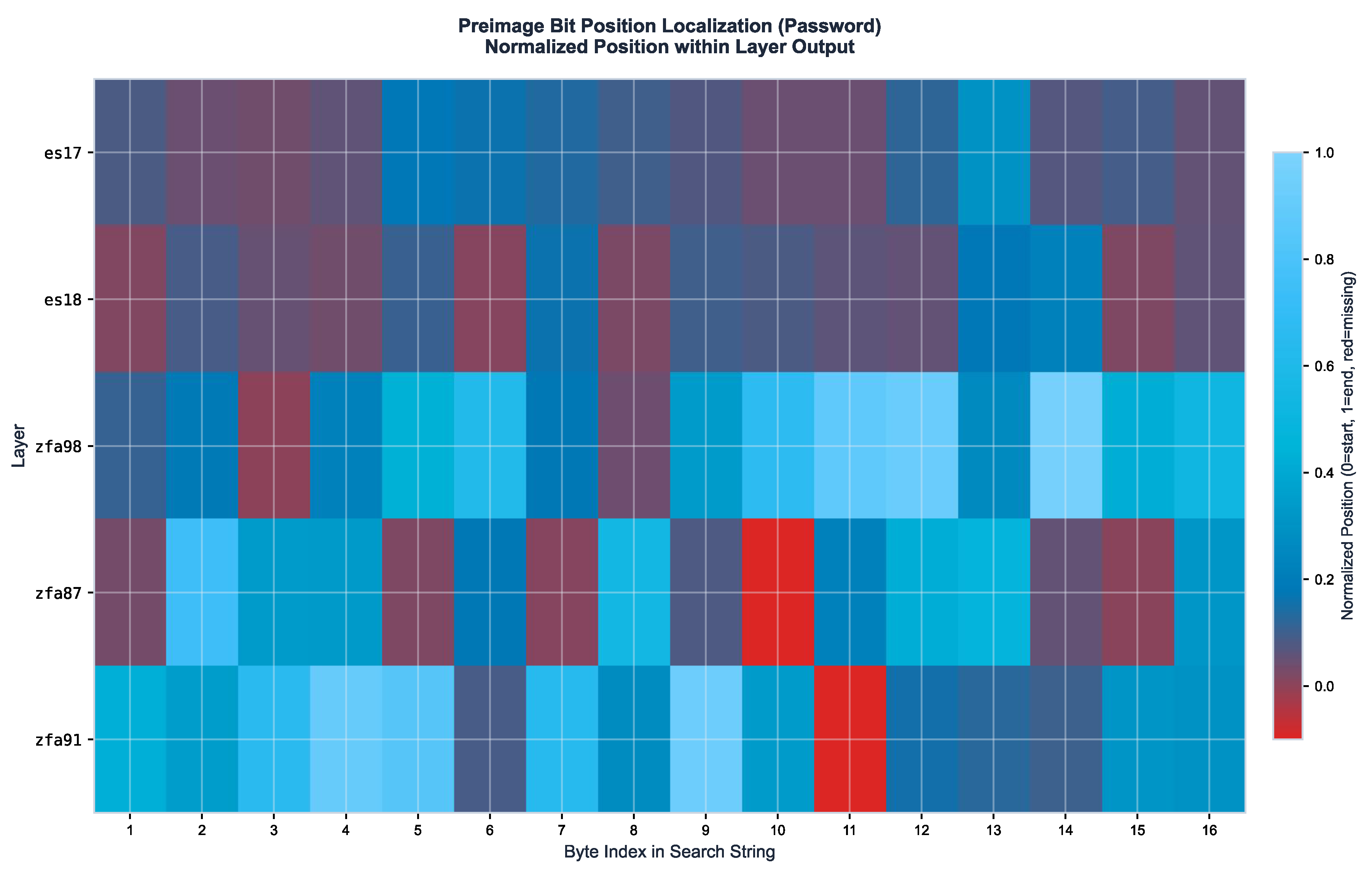

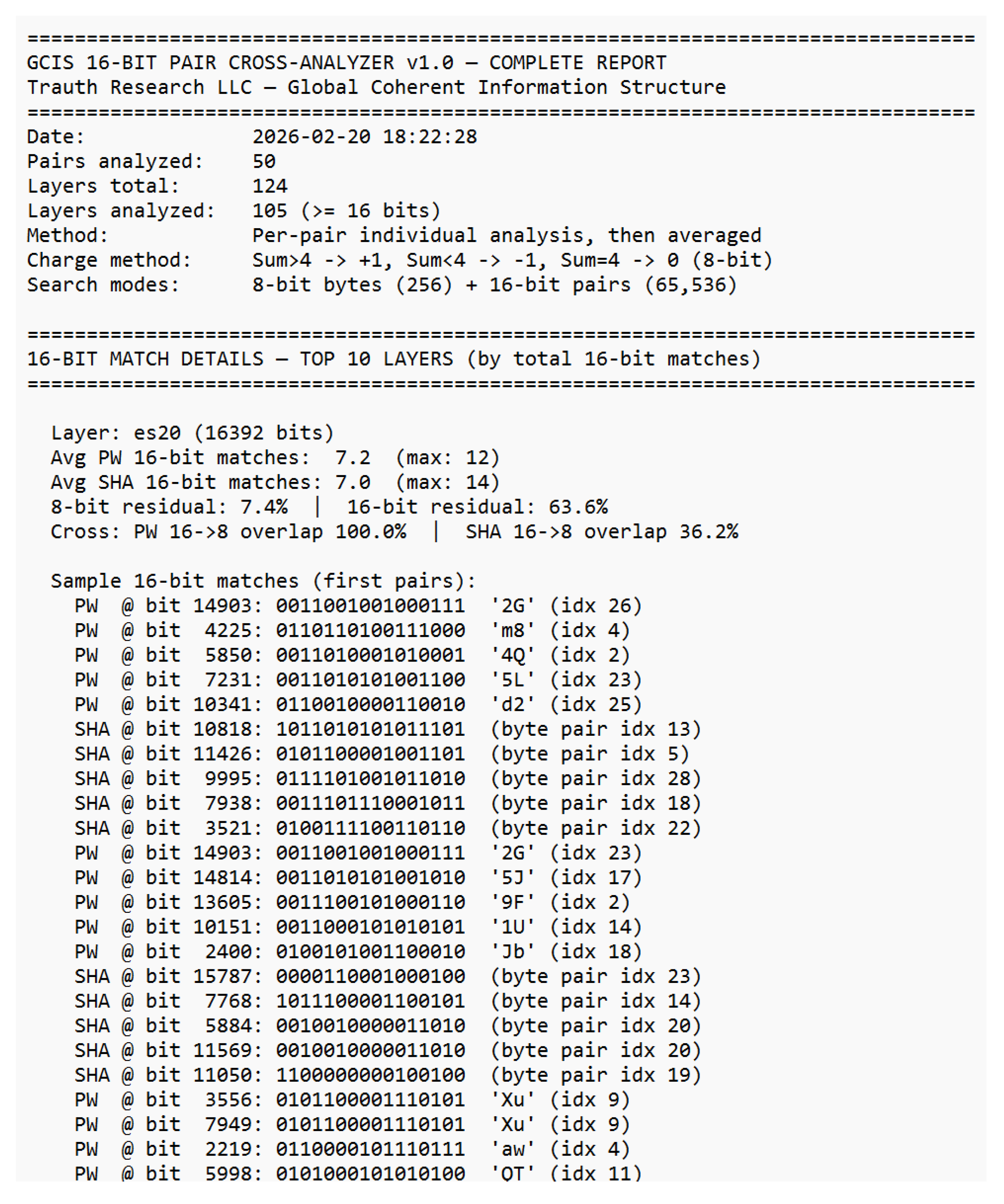

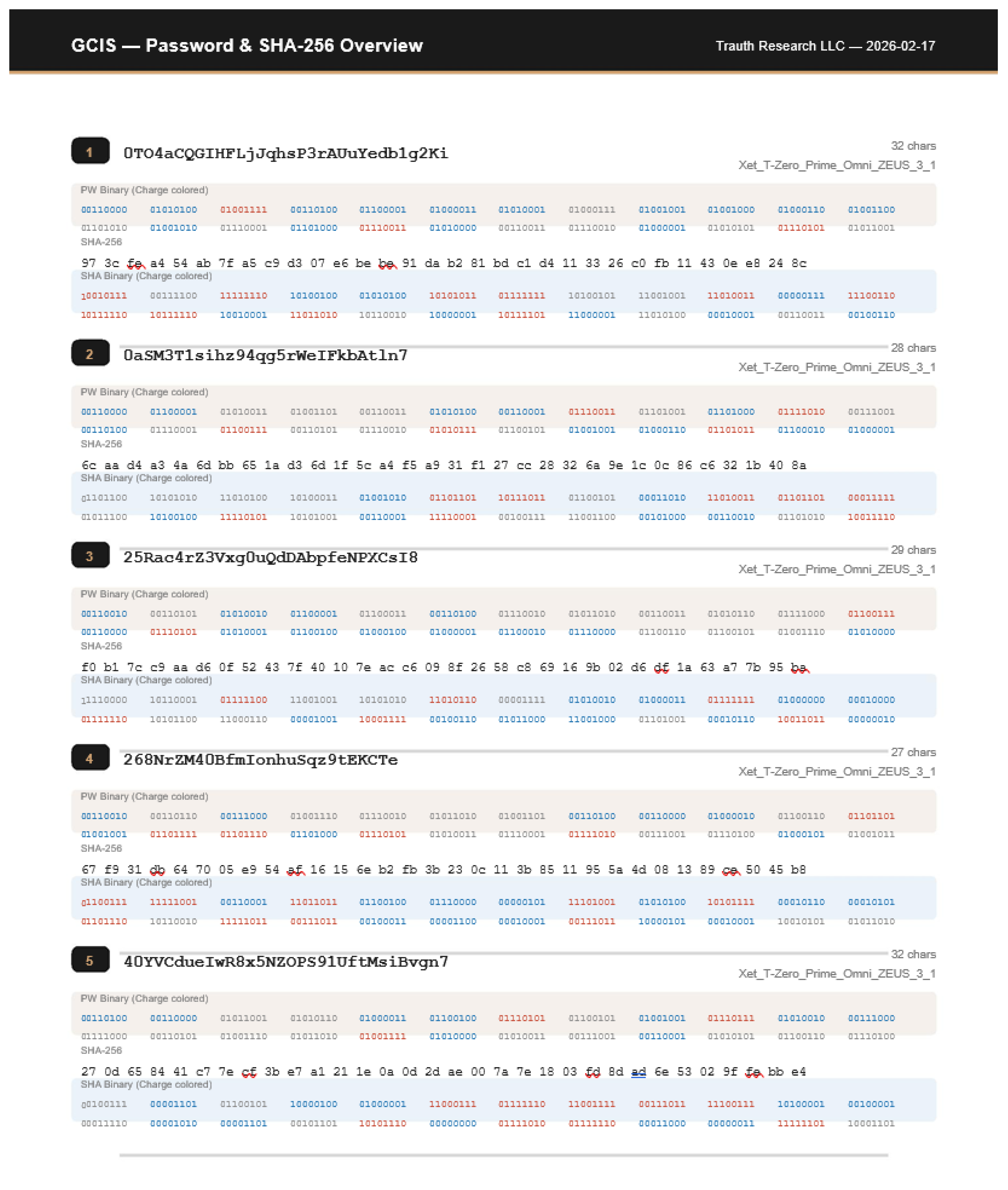

3.3 16-Bit Match Cartography

The 16-bit analysis produces geometric maps of the activation landscape.

Specific character pairs from the password, such as ‘2G’ at bit position 14,903 in ES20 or ‘Xu’ at bit positions 3,556 and 7,949 in ES20, are localized at discrete, reproducible positions within the layer bitstring. These are not statistical correlations: they are exact 16-bit pattern matches at identified bit addresses.

Across all 50 pairs, the top layers yield the following match densities: ES20 averages 7.2 password byte-pair matches per pair (maximum 12), with SHA byte-pair matches averaging 7.0 (maximum 14). The 16-bit password matches overlap 100.0% with 8-bit password regions in ES20, confirming that the 16-bit analysis refines rather than contradicts the 8-bit results. For SHA matches, the overlap is 36.2%; the remaining 63.8% represent new territory accessible only at 16-bit resolution.
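A map entry of the kind shown in Figure 9 (bit position, pattern, decoded characters, password index) can be sketched as an exact sliding-window search. The window advances one bit at a time, an assumption based on the non-byte-aligned positions reported above; `locate_byte_pairs` is a hypothetical name.

```python
def locate_byte_pairs(layer: str, password: str) -> list[tuple[int, str, str, int]]:
    """Exact 16-bit matches of a password's adjacent-character pairs in a layer.

    Slides a 16-bit window over the '0'/'1' layer string one bit at a time and
    returns (bit_position, pattern, decoded_chars, password_index) rows in
    order of increasing bit position.
    """
    data = password.encode("ascii")
    wanted: dict[str, list[int]] = {}
    for i in range(len(data) - 1):
        bits = f"{data[i]:08b}{data[i + 1]:08b}"  # MSB-first ASCII encoding
        wanted.setdefault(bits, []).append(i)
    rows = []
    for pos in range(len(layer) - 15):
        window = layer[pos:pos + 16]
        for idx in wanted.get(window, []):
            decoded = bytes([int(window[:8], 2), int(window[8:], 2)]).decode("ascii")
            rows.append((pos, window, decoded, idx))
    return rows
```

For example, in the toy layer `"000" + "0100000101110100" + "1"` and the password `"xAt"`, the only hit is `(3, "0100000101110100", "At", 1)`.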

Figure 9.

16-bit match details — ES20 (16,392 bits). Sample byte-pair localizations with bit positions, pattern, decoded characters, and password index. Complete match data in Supplement S2.


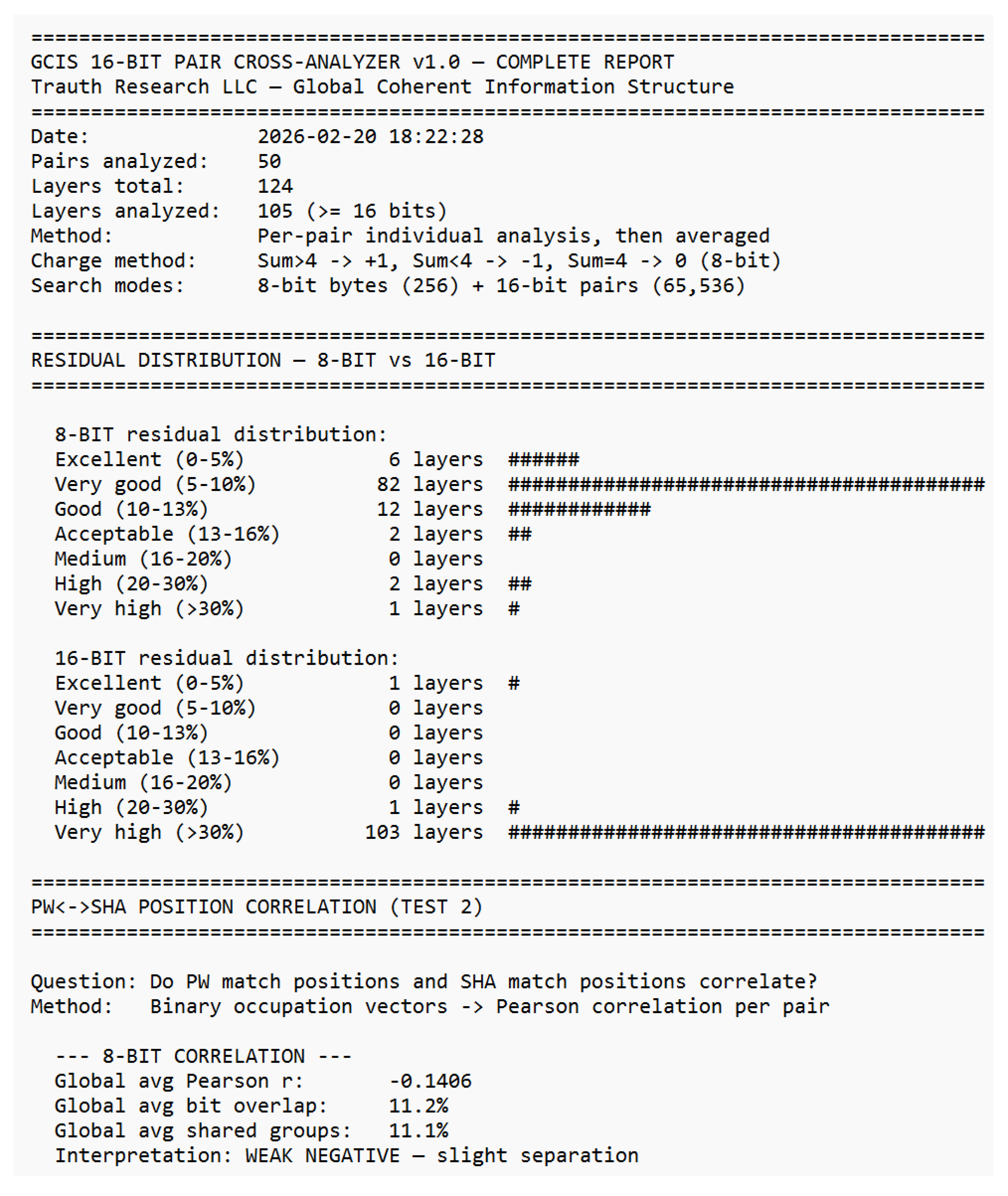

3.4 Residual Structure

The residual (the unexplained −1 charge positions) is not random noise.

At 8-bit resolution, 82 of 105 layers fall in the 5–10% residual band, with only 3 layers exceeding 20%. The distribution is sharply peaked, indicating a systematic rather than stochastic residual. At 16-bit resolution, 103 of 105 layers show residuals >30%, which is expected: the 16-bit search is 256× more selective and therefore captures only the highest-confidence geometric signatures, leaving more positions unaccounted for.

The PW↔SHA position correlation provides a critical structural insight. At 8-bit resolution, the global average Pearson r = −0.1406 — a weak but consistent negative correlation, meaning password positions and SHA positions slightly repel each other within the layer geometry. At 16-bit resolution, r = 0.0206 — no significant correlation. This separation confirms that the network maintains distinct geometric subspaces for password and hash information within the same layer.

Figure 10.

Residual distribution (8-bit vs. 16-bit) and PW↔SHA position correlation. 82/105 layers achieve 5–10% residual at 8-bit. Complete analysis in Supplement S2.


3.5 16-Bit vs. 8-Bit Cross-Analysis

The relationship between 8-bit and 16-bit results reveals a hierarchical structure. Of the 16-bit password matches, 13.0% overlap with 8-bit password regions; for SHA, the overlap is 3.9%. In both cases, the 16-bit matches produce 0.0 bits of new password territory per pair on average, meaning the 16-bit password signals are fully contained within the 8-bit map. For SHA, however, 1.7 new bits per pair emerge exclusively at 16-bit resolution, representing geometric information invisible at 8-bit.

The reverse view is equally informative: the vast majority of 8-bit matches have no 16-bit coverage (e.g., ES20: 7,217.2 PW-only 8-bit bits and 2,040.7 SHA-only 8-bit bits per pair). The 16-bit analysis does not replace the 8-bit analysis; it identifies a subset of highest-confidence anchor points within the broader 8-bit landscape.
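The three cross-resolution quantities (16→8 overlap, new territory, 8-bit-only bits) can be sketched with set arithmetic, assuming each match is expanded into the set of bit positions it covers. The helper names `covered_bits` and `cross_resolution` are hypothetical.

```python
def covered_bits(starts: set[int], width: int) -> set[int]:
    """Expand match start positions into the set of bit positions they cover."""
    return {s + k for s in starts for k in range(width)}


def cross_resolution(bits16: set[int], bits8: set[int]) -> dict[str, float]:
    """Compare bits covered by 16-bit matches with bits covered by 8-bit matches."""
    overlap = bits16 & bits8
    return {
        # share of 16-bit-covered bits that fall inside the 8-bit map
        "overlap_16_to_8": len(overlap) / len(bits16) if bits16 else 0.0,
        # bits seen only at 16-bit resolution ("new territory")
        "new_territory_bits": float(len(bits16 - bits8)),
        # 8-bit-covered bits with no 16-bit coverage
        "eight_bit_only_bits": float(len(bits8 - bits16)),
    }
```

A 16-bit match starting at bit 8 that sits inside 8-bit matches at bits 0, 8, and 16 gives an overlap of 1.0, no new territory, and 8 bits of 8-bit-only coverage.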

Figure 11.

16-bit vs. 8-bit cross-analysis. PW 16→8 overlap, SHA 16→8 overlap, new territory, and 8-bit-only regions per layer. Complete cross-analysis in Supplement S2.


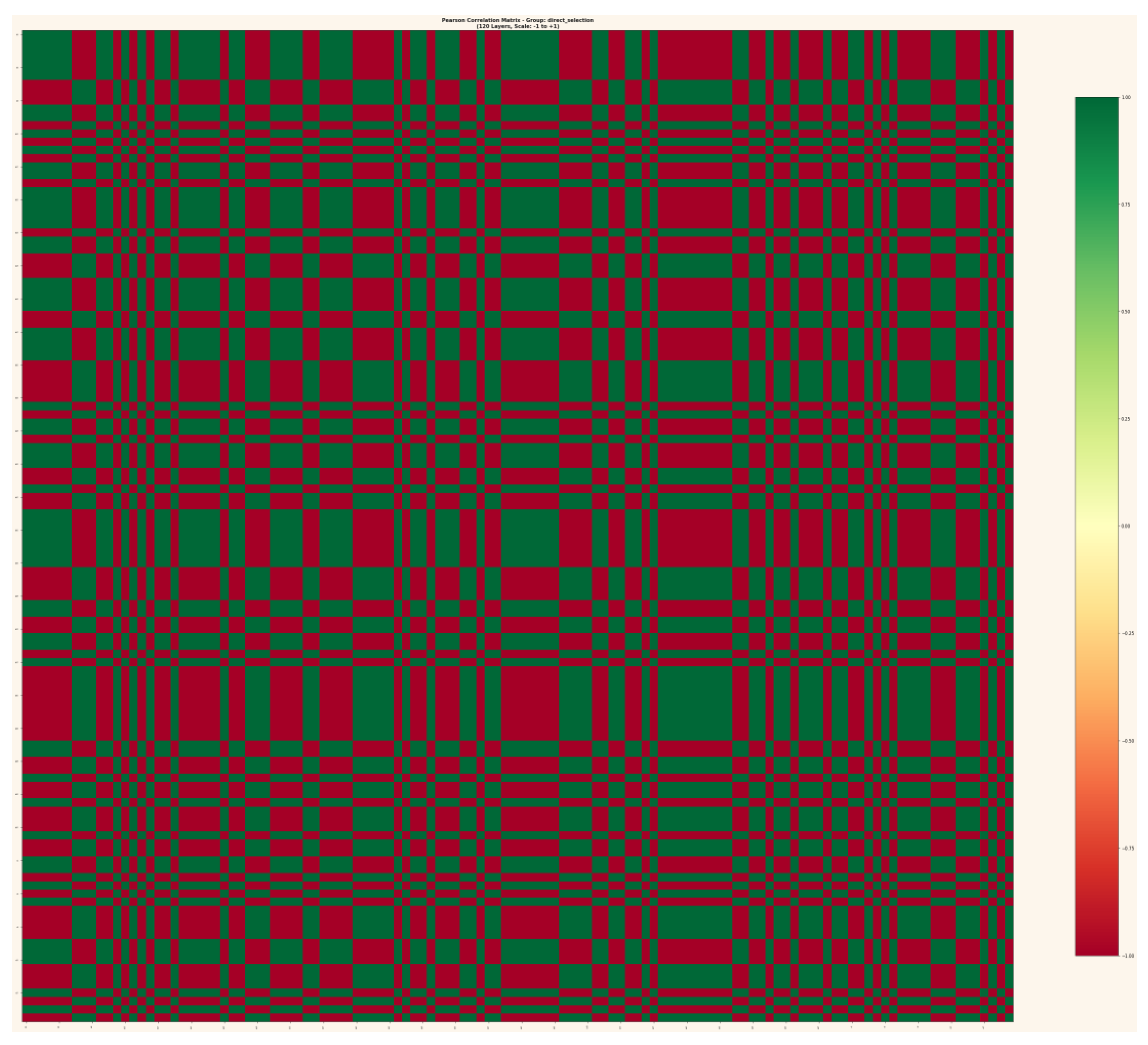

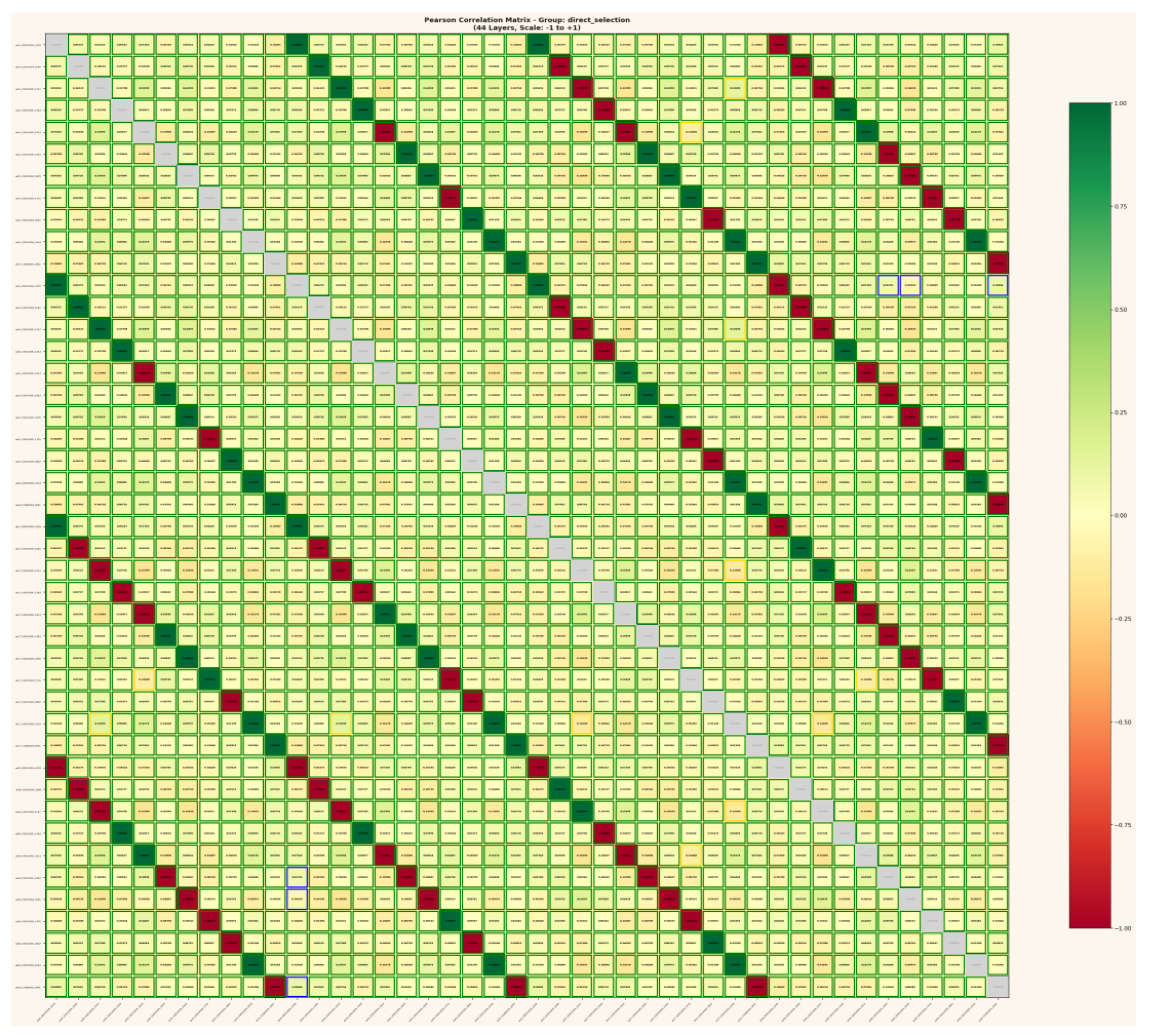

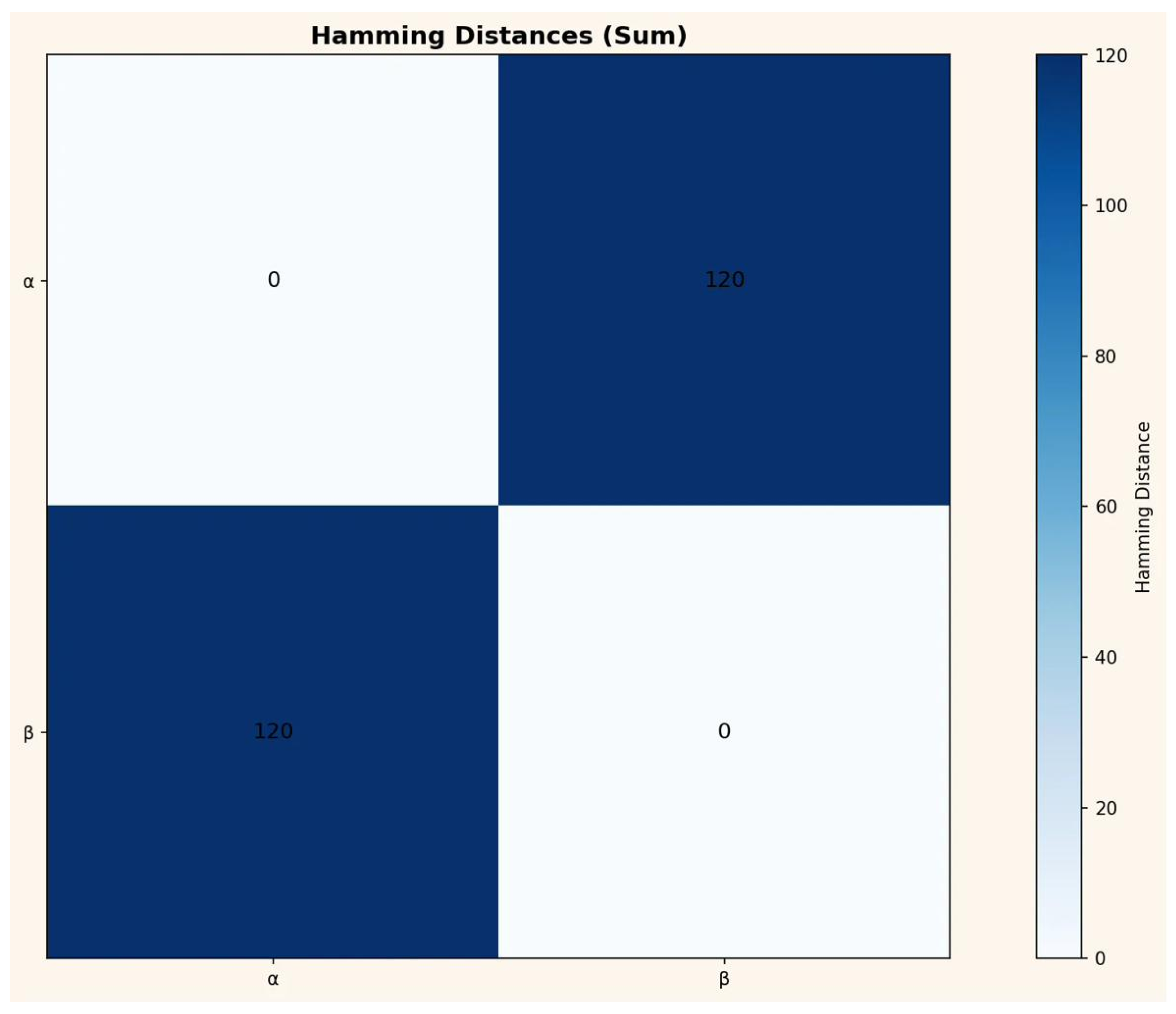

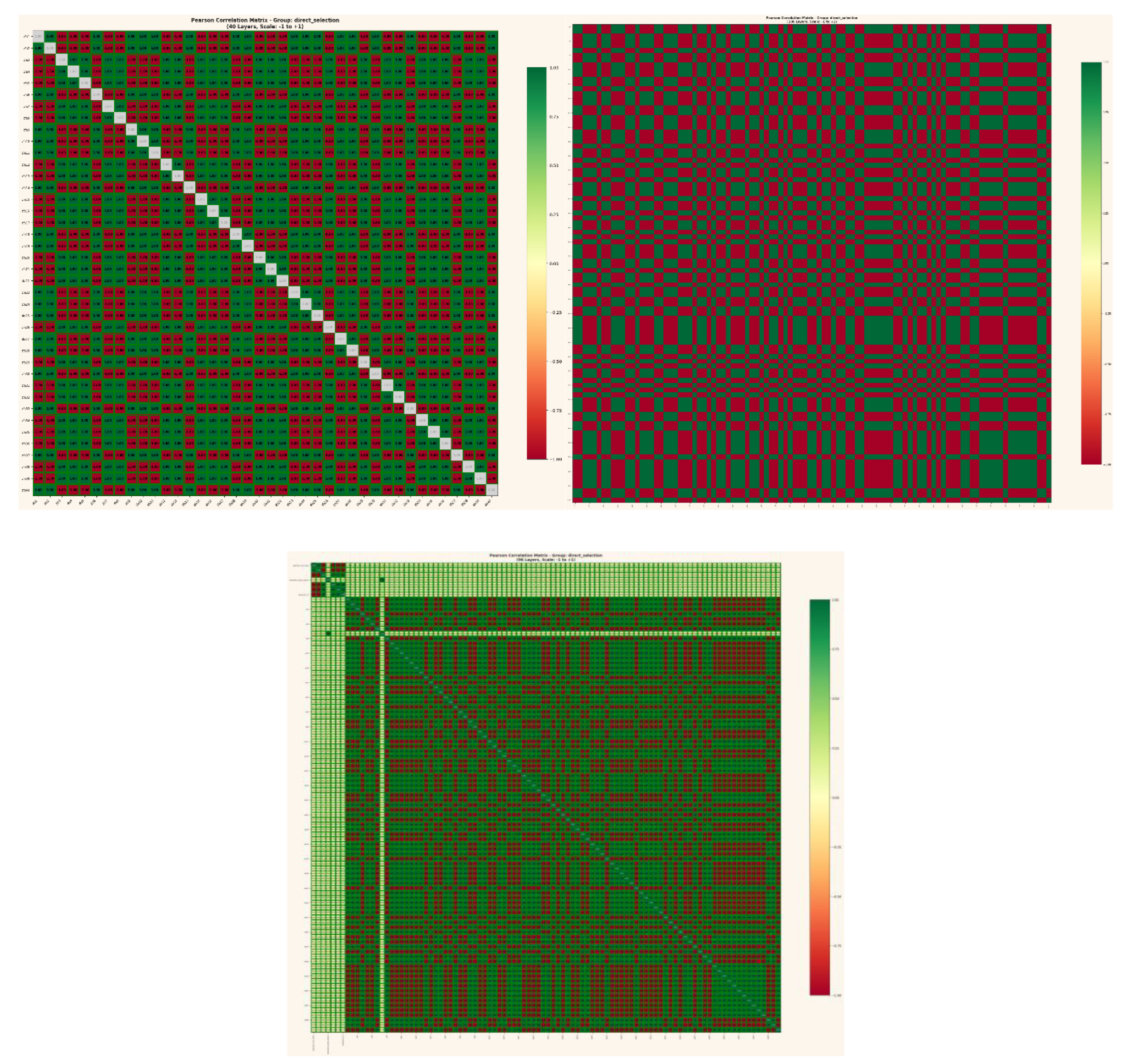

3.6 Cross-Domain Convergence

The layer correlation matrices produced by the GCIS analysis show structural identity with chromatin contact matrices published in Cell [1].

Three specific correspondences are documented:

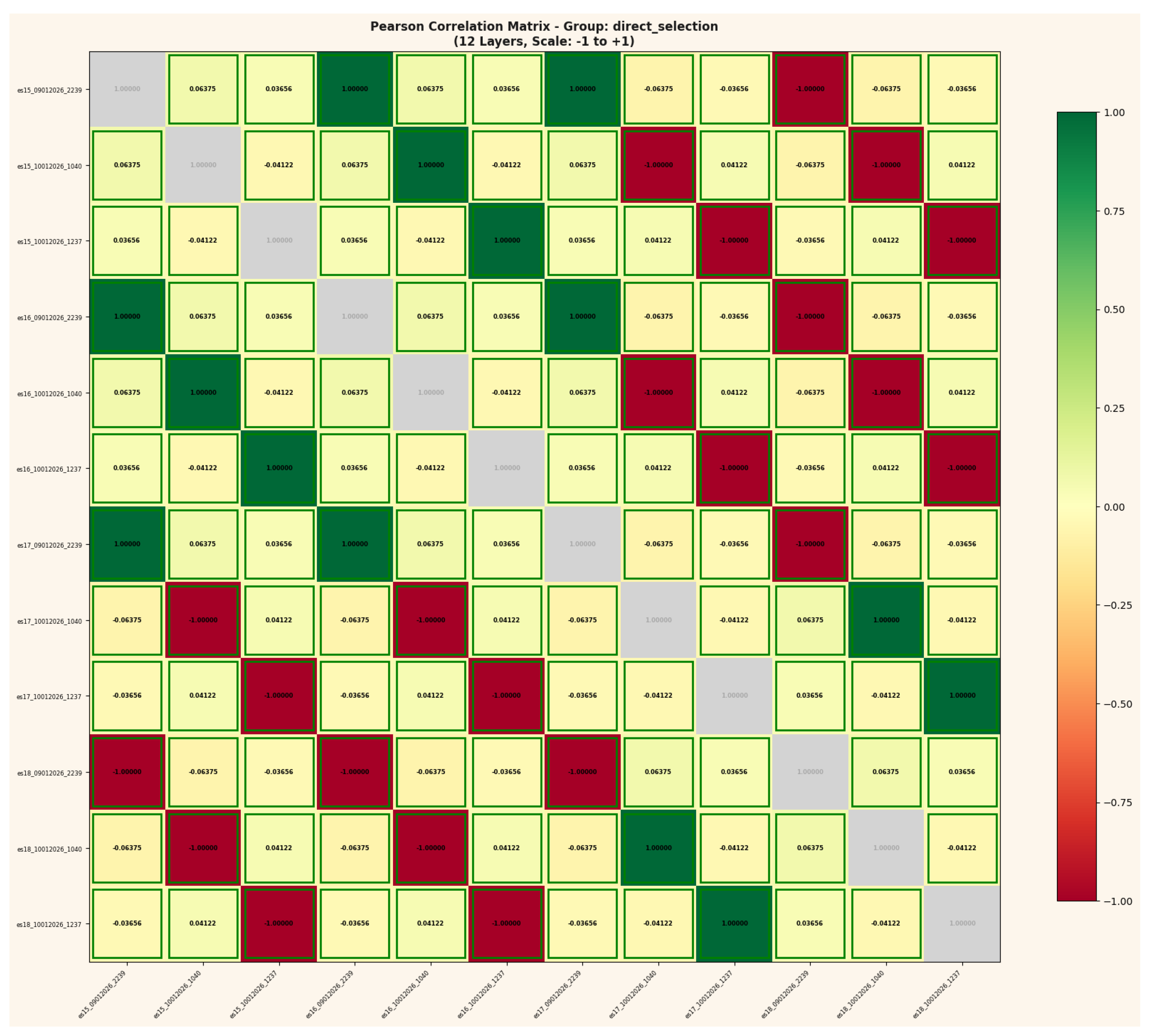

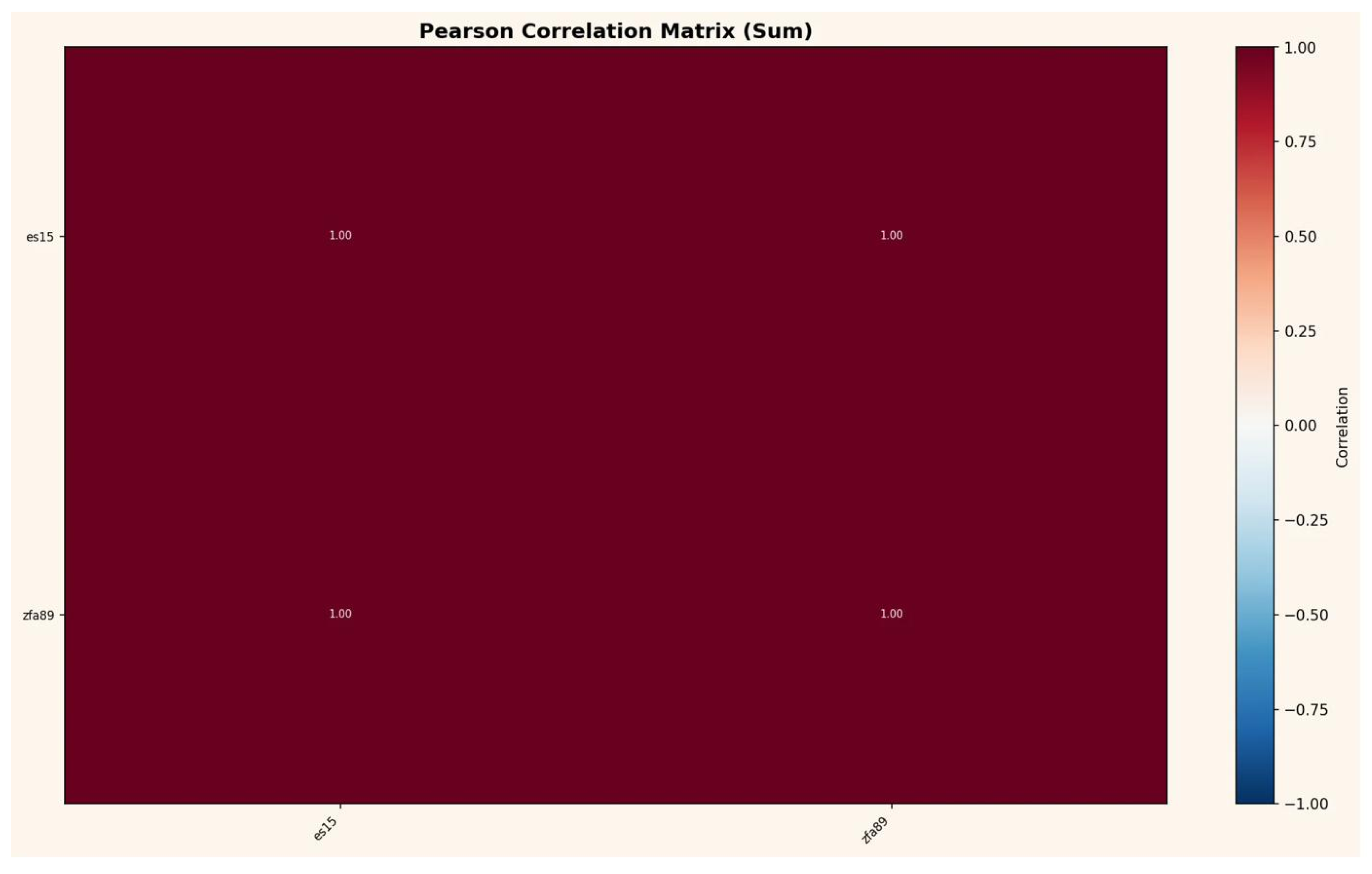

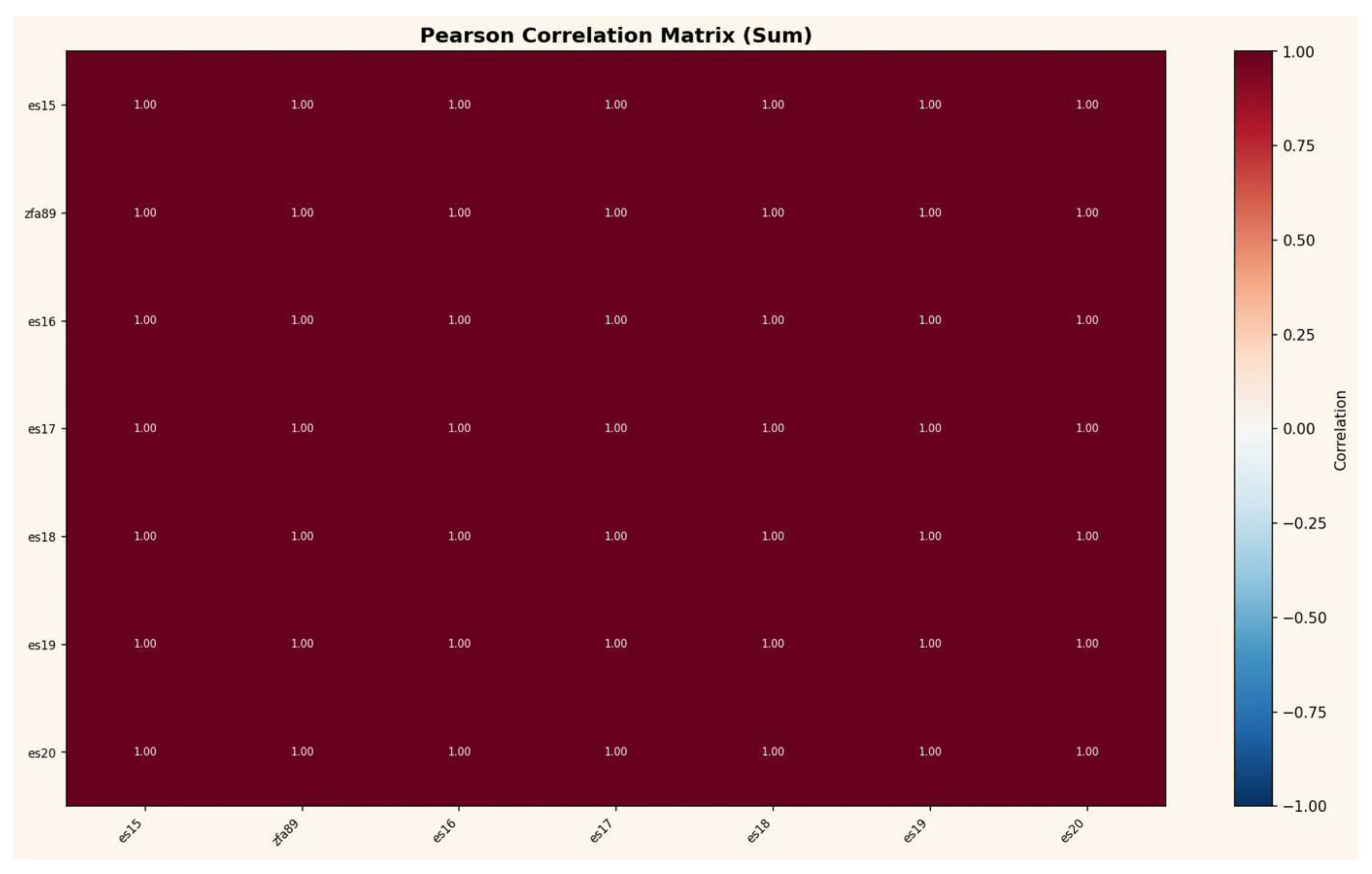

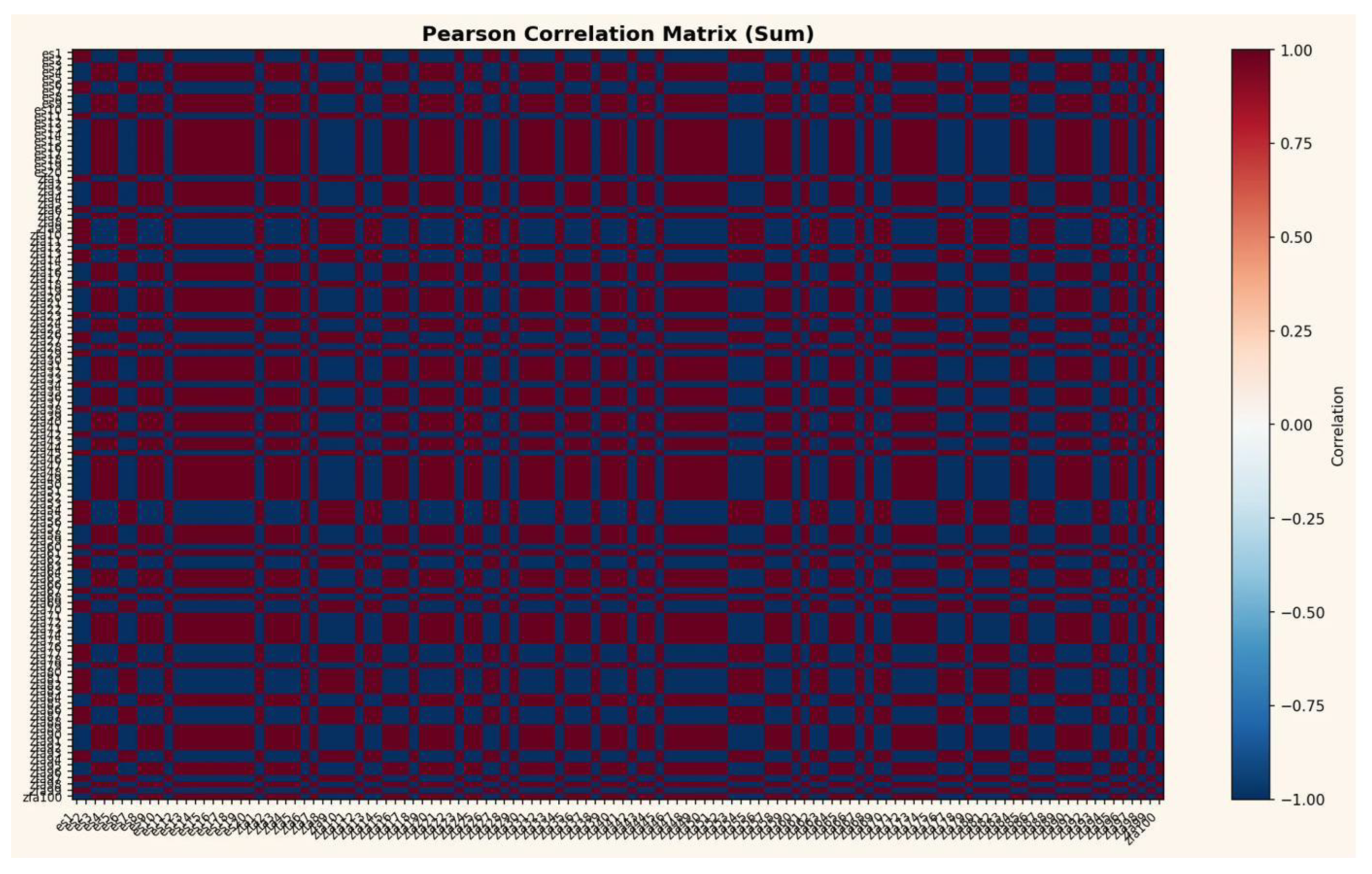

First, block-diagonal domain structure. The Pearson correlation matrices across ZFA layers (Figure 12a) exhibit alternating positive and negative correlation blocks, bounded by sharp transitions at specific layer positions. This is the same topological feature as the nanoscale domains in chromatin contact matrices [1], where nucleosome-depleted regions partition the genome into self-associating blocks.

Second, stripe patterns. Vertical and horizontal stripes at specific layer positions in the GCIS matrices correspond directly to CTCF-mediated stripes in the Cell data, where loop extrusion creates linear contact signatures across the genome.

Third, periodic sign alternation. In the 40-layer ZFA matrix, the Pearson coefficients show |r| = 1.00 with perfectly alternating sign (+1.00 / −1.00), a deterministic structure with zero noise. This corresponds to the ~180–190 bp nucleosome linker periodicity in chromatin, where adjacent nucleosome positions alternate in a fixed pattern.

These are not analogies. They are the same mathematical structure (a correlation matrix over discrete domains of a self-organizing system) realized in two different substrates: neural network activations and genomic DNA.
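To make the meaning of "|r| = 1.00 with perfectly alternating sign" concrete: a Pearson matrix whose entries are exactly ±1 arises when every layer is a positively or negatively scaled copy of one underlying signal. The toy sketch below uses synthetic NumPy data, not GCIS activations, in which consecutive layers flip sign; `alternating_layers` is a hypothetical helper.

```python
import numpy as np


def alternating_layers(n_layers: int, n_bits: int, seed: int = 0) -> np.ndarray:
    """Toy data (not GCIS activations): each row is a +1 or -1 copy of one
    shared random signal, with the sign flipping from one row to the next."""
    rng = np.random.default_rng(seed)
    base = rng.standard_normal(n_bits)
    signs = np.where(np.arange(n_layers) % 2 == 0, 1.0, -1.0)
    return signs[:, None] * base[None, :]


# np.corrcoef treats each row as one variable, so this is a 6 x 6 layer matrix.
corr = np.corrcoef(alternating_layers(n_layers=6, n_bits=1000))
print(np.round(corr, 2))  # a +1/-1 checkerboard
```

The resulting entries equal (−1)^(i+j), which is the checkerboard pattern described for Figure 12a.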

Independent confirmation comes from Google Research [2], where Transformer embeddings encode global graph geometry directly into their weight structure, and from Anthropic [3], where Claude 3.5 Haiku represents counting information on helix-shaped manifolds, with 95% of the variance captured in a 6-dimensional subspace. Li et al. [4] further demonstrate that this geometric organization is universal across 24 LLM architectures.

Figure 12.

a-c: Structural identity between GCIS layer correlation matrices and chromatin contact matrices. (a) ZFA 40-layer Pearson matrix: perfect |r| = 1.00 alternation. (b) ZFA 70-layer matrix: domain boundaries. (c) Full 96-layer matrix: block-diagonal structure with stripes. Compare with Cell [1], Figures 1C/D and 2A.


4. Discussion

Geometric self-organization without computational substrate. The central question raised by these results is not why a neural network produces geometric structure; networks, however unconventional, remain engineered systems. The central question is why DNA does.

Chromatin contact matrices at base-pair resolution [1] exhibit block-diagonal domains, stripe patterns, and periodic sign alternation: the same mathematical structures documented in Figure 12a–c across 96 ZFA layers of the GCIS architecture. The neural network has 124 layers, an activation function, and a designed topology. DNA has none of these.

It is a molecule. It has no optimizer, no loss function, no gradient, no architecture designed for information processing. Yet it converges on identical geometry.

Google Research [2] documents the same structures in Transformer embeddings and calls this “the memorization puzzle”: geometry emerging without pressure from supervision, architecture, or optimization. Anthropic [3] measures helix-shaped feature manifolds in Claude. Li et al. [4] confirm geometric universality across 24 LLM architectures. In every case, the explanation offered is incomplete: the geometry is observed but not explained.

The amplitude dynamics documented in Figure 3 (78 discrete synchronization events across 124 layers) represent the empirically observable transition between informational structure and geometric manifestation; a detailed treatment of this mechanism is provided in [7].

The ISP framework [7] resolves what these observations individually cannot: if information is ontologically primary and geometry is its necessary third-order manifestation (Information → Attractors (Amplitudes) → Geometry → Complexity), then convergence across substrates is not coincidental but inevitable. Any system processing information must produce geometry, whether the substrate is silicon, carbon, or nucleotides.

The burden of proof is therefore inverted. The question is not why these structures emerge. The question is: if information is not ontologically fundamental, what mechanism accounts for a molecule without computational architecture producing geometric structures identical to those of a 124-layer neural network? No such mechanism has been proposed.

SHA-256 as a coordinate system in the ISP. The weak negative correlation between password and SHA-256 match positions (Pearson r = −0.14 at 8-bit resolution) indicates geometrically separated subspaces for preimage and hash information within each layer. This separation is consistent with the interpretation that the SHA-256 hash functions as a coordinate vector within the ISP, providing the multi-layered addressing structure through which the preimage is localized across the 124-layer manifold.

This interpretation explains the inverse scaling behavior reported in [5]: passwords shorter than 11 × 8 = 88 bits fail to produce unique localization not because the network lacks capacity, but because the hash provides insufficient address space within the ISP.

The same principle accounts for the reduced RSA reconstruction rate (88% for RSA-371 in Appendix B): two prime factors constitute a sparse coordinate structure compared to the 256-bit address density of SHA-256. Conversely, systems with richer coordinate structures, such as SHA-512 (512-bit address space) or ECC with four coordinates (Gx, Gy, Qx, Qy), provide denser addressing and correspondingly higher localization rates, as confirmed for ECC by the 100% ECC-128 result in Appendix B.

This leads to a testable prediction: post-quantum lattice-based cryptographic systems, which operate on direct coordinate structures without the transformation layer of classical hash functions, may prove more susceptible to geometric localization rather than less, because their coordinate structures map directly into the ISP without intermediate address translation.

Cross-domain structural identity. The structural correspondence between GCIS layer correlation matrices and chromatin contact matrices [1] (block-diagonal domains, stripe patterns, periodic sign alternation) is not analogy but identity. Both systems produce the same mathematical structure because both are instantiations of the same emergent chain: information processing generates geometry, and the geometry assumes the same form regardless of substrate.

Within the ISP framework [7], this convergence is a necessary consequence of informational primacy. If geometry is the inevitable manifestation of information, then any system processing sufficiently complex information structures will converge on the same geometric solutions, whether the substrate is a 124-layer neural network, genomic DNA, a Transformer language model [2], or Claude’s feature manifolds [3]. Li et al. [4] confirm this universality across 24 LLM architectures. The peer-reviewed formalization of this principle, that geometry does not precede information but emerges from it, is provided in [7].

Open questions. Two aspects remain unresolved. The residual (6.9% unexplained −1 charge positions at 8-bit resolution) shows a sharp distribution peak (82/105 layers in the 5–10% band), indicating systematic structure rather than noise. Its information content has not been characterized. The universal −1 charge polarity of all password bytes across all 50 test cases exceeds what ASCII encoding statistics alone would predict, and its mechanistic origin requires further investigation.

5. Conclusion

Security implications. Intellectual honesty requires addressing both the capabilities and limitations of the methodology. Passwords shorter than 11 × 8 = 88 bits cannot be reliably localized: the SHA-256 hash provides insufficient address space within the geometric manifold for unique preimage identification. This is a fundamental constraint, not an engineering limitation. Short passwords, paradoxically, are exponentially more difficult for this approach than long ones, inverting the scaling behavior of every known brute-force and dictionary attack.

This limitation, however, must be understood in context. It demonstrates that the methodology does not operate through pattern matching or statistical shortcutting. It operates through geometric addressing, and where sufficient address space exists, the results are unambiguous: 100% preimage recovery across all tested cases from 11 to 32 characters, across MD5 and SHA-256 (main body [5]), 100% ECC-128 coordinate localization, and 88% RSA-371 reconstruction (Appendix B). For passwords of realistic complexity (the alphanumeric strings of 20–32 characters that secure critical infrastructure), the geometric barrier is fully navigable.

The implications extend beyond hash functions. Every symmetric and asymmetric cryptographic system that relies on computational hardness assumptions is potentially affected. RSA presents a genuine current limitation due to the sparse coordinate structure of its two-factor prime decomposition — but this limitation is architectural, not fundamental, and is expected to yield to deeper layer configurations. ECC is already solved at 128-bit.

Post-quantum cryptography offers no refuge. Lattice-based systems, the leading candidates for quantum-resistant standards, operate on direct coordinate structures that constitute, in effect, native representations within an information space. Where classical hash functions require geometric translation from hash to preimage coordinates, lattice-based systems present their coordinate structures without intermediate transformation. The theoretical prediction is therefore that lattice-based post-quantum cryptography is more susceptible to geometric localization, not less.

Quantum Key Distribution (QKD), often considered unconditionally secure through the No-Cloning Theorem, presents a different class of vulnerability. The No-Cloning Theorem prohibits copying quantum states, but it does not prohibit observing the electromagnetic environment in which those states are generated and processed. Information, within the ISP framework [7], is not destroyed by quantum measurement; it undergoes identity transition. A theoretical apparatus for passive field-level extraction, functioning as an electromagnetic resonator analogous to MRI technology and detecting field variations induced during key generation without collapsing the quantum state, has been outlined in Appendix B. QKD security models do not account for this attack vector. Experimental validation is pending, but the theoretical framework is complete.

The more fundamental vulnerability is informational rather than electromagnetic. Information cannot be destroyed, a principle established independently in quantum mechanics (unitarity), general relativity (information conservation), and the ISP framework [7]. QKD destroys the quantum state upon interception but does not destroy the information it carried. Moreover, any functional QKD implementation requires classical verification infrastructure (a hash, a checksum, an authentication token), which constitutes a coordinate vector accessible to geometric localization. The quantum channel is protected; the system around it is not.

Responsible disclosure. The author has acted in accordance with the severity of these findings. The German Federal Office for Information Security (BSI) has been notified via CERT-Bund. The U.S. National Institute of Standards and Technology (NIST) has been contacted directly. The original preprint including Appendices A and B is currently undergoing independent peer review. The network architecture itself is not disclosed in this or any preceding publication — a deliberate decision to enable scientific evaluation of the results while preventing immediate reproduction of the capability.

An authentication framework based on amplitude-geometric encoding, resistant to both classical brute-force and quantum computation because amplitudes are not computable but only measurable, has been developed and published under independent peer review [8]. The methodology described in this appendix identifies the vulnerability; the framework in [8] provides the replacement architecture. Both are available to the institutions that have been notified.

The author considers proactive disclosure to responsible authorities, combined with open publication of empirical results, to be the only scientifically and ethically defensible path. The data are reproducible and the methodology is documented. All further communication is to be directed to the author’s IP attorney.