1. Introduction

Advances in computer science and software engineering, together with the increasing use of artificial intelligence and data mining technologies, have led to the development of a wide range of applications that are critical to operations in business, health care, and education. The development of software is a complex and expensive process, prone to error and subsequent failure to meet user requirements [1]. Organisations, therefore, invest significant resources into ensuring that software products are tested against set criteria, ensuring they are of the best quality before being released to their clients and users [2]. Traditionally, testing has been a manual process, involving humans executing applications and comparing their behaviour against certain benchmarks. However, advances in technology and the constant desire to improve quality have introduced and increased the use of automated testing, which uses computer algorithms to detect bugs in software applications [3]. Automated testing can generally be categorised into two types: one where manual test cases are written and then executed by automated testing tools, and one where the testing tools automatically generate the test cases. In this study, we focus on the first type, where test cases are manually created. The phrase ‘automated testing’ is used throughout the rest of the paper and refers to automated testing in which test cases are created manually and executed automatically.

A key aspect of software development processes is that they all have distinct testing phases. For example, the Waterfall development process has a distinct test phase after development has taken place [4]. Although there are processes involving iterative and concurrent testing, many development processes assume users can specify a finished set of requirements in advance, ignoring the fact that requirements evolve as the project progresses and change depending on the client’s circumstances. Manual testing and the correction of errors, as well as the integration of changes, are feasible in small projects as the code size is easy to manage. However, as client requirements change or more requirements are added, projects grow in complexity, yielding more lines of code and a higher probability of software faults (commonly named bugs) occurring. This results in the need for an increased frequency of manual software testing. Consequently, there has been a shift to more flexible methodologies that combine testing with the completion of each phase to identify software problems before progressing to the next phase.

Automated software testing has many well-established benefits [5,6]; however, several organisations are still not using automation techniques. The results from the 2018 State of Testing Report survey on test automation (footnote 1) identified that automation is not yet as common as organisations desire. There are still many factors hindering uptake and use, such as challenges in acquiring and maintaining expertise, cost, and the utilisation of the correct testing tools and frameworks. Although previous studies present the reasons why automated testing might not be used, there is an absence of literature focusing on different job roles and experiences and how they relate to factors preventing the adoption of automated testing. There is also debate amongst academics and professionals as to the merits of automated testing over traditional, manual testing methods [7]. This research paper presents an empirical study to gain an understanding of the different attitudes of employees working within the software industry. The particular focus of this research is to understand whether there are common patterns surrounding different roles and levels of experience. Furthermore, this research aims to identify common reasons as to why automation is not being used.

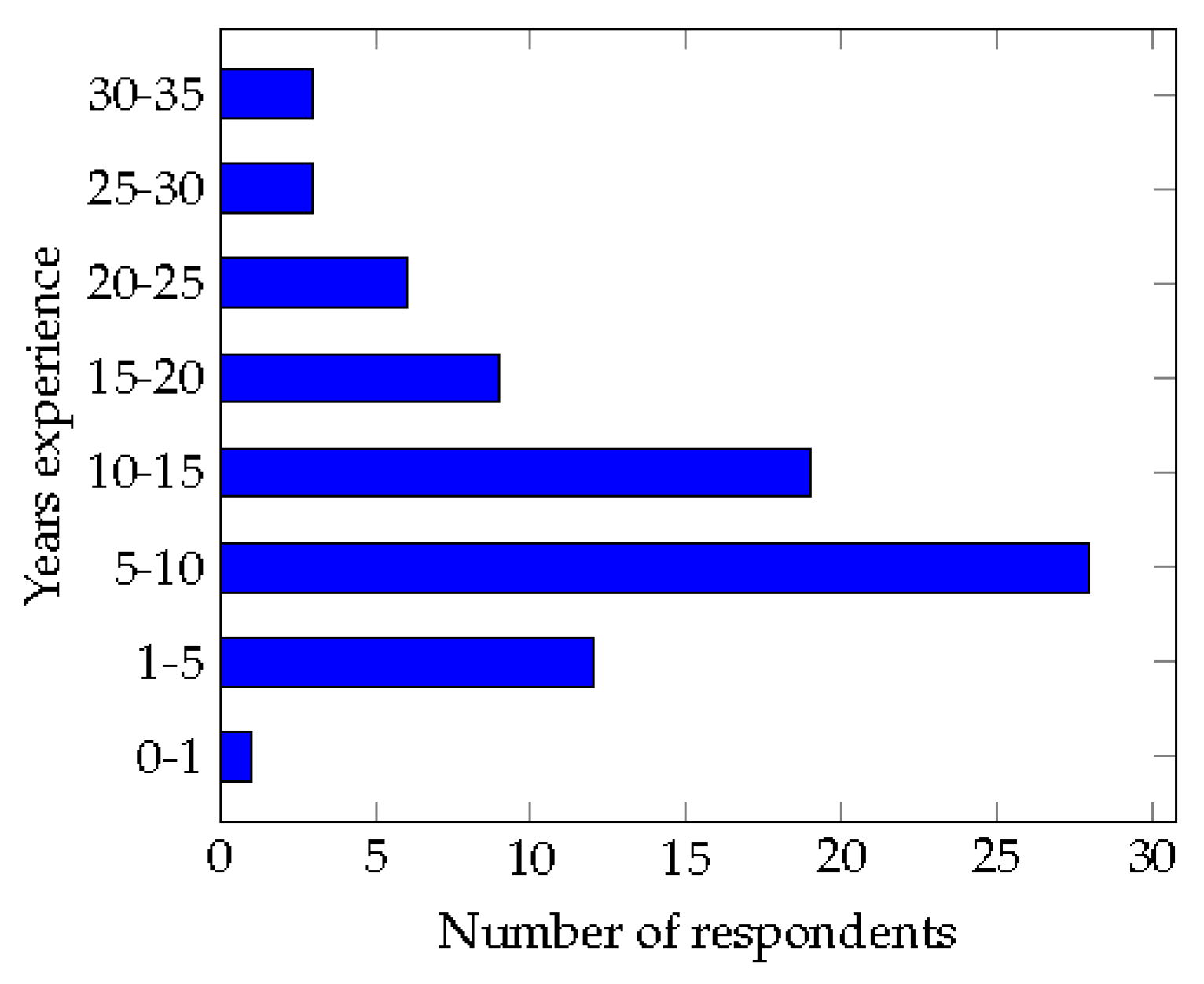

This research seeks to answer the following question: do common themes emerge when investigating opinions as to why automated testing is not used, with the focus being on job role and level of experience? To answer this research question, a twenty-two-question survey has been created to collect attitudes toward automated testing (AtAT) from employees working in the software testing industry. The data are then analysed using quantitative techniques to determine key patterns and themes.

This paper is structured as follows: Section 2 presents and discusses existing work, grounding this study in the relevant literature. Section 3 describes and justifies the process adopted in this paper, which includes using a two-stage analysis approach. This section also presents and discusses the results of the study in detail, identifying common themes pertinent to the aim of this study. Section 4 provides a summary of key findings, discussing how these findings motivate future work. Finally, in Section 5 a conclusion of the work is provided. The full set of participant responses is available in Appendix A.1.

4. Discussion and Findings

The purpose of this study was to test the nature of the relationship between a set of predictors, including software characteristics, non-software issues, and those reasons relating to practitioner support for and opposition to the adoption of automated testing (AT). In this spirit, scholars have found that AT characteristics, e.g., functionality, usability, and adaptability, can have a strong effect on practitioners’ support or opposition. In particular, we sought to test these predictors across different scenarios in order to gain an understanding of how the perceptions of individuals operating in different roles and with different levels of experience differ. To that end, it has been established that there are key identifiable patterns surrounding the attitudes towards automated testing from employees undertaking different roles and having different levels of experience. These key findings can be used by employers within the software industry to better understand the viewpoints of their employees.

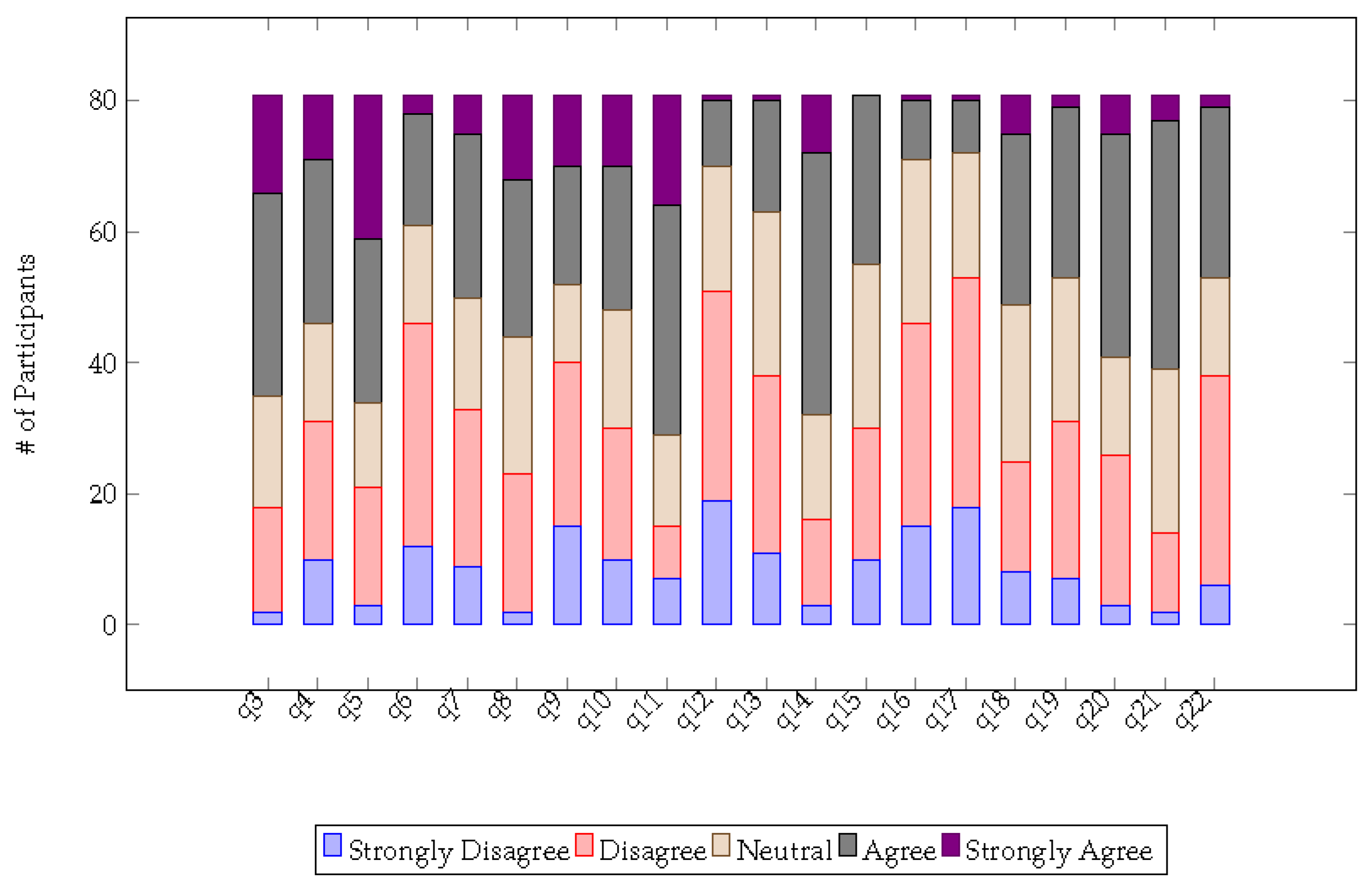

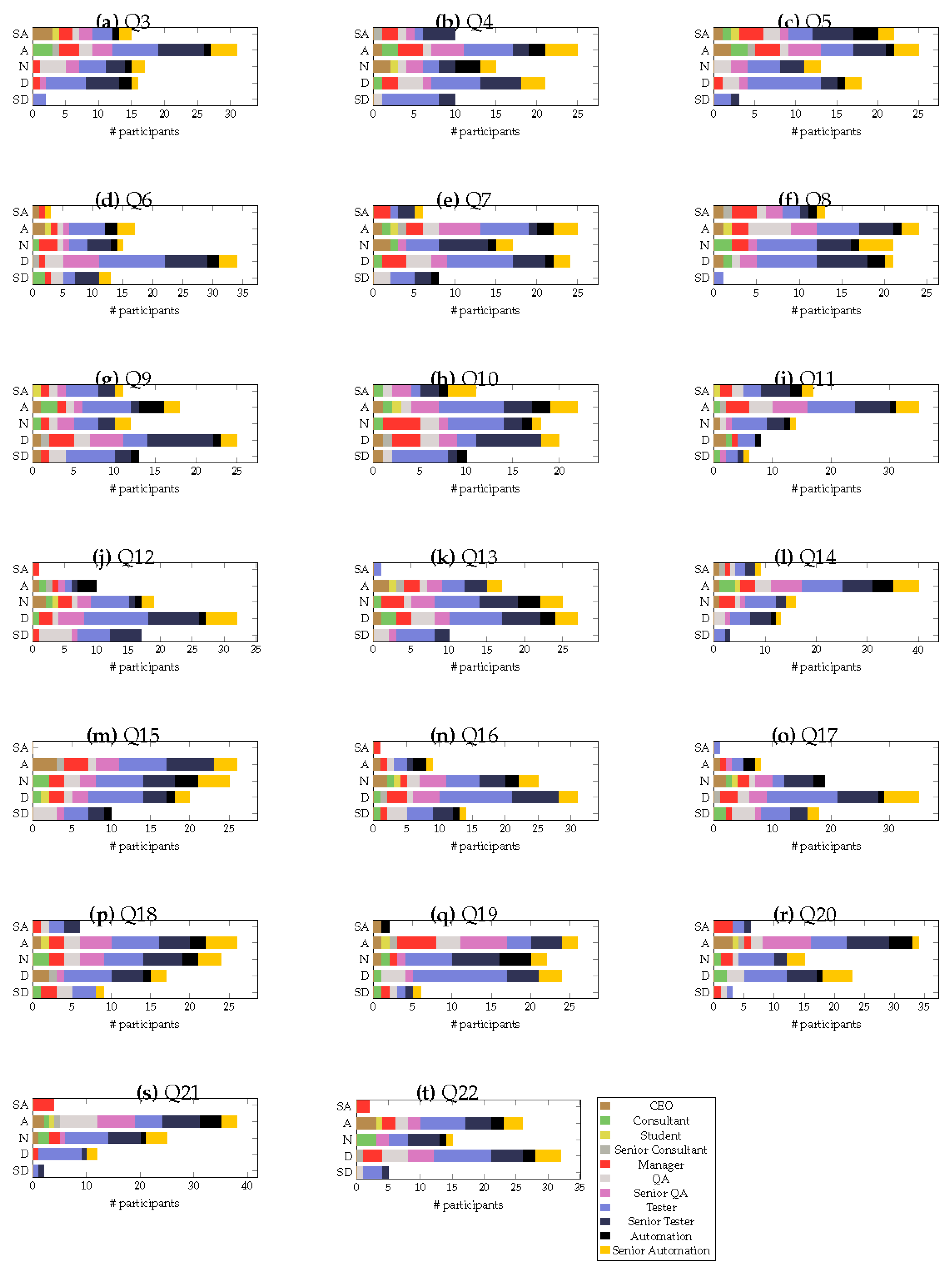

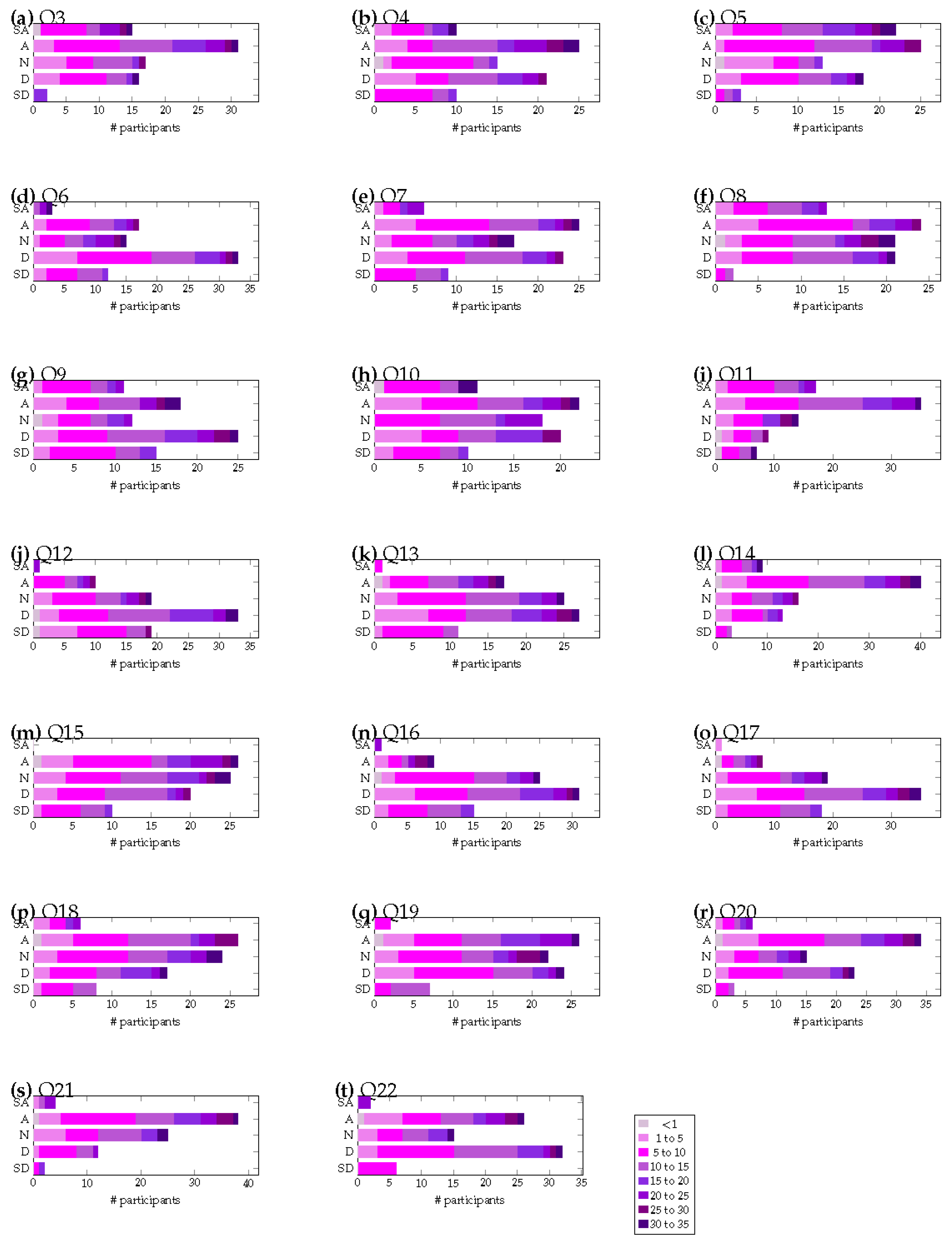

Based on the values in Table 6, the responses for technical roles are asymmetric, as technical roles believe that the reasons for not adopting AT are due to the non-software factor. However, the responses for non-technical roles are symmetric: they agree that both non-software and software reasons are preventing adoption. We deduce that this could be down to the following reasons: (1) the non-software factor includes cost-related questions that all employees, i.e., not just non-technical ones, agree with; (2) based on common practice in the IT sector, technical employees are often promoted to non-technical (managerial) roles, meaning that they have both technical and non-technical attitudes; and (3) non-technical staff might have less understanding of how capable technical staff are, i.e., management lacks an understanding of their employees’ skills.

Based on the combination of the comprehensive basic analysis and principal component analysis, we draw the key findings presented in the remainder of this section. Throughout this section, the original questions and their responses are cross-referenced by adding the question number in parentheses (e.g., q3 for question 3). In this section, the optional free-text responses provided by the participants are analysed alongside the previously discussed quantitative information. The full responses provided by 19 of the participants can be seen in Table A1, and as this section is trying to establish key findings from the data, they are used to substantiate quantitative patterns. A summary of the key themes in the free-text submissions can be seen in Table A2.
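As an illustration of how principal component analysis can be applied to Likert-style survey responses, the following is a minimal sketch and not the procedure used in this study. It assumes the NumPy library is available; the function name `pca`, the response matrix, and the interpretation of its columns (two hypothetical non-software items followed by two hypothetical software items) are assumptions introduced purely for illustration.

```python
import numpy as np


def pca(responses, n_components=2):
    """Principal component analysis via SVD of the mean-centred data.

    `responses` is a respondents x questions matrix of numeric Likert
    scores. Returns the component loadings, the share of variance each
    retained component explains, and the per-respondent scores.
    """
    X = np.asarray(responses, dtype=float)
    Xc = X - X.mean(axis=0)                      # centre each question
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    variances = S ** 2 / (X.shape[0] - 1)        # variance per component
    ratio = variances / variances.sum()
    components = Vt[:n_components]               # rows are loadings
    scores = Xc @ components.T                   # project respondents
    return components, ratio[:n_components], scores


# Hypothetical 5-point Likert responses (1 = strongly disagree,
# 5 = strongly agree); rows are respondents, columns are questions.
responses = np.array([
    [4, 5, 2, 1],
    [5, 4, 1, 2],
    [2, 1, 4, 5],
    [1, 2, 5, 4],
    [3, 3, 3, 3],
    [4, 4, 2, 2],
])

components, ratio, scores = pca(responses)
```

In a sketch of this kind, questions that load strongly on the same component can be read as measuring a shared underlying factor, and the respondent scores can then be compared across groups such as technical and non-technical roles.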

Summary Point 1. Although technical employees are more likely to believe that testers require a high level of expertise and that open-source tools are challenging, this is not identified as a factor preventing their adoption. By contrast, non-technical roles do agree that an absence of expertise is preventing the use of automated testing.

When asking participants about whether they believe a lack of skilled resources is preventing automated testing from being used, it is evident that managerial staff believe this to be true, whereas those with more technical expertise do not (q3). This is an interesting finding as it confirms that there is a different perception between technical and non-technical staff in regard to whether a lack of skilled resources is a prevention factor. This could be because non-technical staff are unable to determine the requirements of expertise and match it with the capabilities within their organisation. Furthermore, it could also be because technical staff overstate their ability without having significant expertise in automated testing.
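A comparison of this kind can be sketched as the share of respondents in each role group who agree with a statement. The snippet below is a minimal illustration under stated assumptions, not the analysis performed in this study: the field names (`role_group`, `q3`), the answer labels, and the example rows are all hypothetical and do not reproduce the survey data.

```python
from collections import defaultdict

# Answer labels counted as agreement (assumed Likert wording).
AGREE = {"agree", "strongly agree"}


def agreement_share_by_group(rows, question):
    """Return {group: fraction of respondents who agree} for one question.

    Respondents who did not answer the question are skipped.
    """
    counts = defaultdict(lambda: [0, 0])  # group -> [agreeing, answered]
    for row in rows:
        answer = row.get(question)
        if answer is None:
            continue
        tally = counts[row["role_group"]]
        tally[1] += 1
        if answer.lower() in AGREE:
            tally[0] += 1
    return {group: agree / total for group, (agree, total) in counts.items()}


# Hypothetical responses, for illustration only.
rows = [
    {"role_group": "technical", "q3": "Disagree"},
    {"role_group": "technical", "q3": "Strongly disagree"},
    {"role_group": "technical", "q3": "Agree"},
    {"role_group": "technical", "q3": "Neutral"},
    {"role_group": "non-technical", "q3": "Agree"},
    {"role_group": "non-technical", "q3": "Strongly agree"},
    {"role_group": "non-technical", "q3": "Disagree"},
]

shares = agreement_share_by_group(rows, "q3")
```

With these made-up rows, one in four technical respondents and two in three non-technical respondents agree, which is the shape of difference described above.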

It is also evident that participants do not believe that automated testing is underutilised because people fail to realise its benefits (q9). Furthermore, technical roles do not believe there is an issue with open-source tools; however, less technical roles are more likely to support this argument (q12). In addition, there is a weak indication that those with technical expertise believe a high level of expertise is required (q13). It is, however, evident that the majority of the participants believe that strong programming skills are required to undertake automated testing (q14). Nevertheless, when relating this to the results of the principal component analysis, it is evident that technical employees do not believe that technical reasons are preventing the use of automated testing.

It is perhaps not too surprising that technical roles are more likely to believe that a high level of expertise is required. This is because they are working closely with the technology and will have a comprehensive understanding of what knowledge is required. However, as demonstrated, technical roles are less likely to believe that a lack of skilled resources is preventing the use of automated testing, as they have already gone through the learning process, becoming competent testers. By contrast, management is more likely to be viewing the capability within their organisation versus what is to be delivered, and therefore, a lack of skilled resources might refer to there being insufficient resources available to deliver a project on time, rather than an absence of expertise preventing thorough software testing. Alternatively, it is possible that those in technical roles report that they have the expertise required to utilise automated testing tools; however, this raises the question of why the tools are not always utilised if the necessary expertise is available.

In terms of comments provided by the participants, 7 of the 19 responses were directed at the necessity and lack of expertise. All of the 7 responses provided in Table A2 are provided by individuals performing technical roles (cross reference participant number with Table A1). Interestingly, all the responses do agree that technical knowledge is important, but one interesting observation is that some responses draw attention to the fact that there is a lack of training and mentorship within testing roles. One response even highlights the importance of individuals being able to learn the necessary skills independently. It is also interesting that a couple of responses directly state that the management of people is extremely important to help remove any skill and expertise gap, resulting in a more thorough and robust testing process.

Summary Point 2. Those with less experience are more likely to agree that individuals do not have enough time to engage in automated testing. Furthermore, employees with less technical experience with automated testing and increased management responsibilities disagree that automated testing tools are time-consuming to learn.

Whether individuals have enough time to perform automated testing is polarised, with an even split agreeing and disagreeing. However, it has been identified that those with more junior roles are more likely to agree with this statement (q4). Furthermore, when considering how difficult the tools are to learn, the majority of people disagree that they are time-consuming to learn. However, in general, the least experienced employees tend to agree, and so do managers and CEOs (q15). This agrees with the results of the principal component analysis, whereby technical staff are identified to agree that non-technical reasons are behind not adopting automated testing.

This finding agrees with the fact that work levels and deadline pressures will be different in different organisations, and furthermore, people will respond to and handle these pressures differently. The fact that junior employees are more likely to state that they do not have sufficient time to perform automated testing duties is explainable by the fact that junior employees might take longer to perform testing duties. This might also be because the employee is still learning the expertise necessary for their role, which could be slowing down the testing. It is also possible that those with less experience are burdened with learning the necessary knowledge and expertise to perform their entire role and therefore have little capacity to take on improvement activities. This may change as an individual gains more experience, becoming more efficient in their role and creating more space for learning and improvement.

Summary Point 3. The majority of participants agree that automated testing is expensive, with non-technical roles more likely to agree that automated tests are expensive to use and maintain.

The majority of participants agree that commercial tools are expensive to use, but there is no discernible pattern (q11). However, there is a weak indication that managerial roles are more likely to agree with the statement that test scripts are more expensive to generate (q19). This is further compounded by non-technical roles agreeing that there are high maintenance costs for test cases and scripts (q20). This agrees with the presented principal component analysis, as technical employees agree with non-technical reasons being responsible for not adopting automated testing. Furthermore, non-technical roles are split between believing that software and non-software factors are responsible for not adopting automated testing.

It is not surprising that the majority of participants agree that automated testing is expensive. Furthermore, the pattern that managerial staff more strongly agree with this statement is explainable through their closeness with the financial operations of the business. It is, however, quite surprising that managerial staff believe that automated testing has high maintenance costs. A fundamental aspect of automated testing is its reuse and ease of maintenance. This difference in perspective is likely to originate from management’s lack of understanding when it comes to fundamental aspects of automated testing.

Comments provided by the participants also mirror the fact that automated testing is expensive to perform and maintain, which is largely down to the cost of the testing team. One participant (#75) states that management does not see the wasted amount of time in automated software testing, and this could provide justification as to why non-technical roles agree that they are expensive to maintain. If they saw the amount of wasted time, they might have a better understanding of the true cost.

Summary Point 4. All but the more experienced employees disagree that automated testing tools and techniques lack functionality. Furthermore, experienced employees are more likely to disagree that problems are introduced due to fast revisions, whereas those with managerial roles agree.

When considering whether automated testing tools and techniques lack functionality, in general, the more experienced employees are likely to agree, but overall the majority disagree (q16). When asked whether people believe that automated tools are reliable enough, there was a very strong tendency to disagree (q17). There is slight agreement that automated testing tools lack support for testing non-functional requirements (q18). When asking about whether automated testing tools and techniques change too often, introducing problems that need fixing, the general trend is that a higher number of years of experience leads to an increased chance of disagreement. Furthermore, of the response categories, non-technical roles agree/strongly agree (q21). This aligns with the findings from performing principal component analysis, where non-technical roles more strongly believe that software reasons are preventing the use of automated testing, whereas those undertaking technical roles believe it is non-software issues.

The reason behind more experienced employees disagreeing that automated testing tools and techniques lack functionality is most likely down to the fact that more experienced employees have either fully mastered the tools or developed sufficient workarounds or alternative techniques. Furthermore, experienced staff do not believe that updates cause significant problems, which could be put down to the fact that they are experienced in how to handle revisions within the automated testing frameworks. Non-functional requirements are a secondary feature set of automated testing tools and techniques, and as such, are not integral to their core use. This is most likely the reason why the majority of participants do not see an issue with their lack of support for non-functional requirements.

Many comments were received in regard to the capabilities of tools and techniques, and in general, they state that the tools, techniques and frameworks do not lack functionality. Rather, they justify the complexity of tightly integrating the functionality within a project and how this can make it hard to reuse and fix revisions. Furthermore, it is evident that technical employees also believe that those in managerial roles do not understand what is involved in the implementation of automated testing. It is also interesting that one response from an individual performing a technical role (#64) even states that test scripts breaking is a good sign as it clearly demonstrates that they are working. A comment from an individual in management (#81) states that product delivery is more important than testing, demonstrating that for management their emphasis is on project completion rather than testing.

Summary Point 5. Only managerial staff believe that test preparation and integration inhibit the use of automated testing. Furthermore, only managerial staff do not believe that software requirements change too frequently, having negative impacts on automated testing.

In terms of utilisation, when considering whether difficulties in preparing test data and scripts inhibit their use, only non-technical staff agree and there is a balanced response from technical roles (q5). Furthermore, the majority of participants do not believe that not having the right automation tools and available frameworks is preventing use (q6). When asking staff specifically about whether the difficulty of integrating tools is a problem, non-technical roles agree, whereas technical roles are balanced with a slight emphasis on disagreement (q7). The majority of participants also agreed that requirements changing too frequently are impacting their use (q8); however, it is also the case that non-technical roles do not agree. There is also strong disagreement from technical staff that test scripts are difficult to reuse across different testing stages (q22). This finding also agrees with the performed principal component analysis, where it was identified that non-technical staff more strongly believe the reasons for not adopting automated testing to be technical.

The fact that non-technical employees believe that difficulties, both in setting up and maintaining automated tests, are prohibiting the use of automated testing tools is most likely down to the disconnect between non-technical and technical staff when it comes to understanding limitations with software testing. All participants believe that there are sufficient frameworks to meet their individual testing requirements. Interestingly, only management believes that changing requirements do not impact automated testing techniques. This difference most likely originates from a managerial misunderstanding of the impact of changing requirements throughout the software development cycle.

Comments provided by the participants do support the argument that those in testing roles understand the technical complexities involved and why automated testing might not be fully utilised. However, there is a lack of responses from managerial staff to justify that this is only a viewpoint from technical employees. There are many reasons specified for poor utilisation, ranging from formal training and guidance to a preference to view automated testing as second to manual testing, and the possibility that automation is used for the wrong reasons, i.e., to replace manual testing rather than complement it.

Summary Point 6. Whether a lack of support is preventing automated testing use is polarised.

There is neither agreement nor disagreement that a lack of support is preventing the use of automated testing. There is, however, an observation that consultants tend to agree with this statement (q10). This is interesting as it demonstrates that there is no majority, either in terms of role or experience, stating that a lack of support is preventing them from adopting and using automated testing within their organisation. However, it is also worth noting that the responses to this question are rather polarised, with people agreeing and disagreeing, but overall there are few holding strong views on this. This is consistent with the performed principal component analysis, which determined that non-technical roles and technical roles both agree (technical more strongly) that non-software factors, such as finance, expertise, and time, are preventing the adoption of automated testing.

Comments received from participants detail that training is a common limiting factor to uptake, but the biggest theme is that non-technical staff either do not understand or do not value test automation. This means that automation is seen as an afterthought to manual testing and thus will not be well supported by their employer.