Abstract. Accurate cloud quantification is essential in climate change research. In this work, we construct an automated computer vision framework that combines deep neural networks with finite sector clustering to achieve robust whole-sky-image-based cloud classification, adaptive segmentation, and recognition under complex illumination conditions. A customized YOLOv8 architecture attains over 95% classification precision across four typical cloud types drawn from a full year of observations (2020) at a Tibetan highland station. Segmentation strategies tailored to each cloud type, together with illumination-invariant image enhancement algorithms, effectively eliminate solar interference and substantially improve quantification performance even under adverse illumination. Compared with the traditional threshold analysis method, the cloud quantification accuracy obtained within this framework is significantly improved. Together, these methodological innovations provide an advanced solution for raising the cloud quantification precision required by climate change research, and offer a paradigm for cloud analytics that is transferable to other meteorological stations.

1. Introduction

Clouds play an extremely critical role in regulating the Earth's climate system (Voigt et al., 2021). Clouds can reflect incoming solar shortwave radiation, reducing the amount of heat absorbed at the surface; meanwhile, they can also absorb outgoing longwave radiation from the surface, producing a greenhouse effect (Feng et al., 2021). In essence, clouds serve as an important "sunshade" to maintain the balance of the greenhouse effect and prevent overheating of the Earth (Werner et al., 2013). However, different cloud types at varying altitudes impact the climate system differently. For instance, high-level cirrus clouds mainly contribute to reflection and scattering, while low-level stratus and cumulus clouds more so cause the greenhouse effect (Riihimaki et al., 2021). Accurately determining cloud types, distributions and evolutions is vital for long-term climate change monitoring and forecasting (Jafariserajehlou et al., 2019). Moreover, there are considerable regional disparities in cloud amount, and pronounced differences exist in regional climate characteristics, which makes precise cloud quantification even more crucial. Accurate cloud detection can provide critical climate change information to advance our understanding of the climate system from multiple dimensions (Hutchison et al., 2019); it also helps validate the accuracy of climate model predictions, furnishing input parameters for climate sensitivity research (Hutchison et al., 2019). Therefore, conducting accurate quantitative cloud observations is of great significance for climate change science, which is also the motivation behind this study's effort to achieve precise cloud quantification through image processing techniques.

Currently, accurate cloud typing and quantification still face certain difficulties and limitations. For cloud classification, common approaches include manual identification, threshold segmentation, texture feature extraction, satellite remote sensing, ground-based cloud radar detection, aircraft sounding observations, etc. (Li et al., 2017; He et al., 2018; Ma et al., 2021; Rumi et al., 2015; Wu et al., 2021). Manual visual identification relies on the experience of professional meteorological observers to discern cloud shapes, colors, boundaries and other features to categorize cloud types. This method has long been widely used, but is heavily impacted by individual differences and lacks consistency, with low efficiency (Alonso-Montesinos, 2020). Threshold segmentation sets thresholds based on RGB values, brightness and other parameters in images to extract pixel features corresponding to different cloud types for classification. It is susceptible to illumination conditions and ineffective at distinguishing transitional cloud zones (Nakajima et al., 2011). Texture feature analysis utilizes measurements of roughness, contrast, directionality and other metrics to perform multi-feature combined identification of various clouds, but adapts poorly to both tenuous and thick clouds (Yu et al., 2013). Satellite remote sensing discerns cloud types based on spectral features in different bands combined with temperature inversion results, but has low resolution and inaccurate recognition of ground-level small clouds (Yang et al., 2007). Ground-based cloud radar differentiation of water and ice clouds relies on measured Doppler velocity and other parameters, with inadequate detection of high thin clouds (Irbah et al., 2023). Aircraft sounding observations synthesize multiple parameters to make judgments, but have limited coverage and observation time. 
For cloud quantification, currently prevalent methods include laser radar measurement, satellite remote sensing inversion, ground-based cloud radar, and whole sky image recognition (Li et al., 2022a). Laser radar emits sequenced laser pulses and estimates cloud vertical structure and optical depth from the backscatter to directly quantify cloud amount, but has large equipment size, high costs, limited coverage area, and cannot produce cloud distribution maps. Satellite remote sensing inversion utilizes parameters like cloud top temperature and optical depth, combined with inversion algorithms to obtain cloud amount distribution. However, restricted by resolution, it has poor recognition of local clouds (Rumi et al., 2015). Ground-based cloud radar can measure backscatter signals at different altitudes to determine layered cloud distribution, but has weak return signals for high thin clouds, resulting in inadequate detection. With multiple cloud layers, it struggles to differentiate between levels, unfavorable for accurate quantification (Van De Poll et al., 2006). The conventional whole sky image segmentation utilizes fisheye cameras installed at ground stations to acquire whole sky images, then segments the images based on color thresholds or texture features to calculate pixel proportions of various cloud types, which are converted to cloud cover. This method has the advantage of easy and economical image acquisition, but is susceptible to illumination changes that can impact segmentation outcomes, with poor recognition of small or high clouds (Alonso-Montesinos, 2020). In summary, the current technical means for cloud classification and quantification lack high accuracy, cannot precisely calculate regional cloud information, and need improved stability and reliability. They fall short of meeting the climate change science demand for massive fine-grained cloud datasets.

In recent years, with advances in computer vision and machine learning, more sophisticated techniques have been introduced into cloud classification and recognition and have made significant progress. While traditional methods cannot characterize and extract cloud texture features well, convolutional neural networks can learn increasingly complex patterns and discriminative textures from large-scale training datasets. In addition, convolutional neural networks typically employ a hierarchical feature extraction framework that captures fine structures such as edges and shapes. As a result, cloud image classification algorithms based on deep learning have become a research hotspot. Deep learning can automatically learn feature representations from complex data and construct models that jointly assess the visual information of cloud shapes, boundaries, textures, etc. to distinguish between different cloud types (Yu et al., 2020). Meanwhile, unsupervised learning methods like k-means clustering are also widely applied in cloud segmentation and recognition. This algorithm can autonomously discover inherent category structures in the data without manual annotation, enabling cloud image partitioning and greatly simplifying the workflow (Krauz et al., 2020). Some studies train k-means models to swiftly cluster and recognize cloud and clear sky regions in whole sky images, improving cloud quantification speed and efficiency. It is foreseeable that the combination of deep learning and unsupervised clustering for cloud recognition will find expanded applications in meteorology. We also hope to lay the groundwork for revealing circulation characteristics, radiative effects and climate impacts of different cloud types through this detection approach.

Despite some progress made in current cloud recognition algorithms, numerous challenges remain. Firstly, accurately identifying different cloud types is still difficult, especially indistinct high-altitude cirrus clouds and transitional mixed cloud types (Ma et al., 2021). Secondly, illumination condition changes can drastically impact recognition outcomes, leading to high misjudgment rates in situations like polarization and shadowing. To address these issues and limitations, we propose constructing an end-to-end cloud recognition framework, with a focus on achieving accurate classification of cirrus, clear sky, cumulus and stratus clouds, paying particular attention to the traditionally challenging cirrus clouds. Building upon the categorization, we design adaptive dehazing algorithms and k-means clustering finite sector segmentation to enhance recognition of cloud edges and tenuous regions. We hope that through optimized framework design, long-standing issues of cloud typing and fine-grained quantification can be solved, significantly improving ground-based cloud detection and quantification for solid data support in related climate studies. The structure of this paper is as follows.

Section 2 introduces the study area, data acquisition, and construction of the cloud classification dataset. Section 3 elaborates the methodologies, including neural networks, image enhancement, adaptive processing algorithms, and evaluation metrics. Finally, Sections 4, 5 and 6 present the results, discussion, and conclusions, respectively.

2. Study Area and Data

2.1. Study Area

The Yangbajing Comprehensive Atmospheric Observatory is located 90 kilometers northwest of Lhasa, Tibet, adjacent to the Qinghai-Tibet Highway and Railway, with an average altitude of 4300 meters. This region has high atmospheric transparency and abundant sunlight, creating a unique meteorological environment (Krüger et al., 2004). Yangbajing is far from industrial areas and cities, with relatively good air quality and low atmospheric pollution levels, which reduces the impact of air pollution on cloud observation and improves cloud quantification accuracy. Meanwhile, Tibet spans diverse meteorological types, meaning various cloud types can be observed in the same area, enabling better research on the evolution patterns of different cloud types.

2.2. Imager Information

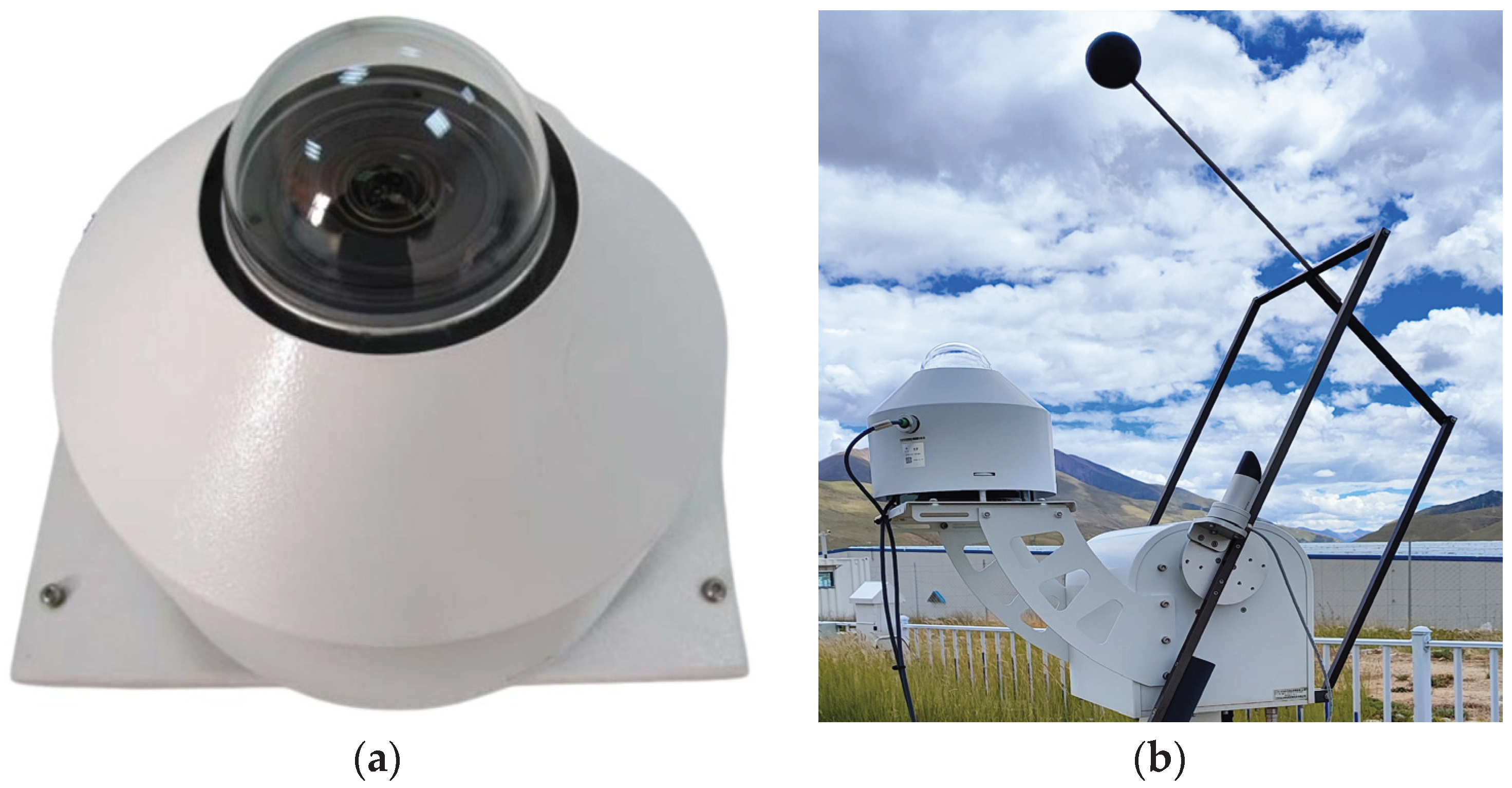

The automated cloud quantification instrument used in this study is installed at the Yangbajing Comprehensive Atmospheric Observatory (90°33'E, 30°05'N) and has been measuring since April 2019. The visible light imaging subsystem mainly comprises the visible light imaging unit (Figure 1a), the sun tracking unit (Figure 1b), the acquisition box, and the power box. As summarized in Table 1, this system images the entire sky every 10 minutes, measuring clouds ranging from 0 to 10 km with elevation angles above 15°. It can capture RGB images in the visible spectrum at a resolution of 4288 × 2848 pixels. The visible light imaging device is a CMOS imaging system with an ultra-wide-angle fisheye lens that periodically acquires images of the entire sky in the visible spectrum. The sun tracking unit calculates and tracks the sun's position, effectively shielding direct incident sunlight to prevent damage to the CMOS sensor's photosensitive elements. It also significantly reduces the impact of white light around the sun on subsequent processing.

2.3. Dataset

The image dataset used in this study comprises all-sky images taken during 2020. Because images taken around sunrise and sunset are more easily influenced by lighting conditions, we only selected images taken between 9 am and 4 pm each day. Additionally, to reduce correlation between samples, we picked only one image every half hour, amounting to 16 sample images per day. Images affected by rain, snow, lens occlusion or contamination were then removed. Finally, 4000 high-quality, occlusion-free all-sky images with no rain or snow were selected and categorized into four classes with 1000 images per class: cirrus, clear sky, cumulus, and stratus. It should be emphasized that the categorization into four dominant cloud types is intended to accurately quantify the cloud fraction of each individual category, rather than to account for hybrid clouds. These selected images represent the visual characteristics of the different cloud types well and form the image dataset for this study.

3. Materials and Methods

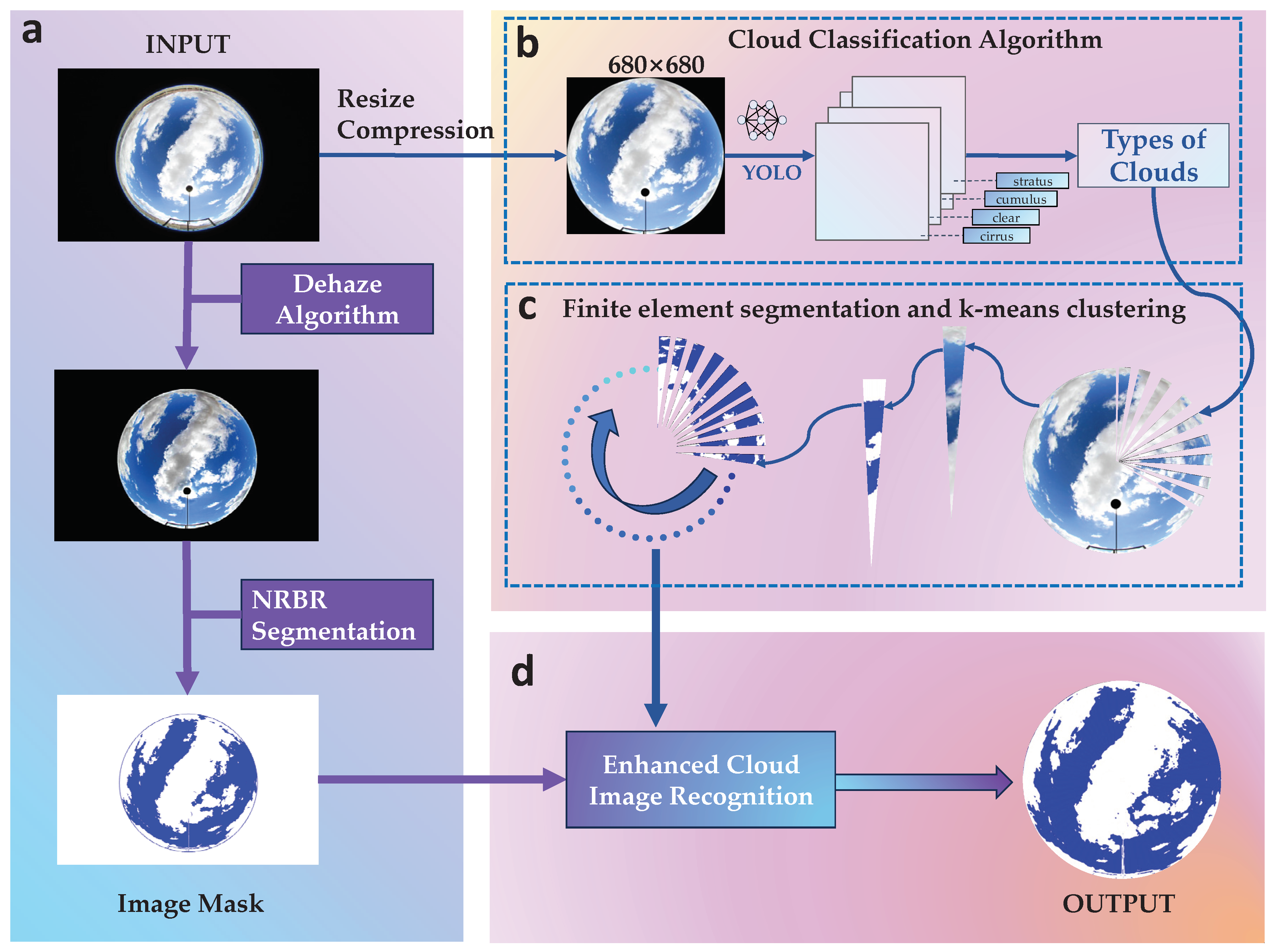

The framework proposed in this study is illustrated in Figure 2. It can be summarized in the following steps: (1) Data quality control and preprocessing. First, quality control is performed on the collected raw all-sky images to remove distorted images caused by occlusion or sensor issues. Then, image size and resolution are standardized. (2) Deep neural network classification and evaluation metrics. The YOLOv8 deep neural network is used to categorize the cloud images, judging which of the four types (cirrus, clear sky, cumulus, and stratus) each image belongs to. Precision, recall, and F1-scores are used to evaluate the classification performance. (3) Adaptive enhancement. Different image enhancement strategies are adopted according to cloud type, selectively performing operations such as dehazing and contrast adjustment to improve image quality. (4) K-means clustering with finite sector segmentation. The enhanced images are divided into a finite number of sectors according to cloud category, and K-means clustering is applied within each sector to extract cloud features and produce precise cloud detection results.

3.1. Quality Control and Preprocessing

Considering that irrelevant ground objects may occlude the edge areas of the original all-sky images, directly using the raw images to train models could allow unrelated ground targets to interfere with the learning of cloud features, reducing the model's ability to recognize cloud regions (Wu et al., 2023). Therefore, we cropped the edges of the original images, using the geometric center of the all-sky images as the circle center and calculating the circular coverage range corresponding to a 26° zenith angle, to precisely clip out this circular image area and remove ground objects on the edges. This cropping operation eliminated ground objects from the original images that could negatively impact cloud classification, resulting in circular image regions containing only sky elements. To facilitate subsequent image processing operations while ensuring image detail features, the cropped images underwent size adjustment to set the target resolution to 680×680 pixels. Compared to the original 4288×2848 pixels, adjusting the resolution retained the main detail features of the cloud areas in the images, but significantly reduced the file size for easier loading and calculation during network training. Finally, a standardized dataset was constructed by cloud type - the resolution-adjusted images were organized and divided into four folders for cirrus, clear sky, cumulus, and stratus, with 1000 pre-processed images in each folder. A standardized all-sky image dataset containing diverse cloud morphologies was built.
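
The cropping and resizing step described above can be sketched as follows. This is a minimal illustration using only NumPy, not the authors' implementation: the function name `crop_sky_circle` and the `radius` argument (the pixel radius that corresponds to the chosen zenith-angle limit) are assumptions, and nearest-neighbour resampling is used only to keep the example dependency-free.

```python
import numpy as np

def crop_sky_circle(image, radius, out_size=680):
    """Keep the circular sky region centred on the image and resize it.

    image    : H x W x 3 uint8 array (raw all-sky frame)
    radius   : radius in pixels of the sky circle to keep (hypothetical input;
               in the paper it follows from the chosen zenith-angle limit)
    out_size : side length of the square output (680 in the paper)
    """
    h, w = image.shape[:2]
    cy, cx = h // 2, w // 2  # geometric centre used as circle centre

    # Square bounding box of the circle, clipped to the image.
    y0, y1 = max(cy - radius, 0), min(cy + radius, h)
    x0, x1 = max(cx - radius, 0), min(cx + radius, w)
    patch = image[y0:y1, x0:x1].copy()

    # Black out everything outside the circle (ground objects on the edges).
    yy, xx = np.ogrid[y0:y1, x0:x1]
    outside = (yy - cy) ** 2 + (xx - cx) ** 2 > radius ** 2
    patch[outside] = 0

    # Nearest-neighbour resize with plain NumPy indexing.
    rows = np.arange(out_size) * patch.shape[0] // out_size
    cols = np.arange(out_size) * patch.shape[1] // out_size
    return patch[rows][:, cols]
```

In practice an interpolating resize from an image library would likely preserve cloud texture better than nearest-neighbour sampling.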

3.2. Deep Neural Network Classification

3.2.1. Network Structure Design

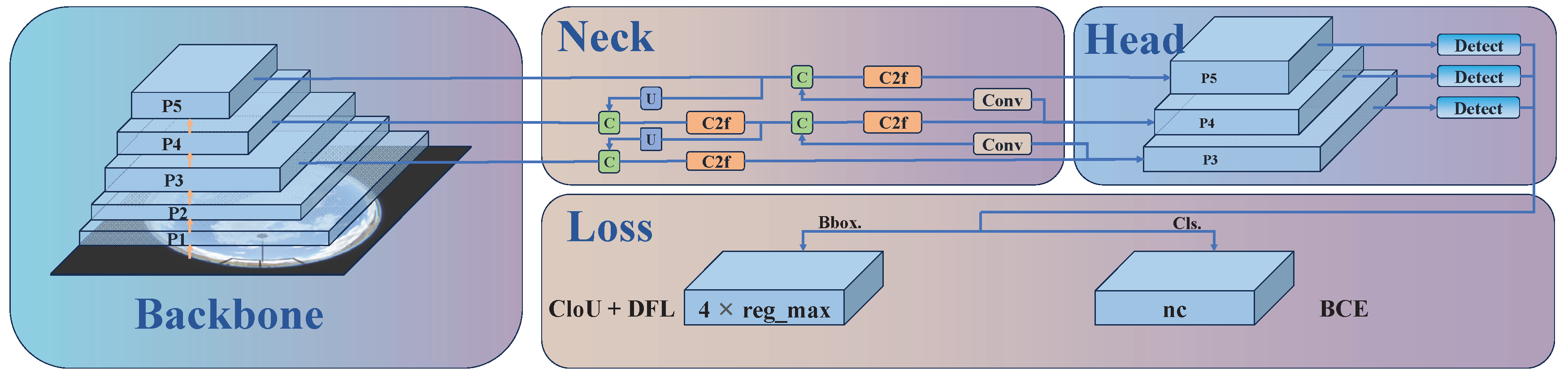

This study employs YOLOv8 as the base model architecture for the cloud classification task. As illustrated in Figure 3, the YOLOv8 network structure consists primarily of the Backbone, Neck, Head and Loss components. The Backbone of YOLOv8 uses the Darknet-53 network, replacing all C3 modules in the backbone with C2f modules, which draw design inspiration from C3 modules and ELAN to extract richer gradient flow information (Li et al., 2023). The Neck of YOLOv8 implements a PAN-FPN structure to make the model more lightweight while retaining the original performance level. The detection Head uses a decoupled structure with two convolutions responsible for classification and regression, respectively (Xiao et al., 2023). For the classification task, binary cross entropy loss (BCE Loss) is used, while distribution focal loss (DFL) and complete IoU (CIoU) are used for bounding box regression. This detection structure can improve detection accuracy and accelerate model convergence (Wang et al., 2023). Considering the limited scale of cloud datasets, we load the YOLOv8-X-cls model pre-trained on ImageNet, with 57.4M parameters, as the initial model. Through the design of these modules, pre-trained model initialization and the configuration of training parameters, an end-to-end cloud classification network is constructed.

3.2.2. Experimental Parameter Settings

After constructing the model architecture, we trained the model using the previously prepared classification dataset containing images of multiple cloud types. During training, the input image size was set to 680×680. We set the maximum number of training epochs to 400, and the number of samples used per iteration was 32. To prevent overfitting, momentum and weight decay terms were added to the optimizer and the patience parameter was adjusted to 50. To augment the sample space, various data augmentation techniques were employed such as random horizontal flipping (probability of 0.5) and mosaic (probability of 1.0). The SGD optimizer was chosen since its stochastic sampling and parameter update provide opportunities to jump out of local optima, helping locate the global optimum in a wider region. Considering initial and final learning rates, the initial learning rate was set to 0.01 and gradually decayed during training to enable more refined optimization of model parameters during later convergence.
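
As a rough illustration, the settings listed above could be passed to the Ultralytics training interface as shown below. This is a sketch under stated assumptions rather than the authors' script: the function name, the `data_dir` layout and the weights file name are hypothetical, and the momentum and weight decay values are not given in the text, so they are left at the library defaults.

```python
# Hyperparameters stated in the text; everything else is left at library defaults.
TRAIN_KWARGS = dict(
    epochs=400,       # maximum number of training epochs
    batch=32,         # samples per iteration
    imgsz=680,        # input image size
    patience=50,      # early-stopping patience
    optimizer="SGD",
    lr0=0.01,         # initial learning rate
    fliplr=0.5,       # random horizontal flip probability
    mosaic=1.0,       # mosaic augmentation probability
)

def train_cloud_classifier(data_dir, weights="yolov8x-cls.pt"):
    """Fine-tune an ImageNet-pretrained YOLOv8 classifier.

    data_dir is a hypothetical folder with train/ and val/ subfolders, each
    holding one directory per class (cirrus, clear, cumulus, stratus).
    """
    from ultralytics import YOLO  # imported lazily; needs the ultralytics package
    model = YOLO(weights)
    return model.train(data=data_dir, **TRAIN_KWARGS)
```

Keeping the hyperparameters in a single dictionary makes it easy to reuse identical settings when retraining on a second dataset.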

3.2.3. Cloud Classification Evaluation Indicators

To comprehensively evaluate the cloud classification performance of the model, a combined qualitative and quantitative analysis scheme was adopted. Qualitatively, we inspected the model's ability to categorize different cloud types, boundaries, and detail structures by comparing classification differences between the validation set and test set. Quantitatively, metrics including precision, recall and F1-score were used to assess the model. Precision reflects the portion of true positive cases among samples predicted as positive, and is calculated as:

$$\mathrm{Precision} = \frac{TP}{TP + FP}$$

Recall represents the fraction of correctly classified positive examples out of all positive samples, and is calculated as:

$$\mathrm{Recall} = \frac{TP}{TP + FN}$$

F1-score considers both precision and recall via the formula:

$$F1 = \frac{2 \times \mathrm{Precision} \times \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}$$

Here, TP stands for true positives, TN true negatives, FP false positives, and FN false negatives. Through this combined qualitative and quantitative evaluation system, the cloud classification performance can be fully examined.
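
For a four-class problem these metrics are computed per class in a one-vs-rest fashion. The following sketch shows one way to do so from integer labels using the standard definitions in terms of TP, FP and FN; the function name and the class ordering are illustrative assumptions.

```python
import numpy as np

CLASSES = ["cirrus", "clear", "cumulus", "stratus"]

def per_class_metrics(y_true, y_pred, n_classes=len(CLASSES)):
    """Return per-class precision, recall and F1 from integer labels."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1          # rows: true class, columns: predicted class

    tp = np.diag(cm).astype(float)
    fp = cm.sum(axis=0) - tp   # predicted as the class but belonging to another
    fn = cm.sum(axis=1) - tp   # belonging to the class but predicted as another

    # Guard against empty classes: a zero denominator yields a score of 0.
    precision = np.divide(tp, tp + fp, out=np.zeros_like(tp), where=(tp + fp) > 0)
    recall = np.divide(tp, tp + fn, out=np.zeros_like(tp), where=(tp + fn) > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=np.zeros_like(tp), where=denom > 0)
    return precision, recall, f1
```

For example, with true labels `[0, 0, 1, 1]` and predictions `[0, 1, 1, 1]`, class 0 has precision 1 and recall 0.5, while class 1 has precision 2/3 and recall 1.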

3.3. Adaptive Enhancement Algorithm

When processing all-sky images, we face the challenges of visual blurring and low contrast caused by overexposure and haze interference. To address this, a dark channel prior algorithm is adopted in this study. The core idea of the dark channel prior algorithm is to estimate and remove haze based on the dark channel image (Kaiming et al., 2009). The dark channel image takes the minimum value among the RGB channels at each pixel location, thus reflecting the minimum brightness at that pixel. In haze-free regions this minimum tends to be close to zero, whereas haze adds scattered light and raises it, so the dark channel provides a cue for the amount of haze. Based on the dark channel prior assumption, we enhance the image in the following steps. First, calculate the dark channel for each pixel of the input image by taking the minimum value across the three RGB color channels. Next, estimate the global atmospheric light A using the non-zero minimums of the dark channel. Then, based on the atmospheric scattering model, obtain the transmission t for each pixel, which indicates the visibility of that pixel. Finally, apply the formula:

$$J(x) = \frac{I(x) - A}{t(x)} + A$$

where J is the recovered haze-free image, and I is the original input image. The dehazing algorithm can effectively eliminate fog in images to yield more discernible cloud and sky boundaries, facilitating subsequent generation of high-quality cloud covers.
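
A minimal NumPy sketch of these dehazing steps is given below, assuming the atmospheric light A is supplied by the caller (for example, chosen per cloud type). The haze-retention factor `omega` and the transmission floor `t_min` follow common practice for dark channel prior dehazing and are assumptions, not values from this paper.

```python
import numpy as np

def dehaze(image, A, omega=0.95, t_min=0.1):
    """Dark-channel-prior dehazing with a caller-supplied atmospheric light.

    image : H x W x 3 float array scaled to [0, 1]
    A     : atmospheric light, scalar or length-3 array in (0, 1]
    omega : fraction of haze to remove (assumed value, not from the paper)
    t_min : lower bound on the transmission to avoid division blow-up (assumed)
    """
    A = np.broadcast_to(np.asarray(A, dtype=float), (3,))

    # Per-pixel dark channel of the image normalised by the atmospheric light.
    dark = (image / A).min(axis=2)

    # Transmission from the scattering model I = J*t + A*(1 - t).
    t = np.clip(1.0 - omega * dark, t_min, 1.0)

    # Invert the model: J = (I - A) / t + A.
    J = (image - A) / t[..., None] + A
    return np.clip(J, 0.0, 1.0)
```

A pixel with one channel at zero has a dark channel of zero, hence transmission 1, and is returned unchanged; uniformly bright (hazy or overexposed) pixels are darkened the most.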

In the image enhancement algorithm, the atmospheric light value A directly impacts the intensity of dehazing. Adaptive enhancement strategies are designed according to cloud type. For relatively thin cirrus, excessive enhancement may filter them out, hence smaller A values are chosen to preserve details. Meanwhile, thicker cumulus, stratus and clear sky images allow larger A values to strengthen haze removal, eliminating overexposed areas surrounding the sun and on edges to obtain more uniform sky distributions. We focused our analyses on the processing effects on two types of easily misclassified areas - surrounding the sun, where overexposure often leads to misjudgements; and white glows on sky edges, which can also readily cause errors. To improve segmentation quality, additional processing is implemented on these two areas. The sun's position can be determined by recognizing the location of the occulting disk, enabling application of enhanced dehazing to this circular region to reduce white glow. For the sky edge area, after removing ground elements, an annular region on the sky edge is designated for strengthened haze removal to alleviate white glow influence on identification. Through the above optimized designs, misjudgement issues surrounding the sun and edges can be effectively controlled to boost cloud segmentation quality.
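
The two regions that receive additional processing can be represented as boolean masks. The sketch below is illustrative only: the sun position is assumed to be already known (for instance from locating the occulting disk), and `sun_radius` and `edge_width` are hypothetical parameters whose values the text does not specify.

```python
import numpy as np

def sun_and_edge_masks(size, sun_center, sun_radius, edge_width):
    """Boolean masks for the disk around the sun and the ring at the sky edge.

    size        : side length of the square all-sky image in pixels
    sun_center  : (row, col) of the occulting disk (hypothetical input)
    sun_radius  : radius of the region around the sun, in pixels (assumed)
    edge_width  : width of the annulus at the sky edge, in pixels (assumed)
    """
    yy, xx = np.ogrid[:size, :size]
    c = (size - 1) / 2.0
    r_sky = size / 2.0

    r_from_center = np.hypot(yy - c, xx - c)
    r_from_sun = np.hypot(yy - sun_center[0], xx - sun_center[1])

    sky = r_from_center <= r_sky
    sun_mask = (r_from_sun <= sun_radius) & sky
    edge_mask = (r_from_center >= r_sky - edge_width) & sky
    return sun_mask, edge_mask
```

Given a normally enhanced image and a more strongly enhanced one, the two can then be blended with `np.where(mask[..., None], strong, normal)` so that the stronger haze removal applies only inside the masked regions.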

3.4. Finite Sector Segmentation and K-means Clustering

Building on the cloud type classification results, we propose an adaptive image segmentation method tailored to cloud morphology. Different cloud types exhibit varying shapes, necessitating customized segmentation strategies for optimal effects. Specifically, taking the geometric center of the all-sky image as the circle center and the distance from the center to the edge of the circular sky as the radius, we evenly divide the circular area into multiple sectors, with the number of sectors chosen to suit each cloud type. For example, we segment cirrus images into 72 sectors: because cirrus clouds have faint shapes and are similar in color to the sky, finer segmentation helps improve the accuracy of the clustering algorithm. Clear-sky images contain few elements, so dividing them into 4 sectors is enough for the subsequent clustering algorithm to achieve high accuracy. Cumulus clouds have distinct edges but may be unevenly lit, so cumulus images are divided into 36 sectors. Stratus images also contain few elements to separate, so they are likewise divided into 4 sectors. This adaptive segmentation strategy is based on the characteristics of the four cloud types and was determined through extensive testing; it significantly improves the accuracy of the clustering algorithm in identifying cloud cover.
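
The sector division can be expressed as a label map that assigns each sky pixel the index of the sector it falls in. The following is a sketch under the assumption of a square image whose inscribed circle is the sky region; the sector counts are those reported in the text, while the function and dictionary names are illustrative.

```python
import numpy as np

# Sector counts reported in the text for each cloud type.
SECTORS_PER_TYPE = {"cirrus": 72, "clear": 4, "cumulus": 36, "stratus": 4}

def sector_labels(size, n_sectors):
    """Label every sky pixel with a sector index in [0, n_sectors); -1 outside."""
    yy, xx = np.ogrid[:size, :size]
    c = (size - 1) / 2.0
    dy, dx = yy - c, xx - c

    # Angle of each pixel around the image centre, mapped to [0, 2*pi).
    theta = np.mod(np.arctan2(dy, dx), 2 * np.pi)
    labels = np.floor(theta / (2 * np.pi) * n_sectors).astype(int)
    labels = np.minimum(labels, n_sectors - 1)  # guard against rounding at 2*pi

    # Pixels outside the circular sky region are marked with -1.
    labels = np.where(np.hypot(dy, dx) <= size / 2.0, labels, -1)
    return labels
```

Calling `sector_labels(680, SECTORS_PER_TYPE["cirrus"])` yields 72 equal-angle sectors of 5° each, whereas the clear-sky and stratus settings yield four quadrants.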

Once the image has been divided into sectors according to cloud type, a K-means clustering algorithm is applied in each sector to extract cloud information. The algorithm first randomly initializes K cluster centers, then assigns each sample to the nearest center based on distance, and then recalculates the cluster centers (Dinc et al., 2022). This iterative process converges when the centers no longer change. A suitable K value is chosen per sector to partition it into sky, cloud and background. Each region is then recolored according to the clustering outcome to obtain a preliminary cloud detection image. This design capitalizes on K-means’ clustering capability to automatically distinguish sky and cloud elements in sectors.
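
The per-sector clustering can be sketched with a plain NumPy K-means as below. This is an illustrative implementation of the standard algorithm, not the authors' code: the function names, the iteration cap and the fixed random seed are assumptions, and `sector_map` is assumed to be an integer array with -1 outside the sky region.

```python
import numpy as np

def kmeans(pixels, k, n_iter=50, seed=0):
    """Plain K-means on an (N, 3) array of RGB values.

    Returns (labels, centers). `n_iter` and `seed` are illustrative defaults.
    """
    rng = np.random.default_rng(seed)
    pixels = np.asarray(pixels, dtype=float)
    centers = pixels[rng.choice(len(pixels), size=k, replace=False)]

    for _ in range(n_iter):
        # Assign each pixel to its nearest center (squared Euclidean distance).
        d2 = ((pixels[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)

        # Recompute centers; keep the old one if a cluster went empty.
        new_centers = np.array([
            pixels[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break  # centers are static: converged
        centers = new_centers
    return labels, centers

def cluster_sectors(image, sector_map, k):
    """Run K-means independently in each sector; returns a per-pixel cluster map."""
    out = np.full(sector_map.shape, -1, dtype=int)
    for s in np.unique(sector_map[sector_map >= 0]):
        mask = sector_map == s
        labels, _ = kmeans(image[mask], k)
        out[mask] = labels
    return out
```

Cluster indices are arbitrary within each sector, so a further rule is needed to name each cluster as sky or cloud, for example by comparing the red and blue components of each cluster center; that rule is not specified in the text.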

In traditional cloud segmentation, the Normalized Red/Blue Ratio (NRBR) method exhibits certain shortcomings. Firstly, it struggles to effectively distinguish intense white light around the sun, often misclassifying these overexposed areas as cloud regions. Secondly, it fails to properly handle the bottom of thick cloud layers, where the regions appear dark due to the lack of penetrating light and may be erroneously classified as clear sky areas. Both misclassifications stem from the NRBR method overly relying on RGB color features without comprehensive consideration of lighting conditions. When atypical lighting distributions occur, accurate cloud and sky differentiation becomes challenging based solely on red/blue ratio values. Therefore, after obtaining the initial cloud segmentation results, we propose a mask-based refined segmentation method to further enhance the effectiveness. The specific approach involves first extracting the predicted sky regions from the aforementioned segmentation results, using them as a mask template. Subsequently, each sector undergoes k-means clustering to identify blue sky and white clouds, restricting the region after concatenating sectors within the mask-defined blue sky template. This process yields more nuanced identification results. By conducting secondary segmentation only on key areas and leveraging the results from adaptive k-means extraction, a finer segmentation is achieved. Ultimately, building upon the initial segmentation, this approach significantly improves potential misclassifications at the cloud edges, generating more accurate final cloud detection results. This design, guided by prior masks for localized refinement, effectively enhances the quality of cloud segmentation.

4. Results

4.1. Cloud Classification Results

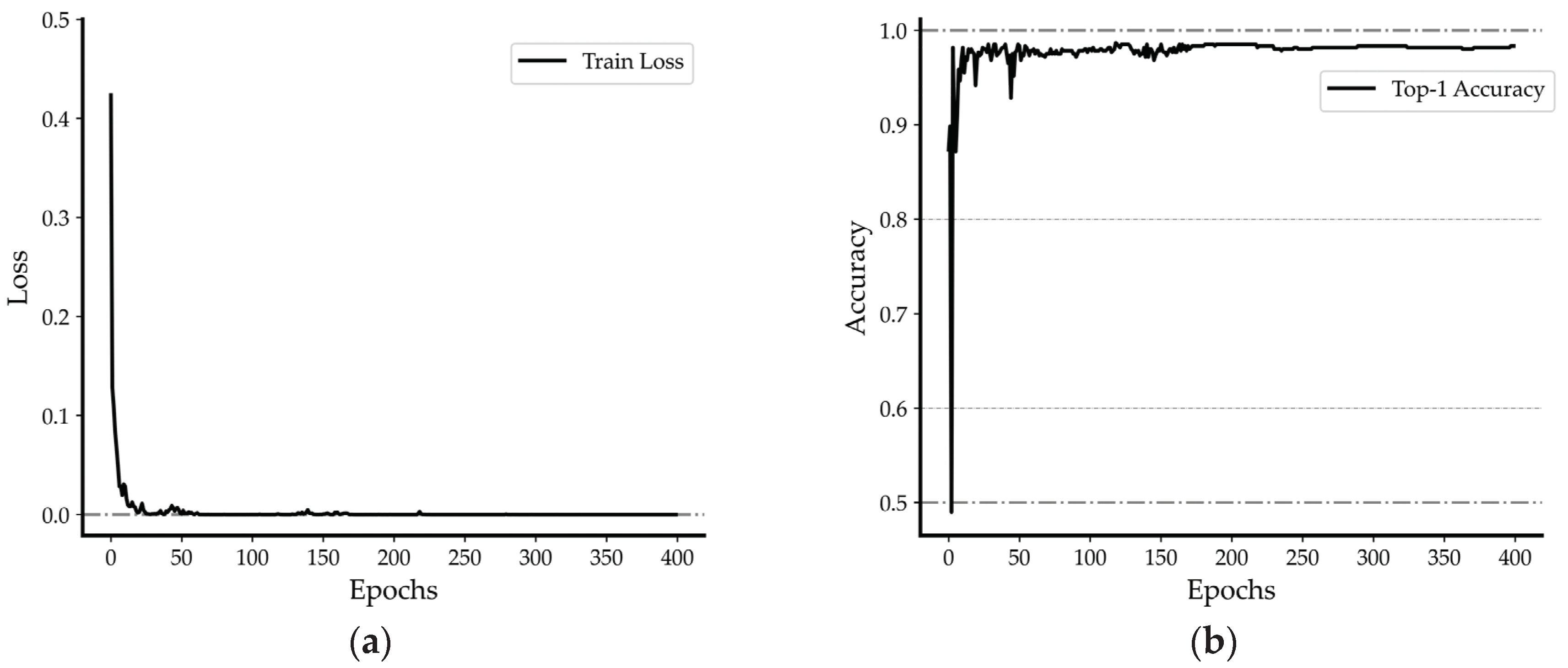

This study constructs a dataset based on four dominant types of cloud images collected from the Yangbajing station in Tibet and employs the YOLOv8 deep learning model for cloud classification. To quantitatively assess the training effectiveness of the YOLOv8 cloud classification model, we record the values of the loss function and training accuracy at different training epochs, as depicted in Figure 4a. As training proceeds, the model's loss consistently decreases, with the training set loss falling from around 0.4 to near 0. The model gradually achieves improved predictive performance, reducing the gap between predicted values and true labels. We also analyze the classification accuracy curve during training. As seen in Figure 4b, the model's Top-1 accuracy rises from 0.5 to around 0.98. Through continued training, the model shows sustained improvement in accuracy for distinguishing the four cloud types, progressively acquiring the ability to discriminate the visual features of different cloud formations.

After training completion on the self-built dataset, we tested the model's classification performance. These results cover four major cloud types, including Cirrus, Clear, Cumulus, and Stratus.

Table 2 reports the model's precision, recall, and F1 scores. As shown in the table, on the self-built dataset, the model delivers steady classification performance for indistinctly bounded cirrus clouds, with precision, recall, and F1 scores all above 95%, indicating the robustness and generalization capability of the model in categorizing cirrus clouds. The model achieves outstanding classification performance on clear sky, with all metrics reaching or approaching 100%, reflecting strong generalization in recognizing clear conditions. The Cumulus type also has all classification metrics above 96%, denoting high classification accuracy. The Stratus category reaches 100% on all metrics on the self-built dataset, implying that the model classifies Stratus very accurately with stable performance, having learned effective visual traits to discriminate this type.

To verify that the model is broadly applicable, we also validated it on the public TCI dataset. First, stringent quality control was applied to the TCI dataset, removing images of inferior quality or ambiguous category. In the end, 900 high-quality images per cloud type (Cirrus, Clear, Cumulus and Stratus) were retained, totaling 3600 images. Using the same training parameters as for the self-built dataset, we trained the model on the public dataset and validated its performance on a test set containing 200 images per cloud type, then computed the precision, recall and F1 scores of the model's classifications, as shown in Table 2. As the table shows, the model performs very well on the public TCI dataset. Notably, for the Clear and Stratus types, the model approaches or achieves 100% on multiple evaluation metrics.

Related research using the bag of micro-structures (BoMS) approach for cloud type identification on the TCI dataset, which encompassed five cloud types, attained an average accuracy of 93.80% after excluding mixed types (Li et al., 2016); under the same assessment criteria, our model achieves a higher average accuracy of 98.31%. This indicates that our model architecture has stronger classification capability than the preceding technique. These results show that the framework not only performs well on the self-built dataset, but also remains robust and generalizes well on public data.

On the four-class test set, five randomly selected images from each class were tested. As Figure 5 shows, all images obtained accurate category labels with confidence scores of 1.00, again supporting the reliability of the training results in Table 2. Through training, the model has acquired the capability to discern different cloud morphologies based on visual characteristics like shape, boundary and thickness to generate cloud type classification outcomes. In summary, the model can not only effectively tackle various challenges in cloud classification tasks but also delivers consistent performance across cloud types on validation and test sets. The robust overall performance provides a reliable cloud classification tool for practical applications.

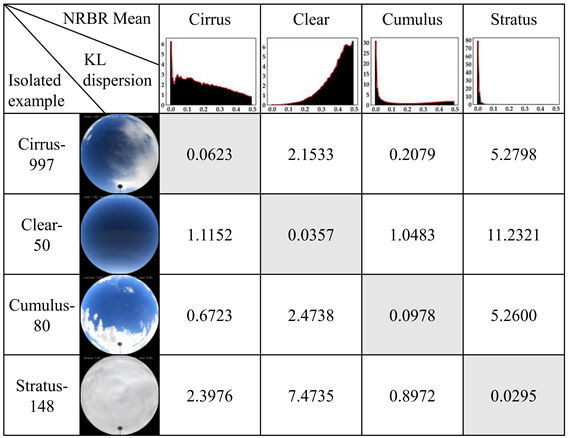

To further validate the model's discrimination of different cloud types, we computed the average probability density functions of normalized red-blue ratio (NRBR) distributions for the 1000 RGB images in each cloud category. Cloud image samples were then randomly drawn from each category and their NRBR distributions derived. Finally, the Kullback–Leibler (KL) divergences between the sample distributions and corresponding category averages were calculated. As Table 3 shows, the KL divergence between a sample and its ground truth category is markedly lower than divergences to other categories. For instance, a clear sky sample has an average KL divergence of 0.0357 to the clear sky category, but 11.2321 to the stratus category. This signifies that the NRBR distribution of the clear sky sample identified by YOLOv8 aligns closely with the true category average, with similar KL divergence relationships holding for other cloud type samples. It verifies that the model can effectively discriminate the NRBR traits of different cloud types to ultimately yield accurate cloud classification outcomes.
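
The comparison can be sketched as follows. The text does not give the exact NRBR definition, so the form (R - B) / (R + B) used here is an assumption, as are the bin count and the small constants that avoid division by zero and log(0). A category average would be obtained by averaging `nrbr_pdf` over all images of that category.

```python
import numpy as np

def nrbr(image, eps=1e-6):
    """Normalized red/blue ratio per pixel, assumed here as (R - B) / (R + B)."""
    r = image[..., 0].astype(float)
    b = image[..., 2].astype(float)
    return (r - b) / (r + b + eps)

def nrbr_pdf(image, bins=64):
    """Histogram of NRBR values over [-1, 1], normalised to sum to 1."""
    hist, _ = np.histogram(nrbr(image), bins=bins, range=(-1.0, 1.0))
    return hist / hist.sum()

def kl_divergence(p, q, eps=1e-10):
    """KL(p || q) for two discrete distributions; eps avoids log(0)."""
    p = np.asarray(p, dtype=float) + eps
    q = np.asarray(q, dtype=float) + eps
    p, q = p / p.sum(), q / q.sum()
    return float(np.sum(p * np.log(p / q)))
```

A sample whose NRBR histogram overlaps its own category average gives a divergence near zero, while a sample compared with a category whose histogram occupies different bins gives a much larger value, which is the pattern reported in Table 3.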

4.2. Cloud Recognition Effect

To improve the accuracy of subsequent cloud quantification, we first applied pre-processing enhancement to the whole sky images. Because different cloud types are affected differently by illumination and haze, we designed an adaptive image enhancement strategy: a lower enhancement intensity is applied to cirrus clouds to preserve more edge detail, while a stronger intensity is applied to the other cloud types to eliminate overexposed areas. As shown in Figures 6b, 7b and 7f, this image enhancement algorithm makes the boundaries between clouds and blue sky more pronounced, with clearer ground objects and richer detail features.

This study employs an adaptive finite sector segmentation strategy for feature extraction. For stratus clouds and clear sky, which have distinct boundaries, a few sectors are sufficient to capture their traits accurately. In contrast, more sectors are used for cirrus and cumulus clouds, whose boundaries are indistinct, to enable a finer partitioning that captures cloud edges precisely. Using the k-means algorithm, we then divide each sector region into three classes: blue sky, cloud and background. Compared with conventional holistic NRBR segmentation, segmentation tailored to cloud type adapts better and partitions more accurately. As depicted in Figure 7, finite sector segmentation with k-means clustering achieves remarkable results in three challenging scenarios: (1) the bottom of thick cloud layers, which traditional methods are prone to misjudge as blue sky; (2) the overexposed vicinity of the sun, where RGB values resemble those of clouds, so that conventional techniques may wrongly judge some blue sky around the sun as white cloud; (3) thin edge areas of cloud layers, which standard NRBR algorithms have difficulty recognizing, leading to deficient cloud quantification.
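The sector-wise clustering idea can be sketched as follows. This is an illustrative reconstruction rather than the implementation used in this study: it assumes that sectors are equal angular wedges around the image centre, that each pixel carries a single scalar feature (for example an NRBR value), and that a plain Lloyd-style k-means with three clusters is run independently in each sector. The function names `sector_index`, `kmeans_1d` and `cluster_by_sector` are hypothetical, and the rule that maps the three clusters to blue sky, cloud and background is not specified here.

```python
import math

def sector_index(x, y, cx, cy, n_sectors):
    """Index of the angular sector (around the image centre) that contains pixel (x, y)."""
    angle = math.atan2(y - cy, x - cx) % (2.0 * math.pi)
    return min(int(angle / (2.0 * math.pi) * n_sectors), n_sectors - 1)

def kmeans_1d(values, k=3, iters=20):
    """Plain Lloyd's k-means on scalars; centres start evenly spread over the value range."""
    lo, hi = min(values), max(values)
    centres = [lo + (hi - lo) * (i + 0.5) / k for i in range(k)]
    labels = [0] * len(values)
    for _ in range(iters):
        # Assignment step: each value joins its nearest centre.
        labels = [min(range(k), key=lambda j: abs(v - centres[j])) for v in values]
        # Update step: each non-empty cluster moves its centre to the cluster mean.
        for j in range(k):
            members = [v for v, lab in zip(values, labels) if lab == j]
            if members:
                centres[j] = sum(members) / len(members)
    return labels, centres

def cluster_by_sector(pixels, cx, cy, n_sectors, k=3):
    """Group (x, y, feature) pixels by sector, then cluster each sector separately."""
    sectors = {}
    for x, y, feature in pixels:
        sectors.setdefault(sector_index(x, y, cx, cy, n_sectors), []).append(feature)
    return {s: kmeans_1d(vals, k) for s, vals in sectors.items()}

# Toy pixels: (x, y, feature). Feature values are illustrative only.
pixels = [
    (10, 1, 0.52), (12, 2, 0.50), (14, 1, 0.55),    # blue-sky-like values
    (11, 3, 0.02), (13, 2, 0.01), (15, 3, 0.03),    # cloud-like values
    (16, 1, -0.40), (17, 2, -0.42),                 # background-like values
    (-10, -1, 0.51), (-12, -2, 0.49), (-11, -3, 0.02),
]
result = cluster_by_sector(pixels, cx=0, cy=0, n_sectors=4)
for sector, (labels, centres) in sorted(result.items()):
    print(sector, labels, [round(c, 3) for c in centres])
```

Because each sector is clustered on its own value range, a bright region near the sun in one sector does not shift the cluster centres used elsewhere in the image, which is the motivation given above for partitioning before clustering. A larger `n_sectors` would correspond to the finer partitioning used for cirrus and cumulus images.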

Through adaptive finite sector segmentation, we divide the original image into multiple sectoral regions, reducing the complexity of directly processing the entire image. This process enables the k-means clustering method to identify clouds in each sector more effectively, thereby significantly improving detection accuracy. This forms the key strategy for our success in cloud amount calculation. Figure 8 charts the cloud amount at 15:00 every afternoon in June 2020, obtained from valid images in the Yangbajing area, and compares the cloud amount identified by the traditional NRBR method with that obtained after image enhancement. Over the course of this month, image enhancement conspicuously improves the cloud recognition effect for whole sky images with cloud cover below 80%. As denoted by the marked points in Figure 8, at 15:00 on June 17th, the cloud amount calculated from the enhanced cloud map has approximately 40% higher precision than that obtained using the traditional NRBR threshold segmentation method. This is primarily because NRBR relies solely on color features, whereas the finite sector method combines multiple features, including shape and position, for a comprehensive judgment, and hence recognizes the overexposed areas surrounding the sun better. Similarly, as exhibited in Figures 7d and 7h, after image enhancement the cloud amount identification results for the bottom of thicker cloud layers and for the overexposed regions around the sun are markedly improved.
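As a small worked illustration of how a cloud amount can be derived from per-pixel labels, the sketch below assumes that cloud amount is the share of cloud pixels among all sky-dome pixels (cloud plus blue sky), with background pixels excluded. This definition, the label strings, and the function name `cloud_fraction` are assumptions for illustration and are not taken from the framework described here.

```python
def cloud_fraction(labels):
    """Share of sky-dome pixels labelled cloud; background pixels are excluded."""
    cloud = sum(1 for lab in labels if lab == "cloud")
    sky = sum(1 for lab in labels if lab == "sky")
    visible = cloud + sky
    return cloud / visible if visible else 0.0

# Toy label list: 30 cloud pixels, 70 blue-sky pixels, 25 background pixels.
labels = ["cloud"] * 30 + ["sky"] * 70 + ["background"] * 25
fraction = cloud_fraction(labels)  # 30 / (30 + 70)
print(f"cloud amount: {fraction:.0%}")
```

Under this definition, blue sky near the sun that is mislabelled as cloud raises the numerator directly, which is one way to see why errors in the overexposed region affect the cloud amount estimate.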

4.3. Spatial and Temporal Analysis of Cloud Types

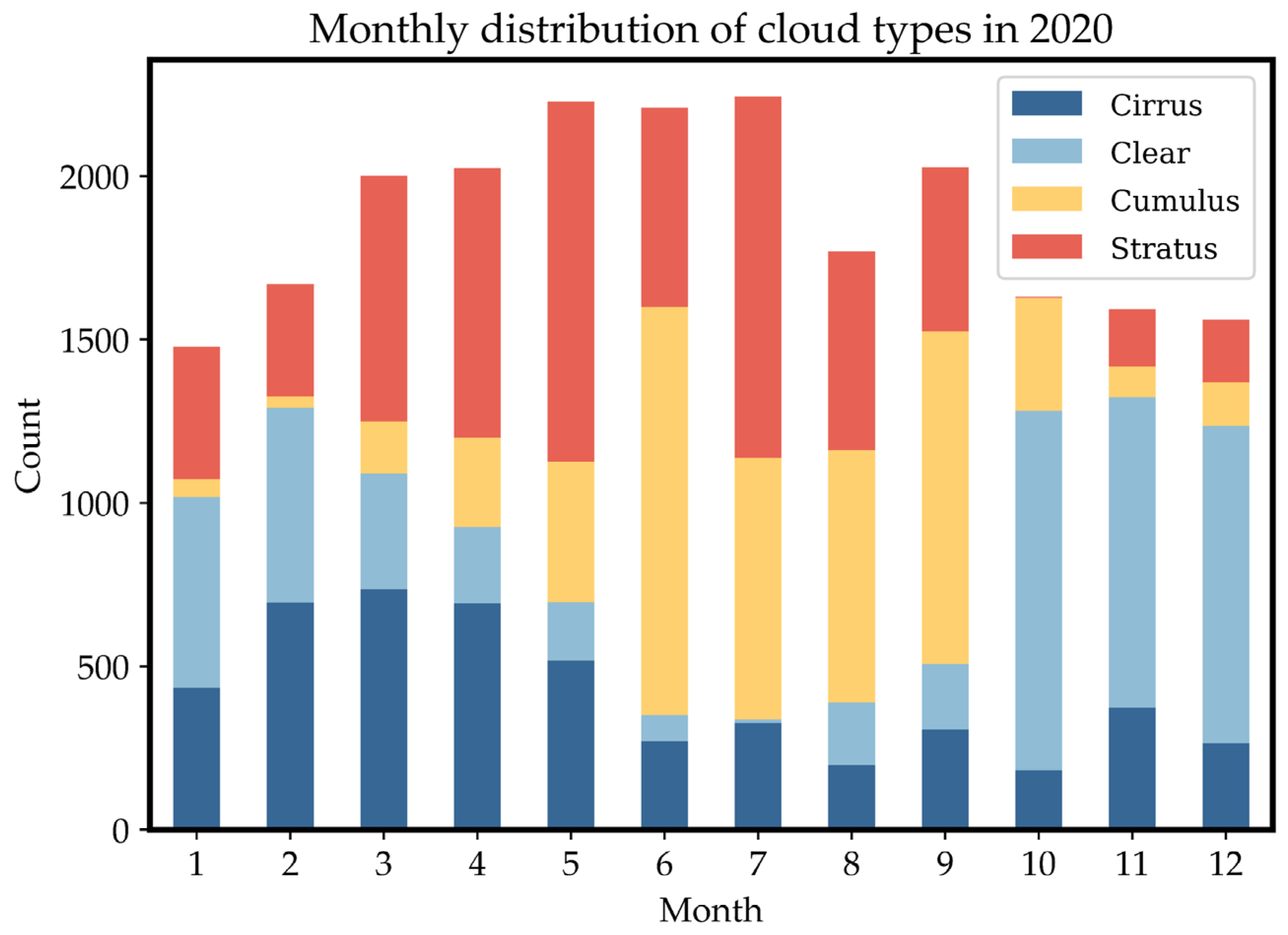

To gain deeper insight into the seasonal variations and diurnal patterns of cloud types in the Yangbajing area, Tibet, we compiled more detailed classification statistics on the 2020 annual daylight data. As depicted in Figure 9, stratus clouds occurred most frequently throughout the year, with 6622 occurrences accounting for 30% of the total. Clear sky and cumulus clouds took second and third place, appearing 5447 and 5365 times respectively, each comprising around 24%. Cirrus clouds occurred least, at 5001 times or 22%. This aligns with the climate characteristics of the Qinghai-Tibet Plateau. Stratus clouds primarily form from atmospheric water vapor condensation, facilitated by the high altitude and greater atmospheric thickness in Tibet. Cirrus clouds often develop at relatively lower altitudes and under more humid climatic conditions (Monier et al., 2006), while the dry climate of the Tibetan plateau is less conducive to their formation.

Analyzed by season, stratus clouds appeared most in spring (March-May), occurring 2678 times and making up 42.9% of all daytime cloud types in the season. Cumulus clouds occurred most frequently in summer (June-August), reaching 3838 times and 46.5% of the daytime total. Clear sky dominated in autumn (September-November), appearing 2249 times and accounting for 42.8%. Winter (December-February) was also dominated by clear sky, which occurred 2150 times, or 45.7% of the daytime total. These distinct seasonal shifts in cloud types match the climate patterns of Tibet: increased evaporation in spring facilitates thick cloud buildup; intense convection readily forms cumulus clouds in summer (Chen et al., 2012), consistent with the greater summer precipitation; and the relatively dry, less snowy winters see more clear sky days.

The diurnal analysis shows that stratus and cumulus clouds concentrate in the afternoon hours, peaking at 17:00 and 18:00 for stratus (761 and 756 times respectively) and at 13:00, 14:00 and 15:00 for cumulus (665, 707 and 684 times), potentially related to convective activity strengthened by afternoon surface heating. Clear sky occurrences are mainly distributed in the morning at 9:00, 10:00 and 11:00 (740, 812 and 743 times). Cirrus clouds are distributed more evenly throughout the day. Dividing the daylight hours from 7:00 to 20:00 into seven periods, we determine the peak timing of the different cloud types: (1) stratus clouds peak at 17-18:00, with 39.1% of the total occurrences; (2) cumulus clouds peak at 13-14:00, with 34.2%; (3) clear sky peaks at 9-10:00, with 40.6%; (4) cirrus clouds peak at 17-18:00, with 24.9%. The common afternoon emergence of cumulus clouds may relate to intensified convective motion caused by daytime solar heating of the surface. Clear skies are more common in the morning because the early air is more stable and has a lower water vapor content than at other times of the day. Generally speaking, the development of cirrus clouds requires relatively humid conditions. Late afternoon solar radiation warms the surface and causes the air to rise, which aids the vertical movement that carries water vapor to higher altitudes, where it condenses as cirrus clouds.
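The annual shares quoted above follow directly from the reported occurrence counts. The short check below recomputes them; only the four annual counts are taken from the text, and the variable names are illustrative.

```python
# Annual daytime occurrence counts for 2020 as reported in the text.
counts = {"stratus": 6622, "clear sky": 5447, "cumulus": 5365, "cirrus": 5001}

total = sum(counts.values())
shares = {name: round(100.0 * n / total, 1) for name, n in counts.items()}

print(total)   # 22435
print(shares)  # {'stratus': 29.5, 'clear sky': 24.3, 'cumulus': 23.9, 'cirrus': 22.3}
```

Rounded to whole percentages, these values give the 30%, 24%, 24% and 22% stated for stratus, clear sky, cumulus and cirrus respectively.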

5. Discussion

Cloud detection and identification have long been a research focus and challenge in meteorology and remote sensing. Current mainstream ground-based cloud detection methods fall into two categories: traditional image processing approaches and deep learning-based techniques (Hensel et al., 2021). Traditional methods such as threshold segmentation and texture analysis rely on manually extracted features and adapt poorly to atypical cases, whereas deep learning can learn features automatically and achieve superior performance. This study belongs to the latter category, using the YOLOv8 model for cloud categorization to capitalize on the visual feature extraction strengths of deep learning. Compared with other deep learning-based cloud detection studies, the innovations of this research are three-fold: 1) An adaptive segmentation strategy tailored to different cloud types was designed, with segmentation parameters set according to cloud morphology to extract representative traits, improving partitioning accuracy. 2) Adaptive image enhancement algorithms were introduced, which markedly improved detection over conventional NRBR segmentation in regions strongly affected by illumination, such as the vicinity of the sun. 3) Multi-level refinement was adopted to better capture cloud edges and bottoms. Together, these aspects improve adaptivity to various cloud types under complex illumination. Limitations of this study include: 1) a small dataset containing only samples from the Yangbajing area, owing to geographic and instrumentation constraints; 2) model training and tuning that demand substantial computational resources; 3) room for further improvement in adaptability to overexposed regions. Future work may address these deficiencies through larger samples, cloud computing resources, and more powerful models.

Although the YOLOv8 model outperforms the BoMS method for cloud type classification on the TCI dataset, we acknowledge that new image classification algorithms continue to emerge. In recent work (Gyasi and Swarnalatha, 2023), a streamlined convolutional neural network (CNN) built upon the MobileNet architecture reached an overall accuracy as high as 97.45% on analogous public datasets. Other cloud classification networks, such as CloudNet (Zhang et al., 2018), Transformer-based models (Li et al., 2022b), and the combined convolutional network (Zhu et al., 2022), have also demonstrated commendable classification performance. However, because this paper contains no direct empirical comparison between these algorithms and our YOLOv8 model, a quantitative comparison with them is not feasible here. Given the rapid pace of progress in deep learning, future research will include careful comparative analyses against these state-of-the-art methods. The aim is to rigorously validate and strengthen the robustness and generalization of our model under complex meteorological conditions, to keep refining the existing framework, and to provide climate science research with increasingly accurate and efficient solutions for cloud measurement.

Table 4 shows the comparison of our model with the most recent technical approaches in the literature.

Although only validated at the Yangbajing Comprehensive Atmospheric Observatory in Tibet, this approach exhibits considerable scalability and versatility. Firstly, the constructed end-to-end recognition framework has generalization capability: with appropriate fine-tuning, it can adapt to the cloud morphological traits of other regions. Secondly, the adaptive image enhancement strategy does not depend on specific lighting conditions and is therefore applicable to diverse environments. The finite sector segmentation with k-means clustering can likewise generalize to cloud quantification at other sites, and regions with more prevalent haze should benefit in particular. The modular design also allows individual components to be upgraded conveniently. Therefore, the proposed technique can be readily transferred within a cloud monitoring network to enable coordinated, high-precision, multi-regional recognition, providing a reference paradigm for cloud detection tasks under other challenging illumination conditions.

Exploring the relationship between cloud amount and solar radiation is an important aspect of our research, and previous work has provided valuable insights. To further understand the spatiotemporal impact of different cloud types on solar radiation, techniques such as XGBoost and deep neural networks with CNN-LSTM layers can be adopted to extract features from large volumes of satellite imagery and construct solar radiation models (Rocha and Santos, 2022). Efficient cloud detection is also integral to solar radiation research: with advanced cloud detection technologies, researchers can calculate cloud amount more accurately and thereby refine the modeling and forecasting of solar radiation. In addition, cloud detection in remote sensing images with convolutional neural networks (CNNs) has shown that different cloud types possess distinctive visual characteristics through which clouds and non-clouds can be effectively distinguished, providing an intuitive yet potent way to understand the impact of cloud amount on the solar radiation balance (Matsunobu et al., 2021). By leveraging these techniques, we can better grasp how different cloud types influence solar radiation and improve the prediction of meteorological changes and climate evolution. This is of great practical significance for establishing coupled models of cloud amount and radiation and for advancing meteorological and climatic research.

6. Conclusions

This research proposes a novel deep learning-based solution for cloud detection in whole sky images. It constructs a 4000-image multi-cloud dataset spanning the cirrus, clear sky, cumulus and stratus categories and achieves markedly improved recognition and quantification outcomes in Tibet’s Yangbajing area. Specifically, this study constructs an end-to-end cloud recognition framework. First, cloud types are determined using the YOLOv8 model with an average classification accuracy of more than 98% on the self-built dataset, and an average classification accuracy of more than 98% is also achieved on the public TCI dataset. On the basis of this classification, an adaptive segmentation strategy is designed for different cloud shapes, which significantly improves segmentation accuracy, especially for cirrus and cumulus clouds with fuzzy boundaries. Moreover, adaptive image enhancement algorithms are introduced to significantly improve detection in illumination-challenging areas around the sun. Finally, multi-level refinement modules based on finite sector techniques further improve the judgment of cloud edges and details. Analysis of the 2020 annual Yangbajing dataset shows that stratus clouds constitute the predominant type, appearing in 30% of daytime cloud images, which delivers valuable data support for regional climate studies. In conclusion, by integrating modules for classification, adaptive segmentation, and image enhancement, this framework significantly raises the automation level of ground-based cloud quantification and creates a strong technological foundation for research on climate change. It also offers a reference paradigm for other cloud recognition tasks under complex lighting environments.

Author Contributions

YW and JL: conceptualization, methodology, developed the model code, formal analysis, investigation, writing (original draft), writing (review and editing). YP and DS: conceptualization, resources, formal analysis, supervision. JZ, LW, WZ, XH, ZQ and DL: data curation, resources, methodology.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Acknowledgments

This research was funded by the Second Tibetan Plateau Scientific Expedition and Research Program of China, grant number 2019QZKK0604; and by the National Natural Science Foundation of China (42293321 and 42030708). We would like to express our sincere gratitude to the Yangbajing General Atmospheric Observatory of the Institute of Atmospheric Physics, Chinese Academy of Sciences, and the Chinese Academy of Meteorological Sciences for providing data for this study.

Conflicts of Interest

The authors declare that they have no conflict of interest.

References

- Alonso-Montesinos, J.: Real-Time Automatic Cloud Detection Using a Low-Cost Sky Camera, Remote Sens., 2020, 12, 1382.

- Chen, B., Xu, X. D., Yang, S., and Zhao, T. L.: Climatological perspectives of air transport from atmospheric boundary layer to tropopause layer over Asian monsoon regions during boreal summer inferred from Lagrangian approach, Atmos. Chem. Phys., 2012, 12, 5827-5839.

- Dinc, S., Russell, R., and Parra, L. A. C.: Cloud Region Segmentation from All Sky Images using Double K-Means Clustering, 2022 IEEE International Symposium on Multimedia (ISM), Italy, 5-7 Dec. 2022.

- Feng, C. J., Zhang, X. T., Wei, Y., Zhang, W. Y., Hou, N., Xu, J. W., Yang, S. Y., Xie, X. H., and Jiang, B.: Estimation of Long-Term Surface Downward Longwave Radiation over the Global Land from 2000 to 2018, Remote Sens., 2021, 13, 1848.

- Gyasi, E. K. and Swarnalatha, P.: Cloud-MobiNet: An Abridged Mobile-Net Convolutional Neural Network Model for Ground-Based Cloud Classification, Atmosphere, 2023, 14.

- He, L. L., Ouyang, D. T., Wang, M., Bai, H. T., Yang, Q. L., Liu, Y. Q., and Jiang, Y.: A Method of Identifying Thunderstorm Clouds in Satellite Cloud Image Based on Clustering, CMC-Comput. Mater. Continua, 2018, 57, 549-570.

- Hensel, S., Marinov, M. B., Koch, M., and Arnaudov, D.: Evaluation of Deep Learning-Based Neural Network Methods for Cloud Detection and Segmentation, Energies, 2021, 14, 6156.

- Hutchison, K. D., Iisager, B. D., Dipu, S., Jiang, X. Y., Quaas, J., and Markwardt, R.: A Methodology for Verifying Cloud Forecasts with VIIRS Imagery and Derived Cloud Products-A WRF Case Study, Atmosphere, 2019, 10, 521.

- Irbah, A., Delanoe, J., van Zadelhoff, G. J., Donovan, D. P., Kollias, P., Treserras, B. P., Mason, S., Hogan, R. J., and Tatarevic, A.: The classification of atmospheric hydrometeors and aerosols from the EarthCARE radar and lidar: the A-TC, C-TC and AC-TC products, Atmos. Meas. Tech., 2023, 16, 2795-2820.

- Jafariserajehlou, S., Mei, L. L., Vountas, M., Rozanov, V., Burrows, J. P., and Hollmann, R.: A cloud identification algorithm over the Arctic for use with AATSR-SLSTR measurements, Atmos. Meas. Tech., 2019, 12, 1059-1076.

- Kaiming, H., Jian, S., and Xiaoou, T.: Single image haze removal using dark channel prior, 2009 IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, 20-25 June 2009.

- Krauz, L., Janout, P., Blazek, M., and Páta, P.: Assessing Cloud Segmentation in the Chromacity Diagram of All-Sky Images, Remote Sens., 2020, 12, 1902.

- Krüger, O., Marks, R., and Grassl, H.: Influence of pollution on cloud reflectance, J. Geophys. Res.: Atmos., 2004, 109, D24210.

- Li, P., Zheng, J. S., Li, P. Y., Long, H. W., Li, M., and Gao, L. H.: Tomato Maturity Detection and Counting Model Based on MHSA-YOLOv8, Sensors, 2023, 23.

- Li, Q., Zhang, Z., Lu, W., Yang, J., Ma, Y., and Yao, W.: From pixels to patches: a cloud classification method based on a bag of micro-structures, Atmos. Meas. Tech., 2016, 9, 753-764.

- Li, W. W., Zhang, F., Lin, H., Chen, X. R., Li, J., and Han, W.: Cloud Detection and Classification Algorithms for Himawari-8 Imager Measurements Based on Deep Learning, IEEE Trans. Geosci. Remote Sens., 2022a, 60, 4107117.

- Li, X., Qiu, B., Cao, G., Wu, C., and Zhang, L.: A Novel Method for Ground-Based Cloud Image Classification Using Transformer, Remote Sens., 2022b, 14.

- Li, Z. W., Shen, H. F., Li, H. F., Xia, G. S., Gamba, P., and Zhang, L. P.: Multi-feature combined cloud and cloud shadow detection in GaoFen-1 wide field of view imagery, Remote Sens. Environ., 2017, 191, 342-358.

- Ma, N., Sun, L., Zhou, C. H., and He, Y. W.: Cloud Detection Algorithm for Multi-Satellite Remote Sensing Imagery Based on a Spectral Library and 1D Convolutional Neural Network, Remote Sens., 2021, 13, 3319.

- Matsunobu, L. M., Pedro, H. T. C., and Coimbra, C. F. M.: Cloud detection using convolutional neural networks on remote sensing images, Sol. Energy, 2021, 230, 1020-1032.

- Monier, M., Wobrock, W., Gayet, J. F., and Flossmann, A.: Development of a detailed microphysics cirrus model tracking aerosol particles' histories for interpretation of the recent INCA campaign, J. Atmos. Sci., 2006, 63, 504-525.

- Nakajima, T. Y., Tsuchiya, T., Ishida, H., Matsui, T. N., and Shimoda, H.: Cloud detection performance of spaceborne visible-to-infrared multispectral imagers, Appl. Opt., 2011, 50, 2601-2616.

- Raghuraman, S. P., Paynter, D., and Ramaswamy, V.: Quantifying the Drivers of the Clear Sky Greenhouse Effect, 2000-2016, J. Geophys. Res.: Atmos., 2019, 124, 11354-11371.

- Riihimaki, L. D., Li, X. Y., Hou, Z. S., and Berg, L. K.: Improving prediction of surface solar irradiance variability by integrating observed cloud characteristics and machine learning, Sol. Energy, 2021, 225, 275-285.

- Rocha, P. A. C. and Santos, V. O.: Global horizontal and direct normal solar irradiance modeling by the machine learning methods XGBoost and deep neural networks with CNN-LSTM layers: a case study using the GOES-16 satellite imagery, Int. J. Energy Environ, 2022, 13, 1271-1286.

- Rumi, E., Kerr, D., Sandford, A., Coupland, J., and Brettle, M.: Field trial of an automated ground-based infrared cloud classification system, Meteorol. Appl., 2015, 22, 779-788.

- van de Poll, H. M., Grubb, H., and Astin, I.: Sampling uncertainty properties of cloud fraction estimates from random transect observations, J. Geophys. Res.: Atmos., 2006, 111, D22218.

- Voigt, A., Albern, N., Ceppi, P., Grise, K., Li, Y., and Medeiros, B.: Clouds, radiation, and atmospheric circulation in the present-day climate and under climate change, Wiley Interdiscip. Rev. Clim. Change, 2021, 12, e694.

- Wang, G., Chen, Y. F., An, P., Hong, H. Y., Hu, J. H., and Huang, T. E.: UAV-YOLOv8: A Small-Object-Detection Model Based on Improved YOLOv8 for UAV Aerial Photography Scenarios, Sensors, 2023, 23, 7190.

- Werner, F., Siebert, H., Pilewskie, P., Schmeissner, T., Shaw, R. A., and Wendisch, M.: New airborne retrieval approach for trade wind cumulus properties under overlying cirrus, J. Geophys. Res.: Atmos., 2013, 118, 3634-3649.

- Wu, L. X., Chen, T. L., Ciren, N., Wang, D., Meng, H. M., Li, M., Zhao, W., Luo, J. X., Hu, X. R., Jia, S. J., Liao, L., Pan, Y. B., and Wang, Y. A.: Development of a Machine Learning Forecast Model for Global Horizontal Irradiation Adapted to Tibet Based on Visible All-Sky Imaging, Remote Sens., 2023, 15, 2340.

- Wu, Z. P., Liu, S., Zhao, D. L., Yang, L., Xu, Z. X., Yang, Z. P., Liu, D. T., Liu, T., Ding, Y., Zhou, W., He, H., Huang, M. Y., Li, R. J., and Ding, D. P.: Optimized Intelligent Algorithm for Classifying Cloud Particles Recorded by a Cloud Particle Imager, J. Atmos. Oceanic Technol., 2021, 38, 1377-1393.

- Xiao, B. J., Nguyen, M., and Yan, W. Q.: Fruit ripeness identification using YOLOv8 model, Multimed. Tools Appl., 2023.

- Yang, Y. K., Di Girolamo, L., and Mazzoni, D.: Selection of the automated thresholding algorithm for the Multi-angle Imaging SpectroRadiometer Radiometric Camera-by-Camera Cloud Mask over land, Remote Sens. Environ., 2007, 107, 159-171.

- Yu, C. H., Yuan, Y., Miao, M. J., and Zhu, M. L.: Cloud Detection Method Based on Feature Extraction in Remote Sensing Images, 8th International Symposium on Spatial Data Quality, China, Hong Kong, 30 May–1 June 2013.

- Yu, J. C., Li, Y. C., Zheng, X. X., Zhong, Y. F., and He, P.: An Effective Cloud Detection Method for Gaofen-5 Images via Deep Learning, Remote Sens., 2020, 12, 2106.

- Zhang, J., Liu, P., Zhang, F., and Song, Q.: CloudNet: Ground-Based Cloud Classification With Deep Convolutional Neural Network, Geophys. Res. Lett., 2018, 45, 8665-8672.

- Zhu, W., Chen, T., Hou, B., Bian, C., Yu, A., Chen, L., Tang, M., and Zhu, Y.: Classification of Ground-Based Cloud Images by Improved Combined Convolutional Network, Applied Sciences, 2022, 12.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).