2. Methods

In order to predict the positional paths of two point masses in a double pendulum system, we need a classical mechanics framework represented by the Euler-Lagrange equation. This is because the Euler-Lagrange equation provides a systematic approach to deriving the equations of motion for complex systems like the double pendulum, taking into account the constraints and forces involved. On the other hand, using Newtonian mechanics alone for the double pendulum can be challenging due to the system’s nonlinear nature and the presence of constraints such as the lengths of the pendulum arms. While it’s possible to analyze simpler pendulum systems using Newton’s laws directly, the double pendulum’s motion involves coupled, nonlinear differential equations that are more conveniently handled using the Euler-Lagrange formalism [

14].

The Euler-Lagrange equation is essential in classical mechanics and field theory, describing the motion of particles or fields by minimizing a functional known as the action. To derive this equation from scratch, we started with the action

S, defined as the integral of the Lagrangian

over time:

Here,

q represents the generalized coordinates,

is the derivative of

q with respect to time, and

t is time. The Lagrangian

has a simple and concise definition:

The kinetic energy (T) and potential energy (V) together make up the classical Lagrangian, which is just the difference between these energies in the system. This applies to classical mechanics with conservative systems, where the total energy is the sum of kinetic and potential energies. Our next step was to find the path that keeps the action constant, even if the path changes a little. This basic idea is called the principle of least action.

To find this stationary path, we used the calculus of variations. Let

be a small variation in the path

, such that

becomes

. The variation in the action is then:

We expanded

in a Taylor series around

q and

:

Substituting the expression back into equation

3 we would consider terms up to second order or higher in

and

in the variation of the action

S,

:

Integrating the first term by parts with respect to

t and assuming that variations

and

vanish (boundary conditions), we got:

For the action to be stationary,

must be zero for all possible variations

. This leads to the Euler-Lagrange equation:

This equation governs the dynamics of the system and provides the equations of motion for the generalized coordinates

.

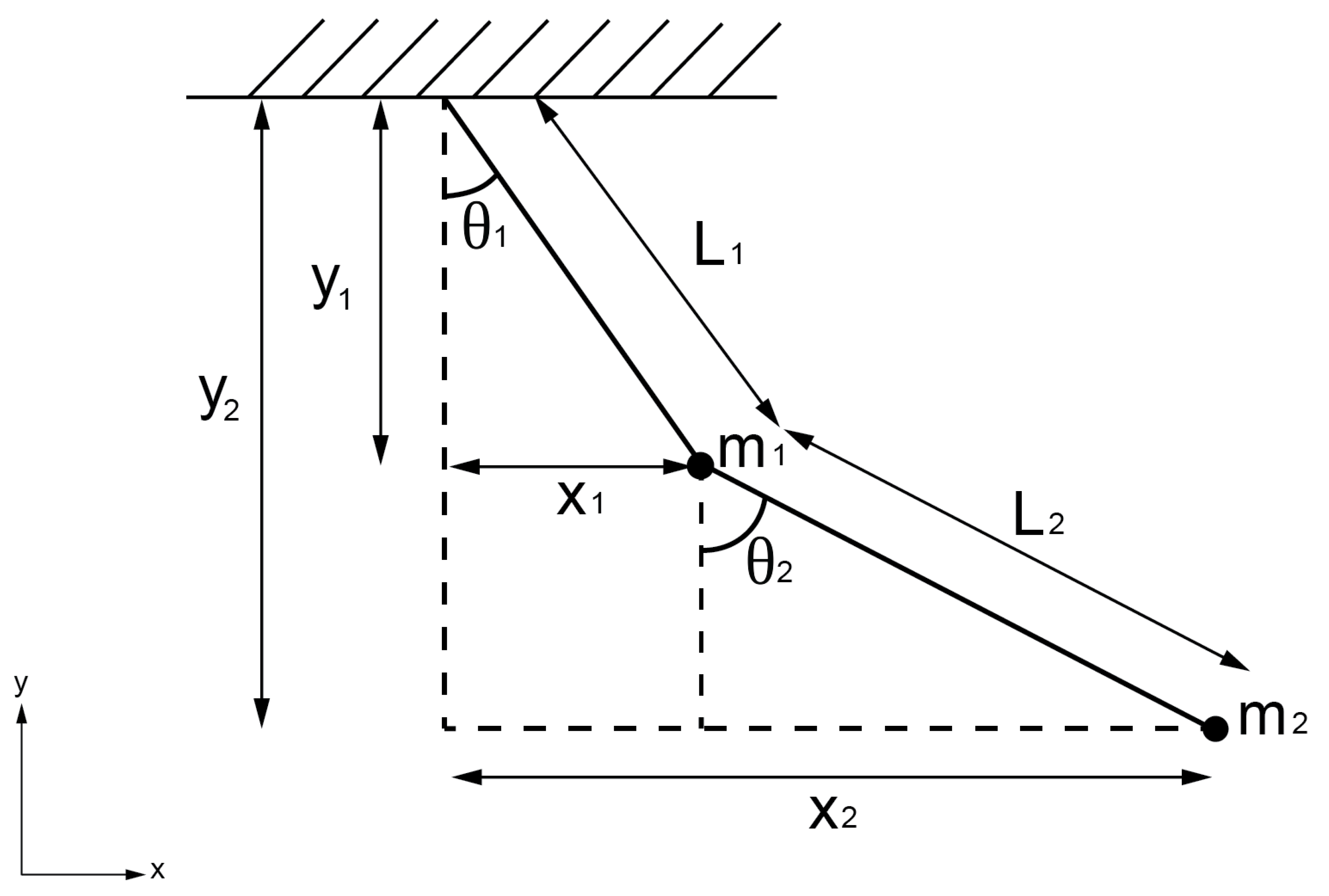

To apply the Euler-Lagrange equation to the double pendulum system, we must first establish its Lagrangian, which encapsulates both the system’s kinetic and potential energies. This process started by delineating the geometric relationships governing the vertical and horizontal positions within the double pendulum system, as illustrated in

Figure 1. These relationships were formalized through the following equations, denoted as equations

8 and

9:

Here,

and

represent the horizontal positions, while

and

represent the vertical positions. These positions are determined by the lengths of the pendulum arms (

and

) and the angles (

and

).

Since the positions

x and

y are functions of the angles

and

, respectively, obtaining their first derivatives requires applying the chain rule. This application results in the following expressions:

These derivatives

and

represent the rates of change of the horizontal and vertical positions with respect to time, taking into account the angular velocities

and

.

Starting from the general formula for kinetic energy

(where

m is the total of two-point masses of

and

v is velocity), we substituted the velocities

and

from the systems of equations

10 and

11 into equation

12. This substitution yields the expanded form:

Expanding and simplifying each term step by step, we obtained expressions for the kinetic energy components. These components involve terms related to the masses

and

, the lengths

and

of the pendulum arms, and the angular velocities

and

. After combining and simplifying the terms, we arrived at the final form of the kinetic energy:

This expression captures the kinetic energy

T of the double pendulum system comprehensively, incorporating the masses

and

, the lengths

and

of the pendulum arms, and the angular velocities

and

in a way that reflects the system’s dynamics and interactions.

Beginning with equation

14, which defines potential energy as a function of the vertical positions

and

, and the gravitational constant

g, we can express it as:

Substituting the expressions for

and

from the system of equations

9 into the above equation yields:

We then simplified this expression to:

Finally, to express potential energy

V solely in terms of the angles

and

, we derived:

Here,

g represents the gravitational acceleration, and the potential energy

V is derived from the vertical positions of the pendulum components and their respective masses, articulated in terms of the angles

and

.

After deriving the expressions for kinetic energy

T and potential energy

V, we can now formulate the Lagrangian

for the double pendulum system. The Lagrangian is defined in the definition

2. Substituting the expressions we derived for

T and

V into this equation, we obtained:

This expression for the Lagrangian encapsulates the dynamic behavior of the double pendulum system. It incorporates the masses and , the lengths and of the pendulum arms, the angular velocities and , and the gravitational constant g, as well as the angles and that describe the positions of the pendulum components. The Lagrangian serves as a fundamental quantity in the analysis of the system’s motion and dynamics, providing a comprehensive representation of its energy and interactions.

Starting with the Lagrangian

derived earlier (equation

18), we applied the Euler-Lagrange equation (equation

7) to derive the equations of motion for a system with two point masses (the double pendulum in this case). The Euler-Lagrange equation for a variable

is given by:

Applying this equation to the variables

and

separately, we obtained two set of equations:

For

, we first calculated the partial derivative of

with respect to

:

Then, we took the derivative of this with respect to time

t:

Next, we calculated the partial derivative of

with respect to

:

Finally, substituting these derivatives into the Euler-Lagrange equation (equation

20) for

:

To derive the equation of motion for

using the Euler-Lagrange equation (equation

20), we started by calculating the partial derivative of the Lagrangian

with respect to the derivative of

, denoted as

. This yields:

Taking the time derivative of this expression gave us:

Next, we calculated the partial derivative of

with respect to

, denoted as

, which was given by:

Substituting the expressions for

and

into the Euler-Lagrange equation and simplifying this equation further results in the equation of motion for

, given as:

Combining the final forms of the Euler-Lagrange equations for

(equation

24) and

(equation

28) together form the complete system of equations of motion for the double pendulum system. They are coupled second-order ordinary differential equations (ODEs) because the acceleration terms

and

are dependent on each other due to the cosine and sine terms involving

and

. This coupling reflects the interdependence of the pendulum’s motions and positions, making the system dynamically rich and challenging to analyze without numerical or advanced analytical techniques.

For the numerical analysis, we substituted the following expressions

to simplify the system of equations for computational purposes. Substituting these into the given equations (equations

24 and

28), we obtained the following system:

This transformed system allows us to numerically solve for the angular frequencies and given initial conditions and system parameters.

To simplify the numerical analysis, we first rewrote the original system of equations in terms of the defined variables:

We then rewrote the system of equations in matrix form:

Finally, solving for

and

, we obtained:

These derived equations were expressed in terms of the defined variables, providing a more organized and manageable representation for the numerical experiments.

For the numerical simulation needs in this study, we used parameters consistent with the physical properties of the system. These include an acceleration due to gravity (g) of , the lengths of the pendulum arms and set to 2 meters and 1 meter respectively, and the masses of the pendulums ( and ) set at 1 kilogram and 2 kilograms respectively. The simulation time is chosen to be 10 seconds with 10,000 linearly time spacing, providing sufficient duration to observe the system’s behavior.

With these parameters established, we set the initial conditions for the simulation. The initial angles ( and ) are set to 3.14 radians (which is equivalent to ) for and 1.57 radians (equivalent to ) for . Additionally, the initial angular velocities ( and ) are initialized to 0 radians per second, representing a starting point where the pendulums are at rest. For the subsequent simulation, we changed the both angular velocities to radians per seconds fo the sensitivity test with the initial conditions.

We used the Runge-Kutta-Fehlberg (RKF) method for approximating the solution of our problem (system of equations

32). RKF is a powerful numerical integration technique used to solve systems of ODEs with high accuracy and computational efficiency [

13]. Its adaptability makes it particularly suitable for dynamic systems like the double pendulum, where the motion can be complex and highly nonlinear.

We started with the initial conditions and set the initial step size

h. Then, we proceeded to define the predictor step by using the 4th order Runge-Kutta formulas to predict the solution at the next time step. For a general ODE of the form

, the 4th order Runge-Kutta formulas are:

The predicted solution at

is then given by:

We used the 5th order Runge-Kutta formulas to compute a more accurate estimate of the solution at the next time step. The 5th order Runge-Kutta formulas are similar to the 4th order ones but include an additional evaluation point:

The corrected solution at

is then given by:

Then, we calculated the local truncation error by comparing the 4th and 5th order solutions. The error estimate

is given by:

We compared the error estimate to a predefined tolerance. If is within the tolerance, accept the step and update the solution. If exceeds the tolerance, reduce the step size h and we repeated the process until the error is acceptable. Finally, we continued integrating until reaching the desired end time or number of steps.

The adaptive step-size control implemented in the RKF method ensures accurate integration while minimizing computational costs. By dynamically adjusting the step size based on error estimation, the RKF method provides accurate numerical approximations of the system’s behavior over time, rendering it an effective tool for analyzing complex dynamic systems such as the double pendulum. However, in the present study, we did not employ the RKF method directly from the beginning. Instead, we utilized several platform-specific tools, including SciPy in Python [

15], deSolve in R [

16], DifferentialEquations.jl in Julia [

17], and the built-in function ode45 in GNU Octave [

18].

The motivation behind using multiple programming languages and their respective computing environments was twofold. First, it allowed us to leverage the strengths and unique features of each language and environment, enabling a comprehensive exploration of the double pendulum problem from diverse perspectives. Second, it facilitated a comparative analysis of the performance and accuracy of different numerical solvers across these platforms.

In Python, the SciPy library provided a robust and well-established collection of scientific computing tools, including numerical solvers for ODEs. The flexibility and ease of use of Python, combined with the power of SciPy, made it a suitable choice for implementing and testing numerical methods for the double pendulum problem [

19,

20]. However, the interpreted nature of Python may introduce performance overhead compared to compiled languages.

The R programming language, with its deSolve package, offered a specialized environment for solving ODEs and differential algebraic equations (DAEs) [

21]. R’s strong emphasis on statistical analysis and data visualization made it an attractive option for exploring the double pendulum problem, enabling efficient data analysis and visual representation of the results. Nonetheless, the high-level nature of R may lead to performance limitations for computationally intensive simulations.

Julia, a relatively new language designed for scientific computing, provided a high-performance computing environment through its DifferentialEquations.jl package. The combination of Julia’s dynamic programming capabilities and its efficient just-in-time (JIT) compilation made it a promising choice for solving the double pendulum problem with potentially improved computational performance [

22,

23]. However, the relatively young ecosystem of Julia may present challenges in terms of package maturity and community support.

Finally, GNU Octave, a high-level language primarily intended for numerical computations, offered the built-in ode45 function, which implements a versatile Runge-Kutta method for solving ODEs. GNU Octave’s compatibility with MATLAB

® syntax and its open-source nature made it an accessible option for researchers and students alike [

18]. However, its performance may be limited compared to lower-level languages or specialized numerical libraries. By employing these various computing environments, we aimed to evaluate the trade-offs between performance, accuracy, and ease of use for each approach.

When conducting scientific research involving computational simulations, it is crucial to ensure reproducibility and transparency in the methods used. In this particular study, we aim to benchmark the performance of four different computing environments: Python, R, Octave/MATLAB, and Julia, by running a script that simulates the motion of a double pendulum. The script was executed 1,000 times in each computing environment, and the runtime and memory usage for each run will be measured and recorded. The benchmarking was performed on a Fedora Linux 39 (Budgie) x86_64 system with a 20LB0021US ThinkPad P52s laptop equipped with an Intel i7-8550U (8) @4.000GHz CPU.

The rationale behind this benchmarking approach is multifaceted. Firstly, it promotes reproducibility by providing the scripts and the benchmarking script, allowing other researchers to easily replicate the computational experiments and verify the results. Additionally, it facilitates a direct comparison of the runtime and memory usage across different computing environments for the same task, providing valuable insights into the relative performance of each environment. This information can guide researchers in choosing the most suitable tool for their specific computational needs.

Furthermore, running the scripts 1,000 times in each environment helps to account for potential variability and ensures that the performance measurements are consistent and reliable. This is particularly important when dealing with computationally intensive simulations like the double pendulum simulation, where minor fluctuations in hardware or software configurations can impact the results. With a large number of runs, it becomes possible to perform statistical analysis on the collected data, such as calculating confidence intervals, identifying outliers, and determining the statistical significance of any observed performance differences.

Measuring memory usage alongside runtime can provide insights into the scalability and resource requirements of each computing environment. This information is crucial when working with large-scale simulations or data-intensive applications, where efficient memory management is essential. By including the benchmarking methodology and results in the scientific paper, researchers demonstrate transparency and allow others to critically evaluate the computational approaches used in the study [

24]. This aligns with the principles of open science and facilitates future replication and extension of the research.

After conducting the benchmarking process and collecting the runtime and memory usage data for each computing environment, we performed statistical analyses to determine if there were significant differences in performance among the platforms. Specifically, we employed the Kruskal-Wallis (K-W) test and Dunn’s test with Bonferroni adjustment, which are commonly used in various scientific fields for comparing multiple groups or treatments.

The K-W test is a non-parametric alternative to the one-way analysis of variance (ANOVA) and is used when the assumptions of normality and homogeneity of variances are violated [

25]. It is a rank-based test that evaluates whether the populations from which the samples were drawn have the same distribution. The test statistic for the K-W test was calculated as:

where

N is the total number of observations across all groups,

k is the number of groups,

is the sum of ranks for group

i, and

is the number of observations in group

i. The null hypothesis for the K-W test is that the populations have the same distribution, while the alternative hypothesis is that at least one population has a different distribution from the others.

If the K-W test indicates significant differences among the groups, post-hoc tests are typically performed to determine which specific groups differ from each other. In our case, we used Dunn’s test with Bonferroni adjustment, which is a multiple comparison procedure that adjusts the significance level to control the family-wise error rate (FWER) [

26]. The Dunn’s test statistic for comparing groups

i and

j was calculated as:

, where and are the average ranks for groups i and j, respectively, N is the total number of observations across all groups, and and are the number of observations in groups i and j, respectively. The Bonferroni adjustment was applied by dividing the desired significance level () by the number of pairwise comparisons made, resulting in an adjusted significance level of , where k is the number of groups.

The K-W test and Dunn’s test with Bonferroni adjustment are widely used in various scientific fields [

27,

28,

29]. These tests are particularly useful when dealing with non-normal data or when the assumptions of parametric tests (such as ANOVA) are violated. They provide a robust and reliable way to compare multiple groups or treatments, ensuring that any observed differences are statistically significant and not due to chance alone. By applying these statistical tests to our benchmarking data, we aimed to determine if there were significant differences in performance among the four computing environments (Python, R, GNU Octave, and Julia) for the double pendulum simulation task. These non-parametric procedures were implemented in Python using the SciPy stats module [

15] and the scikit-posthoc library [

30].

After conducting the benchmarking process to measure the runtime and memory usage of the double pendulum simulation across different computing environments, we further analyzed the obtained time series data to gain insights into the underlying dynamics of the system. One of the analysis techniques we employed was the calculation of Shannon entropy [

31], which is a measure derived from information theory that quantifies the amount of information or uncertainty present in a random variable or time series. The Shannon entropy was calculated using the following equation:

, where

is the Shannon entropy,

n is the number of unique values in the time series, and

is the probability of the occurrence of the value

in the time series. A higher Shannon entropy value indicates a higher degree of uncertainty or unpredictability in the time series, while a lower entropy value suggests a more predictable or regular pattern. This measure can provide valuable insights into the complexity and dynamics of the double pendulum system.

In our analysis, we calculated the Shannon entropy for each variable (e.g., , , , , , ) in the time series data obtained from the double pendulum simulation. To interpret the entropy scores, we established thresholds based on the following criteria: low entropy (predictable) for entropy scores , medium entropy (some predictability) for entropy scores between 0.5 and 1.0, and high entropy (unpredictable) for entropy scores . These thresholds are commonly used in various applications and provide a convenient way to interpret the degree of predictability or uncertainty present in the time series data. By calculating and analyzing the Shannon entropy of the time series data, we can gain insights into the predictability and complexity of the double pendulum system’s dynamics. A high entropy score for a particular variable suggests that the corresponding time series is highly unpredictable or complex, while a low entropy score indicates a more regular or predictable pattern.

The choice of Shannon entropy as an analysis technique was motivated by its strong theoretical foundation in information theory and its widespread use in various scientific fields for quantifying the complexity and uncertainty of dynamical systems. Furthermore, the interpretation of entropy scores based on predefined thresholds provides a convenient and standardized way to categorize the time series data into different levels of predictability or complexity.

After conducting the entropy measurement, we employed the Kolmogorov-Smirnov (K-S) test to assess the sensitivity of the system to initial conditions, which is a characteristic feature of chaotic systems. The K-S test is a non-parametric statistical test that compares the cumulative distribution functions (CDFs) of two samples to determine if they are drawn from the same underlying distribution [

32]. In the context of dynamical systems analysis, the K-S test can be used to compare the time series obtained from the original system with slightly perturbed initial conditions, allowing us to quantify the divergence between the trajectories. Let

and

be the empirical CDFs of the original time series and the perturbed time series, respectively. The K-S statistic is defined as the maximum absolute difference between these two CDFs:

, where

represents the supremum (least upper bound) of the set of absolute differences between the CDFs over all possible values of

x.

The null hypothesis for the K-S test is that the two samples are drawn from the same continuous distribution, while the alternative hypothesis is that they are drawn from different distributions. The null hypothesis is rejected if the K-S statistic exceeds a critical value that depends on the chosen significance level and the sample sizes n and m. In our analysis, we compared the original time series obtained from the double pendulum simulation with slightly perturbed time series, where the initial conditions for and were perturbed by 0.001 rad/s. By applying the K-S test to each pair of original and perturbed time series, we can assess whether the small perturbation in the initial conditions leads to a significant divergence in the trajectories over time. If the K-S test rejects the null hypothesis, indicating that the original and perturbed time series are drawn from different distributions, it suggests that the double pendulum system is sensitive to initial conditions, which is a hallmark of chaotic behavior. Conversely, if the test fails to reject the null hypothesis, it implies that the system is less sensitive to small perturbations in the initial conditions, suggesting a more predictable or regular dynamics.

The choice of the K-S test was motivated by its nonparametric nature, which means that it does not make assumptions about the underlying distribution of the data, making it suitable for analyzing complex dynamical systems where the distribution is often unknown or difficult to model parametrically. Additionally, the K-S test is widely used in various scientific fields for comparing distributions and detecting deviations from a hypothesized distribution [

33,

34,

35], further justifying its application in our analysis. We employed SciPy’s stats module [

15] to conduct an automated K-S test.

3. Results and Discussion

The K-W test, a non-parametric test for comparing multiple groups, yielded a test statistic of 3523.203 and a p-value of 0.000 for the runtime data, indicating that at least one group’s median runtime significantly differs from the others. Dunn’s post-hoc test, with Bonferroni adjustment for multiple comparisons, showed that all pairs of groups had p-values below 0.05, indicating significant differences in their median runtimes.

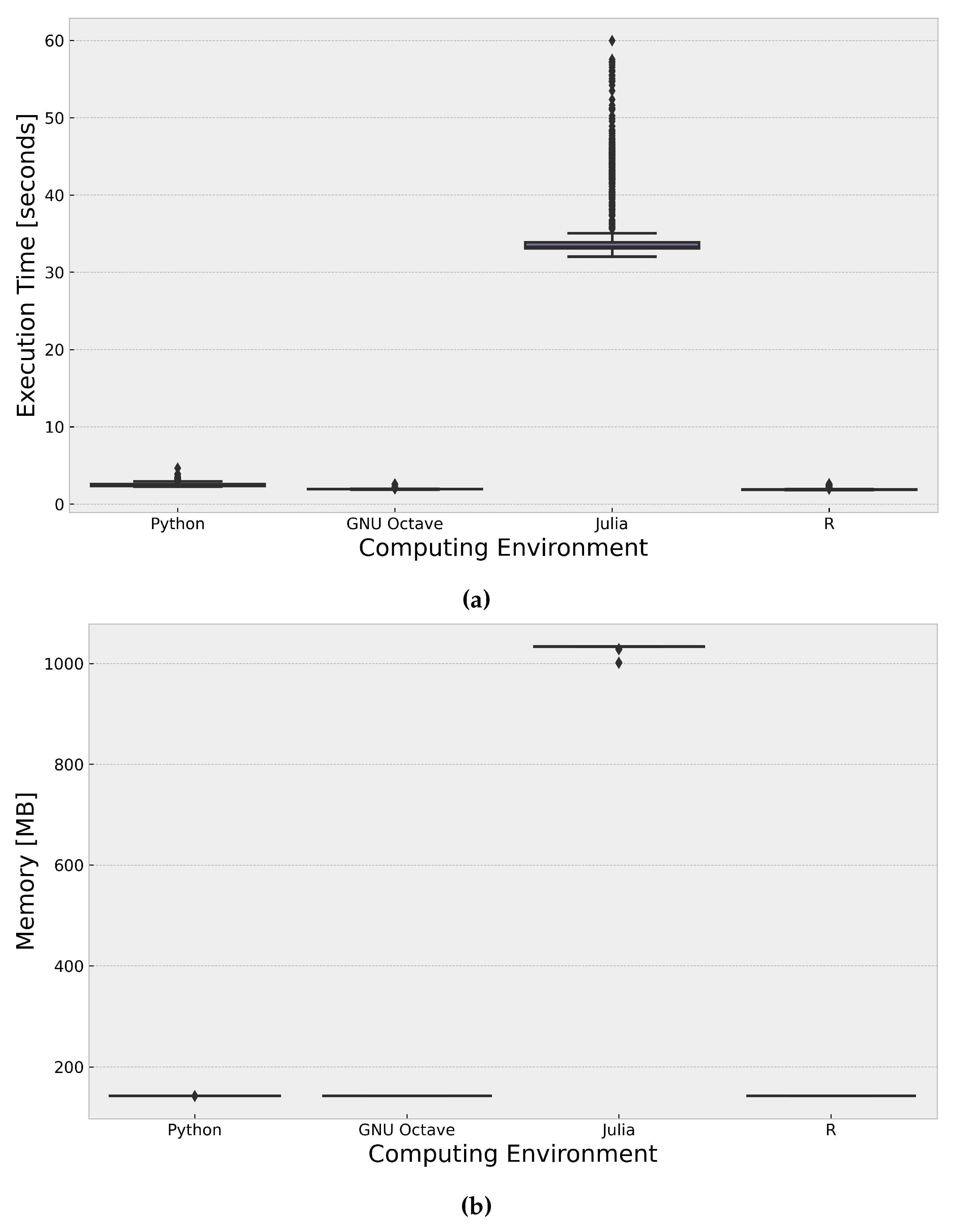

The results (

Figure 2) indicate that R and GNU Octave exhibited the fastest runtimes, with mean values of 1.914 seconds and 1.944 seconds, respectively. The fast performance of R can be attributed to the use of the deSolve package, which provides efficient solvers for ODEs. GNU Octave, on the other hand, likely leverages optimized numerical libraries or solvers for ODE systems. Python followed closely with a mean runtime of 2.503 seconds, benefiting from the SciPy and NumPy libraries, which provide efficient numerical computations and ODE solvers. Notably, Julia had the slowest runtime performance, with a mean of 35.701 seconds, which is significantly longer than the other environments. One potential factor contributing to Julia’s slower performance could be the overhead associated with its JIT compilation process. JIT compilation can introduce additional runtime overhead, particularly for computationally intensive tasks like solving ODEs, which may have impacted Julia’s overall runtime performance in this specific implementation.

The K-W test for memory usage data yielded a test statistic of 3375.563 and a p-value of 0.000, indicating that at least one group’s median memory usage significantly differs from the others. Dunn’s post-hoc test showed that the pairs of groups with p-values below 0.05, indicating significant differences in their median memory usage, were GNU Octave-Julia, GNU Octave-Python, Julia-Python, and Julia-R. The results (

Figure 2) show that Python, R, and GNU Octave exhibited similar memory usage patterns, with a mean of approximately 142.84 MB. Julia, on the other hand, had significantly higher memory usage, with a mean of 1031.703 MB, which is about seven times higher than the other environments. The differences in memory usage across the computing environments can be attributed to various factors, such as the memory management strategies of the respective programming languages and the specific implementation of the double pendulum simulation in each environment. However, it is worth noting that the pure Julia implementation using DifferentialEquations.jl may have different memory requirements or optimization strategies compared to the packages or libraries used in the other environments.

When selecting the appropriate computing environment for the double pendulum simulation, the statistical significance of the runtime and memory usage differences should be considered alongside other factors, such as existing codebase, familiarity with the language, and potential optimizations. If runtime performance is the primary concern, R and GNU Octave would be the recommended choices based on the provided results, with R leveraging the deSolve package and GNU Octave likely utilizing optimized numerical libraries or solvers for ODE systems. Python, with the SciPy and NumPy libraries, also exhibited a relatively good runtime performance and could be a viable option, especially if existing Python code or familiarity with the language is a consideration. If memory usage is a critical factor and the higher memory footprint of Julia is not a concern, Julia could be considered, potentially with further optimization efforts or alternative implementations. It is important to note that the pure Julia implementation using DifferentialEquations.jl may have different memory requirements or optimization strategies compared to the packages or libraries used in the other environments.

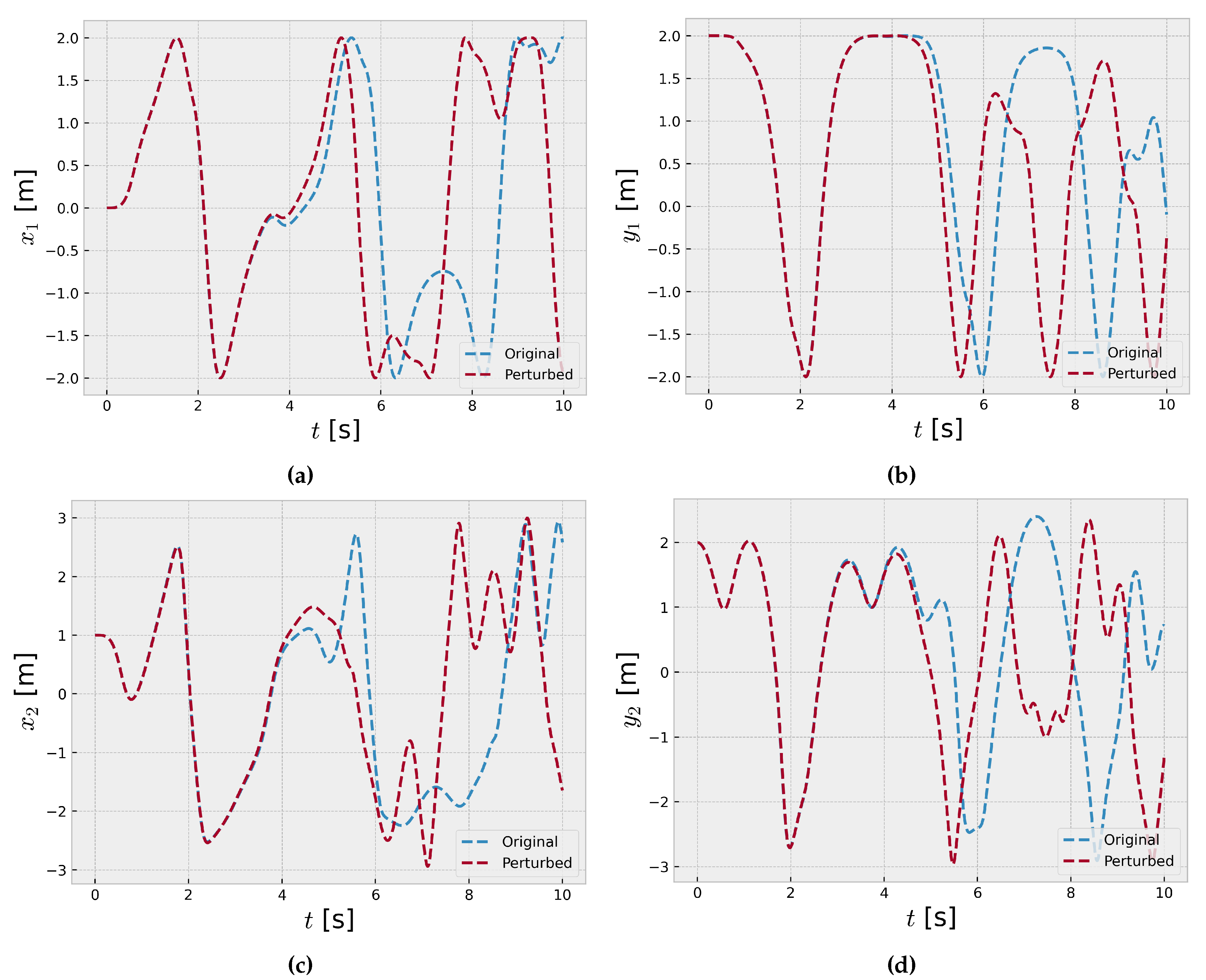

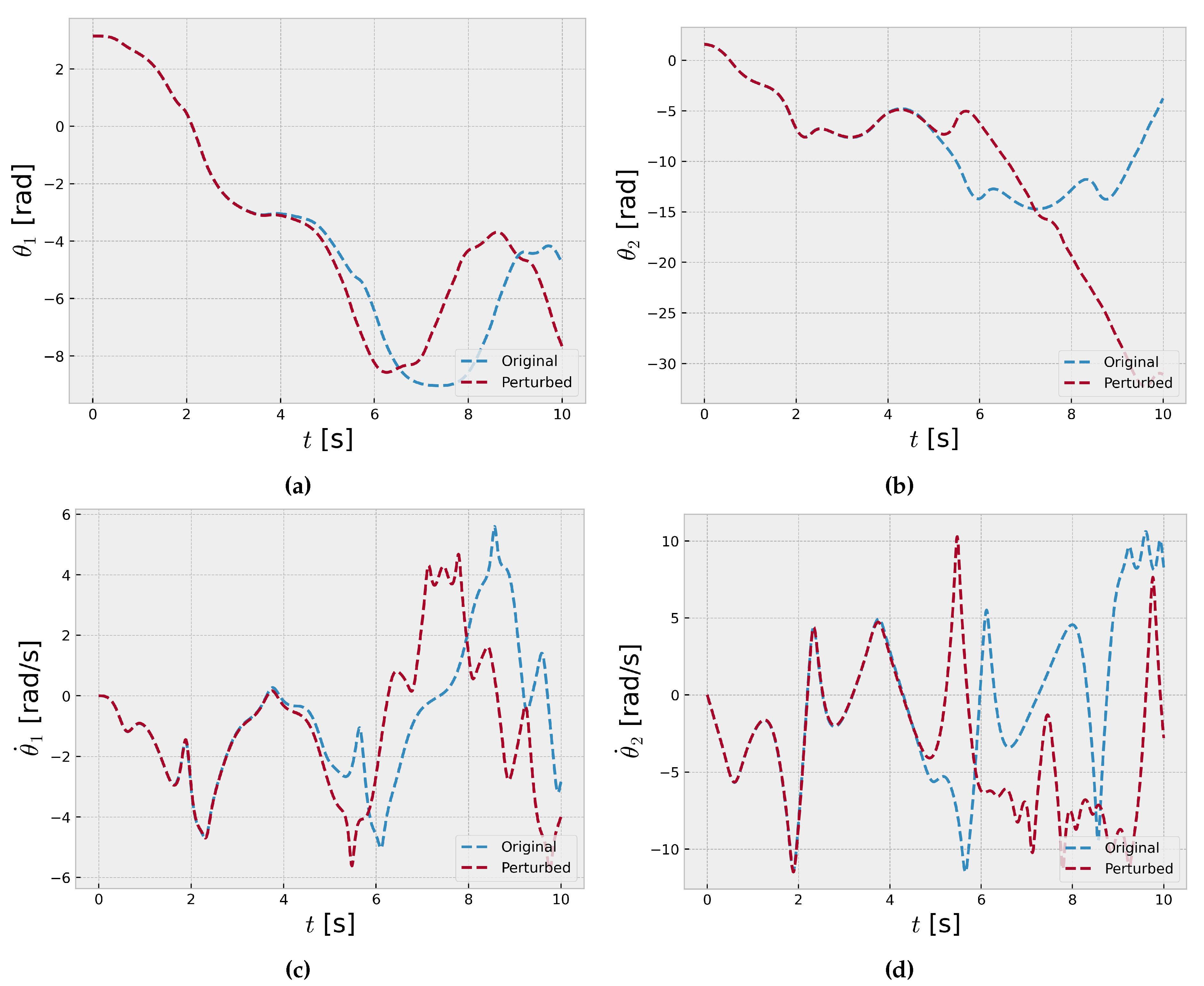

Figure 3 and

Figure 4 present time series plots of a double pendulum system with slightly different initial angular velocities for the inner and outer pendulum. Each subplot displays the time evolution of the angular positions of both pendulums over 10 seconds with 1000 time steps.

The sensitivity to initial conditions is evident as the trajectories diverge significantly, despite only a small difference of 0.001 rad/s in the initial angular velocities (

and

) of the inner and outer pendulums. This phenomenon is characteristic of chaotic systems, where minuscule changes in initial conditions can lead to vastly different long-term behaviors, making precise predictions challenging [

1]. The time series exhibit intricate patterns with recurring oscillations, indicative of the underlying nonlinear dynamics. However, the trajectories quickly diverge, showcasing the system’s sensitivity to initial conditions, a hallmark of chaos theory [

36]. The time series initially following similar paths but rapidly diverging due to the infinitesimal differences in the initial angular velocities, further demonstrating the sensitive dependence on initial conditions in chaotic systems.

These results highlight the importance of accurately measuring and accounting for initial conditions in chaotic systems, as even minute uncertainties can amplify over time, leading to substantial deviations in the system’s behavior. The double pendulum serves as a compelling example of the intricate dynamics and unpredictability that can arise in nonlinear systems due to their extreme sensitivity to initial conditions, a fundamental concept in chaos theory [

37].

To further quantify and statistically validate this divergence, we performed the K-S test on the time series data for various variables, including positions (x, y, ) and angular velocities , before and after the initial perturbation. The K-S test results revealed that for each of these variables, the distributions of the time series data before and after the perturbation were significantly different, with p-values less than 0.05, leading to the rejection of the null hypothesis. This statistically confirms that the slight change in the initial angular velocity has a profound impact on the system’s dynamics, causing the distributions of the position and velocity variables to diverge substantially.

This divergence can be attributed to the chaotic nature of the double pendulum system, where the nonlinear equations governing its motion exhibit extreme sensitivity to initial conditions. Even minuscule differences in the starting values can rapidly amplify over time, leading to vastly different trajectories in the phase space [

36]. The K-S test results corroborate this fundamental characteristic of chaos, demonstrating that the distributions of the system’s variables become statistically distinct due to the exponential divergence of initially close trajectories, a phenomenon known as the "butterfly effect" [

38].

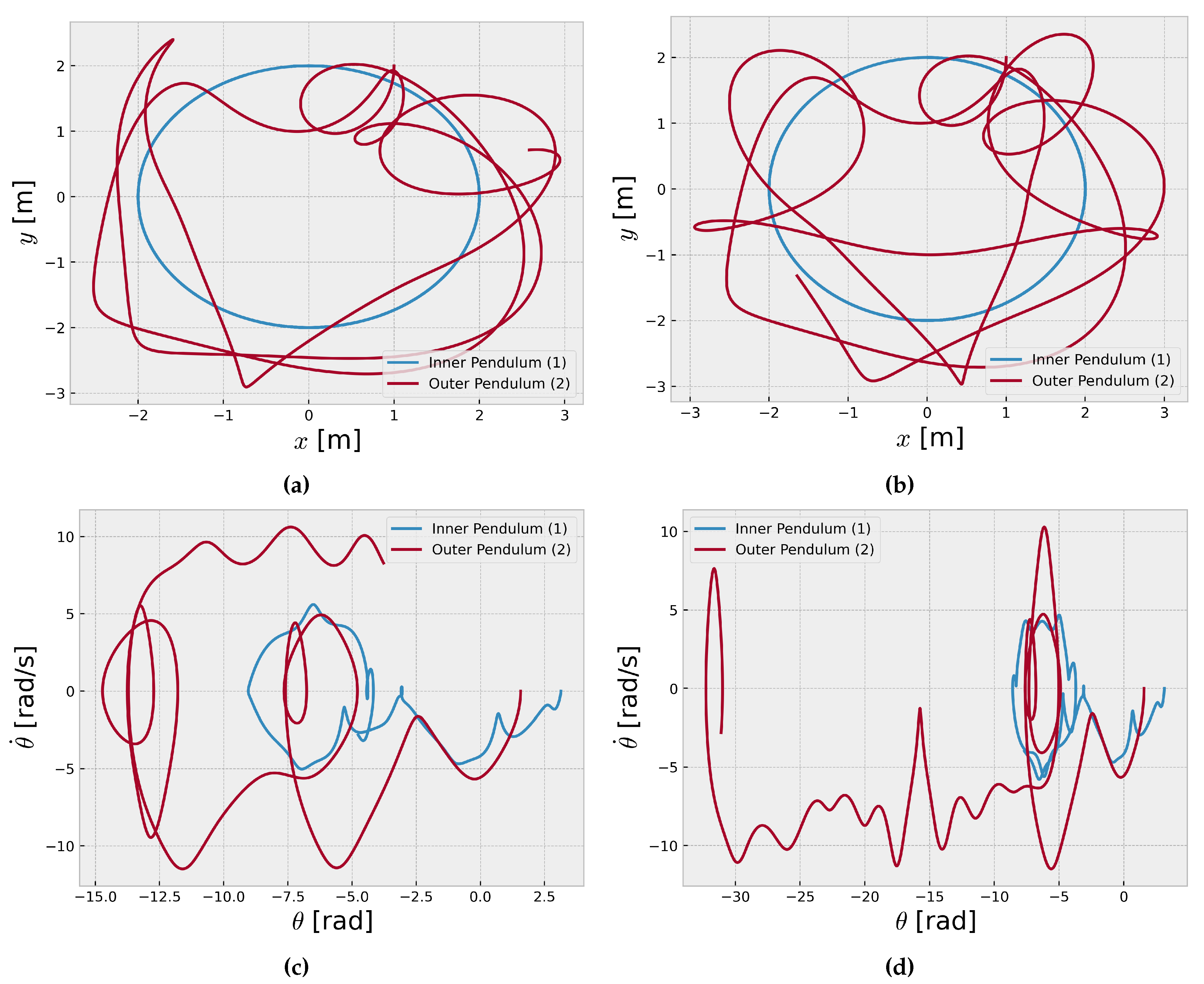

Figure 5 and

Figure 5 show the trajectories of the inner and outer pendulums in the

plane, providing a visualization of their motion over time. However,

Figure 5 and

Figure 5 represent the phase space diagrams of the coupled pendulum system. A phase space diagram is a powerful tool for studying dynamical systems, as it provides a geometric representation of the system’s state at any given time [

13]. In the case of the coupled pendulum system, the phase space diagrams plot the angular position

of each pendulum against its angular velocity

, capturing the evolution of the system’s state over time.

The complex patterns observed in

Figure 5 and

Figure 5 are indicative of the chaotic nature of the coupled pendulum system. The phase space trajectories exhibit intricate, aperiodic behavior, never repeating the same pattern or revisiting the same state. The presence of chaos in the coupled pendulum system has significant consequences for its predictability and controllability [

36]. Even with precise knowledge of the initial conditions and governing equations, the system’s behavior becomes increasingly difficult to predict over long time scales due to the exponential divergence of nearby trajectories in the phase space.

Furthermore, chaotic systems often exhibit strange attractors in the phase space, which are geometrically complex structures that the system’s trajectories are confined. The presence of strange attractors in the phase space diagrams of the coupled pendulum system suggests that the system’s dynamics are governed by an underlying deterministic process, despite the apparent randomness and unpredictability of its behavior [

1].

The high Shannon entropy values (greater than 1.0) mentioned for the time series data of the coupled pendulum system are consistent with the observed chaotic dynamics. For chaotic systems, the Shannon entropy is expected to be high due to the inherent unpredictability and lack of regularity in the system’s behavior [

39].