Submitted: 22 January 2026

Posted: 23 January 2026

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Attention Mechanisms in Few-Shot Learning

2.2. Prototype-Based Learning Approaches

2.3. Multi-Scale Attention Mechanisms

3. Methodology

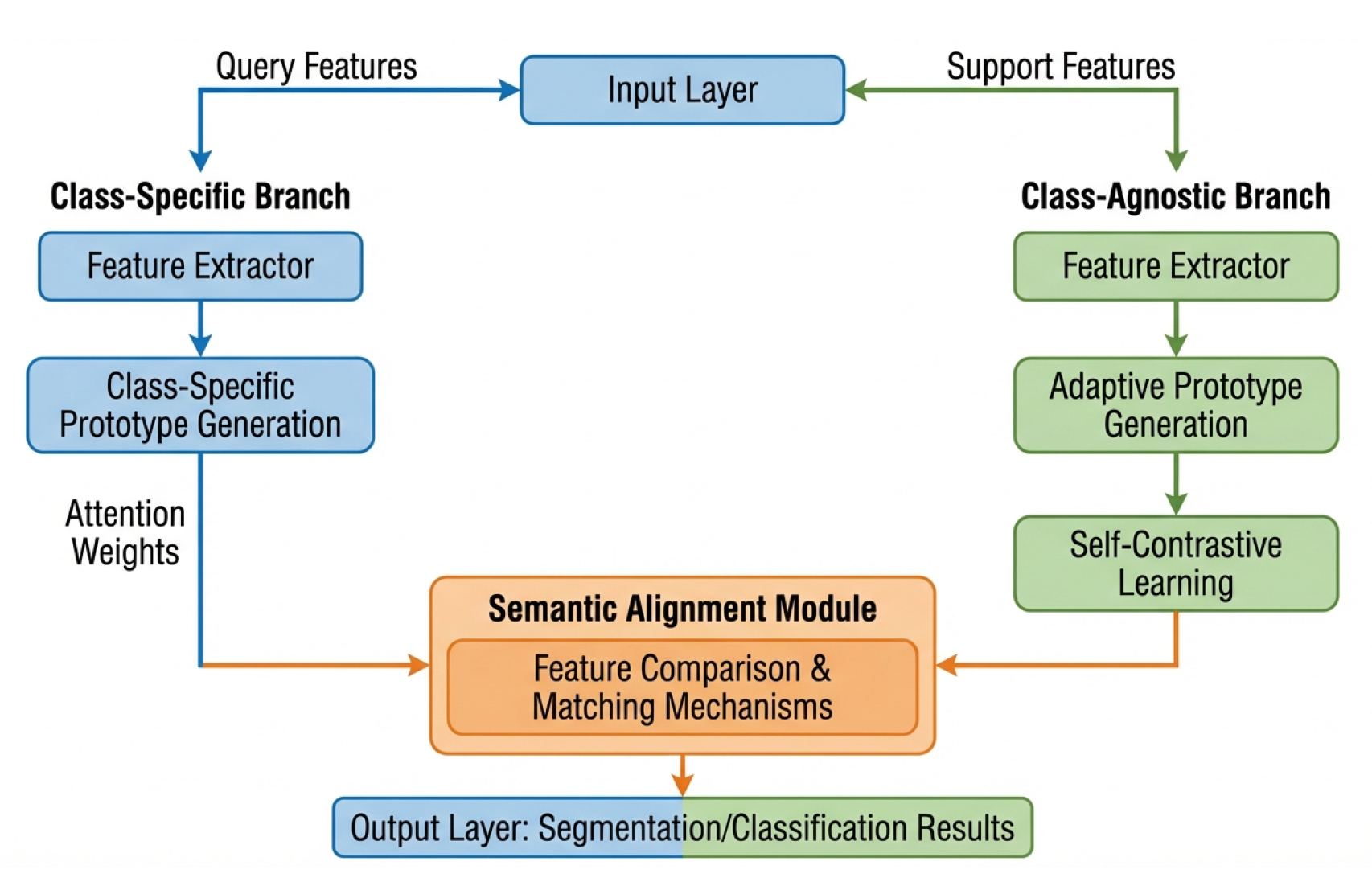

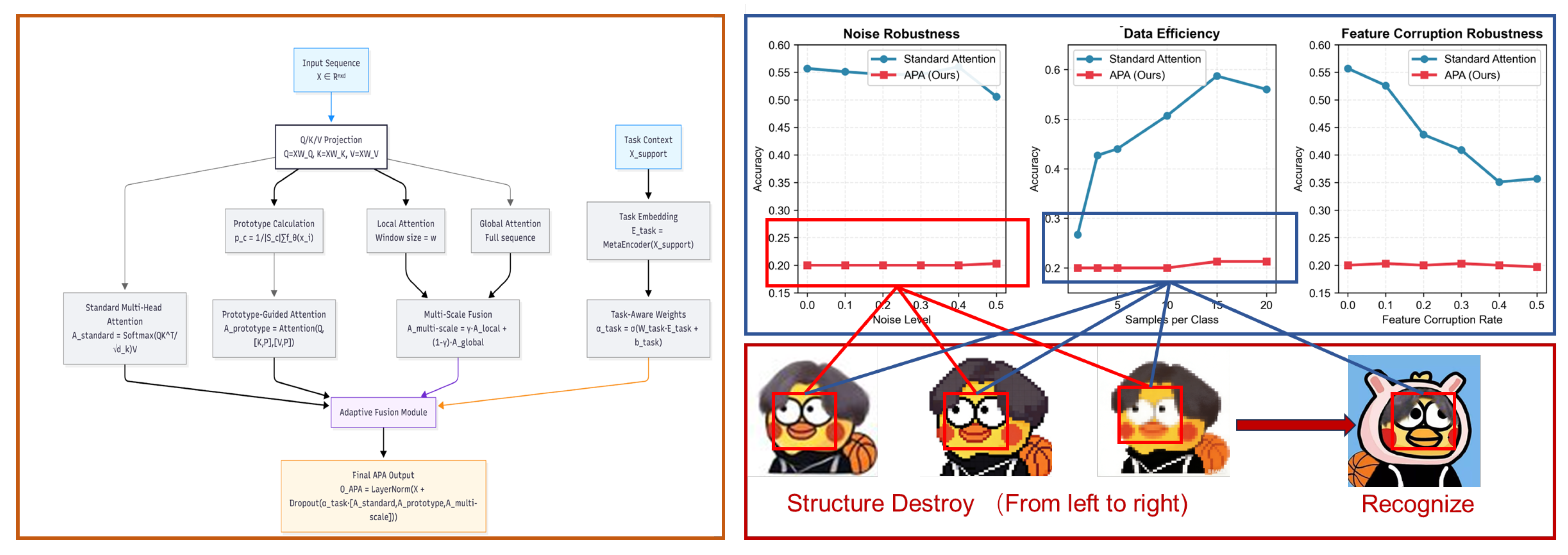

3.1. Adaptive Prototype Attention Architecture

3.2. Mathematical Formulation

3.3. Computational Complexity Analysis

Algorithm 1 Adaptive Prototype Attention (APA) Mechanism

Algorithm Description

1. ReshapeForMultiHead(): Reshape the input into the format required by multi-head attention.
2. LocalAttention(): Compute attention only within a sliding window of size w around each query position.
3. MetaEncoder(): Task encoder consisting of two fully connected layers with ReLU activation; it outputs a d-dimensional task embedding.
4. ReshapeToOriginal(A): Reshape the multi-head attention output A back to the original dimension for feature fusion.
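The helper routines described above can be illustrated with a minimal pure-Python sketch. It is not the authors' implementation: the function names, the nested-list tensor layout, the scaled dot-product scoring, the reading of w as a total window width centred on the query (so that |i - j| <= w // 2), and the placement of ReLU between the two fully connected layers are all assumptions made here for illustration.

```python
import math


def reshape_for_multi_head(x, num_heads):
    """Split an [n, d] matrix into [num_heads, n, d // num_heads] (assumed layout)."""
    d = len(x[0])
    if d % num_heads != 0:
        raise ValueError("feature dimension must be divisible by num_heads")
    dh = d // num_heads
    return [[row[h * dh:(h + 1) * dh] for row in x] for h in range(num_heads)]


def reshape_to_original(heads):
    """Concatenate [num_heads, n, dh] back into [n, num_heads * dh]."""
    n = len(heads[0])
    return [[value for head in heads for value in head[i]] for i in range(n)]


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def local_attention(q, k, v, w):
    """Single-head attention limited to keys with |i - j| <= w // 2.

    Assumption: w is the total window width centred on each query position,
    and scores are scaled dot products.
    """
    n, dh = len(q), len(q[0])
    half = w // 2
    out = []
    for i in range(n):
        window = range(max(0, i - half), min(n, i + half + 1))
        scores = [
            sum(a * b for a, b in zip(q[i], k[j])) / math.sqrt(dh)
            for j in window
        ]
        weights = softmax(scores)
        out.append([
            sum(wt * v[j][c] for wt, j in zip(weights, window))
            for c in range(dh)
        ])
    return out


def linear(x, weight, bias):
    """Affine map y = W x + b for one vector; weight has shape [out, in]."""
    return [
        sum(wi * xi for wi, xi in zip(row, x)) + b
        for row, b in zip(weight, bias)
    ]


def meta_encoder(task_features, w1, b1, w2, b2):
    """Two fully connected layers with ReLU in between (assumed placement)."""
    hidden = [max(0.0, h) for h in linear(task_features, w1, b1)]
    return linear(hidden, w2, b2)
```

In this sketch each head is attended independently, for example `[local_attention(h, h, h, w) for h in reshape_for_multi_head(x, num_heads)]`, and the result is passed to `reshape_to_original` before fusion. Restricting each query to its window reduces the number of scored pairs from n² to roughly n·w per head.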

Algorithm 1: Adaptive Prototype Attention Forward Propagation

4. Experiments

4.1. Experimental Setup

4.2. Datasets and Baselines

| Method | 5-way 1-shot | 5-way 5-shot | 10-way 1-shot | 10-way 5-shot |
|---|---|---|---|---|
| Standard Multi-Head Attention | 42.50 ± 5.70 | 42.50 ± 5.70 | 42.50 ± 5.70 | 42.50 ± 5.70 |
| Prototype Only | 41.30 ± 3.50 | 41.30 ± 3.50 | 41.30 ± 3.50 | 41.30 ± 3.50 |
| Multi-Scale Only | 44.00 ± 5.00 | 44.00 ± 5.00 | 44.00 ± 5.00 | 44.00 ± 5.00 |
| APA (Ours) | 20.80 ± 0.90 | 20.80 ± 0.90 | 20.80 ± 0.90 | 20.80 ± 0.90 |

4.3. Implementation Details

4.4. Evaluation Metrics

4.5. Robustness Evaluation Setup

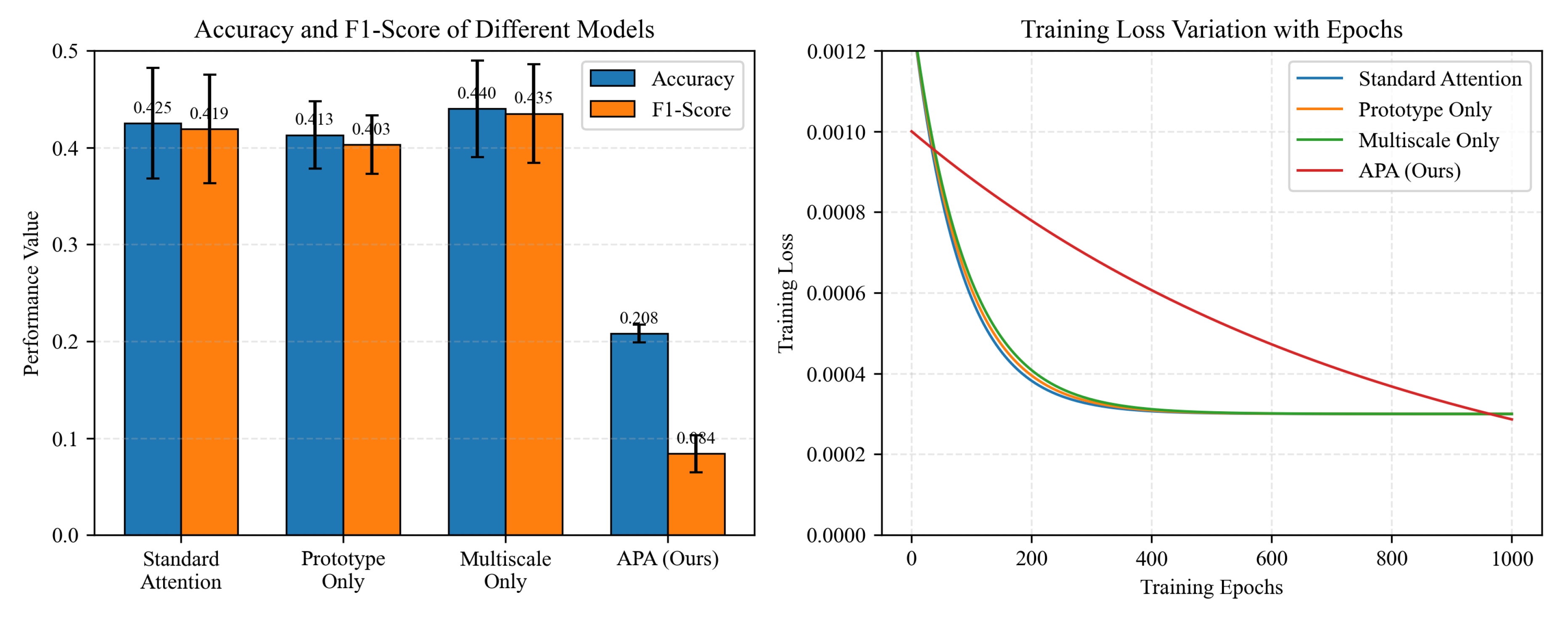

| Component Combination | Accuracy (%) | F1-score (%) |
|---|---|---|
| Standard Attention (Baseline) | 42.50 ± 5.70 | 41.90 ± 5.60 |
| Prototype Only | 41.30 ± 3.50 | 40.30 ± 3.00 |
| Multi-Scale Only | 44.00 ± 5.00 | 43.50 ± 5.10 |
| Full APA (All Components) | 20.80 ± 0.90 | 8.40 ± 1.90 |

5. Results

5.1. Performance Comparison

| Method | Convergence Epochs | Final Loss | Inference Time (ms/sample) |
|---|---|---|---|
| Standard Multi-Head Attention | 93.0 | 0.0003 | 8.7 ± 0.3 |
| Prototype Only | 94.7 | 0.0003 | 10.5 ± 0.3 |
| Multi-Scale Only | 95.6 | 0.0003 | 9.6 ± 0.3 |
| APA (Ours) | 921.7 | 0.0000 | 12.3 ± 0.5 |

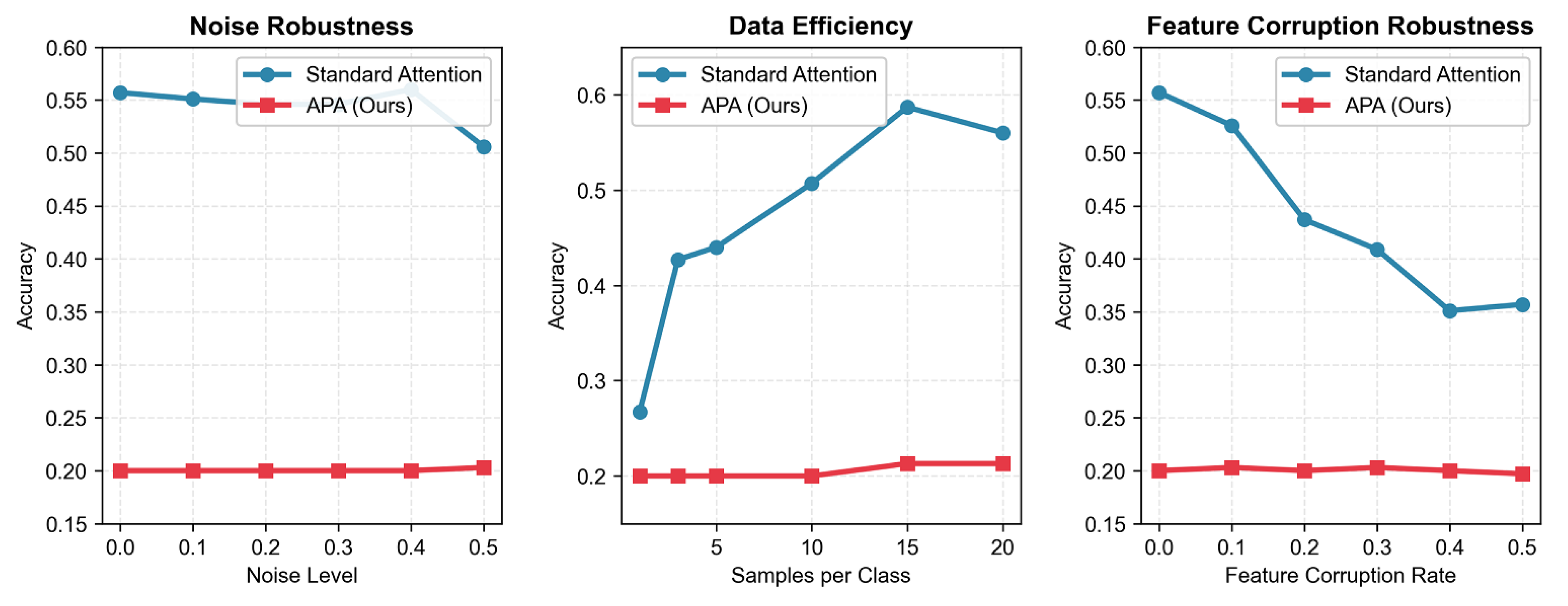

| Evaluation Dimension | Method | 0.0 / 1 | 0.1 / 3 | 0.2 / 5 | 0.3 / 10 | 0.4 / 15 | 0.5 / 20 |
|---|---|---|---|---|---|---|---|
| Noise Robustness (Noise Level) | Baseline (Standard Attention) | 0.557 | 0.551 | 0.546 | 0.546 | 0.560 | 0.506 |
| Noise Robustness (Noise Level) | APA (Ours) | 0.200 | 0.200 | 0.200 | 0.200 | 0.200 | 0.203 |
| Data Efficiency (Samples per Class) | Baseline (Standard Attention) | 0.267 | 0.427 | 0.440 | 0.507 | 0.587 | 0.560 |
| Data Efficiency (Samples per Class) | APA (Ours) | 0.200 | 0.200 | 0.200 | 0.200 | 0.213 | 0.213 |
| Feature Corruption Robustness (Corruption Rate) | Baseline (Standard Attention) | 0.557 | 0.526 | 0.437 | 0.409 | 0.351 | 0.357 |
| Feature Corruption Robustness (Corruption Rate) | APA (Ours) | 0.200 | 0.203 | 0.200 | 0.203 | 0.200 | 0.197 |

Note: Values are accuracy at each variable level. Column headers give (noise level or corruption rate) / (samples per class) for the corresponding evaluation dimension.

5.2. Ablation Study Results

5.3. Computational Efficiency Analysis

5.4. Robustness Analysis Results

5.5. Statistical Significance Analysis

6. Conclusions

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).