Submitted: 13 February 2026

Posted: 13 February 2026


Abstract

Keywords:

1. Introduction

- We are the first to introduce Scalable Vector Graphics (SVG) as a visual modality in multimodal learning, emphasizing its resolution independence and structural semantics.

- We propose MNER-SVG, an integrated framework that combines SVG representations, ChatGPT-generated auxiliary knowledge, and graph-based cross-modal interactions via Graph Attention Networks (GATs), improving both performance and interpretability.

- We present SvgNER, the first SVG-annotated dataset for MNER. Experimental results demonstrate the effectiveness of our approach, validating SVG as a promising modality for fine-grained multimodal understanding.

2. Related Works

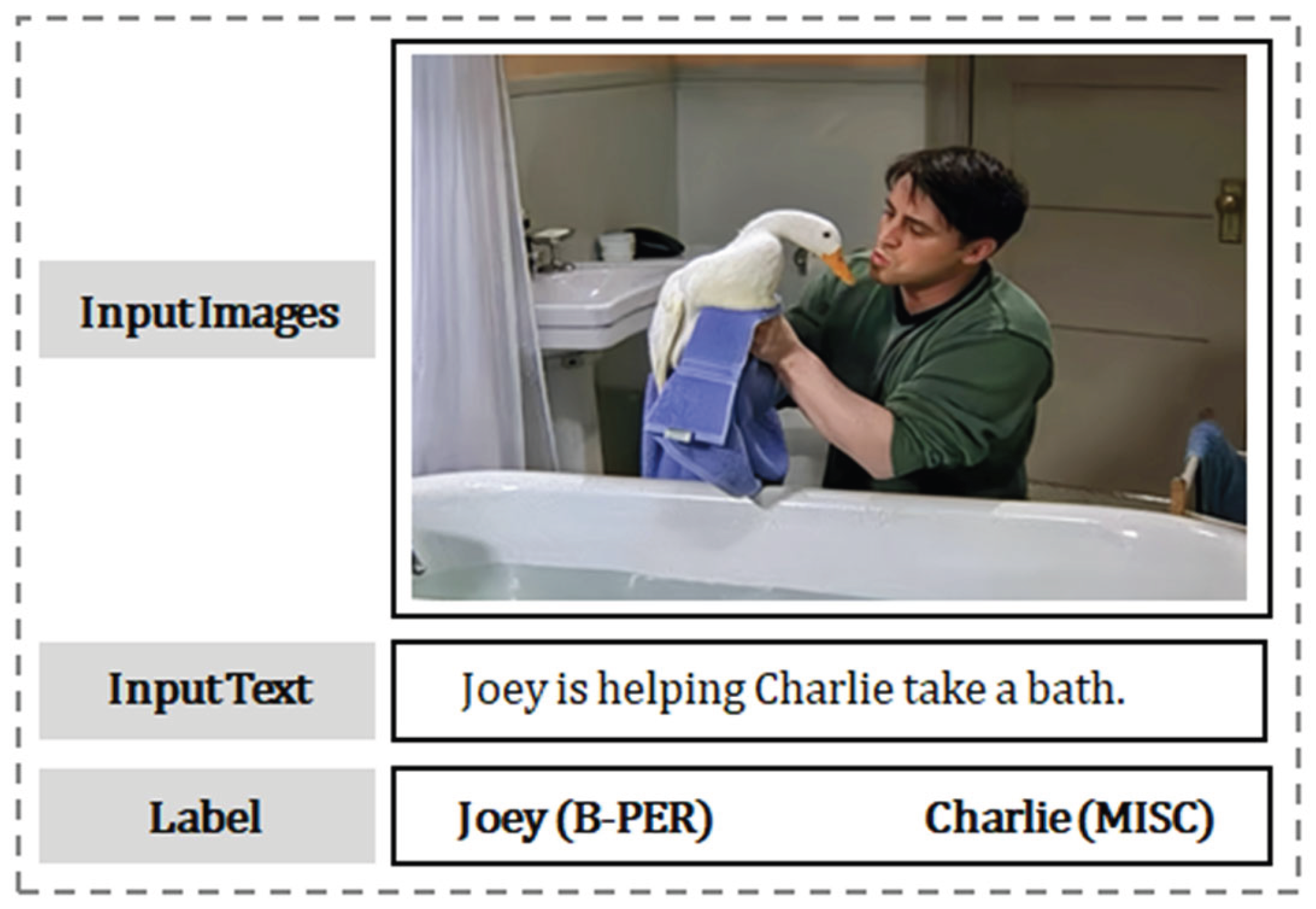

2.1. Multimodal Named Entity Recognition

2.2. Prompt Engineering for NER with ChatGPT

2.3. Summary

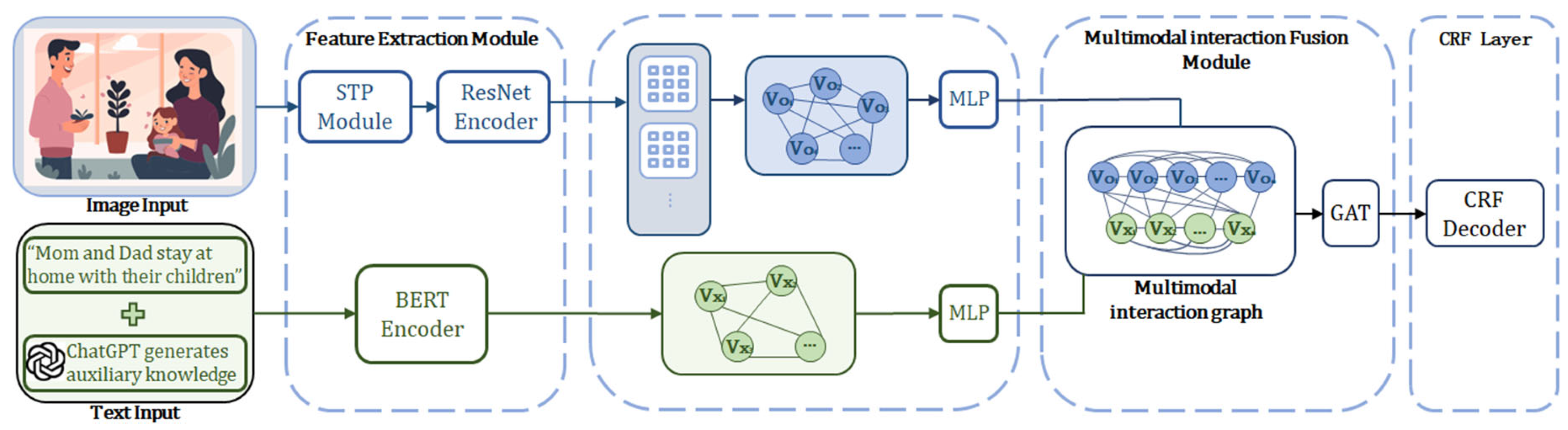

3. Methods

3.1. Feature Extraction Module

3.1.1. Textual Representation

3.1.2. Visual Representation

- Vector Parsing: Parse the XML-based primitives, namely line segments, Bézier curves, and region fills.

- Rasterization: Convert continuous vector coordinates into discrete pixels at the target resolution.

- Encoding: Compress the resulting PNG images with RGBA channels using the DEFLATE algorithm.
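The parsing and rasterization steps above can be illustrated with a minimal, dependency-free sketch. It evaluates a cubic Bézier curve in Bernstein form, samples it, and maps the continuous samples onto a binary pixel grid. The function names, the unit-square coordinate convention, and the fixed sampling density are illustrative assumptions, not the authors' implementation.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def cubic_bezier(p0: Point, p1: Point, p2: Point, p3: Point, t: float) -> Point:
    """Evaluate a cubic Bézier curve at parameter t in [0, 1] (Bernstein form)."""
    u = 1.0 - t
    b0, b1, b2, b3 = u ** 3, 3 * u * u * t, 3 * u * t * t, t ** 3
    x = b0 * p0[0] + b1 * p1[0] + b2 * p2[0] + b3 * p3[0]
    y = b0 * p0[1] + b1 * p1[1] + b2 * p2[1] + b3 * p3[1]
    return (x, y)


def sample_curve(p0: Point, p1: Point, p2: Point, p3: Point, steps: int = 64) -> List[Point]:
    """Sample the curve at steps + 1 evenly spaced parameter values."""
    return [cubic_bezier(p0, p1, p2, p3, i / steps) for i in range(steps + 1)]


def rasterize_points(points: List[Point], width: int, height: int) -> List[List[int]]:
    """Map continuous coordinates in the unit square onto a height x width binary grid."""
    grid = [[0] * width for _ in range(height)]
    for x, y in points:
        # Scale to pixel indices and clamp so that x = 1.0 lands in the last column.
        col = min(width - 1, max(0, int(x * width)))
        row = min(height - 1, max(0, int(y * height)))
        grid[row][col] = 1
    return grid


# Usage: an arch from (0, 0) to (1, 0) rasterized at 8 x 8 pixels.
points = sample_curve((0.0, 0.0), (0.0, 1.0), (1.0, 1.0), (1.0, 0.0))
grid = rasterize_points(points, width=8, height=8)
for row in grid:
    print("".join("#" if v else "." for v in row))
```

A straight line segment is the special case of a Bézier curve whose control points are collinear, so the same sampling routine covers both primitives; region fills would additionally require a scanline or winding-rule fill, which this sketch omits.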

| Algorithm 1. Convert SVG to PNG. |
|---|
| Input: Path to an SVG file |
| Output: PNG image in RGB format, or None if conversion fails |
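Algorithm 1 can be sketched end to end with only the Python standard library. The hypothetical `convert_svg_to_png` below parses the SVG with `xml.etree.ElementTree`, draws only `<line>` elements (a deliberate simplification; a practical pipeline would rely on a full SVG renderer such as CairoSVG), and writes an 8-bit RGB PNG whose pixel data is DEFLATE-compressed via `zlib`. As in Algorithm 1, it returns `None` when the file cannot be read or parsed. It assumes unitless `width` and `height` attributes on the root element.

```python
import struct
import xml.etree.ElementTree as ET
import zlib
from typing import List, Optional, Tuple

RGB = Tuple[int, int, int]


def _png_chunk(tag: bytes, data: bytes) -> bytes:
    """One PNG chunk: big-endian length, type, data, CRC-32 over type + data."""
    crc = zlib.crc32(tag + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + tag + data + struct.pack(">I", crc)


def encode_png_rgb(pixels: List[List[RGB]], width: int, height: int) -> bytes:
    """Encode rows of (r, g, b) tuples as an 8-bit truecolour PNG (colour type 2)."""
    raw = bytearray()
    for row in pixels:
        raw.append(0)  # filter type 0 (None) precedes every scanline
        for r, g, b in row:
            raw.extend((r, g, b))
    # IHDR: width, height, bit depth 8, colour type 2 (RGB), compression, filter, interlace
    ihdr = struct.pack(">IIBBBBB", width, height, 8, 2, 0, 0, 0)
    return (b"\x89PNG\r\n\x1a\n"
            + _png_chunk(b"IHDR", ihdr)
            + _png_chunk(b"IDAT", zlib.compress(bytes(raw)))  # DEFLATE-compressed data
            + _png_chunk(b"IEND", b""))


def convert_svg_to_png(svg_path: str) -> Optional[bytes]:
    """Toy SVG-to-PNG conversion: black <line> strokes on a white canvas.

    Returns PNG bytes, or None if the file cannot be read or parsed.
    """
    try:
        root = ET.parse(svg_path).getroot()
        width = int(float(root.get("width", "64")))
        height = int(float(root.get("height", "64")))
        pixels: List[List[RGB]] = [[(255, 255, 255)] * width for _ in range(height)]
        for elem in root.iter():
            # Strip the XML namespace, e.g. "{http://www.w3.org/2000/svg}line" -> "line".
            if elem.tag.rsplit("}", 1)[-1] != "line":
                continue
            x1, y1, x2, y2 = (float(elem.get(k, "0")) for k in ("x1", "y1", "x2", "y2"))
            steps = int(max(abs(x2 - x1), abs(y2 - y1))) + 1
            for i in range(steps + 1):
                t = i / steps
                col = round(x1 + t * (x2 - x1))
                row = round(y1 + t * (y2 - y1))
                if 0 <= row < height and 0 <= col < width:
                    pixels[row][col] = (0, 0, 0)
        return encode_png_rgb(pixels, width, height)
    except (OSError, ET.ParseError, ValueError):
        return None
```

Keeping the encoder separate from the drawing step mirrors the rasterize-then-encode split described in Section 3.1.2, so the toy renderer could be swapped for a complete one without touching the PNG output path.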

3.1.3. Visual Feature Extraction

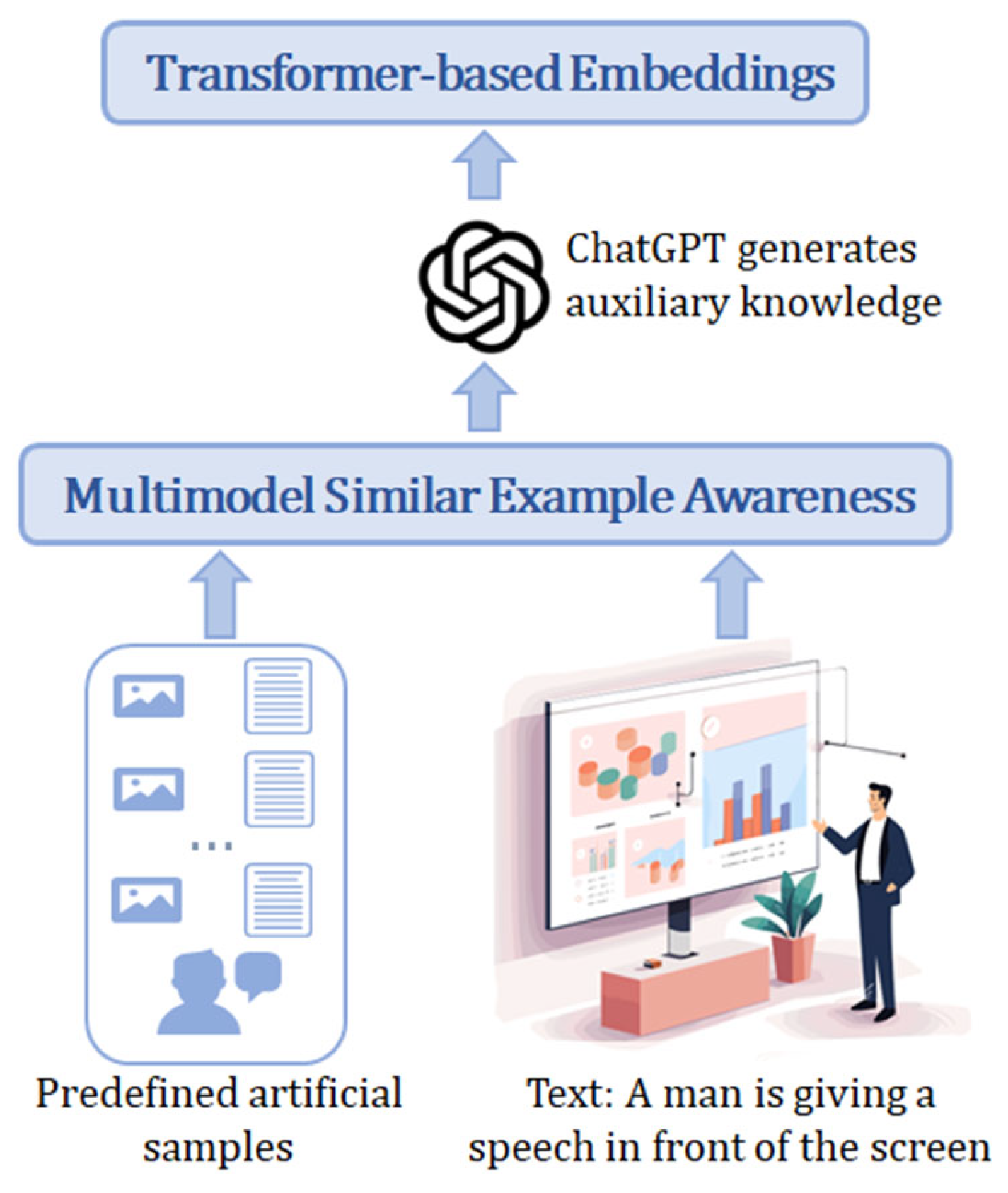

3.2. ChatGPT-Generated Auxiliary Knowledge Module

3.2.1. Reference Sample Annotation

3.2.2. Multimodal Similarity-Based Example Selection

3.3. Multimodal Interaction Fusion

3.3.1. Multimodal Interaction Graph Construction

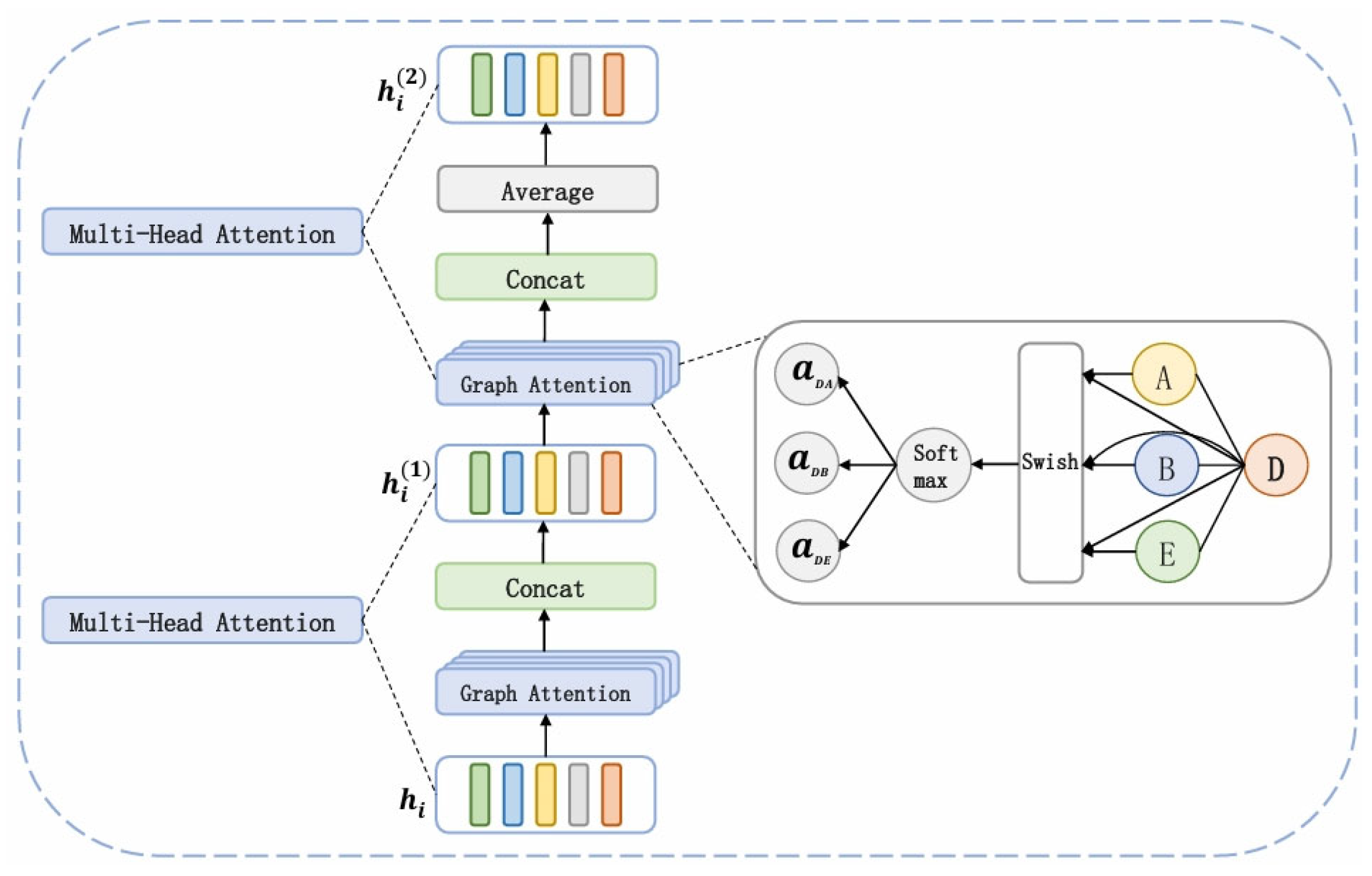

3.3.2. Cross-Modal Feature Fusion with GAT

3.4. CRF Decoding Module

4. Experimental Evaluation

4.1. SvgNER Dataset

4.2. Data Structure

4.3. Experimental Details

4.4. Evaluation Metrics

4.5. Comparison Experiments

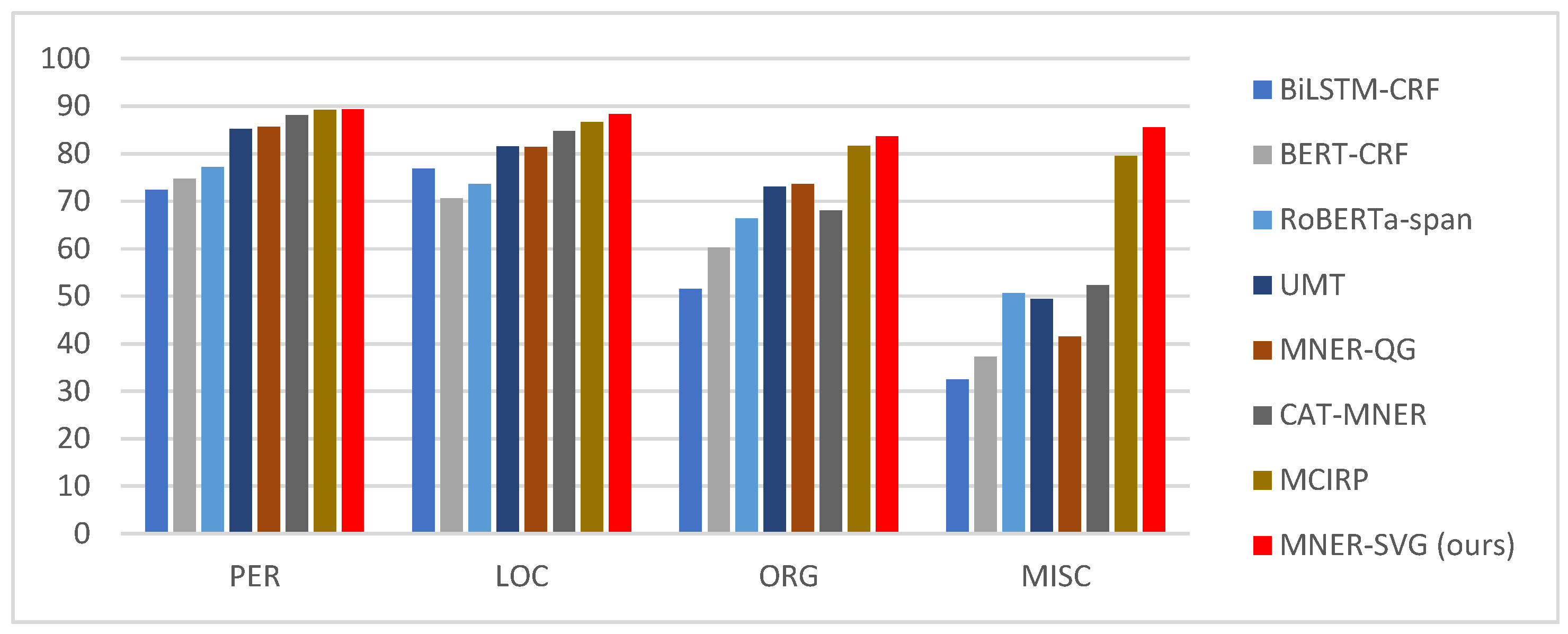

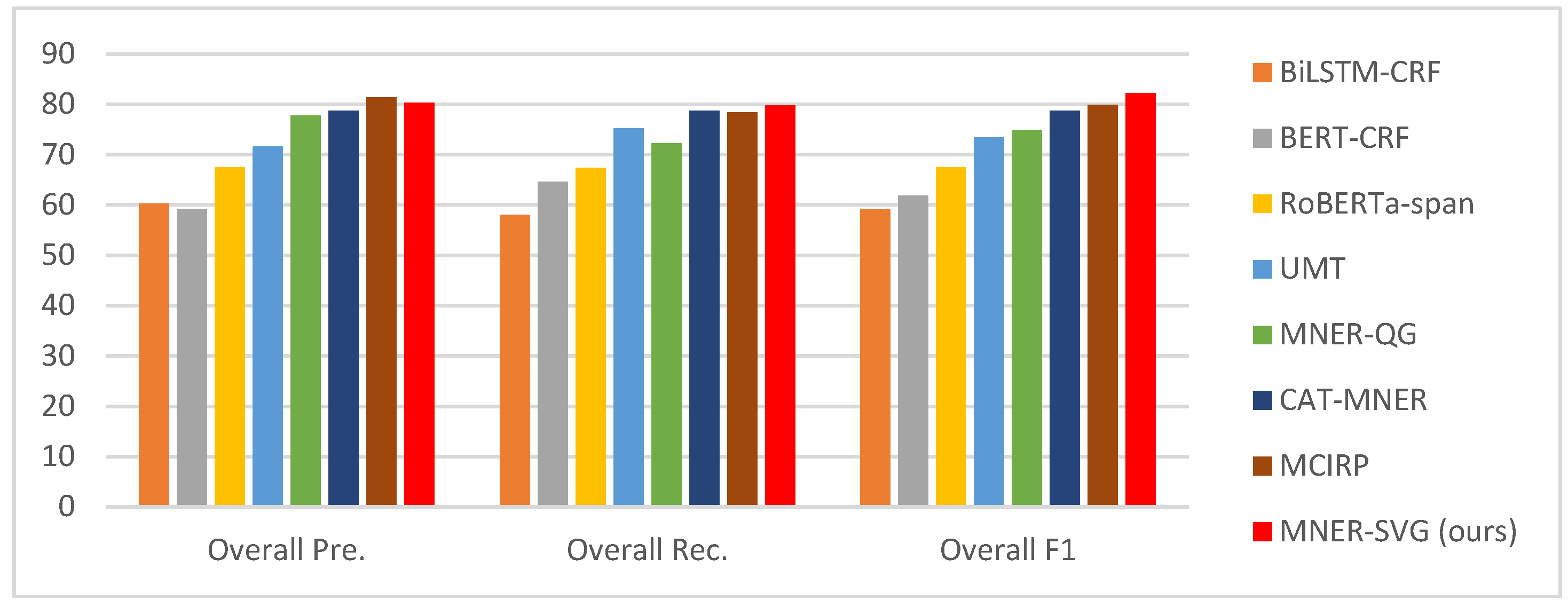

4.6. Results and Analysis

4.7. Ablation Study

4.7.1. Impact of ChatGPT-Generated Knowledge

4.7.2. Effect of Removing ChatGPT Auxiliary Knowledge

4.7.3. Effectiveness of the GNN Module

- GCN for Text and Visual Representation: Removing the GCN from either the text graph or the visual graph degrades performance, indicating that aggregating topological structure together with node features strengthens the learned representations.

- GAT for Multimodal Fusion: For fusion, GAT outperforms GCN because it adaptively weights neighboring features, which better captures cross-modal relationships and improves integration.
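The difference between the two aggregation schemes can be made concrete with a small NumPy sketch. `gcn_layer` applies the fixed, degree-normalized propagation of a standard GCN, whereas `gat_layer` scores every edge with a LeakyReLU over the concatenated projected features of its two endpoints and normalizes the scores with a softmax over each node's neighborhood, so edge weights depend on the features themselves. The single attention head, the layer sizes, and the absence of output nonlinearities are simplifying assumptions; this is not the MNER-SVG fusion module itself.

```python
import numpy as np


def gcn_layer(h: np.ndarray, adj: np.ndarray, w: np.ndarray) -> np.ndarray:
    """GCN propagation: symmetric degree normalisation, weights fixed by graph structure."""
    a_hat = adj + np.eye(adj.shape[0])                 # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return norm @ h @ w


def gat_layer(h: np.ndarray, adj: np.ndarray, w: np.ndarray, a: np.ndarray,
              slope: float = 0.2):
    """Single-head GAT: attention weights computed from the node features per edge."""
    z = h @ w                                          # projected features, shape (n, f)
    f = z.shape[1]
    # e_ij = LeakyReLU(a^T [z_i || z_j]), split into source and target halves of a.
    e = (z @ a[:f])[:, None] + (z @ a[f:])[None, :]
    e = np.where(e > 0, e, slope * e)
    mask = (adj + np.eye(adj.shape[0])) > 0            # attend to neighbours and self
    e = np.where(mask, e, -np.inf)
    e = e - e.max(axis=1, keepdims=True)               # numerical stability
    alpha = np.exp(e)                                  # exp(-inf) = 0 for non-edges
    alpha = alpha / alpha.sum(axis=1, keepdims=True)
    return alpha @ z, alpha


# Usage: node 0 (e.g. a text node) linked to nodes 1 and 2 (e.g. visual nodes).
adj = np.array([[0, 1, 1], [1, 0, 0], [1, 0, 0]], dtype=float)
rng = np.random.default_rng(0)
h, w, a = rng.normal(size=(3, 4)), rng.normal(size=(4, 2)), rng.normal(size=4)
out, alpha = gat_layer(h, adj, w, a)
print(np.round(alpha, 3))
```

With a zero attention vector `a`, the GAT weights collapse to uniform averaging over each neighborhood; learning `a` is what allows the weights to vary with the features, which is the adaptivity the ablation attributes to GAT.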

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Seow, W.L.; Chaturvedi, I.; Hogarth, A.; Mao, R.; Cambria, E. A Review of Named Entity Recognition: From Learning Methods to Modelling Paradigms and Tasks. Artif. Intell. Rev. 2025, 58, 315.

2. Li, J.; Sun, A.; Han, J.; Li, C. A Survey on Deep Learning for Named Entity Recognition. IEEE Transactions on Knowledge and Data Engineering 2020, 34, 50–70.

3. Zhu, C.; Chen, M.; Zhang, S.; Sun, C.; Liang, H.; Liu, Y.; Chen, J. SKEAFN: Sentiment Knowledge Enhanced Attention Fusion Network for Multimodal Sentiment Analysis. Information Fusion 2023, 100, 101958.

4. Geng, H.; Qing, H.; Hu, J.; Huang, W.; Kang, H. A Named Entity Recognition Method for Chinese Vehicle Fault Repair Cases Based on a Combined Model. Electronics 2025, 14, 1361.

5. Shi, X.; Yu, Z. Adding Visual Information to Improve Multimodal Machine Translation for Low-Resource Language. Mathematical Problems in Engineering 2022, 2022, 1–9.

6. Chen, F.; Chen, X.; Xu, S.; Xu, B. Improving Cross-Modal Understanding in Visual Dialog via Contrastive Learning. In Proceedings of the 2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), May 2022; pp. 7937–7941.

7. Xu, B.; Huang, S.; Sha, C.; Wang, H. MAF: A General Matching and Alignment Framework for Multimodal Named Entity Recognition. In Proceedings of the Fifteenth ACM International Conference on Web Search and Data Mining, Virtual Event, AZ, USA, 11 February 2022; ACM; pp. 1215–1223.

8. Chai, Y.; Xie, H.; Qin, J.S. Text Data Augmentation for Large Language Models: A Comprehensive Survey of Methods, Challenges, and Opportunities. Artif. Intell. Rev. 2025, 59, 35.

9. Wang, Z.; Chen, H.; Xu, G.; Ren, M. A Novel Large-Language-Model-Driven Framework for Named Entity Recognition. Information Processing & Management 2025, 62, 104054.

10. Zhang, Q.; Fu, J.; Liu, X.; Huang, X. Adaptive Co-Attention Network for Named Entity Recognition in Tweets. In Proceedings of the AAAI Conference on Artificial Intelligence, 2018; Vol. 32.

11. Yu, J.; Jiang, J.; Yang, L.; Xia, R. Improving Multimodal Named Entity Recognition via Entity Span Detection with Unified Multimodal Transformer. Association for Computational Linguistics, 2020.

12. Zhang, D.; Wei, S.; Li, S.; Wu, H.; Zhu, Q.; Zhou, G. Multi-Modal Graph Fusion for Named Entity Recognition with Targeted Visual Guidance. In Proceedings of the AAAI Conference on Artificial Intelligence, 2021; Vol. 35, pp. 14347–14355.

13. Xu, M.; Peng, K.; Liu, J.; Zhang, Q.; Song, L.; Li, Y. Multimodal Named Entity Recognition Based on Topic Prompt and Multi-Curriculum Denoising. Information Fusion 2025, 124, 103405.

14. Li, E.; Li, T.; Luo, H.; Chu, J.; Duan, L.; Lv, F. Adaptive Multi-Scale Language Reinforcement for Multimodal Named Entity Recognition. IEEE Transactions on Multimedia 2025.

15. Zhang, Q.; Song, Z.; Wang, D.; Cai, Y.; Bi, M.; Zuo, M. Named Entity Recognition and Coreference Resolution Using Prompt-Based Generative Multimodal. Complex Intell. Syst. 2025, 12, 10.

16. Mu, Y.; Guo, Z.; Li, X.; Shao, L.; Liu, S.; Li, F.; Mei, G. MCIRP: A Multi-Granularity Cross-Modal Interaction Model Based on Relational Propagation for Multimodal Named Entity Recognition with Multiple Images. Information Processing & Management 2026, 63, 104384.

17. Wei, X.; Cui, X.; Cheng, N.; Wang, X.; Zhang, X.; Huang, S.; Xie, P.; Xu, J.; Chen, Y.; Zhang, M.; et al. ChatIE: Zero-Shot Information Extraction via Chatting with ChatGPT. 2024.

18. Chen, Y.; Zhang, B.; Li, S.; Jin, Z.; Cai, Z.; Wang, Y.; Qiu, D.; Liu, S.; Zhao, J. Prompt Robust Large Language Model for Chinese Medical Named Entity Recognition. Information Processing & Management 2025, 62, 104189.

19. De, S.; Sanyal, D.K.; Mukherjee, I. Fine-Tuned Encoder Models with Data Augmentation Beat ChatGPT in Agricultural Named Entity Recognition and Relation Extraction. Expert Systems with Applications 2025, 277, 127126.

20. Han, R.; Peng, T.; Yang, C.; Wang, B.; Liu, L.; Wan, X. Is Information Extraction Solved by ChatGPT? An Analysis of Performance, Evaluation Criteria, Robustness and Errors. arXiv 2023, arXiv:2305.14450, 48.

21. Li, B.; Fang, G.; Yang, Y.; Wang, Q.; Ye, W.; Zhao, W.; Zhang, S. Evaluating ChatGPT’s Information Extraction Capabilities: An Assessment of Performance, Explainability, Calibration, and Faithfulness. 2023.

22. Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. BERT: Pre-Training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), 2019; pp. 4171–4186.

23. Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A. Language Models Are Few-Shot Learners. Advances in Neural Information Processing Systems 2020, 33, 1877–1901.

24. Yang, Z.; Gan, Z.; Wang, J.; Hu, X.; Lu, Y.; Liu, Z.; Wang, L. An Empirical Study of GPT-3 for Few-Shot Knowledge-Based VQA. In Proceedings of the AAAI Conference on Artificial Intelligence, 2022; Vol. 36, pp. 3081–3089.

25. Lopes, R.G.; Ha, D.; Eck, D.; Shlens, J. A Learned Representation for Scalable Vector Graphics. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2019; pp. 7930–7939.

26. Google Creative Lab. The Quick, Draw! Dataset. Available online: https://github.com/googlecreativelab/quickdraw-dataset (accessed on 25 January 2026).

27. Moon, S.; Neves, L.; Carvalho, V. Multimodal Named Entity Recognition for Short Social Media Posts. In Proceedings of NAACL-HLT, 2018; pp. 852–860.

28. Huang, Z.; Xu, W.; Yu, K. Bidirectional LSTM-CRF Models for Sequence Tagging. 2015.

29. Yamada, I.; Asai, A.; Shindo, H.; Takeda, H.; Matsumoto, Y. LUKE: Deep Contextualized Entity Representations with Entity-Aware Self-Attention. 2020.

30. Jia, M.; Shen, L.; Shen, X.; Liao, L.; Chen, M.; He, X.; Chen, Z.; Li, J. MNER-QG: An End-to-End MRC Framework for Multimodal Named Entity Recognition with Query Grounding. In Proceedings of the AAAI Conference on Artificial Intelligence, 2023; Vol. 37, pp. 8032–8040.

31. Wang, X.; Ye, J.; Li, Z.; Tian, J.; Jiang, Y.; Yan, M.; Zhang, J.; Xiao, Y. CAT-MNER: Multimodal Named Entity Recognition with Knowledge-Refined Cross-Modal Attention. In Proceedings of the 2022 IEEE International Conference on Multimedia and Expo (ICME), 2022; IEEE; pp. 1–6.

| Prompt Input (Few-Shot + Test) | ChatGPT Output (Auxiliary Knowledge) |
|---|---|
| Task: Extract named entities from the following text and SVG description. Example1: Text: “Tesla opens a factory in Berlin.” SVG: factory icon. Entities: [“Tesla”: ORG, “Berlin”: LOC]. Input: Text: “Beijing University organizes a seminar in Shanghai.” SVG: university logo icon. Entities: [?] (to be identified). | “The SVG shows a stylized educational institution logo. So ‘Beijing University’ should be ORG and ‘Shanghai’ LOC.” This auxiliary text helps resolve “Beijing University” as an organization entity in the educational context. |
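The prompt format in the table above, combined with the multimodal similarity-based example selection of Section 3.2.2, can be sketched as follows. The `Example` record, the precomputed text and image embedding vectors, and the equal weighting `lam` between text and image cosine similarity are hypothetical choices, not details taken from the paper; `n` plays the role of N in the PromptGPT rows of the few-shot results table.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Example:
    """A hypothetical annotated reference sample with precomputed embeddings."""
    text: str
    svg_desc: str              # short description of the SVG, e.g. "factory icon"
    entities: str              # gold entities rendered as in the prompt table
    text_vec: Sequence[float]
    image_vec: Sequence[float]


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def select_examples(q_text: Sequence[float], q_image: Sequence[float],
                    pool: List[Example], n: int, lam: float = 0.5) -> List[Example]:
    """Return the n pool items with the highest weighted text + image similarity."""
    def score(ex: Example) -> float:
        return lam * cosine(q_text, ex.text_vec) + (1 - lam) * cosine(q_image, ex.image_vec)
    return sorted(pool, key=score, reverse=True)[:n]


def build_prompt(examples: List[Example], text: str, svg_desc: str) -> str:
    """Assemble a few-shot prompt in the format of the prompt table."""
    lines = ["Task: Extract named entities from the following text and SVG description."]
    for i, ex in enumerate(examples, start=1):
        lines.append(f'Example{i}: Text: "{ex.text}" SVG: {ex.svg_desc}. Entities: {ex.entities}.')
    lines.append(f'Input: Text: "{text}" SVG: {svg_desc}. Entities: [?]')
    return "\n".join(lines)


# Usage with toy 2-D embeddings standing in for real encoder outputs.
tesla = Example("Tesla opens a factory in Berlin.", "factory icon",
                '["Tesla": ORG, "Berlin": LOC]', [1.0, 0.0], [1.0, 0.0])
other = Example("Messi scores twice.", "football icon",
                '["Messi": PER]', [0.0, 1.0], [0.0, 1.0])
chosen = select_examples([0.9, 0.1], [0.8, 0.2], [other, tesla], n=1)
prompt = build_prompt(chosen, "Beijing University organizes a seminar in Shanghai.",
                      "university logo icon")
print(prompt)
```

Scoring text and image similarity separately and mixing them with a single weight keeps the selection interpretable: setting `lam` to 1.0 recovers text-only retrieval.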

| Total entities | Beginning of Entity | Inside of Entity | Outside of Entity |
|---|---|---|---|
| 2130 | 903 | 462 | 765 |
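The table reports counts under the BIO tagging scheme, and the three tag columns sum to the total (903 + 462 + 765 = 2130). The sketch below shows how a BIO sequence decodes into typed entity spans and how per-prefix counts of this kind are obtained. Treating an `I-` tag that does not continue an open entity as the start of a new span is an assumption, since conventions differ between toolkits.

```python
from collections import Counter
from typing import List, Optional, Tuple

Span = Tuple[str, int, int]  # (entity type, start token, end token exclusive)


def bio_to_spans(tags: List[str]) -> List[Span]:
    """Decode a BIO tag sequence into typed entity spans."""
    spans: List[Span] = []
    ent_type: Optional[str] = None
    start = 0
    for i, tag in enumerate(tags + ["O"]):  # trailing "O" closes a final entity
        if tag.startswith("I-") and tag[2:] == ent_type:
            continue  # a matching I- tag extends the open entity
        if ent_type is not None:
            spans.append((ent_type, start, i))
        if tag == "O":
            ent_type = None
        else:
            # "B-X" opens a new entity; a stray "I-X" is treated leniently as "B-X".
            ent_type, start = tag[2:], i
    return spans


def prefix_counts(tags: List[str]) -> Counter:
    """Count tags by their B / I / O prefix, as in the dataset statistics table."""
    return Counter(tag[0] for tag in tags)


tokens = ["Beijing", "University", "organizes", "a", "seminar", "in", "Shanghai"]
tags = ["B-ORG", "I-ORG", "O", "O", "O", "O", "B-LOC"]
spans = bio_to_spans(tags)
print(spans)  # [('ORG', 0, 2), ('LOC', 6, 7)]
print([(" ".join(tokens[s:e]), t) for t, s, e in spans])
print(prefix_counts(tags))
```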

| Methods | Year | Text Encoder | Image Encoder | Multimodal Fusion | Decoder |
|---|---|---|---|---|---|
| Text-based Methods | | | | | |
| BiLSTM-CRF [28] | 2015 | BiLSTM | N/A | N/A | CRF |
| BERT-CRF [22] | 2018 | BERT | N/A | N/A | CRF |
| RoBERTa-span [29] | 2020 | RoBERTa | N/A | N/A | N/A |
| Multimodal Methods (Text + Image) | | | | | |
| UMT [11] | 2020 | BERT | ResNet (Local) | Transformer + Cross-attention | CRF |
| MNER-QG [30] | 2022 | BERT | ResNet-101 | Query Grounding + Concatenation | CRF |
| CAT-MNER [31] | 2022 | BERT | ResNet-50 | Cross-Modal Refinement | CRF |
| MCIRP [16] | 2026 | BERT | ResNet | Relational Propagation + Multi-granularity Cross-modal Interaction | CRF |

| Methods | PER F1 | LOC F1 | ORG F1 | MISC F1 | Overall Pre. | Overall Rec. | Overall F1 |
|---|---|---|---|---|---|---|---|
| BiLSTM-CRF (text only) | 72.34 | 76.83 | 51.59 | 32.52 | 60.32 | 58.05 | 59.17 |
| BERT-CRF (text only) | 74.74 | 70.51 | 60.27 | 37.29 | 59.22 | 64.59 | 61.81 |
| RoBERTa-span (text only) | 77.20 | 73.58 | 66.33 | 50.66 | 67.48 | 67.43 | 67.45 |
| UMT | 85.24 | 81.58 | 73.03 | 49.45 | 71.67 | 75.23 | 73.41 |
| MNER-QG | 85.68 | 81.42 | 73.62 | 41.53 | 77.76 | 72.31 | 74.94 |
| CAT-MNER | 88.04 | 84.70 | 68.04 | 52.33 | 78.75 | 78.69 | 78.72 |
| MCIRP | 89.23 | 86.72 | 81.61 | 79.49 | 81.39 | 78.45 | 79.89 |
| MNER-SVG (ours) | 89.37 | 88.29 | 83.59 | 85.56 | 80.27 | 79.84 | 82.23 |
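Overall precision, recall, and F1 are presumably computed at the entity level with exact matching of span boundaries and type, as is standard for MNER; this protocol is an assumption here. F1 is the harmonic mean of precision and recall, so, for example, the UMT row gives 2 × 71.67 × 75.23 / (71.67 + 75.23) ≈ 73.41, matching the reported score. A minimal sketch of both computations:

```python
from typing import Set, Tuple

# (entity type, start token, end token exclusive); when pooling a whole corpus,
# a sentence index would be added to the tuple so spans from different sentences differ.
Span = Tuple[str, int, int]


def f1_from_pr(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (same units as the inputs)."""
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


def entity_prf1(gold: Set[Span], pred: Set[Span]) -> Tuple[float, float, float]:
    """Micro-averaged exact-match precision, recall and F1, in percent.

    A predicted entity counts as correct only if its type and both span
    boundaries match a gold entity exactly.
    """
    tp = len(gold & pred)
    precision = 100.0 * tp / len(pred) if pred else 0.0
    recall = 100.0 * tp / len(gold) if gold else 0.0
    return precision, recall, f1_from_pr(precision, recall)


gold = {("ORG", 0, 2), ("LOC", 6, 7)}
pred = {("ORG", 0, 2), ("PER", 6, 7)}       # correct span, wrong type for the second
print(entity_prf1(gold, pred))              # (50.0, 50.0, 50.0)
print(round(f1_from_pr(71.67, 75.23), 2))   # 73.41 (UMT row)
```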

| Setting | SvgNER Pre. | SvgNER Rec. | SvgNER F1 |
|---|---|---|---|
| fs-50 | 48.72 | 50.38 | 49.51 |
| fs-100 | 58.63 | 67.58 | 62.82 |
| fs-200 | 67.48 | 71.34 | 69.44 |
| full-shot | 82.34 | 82.09 | 82.23 |
| VanillaGPT | 51.83 | 73.17 | 59.76 |
| PromptGPT (N = 1) | 53.62 | 71.26 | 62.20 |
| PromptGPT (N = 5) | 68.37 | 72.45 | 71.81 |
| PromptGPT (N = 10) | 71.86 | 75.50 | 74.38 |

| Setting | SvgNER Pre. | SvgNER Rec. | SvgNER F1 |
|---|---|---|---|
| w/o ChatGPT (plain SVG) | 78.90 | 79.55 | 79.22 |
| MNER-SVG (full) | 82.34 | 82.09 | 82.23 |

| Setting | SvgNER Pre. | SvgNER Rec. | SvgNER F1 |
|---|---|---|---|
| | 80.42 | 80.91 | 80.16 |
| | 81.59 | 80.27 | 81.40 |
| | 80.19 | 83.14 | 80.22 |
| | 79.58 | 81.89 | 80.25 |
| MNER-SVG | 82.34 | 82.09 | 82.23 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).