Submitted: 13 February 2026

Posted: 14 February 2026


Abstract

Keywords:

1. Introduction

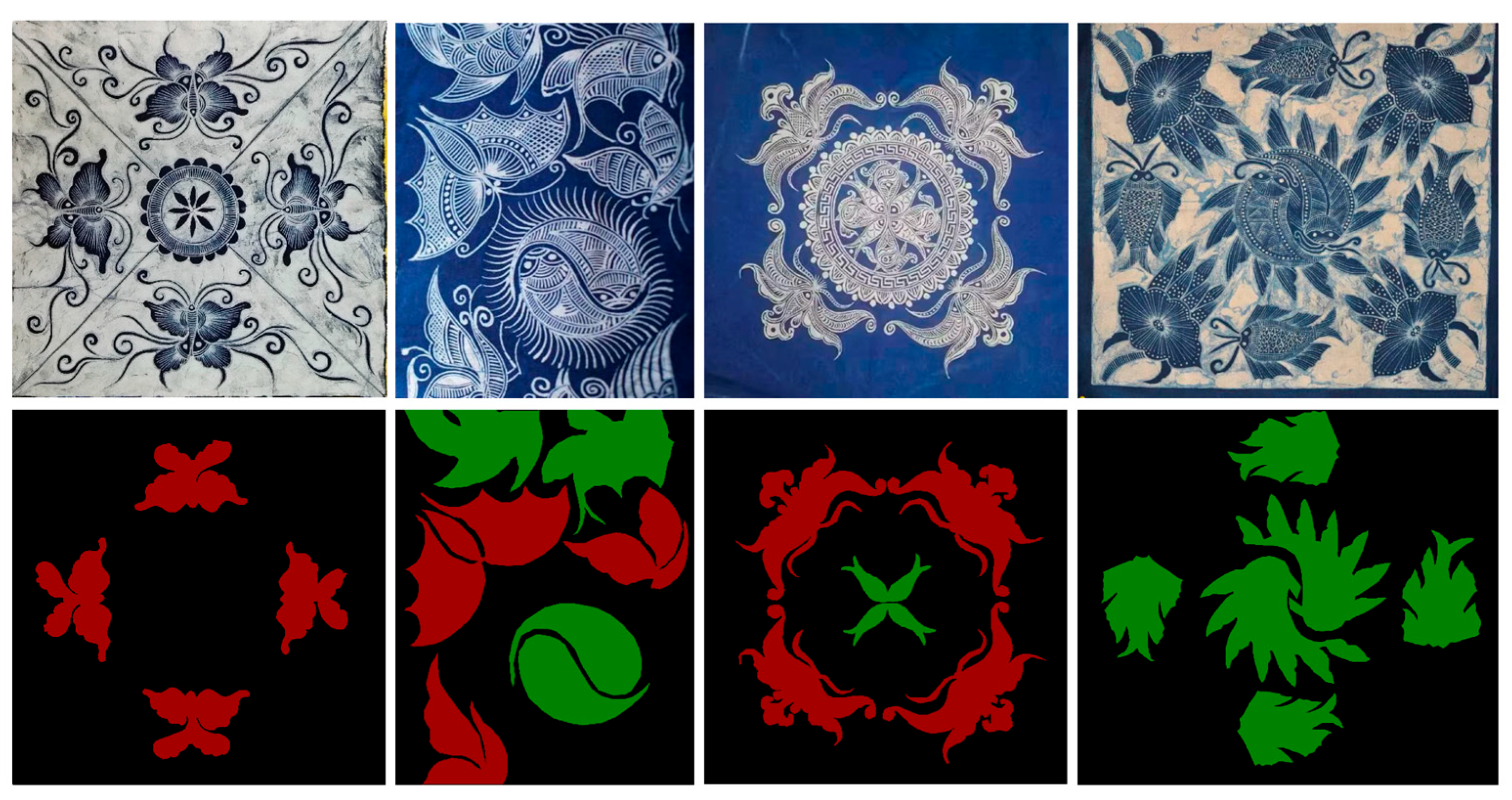

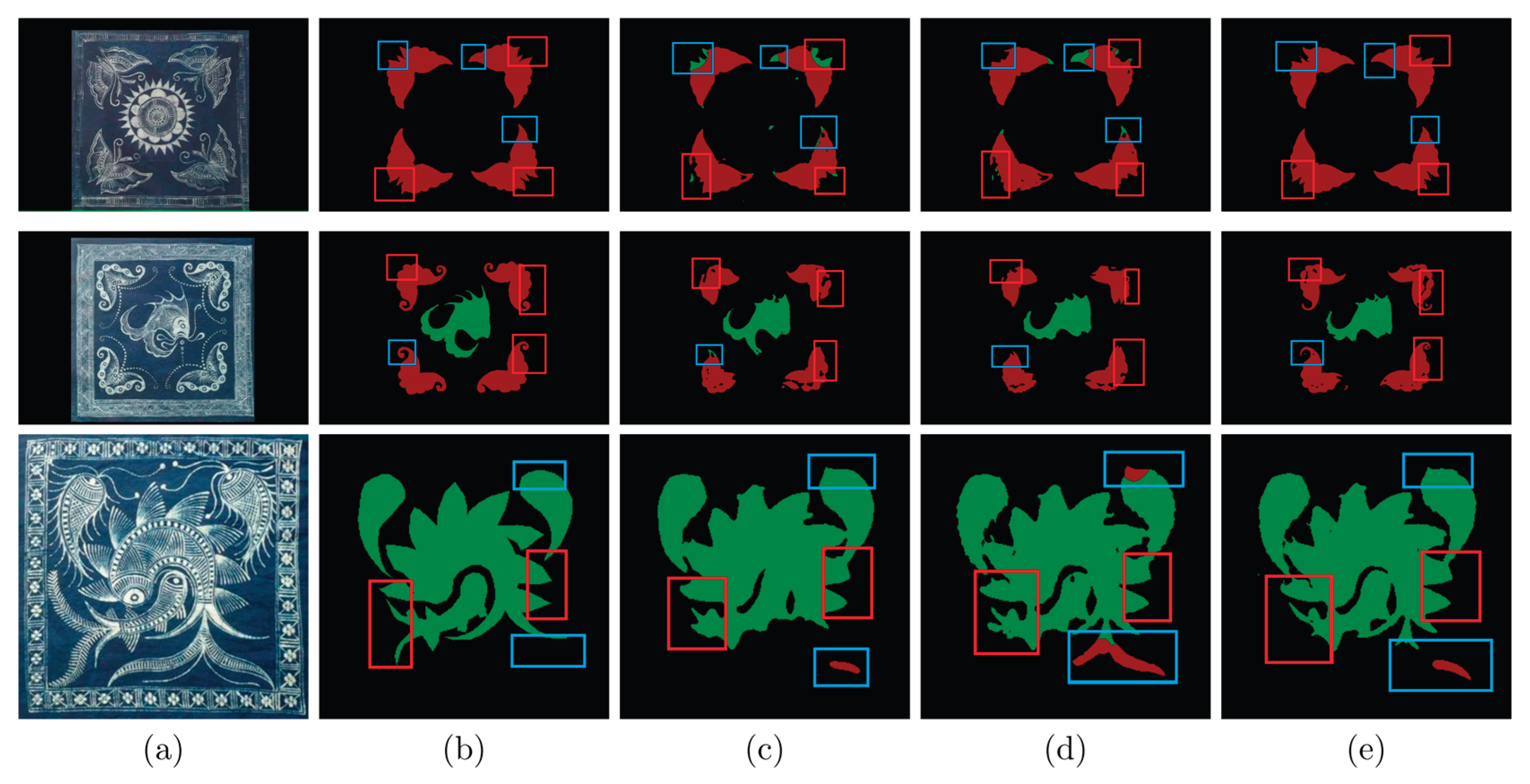

- This paper presents the first study on semantic segmentation of batik patterns, an intangible cultural heritage. To this end, we construct a few-shot batik pattern dataset (publicly available for download) with pixel-level annotations. The annotation methodology was developed jointly with batik experts to ensure the accuracy and scientific rigor of the dataset.

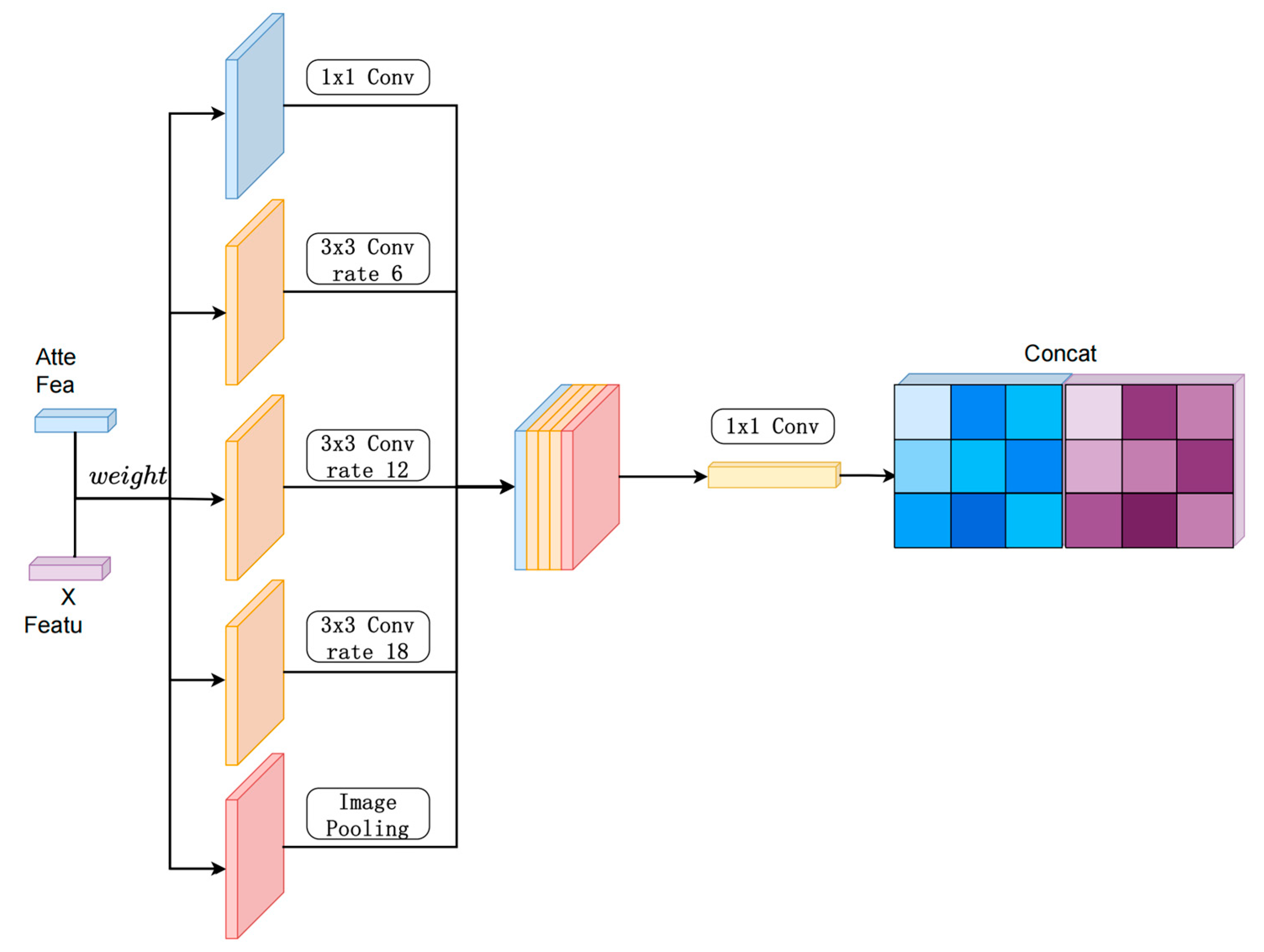

- To address the intricate texture details and blurred salient regions of batik images, we design a multi-scale feature extraction network that combines a parallel dual-path structure with atrous (dilated) convolution, capturing and fusing features from fine to coarse granularity. The atrous convolutions enlarge the network's receptive field, while an auxiliary path compresses features to keep the network lightweight, reducing computation while preserving the key features.

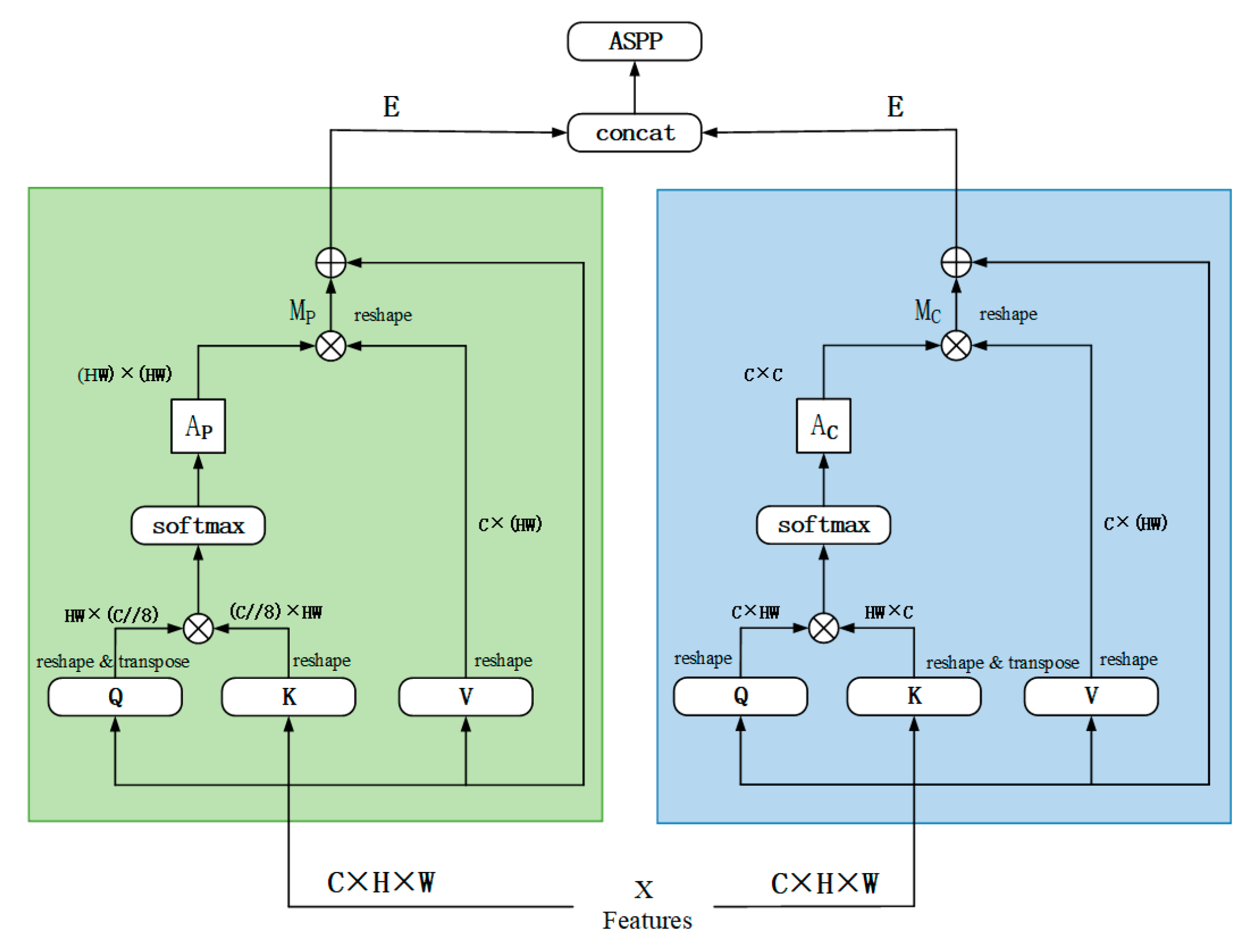

- In the feature fusion stage, we introduce a dual-attention module that combines channel attention and spatial attention: channel attention strengthens the most informative feature channels, and spatial attention highlights the locations of key regions in the image. This yields higher accuracy on the complex patterns and abstract textures of batik and counters the tendency of traditional methods to overlook subtle features and key textures.

- We combine a hierarchical fusion decoding module with a data augmentation strategy. The decoder fully exploits the complementarity between high-level semantics and low-level details, improving the model's adaptability to diverse interference and deformation, while augmentation operations such as rotation, flipping, and cropping reduce the risk of overfitting on few-shot samples and further strengthen the model's robustness and generalization.
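Because a segmentation label is defined per pixel, geometric augmentations such as the rotation, flipping and cropping listed above must be applied identically to an image and its label mask. The following stdlib-only sketch shows one way to do this on nested-list images; the function names, the restriction to 90° rotations, the flip probability of 0.5 and the crop size are illustrative assumptions, not the paper's stated settings.

```python
import random


def rot90(a):
    """Rotate a 2-D nested list 90 degrees counter-clockwise (pixels may be tuples, e.g. RGB)."""
    return [list(row) for row in zip(*a)][::-1]


def hflip(a):
    """Mirror a 2-D nested list left to right."""
    return [row[::-1] for row in a]


def crop(a, top, left, h, w):
    """Take an h x w window whose top-left corner is (top, left)."""
    return [row[left:left + w] for row in a[top:top + h]]


def augment_pair(image, mask, crop_size, rng):
    """Apply the same random rotation, flip and crop to an image and its mask.

    crop_size must fit the image in both orientations, since rotation swaps
    height and width for non-square inputs.
    """
    for _ in range(rng.randrange(4)):          # 0 to 3 quarter turns
        image, mask = rot90(image), rot90(mask)
    if rng.random() < 0.5:                     # horizontal flip with probability 0.5
        image, mask = hflip(image), hflip(mask)
    ch, cw = crop_size
    h, w = len(image), len(image[0])
    top, left = rng.randint(0, h - ch), rng.randint(0, w - cw)
    return crop(image, top, left, ch, cw), crop(mask, top, left, ch, cw)


if __name__ == "__main__":
    rng = random.Random(0)
    image = [[r * 4 + c for c in range(4)] for r in range(4)]   # toy 4x4 image
    mask = [[v % 2 for v in row] for row in image]               # toy label mask
    img_aug, mask_aug = augment_pair(image, mask, (3, 3), rng)
    # Every surviving pixel still carries its original label.
    assert all(m == v % 2 for ir, mr in zip(img_aug, mask_aug) for v, m in zip(ir, mr))
    print(img_aug, mask_aug)
```

Sharing one random draw per transform between image and mask is the essential design point; photometric augmentations (brightness, contrast) would instead be applied to the image alone.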

2. Related Works

3. Methods

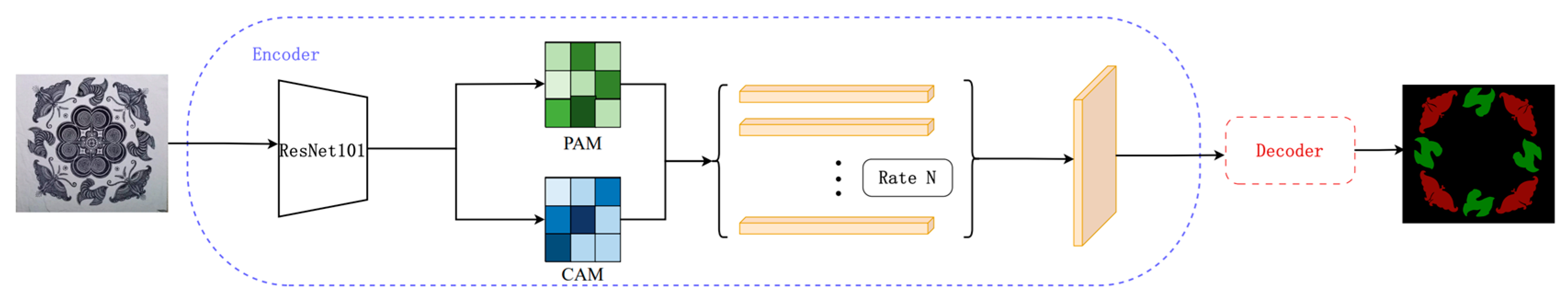

3.1. Modeling Framework

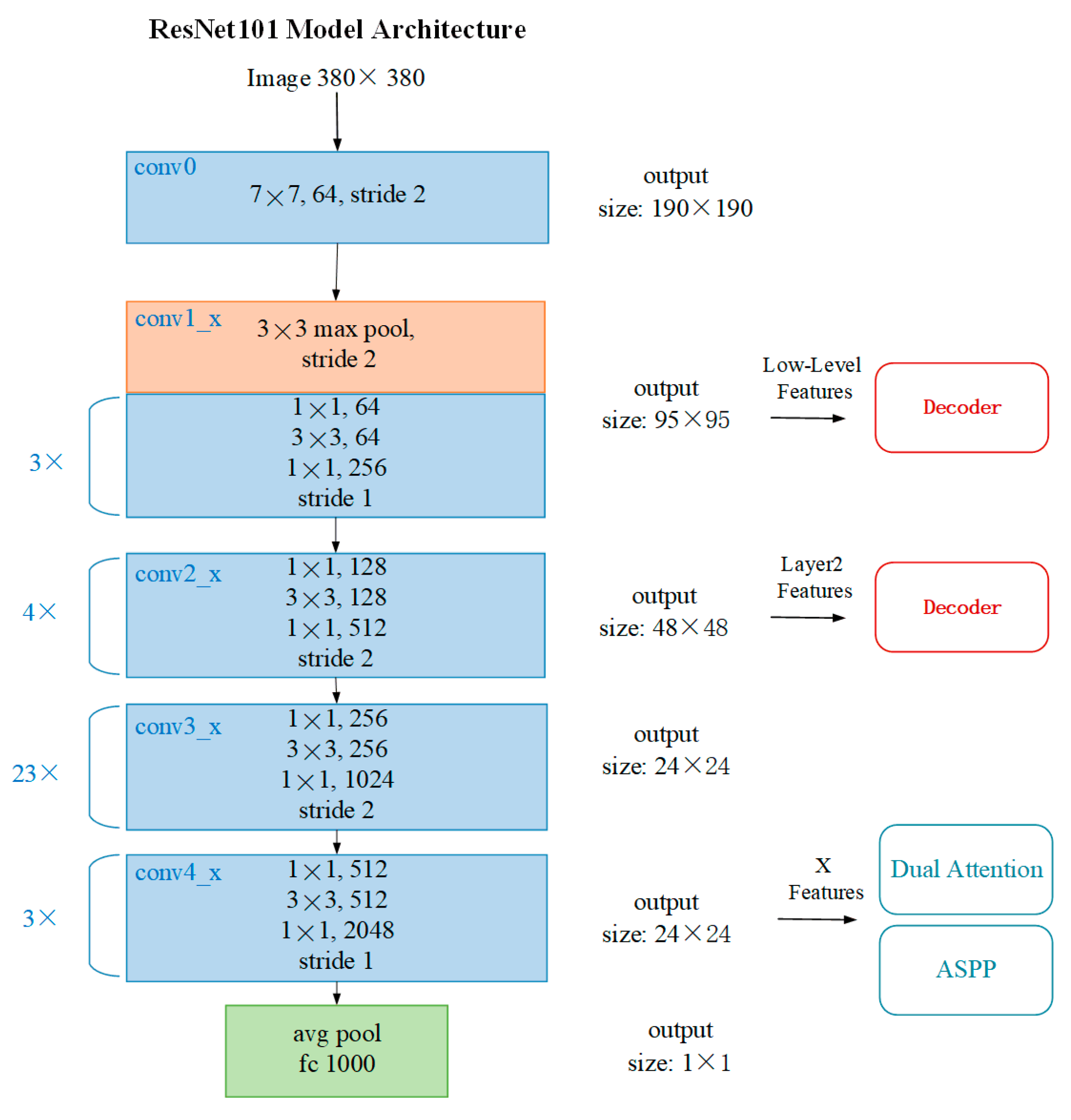

3.2. Backbone Feature Extraction Module

3.3. Dual Attention Feature Enhancement Module

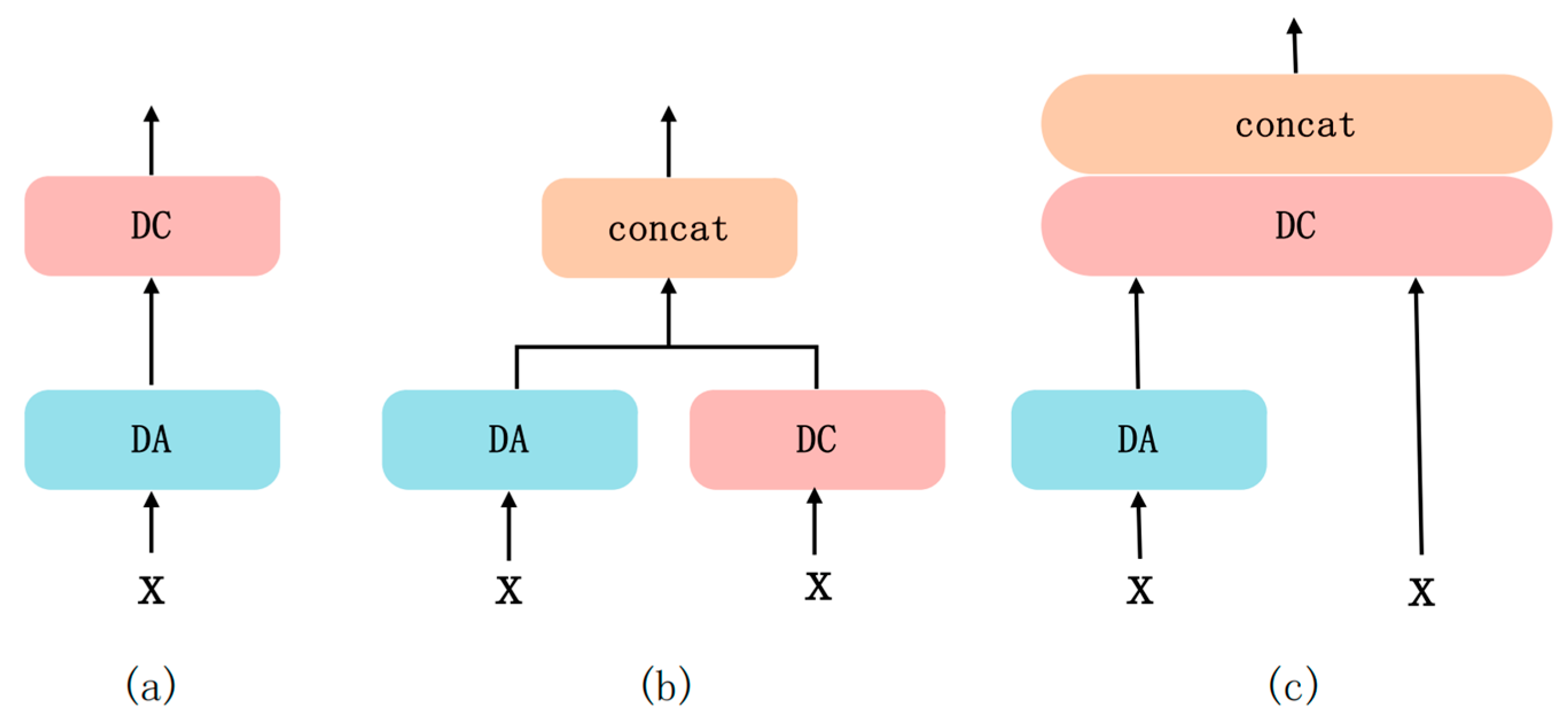
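The dual-attention module described in the contributions pairs channel attention with spatial attention. A common recipe for such a block is the CBAM style (Woo et al., 2018): channel weights come from a shared MLP applied to globally average- and max-pooled channel descriptors, and a spatial map comes from a convolution over the channel-wise mean and max maps, each passed through a sigmoid. The dependency-free sketch below follows that recipe on a C × H × W nested list; the weights, hidden size, kernel size and channel-then-spatial ordering are assumptions for illustration, not the paper's exact module.

```python
import math


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def channel_attention(x, w1, w2):
    """Reweight the channels of x (C x H x W) with a shared two-layer MLP.

    w1: hidden x C weights, w2: C x hidden weights. Returns (output, channel weights).
    """
    avg = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in x]  # global average pool
    mx = [max(map(max, ch)) for ch in x]                           # global max pool

    def mlp(v):
        hidden = [max(0.0, sum(w * vi for w, vi in zip(row, v))) for row in w1]  # ReLU
        return [sum(w * h for w, h in zip(row, hidden)) for row in w2]

    weights = [sigmoid(a + m) for a, m in zip(mlp(avg), mlp(mx))]
    out = [[[v * s for v in row] for row in ch] for ch, s in zip(x, weights)]
    return out, weights


def spatial_attention(x, k_avg, k_max):
    """Reweight pixels of x using a k x k conv over channel-wise mean and max maps."""
    c, h, w = len(x), len(x[0]), len(x[0][0])
    avg = [[sum(x[ci][i][j] for ci in range(c)) / c for j in range(w)] for i in range(h)]
    mx = [[max(x[ci][i][j] for ci in range(c)) for j in range(w)] for i in range(h)]
    k, p = len(k_avg), len(k_avg) // 2

    def conv_at(m, ker, i, j):
        # Zero-padded "same" cross-correlation evaluated at pixel (i, j).
        s = 0.0
        for u in range(k):
            for v in range(k):
                ii, jj = i + u - p, j + v - p
                if 0 <= ii < h and 0 <= jj < w:
                    s += ker[u][v] * m[ii][jj]
        return s

    att = [[sigmoid(conv_at(avg, k_avg, i, j) + conv_at(mx, k_max, i, j))
            for j in range(w)] for i in range(h)]
    out = [[[x[ci][i][j] * att[i][j] for j in range(w)] for i in range(h)] for ci in range(c)]
    return out, att


def dual_attention(x, w1, w2, k_avg, k_max):
    """Channel attention followed by spatial attention."""
    y, _ = channel_attention(x, w1, w2)
    z, _ = spatial_attention(y, k_avg, k_max)
    return z


if __name__ == "__main__":
    x = [[[1.0, 2.0], [3.0, 4.0]], [[0.0, 0.0], [0.0, 1.0]]]    # C=2, H=W=2
    w1, w2 = [[0.5, -0.5]], [[1.0], [-1.0]]                      # hypothetical MLP weights
    ker = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]    # hypothetical 3x3 kernel
    print(dual_attention(x, w1, w2, ker, ker))
```

In a trained network the MLP and convolution weights are learned; with all-zero weights every sigmoid outputs 0.5, which makes the block easy to sanity-check.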

3.4. Lightweight Multi-Scale Feature Fusion Module

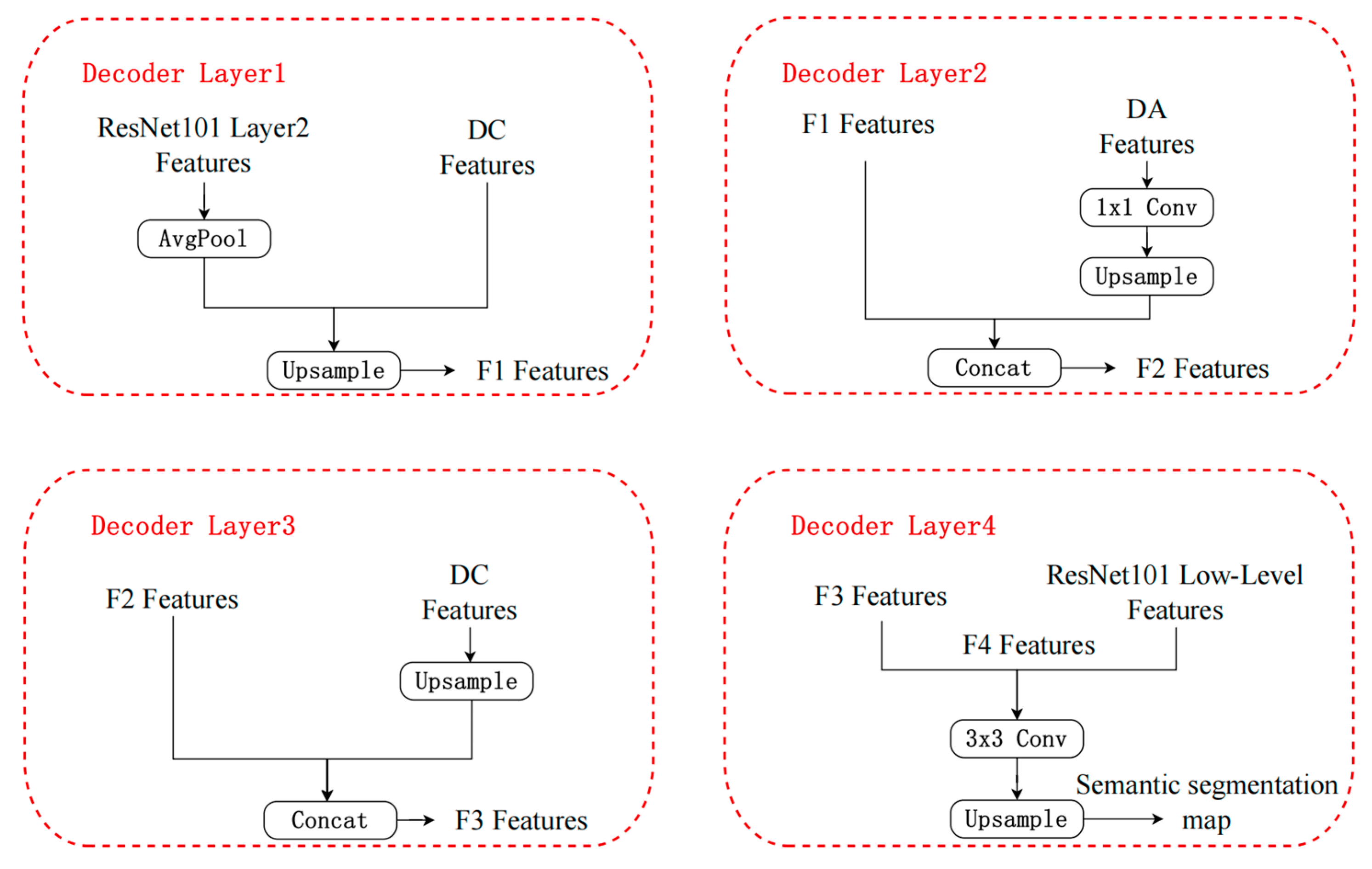
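The multi-scale design described in the contributions relies on atrous (dilated) convolution to enlarge the receptive field without adding parameters. Under the standard formulas, a k-tap kernel with dilation rate d spans k + (k − 1)(d − 1) pixels per axis, and each stride-1 layer in a stack grows the receptive field by that span minus one. The sketch below computes these quantities and implements a 1-D dilated convolution; the rates (1, 2, 4) are hypothetical branch settings, not the rates used in the paper.

```python
def effective_kernel_size(k: int, d: int) -> int:
    """Spatial extent covered by a k-tap kernel with dilation rate d."""
    return k + (k - 1) * (d - 1)


def stacked_receptive_field(layers):
    """Receptive field of a stack of stride-1 convolutions.

    `layers` is a list of (kernel_size, dilation) pairs; each layer grows the
    receptive field by (effective_kernel_size - 1).
    """
    rf = 1
    for k, d in layers:
        rf += effective_kernel_size(k, d) - 1
    return rf


def dilated_conv1d(signal, weights, dilation):
    """'Valid' 1-D dilated convolution (cross-correlation, as in CNN libraries)."""
    span = effective_kernel_size(len(weights), dilation)
    out = []
    for start in range(len(signal) - span + 1):
        # Taps are spaced `dilation` samples apart, skipping the samples in between.
        out.append(sum(w * signal[start + i * dilation] for i, w in enumerate(weights)))
    return out


if __name__ == "__main__":
    # Hypothetical parallel branches: the same 3x3 kernel at different dilation rates.
    for d in (1, 2, 4):
        e = effective_kernel_size(3, d)
        print(f"rate {d}: a 3x3 kernel covers a {e}x{e} window")
    print(stacked_receptive_field([(3, 2), (3, 2)]))  # 9
    print(stacked_receptive_field([(3, 1), (3, 1)]))  # 5
    print(dilated_conv1d(list(range(10)), [1, 1, 1], dilation=2))  # [6, 9, 12, 15, 18, 21]
```

Running branches with different rates in parallel and fusing their outputs gives each location context at several scales at once, which is the motivation for pairing dilation with a multi-path structure.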

3.5. Hierarchical Semantic Decoding Module
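The decoder ablation compares fusing different subsets of backbone stages (Layer1 to Layer4). One common way to fuse such stages hierarchically is top-down: upsample the deepest map to the next shallower resolution, combine the two, and repeat down to Layer1. The sketch below illustrates this with nearest-neighbour upsampling and element-wise addition on single-channel 2-D maps. The stage strides (4, 8, 16, 32, as in a standard ResNet-101), the use of addition rather than concatenation, the absence of 1×1 projection layers and the helper names are all simplifying assumptions, not the paper's exact decoder.

```python
def upsample_nearest(a, factor):
    """Nearest-neighbour upsampling of a 2-D nested list by an integer factor."""
    return [[v for v in row for _ in range(factor)] for row in a for _ in range(factor)]


def add_maps(a, b):
    """Element-wise sum of two equally sized 2-D maps."""
    assert len(a) == len(b) and len(a[0]) == len(b[0]), "resolution mismatch"
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def top_down_fuse(features, strides):
    """Fuse maps ordered shallow-to-deep (e.g. Layer1..Layer4) at Layer1's resolution.

    features[i] has output stride strides[i]; starting from the deepest map, the
    running result is upsampled to the next shallower resolution and added to it.
    """
    fused = features[-1]
    for i in range(len(features) - 2, -1, -1):
        factor = strides[i + 1] // strides[i]   # 1 if a stage keeps the resolution
        fused = add_maps(features[i], upsample_nearest(fused, factor))
    return fused


if __name__ == "__main__":
    size = 32                        # hypothetical input resolution
    strides = [4, 8, 16, 32]         # assumed standard ResNet-101 stage strides
    feats = [[[1.0] * (size // s) for _ in range(size // s)] for s in strides]
    out = top_down_fuse(feats, strides)
    print(len(out), len(out[0]), out[0][0])                     # 8 8 4.0
    # A two-stage variant, analogous to the Layer1 + Layer4 decoder:
    print(top_down_fuse([feats[0], feats[3]], [4, 32])[0][0])   # 2.0
```

Dropping intermediate stages, as in the smaller decoder variants, simply shortens the list passed to `top_down_fuse`; the deep map is then upsampled by a larger factor in one step.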

4. Experimental Evaluation

4.1. Datasets

4.2. Experimental Environment and Parameter Settings

4.3. Evaluation Indicators
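The result tables report mean intersection over union (mIoU) and pixel accuracy (PA). Under their standard definitions, both follow from a confusion matrix M in which M[i][j] counts pixels of true class i predicted as class j: PA = Σ_i M[i][i] / Σ_i Σ_j M[i][j], and IoU_i = M[i][i] / (Σ_j M[i][j] + Σ_j M[j][i] − M[i][i]), averaged over classes to give mIoU. The stdlib-only sketch below implements these definitions; skipping classes absent from both ground truth and prediction is an assumed convention, since toolkits differ on this point.

```python
def confusion_matrix(gt, pred, num_classes):
    """m[i][j] counts pixels with true class i predicted as class j (flattened label lists)."""
    m = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(gt, pred):
        m[t][p] += 1
    return m


def pixel_accuracy(m):
    """Fraction of all pixels whose predicted class equals the true class."""
    total = sum(map(sum, m))
    return sum(m[i][i] for i in range(len(m))) / total


def mean_iou(m):
    """Average per-class IoU = TP / (TP + FP + FN)."""
    ious = []
    for i in range(len(m)):
        tp = m[i][i]
        fn = sum(m[i]) - tp                  # true class i, predicted as another class
        fp = sum(row[i] for row in m) - tp   # predicted as i, true class is another
        denom = tp + fp + fn
        if denom > 0:                        # skip classes absent from both maps
            ious.append(tp / denom)
    return sum(ious) / len(ious)


if __name__ == "__main__":
    gt = [0, 0, 1, 1, 2, 2]      # toy ground-truth labels
    pred = [0, 1, 1, 1, 2, 0]    # toy predictions
    m = confusion_matrix(gt, pred, 3)
    print(f"PA = {pixel_accuracy(m):.4f}, mIoU = {mean_iou(m):.4f}")  # PA = 0.6667, mIoU = 0.5000
```

Accumulating one confusion matrix over the whole test set before computing the ratios, rather than averaging per-image scores, is a common convention; PA is typically higher than mIoU because it is dominated by large, easy classes such as background.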

4.4. Ablation Study

4.4.1. Encoder Ablation Experiment

4.4.2. Decoder Ablation Experiment

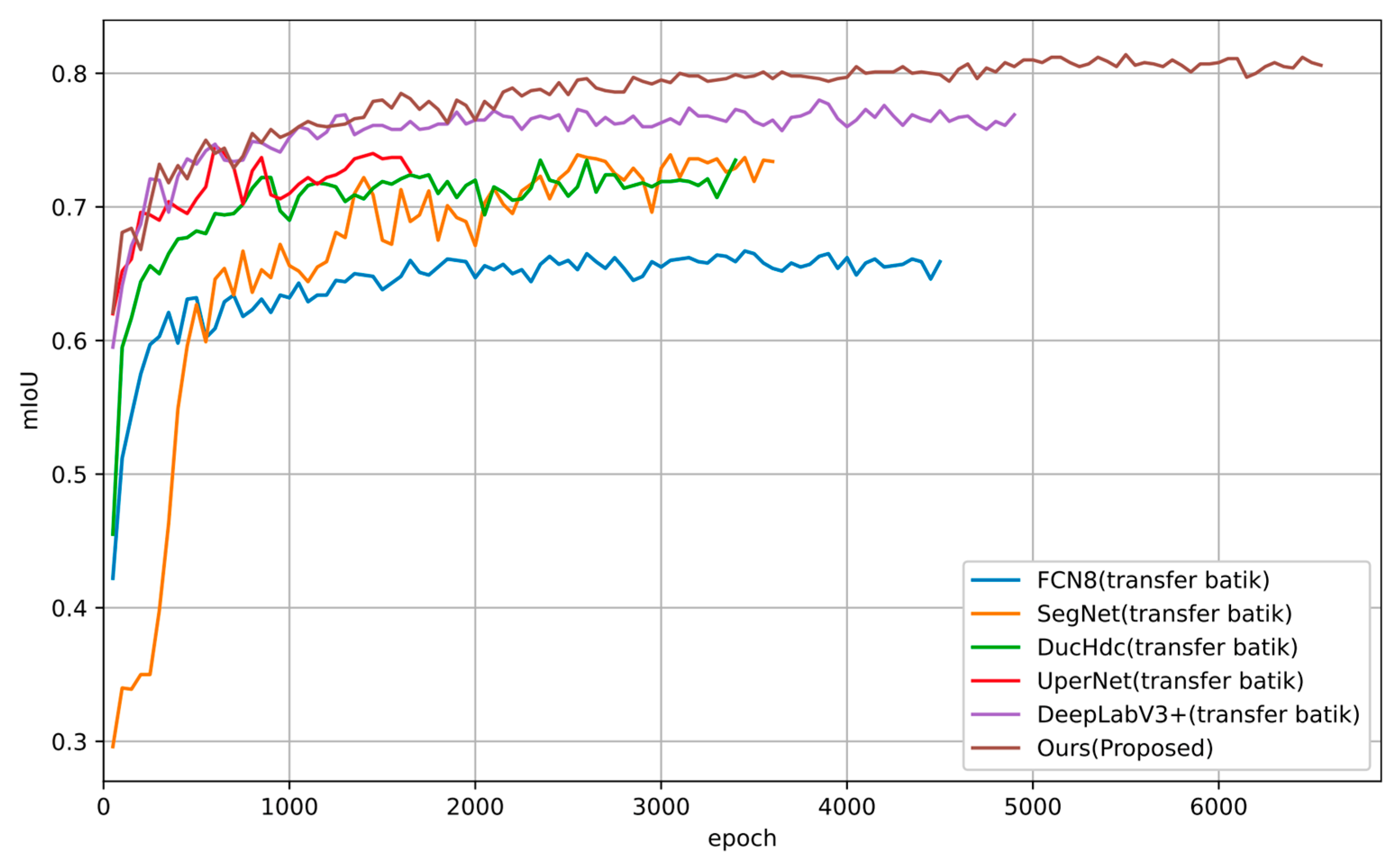

4.5. Comparative Experiment

4.5.1. Comparative Experiments of Multiscale Feature Fusion Modules

4.5.2. Dominant Model Comparison Experiment

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Backbone | Description | mIoU | PA |
|---|---|---|---|
| ResNet101 | None | 54.51% | 82.37% |
| ResNet101 | Dual Attention Feature Enhancement Module | 76.02% | 91.34% |
| ResNet101 | Dual Attention + Multiscale Features | 79.22% | 92.47% |

| Backbone | Description | mIoU | PA |
|---|---|---|---|
| ResNet101 | Decoder (Layer1+Layer4) | 73.43% | 90.65% |
| ResNet101 | Decoder (Layer1+Layer2+Layer4) | 75.65% | 91.48% |
| ResNet101 | Decoder (Layer1+Layer2+Layer3+Layer4) | 79.22% | 92.47% |

| Backbone | Description | mIoU | PA |
|---|---|---|---|
| ResNet101 | Series | 77.89% | 92.32% |
| ResNet101 | Parallel | 75.79% | 91.44% |
| ResNet101 | Integration | 79.22% | 92.47% |

| Method | Params | mIoU | PA |
|---|---|---|---|
| FCN-8s | 134.28M | 64.69% | 86.72% |
| SegNet | 72.55M | 67.70% | 87.99% |
| DUC-HDC | 69.17M | 70.58% | 89.64% |
| UperNet | 126.08M | 71.46% | 89.89% |
| DeepLabV3+ | 59.34M | 75.92% | 91.52% |
| Proposed | 76.65M | 79.22% (+3.30 pp vs. DeepLabV3+) | 92.47% (+0.95 pp vs. DeepLabV3+) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.