Submitted: 10 March 2026

Posted: 11 March 2026

Abstract

Keywords:

1. Introduction

2. Literature Review

2.1. Anticipatory AI Governance

2.2. Mixed-Methods to Shed Light on AI’s and Supercomputing’s Social Impact

2.3. AI’s and Supercomputing’s Social Impact

3. Mixed Methods: Qualitative Action Research and Quantitative Online Survey

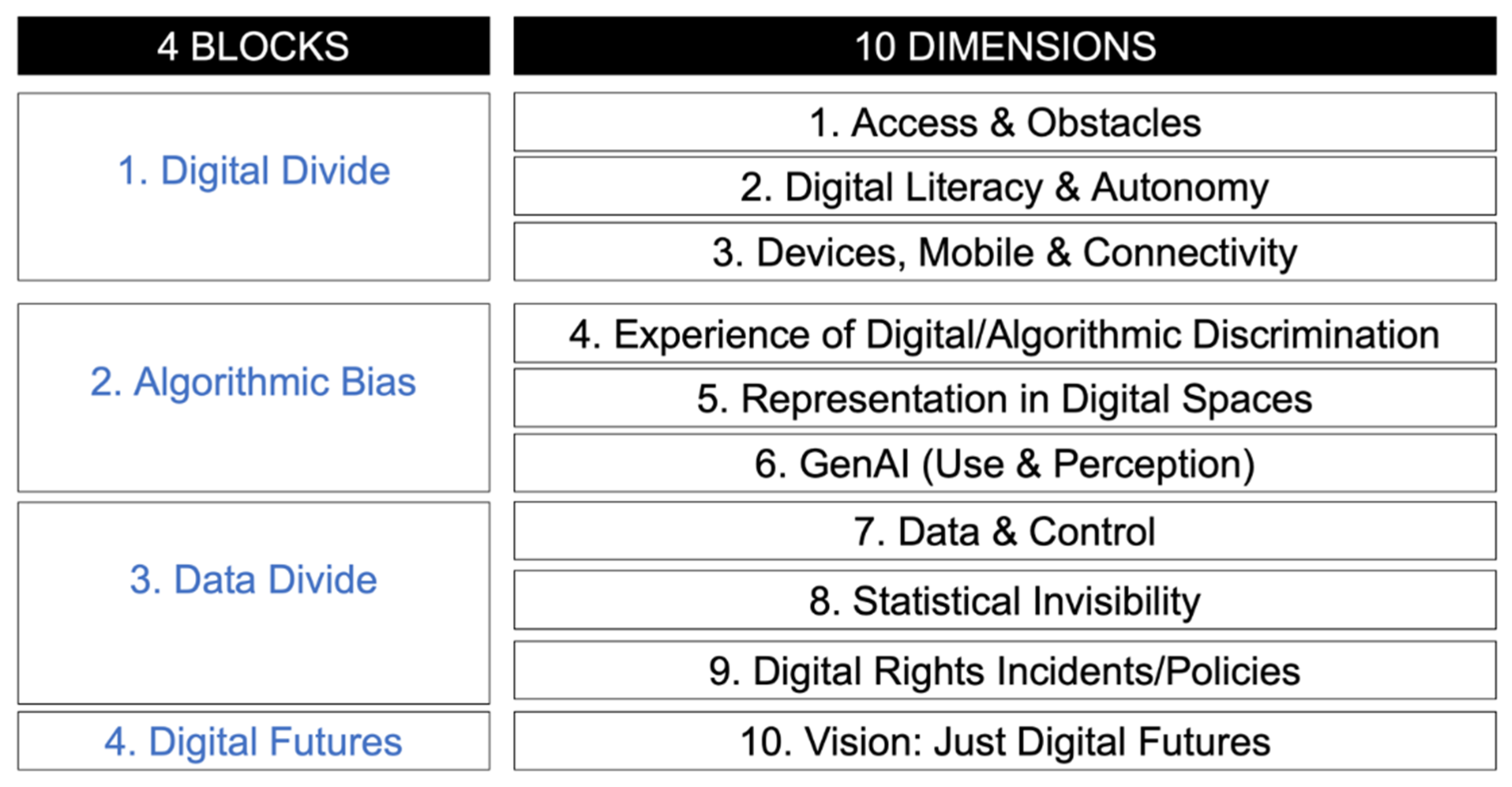

3.1. Qualitative: Action Research with Three Stakeholder Groups Through an Analytical Decalogue

- 1. Digital Divide

- 2. Algorithmic Bias

- 3. Data Divide

- 4. Digital Futures

| Block | Dimension | Description | Policy Relevance |
|---|---|---|---|
| 1. Digital Divide | 1. Access & Obstacles | Persistent connectivity barriers affecting migrants, rural residents, and precarious users when accessing digital public services. | Highlights infrastructure and accessibility gaps requiring targeted territorial policies. |
| 1. Digital Divide | 2. Digital Literacy & Autonomy | Digital inclusion requires cognitive capacity to navigate, interpret, and critically assess digital systems and online information. | Emphasises training, empowerment, and algorithmic literacy as central components of inclusion strategies. |
| 1. Digital Divide | 3. Devices, Mobile & Connectivity | High dependence on mobile devices among vulnerable users due to affordability constraints and limited access to alternative infrastructures. | Demonstrates the importance of device accessibility and affordable connectivity policies. |
| 2. Algorithmic Bias | 4. Experiences of Digital/Algorithmic Discrimination | Cases of automated refusals, discriminatory content filtering, and opaque algorithmic processes affecting marginalised communities. | Signals risks in automated public services and the need for algorithmic accountability mechanisms. |
| 2. Algorithmic Bias | 5. Representation in Digital Spaces | Communities reported stereotypes or invisibility within institutional digital platforms and algorithmic systems. | Points to the need for inclusive design and culturally responsive digital governance. |
| 2. Algorithmic Bias | 6. Generative AI (Use & Perception) | At the summer school, CSOs/NGOs expressed interest in generative AI tools but lacked training and institutional support for safe and ethical usage. | Suggests the need for public sector guidance and digital capacity-building around generative AI. |
| 3. Data Divide | 7. Data & Control | CSOs/NGOs questioned who controls data infrastructures and under what governance frameworks. | Connects territorial policy with debates on data sovereignty and public data governance. |
| 3. Data Divide | 8. Statistical Invisibility | Marginalised groups are frequently absent from official datasets, leading to under-representation in policy design. | Demonstrates the need for inclusive data infrastructures and improved statistical representation. |
| 3. Data Divide | 9. Digital Rights Incidents / Policy Gaps | Experiences of data breaches, harmful chatbots, and automated decisions often lack clear complaint or redress mechanisms. | Indicates the need for stronger digital rights protections and oversight frameworks. |
| 4. Digital Futures | 10. Vision: Just Digital Futures | CSOs/NGOs envisioned inclusive digital ecosystems characterised by multilingual tools, community platforms, and ethical AI. | Provides normative direction for democratic and inclusive digital governance. |

3.1.1. Six Civil Society Organizations/NGOs

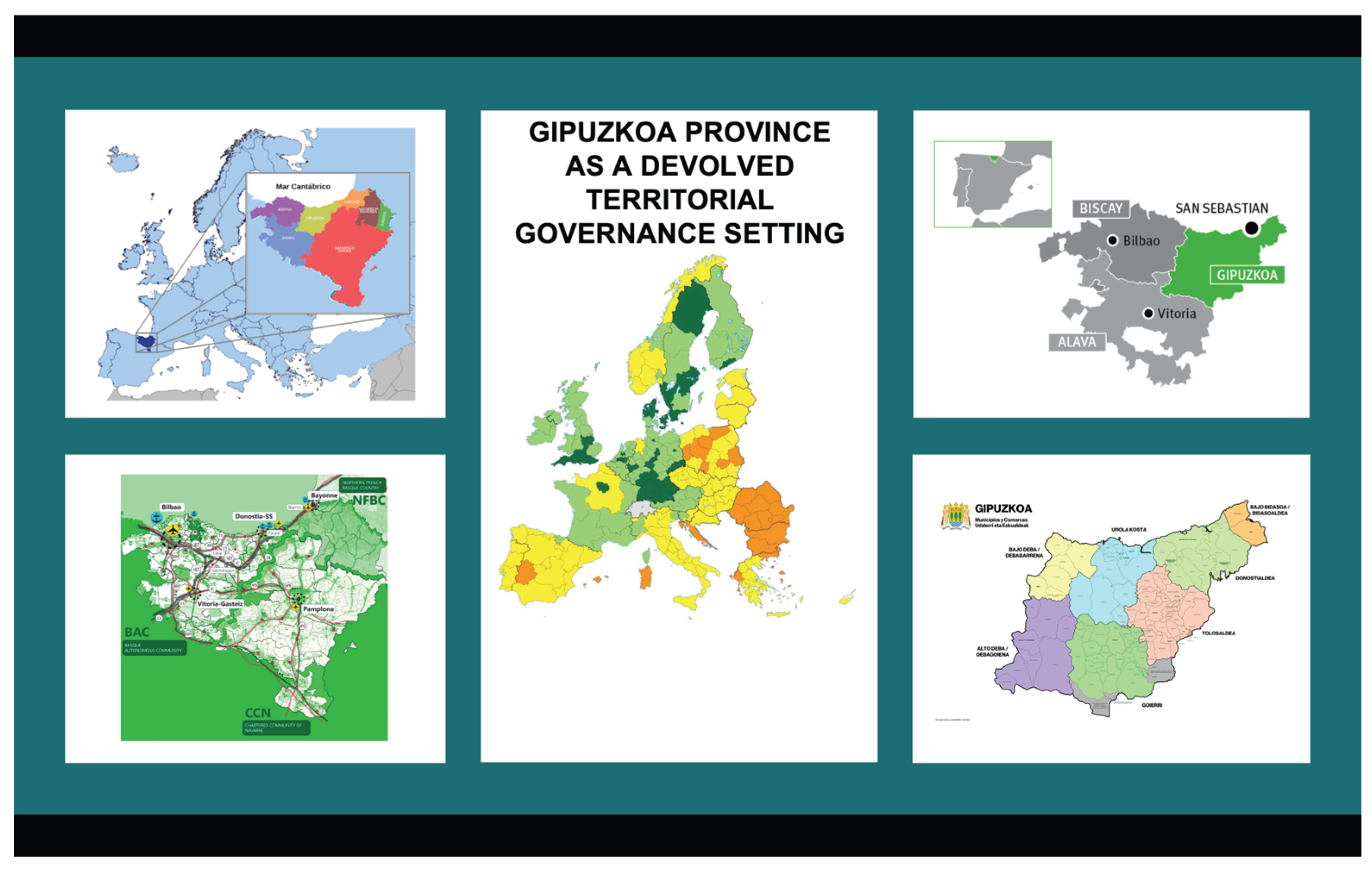

3.1.2. Seven Directorates with the Provincial Council of Gipuzkoa

3.1.3. Eleven Municipalities

3.2. Quantitative: Online Survey with Citizens (N=911)

4. Discussion: Digital Inclusion Index and AI Governance Perception Index

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix

Questionnaire (Translated from Basque and Spanish into English)


| Method | Technique | Multistakeholder Framework | Number |
|---|---|---|---|
| Qualitative | Action Research (via a Decalogue) | CSO/NGOs | 6 |
| Qualitative | Action Research (via a Decalogue) | Directorates | 7 |
| Qualitative | Action Research (via a Decalogue) | Municipalities | 11 |
| Quantitative | Online Survey | Citizens | 911 |

| | DIGITAL DIVIDE | | | ALGORITHMIC BIAS | | | DATA DIVIDE | | | DIGITAL FUTURES |
|---|---|---|---|---|---|---|---|---|---|---|
| NGO | 1. Access & Obstacles | 2. Digital Literacy & Autonomy | 3. Devices, Mobile & Connectivity | 4. Experience of Algorithmic Discrimination | 5. Representation in Digital Spaces | 6. GenAI (Use & Perception) | 7. Data & Control | 8. Statistical Invisibility | 9. Digital Rights Incidents / Policies | 10. Vision: Just Digital Futures |
| Jatorkin | Migrants face language barriers in digital public services. | Need for multilingual digital training and support. | Strong reliance on smartphones due to affordability constraints. | Automated systems sometimes reject documents or identity verification. | Migrants (e.g., women) often invisible in institutional digital interfaces. | Interest in GenAI for translation and administrative help. | Concerns about how migration data are used. | Migrant communities underrepresented in official statistics. | Few mechanisms to contest automated decisions. | Multilingual inclusive public digital services. |
| BidezBide | Refugees depend on NGOs to access digital administrative services. | Limited autonomy due to legal and documentation barriers. | Shared devices and unstable connectivity common. | Administrative algorithms may produce automatic rejections. | Refugees rarely represented in digital governance narratives. | GenAI perceived as useful for translation and orientation. | Concerns about data surveillance and personal records. | Refugees frequently absent from policy datasets. | Lack of legal clarity around digital rights protections. | Rights-based digital services ensuring dignity and accessibility. |
| Haurralde | Women in vulnerable contexts face multiple barriers to digital access. | Digital literacy linked to empowerment and participation. | Household device sharing limits autonomy. | Online harassment and gender discrimination occur on digital platforms. | Women underrepresented in digital governance debates. | Interest in GenAI for education and employment opportunities. | Concerns regarding gender data gaps. | Limited gender-disaggregated data in digital policy. | Weak institutional responses to online harassment. | Feminist digital infrastructures prioritising safety and empowerment. |
| Elkartu | Accessibility barriers remain in digital public services. | Need for accessible training and assistive technologies. | Assistive technologies often expensive or incompatible. | Automated systems sometimes ignore accessibility needs. | Disabled users excluded from digital design processes. | GenAI may enhance accessibility but also risks bias. | Need for accessible data governance frameworks. | Disability statistics often incomplete. | Social media content containing hate speech. | Universal design embedded in digital public services. |
| Agifugi | Roma community members face connectivity barriers due to unstable housing. | Literacy challenges linked to disrupted education trajectories. | Dependence on low-cost mobile connectivity. | Automated identity checks may trigger suspicion. | Roma community invisible in digital policy debates. | GenAI useful for legal information and translation. | Concerns about biometric data governance. | Roma community often absent from official datasets. | Limited ways to report digital harms. | Transparent digital governance and rights protection. |
| Emigrados Sin Fronteras (ESF) | Infrastructure inequalities drive digital exclusion. Digital identity as a key element through BakQ. | Community-based digital literacy programmes needed. | Territorial connectivity gaps persist. | Algorithmic bias reflects structural inequalities in datasets. | Global South and marginalised perspectives underrepresented. | GenAI requires ethical governance frameworks. | Data governance should prioritise public interest. | Structural invisibility of vulnerable groups in official data. | Need for accountability frameworks for digital harms. | Community-centred digital ecosystems based on social justice. |

| DIGITAL DIVIDE |

ALGORITHMIC BIAS |

DATA DIVIDE | DIGITAL FUTURES | |||||||

| Directorate | 1. Access & Obstacles | 2. Digital Literacy & Autonomy | 3. Devices, Mobile & Connectivity | 4. Algorithmic Discrimination | 5. Representation in Digital Spaces | 6. GenAI (Use & Perception) | 7. Data & Control | 8. Statistical Invisibility | 9. Digital Rights Incidents / Policies | 10. Vision: Just Digital Futures |

| Tax Directorate | Reduce barriers to digital tax services (language/age/low skills) via assistance tools. | Plain-language explanations + training to improve “fiscal autonomy.” | Design GenAI services mobile-first and universally accessible. | Avoid automated “risk profiling” that stigmatizes groups; independent ethical audits. | Diversify internal teams (gender/language/origin) to reduce bias in design/data. One million invoices have been delivered through the TiketBai system with assistance from Multiverse Computing. | Communicate clearly: “AI assists, does not decide”; manage perceptions of AI use. | Data sovereignty architecture; no secondary use without clear authorization. | Expand/repair representativeness (e.g., migrants/older adults) in datasets. | Use Algorithmic Impact Assessments + AI Ethics Charter in taxation domain. | “AI for Tax Justice”: efficiency plus rights plus social trust. |

| Road Directorate: Cross-border / Data Spaces & Sensing | Access barriers framed around cross-border service interoperability and “usable” interfaces for citizens in the border context. | Need for literacy/autonomy to understand data-driven services and cross-border digital processes. | Connectivity & device constraints matter where sensing infrastructures (IoT) and services meet citizens. Note: the availability of MUGI data could be an opportunity. | Risk that automated classifications in border/security/mobility contexts reproduce bias if not governed. | Who is “seen” by border data infrastructures; representation risks for cross-border users. | GenAI seen as useful (support/automation), but requires governance and bounded use cases. | Data spaces governance is central: roles, ownership, permissions across institutions. | “Invisible” populations can be missed if cross-border data standards/datasets exclude them. | Need formal channels/protocols for incidents in sensor/data-space environments. | “Trusted cross-border data spaces” that remain rights-based and inclusion-oriented. |

| Mobility / MUBIL–Landago | Inclusion depends on ensuring mobility-related digital services don’t exclude low-access users. | Literacy/autonomy needed for users and staff to navigate mobility platforms and data-driven services. | Reliance on mobile access is treated as a design constraint in mobility/service delivery. | Automated decisions in mobility/logistics require safeguards against biased routing/priority outcomes. | Representation concerns in how mobility data reflects different communities/territories. | GenAI potential for internal knowledge/support functions; needs clear boundaries. | Strong emphasis on data governance arrangements across partners/ecosystem. Note: the key question is what to do with this data and who should do what; a multistakeholder arrangement is required. | Territorial “blind spots” in mobility data can undermine policy design. | Governance routines needed for harms/complaints when systems affect rights/access. | Place-based, sustainable mobility innovation aligned with inclusion and public value, considering multistakeholder frameworks. |

| Open Governance | Digital access barriers framed as democratic/participation obstacles in public services. | Literacy as civic capability: understanding, navigating, contesting digital procedures. | Device/connectivity constraints treated as structural inequalities affecting participation. | Concern with institutional accountability if automated processes reduce contestability. | Representation as democratic legitimacy: whose voices/data shape institutional systems. | GenAI adoption should be aligned with transparency and public accountability norms. | Data control linked to governance-by-design and institutional responsibility. | Missing data on excluded groups undermines evidence-based public policy. | Need clear accountability/oversight mechanisms for digital harms and failures. | Democratic innovation agenda: digital transformation that strengthens legitimacy and trust. |

| Environment / Territorial governance: EcoTechnoPolitical challenge | Access obstacles understood via service design and territorial heterogeneity (who can actually use digital channels). | Literacy/autonomy relevant for understanding complex environmental info/services and procedures. | Connectivity/device dependence matters in territorial/environmental service contexts. | Algorithmic bias risks in classification/assessment tools if datasets or proxies are skewed. | Representation issues: which territories/groups are made visible in environmental data. | GenAI usefulness acknowledged, but needs governance, constraints, and verification. | Data governance stressed (sharing, stewardship, legality) in environmental data ecosystems: Data Cooperatives as an opportunity | “Invisibility” arises when monitoring/data collection under-captures some areas/groups. | Need policies for incident response and safeguards where automated outputs affect rights/services. | Anticipatory, rights-aware digital futures integrated with sustainability aims. |

| Gender Equality | Women constitute a structurally vulnerable population in increasingly digitised private and public spheres, including exposure in social media environments. | Digital literacy and autonomy are essential for women to access services independently and participate in digital governance processes. | Mobile-first digital realities require services that do not assume access to high-end devices or stable infrastructures. | Algorithmic bias in AI systems may reproduce gender stereotypes and structural inequalities if governance mechanisms are not implemented. | Persistent concerns regarding the representation and visibility of women in digital interfaces, technological sectors, and AI datasets. | Generative AI is perceived as a promising tool but requires guidance, ethical safeguards, and gender-sensitive governance frameworks. | Data governance must ensure control, privacy, and responsible reuse of gender-related datasets. | Lack of gender-disaggregated data limits the ability of institutions to design inclusive and evidence-based policies. | Online harassment and gender-based digital violence (including social media behaviour) remain significant governance challenges. | A feminist and inclusive digital transition where AI governance integrates equality, transparency, and democratic accountability. |

| Administration Modernization | Access improved through conversational interfaces that reduce friction (if designed for inclusion). | Literacy support: chatbots can guide procedures, but must avoid over-reliance and provide alternatives. Human-AI Governance (HAIG) | Works well on mobile; must be accessibility-compliant and multilingual where relevant. | Chatbot outputs can discriminate or mislead; requires monitoring, testing, and guardrails. | Representation: language, disability access, and cultural fit in conversational design. | Chatbot as high-impact GenAI use case; needs bounded scope and human oversight. | Governance over training data, logs, retention, and vendor dependencies. | Interaction logs can reveal “who is missing” and where services fail—if ethically governed. | Incident protocols needed (hallucinations, harmful advice, privacy failures). | “Trustworthy admin chatbot”: transparency + oversight + inclusion by design. |

| | DIGITAL DIVIDE | | | ALGORITHMIC BIAS | | | DATA DIVIDE | | | DIGITAL FUTURES |

| Municipality | 1. Access & Obstacles | 2. Digital Literacy & Autonomy | 3. Devices, Mobile & Connectivity | 4. Algorithmic Discrimination | 5. Representation in Digital Spaces | 6. GenAI (Use & Perception) | 7. Data & Control | 8. Statistical Invisibility | 9. Digital Rights Incidents / Policies | 10. Vision: Just Digital Futures |

| Hernani | Many residents struggle with digital procedures and often need to visit the town hall in person for assistance. | Citizens frequently require support to complete digital processes, indicating limited autonomy. | Older devices and slow internet connections remain common among vulnerable groups. | Public digital services are often perceived as not designed for disadvantaged users. | Vulnerable populations rarely appear in digital platforms or public communication channels. | Generative AI is sometimes perceived as a control tool rather than a support mechanism. | Data governance issues are considered secondary within local service priorities. Hernani is pushing ahead with a municipalist agenda called Hernani Burujabe, based on digital sovereignty principles [125]. | Some communities remain absent from official administrative registers. | Limited local guidance exists for handling digital rights issues. | Future governance in Hernani is to be built on a municipalist agenda modelled on that of Barcelona in 2015-2018. |

| Zarautz | Many citizens still lack basic digital access to public services. | Informal mutual support networks help residents navigate digital procedures. | Smartphones represent the dominant form of access to digital services. | Hate speech and discriminatory discourse appear frequently on digital platforms. | Some communities are represented through stigmatizing narratives online. | Generative AI adoption remains limited and is often viewed with distrust. | Data infrastructures are perceived as distant and difficult for citizens to influence. | Certain social groups remain statistically invisible within administrative datasets. | Municipal competences regarding digital rights remain unclear: is this a competence of the ICT department or of the Inclusion department? | Digital inclusion should guide future digital policy development. |

| Ordizia | Digital access varies significantly between neighbourhoods. | Low levels of digital literacy hinder effective participation in digital services. | Mobile phones constitute the only digital access point for many residents. | Structural discrimination may emerge through automated systems and administrative procedures. | Persistent stereotypes shape the digital representation of certain communities. | Generative AI remains perceived as distant and unfamiliar. | Limited knowledge exists regarding how municipal data infrastructures function. | Some communities remain outside digital governance processes. | Municipal digital rights policies remain insufficiently defined. | Ethical reflection should guide future digital governance. |

| Urretxu | Digital services exist but often require support or mediation to be effectively used. | Digital literacy varies strongly depending on education level. | Connectivity infrastructure is relatively functional but unevenly used. | Digital environments are sometimes perceived as potentially risky spaces. | Municipal communication campaigns attempt to ensure inclusive representation. | Safe and responsible use of AI technologies still requires institutional development. | Risks associated with data governance are not always clearly understood. | Some groups attempt to increase visibility through community data initiatives. | Follow-up mechanisms for digital harms remain limited. | Transparency should guide future digital transformation policies. |

| Arrasate | Cultural and community centres function as key access points to digital services. | Citizens’ digital autonomy remains very limited without institutional support. | Access frequently depends on shared community infrastructures. | Algorithmic bias is sometimes perceived in automated systems. | Digital infrastructures are not considered neutral by users. | Fear and uncertainty dominate perceptions of generative AI technologies. | Data governance is rarely prioritised within municipal decision-making. | Digital representations of communities may project inaccurate images. | Institutional roles in protecting digital rights remain unclear. | Policies should aim at fair and inclusive digital governance. |

| Donostia | The Inclusion department has not conducted such an observation so far. Who should be in charge of digital inclusion? | Public awareness of digital risks and rights remains relatively low. | Public innovation spaces (e.g., Tabakalera) provide alternative access infrastructures. | Online narratives may criminalise vulnerable groups. | Negative digital narratives often shape public perceptions. | Generative AI is seen as having strong potential but also generating confusion. | Data governance sometimes evokes “Big Brother” concerns among citizens. | Risks of data manipulation are frequently discussed. | Lack of training limits understanding of digital rights. | Digital empowerment should guide the development of future governance models. |

| Errenteria | Digital access depends heavily on public spaces and shared infrastructures. | Citizens’ autonomy in digital environments remains very limited. | Women in particular often rely on outdated devices. | Online discourse may reinforce social roles and criminalisation narratives. | Hate speech is frequently present in digital spaces. | Generative AI is sometimes perceived as exclusionary. | Citizens often feel subject to surveillance and control. | Lack of integrated data systems limits understanding of social realities. | Protection mechanisms for digital harms remain insufficient. | More institutional resources are required to ensure inclusive digital governance. |

| Eibar | Many citizens still rely on in-person administrative procedures. | Basic digital skills are lacking among parts of the population. | Mobile phones represent the only digital access channel for many residents. | Online environments are sometimes perceived as spaces of hostility. | Some groups remain difficult to identify or represent in digital datasets. | Adoption of generative AI is conditional and cautious. | Citizens sometimes perceive increasing digital restrictions. | Disabilities and accessibility needs are often underrepresented in datasets. | Municipal competences regarding digital rights protection are limited. | Policies should prioritise digital empowerment. |

| Pasaia | A significant generational digital divide persists. | Limited critical engagement with digital systems among some residents. | Moderate smartphone usage represents the main access channel. | Digital systems may fail to support social integration. | Digital representations often fail to reflect local realities. | Generative AI remains largely unknown among many citizens. | Data use is often perceived as restrictive rather than empowering. | Increased visibility may sometimes generate harmful stereotyping. | Training on digital rights remains insufficient. | Inclusive public spaces should guide digital futures. |

| Tolosaldea | Small municipalities face resource constraints that affect digital inclusion policies. Note: this contrasts with landago.eus in Goierri county. | Citizens’ use of digital services remains very limited. | Digital connectivity often occurs collectively through shared infrastructures. | Housing and employment barriers intersect with digital exclusion. | Representation of vulnerable groups remains weak. | Generative AI governance is often framed through a logic of institutional control. | Citizens often perceive data governance as risky. | Rural territories remain statistically invisible in many datasets. | Lack of resources limits digital rights policies. | A comprehensive territorial digital inclusion policy is needed. |

| Irun | Public libraries play a key role as digital access points. | Citizens’ digital autonomy remains limited. | Community infrastructures facilitate connectivity. | Experiences with digital systems are often mixed. | Representation of some communities remains weak. | Generative AI is sometimes discussed from a care and social-support perspective. | Awareness of digital rights remains low. | Experimental initiatives attempt to increase visibility through local data. | Concerns about freedom and privacy occasionally arise. | Digital coexistence and social cohesion should guide future governance. |

| Decalogue Block | Decalogue Dimension | Main Questionnaire Items | Quantitative Operationalisation |

| Digital Divide | 1. Access & Obstacles | Q4, Q21–Q23 | Use of AI; perceived facilitation or complication of everyday and administrative tasks |

| | 2. Digital Literacy & Autonomy | Q10, Q30 | Self-reported knowledge of AI; perceived usefulness of AI for youth guidance and training |

| | 3. Devices, Mobile & Connectivity | Q6–Q9, Q50 | Use of AI in learning, work, shopping, and wellbeing contexts; indirect evidence of everyday device-based uptake |

| Algorithmic Bias | 4. Experiences of Digital/Algorithmic Discrimination | Q11, Q35 | Privacy-risk perception; perception of discrimination and opacity in automated public decisions |

| | 5. Representation in Digital Spaces | Q24–Q28 | Perceptions of gender gaps and uneven representation in AI use |

| | 6. Generative AI (Use & Perception) | Q4–Q9, Q16 | Extent and frequency of AI use; perceived dependency risks |

| Data Divide | 7. Data & Control | Q33–Q34 | Willingness to share data; views on who should guarantee ethical AI development |

| | 8. Statistical Invisibility | Q35 | Perception that digital bureaucracy excludes part of the population |

| | 9. Digital Rights Incidents / Policy Gaps | Q35, Q40–Q43 | Privacy, transparency, discrimination, big-tech control, and views on automation in public administration |

| Digital Futures | 10. Vision: Just Digital Futures | Q36–Q39, Q45–Q46 | Support for community AI, sustainable AI, and socially oriented digital futures |
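
The mapping above reuses several questionnaire items across dimensions. As a minimal sketch, assuming the item ranges in the table are inclusive (so that "Q21–Q23" means Q21, Q22 and Q23), the mapping can be held in a dictionary and inverted to list which items feed more than one dimension. The names `DIMENSION_ITEMS`, `expand_items` and `items_to_dimensions` are illustrative and do not come from the study.

```python
import re

# Questionnaire items per Decalogue dimension, transcribed from the mapping table.
DIMENSION_ITEMS = {
    "1. Access & Obstacles": "Q4, Q21–Q23",
    "2. Digital Literacy & Autonomy": "Q10, Q30",
    "3. Devices, Mobile & Connectivity": "Q6–Q9, Q50",
    "4. Experiences of Digital/Algorithmic Discrimination": "Q11, Q35",
    "5. Representation in Digital Spaces": "Q24–Q28",
    "6. Generative AI (Use & Perception)": "Q4–Q9, Q16",
    "7. Data & Control": "Q33–Q34",
    "8. Statistical Invisibility": "Q35",
    "9. Digital Rights Incidents / Policy Gaps": "Q35, Q40–Q43",
    "10. Vision: Just Digital Futures": "Q36–Q39, Q45–Q46",
}


def expand_items(spec: str) -> list[int]:
    """Expand a string such as 'Q4, Q21–Q23' into [4, 21, 22, 23].

    Assumption: a range written 'Qa–Qb' is inclusive at both ends.
    """
    numbers = []
    for part in spec.split(","):
        found = [int(n) for n in re.findall(r"Q(\d+)", part)]
        if len(found) == 2:  # a range such as Q21–Q23
            numbers.extend(range(found[0], found[1] + 1))
        else:  # a single item such as Q4
            numbers.extend(found)
    return numbers


def items_to_dimensions(mapping: dict[str, str]) -> dict[int, list[str]]:
    """Invert the mapping: question number -> dimensions that use it."""
    inverse: dict[int, list[str]] = {}
    for dimension, spec in mapping.items():
        for question in expand_items(spec):
            inverse.setdefault(question, []).append(dimension)
    return inverse


# Items that operationalise more than one dimension.
shared = {
    question: dims
    for question, dims in items_to_dimensions(DIMENSION_ITEMS).items()
    if len(dims) > 1
}

for question in sorted(shared):
    print(f"Q{question}: {', '.join(shared[question])}")
```

Under this reading, Q35 feeds three dimensions (4, 8 and 9), which is worth keeping in mind when the same percentage appears under more than one heading in the results.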

| Decalogue Block | Decalogue Dimension | Q/Indicator | Result (%) |

| 1. DIGITAL DIVIDE | 1. Access & Obstacles | Q4: Respondents who use or have used AI | 72.7 |

| | | Q23: Respondents saying AI facilitates administrative requirements | 35.9 |

| | 2. Digital Literacy & Autonomy | Q10: Respondents reporting good or deep knowledge of AI | 46.3 |

| | | Q2 + Q4: AI use among ages 16–34 | 89.1 |

| | | Q2 + Q4: AI use among ages 55+ | 52.4 |

| | 3. Devices, Mobile & Connectivity | Q7: Use AI for learning/study at least sometimes | 47.4 |

| | | Q9: Use AI for work at least sometimes | 46.8 |

| 2. ALGORITHMIC BIAS | 4. Digital/Algorithmic Discrimination | Q11: Agree that AI creates privacy risks | 71.8 |

| | | Q35: Identify exclusion through digitalised bureaucracy as a public-sector risk | 35.3 |

| | | Q35: Identify lack of transparency/discrimination in automated decisions | 46.8 |

| | 5. Representation in Digital Spaces | Q24: Believe AI increases gender gaps | 11.5 |

| | | Q27: Believe men and women use AI similarly in education | 58.6 |

| | 6. Generative AI (Use & Perception) | Q5: AI users who use it at least weekly | 62.2 |

| | | Q16: Perceive high or very high dependency risk | 43.0 |

| 3. DATA DIVIDE | 7. Data & Control | Q33: Would share data only with privacy guarantees or anonymisation | 47.5 |

| | | Q33: Would not share personal data | 32.5 |

| | | Q34: Believe governments should guarantee ethical AI | 53.7 |

| | 8. Statistical Invisibility | Q35: Perceive that bureaucracy digitalisation excludes part of the population | 35.3 |

| | 9. Digital Rights / Policy Gaps | Q40: Have used an automated chatbot to communicate with an administration | 42.3 |

| | | Q41: Prefer face-to-face public-service attention | 59.9 |

| | | Q42: Support automating documents and procedures | 63.7 |

| 4. DIGITAL FUTURES | 10. Vision: Just Digital Futures | Q39: Support specific measures to align AI with environmental sustainability | 55.9 |

| | | Q38: Support stricter sustainability criteria for AI use | 51.6 |

| | | Q29: Believe AI offers more opportunities to young people | 60.4 |

| Index | Dimension | Survey Question | Indicator Description |

| Digital Inclusion Index | Access to AI | Q4 | Respondents who use or have used AI tools |

| | Frequency of use | Q5 | AI users who use AI at least weekly |

| | AI in education | Q7 | Use of AI tools for learning or study |

| | AI in work | Q9 | Use of AI tools for work-related activities |

| | AI literacy | Q10 | Respondents reporting good or deep knowledge of AI |

| | Digital public services | Q40 | Respondents who have used an automated chatbot to interact with public administration |

| AI Governance Perception Index | Privacy risks | Q11 | Agreement that AI creates risks for personal data privacy |

| | Data governance | Q33 | Willingness to share personal data under privacy guarantees or anonymisation |

| | Institutional responsibility | Q34 | Belief that governments should guarantee ethical AI development |

| | Bureaucratic exclusion | Q35 | Perception that digitalisation of bureaucracy may exclude part of the population |

| | Algorithmic transparency | Q35 | Perception of lack of transparency or discrimination in automated decisions |

| | Human oversight | Q41 | Preference for face-to-face interaction in public services rather than automated systems |
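
The table lists the indicators behind each index but does not state how they are aggregated. The sketch below shows one possible reading, assuming an unweighted arithmetic mean of the six indicator percentages. This aggregation rule is an assumption, not the authors' documented method. The input values are the overall sample percentages reported in the results table, not county-level figures, so the output need not match the county scores.

```python
from statistics import mean

# Overall sample percentages taken from the results table (not county-level data).
DIGITAL_INCLUSION = {
    "Q4 access to AI": 72.7,
    "Q5 frequency of use": 62.2,
    "Q7 AI in education": 47.4,
    "Q9 AI in work": 46.8,
    "Q10 AI literacy": 46.3,
    "Q40 digital public services": 42.3,
}

AI_GOVERNANCE_PERCEPTION = {
    "Q11 privacy risks": 71.8,
    "Q33 data governance": 47.5,
    "Q34 institutional responsibility": 53.7,
    "Q35 bureaucratic exclusion": 35.3,
    "Q35 algorithmic transparency": 46.8,
    "Q41 human oversight": 59.9,
}


def composite_index(indicators: dict[str, float]) -> float:
    """Unweighted mean of indicator percentages (assumed aggregation rule)."""
    return mean(indicators.values())


inclusion_score = composite_index(DIGITAL_INCLUSION)
governance_score = composite_index(AI_GOVERNANCE_PERCEPTION)

print(f"Digital Inclusion Index (assumed mean): {inclusion_score:.2f}")
print(f"AI Governance Perception Index (assumed mean): {governance_score:.2f}")
```

An unweighted mean treats every indicator as equally important. A weighted scheme or prior normalisation of the indicators would change both the scores and, potentially, the county ordering.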

| County | Digital Inclusion Index | Rank | AI Governance Perception Index | Rank |

| Donostialdea | 57.6 | 1 | 50.1 | 1 |

| Oarsoaldea | 54.6 | 2 | 49.6 | 2 |

| Debagoiena | 54.2 | 3 | 49.6 | 2 |

| Goierri | 53.9 | 4 | 49.4 | 4 |

| Bidasoa-Oiartzun | 53.5 | 5 | 49.1 | 7 |

| Tolosaldea | 53.4 | 6 | 49.3 | 5 |

| Urola Garaia | 53.0 | 7 | 49.2 | 6 |

| Urola Kosta | 52.8 | 8 | 49.1 | 7 |

| Debabarrena | 52.3 | 9 | 49.0 | 9 |
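
The Rank columns contain ties (two counties share rank 2 and two share rank 7, with ranks 3 and 8 skipped). This pattern is consistent with standard competition ranking, where a county's rank is one plus the number of counties with a strictly higher score. The sketch below applies that rule to the scores in the table. The function name `competition_rank` is illustrative, and the ranking rule is inferred from the table rather than stated in the text.

```python
# (Digital Inclusion Index, AI Governance Perception Index) per county,
# transcribed from the table above.
COUNTY_SCORES = {
    "Donostialdea": (57.6, 50.1),
    "Oarsoaldea": (54.6, 49.6),
    "Debagoiena": (54.2, 49.6),
    "Goierri": (53.9, 49.4),
    "Bidasoa-Oiartzun": (53.5, 49.1),
    "Tolosaldea": (53.4, 49.3),
    "Urola Garaia": (53.0, 49.2),
    "Urola Kosta": (52.8, 49.1),
    "Debabarrena": (52.3, 49.0),
}


def competition_rank(scores: dict[str, float]) -> dict[str, int]:
    """Rank = 1 + number of strictly higher scores; tied scores share a rank."""
    return {
        name: 1 + sum(other > value for other in scores.values())
        for name, value in scores.items()
    }


inclusion_rank = competition_rank({c: s[0] for c, s in COUNTY_SCORES.items()})
governance_rank = competition_rank({c: s[1] for c, s in COUNTY_SCORES.items()})

for county in COUNTY_SCORES:
    print(f"{county}: inclusion rank {inclusion_rank[county]}, "
          f"governance rank {governance_rank[county]}")
```

The governance scores span roughly one percentage point across all nine counties (49.0 to 50.1), so the governance ranking is sensitive to very small differences and should be read with more caution than the inclusion ranking.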

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).