Submitted: 07 April 2026

Posted: 08 April 2026


Abstract

Keywords:

1. Introduction

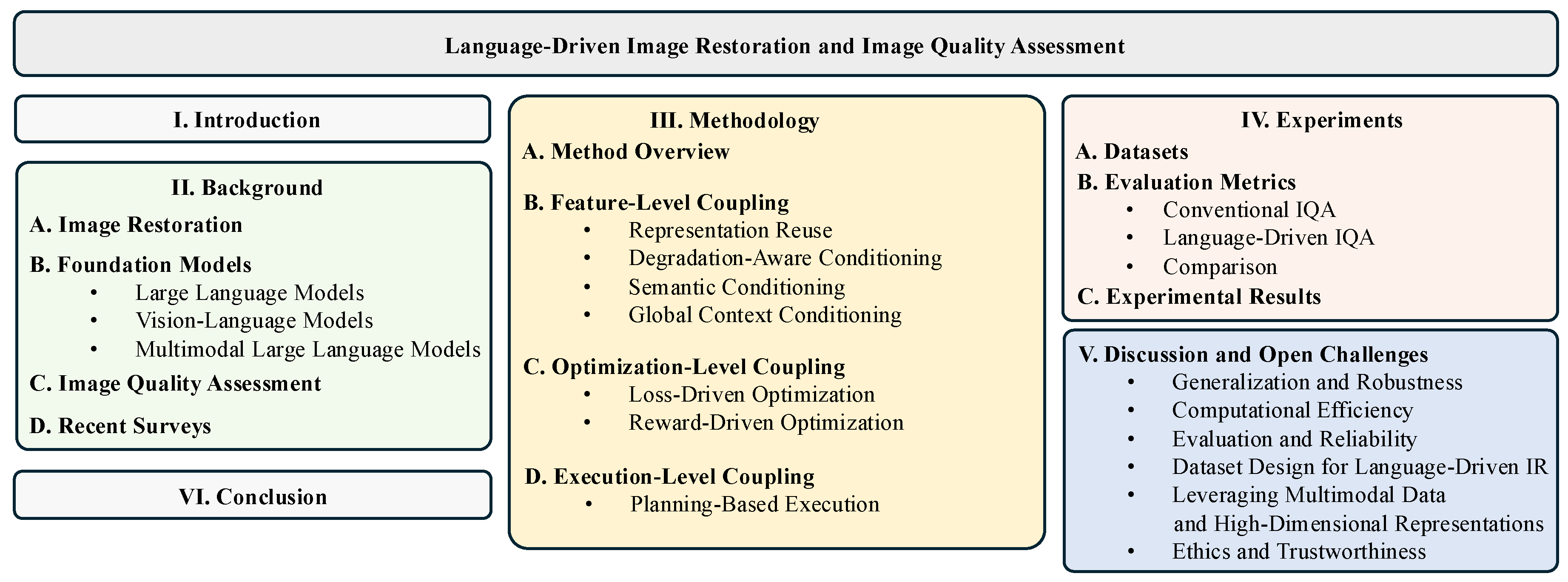

- We introduce a unified conceptual framework that interprets language-driven image restoration (IR) as an interaction-centric paradigm. It shows how language models shape restoration behavior beyond architectural modifications through three forms of coupling: feature-level, optimization-level, and execution-level.

- We analyze language-driven image quality assessment (IQA), highlighting how it differs conceptually from conventional fidelity metrics and clarifying the challenges of evaluation reliability, calibration stability, and semantic bias.

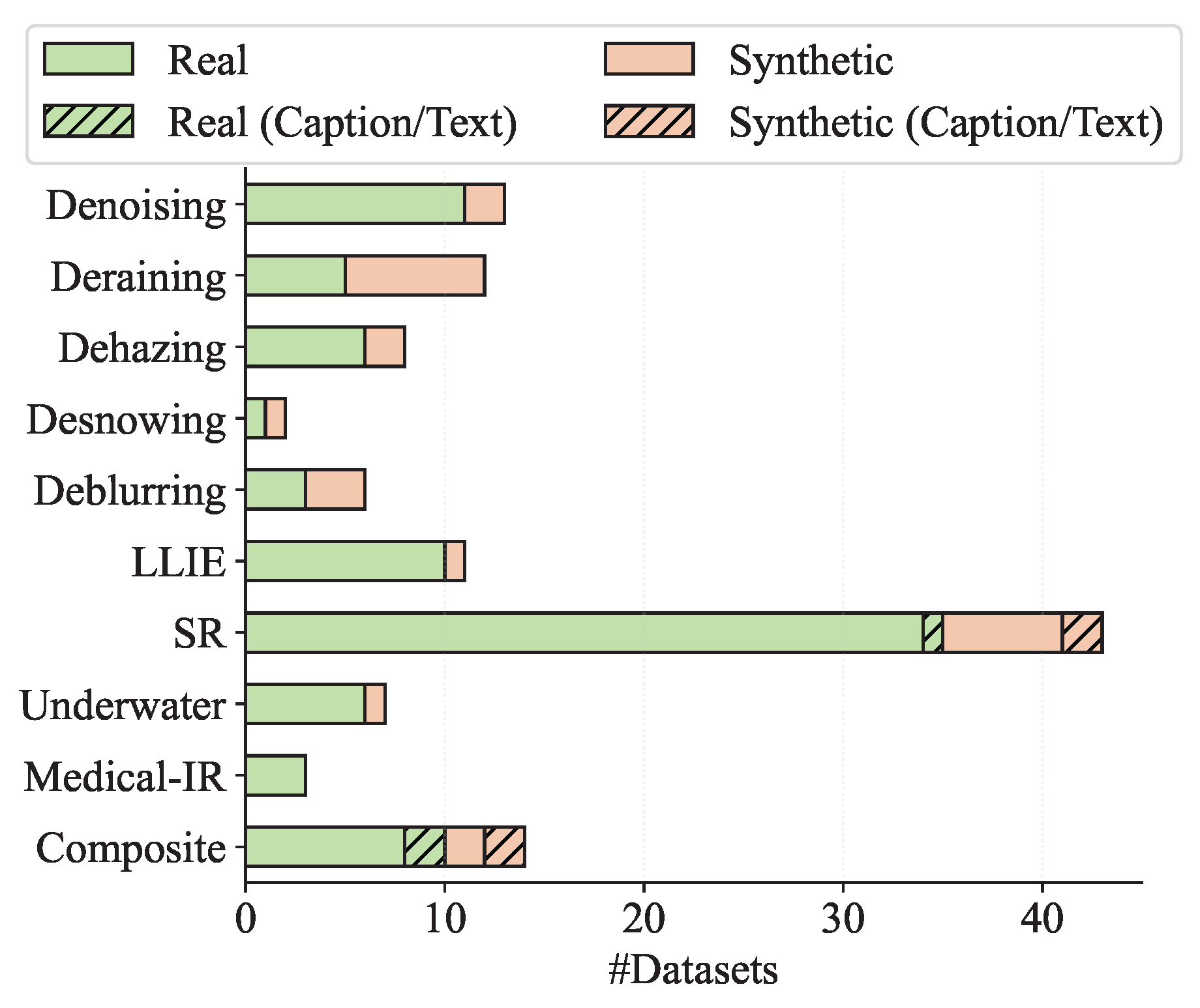

- We summarize the restoration datasets used by language-driven frameworks, analyze their limitations and the new requirements that language guidance introduces, and emphasize the need for semantically enriched, language-aware benchmarks. We also compare conventional and language-driven methods across different settings.

- We investigate open challenges posed by language-integrated restoration systems and outline promising directions for future research that bridge multimodal reasoning, visual perception, and restoration optimization.

2. Background

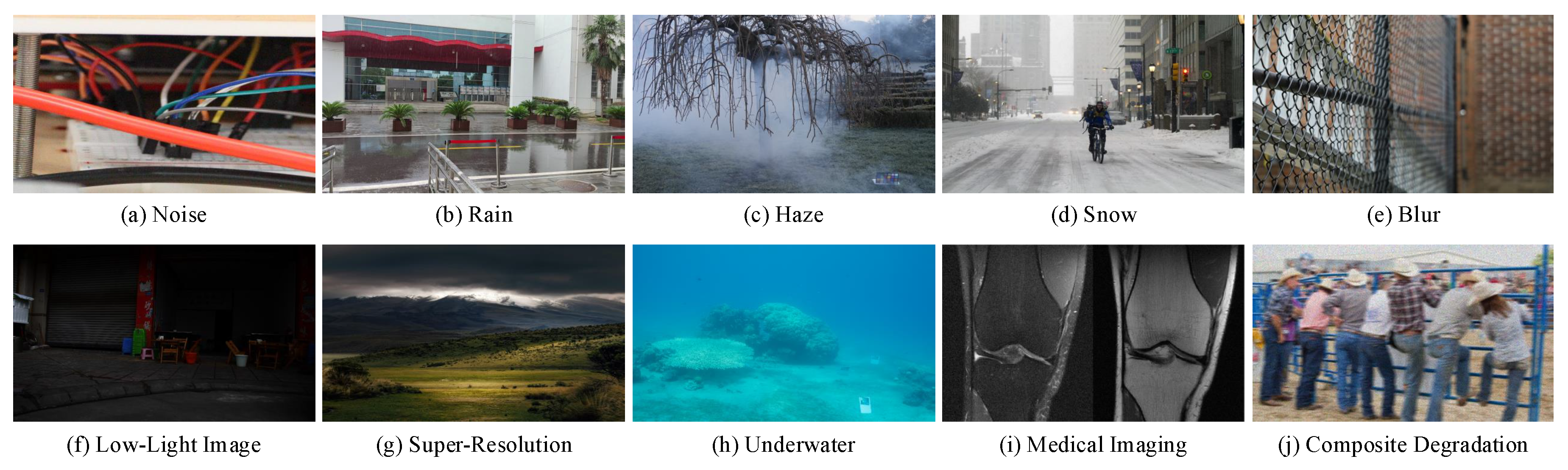

2.1. Image Restoration

2.2. Foundation Models

2.3. Image Quality Assessment

2.4. Relevant Surveys

3. Methodology

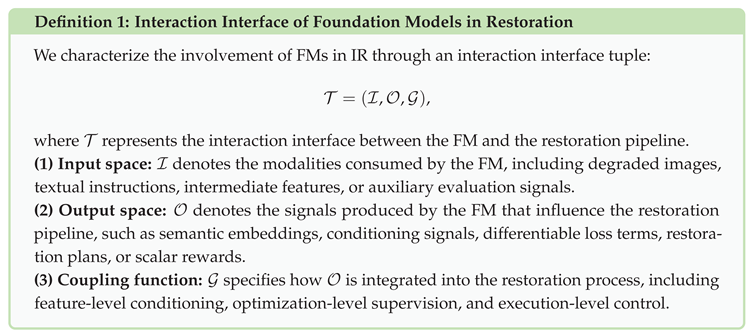

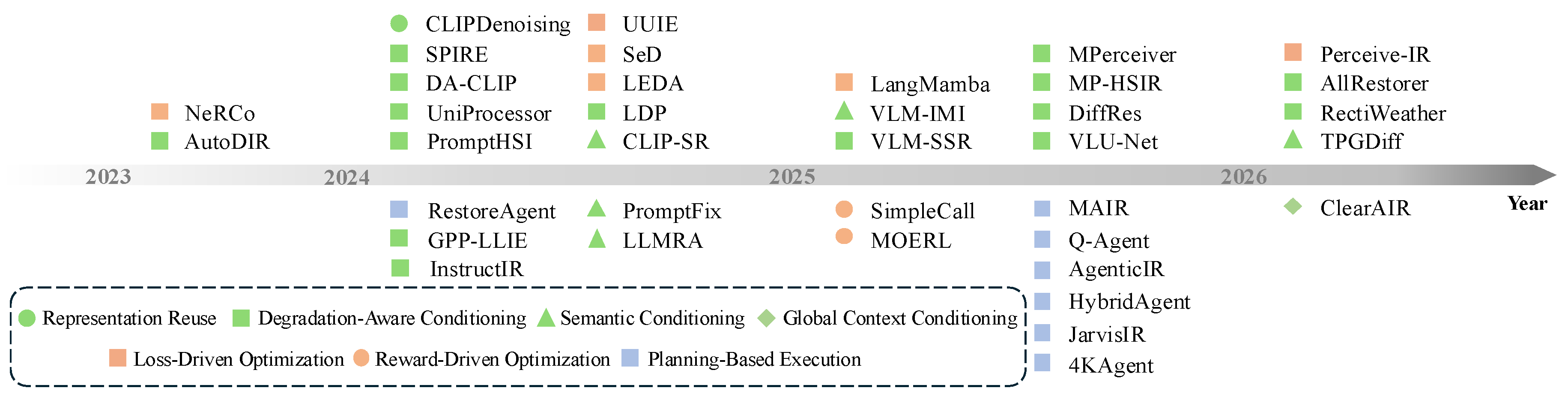

3.1. Overview of Language-Driven Restoration and Taxonomy Definition

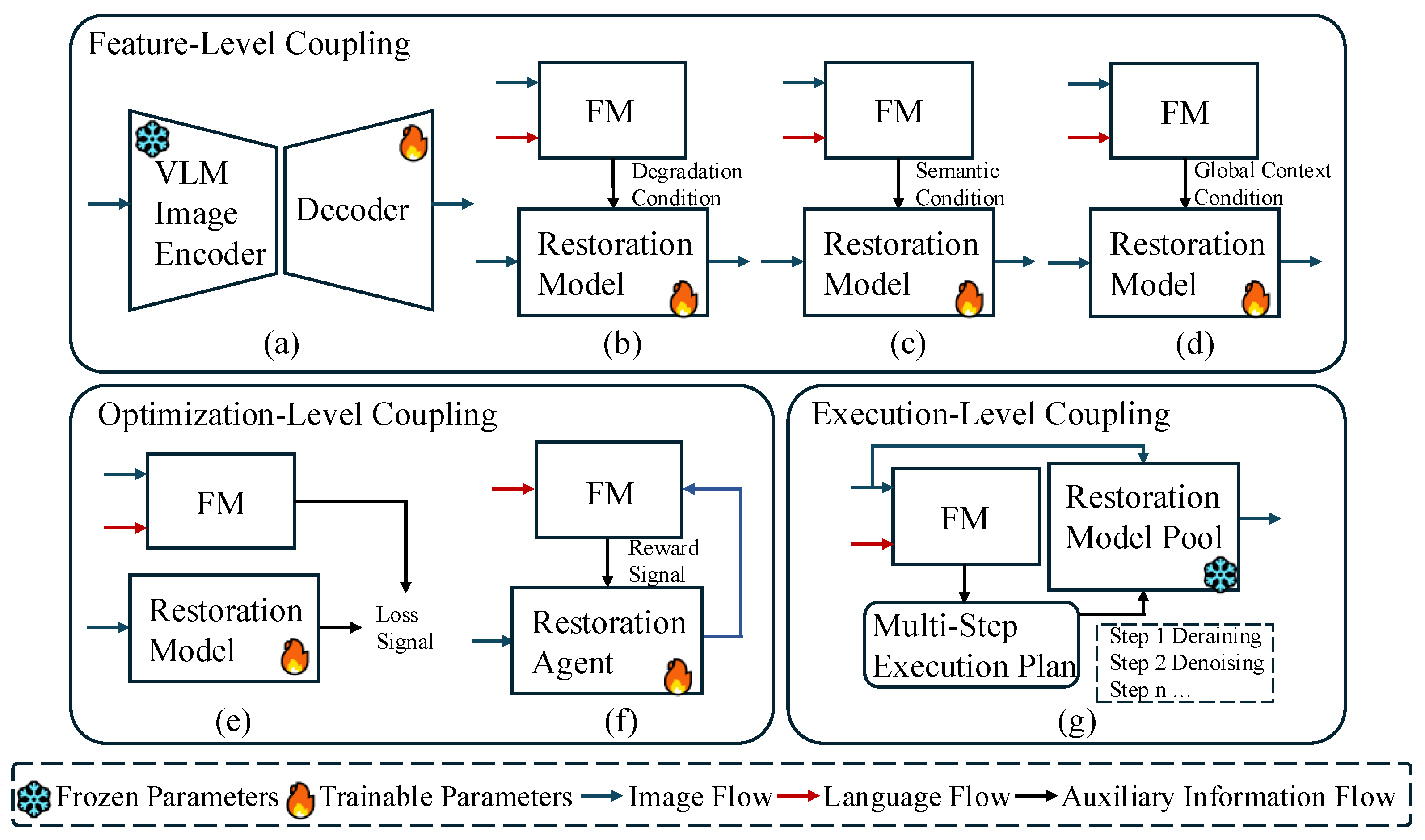

- Feature-Level Coupling: Foundation model (FM) outputs are injected into the forward process to modulate intermediate representations without altering the optimization objective or the execution structure. This category covers pretrained feature conditioning, degradation-aware conditioning, semantic conditioning, and global context conditioning.

- Optimization-Level Coupling: FM outputs define or reshape the optimization objective by introducing differentiable loss terms or scalar reward functions, thereby altering optimization dynamics.

- Execution-Level Coupling: FM outputs determine the execution logic of the restoration pipeline by generating high-level plans or control signals, enabling dynamic selection, composition, or scheduling of restoration modules beyond a fixed computational graph.
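The three coupling modes can be contrasted in a minimal NumPy sketch. Everything below is an illustrative assumption, not the implementation of any surveyed method. Feature-level coupling is shown as FiLM-style scale-and-shift modulation, which is one common conditioning choice among several. Optimization-level coupling is shown as an L1 fidelity loss plus a cosine-alignment penalty between embeddings that a frozen vision-language model would supply. Execution-level coupling is shown as a loop that dispatches a plan, of the kind an MLLM agent might emit, to a registry of restoration tools. The names `film_condition`, `coupled_loss`, `run_plan`, the tool names, and the projection weights are all hypothetical.

```python
import numpy as np


# Feature-level coupling: FiLM-style modulation (an assumed, illustrative design).
def film_condition(features, fm_embedding, w_gamma, w_beta):
    """Scale and shift feature channels using a frozen FM embedding.

    features:        (C, H, W) intermediate restoration features
    fm_embedding:    (D,) embedding from a frozen foundation model (assumed given)
    w_gamma, w_beta: (C, D) hypothetical learnable projections
    Only activations change; the loss and execution graph stay fixed.
    """
    gamma = w_gamma @ fm_embedding  # per-channel scale offset, shape (C,)
    beta = w_beta @ fm_embedding    # per-channel shift, shape (C,)
    return (1.0 + gamma)[:, None, None] * features + beta[:, None, None]


# Optimization-level coupling: an FM-derived term added to the objective.
def cosine(a, b, eps=1e-8):
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + eps))


def coupled_loss(restored, target, restored_emb, prompt_emb, lam=0.1):
    """L1 fidelity plus a semantic-alignment penalty.

    restored_emb and prompt_emb stand in for embeddings a frozen
    vision-language model would produce; they are inputs here, not computed.
    """
    fidelity = float(np.mean(np.abs(restored - target)))
    alignment = 1.0 - cosine(restored_emb, prompt_emb)
    return fidelity + lam * alignment


# Execution-level coupling: an FM-produced plan selects and orders modules.
def run_plan(image, plan, registry):
    """Run registered tools in plan order; unknown steps are skipped.

    plan:     list of tool names, e.g. as an MLLM agent might emit (hypothetical)
    registry: dict mapping tool names to image -> image callables
    """
    executed = []
    for step in plan:
        tool = registry.get(step)
        if tool is None:
            continue  # a real agent might re-plan or query the FM again here
        image = tool(image)
        executed.append(step)
    return image, executed


# Placeholder "tools" standing in for real restoration networks.
registry = {
    "brighten": lambda x: np.clip(x * 1.2, 0.0, 1.0),
    "denoise": lambda x: np.clip(x, 0.0, 1.0),  # stand-in, not a real denoiser
}
restored, steps = run_plan(np.full((8, 8), 0.5), ["brighten", "deblur", "denoise"], registry)
print(steps)  # ['brighten', 'denoise']
```

The sketch separates the modes by where the FM output enters. In feature-level coupling it enters the forward pass. In optimization-level coupling it enters the training objective. In execution-level coupling it determines the control flow, meaning which modules run and in what order.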

3.2. Feature-Level Coupling

3.3. Optimization-Level Coupling

3.4. Execution-Level Coupling

4. Experiments

4.1. Datasets

4.2. Evaluation Metrics

4.3. Experimental Results

5. Discussion and Open Challenges

5.1. Generalization and Robustness

5.2. Computational Efficiency

5.3. Cross-Paradigm Trade-Offs

5.4. Evaluation Reliability

5.5. Dataset Design for Language-Driven IR

5.6. Leveraging Multimodal Data and High-Dimensional Representations

5.7. Ethics and Trustworthiness

6. Conclusions

References

- Jiang, B.; Li, J.; Lu, Y.; Cai, Q.; Song, H.; Lu, G. Efficient image denoising using deep learning: A brief survey. Information Fusion 2025, p. 103013. [CrossRef]

- Tian, C.; Xu, Y.; Zuo, W. Image denoising using deep CNN with batch renormalization. Neural Networks 2020, 121, 461–473. [CrossRef]

- Zhang, K.; Zuo, W.; Chen, Y.; Meng, D.; Zhang, L. Beyond a gaussian denoiser: Residual learning of deep cnn for image denoising. IEEE transactions on image processing 2017, 26, 3142–3155. [CrossRef]

- Chen, X.; Pan, J.; Dong, J.; Tang, J. Towards unified deep image deraining: A survey and a new benchmark. IEEE Transactions on Pattern Analysis and Machine Intelligence 2025. [CrossRef]

- Chen, X.; Li, H.; Li, M.; Pan, J. Learning a sparse transformer network for effective image deraining. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2023, pp. 5896–5905.

- Xiao, J.; Fu, X.; Liu, A.; Wu, F.; Zha, Z.J. Image de-raining transformer. IEEE transactions on pattern analysis and machine intelligence 2022, 45, 12978–12995. [CrossRef]

- Gui, J.; Cong, X.; Cao, Y.; Ren, W.; Zhang, J.; Zhang, J.; Cao, J.; Tao, D. A comprehensive survey and taxonomy on single image dehazing based on deep learning. ACM Computing Surveys 2023, 55, 1–37. [CrossRef]

- Tsai, F.J.; Peng, Y.T.; Lin, Y.Y.; Lin, C.W. PHATNet: A Physics-guided Haze Transfer Network for Domain-adaptive Real-world Image Dehazing. In Proceedings of the Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025, pp. 5591–5600.

- Yu, H.; Huang, J.; Zheng, K.; Zhao, F. High-quality image dehazing with diffusion model. arXiv preprint arXiv:2308.11949 2023. [CrossRef]

- Quan, Y.; Tan, X.; Huang, Y.; Xu, Y.; Ji, H. Image desnowing via deep invertible separation. IEEE Transactions on Circuits and Systems for Video Technology 2023, 33, 3133–3144. [CrossRef]

- Guo, X.; Wang, X.; Fu, X.; Zha, Z.J. Deep unfolding network for image desnowing with snow shape prior. IEEE Transactions on Circuits and Systems for Video Technology 2025. [CrossRef]

- Liu, Y.F.; Jaw, D.W.; Huang, S.C.; Hwang, J.N. Desnownet: Context-aware deep network for snow removal. IEEE Transactions on Image Processing 2018, 27, 3064–3073. [CrossRef]

- Xiang, Y.; Zhou, H.; Li, C.; Sun, F.; Li, Z.; Xie, Y. Deep learning in motion deblurring: current status, benchmarks and future prospects. The Visual Computer 2025, 41, 3801–3827. [CrossRef]

- Abuolaim, A.; Brown, M.S. Defocus deblurring using dual-pixel data. In Proceedings of the European conference on computer vision. Springer, 2020, pp. 111–126. [CrossRef]

- Nah, S.; Hyun Kim, T.; Mu Lee, K. Deep multi-scale convolutional neural network for dynamic scene deblurring. In Proceedings of the Proceedings of the IEEE conference on computer vision and pattern recognition, 2017, pp. 3883–3891.

- Li, C.; Guo, C.; Han, L.; Jiang, J.; Cheng, M.M.; Gu, J.; Loy, C.C. Low-light image and video enhancement using deep learning: A survey. IEEE transactions on pattern analysis and machine intelligence 2021, 44, 9396–9416. [CrossRef]

- Wei, C.; Wang, W.; Yang, W.; Liu, J. Deep Retinex Decomposition for Low-Light Enhancement. In Proceedings of the BMVC, 2018. [CrossRef]

- Yang, W.; Wang, W.; Huang, H.; Wang, S.; Liu, J. Sparse gradient regularized deep retinex network for robust low-light image enhancement. IEEE Transactions on Image Processing 2021, 30, 2072–2086. [CrossRef]

- Wang, Z.; Chen, J.; Hoi, S.C. Deep learning for image super-resolution: A survey. IEEE transactions on pattern analysis and machine intelligence 2020, 43, 3365–3387. [CrossRef]

- Wang, X.; Xie, L.; Dong, C.; Shan, Y. Real-esrgan: Training real-world blind super-resolution with pure synthetic data. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2021, pp. 1905–1914.

- Chen, X.; Wang, X.; Zhou, J.; Qiao, Y.; Dong, C. Activating more pixels in image super-resolution transformer. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2023, pp. 22367–22377.

- Zhang, W.; Dong, L.; Pan, X.; Zou, P.; Qin, L.; Xu, W. A survey of restoration and enhancement for underwater images. IEEE Access 2019, 7, 182259–182279. [CrossRef]

- Li, C.; Guo, C.; Ren, W.; Cong, R.; Hou, J.; Kwong, S.; Tao, D. An underwater image enhancement benchmark dataset and beyond. IEEE transactions on image processing 2019, 29, 4376–4389. [CrossRef]

- Islam, M.J.; Xia, Y.; Sattar, J. Fast underwater image enhancement for improved visual perception. IEEE robotics and automation letters 2020, 5, 3227–3234. [CrossRef]

- Kermany, D.S.; Goldbaum, M.; Cai, W.; Valentim, C.C.; Liang, H.; Baxter, S.L.; McKeown, A.; Yang, G.; Wu, X.; Yan, F.; et al. Identifying medical diagnoses and treatable diseases by image-based deep learning. cell 2018, 172, 1122–1131. [CrossRef]

- McCollough, C.H.; Bartley, A.C.; Carter, R.E.; Chen, B.; Drees, T.A.; Edwards, P.; Holmes III, D.R.; Huang, A.E.; Khan, F.; Leng, S.; et al. Low-dose CT for the detection and classification of metastatic liver lesions: results of the 2016 low dose CT grand challenge. Medical physics 2017, 44, e339–e352. [CrossRef]

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. Imagenet classification with deep convolutional neural networks. Advances in neural information processing systems 2012, 25.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Advances in neural information processing systems 2017, 30.

- Gu, A.; Dao, T. Mamba: Linear-time sequence modeling with selective state spaces. In Proceedings of the First conference on language modeling, 2024. [CrossRef]

- Cai, Y.; Bian, H.; Lin, J.; Wang, H.; Timofte, R.; Zhang, Y. Retinexformer: One-stage retinex-based transformer for low-light image enhancement. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2023, pp. 12504–12513.

- Jiang, J.; Zuo, Z.; Wu, G.; Jiang, K.; Liu, X. A survey on all-in-one image restoration: Taxonomy, evaluation and future trends. IEEE Transactions on Pattern Analysis and Machine Intelligence 2025. [CrossRef]

- Li, R.; Tan, R.T.; Cheong, L.F. All in one bad weather removal using architectural search. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2020, pp. 3175–3185.

- Potlapalli, V.; Zamir, S.W.; Khan, S.H.; Shahbaz Khan, F. Promptir: Prompting for all-in-one image restoration. Advances in Neural Information Processing Systems 2023, 36, 71275–71293.

- Conde, M.V.; Geigle, G.; Timofte, R. Instructir: High-quality image restoration following human instructions. In Proceedings of the European Conference on Computer Vision. Springer, 2024, pp. 1–21. [CrossRef]

- Guan, C.; Yoshie, O. CLIP-driven rain perception: Adaptive deraining with pattern-aware network routing and mask-guided cross-attention. arXiv preprint arXiv:2506.01366 2025. [CrossRef]

- Lin, Y.; Lin, Z.; Chen, H.; Pan, P.; Li, C.; Chen, S.; Wen, K.; Jin, Y.; Li, W.; Ding, X. Jarvisir: Elevating autonomous driving perception with intelligent image restoration. In Proceedings of the Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 22369–22380.

- Zhu, K.; Gu, J.; You, Z.; Qiao, Y.; Dong, C. An intelligent agentic system for complex image restoration problems. arXiv preprint arXiv:2410.17809 2024. [CrossRef]

- Wang, Z.; Bovik, A.C.; Sheikh, H.R.; Simoncelli, E.P. Image quality assessment: from error visibility to structural similarity. IEEE transactions on image processing 2004, 13, 600–612. [CrossRef]

- Huynh-Thu, Q.; Ghanbari, M. Scope of validity of PSNR in image/video quality assessment. Electronics letters 2008, 44, 800–801. [CrossRef]

- Zhang, R.; Isola, P.; Efros, A.A.; Shechtman, E.; Wang, O. The unreasonable effectiveness of deep features as a perceptual metric. In Proceedings of the Proceedings of the IEEE conference on computer vision and pattern recognition, 2018, pp. 586–595.

- Wang, J.; Chan, K.C.; Loy, C.C. Exploring clip for assessing the look and feel of images. In Proceedings of the Proceedings of the AAAI conference on artificial intelligence, 2023, Vol. 37, pp. 2555–2563. [CrossRef]

- Hessel, J.; Holtzman, A.; Forbes, M.; Le Bras, R.; Choi, Y. Clipscore: A reference-free evaluation metric for image captioning. In Proceedings of the Proceedings of the 2021 conference on empirical methods in natural language processing, 2021, pp. 7514–7528. [CrossRef]

- Agnolucci, L.; Galteri, L.; Bertini, M. Quality-aware image-text alignment for opinion-unaware image quality assessment. arXiv preprint arXiv:2403.11176 2024. [CrossRef]

- Liu, H.; Li, C.; Wu, Q.; Lee, Y.J. Visual instruction tuning. Advances in neural information processing systems 2023, 36, 34892–34916.

- You, Z.; Cai, X.; Gu, J.; Xue, T.; Dong, C. Teaching large language models to regress accurate image quality scores using score distribution. In Proceedings of the Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 14483–14494.

- Wu, H.; Zhang, Z.; Zhang, W.; Chen, C.; Liao, L.; Li, C.; Gao, Y.; Wang, A.; Zhang, E.; Sun, W.; et al. Q-align: Teaching lmms for visual scoring via discrete text-defined levels. arXiv preprint arXiv:2312.17090 2023. [CrossRef]

- Zhang, Z.; Wu, H.; Jia, Z.; Lin, W.; Zhai, G. Teaching lmms for image quality scoring and interpreting. arXiv preprint arXiv:2503.09197 2025. [CrossRef]

- Zhu, H.; Tian, Y.; Ding, K.; Chen, B.; Chen, B.; Wang, S.; Lin, W. Agenticiqa: An agentic framework for adaptive and interpretable image quality assessment. arXiv preprint arXiv:2509.26006 2025. [CrossRef]

- Su, J.; Xu, B.; Yin, H. A survey of deep learning approaches to image restoration. Neurocomputing 2022, 487, 46–65. [CrossRef]

- Wang, L.; Zhou, W.; Wang, C.; Lam, K.M.; Su, Z.; Pan, J. Deep Learning-Driven Ultra-High-Definition Image Restoration: A Survey. arXiv preprint arXiv:2505.16161 2025. [CrossRef]

- Zhang, J.; Huang, J.; Jin, S.; Lu, S. Vision-language models for vision tasks: A survey. IEEE transactions on pattern analysis and machine intelligence 2024, 46, 5625–5644. [CrossRef]

- Suhr, A.; Zhou, S.; Zhang, A.; Zhang, I.; Bai, H.; Artzi, Y. A corpus for reasoning about natural language grounded in photographs. In Proceedings of the Proceedings of the 57th annual meeting of the association for computational linguistics, 2019, pp. 6418–6428. [CrossRef]

- Xu, J.; Li, H.; Liang, Z.; Zhang, D.; Zhang, L. Real-world noisy image denoising: A new benchmark. arXiv preprint arXiv:1804.02603 2018. [CrossRef]

- Guo, Y.; Xiao, X.; Chang, Y.; Deng, S.; Yan, L. From sky to the ground: A large-scale benchmark and simple baseline towards real rain removal. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2023, pp. 12097–12107.

- Ancuti, C.O.; Ancuti, C.; Timofte, R. NH-HAZE: An image dehazing benchmark with non-homogeneous hazy and haze-free images. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition workshops, 2020, pp. 444–445.

- Lai, J.; Chen, S.; Lin, Y.; Ye, T.; Liu, Y.; Fei, S.; Xing, Z.; Wu, H.; Wang, W.; Zhu, L. SnowMaster: Comprehensive Real-world Image Desnowing via MLLM with Multi-Model Feedback Optimization. In Proceedings of the Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 4302–4312.

- Hai, J.; Xuan, Z.; Yang, R.; Hao, Y.; Zou, F.; Lin, F.; Han, S. R2rnet: Low-light image enhancement via real-low to real-normal network. Journal of Visual Communication and Image Representation 2023, 90, 103712. [CrossRef]

- Zuo, Y.; Zheng, Q.; Wu, M.; Jiang, X.; Li, R.; Wang, J.; Zhang, Y.; Mai, G.; Wang, L.V.; Zou, J.; et al. 4kagent: agentic any image to 4k super-resolution. arXiv preprint arXiv:2507.07105 2025. [CrossRef]

- Berman, D.; Levy, D.; Avidan, S.; Treibitz, T. Underwater single image color restoration using haze-lines and a new quantitative dataset. IEEE transactions on pattern analysis and machine intelligence 2020, 43, 2822–2837. [CrossRef]

- Knoll, F.; Zbontar, J.; Sriram, A.; Muckley, M.J.; Bruno, M.; Defazio, A.; Parente, M.; Geras, K.J.; Katsnelson, J.; Chandarana, H.; et al. fastMRI: A publicly available raw k-space and DICOM dataset of knee images for accelerated MR image reconstruction using machine learning. Radiology: Artificial Intelligence 2020, 2, e190007. [CrossRef]

- Kong, X.; Dong, C.; Zhang, L. Towards effective multiple-in-one image restoration: A sequential and prompt learning strategy. arXiv preprint arXiv:2401.03379 2024. [CrossRef]

- Wang, Z.; Cun, X.; Bao, J.; Zhou, W.; Liu, J.; Li, H. Uformer: A general u-shaped transformer for image restoration. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 17683–17693.

- Zamir, S.W.; Arora, A.; Khan, S.; Hayat, M.; Khan, F.S.; Yang, M.H. Restormer: Efficient transformer for high-resolution image restoration. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 5728–5739.

- Liu, M.; Cui, Y.; Liu, X.; Strand, L.; Yin, H.; Knoll, A. Drfir: A dimensionality reduction framework for all-in-one image restoration in spatial and frequency domains. Expert Systems with Applications 2025, p. 128959. [CrossRef]

- Zhang, X.; Zhang, H.; Wang, G.; Zhang, Q.; Zhang, L.; Du, B. UniUIR: Considering Underwater Image Restoration as An All-in-One Learner. arXiv preprint arXiv:2501.12981 2025. [CrossRef]

- Zhang, X.; Zhang, H.; Wang, G.; Zhang, Q.; Zhang, L. ClearAIR: A Human-Visual-Perception-Inspired All-in-One Image Restoration. arXiv preprint arXiv:2601.02763 2026. [CrossRef]

- Zeng, H.; Wang, X.; Chen, Y.; Su, J.; Liu, J. Vision-Language Gradient Descent-driven All-in-One Deep Unfolding Networks. In Proceedings of the Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 7524–7533.

- Jin, X.; Shi, Y.; Xia, B.; Yang, W. Llmra: Multi-modal large language model based restoration assistant. arXiv preprint arXiv:2401.11401 2024. [CrossRef]

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language models are few-shot learners. Advances in neural information processing systems 2020, 33, 1877–1901.

- Grattafiori, A.; Dubey, A.; Jauhri, A.; Pandey, A.; Kadian, A.; Al-Dahle, A.; Letman, A.; Mathur, A.; Schelten, A.; Vaughan, A.; et al. The llama 3 herd of models. arXiv preprint arXiv:2407.21783 2024. [CrossRef]

- Bai, J.; Bai, S.; Chu, Y.; Cui, Z.; Dang, K.; Deng, X.; Fan, Y.; Ge, W.; Han, Y.; Huang, F.; et al. Qwen technical report. arXiv preprint arXiv:2309.16609 2023. [CrossRef]

- Liu, A.; Feng, B.; Xue, B.; Wang, B.; Wu, B.; Lu, C.; Zhao, C.; Deng, C.; Zhang, C.; Ruan, C.; et al. Deepseek-v3 technical report. arXiv preprint arXiv:2412.19437 2024. [CrossRef]

- Luo, H.; Bao, J.; Wu, Y.; He, X.; Li, T. Segclip: Patch aggregation with learnable centers for open-vocabulary semantic segmentation. In Proceedings of the International Conference on Machine Learning. PMLR, 2023, pp. 23033–23044.

- Yao, L.; Han, J.; Wen, Y.; Liang, X.; Xu, D.; Zhang, W.; Li, Z.; Xu, C.; Xu, H. Detclip: Dictionary-enriched visual-concept paralleled pre-training for open-world detection. Advances in Neural Information Processing Systems 2022, 35, 9125–9138.

- Jia, C.; Yang, Y.; Xia, Y.; Chen, Y.T.; Parekh, Z.; Pham, H.; Le, Q.; Sung, Y.H.; Li, Z.; Duerig, T. Scaling up visual and vision-language representation learning with noisy text supervision. In Proceedings of the International conference on machine learning. PMLR, 2021, pp. 4904–4916.

- Hurst, A.; Lerer, A.; Goucher, A.P.; Perelman, A.; Ramesh, A.; Clark, A.; Ostrow, A.; Welihinda, A.; Hayes, A.; Radford, A.; et al. Gpt-4o system card. arXiv preprint arXiv:2410.21276 2024. [CrossRef]

- Team, G.; Anil, R.; Borgeaud, S.; Alayrac, J.B.; Yu, J.; Soricut, R.; Schalkwyk, J.; Dai, A.M.; Hauth, A.; Millican, K.; et al. Gemini: a family of highly capable multimodal models. arXiv preprint arXiv:2312.11805 2023. [CrossRef]

- Wang, P.; Bai, S.; Tan, S.; Wang, S.; Fan, Z.; Bai, J.; Chen, K.; Liu, X.; Wang, J.; Ge, W.; et al. Qwen2-vl: Enhancing vision-language model’s perception of the world at any resolution. arXiv preprint arXiv:2409.12191 2024. [CrossRef]

- Bai, S.; Chen, K.; Liu, X.; Wang, J.; Ge, W.; Song, S.; Dang, K.; Wang, P.; Wang, S.; Tang, J.; et al. Qwen2. 5-vl technical report. arXiv preprint arXiv:2502.13923 2025. [CrossRef]

- Zhai, G.; Min, X. Perceptual image quality assessment: a survey. Science China Information Sciences 2020, 63, 211301. [CrossRef]

- Ding, K.; Ma, K.; Wang, S.; Simoncelli, E.P. Image quality assessment: Unifying structure and texture similarity. IEEE transactions on pattern analysis and machine intelligence 2020, 44, 2567–2581. [CrossRef]

- Zheng, H.; Yang, H.; Fu, J.; Zha, Z.J.; Luo, J. Learning conditional knowledge distillation for degraded-reference image quality assessment. In Proceedings of the Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 10242–10251.

- Lao, S.; Gong, Y.; Shi, S.; Yang, S.; Wu, T.; Wang, J.; Xia, W.; Yang, Y. Attentions help cnns see better: Attention-based hybrid image quality assessment network. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 1140–1149.

- Chen, C.; Mo, J.; Hou, J.; Wu, H.; Liao, L.; Sun, W.; Yan, Q.; Lin, W. Topiq: A top-down approach from semantics to distortions for image quality assessment. IEEE Transactions on Image Processing 2024, 33, 2404–2418. [CrossRef]

- Mittal, A.; Moorthy, A.K.; Bovik, A.C. No-reference image quality assessment in the spatial domain. IEEE Transactions on image processing 2012, 21, 4695–4708. [CrossRef]

- Mittal, A.; Soundararajan, R.; Bovik, A.C. Making a “completely blind” image quality analyzer. IEEE Signal processing letters 2012, 20, 209–212. [CrossRef]

- Venkatanath, N.; Praneeth, D.; Sumohana, S.C.; Swarup, S.M.; et al. Blind image quality evaluation using perception based features. In Proceedings of the 2015 twenty first national conference on communications (NCC). IEEE, 2015, pp. 1–6. [CrossRef]

- Ke, J.; Wang, Q.; Wang, Y.; Milanfar, P.; Yang, F. Musiq: Multi-scale image quality transformer. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2021, pp. 5148–5157.

- Talebi, H.; Milanfar, P. NIMA: Neural image assessment. IEEE transactions on image processing 2018, 27, 3998–4011. [CrossRef]

- Yang, S.; Wu, T.; Shi, S.; Lao, S.; Gong, Y.; Cao, M.; Wang, J.; Yang, Y. Maniqa: Multi-dimension attention network for no-reference image quality assessment. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 1191–1200.

- Su, S.; Yan, Q.; Zhu, Y.; Zhang, C.; Ge, X.; Sun, J.; Zhang, Y. Blindly assess image quality in the wild guided by a self-adaptive hyper network. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2020, pp. 3667–3676.

- Kang, L.; Ye, P.; Li, Y.; Doermann, D. Convolutional neural networks for no-reference image quality assessment. In Proceedings of the Proceedings of the IEEE conference on computer vision and pattern recognition, 2014, pp. 1733–1740.

- Wu, H.; Zhang, Z.; Zhang, E.; Chen, C.; Liao, L.; Wang, A.; Xu, K.; Li, C.; Hou, J.; Zhai, G.; et al. Q-instruct: Improving low-level visual abilities for multi-modality foundation models. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2024, pp. 25490–25500.

- Zhu, H.; Wu, H.; Li, Y.; Zhang, Z.; Chen, B.; Zhu, L.; Fang, Y.; Zhai, G.; Lin, W.; Wang, S. Adaptive image quality assessment via teaching large multimodal model to compare. Advances in Neural Information Processing Systems 2024, 37, 32611–32629.

- You, Z.; Li, Z.; Gu, J.; Yin, Z.; Xue, T.; Dong, C. Depicting beyond scores: Advancing image quality assessment through multi-modal language models. In Proceedings of the European Conference on Computer Vision. Springer, 2024, pp. 259–276. [CrossRef]

- Chen, Z.; Wang, J.; Wang, W.; Xu, S.; Xiong, H.; Zeng, Y.; Guo, J.; Wang, S.; Yuan, C.; Li, B.; et al. Seagull: No-reference image quality assessment for regions of interest via vision-language instruction tuning. arXiv preprint arXiv:2411.10161 2024. [CrossRef]

- Chen, C.; Yang, S.; Wu, H.; Liao, L.; Zhang, Z.; Wang, A.; Sun, W.; Yan, Q.; Lin, W. Q-ground: Image quality grounding with large multi-modality models. In Proceedings of the Proceedings of the 32nd ACM International Conference on Multimedia, 2024, pp. 486–495. [CrossRef]

- Tang, Z.; Yang, S.; Peng, B.; Wang, Z.; Dong, J. Revisiting MLLM Based Image Quality Assessment: Errors and Remedy. arXiv preprint arXiv:2511.07812 2025. [CrossRef]

- Su, Z.; Zhang, Y.; Shi, J.; Zhang, X.P. A survey of single image rain removal based on deep learning. ACM Computing Surveys 2023, 56, 1–35. [CrossRef]

- Zhang, K.; Ren, W.; Luo, W.; Lai, W.S.; Stenger, B.; Yang, M.H.; Li, H. Deep image deblurring: A survey. International Journal of Computer Vision 2022, 130, 2103–2130. [CrossRef]

- Zhu, R.; Sheng, L.; Wu, K.; Boukerche, A.; Long, L.; Yang, Q. Toward Efficient Underwater Visual Perception through Image Enhancement, Compression, and Understanding. ACM Computing Surveys 2026, 58, 1–46. [CrossRef]

- Li, X.; Ren, Y.; Jin, X.; Lan, C.; Wang, X.; Zeng, W.; Wang, X.; Chen, Z. Diffusion models for image restoration and enhancement: a comprehensive survey. International Journal of Computer Vision 2025, 133, 8078–8108. [CrossRef]

- Cheng, J.; Liang, D.; Tan, S. Transfer clip for generalizable image denoising. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 25974–25984.

- Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning transferable visual models from natural language supervision. In Proceedings of the International conference on machine learning. PmLR, 2021, pp. 8748–8763.

- Yang, H.; Pan, L.; Yang, Y.; Hartley, R.; Liu, M. Ldp: Language-driven dual-pixel image defocus deblurring network. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 24078–24087.

- Zhou, H.; Dong, W.; Liu, X.; Zhang, Y.; Zhai, G.; Chen, J. Low-light image enhancement via generative perceptual priors. In Proceedings of the Proceedings of the AAAI Conference on Artificial Intelligence, 2025, Vol. 39, pp. 10752–10760. [CrossRef]

- Duan, H.; Min, X.; Wu, S.; Shen, W.; Zhai, G. Uniprocessor: a text-induced unified low-level image processor. In Proceedings of the European Conference on Computer Vision. Springer, 2024, pp. 180–199. [CrossRef]

- Mao, J.; Yang, Y.; Yin, X.; Shao, L.; Tang, H. AllRestorer: All-in-One Transformer for Image Restoration under Composite Degradations. arXiv preprint arXiv:2411.10708 2024. [CrossRef]

- Wang, C.; Fan, H.; Yang, H.; Karimi, S.; Yao, L.; Yang, Y. Adapting Text-to-Image Generation with Feature Difference Instruction for Generic Image Restoration. In Proceedings of the Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 23539–23550.

- Li, J.; Li, D.; Savarese, S.; Hoi, S. Blip-2: Bootstrapping language-image pre-training with frozen image encoders and large language models. In Proceedings of the International conference on machine learning. PMLR, 2023, pp. 19730–19742.

- Dong, W.; Zhou, H.; Ji, T.; Zhao, G.; Zhang, Y.; Zhai, G.; Liu, X.; Chen, J. RectiWeather: Photo-Realistic Adverse Weather Removal via Zero-shot Soft Weather Perception and Rectified Flow 2026.

- Wu, Z.; Chen, Y.; Yokoya, N.; He, W. MP-HSIR: A Multi-Prompt Framework for Universal Hyperspectral Image Restoration. arXiv preprint arXiv:2503.09131 2025. [CrossRef]

- Lee, C.M.; Cheng, C.H.; Lin, Y.F.; Cheng, Y.C.; Liao, W.T.; Hsu, C.C.; Yang, F.E.; Wang, Y.C.F. Prompthsi: Universal hyperspectral image restoration framework for composite degradation. arXiv e-prints 2024, pp. arXiv–2411.

- Luo, Z.; Gustafsson, F.K.; Zhao, Z.; Sjölund, J.; Schön, T.B. Controlling vision-language models for multi-task image restoration. arXiv preprint arXiv:2310.01018 2023. [CrossRef]

- Jiang, Y.; Zhang, Z.; Xue, T.; Gu, J. Autodir: Automatic all-in-one image restoration with latent diffusion. In Proceedings of the European Conference on Computer Vision. Springer, 2024, pp. 340–359. [CrossRef]

- Qi, C.; Tu, Z.; Ye, K.; Delbracio, M.; Milanfar, P.; Chen, Q.; Talebi, H. Spire: Semantic prompt-driven image restoration. In Proceedings of the European Conference on Computer Vision. Springer, 2024, pp. 446–464. [CrossRef]

- Ai, Y.; Huang, H.; Zhou, X.; Wang, J.; He, R. Multimodal prompt perceiver: Empower adaptiveness generalizability and fidelity for all-in-one image restoration. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 25432–25444.

- Zhang, Z.; Lei, J.; Peng, B.; Zhu, J.; Xu, L.; Huang, Q. Advancing Real-World Stereoscopic Image Super-Resolution via Vision-Language Model. IEEE Transactions on Image Processing 2025. [CrossRef]

- Hu, B.; Liu, H.; Zheng, Z.; Liu, P. CLIP-SR: Collaborative Linguistic and Image Processing for Super-Resolution. IEEE Transactions on Multimedia 2025. [CrossRef]

- Sun, X.; Wang, L.; Wang, C.; Jin, Y.; Lam, K.m.; Su, Z.; Yang, Y.; Pan, J. Adapting Large VLMs with Iterative and Manual Instructions for Generative Low-light Enhancement. arXiv preprint arXiv:2507.18064 2025. [CrossRef]

- Tu, Y.; Yan, Q.; Niu, A.; Tang, J. TPGDiff: Hierarchical Triple-Prior Guided Diffusion for Image Restoration. arXiv preprint arXiv:2601.20306 2026. [CrossRef]

- Hugging Face. Introducing IDEFICS: An Open Reproduction of State-of-the-Art Visual Language Models. https://huggingface.co/blog/idefics, 2023.

- Yu, Y.; Zeng, Z.; Hua, H.; Fu, J.; Luo, J. Promptfix: You prompt and we fix the photo. arXiv preprint arXiv:2405.16785 2024. [CrossRef]

- Chen, Z.; Chen, T.; Wang, C.; Gao, Q.; Niu, C.; Wang, G.; Shan, H. Low-dose CT denoising with language-engaged dual-space alignment. In Proceedings of the 2024 IEEE International Conference on Bioinformatics and Biomedicine (BIBM). IEEE, 2024, pp. 3088–3091. [CrossRef]

- Chen, Z.; Chen, T.; Wang, C.; Gao, Q.; Xie, H.; Niu, C.; Wang, G.; Shan, H. LangMamba: A Language-driven Mamba Framework for Low-dose CT Denoising with Vision-language Models. IEEE Transactions on Radiation and Plasma Medical Sciences 2025. [CrossRef]

- Yang, S.; Ding, M.; Wu, Y.; Li, Z.; Zhang, J. Implicit neural representation for cooperative low-light image enhancement. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2023, pp. 12918–12927.

- Li, B.; Li, X.; Zhu, H.; Jin, Y.; Feng, R.; Zhang, Z.; Chen, Z. Sed: Semantic-aware discriminator for image super-resolution. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2024, pp. 25784–25795.

- Song, W.; Liu, C.; Di Mauro, M.; Liotta, A. Unsupervised Underwater Image Enhancement Combining Imaging Restoration and Prompt Learning. In Proceedings of the Chinese Conference on Pattern Recognition and Computer Vision (PRCV). Springer, 2024, pp. 421–434. [CrossRef]

- Li, Y.; Fan, H.; Hu, R.; Feichtenhofer, C.; He, K. Scaling language-image pre-training via masking. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2023, pp. 23390–23400.

- Zhang, X.; Ma, J.; Wang, G.; Zhang, Q.; Zhang, H.; Zhang, L. Perceive-ir: Learning to perceive degradation better for all-in-one image restoration. IEEE Transactions on Image Processing 2025. [CrossRef]

- Wang, T.; Xia, P.; Li, B.; Jiang, P.T.; Kong, Z.; Zhang, K.; Lu, T.; Luo, W. MOERL: When Mixture-of-Experts Meet Reinforcement Learning for Adverse Weather Image Restoration. In Proceedings of the Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025, pp. 13673–13683.

- Lu, J.; Wu, Y.; Zhao, Z.; Wang, H.; Jimenez, F.; Majeedi, A.; Fu, Y. SimpleCall: A Lightweight Image Restoration Agent in Label-Free Environments with MLLM Perceptual Feedback. arXiv preprint arXiv:2512.18599 2025. [CrossRef]

- Chen, H.; Li, W.; Gu, J.; Ren, J.; Chen, S.; Ye, T.; Pei, R.; Zhou, K.; Song, F.; Zhu, L. Restoreagent: Autonomous image restoration agent via multimodal large language models. Advances in Neural Information Processing Systems 2024, 37, 110643–110666.

- Zhou, Y.; Cao, J.; Zhang, Z.; Wen, F.; Jiang, Y.; Jia, J.; Liu, X.; Min, X.; Zhai, G. Q-Agent: Quality-Driven Chain-of-Thought Image Restoration Agent through Robust Multimodal Large Language Model. arXiv preprint arXiv:2504.07148 2025. [CrossRef]

- Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Xia, F.; Chi, E.; Le, Q.V.; Zhou, D.; et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems 2022, 35, 24824–24837.

- Jiang, X.; Li, G.; Chen, B.; Zhang, J. Multi-Agent Image Restoration. arXiv preprint arXiv:2503.09403 2025. [CrossRef]

- Li, B.; Li, X.; Lu, Y.; Chen, Z. Hybrid agents for image restoration. arXiv preprint arXiv:2503.10120 2025. [CrossRef]

- Wei, Y.; Zhang, Z.; Ren, J.; Xu, X.; Hong, R.; Yang, Y.; Yan, S.; Wang, M. Clarity ChatGPT: An interactive and adaptive processing system for image restoration and enhancement. arXiv preprint arXiv:2311.11695 2023. [CrossRef]

- Franzen, R. Kodak lossless true color image suite, 1999.

- Zhang, L.; Wu, X.; Buades, A.; Li, X. Color demosaicking by local directional interpolation and nonlocal adaptive thresholding. Journal of Electronic imaging 2011, 20, 023016–023016. [CrossRef]

- Martin, D.; Fowlkes, C.; Tal, D.; Malik, J. A database of human segmented natural images and its application to evaluating segmentation algorithms and measuring ecological statistics. In Proceedings of the Eighth IEEE International Conference on Computer Vision (ICCV 2001). IEEE, 2001, Vol. 2, pp. 416–423. [CrossRef]

- Huang, J.B.; Singh, A.; Ahuja, N. Single image super-resolution from transformed self-exemplars. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2015, pp. 5197–5206. [CrossRef]

- Agustsson, E.; Timofte, R. NTIRE 2017 challenge on single image super-resolution: Dataset and study. In Proceedings of the IEEE conference on computer vision and pattern recognition workshops, 2017, pp. 126–135.

- Abdelhamed, A.; Lin, S.; Brown, M.S. A high-quality denoising dataset for smartphone cameras. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2018, pp. 1692–1700.

- Ma, K.; Duanmu, Z.; Wu, Q.; Wang, Z.; Yong, H.; Li, H.; Zhang, L. Waterloo exploration database: New challenges for image quality assessment models. IEEE Transactions on Image Processing 2016, 26, 1004–1016. [CrossRef]

- Arbelaez, P.; Maire, M.; Fowlkes, C.; Malik, J. Contour detection and hierarchical image segmentation. IEEE transactions on pattern analysis and machine intelligence 2010, 33, 898–916. [CrossRef]

- Yang, W.; Tan, R.T.; Feng, J.; Liu, J.; Guo, Z.; Yan, S. Deep joint rain detection and removal from a single image. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2017, pp. 1357–1366.

- Zhang, H.; Sindagi, V.; Patel, V.M. Image de-raining using a conditional generative adversarial network. IEEE transactions on circuits and systems for video technology 2019, 30, 3943–3956. [CrossRef]

- Fu, X.; Huang, J.; Zeng, D.; Huang, Y.; Ding, X.; Paisley, J. Removing rain from single images via a deep detail network. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2017, pp. 3855–3863.

- Qian, R.; Tan, R.T.; Yang, W.; Su, J.; Liu, J. Attentive generative adversarial network for raindrop removal from a single image. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2018, pp. 2482–2491.

- Li, R.; Cheong, L.F.; Tan, R.T. Heavy rain image restoration: Integrating physics model and conditional adversarial learning. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2019, pp. 1633–1642.

- Quan, R.; Yu, X.; Liang, Y.; Yang, Y. Removing raindrops and rain streaks in one go. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2021, pp. 9147–9156.

- Huang, H.; Luo, M.; He, R. Memory uncertainty learning for real-world single image deraining. IEEE Transactions on Pattern Analysis and Machine Intelligence 2022, 45, 3446–3460. [CrossRef]

- Sakaridis, C.; Dai, D.; Van Gool, L. Semantic foggy scene understanding with synthetic data. International Journal of Computer Vision 2018, 126, 973–992. [CrossRef]

- Sakaridis, C.; Wang, H.; Li, K.; Zurbrügg, R.; Jadon, A.; Abbeloos, W.; Reino, D.O.; Van Gool, L.; Dai, D. ACDC: The adverse conditions dataset with correspondences for robust semantic driving scene perception. arXiv preprint arXiv:2104.13395 2021. [CrossRef]

- Li, B.; Ren, W.; Fu, D.; Tao, D.; Feng, D.; Zeng, W.; Wang, Z. Benchmarking single-image dehazing and beyond. IEEE transactions on image processing 2018, 28, 492–505. [CrossRef]

- Ancuti, C.O.; Ancuti, C.; Sbert, M.; Timofte, R. Dense-haze: A benchmark for image dehazing with dense-haze and haze-free images. In Proceedings of the 2019 IEEE international conference on image processing (ICIP). IEEE, 2019, pp. 1014–1018. [CrossRef]

- Punnappurath, A.; Abuolaim, A.; Afifi, M.; Brown, M.S. Modeling defocus-disparity in dual-pixel sensors. In Proceedings of the 2020 IEEE International Conference on Computational Photography (ICCP). IEEE, 2020, pp. 1–12. [CrossRef]

- Pan, L.; Chowdhury, S.; Hartley, R.; Liu, M.; Zhang, H.; Li, H. Dual pixel exploration: Simultaneous depth estimation and image restoration. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 4340–4349.

- Abuolaim, A.; Delbracio, M.; Kelly, D.; Brown, M.S.; Milanfar, P. Learning to reduce defocus blur by realistically modeling dual-pixel data. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 2289–2298.

- Lee, C.; Lee, C.; Kim, C.S. Contrast enhancement based on layered difference representation of 2D histograms. IEEE transactions on image processing 2013, 22, 5372–5384. [CrossRef]

- Wang, S.; Zheng, J.; Hu, H.M.; Li, B. Naturalness preserved enhancement algorithm for non-uniform illumination images. IEEE transactions on image processing 2013, 22, 3538–3548. [CrossRef]

- Vonikakis, V.; Kouskouridas, R.; Gasteratos, A. On the evaluation of illumination compensation algorithms. Multimedia Tools and Applications 2018, 77, 9211–9231. [CrossRef]

- Ma, K.; Zeng, K.; Wang, Z. Perceptual quality assessment for multi-exposure image fusion. IEEE Transactions on Image Processing 2015, 24, 3345–3356. [CrossRef]

- Cai, J.; Gu, S.; Zhang, L. Learning a deep single image contrast enhancer from multi-exposure images. IEEE Transactions on Image Processing 2018, 27, 2049–2062. [CrossRef]

- Guo, X.; Li, Y.; Ling, H. LIME: Low-light image enhancement via illumination map estimation. IEEE Transactions on image processing 2016, 26, 982–993. [CrossRef]

- Bevilacqua, M.; Roumy, A.; Guillemot, C.; Alberi-Morel, M.L. Low-complexity single-image super-resolution based on nonnegative neighbor embedding 2012. [CrossRef]

- Zeyde, R.; Elad, M.; Protter, M. On single image scale-up using sparse-representations. In Proceedings of the International conference on curves and surfaces. Springer, 2010, pp. 711–730. [CrossRef]

- Matsui, Y.; Ito, K.; Aramaki, Y.; Fujimoto, A.; Ogawa, T.; Yamasaki, T.; Aizawa, K. Sketch-based manga retrieval using manga109 dataset. Multimedia tools and applications 2017, 76, 21811–21838. [CrossRef]

- Cheng, D.; Price, B.; Cohen, S.; Brown, M.S. Beyond white: Ground truth colors for color constancy correction. In Proceedings of the IEEE International Conference on Computer Vision, 2015, pp. 298–306.

- Cai, J.; Zeng, H.; Yong, H.; Cao, Z.; Zhang, L. Toward real-world single image super-resolution: A new benchmark and a new model. In Proceedings of the IEEE/CVF international conference on computer vision, 2019, pp. 3086–3095.

- Wei, P.; Xie, Z.; Lu, H.; Zhan, Z.; Ye, Q.; Zuo, W.; Lin, L. Component divide-and-conquer for real-world image super-resolution. In Proceedings of the European conference on computer vision. Springer, 2020, pp. 101–117. [CrossRef]

- Wu, R.; Yang, T.; Sun, L.; Zhang, Z.; Li, S.; Zhang, L. SeeSR: Towards semantics-aware real-world image super-resolution. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2024, pp. 25456–25467.

- Zhang, K.; Liang, J.; Van Gool, L.; Timofte, R. Designing a practical degradation model for deep blind image super-resolution. In Proceedings of the IEEE/CVF international conference on computer vision, 2021, pp. 4791–4800.

- Wang, Z.J.; Montoya, E.; Munechika, D.; Yang, H.; Hoover, B.; Chau, D.H. DiffusionDB: A large-scale prompt gallery dataset for text-to-image generative models. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2023, pp. 893–911. [CrossRef]

- Xia, G.S.; Hu, J.; Hu, F.; Shi, B.; Bai, X.; Zhong, Y.; Zhang, L.; Lu, X. AID: A benchmark data set for performance evaluation of aerial scene classification. IEEE Transactions on Geoscience and Remote Sensing 2017, 55, 3965–3981. [CrossRef]

- Li, K.; Wan, G.; Cheng, G.; Meng, L.; Han, J. Object detection in optical remote sensing images: A survey and a new benchmark. ISPRS journal of photogrammetry and remote sensing 2020, 159, 296–307. [CrossRef]

- Xia, G.S.; Bai, X.; Ding, J.; Zhu, Z.; Belongie, S.; Luo, J.; Datcu, M.; Pelillo, M.; Zhang, L. DOTA: A large-scale dataset for object detection in aerial images. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2018, pp. 3974–3983.

- Jia, F.; Tan, L.; Wang, G.; Jia, C.; Chen, Z. A super-resolution network using channel attention retention for pathology images. PeerJ Computer Science 2023, 9, e1196. [CrossRef]

- FUJIFILM Healthcare Europe.; SonoSkills. US-CASE: Ultrasound Cases Dataset, 2025.

- Liu, R.; Fan, X.; Zhu, M.; Hou, M.; Luo, Z. Real-world underwater enhancement: Challenges, benchmarks, and solutions under natural light. IEEE transactions on circuits and systems for video technology 2020, 30, 4861–4875. [CrossRef]

- Tang, L.; Yuan, J.; Zhang, H.; Jiang, X.; Ma, J. PIAFusion: A progressive infrared and visible image fusion network based on illumination aware. Information Fusion 2022, 83, 79–92. [CrossRef]

- Liu, J.; Liu, Z.; Wu, G.; Ma, L.; Liu, R.; Zhong, W.; Luo, Z.; Fan, X. Multi-interactive feature learning and a full-time multi-modality benchmark for image fusion and segmentation. In Proceedings of the IEEE/CVF international conference on computer vision, 2023, pp. 8115–8124.

- Guo, Y.; Gao, Y.; Lu, Y.; Zhu, H.; Liu, R.W.; He, S. OneRestore: A universal restoration framework for composite degradation. In Proceedings of the European conference on computer vision. Springer, 2024, pp. 255–272. [CrossRef]

- Zhou, Y.; Ren, D.; Emerton, N.; Lim, S.; Large, T. Image restoration for under-display camera. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2021, pp. 9179–9188.

- Lin, C.H.; Hsu, C.C.; Young, S.S.; Hsieh, C.Y.; Tai, S.C. QRCODE: Quasi-residual convex deep network for fusing misaligned hyperspectral and multispectral images. IEEE Transactions on Geoscience and Remote Sensing 2024, 62, 1–15. [CrossRef]

- Arad, B.; Timofte, R.; Yahel, R.; Morag, N.; Bernat, A.; Cai, Y.; Lin, J.; Lin, Z.; Wang, H.; Zhang, Y.; et al. NTIRE 2022 spectral recovery challenge and data set. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 863–881.

- Zhang, L.; Zhang, L.; Mou, X.; Zhang, D. FSIM: A feature similarity index for image quality assessment. IEEE transactions on Image Processing 2011, 20, 2378–2386. [CrossRef]

- Du, Q.; Younan, N.H.; King, R.; Shah, V.P. On the performance evaluation of pan-sharpening techniques. IEEE Geoscience and Remote Sensing Letters 2007, 4, 518–522. [CrossRef]

- Heusel, M.; Ramsauer, H.; Unterthiner, T.; Nessler, B.; Hochreiter, S. GANs trained by a two time-scale update rule converge to a local Nash equilibrium. Advances in neural information processing systems 2017, 30.

- Blau, Y.; Mechrez, R.; Timofte, R.; Michaeli, T.; Zelnik-Manor, L. The 2018 PIRM challenge on perceptual image super-resolution. In Proceedings of the European conference on computer vision (ECCV) workshops, 2018.

- Ying, Z.; Niu, H.; Gupta, P.; Mahajan, D.; Ghadiyaram, D.; Bovik, A. From patches to pictures (PaQ-2-PiQ): Mapping the perceptual space of picture quality. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2020, pp. 3575–3585.

- Zhang, W.; Ma, K.; Yan, J.; Deng, D.; Wang, Z. Blind image quality assessment using a deep bilinear convolutional neural network. IEEE Transactions on Circuits and Systems for Video Technology 2020, 30, 36–47. [CrossRef]

- Zhang, W.; Zhai, G.; Wei, Y.; Yang, X.; Ma, K. Blind image quality assessment via vision-language correspondence: A multitask learning perspective. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2023, pp. 14071–14081.

- Zhou, J.; Liu, C.; Jiang, Q.; Fu, X.; Hou, J.; Li, X. Semantic Contrast for Domain-Robust Underwater Image Quality Assessment. IEEE Transactions on Pattern Analysis and Machine Intelligence 2026. [CrossRef]

- Chen, Z.; Qin, H.; Wang, J.; Yuan, C.; Li, B.; Hu, W.; Wang, L. PromptIQA: Boosting the performance and generalization for no-reference image quality assessment via prompts. In Proceedings of the European conference on computer vision. Springer, 2024, pp. 247–264. [CrossRef]

- Li, X.; Huang, Z.; Zhang, Y.; Shen, Y.; Li, K.; Zheng, X.; Cao, L.; Ji, R. Few-Shot Image Quality Assessment via Adaptation of Vision-Language Models. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025, pp. 10442–10452.

- Kwon, D.; Kim, D.; Ki, S.; Jo, Y.; Lee, H.E.; Kim, S.J. ATTIQA: Generalizable image quality feature extractor using attribute-aware pretraining. In Proceedings of the Asian Conference on Computer Vision, 2024, pp. 4526–4543.

- Rifa, K.R.; Zhang, J.; Imran, A. CAP-IQA: Context-Aware Prompt-Guided CT Image Quality Assessment. arXiv preprint arXiv:2601.01613 2026. [CrossRef]

- Dong, G.; Liao, X.; Li, M.; Guo, G.; Ren, C. Exploring semantic feature discrimination for perceptual image super-resolution and opinion-unaware no-reference image quality assessment. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 28176–28187.

- Zhou, H.; Tang, L.; Yang, R.; Qin, G.; Zhang, Y.; Li, Y.; Li, X.; Hu, R.; Zhai, G. UniQA: Unified vision-language pre-training for image quality and aesthetic assessment. arXiv preprint arXiv:2406.01069 2024. [CrossRef]

- Zhao, S.; Zhang, X.; Li, W.; Li, J.; Zhang, L.; Xue, T.; Zhang, J. Reasoning as Representation: Rethinking Visual Reinforcement Learning in Image Quality Assessment. arXiv preprint arXiv:2510.11369 2025. [CrossRef]

- You, Z.; Gu, J.; Li, Z.; Cai, X.; Zhu, K.; Dong, C.; Xue, T. Descriptive image quality assessment in the wild. arXiv preprint arXiv:2405.18842 2024. [CrossRef]

- Chen, Z.; Hu, B.; Niu, C.; Chen, T.; Li, Y.; Shan, H.; Wang, G. IQAGPT: computed tomography image quality assessment with vision-language and ChatGPT models. Visual Computing for Industry, Biomedicine, and Art 2024, 7, 20. [CrossRef]

- Wu, H.; Zhu, H.; Zhang, Z.; Zhang, E.; Chen, C.; Liao, L.; Li, C.; Wang, A.; Sun, W.; Yan, Q.; et al. Towards open-ended visual quality comparison. In Proceedings of the European Conference on Computer Vision. Springer, 2024, pp. 360–377. [CrossRef]

- Liu, K.; Zhang, Z.; Li, W.; Pei, R.; Song, F.; Liu, X.; Kong, L.; Zhang, Y. Dog-IQA: Standard-guided Zero-shot MLLM for Mix-grained Image Quality Assessment. arXiv preprint arXiv:2410.02505 2024. [CrossRef]

- Li, W.; Zhang, X.; Zhao, S.; Zhang, Y.; Li, J.; Zhang, L.; Zhang, J. Q-Insight: Understanding image quality via visual reinforcement learning. arXiv preprint arXiv:2503.22679 2025. [CrossRef]

- Cai, Z.; Zhang, J.; Yuan, X.; Jiang, P.T.; Chen, W.; Tang, B.; Yao, L.; Wang, Q.; Chen, J.; Li, B. Q-Ponder: A unified training pipeline for reasoning-based visual quality assessment. arXiv preprint arXiv:2506.05384 2025. [CrossRef]

- Xie, W.; Dai, R.; Ding, R.; Liu, K.; Chu, X.; Hou, X.; Wen, J. Q-Hawkeye: Reliable Visual Policy Optimization for Image Quality Assessment. arXiv preprint arXiv:2601.22920 2026. [CrossRef]

- Li, X.; Zhang, Z.; Xu, Z.; Xu, S.; Min, X.; Chen, Y.; Zhai, G. Decoupling Perception and Calibration: Label-Efficient Image Quality Assessment Framework. arXiv preprint arXiv:2601.20689 2026. [CrossRef]

- Wu, H.; Zhang, Z.; Zhang, E.; Chen, C.; Liao, L.; Wang, A.; Li, C.; Sun, W.; Yan, Q.; Zhai, G.; et al. Q-Bench: A benchmark for general-purpose foundation models on low-level vision. arXiv preprint arXiv:2309.14181 2023. [CrossRef]

- Zhang, Z.; Wu, H.; Zhang, E.; Zhai, G.; Lin, W. Q-Bench+: A Benchmark for Multi-Modal Foundation Models on Low-Level Vision From Single Images to Pairs. IEEE Transactions on Pattern Analysis and Machine Intelligence 2024, 46, 10404–10418. [CrossRef]

- Kendall, M.G. A new measure of rank correlation. Biometrika 1938, 30, 81–93. [CrossRef]

- Tinsley, H.E.; Weiss, D.J. Interrater reliability and agreement of subjective judgments. Journal of Counseling Psychology 1975, 22, 358. [CrossRef]

- Papineni, K.; Roukos, S.; Ward, T.; Zhu, W.J. BLEU: a method for automatic evaluation of machine translation. In Proceedings of the 40th annual meeting of the Association for Computational Linguistics, 2002, pp. 311–318.

- Lin, C.Y. ROUGE: A package for automatic evaluation of summaries. In Text Summarization Branches Out, 2004, pp. 74–81.

- Banerjee, S.; Lavie, A. METEOR: An automatic metric for MT evaluation with improved correlation with human judgments. In Proceedings of the ACL workshop on intrinsic and extrinsic evaluation measures for machine translation and/or summarization, 2005, pp. 65–72.

- Vedantam, R.; Lawrence Zitnick, C.; Parikh, D. CIDEr: Consensus-based image description evaluation. In Proceedings of the IEEE conference on computer vision and pattern recognition, 2015, pp. 4566–4575.

- Sun, S.; Ren, W.; Wang, T.; Cao, X. Rethinking image restoration for object detection. Advances in Neural Information Processing Systems 2022, 35, 4461–4474.

- Zhu, H.; Sui, X.; Chen, B.; Liu, X.; Chen, P.; Fang, Y.; Wang, S. 2AFC prompting of large multimodal models for image quality assessment. IEEE Transactions on Circuits and Systems for Video Technology 2024. [CrossRef]

- Li, B.; Liu, X.; Hu, P.; Wu, Z.; Lv, J.; Peng, X. All-in-one image restoration for unknown corruption. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 17452–17462.

- Zhang, J.; Huang, J.; Yao, M.; Yang, Z.; Yu, H.; Zhou, M.; Zhao, F. Ingredient-oriented multi-degradation learning for image restoration. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2023, pp. 5825–5835.

- Cui, Y.; Zamir, S.W.; Khan, S.; Knoll, A.; Shah, M.; Khan, F.S. AdaIR: Adaptive all-in-one image restoration via frequency mining and modulation. In Proceedings of the 13th International Conference on Learning Representations (ICLR), 2025, pp. 57335–57356.

- Wu, G.; Jiang, J.; Jiang, K.; Liu, X.; Nie, L. DSwinIR: Rethinking Window-Based Attention for Image Restoration. IEEE Transactions on Pattern Analysis and Machine Intelligence 2025. [CrossRef]

- Cui, Y.; Ren, W.; Shi, B.; Knoll, A. Visual-in-Visual: A Unified and Efficient Baseline for Image Restoration. IEEE Transactions on Pattern Analysis and Machine Intelligence 2026. [CrossRef]

- Wang, T.; Zhang, K.; Shen, T.; Luo, W.; Stenger, B.; Lu, T. Ultra-high-definition low-light image enhancement: A benchmark and transformer-based method. In Proceedings of the AAAI conference on artificial intelligence, 2023, Vol. 37, pp. 2654–2662. [CrossRef]

- Zhang, T.; Liu, P.; Lu, Y.; Cai, M.; Zhang, Z.; Zhang, Z.; Zhou, Q. CWNet: Causal wavelet network for low-light image enhancement. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025, pp. 8789–8799.

- Yi, X.; Xu, H.; Zhang, H.; Tang, L.; Ma, J. Diff-Retinex++: Retinex-driven reinforced diffusion model for low-light image enhancement. IEEE Transactions on Pattern Analysis and Machine Intelligence 2025. [CrossRef]

- Deng, X.; Dragotti, P.L. Deep convolutional neural network for multi-modal image restoration and fusion. IEEE transactions on pattern analysis and machine intelligence 2020, 43, 3333–3348. [CrossRef]

- Wang, Z.; Wu, Y.; Li, D.; Li, G.; Zhu, P.; Zhang, Z.; Jiang, R. LiDAR-assisted image restoration for extreme low-light conditions. Knowledge-Based Systems 2025, 316, 113382. [CrossRef]

- Janjua, M.K.; Ghasemabadi, A.; Zhang, K.; Salameh, M.; Gao, C.; Niu, D. Grounding Degradations in Natural Language for All-In-One Video Restoration. arXiv preprint arXiv:2507.14851 2025. [CrossRef]

| Survey | Year | Tasks | Domains | M | L | A | IQA | Key contributions |
|---|---|---|---|---|---|---|---|---|
| Jiang et al. [1] | 2025 | DN | Nat | × | × | ✓ | × | Reviews deep denoising methods |
| Su et al. [99] | 2023 | DR | Nat | × | × | × | × | Reviews deraining architectures and benchmarks |
| Gui et al. [7] | 2023 | DH | Nat | × | × | × | × | Categorizes dehazing (CNN/GAN/Transformer) |
| Xiang et al. [13] | 2025 | DB | Nat | × | × | ✓ | × | Organizes CNN-based deblurring frameworks |
| Li et al. [16] | 2021 | LLIE | Nat | × | × | ✓ | × | Organizes LLIE by illumination modeling |
| Zhang et al. [100] | 2022 | SR | Nat | × | × | ✓ | × | Taxonomy of SR (architecture/loss/training) |
| Zhu et al. [101] | 2026 | DH/DN/Enhancement | UW | ✓ | ✓ | ✓ | × | Reviews underwater enhancement and restoration methods |
| Wang et al. [50] | 2025 | DR/DH/DB/DS/LLIE/SR/AiO | Nat (UHD) | ✓ | × | ✓ | × | UHD restoration across degradations |
| Li et al. [102] | 2025 | DN/DR/DH/DB/LLIE/SR/AiO | Nat | ✓ | × | ✓ | × | Diffusion-based restoration across tasks |
| Jiang et al. [31] | 2025 | AiO | Nat/UW/Med/HS | ✓ | × | ✓ | × | AiO restoration across domains |
| Ours | 2026 | DN/DR/DH/DB/DS/LLIE/SR/AiO | Nat/UW/Med/HS | ✓ | ✓ | ✓ | ✓ | Systematizes language-driven IR and IQA with unified taxonomy |

| Task | Dataset | Year | Type | Domain | Training/Testing | Description |
|---|---|---|---|---|---|---|
| Denoising | Kodak24 [139] | 1999 | r | Natural | -/24 | Clean color images |
| | McMaster [140] | 2011 | r | Natural | -/18 | 18 high-quality color images |
| | CBSD68 [141] | 2001 | r | Natural | -/68 | 68 clean natural images, corrupted with noise at different levels |
| | Urban100 [142] | 2015 | r | Natural | -/100 | 100 high-resolution urban scenes with repetitive structures |
| | DIV2K [143] | 2017 | r | Natural | 800/100 | 1000 high-resolution images |
| | SIDD [144] | 2018 | r | Natural | -/160 | Real-noise image pairs with clean ground truth |
| | PolyU [53] | 2018 | r | Natural | -/40 | Real-noise paired dataset with 40 scenes |
| | WED [145] | 2016 | r&s | Natural | 4744/- | Waterloo Exploration Database |
| | BSD400 [146] | 2010 | r | Natural | 400/- | Training subset from BSD500 |
| | Mayo-2016 [26] | 2016 | r | Medical | 4800/1136 | Paired normal-dose and simulated quarter-dose abdominal CT images |
| Deraining | Rain100L [147] | 2017 | s | Natural | 200/100 | Images with light rain |
| | Rain100H [147] | 2017 | s | Natural | 1800/100 | Images with heavy rain |
| | Rain800 [148] | 2019 | s | Natural | 700/100 | Images with diverse rain patterns |
| | Rain1400 [149] | 2017 | s | Natural | 12600/1400 | 14 rain streak types |
| | Raindrop [150] | 2018 | r | Natural | 1069/58 | Paired raindrop images captured with two identical glass panes |
| | Outdoor-Rain [151] | 2019 | r&s | Natural | 9000/1500 | A synthetic outdoor rain dataset with streak and accumulation effects |
| | RainDS [152] | 2021 | r&s | Natural | -/5800 | Paired deraining data organized in four-image sets |
| | SSID [153] | 2022 | r&s | Natural | 47600/200 | Semi-supervised image deraining sets |
| | LHP [54] | 2023 | r | Natural | 2100/300 | Largest paired real rain dataset, with high image resolution |
| Dehazing | FoggyCityscapes [154] | 2018 | s | Natural | 2975/1525 | Paired foggy and clear images |
| | ACDC [155] | 2021 | r | Natural | 1600/2400 | Real-world images captured under adverse conditions |
| | RESIDE [156] | 2018 | r&s | Natural | 86125/4842 | Real and synthetic data across indoor and outdoor scenarios |
| | NH-HAZE [55] | 2020 | r | Natural | 45/5 | A real paired outdoor dehazing set with non-homogeneous haze |
| | Dense-Haze [157] | 2019 | r | Natural | 45/5 | A real paired dehazing dataset for dense, homogeneous haze |
| Desnowing | RealSnow10K [56] | 2025 | r | Natural | 6406/1047 | Real-world snow removal dataset |
| | Snow100K-L [12] | 2018 | s | Natural | 1872/601 | A single-image snow removal benchmark |
| Deblurring | DPD-blur [14] | 2020 | r | Natural | 350/150 | 500 real defocus blur image pairs |
| | DPD-disp [158] | 2020 | r | Natural | -/350 | Test-only; evaluated with checkpoints trained on DPD-blur |
| | DDD-syn [159] | 2021 | s | Natural | 10000/1000 | Synthetic deblurring dataset with paired blurry and sharp images |
| | RDPD [160] | 2021 | s | Natural | 18000/1000 | Images captured using a dual-pixel camera |
| | GoPro [15] | 2017 | r&s | Natural | 2103/1111 | Paired images generated from real high-frame-rate GoPro videos |
| LLIE | LOL-v1 [17] | 2018 | r | Natural | 485/15 | Paired low-light and normal-light images captured under controlled conditions |
| | LSRW [57] | 2023 | r | Natural | 445/50 | Paired low-light LR with normal-light HR |
| | DICM [161] | 2013 | r | Natural | -/64 | Low-light images without ground truth, for visual comparison |
| | NPE [162] | 2013 | r | Natural | -/85 | Unpaired low-light images |
| | VV [163] | 2018 | r | Natural | -/24 | 24 real-world unpaired low-light images |
| | LOL-v2-real [18] | 2021 | r | Natural | 689/100 | Real paired low-light sets |
| | LOL-v2-syn [18] | 2021 | s | Natural | 900/100 | Synthetic paired low-light sets |
| | MEF [164] | 2015 | r | Natural | -/17 | Multiple images with different exposure levels for the same scene |
| | SICE [165] | 2018 | r | Natural | 360/229 | Multiple reference images of different enhancement levels |
| | LIME [166] | 2016 | r | Natural | -/10 | 10 images without ground truth |
| Super-resolution | Set5 [167] | 2012 | r | Natural | -/5 | 5 real-world natural images |
| | Set14 [168] | 2010 | r | Natural | -/14 | 14 real-world natural images |
| | Manga109 [169] | 2017 | r | Natural | -/109 | 109 real-world manga images |
| | CelebA [170] | 2015 | r | Natural | 162770/19867 | Images with 40 binary attributes |
| | RealSR [171] | 2019 | r | Natural | -/35 | Real-world low- and high-resolution image pairs |
| | DrealSR [172] | 2020 | r | Natural | -/93 | 93 aligned LR-HR image pairs |
| | DIV2K-Val [173] | 2024 | r | Natural | -/100 | 3K patches from the DIV2K validation set |
| | RealSRSet [174] | 2021 | r | Natural | -/20 | Images captured in practical scenarios |
| | DIV4K-50 [58] | 2024 | r | Natural | -/50 | Distorted images paired with their counterparts |
| | DiffusionDB [175] | 2023 | s | Natural | -/100 | Text-to-image prompt gallery sets |
| | AID [176] | 2017 | r | Natural | -/135 | Aerial image dataset |
| | DIOR [177] | 2020 | r | Natural | -/154 | Object detection in optical remote sensing images |
| | DOTA [178] | 2018 | r | Natural | -/183 | Dataset for object detection in aerial images |
| | bcSR [179] | 2023 | r | Medical | -/200 | Pathology image patches from breast cancer whole-slide images |
| | US-Case [180] | 2025 | r | Medical | -/111 | Ultrasound cases |
| Underwater | UIEB [23] | 2019 | r | Underwater | 800/90 | Underwater image enhancement benchmark |
| | EUVP [24] | 2019 | r | Underwater | 20000/- | Includes both paired and unpaired samples |
| | RUIE [181] | 2020 | r | Underwater | -/4230 | Real-world underwater image enhancement benchmark |
| Composite | PromptFix [123] | 2024 | r&s | Natural | 101320/- | Paired input–goal–instruction triplets spanning 7 tasks |
| | MiO100 [61] | 2024 | r&s | Natural | -/700 | Each image is degraded with 7 single degradation types |
| | AgenticIR [37] | 2025 | r&s | Natural | -/1440 | 16 mixed-degradation combinations (2–3 types) |
| | CleanBench [36] | 2025 | r&s | Natural | 150000/80000 | A large-scale, high-quality instruction-response dataset |
| | MSRS [182] | 2022 | r | Natural | 1163/361 | A multi-spectral IR-VIS paired set |
| | FMB [183] | 2023 | r | Natural | 1220/280 | 1500 aligned IR-VIS pairs |
| | CDD-11 [184] | 2024 | r&s | Natural | 13013/2200 | Images selected from the RAISE dataset |
| | TOLED [185] | 2021 | r | Natural | 240/30 | A real paired under-display camera restoration set |
| | AVIRIS [186] | 2024 | r | HSI | 1678/200 | Airborne visible/infrared imaging spectrometer |
| | ARAD [187] | 2022 | r | HSI | 1000/- | A large natural spectral image set |

| Category | Sub-category | Representative Methods | GT | Usage |
|---|---|---|---|---|
| Full-Reference | Non-Learning-Based | PSNR, SSIM [38], FSIM [188], MAE, MSE, RMSE, ERGAS [189] | ✓ | Pixel-level fidelity or structural consistency |
| | Learning-Based | LPIPS [40], DISTS [81], CKDN [82], AHIQ [83], TOPIQ-FR [84] | ✓ | Feature-based perceptual similarity |
| | Distribution-Based | FID [190] | ✓ | Feature-space distribution alignment |
| No-Reference | Hand-Crafted | BRISQUE [85], NIQE [86], PIQE [87], LOE [162], PI [191] | × | Blind perceptual quality estimation |
| | Learning-Based | MUSIQ [88], MANIQA [90], NIMA [89], HyperIQA [91], PaQ-2-PiQ [192], DBCNN [193], TOPIQ-NR [84], CNNIQA [92] | × | Learning-based NR-IQA |
| | Alignment-Based | CLIP-IQA [41], QualiCLIP [43], LIQE [194], SCUIA [195], PromptIQA [196], GRMP-IQA [197], ATTIQA [198], CAP-IQA [199], SFD [200], UniQA [201], RALI [202] | × | Language as representation for perceptual alignment |
| | Reasoning-Based | DepictQA [95], DepictQA-Wild [203], IQAGPT [204], Co-Instruct [205], Q-Ground [97], SEAGULL [96], AgenticIQA [48] | × | Language-driven quality understanding, explanation, grounding, and decision-making |
| | Scoring-Based | Q-Align [46], DeQA-Score [45], Dog-IQA [206], Q-Scorer [98], Compare2Score [94], Q-Insight [207], Q-Ponder [208], Q-Hawkeye [209], LEAF [210] | × | Language-guided quality scoring and calibration |
| Resources / Benchmarks | | Q-Bench [211], Q-Bench+ [212], Q-Instruct [93] | × | Benchmark datasets and instruction resources for IQA |
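The alignment-based entries above use language as a representation of quality. CLIP-IQA [41], for example, compares an image embedding against the embeddings of an antonym prompt pair and reads the softmax weight on the positive prompt as the quality score. The sketch below illustrates only that scoring step, under stated assumptions: the three-dimensional vectors are hypothetical stand-ins for real image and text encoder outputs, the prompt pair is illustrative, and the temperature of 100 is an assumed value borrowed from CLIP's logit scale, not a setting taken from any method in the table.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def antonym_prompt_score(
    image_emb: Sequence[float],
    positive_text_emb: Sequence[float],
    negative_text_emb: Sequence[float],
    temperature: float = 100.0,  # assumption: CLIP-style logit scale
) -> float:
    """Quality score in [0, 1]: softmax weight assigned to the positive prompt."""
    s_pos = temperature * cosine_similarity(image_emb, positive_text_emb)
    s_neg = temperature * cosine_similarity(image_emb, negative_text_emb)
    # Two-way softmax, shifted by the max logit for numerical stability.
    m = max(s_pos, s_neg)
    e_pos = math.exp(s_pos - m)
    e_neg = math.exp(s_neg - m)
    return e_pos / (e_pos + e_neg)


# Hypothetical toy embeddings standing in for encoder outputs.
good_prompt = [1.0, 0.0, 0.0]  # e.g. text embedding of "Good photo."
bad_prompt = [0.0, 1.0, 0.0]   # e.g. text embedding of "Bad photo."
sharp_image = [0.9, 0.1, 0.2]
blurry_image = [0.2, 0.9, 0.1]

print(antonym_prompt_score(sharp_image, good_prompt, bad_prompt))
print(antonym_prompt_score(blurry_image, good_prompt, bad_prompt))
```

Scoring against a pair of prompts rather than a single prompt is what makes the output comparable across images: a lone cosine similarity has no natural scale, whereas the softmax over an antonym pair yields a relative preference bounded in [0, 1].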

| Category | Sub-category | Representative Methods | GT | Usage |
|---|---|---|---|---|
| Evaluation Protocols | Human-Aligned | PLCC, SRCC, KRCC [213], Weighted Kappa [214], Percent Agreement | × | Correlation with human subjective perception |
| | Task-Oriented | Precision, Recall, F1, mIoU, Accuracy [95] | ✓ | Downstream task performance |
| | Text-Based | BLEU-N [215], ROUGE-L [216], METEOR [217], CIDEr [218] | ✓ | Textual or semantic fidelity evaluation |
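The human-aligned protocols above measure how well predicted quality scores track subjective ratings such as mean opinion scores (MOS). A minimal, dependency-free sketch of PLCC, SRCC, and KRCC follows. It assumes tie-aware average ranks for SRCC and the tau-b variant of Kendall's coefficient, which adjusts for ties; the function names and the toy scores are illustrative. Many IQA studies first fit a monotonic (typically logistic) mapping from predictions to opinion scores before computing PLCC; that step is omitted here.

```python
import math
from typing import List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """PLCC: Pearson linear correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """SRCC: Pearson correlation computed on ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def kendall_tau_b(x: Sequence[float], y: Sequence[float]) -> float:
    """KRCC, tau-b variant: (concordant - discordant), normalized for ties."""
    concordant = discordant = ties_x = ties_y = 0
    n = len(x)
    for i in range(n):
        for j in range(i + 1, n):
            dx = x[i] - x[j]
            dy = y[i] - y[j]
            if dx == 0 and dy == 0:
                continue  # tied in both variables: excluded from all counts
            if dx == 0:
                ties_x += 1
            elif dy == 0:
                ties_y += 1
            elif dx * dy > 0:
                concordant += 1
            else:
                discordant += 1
    denom = math.sqrt((concordant + discordant + ties_x) * (concordant + discordant + ties_y))
    return (concordant - discordant) / denom


# Hypothetical predicted scores and MOS for four images.
pred = [0.1, 0.4, 0.35, 0.8]
mos = [1.0, 2.0, 3.0, 4.0]
print(round(pearson(pred, mos), 3))
print(round(spearman(pred, mos), 3))       # 0.8
print(round(kendall_tau_b(pred, mos), 3))  # 0.667
```

SRCC and KRCC depend only on the ordering of predictions, so they reward a metric that ranks images like humans do even when its scale is arbitrary; PLCC additionally rewards linearity, which is why the logistic remapping step matters when it is used.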

Entries are PSNR (dB) / SSIM.

| Method | Venue | Params | Deraining: Rain100L [147] | Denoising: BSD68 [141], σ = 15 | Denoising: BSD68 [141], σ = 25 | Denoising: BSD68 [141], σ = 50 | Dehazing: SOTS [156] | Average |
|---|---|---|---|---|---|---|---|---|
| AirNet [221] | CVPR’22 | 9M | 34.90 / 0.967 | 33.92 / 0.933 | 31.26 / 0.888 | 28.00 / 0.797 | 27.94 / 0.962 | 31.20 / 0.910 |
| IDR [222] | CVPR’23 | 15M | 36.03 / 0.971 | 33.89 / 0.931 | 31.32 / 0.884 | 28.04 / 0.798 | 29.87 / 0.970 | 31.83 / 0.911 |
| PromptIR [33] | NeurIPS’23 | 33M | 36.37 / 0.972 | 33.98 / 0.933 | 31.31 / 0.888 | 28.06 / 0.799 | 30.58 / 0.974 | 32.06 / 0.913 |
| AdaIR [223] | ICLR’25 | 29M | 38.64 / 0.983 | 34.12 / 0.934 | 31.45 / 0.892 | 28.19 / 0.802 | 31.06 / 0.980 | 32.69 / 0.918 |
| DSwinIR [224] | T-PAMI’25 | 24M | 37.73 / 0.983 | 34.12 / 0.933 | 31.59 / 0.890 | 28.31 / 0.803 | 31.86 / 0.980 | 32.72 / 0.917 |
| VIVNet [225] | T-PAMI’26 | 7.42M | 38.47 / 0.983 | 34.16 / 0.936 | 31.50 / 0.893 | 28.24 / 0.806 | 32.19 / 0.982 | 32.91 / 0.920 |
| InstructIR-3D [34] | ECCV’24 | 16M | 37.98 / 0.978 | 34.15 / 0.933 | 31.52 / 0.890 | 28.30 / 0.803 | 30.22 / 0.959 | 32.43 / 0.913 |
| VLU-Net [67] | CVPR’25 | 35M | 38.93 / 0.984 | 34.13 / 0.935 | 31.48 / 0.892 | 28.23 / 0.804 | 30.71 / 0.980 | 32.70 / 0.919 |
| Perceive-IR [130] | T-IP’25 | 42M | 38.29 / 0.980 | 34.13 / 0.934 | 31.53 / 0.890 | 28.31 / 0.804 | 30.87 / 0.975 | 32.63 / 0.917 |
| ClearAIR [66] | AAAI’26 | 31M | 38.61 / 0.984 | 34.18 / 0.935 | 31.50 / 0.891 | 28.31 / 0.804 | 31.08 / 0.981 | 32.74 / 0.919 |
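
The restoration tables report fidelity as PSNR (dB) and SSIM. Below is a minimal PSNR sketch, assuming 8-bit pixel values (peak value 255) passed as flat sequences. Published all-in-one benchmarks differ in whether PSNR is measured on RGB or on the Y channel of YCbCr and whether image borders are cropped. The function is therefore illustrative, not the evaluation code of any listed method.

```python
import math


def psnr(reference, restored, max_val=255.0):
    """PSNR in dB between two equally sized images given as flat pixel sequences."""
    if len(reference) != len(restored):
        raise ValueError("images must have the same number of pixels")
    # Mean squared error over all pixels.
    mse = sum((r - s) ** 2 for r, s in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images: PSNR is unbounded
    return 10.0 * math.log10(max_val ** 2 / mse)


reference = [52, 55, 61, 59]  # hypothetical 2x2 grayscale patch, flattened
restored = [54, 55, 60, 57]
# MSE = 2.25, so PSNR = 10 * log10(255**2 / 2.25), about 44.61 dB.
print(f"{psnr(reference, restored):.2f} dB")
```

Because PSNR is logarithmic in the MSE, a gain of about 0.3 dB, such as the gaps between several methods above, corresponds to roughly a 7% reduction in mean squared error.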

Entries are PSNR (dB) / SSIM.

| Method | Venue | Params | Dehazing: SOTS [156] | Deraining: Rain100L [147] | Denoising: BSD68 [141] | Deblurring: GoPro [15] | LLIE: LOL [17] | Average |
|---|---|---|---|---|---|---|---|---|
| AirNet [221] | CVPR’22 | 9M | 21.04 / 0.884 | 32.98 / 0.951 | 30.91 / 0.882 | 24.35 / 0.781 | 18.18 / 0.735 | 25.49 / 0.847 |
| IDR [222] | CVPR’23 | 15M | 25.24 / 0.943 | 35.63 / 0.965 | 31.60 / 0.887 | 27.87 / 0.846 | 21.34 / 0.826 | 28.34 / 0.893 |
| PromptIR [33] | NeurIPS’23 | 33M | 26.54 / 0.949 | 36.37 / 0.970 | 31.47 / 0.886 | 28.71 / 0.881 | 22.68 / 0.832 | 29.15 / 0.904 |
| AdaIR [223] | ICLR’25 | 29M | 30.53 / 0.978 | 38.02 / 0.981 | 31.35 / 0.888 | 28.12 / 0.858 | 23.00 / 0.845 | 30.20 / 0.910 |
| DSwinIR [224] | T-PAMI’25 | 24M | 30.09 / 0.975 | 37.77 / 0.982 | 31.34 / 0.885 | 29.17 / 0.879 | 22.64 / 0.843 | 30.20 / 0.913 |
| VIVNet [225] | T-PAMI’26 | 7.42M | 31.85 / 0.982 | 38.67 / 0.984 | 31.46 / 0.892 | 28.50 / 0.866 | 23.03 / 0.857 | 30.70 / 0.916 |
| DA-CLIP [114] | ICLR’24 | 125M | 26.28 / 0.939 | 35.91 / 0.972 | 25.77 / 0.653 | 28.81 / 0.882 | 22.57 / 0.832 | 29.23 / 0.898 |
| DiffRes [109] | CVPR’25 | 45M | 27.23 / 0.958 | 37.25 / 0.979 | 32.07 / 0.890 | 29.33 / 0.883 | 23.13 / 0.843 | 29.78 / 0.911 |
| InstructIR-5D [34] | ECCV’24 | 16M | 27.10 / 0.956 | 36.84 / 0.973 | 31.40 / 0.887 | 29.40 / 0.886 | 23.00 / 0.836 | 29.55 / 0.907 |
| VLU-Net [67] | CVPR’25 | 35M | 30.84 / 0.980 | 38.54 / 0.982 | 31.43 / 0.891 | 27.46 / 0.840 | 22.29 / 0.833 | 30.11 / 0.905 |
| Perceive-IR [130] | T-IP’25 | 42M | 28.19 / 0.964 | 37.25 / 0.977 | 31.44 / 0.887 | 29.46 / 0.886 | 22.88 / 0.833 | 29.84 / 0.909 |
| ClearAIR [66] | AAAI’26 | 31M | 30.12 / 0.978 | 38.20 / 0.982 | 31.53 / 0.888 | 29.67 / 0.887 | 22.83 / 0.846 | 30.45 / 0.916 |
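
The Average columns in the all-in-one tables are consistent with an unweighted mean over the per-task entries. As a quick cross-check, the sketch below recomputes the AirNet five-task average from the rounded table entries; this reflects our reading of the tables, not a protocol stated by the compared methods. The dictionary and helper names are hypothetical. Because the published entries are rounded, averages recomputed for other rows may deviate from the reported values.

```python
# AirNet's per-task (PSNR in dB, SSIM) entries in the five-task setting, copied from the table.
airnet_five_task = {
    "dehazing (SOTS)": (21.04, 0.884),
    "deraining (Rain100L)": (32.98, 0.951),
    "denoising (BSD68)": (30.91, 0.882),
    "deblurring (GoPro)": (24.35, 0.781),
    "low-light enhancement (LOL)": (18.18, 0.735),
}


def task_average(per_task):
    """Unweighted mean of PSNR and of SSIM across tasks (every task counts equally)."""
    psnrs = [p for p, _ in per_task.values()]
    ssims = [s for _, s in per_task.values()]
    return sum(psnrs) / len(psnrs), sum(ssims) / len(ssims)


avg_psnr, avg_ssim = task_average(airnet_five_task)
print(f"Average: {avg_psnr:.2f} dB / {avg_ssim:.3f}")  # Average: 25.49 dB / 0.847
```

With an unweighted mean, a large gain on one task (e.g., dehazing) can offset a weaker result elsewhere, which is why the per-task columns remain informative alongside the average.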

| Method | Venue | PSNR (dB) | SSIM |
|---|---|---|---|
| RetinexFormer [30] | ICCV’23 | 25.16 | 0.845 |
| LLFormer [226] | AAAI’23 | 23.65 | 0.8163 |
| CWNet [227] | ICCV’25 | 23.60 | 0.8496 |
| RetinexDiff++ [228] | T-PAMI’25 | 24.67 | 0.867 |
| LLMRA [68] | ECCV’24 | 23.30 | 0.846 |
| DA-CLIP [114] | ICLR’24 | 23.40 | 0.811 |
| DiffRes [109] | CVPR’25 | 24.55 | 0.839 |
| Perceive-IR [130] | T-IP’25 | 23.79 | 0.841 |
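
SSIM, reported alongside PSNR in the restoration tables, compares luminance, contrast, and structure. The sketch below is a deliberately simplified single-window version evaluated over the whole image, assuming grayscale 8-bit values passed as flat sequences; `ssim_global` is a hypothetical name. The standard index computes the same expression in local 11×11 Gaussian windows (σ = 1.5) and averages the resulting map, so values from this sketch will not match published SSIM numbers.

```python
def ssim_global(x, y, data_range=255.0):
    """Single-window SSIM between two equally sized grayscale images (flat sequences).

    Illustrative only: standard SSIM evaluates this expression in local
    Gaussian windows and averages the resulting map.
    """
    n = len(x)
    c1 = (0.01 * data_range) ** 2  # K1 = 0.01 stabilizes the luminance term
    c2 = (0.03 * data_range) ** 2  # K2 = 0.03 stabilizes the contrast-structure term
    mx, my = sum(x) / n, sum(y) / n
    var_x = sum((a - mx) ** 2 for a in x) / n
    var_y = sum((b - my) ** 2 for b in y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    luminance = (2 * mx * my + c1) / (mx ** 2 + my ** 2 + c1)
    contrast_structure = (2 * cov + c2) / (var_x + var_y + c2)
    return luminance * contrast_structure


ref = [10, 50, 90, 130, 170, 210]  # hypothetical grayscale pixels
brighter = [v + 20 for v in ref]   # same structure, shifted mean intensity
print(ssim_global(ref, ref))       # identical images give 1.0
print(ssim_global(ref, brighter))  # below 1.0: only the luminance term drops
```

A uniform brightness shift leaves the contrast-structure term at exactly 1 and lowers only the luminance term. This is one reason SSIM and PSNR can rank low-light enhancement results differently.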

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).